Validating Non-Targeted Methods for Food Authenticity: A Complete Guide for Researchers

Abstract

This article provides a comprehensive overview of the validation of non-targeted methods (NTMs) for food authenticity. Aimed at researchers, scientists, and professionals in food development and regulation, it covers the foundational principles of NTMs, explores diverse methodological approaches and their real-world applications, addresses key challenges in implementation and optimization, and outlines current frameworks and considerations for rigorous method validation. By synthesizing the latest research and emerging trends, this guide serves as a critical resource for developing reliable, fit-for-purpose NTMs to combat food fraud and ensure product integrity.

Understanding Non-Targeted Methods: The Foundation of Modern Food Authenticity

Non-targeted methods (NTMs) represent a paradigm shift in analytical chemistry, moving away from the traditional "needle in a haystack" approach that focuses on predefined analytes. Instead, NTMs exploit the comprehensive analytical signature of the entire sample matrix, capturing a holistic view of its chemical composition [1]. In the specific context of food authenticity research, these methods have emerged as powerful tools for characterizing complex food systems, detecting subtle variations indicative of adulteration, verifying origin, and ensuring overall product quality [2].

The core principle of NTMs lies in their ability to perform comprehensive characterization without a priori knowledge of the sample's chemical content [3]. This is achieved through the synergistic combination of high-resolution analytical instrumentation, such as mass spectrometry (MS) or nuclear magnetic resonance (NMR) spectroscopy, with advanced chemometrics and machine learning algorithms [1]. By capturing a complete spectral or chromatographic "fingerprint," NTMs reduce complex data to manageable variables that provide an extensive metabolite snapshot, from minor compounds to major constituents [2]. The resulting data-rich outputs support stricter quality control and are critical in a marketplace increasingly concerned with food provenance, integrity, and safety [2].

Key Concepts and Comparative Analysis

Foundational Terminology

Understanding the expanding set of terminologies is essential for the widespread adoption and correct application of NTMs. Key concepts include:

Non-Targeted Analysis (NTA): A theoretical concept broadly defined as the characterization of the chemical composition of any given sample without using a priori knowledge regarding the sample's chemical content [3]. It is also referred to as "non-target screening" and "untargeted screening".

Features: In the context of data analysis, a feature is a group of associated m/z-retention time pairs (mz@RTs) that together represent the MS1 components of a single compound, for example its main ion together with its isotopologue, adduct, and in-source product ion m/z peaks [3].

Wet Lab and Dry Lab Procedures: All steps of an NTM up to and including the analytical measurement, performed at the lab bench, are collectively termed "wet lab" procedures. The subsequent "dry lab" procedures apply a chemometric, statistical, or machine learning model to parse the resulting multi-dimensional dataset [1].

Reference Databases: NTMs rely on large, community-built datasets containing empirical data from authentic reference samples to define sample populations or classes [2] [1]. The robustness of these databases is critical for the reliability of any ensuing NTM.
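A small sketch makes the "feature" definition concrete. It groups a hypothetical peak list of (m/z, retention time, intensity) tuples by merging co-eluting peaks that are spaced by the 13C isotope mass difference (about 1.00336 Da for singly charged ions). The tolerances and the greedy strategy are simplifying assumptions for illustration. So is the restriction to isotopologues: adducts and in-source fragments are not handled. This is not the procedure defined in [3].

```python
from dataclasses import dataclass, field

C13_SPACING = 1.003355  # 13C - 12C mass difference in Da (singly charged ions assumed)


@dataclass
class Feature:
    """Group of mz@RT pairs assumed to belong to one compound."""
    peaks: list = field(default_factory=list)  # (mz, rt, intensity) tuples

    @property
    def mono_mz(self):
        # lowest m/z in the group, taken as the monoisotopic peak
        return min(p[0] for p in self.peaks)


def group_isotopologues(peaks, rt_tol=0.05, mz_tol=0.005, max_isotopes=3):
    """Greedily group (mz, rt, intensity) peaks into isotopologue features.

    Peaks are visited from most to least intense. Each unassigned peak seeds a
    feature. Unassigned peaks at mz + n * C13_SPACING (n = 1..max_isotopes)
    that co-elute within rt_tol are added to that feature.
    """
    ordered = sorted(peaks, key=lambda p: p[2], reverse=True)
    used = set()
    features = []
    for i, seed in enumerate(ordered):
        if i in used:
            continue
        used.add(i)
        feature = Feature([seed])
        for n in range(1, max_isotopes + 1):
            target = seed[0] + n * C13_SPACING
            for j, cand in enumerate(ordered):
                close_mz = abs(cand[0] - target) <= mz_tol
                close_rt = abs(cand[1] - seed[1]) <= rt_tol
                if j not in used and close_mz and close_rt:
                    used.add(j)
                    feature.peaks.append(cand)
                    break
        features.append(feature)
    return features
```

Seeding from the most intense peak assumes that the monoisotopic ion dominates its isotope pattern, which holds for most small molecules but not for large or halogenated ones.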

Targeted vs. Non-Targeted Approaches

The fundamental difference between targeted and non-targeted strategies dictates their respective applications, advantages, and limitations.

Table 1: Comparison of Targeted and Non-Targeted Analytical Approaches

| Aspect | Targeted Methods | Non-Targeted Methods (NTMs) |
|---|---|---|
| Analytical Focus | Aims at a predefined "needle in a haystack"; analysis of a well-defined set of known metabolites [2] [1]. | Exploits all constituents of the "haystack"; holistic, impartial examination of complex compositions [2] [1]. |
| Primary Output | Identification and quantification of specific, known compounds. | A unique fingerprint (e.g., NMR spectrum, chromatogram) of a food sample [2]. |
| Typical Workflow | Comparisons with reference compounds and internal standards [2]. | Multi-step procedure: metadata collection, sample prep, data acquisition, and multi-variate data analysis [2]. |
| Main Application | Verification and quantification of known substances. | Discovery of unknown markers, sample classification, authentication, and detection of unanticipated adulterants [3]. |
| Data Complexity | Lower; focused data easily analyzed with automated methods. | High; requires advanced data processing and modeling to parse multi-dimensional datasets [1]. |

Workflow and Experimental Protocol

The successful application of an NTM relies on a rigorously defined and validated workflow. The following protocol outlines the general steps for an NMR-based non-targeted method for food authenticity, which can be adapted for other spectroscopic or spectrometric platforms.

General Workflow for NMR-Based Non-Targeted Analysis

A unified workflow is essential for achieving consistent, high-quality metabolomics data that can be reproduced across different laboratories [2]. The general procedure encompasses several critical stages:

- Selection of Authentic Reference Samples: This initial step is critical for ensuring the representativeness of the sample population. A sufficient number of authentic samples, accounting for biological variance (e.g., from genetics, environment, and management), must be selected to build a robust reference database and classification model [2] [4].

- Sample Preparation: Depending on the food matrix, this step may involve processes such as extraction, concentration, or purification. The protocol must be optimized and standardized to minimize technical variation [2].

- NMR Measurement: The analysis is performed using optimized and agreed-upon acquisition methods and conditions (e.g., pulse sequences, temperature, number of scans) to ensure spectral reproducibility and comparability even across different instruments [2].

- Processing of NMR Spectra: Raw data (Free Induction Decay, FID) is processed (e.g., Fourier transformation, phasing, baseline correction) and often normalized (e.g., Constant Sum Normalization) to reduce unwanted technical variance [2].

- Data Analysis and Model Building: The processed spectral data, reduced to manageable variables, is analyzed using chemometric methods (e.g., PCA, PLS-DA) to build a classification model that can authenticate an unknown sample based on its metabolic fingerprint [2].
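The data reduction and chemometric steps can be sketched in a few lines. The sketch assumes the spectra are already Fourier transformed, phased and baseline corrected, and share a common chemical shift axis. It uses equal-width bucketing, constant sum normalization, and PCA computed via singular value decomposition. The 0.04 ppm bucket width and the excluded water region are hypothetical parameters rather than values prescribed in [2]. A supervised model such as PLS-DA would take the same normalized matrix as input.

```python
import numpy as np


def bucket_spectra(ppm, spectra, width=0.04, exclude=((4.70, 4.90),)):
    """Integrate spectra into equal-width chemical shift buckets.

    ppm     : 1D array of chemical shifts shared by all spectra
    spectra : 2D array (n_samples, n_points) of processed intensities
    exclude : ppm regions to leave out (hypothetical residual water region)
    Returns bucket centres and an (n_samples, n_buckets) matrix.
    """
    ppm = np.asarray(ppm, dtype=float)
    spectra = np.asarray(spectra, dtype=float)
    keep = np.ones(ppm.shape, dtype=bool)
    for lo, hi in exclude:
        keep &= ~((ppm >= lo) & (ppm <= hi))
    idx = np.floor((ppm - ppm.min()) / width).astype(int)
    n_buckets = idx.max() + 1
    buckets = np.zeros((spectra.shape[0], n_buckets))
    for b in range(n_buckets):
        cols = (idx == b) & keep
        buckets[:, b] = spectra[:, cols].sum(axis=1)  # summed intensity approximates the integral
    centres = ppm.min() + (np.arange(n_buckets) + 0.5) * width
    return centres, buckets


def constant_sum_normalize(x, total=100.0):
    """Scale each row so that its buckets sum to `total`."""
    x = np.asarray(x, dtype=float)
    return total * x / x.sum(axis=1, keepdims=True)


def pca_scores(x, n_components=2):
    """PCA via SVD of the mean-centred matrix.

    Returns sample scores and the explained variance ratio of each component.
    """
    xc = x - x.mean(axis=0)
    u, s, _ = np.linalg.svd(xc, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = s**2 / np.sum(s**2)
    return scores, explained[:n_components]
```

With real data, the PCA scores would be inspected for class clustering and outliers before a supervised classifier is trained on the same matrix.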

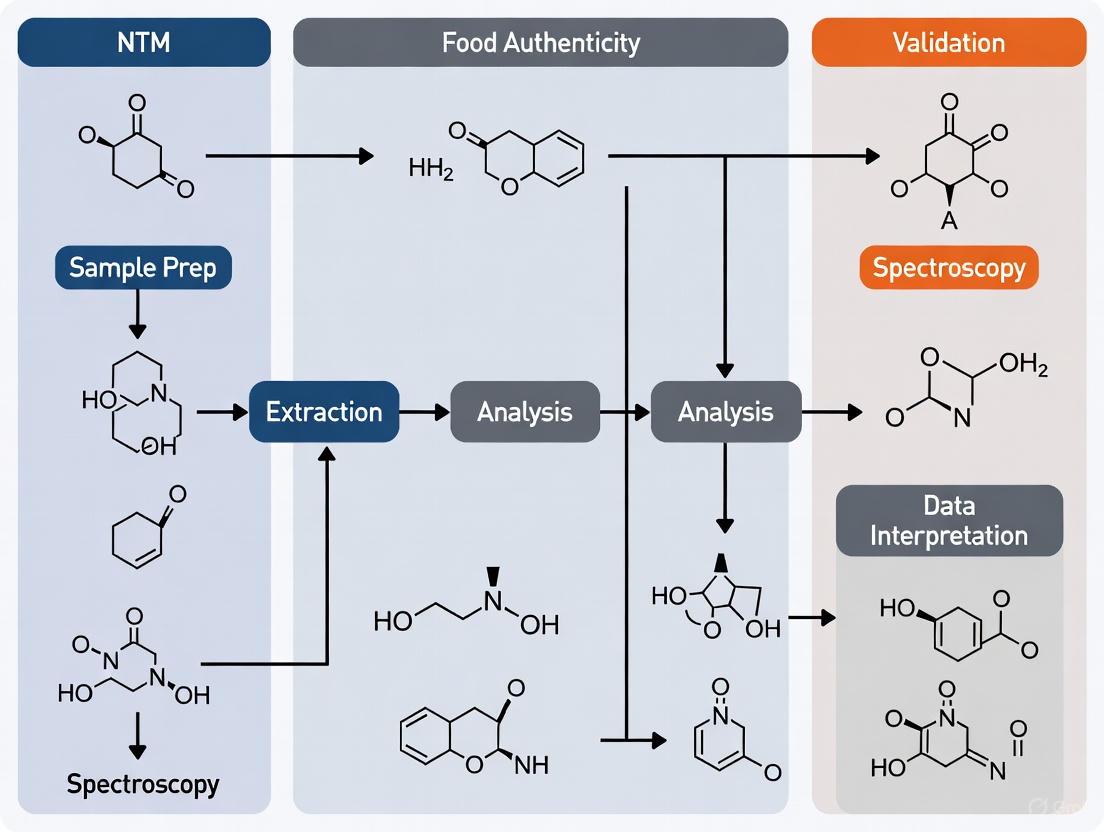

The following diagram visualizes this integrated workflow, highlighting the seamless connection between the physical sample and the data-driven decision.

Protocol: Fisher Ratio Analysis for GC-MS Data

Fisher ratio (F-ratio) analysis is a specific, supervised, non-targeted, discovery-based method used to compare chromatograms from different sample classes and identify features that best differentiate them [5]. The following is a detailed protocol for applying pixel-based F-ratio analysis to discover minute, class-distinguishing compounds in a complex matrix, such as detecting adulterants in food.

Objective: To discover non-native (e.g., adulterating) analytes in a complex food matrix (e.g., an edible oil) by comparing chromatograms of authentic and suspect samples.

Principles: The F-ratio is defined as the ratio of the class-to-class variance (\( \sigma_{cl}^2 \)) to the sum of the within-class variances (\( \sigma_{err}^2 \)) [5]. It is calculated as:

\[ \text{Fisher ratio} = \frac{\sigma_{cl}^2}{\sigma_{err}^2} \]

A high F-ratio indicates a feature (chromatographic peak) with large variation between sample classes relative to the variation within each class, marking it as a strong candidate for a class-distinguishing compound.

Experimental Steps:

Sample Preparation and Data Acquisition:

- Prepare samples from two classes (e.g., Class A: authentic oil, Class B: potentially adulterated oil). Include a sufficient number of replicates per class to reliably estimate within-class variance.

- Analyze all samples using GC-MS under consistent, optimized chromatographic conditions to generate the raw data files.

Data Pre-processing and Alignment:

- Convert raw data files into a standard format.

- Use appropriate software to perform retention time alignment on the total ion chromatograms to correct for minor shifts in retention time, which is crucial for minimizing false positives [5].

Pixel-Based F-Ratio Calculation:

- Export the fully aligned, three-dimensional (retention time × m/z × intensity) data.

- Using a custom script or software, calculate the F-ratio for every data point (pixel) in the retention time and m/z plane.

- This involves, for each pixel, calculating the variance between the mean intensities of Class A and Class B, and dividing by the sum of the variances within Class A and within Class B.
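The pixel-wise calculation can be sketched with NumPy, assuming the aligned data have been loaded into an array of shape (samples, retention time, m/z). The variance terms follow the standard one-way ANOVA form. The between-class sum of squares is divided by k - 1, and the pooled within-class sum of squares by N - k, where k is the number of classes and N the number of samples. We assume this corresponds to \( \sigma_{cl}^2 \) and \( \sigma_{err}^2 \) as defined in [5]. Setting F to zero for pixels with no within-class spread is an added convention.

```python
import numpy as np


def pixel_fisher_ratios(data, labels):
    """Pixel-wise Fisher ratio (one-way ANOVA F) for aligned GC-MS data.

    data   : (n_samples, n_rt, n_mz) array of aligned intensities
    labels : (n_samples,) class label for each sample
    Returns an (n_rt, n_mz) array of F-ratios.
    """
    data = np.asarray(data, dtype=float)
    labels = np.asarray(labels)
    classes = np.unique(labels)
    n_total, k = data.shape[0], classes.size
    grand_mean = data.mean(axis=0)

    ss_between = np.zeros(data.shape[1:])
    ss_within = np.zeros(data.shape[1:])
    for c in classes:
        block = data[labels == c]  # all samples of class c
        class_mean = block.mean(axis=0)
        ss_between += block.shape[0] * (class_mean - grand_mean) ** 2
        ss_within += ((block - class_mean) ** 2).sum(axis=0)

    var_cl = ss_between / (k - 1)        # class-to-class variance
    var_err = ss_within / (n_total - k)  # pooled within-class variance
    # Pixels with no within-class spread (e.g. empty baseline) are assigned F = 0
    safe = np.where(var_err > 0, var_err, 1.0)
    return np.where(var_err > 0, var_cl / safe, 0.0)
```

For example, a single pixel with Class A intensities 1 and 3 and Class B intensities 5 and 7 gives \( \sigma_{cl}^2 = 16 \) and \( \sigma_{err}^2 = 2 \), so F = 8.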

Generate and Filter the Hit List:

- Organize all calculated F-ratios into a ranked "hit list," with the highest F-ratio values at the top.

- To establish a statistical cutoff and avoid false positives, perform a null distribution analysis. This involves recalculating F-ratios multiple times with randomly permuted class labels to create a null F-ratio distribution. The 99th percentile of this null distribution can be used as a significance threshold [5].
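The following sketch illustrates the null distribution analysis and the ranked hit list. It shuffles the class labels repeatedly and recomputes the F-ratio for every pixel. It then takes the 99th percentile of the pooled permuted F-ratios as the cutoff. Several details are assumptions: pooling all pixels from all permutations, the number of permutations, and the seed. [5] may construct the null distribution differently. The F-ratio helper is repeated in compact form so the block runs on its own.

```python
import numpy as np


def fisher_ratio(data, labels):
    """One-way ANOVA F-ratio per pixel; data has shape (n_samples, ...)."""
    classes = np.unique(labels)
    grand = data.mean(axis=0)
    ss_b = np.zeros(data.shape[1:])
    ss_w = np.zeros(data.shape[1:])
    for c in classes:
        block = data[labels == c]
        mean_c = block.mean(axis=0)
        ss_b += len(block) * (mean_c - grand) ** 2
        ss_w += ((block - mean_c) ** 2).sum(axis=0)
    var_err = ss_w / (len(labels) - len(classes))
    safe = np.where(var_err > 0, var_err, 1.0)
    return np.where(var_err > 0, (ss_b / (len(classes) - 1)) / safe, 0.0)


def null_threshold(data, labels, n_perm=100, percentile=99.0, seed=0):
    """F-ratio cutoff taken from a permutation (null) distribution.

    Class labels are shuffled n_perm times. The F-ratios of every pixel from
    every permutation are pooled, and the requested percentile is returned.
    """
    rng = np.random.default_rng(seed)
    data = np.asarray(data, dtype=float)
    labels = np.asarray(labels)
    null = [fisher_ratio(data, rng.permutation(labels)).ravel() for _ in range(n_perm)]
    return float(np.percentile(np.concatenate(null), percentile))


def hit_list(data, labels, threshold):
    """(F-ratio, pixel index) pairs above the threshold, highest F first."""
    f = fisher_ratio(np.asarray(data, dtype=float), np.asarray(labels))
    hits = [(float(f[tuple(i)]), tuple(int(v) for v in i)) for i in np.argwhere(f > threshold)]
    return sorted(hits, reverse=True)
```

Each pixel index in the resulting list maps back to a retention time and m/z channel, whose mass spectrum is then examined in the hit identification step.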

Hit Identification:

- For the significant hits (peaks) above the threshold, examine the mass spectrum associated with the retention time and m/z of the feature.

- Use mass spectral libraries (e.g., NIST) to identify the compound responsible for the discriminating signal.

Advantages: Pixel-based F-ratio analysis has been shown to be more sensitive than peak table- or tile-based approaches, capable of discovering spiked analytes at low concentrations in a complex gasoline background [5].

The Scientist's Toolkit: Essential Research Reagent Solutions

The implementation of NTMs requires specific reagents, materials, and tools to ensure data quality and reproducibility. The following table details key components for a typical NTM workflow in food authenticity.

Table 2: Essential Reagents and Materials for Non-Targeted Methods

| Item Name | Function/Application | Example/Specification |
|---|---|---|
| Deuterated Solvent | Provides a field-frequency lock and internal reference for NMR spectroscopy; essential for stable instrument operation. | Deuterium oxide (D₂O) for polar extracts; chloroform-d (CDCl₃) for non-polar extracts. |
| Internal Standard | Serves as a reference for chemical shift (NMR) or retention time (MS), and for quantification. | 4,4-dimethyl-4-silapentane-1-sulfonic acid (DSS) for NMR; stable isotope-labeled compounds for MS. |
| Chemical Shift Reference | Provides a precise, internal point for chemical shift calibration in NMR spectra. | DSS or Tetramethylsilane (TMS) added to the sample at a known concentration [2]. |
| Quality Control (QC) Pool Sample | Monitors instrument stability and performance over a sequence of analyses. | A pooled sample made by combining small aliquots of all test samples, analyzed at regular intervals throughout the run. |
| Authentic Reference Materials | Used to build and validate the classification model; defines the "authentic" class. | Certified, well-characterized samples with verified provenance, covering expected biological variance [4]. |
| Proficiency Testing (PT) Schemes | Provides an external check of laboratory performance and method validity. | Schemes listed in databases such as EPTIS, which allow labs to compare their results with others [6]. |

Validation and Standardization Framework

For NTMs to transition from academic research to reliable tools for routine testing and official controls, rigorous validation is imperative [1]. Validating NTMs poses challenges not encountered with targeted methods: the entire analytical process, from sample preparation to the final classification result, must be validated as a whole to be considered fit-for-purpose [1].

Key performance characteristics to be assessed include:

- Discrimination Ability: The method's capability to correctly differentiate between defined classes (e.g., authentic vs. adulterated, or different geographical origins).

- Specificity: Demonstrated by the consistent detection of the unique pattern or fingerprint that characterizes a class.

- Transferability/Robustness: The reproducibility of results across different instruments, laboratories, and over time. The high reproducibility of NMR spectroscopy, for instance, makes it particularly suitable for building collaborative databases [2].

- Sensitivity and False Positive/Negative Rates: While more challenging to define than in targeted analysis, these can be estimated using a sufficient number of test samples with known class memberships.
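The rates named above follow directly from the confusion matrix, given predictions on test samples of known class. The sketch below treats "adulterated" as the positive class. That is an assumption, and the convention should be stated in any validation report. It also adds Wilson score intervals, because rates estimated from a few dozen samples carry considerable uncertainty. Labels and counts in the example are hypothetical.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    if n == 0:
        return (0.0, 1.0)
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return (max(0.0, centre - half), min(1.0, centre + half))


def binary_performance(y_true, y_pred, positive="adulterated"):
    """Sensitivity, specificity and false positive/negative rates for a two-class NTM.

    Assumes both classes are present in y_true.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "false_positive_rate": fp / (fp + tn),
        "false_negative_rate": fn / (fn + tp),
        "sensitivity_95ci": wilson_interval(tp, tp + fn),
        "specificity_95ci": wilson_interval(tn, tn + fp),
    }
```

With only 10 samples per class, a measured sensitivity of 0.8 has an interval spanning roughly 0.5 to 0.95. This is why the number of test samples matters as much as the point estimate.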

International efforts are ongoing to develop harmonized guidelines for NTM validation. The AOAC International has developed Standard Method Performance Requirements (SMPRs) for non-targeted testing of various foods, including extra virgin olive oil, honey, and milk, providing minimum performance criteria that methods must fulfil [6]. Furthermore, the Benchmarking and Publications for Non-Targeted Analysis Working Group (BP4NTA) was formed to establish consensus definitions, share best practices, and improve the transparency and reproducibility of peer-reviewed NTA studies [3]. These initiatives are critical for fostering global consumer confidence in the authenticity and quality of the food supply chain [2].

In food authenticity research, the value of NTMs lies in exploiting information from all measurable constituents within a sample, creating comprehensive analytical fingerprints that can be mined for patterns indicative of authenticity or fraud [7]. This approach is particularly valuable for detecting sophisticated adulteration, mislabeling, and substitution that might evade conventional targeted analyses [8]. The power of NTMs stems from the integration of two complementary domains. In the wet lab, physical samples are processed and measured using advanced analytical platforms. In the dry lab, complex data undergo computational processing and statistical modeling to extract meaningful biological or chemical insights [8]. This integration enables researchers to distinguish closely related food products, such as spelt and wheat, with high reliability. It works even in processed goods like flour and bread, where conventional morphological identification fails [8]. The following sections detail the core components, workflows, and validation considerations for implementing integrated NTMs in food authenticity research.

Core Components of an Integrated NTM Framework

Wet Lab Components

The wet lab component is responsible for converting physical samples into standardized, high-quality analytical data. This process begins with sample preparation, which must be robust and reproducible to minimize technical variation that could interfere with biological or chemical signatures. For grain authentication, as demonstrated in spelt/wheat discrimination, this typically involves homogenization and standardized extraction protocols to ensure consistent recovery of analytes across sample batches [8].

The cornerstone of many modern NTMs is analytical fingerprinting using platforms such as Liquid Chromatography coupled to High-Resolution Mass Spectrometry (LC-HRMS) [8]. This platform generates highly resolved spectra that capture subtle differences in food composition arising from genetic factors, growing conditions, or processing methods. The resulting fingerprints comprise data points across mass-to-charge (m/z) ratios and retention times (Rt), creating a rich, multidimensional dataset for subsequent pattern recognition [8]. The resolution and accuracy of the mass analyzer (e.g., Time-of-Flight or TOF) are critical, as they determine the ability to detect minute but consistent differences between authentic and adulterated products.

Dry Lab Components

The dry lab component transforms raw analytical data into actionable classification models. Data preprocessing is an essential first step and may include normalization, peak alignment, and feature extraction to reduce instrumental noise and enhance biological signals [8]. For LC-HRMS data, this often involves working within standardized m/z windows (such as the isolation windows used in SWATH acquisition) and arranging peak intensity values along the m/z and retention time dimensions [8].
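The sketch below shows one way to arrange LC-HRMS peak intensities on a fixed m/z × retention time grid, for example as image-like input for a CNN. The bin widths and ranges are assumptions for illustration, as are the summing of intensities that share a cell and the log scaling. This is not the preprocessing used in [8].

```python
import numpy as np


def rasterize_peaks(mz, rt, intensity, mz_range=(50.0, 1200.0), rt_range=(0.0, 20.0),
                    mz_bin=1.0, rt_bin=0.1, log_scale=True):
    """Map a peak list onto a fixed (n_rt, n_mz) grid.

    Peaks outside the ranges are dropped; peaks falling in the same cell are summed.
    The default ranges and bin widths are hypothetical.
    """
    mz = np.asarray(mz, dtype=float)
    rt = np.asarray(rt, dtype=float)
    intensity = np.asarray(intensity, dtype=float)
    n_mz = int(round((mz_range[1] - mz_range[0]) / mz_bin))
    n_rt = int(round((rt_range[1] - rt_range[0]) / rt_bin))
    i = np.floor((rt - rt_range[0]) / rt_bin).astype(int)  # retention time row
    j = np.floor((mz - mz_range[0]) / mz_bin).astype(int)  # m/z column
    ok = (i >= 0) & (i < n_rt) & (j >= 0) & (j < n_mz)
    grid = np.zeros((n_rt, n_mz))
    np.add.at(grid, (i[ok], j[ok]), intensity[ok])  # unbuffered add handles repeated cells
    return np.log1p(grid) if log_scale else grid
```

A fixed grid gives every sample an identical input shape, which a CNN requires. Log scaling compresses the dynamic range so that abundant ions do not dominate training.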

Statistical modeling and machine learning form the analytical core of the dry lab. Convolutional Neural Networks (CNNs) have proven effective for classifying complex spectral data because they learn discriminative patterns directly from the data, without requiring manual feature selection [8]. These models can be developed within a nested cross-validation (NCV) scheme. Hyperparameters are tuned in an inner loop, and performance is estimated in an outer loop on data not used for tuning. This yields realistic performance estimates and exposes overfitting, which is particularly important when sample sizes are limited [8]. The output of these models can be quantified using novel metrics such as the D score, which provides a quantitative measure of classification confidence and enables comparison across different models or experimental conditions [8].

Table 1: Core Components of an Integrated NTM for Food Authenticity

| Component | Sub-Process | Key Techniques | Output |
|---|---|---|---|
| Wet Lab | Sample Preparation | Homogenization, extraction | Standardized analyte mixture |
| | Analytical Fingerprinting | LC-HRMS, SWATH acquisition | 2D spectra (m/z vs Rt with intensities) |
| Dry Lab | Data Preprocessing | Normalization, peak alignment, feature extraction | Cleaned, standardized feature set |
| | Statistical Modeling | Convolutional Neural Networks (CNN), Nested Cross-Validation | Trained classification model, D scores |

Experimental Workflow and Protocol

Integrated NTM Workflow

The following diagram illustrates the complete integrated workflow for an NTM in food authenticity research, from sample receipt to final classification:

Detailed Wet Lab Protocol: LC-HRMS Fingerprinting for Grain Authentication

Sample Preparation:

- Homogenization: Begin with 100 g of grain sample (spelt or wheat cultivars). Mill to a consistent particle size using a standardized milling procedure.

- Extraction: Weigh 50 mg of homogenized material into extraction tubes. Add 1 mL of extraction solvent (e.g., methanol:water, 80:20 v/v) and vortex for 30 seconds.

- Sonication: Sonicate samples for 15 minutes at room temperature, then centrifuge at 14,000 × g for 10 minutes.

- Filtration: Transfer the supernatant to LC vials through 0.2 μm PTFE filters.

LC-HRMS Analysis:

- Chromatographic Separation: Inject 5 μL of extract onto a reversed-phase C18 column (2.1 × 100 mm, 1.7 μm) maintained at 40 °C. Use a binary gradient with mobile phase A (0.1% formic acid in water) and B (0.1% formic acid in acetonitrile) at a flow rate of 0.3 mL/min.

- Mass Spectrometric Detection: Operate the HRMS in positive electrospray ionization mode with data-independent acquisition (SWATH). Set the mass range to m/z 50-1200 with a resolution > 30,000. Use a collision energy spread of 20-50 eV for fragmentation.

- Quality Control: Inject a pooled quality control (QC) sample every 10 injections to monitor system stability.
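The injection scheme can be generated programmatically. The sketch below randomizes the sample order and inserts a pooled QC injection after every 10 sample injections. Two choices go beyond the protocol and are assumptions: randomization, commonly used to decouple class membership from instrument drift, and the extra QC injections at the start and end of the run. Sample names, the QC label, and the seed are hypothetical.

```python
import random


def build_sequence(samples, qc_every=10, qc_name="QC_pool", seed=42):
    """Randomized injection list with pooled QC injections.

    A QC injection is placed first, after every `qc_every` sample injections,
    and last (if the run does not already end with one).
    """
    order = list(samples)
    random.Random(seed).shuffle(order)  # fixed seed keeps the run order reproducible
    sequence = [qc_name]
    for n, sample in enumerate(order, start=1):
        sequence.append(sample)
        if n % qc_every == 0:
            sequence.append(qc_name)
    if sequence[-1] != qc_name:
        sequence.append(qc_name)
    return sequence
```

For 25 samples this yields 29 injections: QC, 10 samples, QC, 10 samples, QC, 5 samples, QC.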

Detailed Dry Lab Protocol: CNN Model Development for Spelt/Wheat Discrimination

Data Preprocessing:

- Peak Alignment: Use reference-based alignment to correct for retention time drift across samples.

- Feature Detection: Extract ion features with intensity >1000 counts and presence in at least 80% of samples in at least one group.

- Data Normalization: Apply probabilistic quotient normalization to correct for overall intensity differences.

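- Data Augmentation: For small datasets, apply minor random variations to expand the training set and improve model robustness. Apply augmentation only to the training data of each fold, so that augmented copies of a sample never appear in the corresponding test fold.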
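The feature filter and normalization above can be sketched with NumPy. The filter follows the stated rule: intensity > 1000 counts in at least 80% of the samples of at least one group. The PQN function implements the basic form of probabilistic quotient normalization with the median sample as reference, omitting the optional preliminary total-area normalization. Treating missing values as zeros is an assumption.

```python
import numpy as np


def filter_features(x, groups, min_intensity=1000.0, min_fraction=0.8):
    """Keep features detected in >= min_fraction of the samples of at least one group.

    x      : (n_samples, n_features) intensity matrix, missing values as 0
    groups : (n_samples,) group labels, e.g. "spelt" / "wheat"
    Returns the filtered matrix and the boolean mask of kept features.
    """
    x = np.asarray(x, dtype=float)
    groups = np.asarray(groups)
    detected = x > min_intensity
    keep = np.zeros(x.shape[1], dtype=bool)
    for g in np.unique(groups):
        keep |= detected[groups == g].mean(axis=0) >= min_fraction
    return x[:, keep], keep


def pqn(x, reference=None):
    """Probabilistic quotient normalization.

    Each sample is divided by the median of its feature-wise quotients to a
    reference profile (by default the median sample). Features that are zero
    in the reference are skipped when computing the quotients.
    """
    x = np.asarray(x, dtype=float)
    ref = np.median(x, axis=0) if reference is None else np.asarray(reference, dtype=float)
    valid = ref > 0
    factors = np.median(x[:, valid] / ref[valid], axis=1)
    return x / factors[:, None], factors
```

PQN assumes that most features do not change between samples, so the median quotient reflects overall dilution rather than genuine compositional differences.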

CNN Architecture and Training:

- Input Layer: Format preprocessed 2D spectral data (m/z × retention time) as input images.

- Network Architecture: Implement a CNN with:

  - Two convolutional layers with 32 and 64 filters, respectively

  - Max-pooling layers (2×2) after each convolutional layer

  - A fully connected layer with 128 units

  - An output layer with softmax activation for binary classification

- Training Parameters: Use nested cross-validation with 5 outer folds and 3 inner folds. Train for 100 epochs with early stopping (patience=10). Use Adam optimizer with learning rate of 0.001.

- Validation: Evaluate model performance on external validation set including artificially mixed spectra and processed goods.
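The nested cross-validation scheme (5 outer folds, 3 inner folds) can be sketched independently of the network itself. To keep the block self-contained, it substitutes a simple nearest-centroid classifier for the CNN. The classifier has one tunable hyperparameter, the number of highest-variance features retained. The inner loop selects that hyperparameter, and the outer loop estimates performance on data never used for tuning. The classifier, the candidate grid, and the stratified fold assignment are assumptions for illustration.

```python
import numpy as np


def stratified_folds(y, k, rng):
    """Assign each sample to one of k folds, spreading each class across folds."""
    folds = np.empty(len(y), dtype=int)
    for c in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == c))
        folds[idx] = np.arange(len(idx)) % k
    return folds


def fit_centroids(x, y, n_features):
    """Stand-in model: keep the highest-variance columns, store class centroids."""
    cols = np.argsort(x.var(axis=0))[::-1][:n_features]
    classes = np.unique(y)
    centroids = np.array([x[y == c][:, cols].mean(axis=0) for c in classes])
    return cols, classes, centroids


def predict(model, x):
    cols, classes, centroids = model
    d = ((x[:, cols][:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[np.argmin(d, axis=1)]


def nested_cv(x, y, grid=(5, 20, 50), outer_k=5, inner_k=3, seed=0):
    """Return outer-fold accuracies and the hyperparameter chosen in each outer fold."""
    rng = np.random.default_rng(seed)
    x, y = np.asarray(x, dtype=float), np.asarray(y)
    outer = stratified_folds(y, outer_k, rng)
    accuracies, chosen = [], []
    for o in range(outer_k):
        x_tr, y_tr = x[outer != o], y[outer != o]
        inner = stratified_folds(y_tr, inner_k, rng)
        best, best_score = None, -1.0
        for n_feat in grid:  # inner loop: tune on outer-training data only
            scores = []
            for i in range(inner_k):
                model = fit_centroids(x_tr[inner != i], y_tr[inner != i], n_feat)
                scores.append(np.mean(predict(model, x_tr[inner == i]) == y_tr[inner == i]))
            if np.mean(scores) > best_score:
                best, best_score = n_feat, np.mean(scores)
        model = fit_centroids(x_tr, y_tr, best)  # refit on the full outer-training set
        accuracies.append(np.mean(predict(model, x[outer == o]) == y[outer == o]))
        chosen.append(best)
    return np.array(accuracies), chosen
```

Replacing `fit_centroids` and `predict` with CNN training and inference would leave the fold logic unchanged. Any augmentation would then be applied inside the training portion of each fold.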

Table 2: Validation Parameters for NTM in Food Authenticity

| Performance Characteristic | Assessment Method | Target Value |
|---|---|---|
| Repeatability | Intra-day precision (n = 5) | CV < 15% |
| Reproducibility | Inter-day/inter-laboratory precision | CV < 20% |
| Recovery Yield | Spiked samples | 67-131% |
| Inter-laboratory Agreement | Multiple laboratory comparison | > 80% |
| Classification Accuracy | External validation set | > 90% |

Validation Framework for NTMs

Validation of NTMs requires specialized approaches that differ from traditional method validation. The fit-for-purpose principle guides validation, with parameters tailored to the specific authentication question [7]. Key validation parameters include:

Analytical Validation: This encompasses traditional parameters such as repeatability (intra-day precision) and reproducibility (inter-day and inter-laboratory precision). In spelt/wheat discrimination studies, repeatability should yield a coefficient of variation (CV) below 15% for peak intensities in quality control samples [8]. Reproducibility is demonstrated through inter-laboratory comparisons targeting > 80% agreement [9] [10]. Recovery yield, assessed using spiked samples, should fall within 67-131% [9] [10].
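The repeatability and recovery criteria can be checked with a few lines of code. The sketch computes the per-feature CV (%) across replicate pooled-QC injections, using the sample standard deviation. It also computes the fraction of features meeting the 15% limit and the recovery (%) of a spiked amount. The recovery formula is the common textbook definition: spiked minus unspiked response, relative to the amount added. Its use here is an assumption about how [9] [10] computed recovery.

```python
import numpy as np


def percent_cv(qc):
    """Per-feature coefficient of variation (%) across replicate QC injections.

    qc: (n_injections, n_features) intensities of the pooled QC sample.
    """
    qc = np.asarray(qc, dtype=float)
    return 100.0 * qc.std(axis=0, ddof=1) / qc.mean(axis=0)


def fraction_within(cv, limit=15.0):
    """Fraction of features whose CV (%) is below `limit`."""
    return float(np.mean(np.asarray(cv, dtype=float) < limit))


def recovery(spiked, unspiked, added):
    """Recovery (%) = (spiked result - unspiked result) / amount added * 100."""
    spiked, unspiked, added = (np.asarray(v, dtype=float) for v in (spiked, unspiked, added))
    return 100.0 * (spiked - unspiked) / added
```

A recovery of 95% (for example, 14.5 units measured after adding 10 units to a sample containing 5 units) falls inside the 67-131% window.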

Model Validation: For the dry lab component, robust validation requires independent sample sets that were not used in model training. The nested cross-validation approach keeps hyperparameter tuning separate from performance estimation, guarding against overfitting and providing realistic performance estimates [8]. External validation should include challenging samples such as artificially mixed spectra, processed goods, and atypical cultivars to demonstrate real-world applicability [8].

The following diagram illustrates the validation framework for NTMs:

Essential Research Reagent Solutions

Successful implementation of integrated NTMs requires specific research reagents and materials. The following table details essential components for the spelt/wheat discrimination protocol:

Table 3: Essential Research Reagents and Materials for NTM Food Authentication

| Category | Specific Material/Reagent | Function in Protocol | Specifications |
|---|---|---|---|
| Chromatography | Reversed-phase C18 column | Separation of complex extracts | 2.1 × 100 mm, 1.7 μm particle size |
| | Formic acid in water (0.1%) | Mobile phase A | LC-MS grade |
| | Formic acid in acetonitrile (0.1%) | Mobile phase B | LC-MS grade |
| Sample Preparation | Methanol (80%) | Extraction solvent | LC-MS grade |
| | PTFE filters (0.2 μm) | Sample clarification | Sterile, non-binding |
| Mass Spectrometry | Reference mass solution | Mass accuracy calibration | Suitable for m/z range 50-1200 |
| | Tuning and calibration solution | Instrument performance verification | Manufacturer-specified |
| Data Analysis | CNN software framework | Model development and training | Python with TensorFlow/PyTorch |
| | Spectral processing tools | Feature extraction and alignment | OpenMS, XCMS, or similar |

Application in Food Authenticity Research

The integrated NTM approach has demonstrated particular efficacy in challenging authentication scenarios. In the spelt/wheat case study, the method successfully distinguished eleven cultivars each of spelt and wheat, achieving reliable classification even for processed goods (spelt bread and flour) and atypical cultivars not included in the original model training [8]. This capability is significant for regulatory enforcement, as German guidelines stipulate that spelt bread must contain at least 90% spelt, creating a need for accurate quantification in mixed matrices [8].

The approach also shows promise for addressing other food fraud challenges, including geographic origin verification, detection of adulterants, and verification of organic growing claims [8]. EU legislation such as Regulation (EU) 2017/625 on official controls and Regulation (EU) No 1169/2011 on food information to consumers emphasizes correct labeling and food safety. NTMs provide analytical support for the associated compliance monitoring and enforcement actions [8].

The integration of wet lab and dry lab processes creates a powerful synergy for food authentication. The wet lab generates comprehensive, high-quality analytical data, while the dry lab extracts subtle patterns and relationships that would be undetectable through conventional analysis. This integrated framework represents a significant advancement in food authenticity research, providing a robust, flexible approach for addressing evolving food fraud challenges.

The Critical Role of Reference Databases in NTM Classification

In the field of food authenticity research, Non-Targeted Methods (NTMs) have emerged as a powerful analytical technique for detecting food fraud and verifying product origin, quality, and safety [1]. Unlike targeted approaches that focus on predefined analytes, NTMs exploit a comprehensive "fingerprint" of the sample, combining high-resolution analytical instruments with advanced chemometrics and machine learning algorithms [1] [11]. The reliability of these methods depends critically on the quality and scope of reference databases that form the foundation for statistical models and classification systems. This application note examines the pivotal role of reference databases in NTM classification, providing detailed protocols and considerations for their development and validation within food authenticity research.

Database Fundamentals for NTM Classification

Core Components and Terminology

Non-Targeted Methods consist of two fundamental components: "wet lab" procedures encompassing all steps until analytical measurements, and "dry lab" procedures involving chemometric/statistical/machine learning models that parse multi-dimensional datasets [1]. Reference databases serve as the critical bridge between these components, providing the empirical data needed to define sample populations and classes (e.g., olive oil from Italy versus Spain, wild versus farmed salmon) [1].

Database Diversity in Food Authenticity

The term "database" in NTM contexts encompasses diverse technological implementations, from cloud-based storage and management systems to local repositories [1]. These databases address different classification challenges in food authentication, including geographic origin, production methods (organic versus conventional), biological species, and processing techniques [1]. The construction of these databases must accommodate various analytical technologies, including chromatographic separation coupled with mass spectrometry, NMR, FTIR, NIR, Raman spectroscopy, and next-generation sequencing (NGS) technologies [1].

Table 1: Analytical Platforms and Their Applications in Food Authenticity NTMs

| Analytical Platform | Measured Signals | Example Food Applications | Reference |
|---|---|---|---|
| GC-MS, LC-MS | Chromatograms, mass spectra | Virgin olive oil quality grading, honey geographical origin | [1] [11] |
| NMR Spectroscopy | Spectral fingerprints | Detection of protein hydrolysates in turkey meat | [11] |
| FT-NIR Spectroscopy | Near-infrared spectra | Truffle species differentiation | [11] |
| DART-HRMS | Mass spectra | Chestnut honey geographical origin discrimination | [11] |
| NGS/Metabarcoding | DNA sequences | Multi-species identification in complex products | [1] |

Database Construction and Curation Protocols

Sample Collection and Preparation

The development of a robust reference database begins with comprehensive sample collection that accurately represents the natural variability within defined classes. For geographical origin authentication, this includes samples across multiple harvest years, growing regions, and processing facilities. Sample preparation for NTMs typically employs simple protocols to capture as many matrix components as possible, contrasting with targeted methods that often require complex, selective extractions [11].

Protocol 3.1: Representative Sample Collection for Food Authenticity Databases

- Define classification objectives: Clearly establish the authentication goal (geographical origin, species, production method)

- Establish inclusion criteria: Determine sample requirements (minimum number per class, sourcing documentation)

- Collect reference materials: Obtain certified reference materials where available

- Document metadata: Record comprehensive sample information including origin, date, processing details, and storage conditions

- Implement quality controls: Include positive and negative controls across analytical batches
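The metadata and inclusion-criteria steps lend themselves to a simple structured record. The sketch below uses a frozen dataclass for per-sample metadata. It rejects duplicate sample IDs and flags classes that fall below a minimum sample count. The field names and the default minimum of 30 samples per class are hypothetical choices, not requirements from the cited literature.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class SampleRecord:
    """Minimal metadata for one authentic reference sample (illustrative fields)."""
    sample_id: str
    class_label: str          # e.g. geographic origin, species, production method
    origin: str
    collection_date: date
    processing: str
    storage: str
    crm_id: Optional[str] = None  # certified reference material ID, if any


def check_inclusion(records, min_per_class=30):
    """Return {class: count} for classes below the minimum sample count.

    Raises ValueError if a sample_id occurs more than once.
    """
    ids = [r.sample_id for r in records]
    if len(ids) != len(set(ids)):
        raise ValueError("duplicate sample_id in reference set")
    counts = Counter(r.class_label for r in records)
    return {c: n for c, n in counts.items() if n < min_per_class}
```

An empty result means every class meets the inclusion criterion; any listed class needs further sampling before database building.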

Analytical Measurement and Data Acquisition

Consistent analytical performance is fundamental to building reliable databases. The analytical methods used for database construction must be validated regarding their performance characteristics to ensure future standardization potential [1].

Protocol 3.2: Standardized Analytical Procedures for Database Building

- Method validation: Perform single-laboratory validation of analytical methods before database building

- Instrument calibration: Establish and document calibration procedures for all instruments

- Quality control samples: Analyze QC samples at regular intervals throughout data acquisition

- Standard operating procedures: Develop detailed SOPs for all analytical steps

- Data formatting: Implement consistent file naming conventions and data formats

Data Processing and Quality Control Pipeline

The exponential growth of reference data necessitates robust computational pipelines for database construction and quality control [12]. As demonstrated in metagenomic classification, database quality directly impacts research conclusions, with contamination leading to spurious classifications [12].

Protocol 3.3: Database Quality Control and Curation

- Sequence decontamination: Apply tools like Conterminator to identify and remove cross-contamination [12]

- Low-complexity masking: Use algorithms like NCBI dustmasker to mask uninformative regions [12]

- Length filtering: Remove sequences below established thresholds (e.g., <100 bases for genomic data) [12]

- Taxonomic consistency: Verify alignment between sequence data and taxonomic information [12]

- Version documentation: Maintain comprehensive records of database versions and build parameters
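Version documentation can be automated by writing a manifest at every build. The sketch below records a version string, the build parameters, a UTC timestamp, and a SHA-256 checksum for each database file. A classification result can then be traced back to the exact reference data used. The manifest layout and file names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_file(path, chunk=1 << 20):
    """SHA-256 hex digest of a file, read in 1 MiB chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as fh:
        for block in iter(lambda: fh.read(chunk), b""):
            h.update(block)
    return h.hexdigest()


def write_manifest(files, params, version, out_path):
    """Write a JSON record of a database build and return it as a dict."""
    manifest = {
        "version": version,
        "built_utc": datetime.now(timezone.utc).isoformat(),
        "parameters": params,  # e.g. length threshold, masking settings
        "files": {Path(f).name: sha256_file(f) for f in sorted(files)},
    }
    Path(out_path).write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest
```

Storing the checksums alongside the build parameters means that two builds can be compared exactly, which addresses the asynchronous-update issue listed in Table 2 below.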

Table 2: Database Quality Control Measures and Their Impacts

| Quality Control Step | Tool/Method | Impact on Database Performance | Reference |
|---|---|---|---|
| Reference Decontamination | Conterminator | Reduces spurious classifications; eliminated false Plasmodium annotations in metagenomic study | [12] |
| Low-Complexity Masking | dustmasker | Removes uninformative regions, improves classification specificity | [12] |
| Length Filtering | Custom scripts (Recentrifuge) | Excludes short sequences that reduce classification accuracy | [12] |
| Taxonomic Validation | NCBI Taxonomy Database | Ensures consistent taxonomic assignments across database | [12] |
| Temporal Synchronization | Custom pipeline | Minimizes inconsistencies from asynchronous updates between sequence and taxonomy databases | [12] |

Database Implementation in NTM Workflows

Classification Algorithms and Model Building

The transition from raw database to functional classification system requires appropriate chemometric approaches. Multiple studies demonstrate the effectiveness of combining spectroscopic or chromatographic data with multivariate statistics for food authentication [11].

Diagram 1: NTM Classification Workflow

Experimental Design for Method Validation

Validating NTM classification systems requires specialized approaches that differ from traditional method validation. The European Union Official Controls Regulation requires control laboratories to apply standardized methods when available, or otherwise methods validated through single-laboratory validation [1].

Protocol 4.2: Validation of NTM Classification Systems

- Performance characteristics: Establish accuracy, precision, specificity, and robustness metrics

- Class representation: Ensure validation samples cover every class and are independent of the training set

- Statistical significance: Implement appropriate significance testing for classification rates

- Cross-validation: Apply k-fold or leave-one-out cross-validation procedures

- External validation: Validate model performance with independently sourced samples
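For the significance-testing item, one simple option is an exact one-sided binomial test. It compares the observed number of correct classifications against the rate expected by chance. The sketch below assumes independent test samples and a user-supplied chance rate, for example 0.5 for two balanced classes, or the proportion of the largest class when classes are unbalanced. The function name is hypothetical.

```python
from math import comb


def binomial_p_value(n_correct, n_total, chance_rate):
    """Exact one-sided binomial test.

    Returns P(X >= n_correct) for X ~ Binomial(n_total, chance_rate), i.e. the
    probability of doing at least this well by guessing alone.
    """
    if not 0 <= n_correct <= n_total:
        raise ValueError("n_correct must lie between 0 and n_total")
    if not 0.0 < chance_rate < 1.0:
        raise ValueError("chance_rate must lie strictly between 0 and 1")
    return sum(
        comb(n_total, k) * chance_rate**k * (1.0 - chance_rate) ** (n_total - k)
        for k in range(n_correct, n_total + 1)
    )
```

For example, suppose 18 of 20 external samples are classified correctly in a balanced two-class problem. The p-value is (190 + 20 + 1) / 2²⁰ ≈ 2.0 × 10⁻⁴.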

Case Studies in Food Authenticity

Geographical Origin Authentication

Multiple studies demonstrate the effectiveness of NTMs with comprehensive reference databases for determining geographical origin. Kim et al. used hydrophilic and lipophilic metabolite profiling via GC-MS with OPLS-DA to differentiate perilla and sesame seeds from China and Korea, identifying glycolic acid as a potential biomarker [11]. Similarly, Lippoli et al. developed a non-targeted method using DART-HRMS combined with multivariate statistics to discriminate chestnut honey from Portugal and Italy and acacia honey from Italy and China [11].

Species and Production Method Discrimination

The combination of analytical techniques with reference databases enables precise discrimination of species and production methods. Grazina et al. used fatty acid profiles determined by GC-FID with machine learning classifiers to differentiate wild from farmed salmon based on seventeen chemical features [11]. Segelke et al. demonstrated that FT-NIR spectroscopy with chemometrics could differentiate the valuable white truffle (Tuber magnatum) from the morphologically similar but less valuable Tuber borchii with 100% accuracy [11].

Table 3: Performance of NTM Approaches in Food Authentication Case Studies

| Food Product | Authentication Challenge | Analytical Technique | Classification Performance | Reference |
|---|---|---|---|---|
| Olive Oil | Commercial category (extra virgin, virgin, lampante) | Flash GC with PLS-DA | High percentage correct classification in cross and external validation | [11] |
| Truffles | Species differentiation (T. magnatum vs T. borchii) | FT-NIR with chemometrics | 100% accuracy for expensive white truffle differentiation | [11] |
| Turkey Meat | Detection of protein hydrolysate adulteration | GC-MS and NMR spectroscopy | Detection of adulteration missed by targeted amino acid analysis | [11] |
| Honey | Geographical origin discrimination | ICP-OES with LDA | Successful distinction of honeys from industrial vs. non-industrial areas | [11] |
| Salmon | Wild vs. farmed discrimination | GC-FID fatty acids with machine learning | Successful discrimination of production method and geographical origin | [11] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Computational Tools for NTM Database Development

| Item/Category | Function in NTM Workflow | Specific Examples/Considerations |
|---|---|---|
| Reference Materials | Define class characteristics in database | Certified reference materials, geographically sourced verified samples |
| Chromatography-Mass Spectrometry Systems | Generate comprehensive chemical profiles | GC-MS, LC-MS systems with high resolution capabilities |
| Spectroscopic Instruments | Provide rapid fingerprinting capabilities | FT-NIR, NMR, Raman spectrometers for non-destructive analysis |
| DNA Sequencing Platforms | Species identification via genetic markers | Next-generation sequencing for metabarcoding approaches |
| Chemical Standards | Instrument calibration and method validation | Pure analytical standards for quality control procedures |
| Data Processing Software | Extract features from raw instrument data | XCMS, MS-DIAL, custom preprocessing scripts |
| Statistical Analysis Packages | Develop classification models | R and Python with specialized packages (e.g., scikit-learn); commercial software such as SIMCA |
| Database Management Systems | Store and query reference data | SQL, NoSQL databases depending on data structure and volume |
| High-Performance Computing | Process large-scale datasets and build models | Cluster computing resources for database construction and analysis |

Reference databases for non-targeted methods must be treated as dynamic entities that require continuous quality control, validation, and updating, in line with software development best practices [12]. Sequence data are growing exponentially, with GenBank and the NCBI nt database expanding continuously, which presents both opportunities and challenges for NTM classification [12]. Future developments should focus on standardized data formats, interoperable database structures, and automated quality control pipelines to enhance reproducibility and reliability across laboratories.

The critical importance of reference databases in NTM classification is evident across food authenticity applications. From truffle speciation to geographical origin of honey and production method of salmon, the comprehensiveness and quality of the reference database directly determines the accuracy and reliability of authentication. As the field advances, treating reference databases with the same rigor as analytical instrumentation will be essential for advancing food authenticity research and combating increasingly sophisticated food fraud.

The global food supply chain faces escalating challenges from food fraud, defined as the deliberate and intentional adulteration, substitution, or misrepresentation of food products for economic gain [13]. Concurrently, consumer demand for transparency regarding food origin, safety, and authenticity has surged, driven by heightened health consciousness and several highly publicized food scandals [13] [14]. These dual pressures represent the key drivers necessitating advanced analytical solutions to verify food authenticity and protect consumers and legitimate producers.

Non-targeted methods (NTMs) represent a paradigm shift in food authenticity testing. Unlike traditional targeted methods that test for predefined analytes, NTMs exploit a comprehensive "fingerprint" of a food sample, enabling the detection of known, unknown, and unexpected adulterants [15] [16]. This application note details the integration of NTMs, specifically liquid chromatography–high-resolution mass spectrometry (LC–HRMS), within a robust validation framework to effectively address contemporary food fraud challenges and meet the demand for supply chain transparency.

Market and Regulatory Context

The global food authenticity testing market is experiencing significant growth, with an estimated value of approximately $3.6 billion in 2023 and a projected compound annual growth rate (CAGR) of around 7% between 2025 and 2033 [14]. The market is concentrated among large multinational testing companies, and its expansion is fueled by several converging factors:

- Stringent Regulations: Governments worldwide are implementing stricter regulations on food safety, traceability, and labeling, mandating rigorous testing protocols. Non-compliance results in millions of dollars in fines annually [14].

- Economic Impact of Fraud: Food fraud inflicts substantial economic costs, including unfair competition and financial losses for legitimate businesses, extending to public health implications [17] [13].

- Consumer Awareness: Increasing consumer awareness of ethical sourcing, sustainability, and food safety is pushing brands to proactively demonstrate product authenticity to build trust and enhance brand reputation [14] [13].

Certain product categories are disproportionately targeted for fraud. The table below summarizes the market characteristics of key segments.

Table 1: Market Characteristics of High-Risk Food Authenticity Segments

| Food Segment | Market Share | Common Fraud Types | Primary Driver for Testing |
|---|---|---|---|
| Dairy Products | ~25% [14] | Milk adulteration, substitution [14] | Public health protection from hazardous adulterants [14] |
| Oils and Fats | ~20% [14] | Adulteration of olive oil with cheaper substitutes [14] | Guaranteeing product quality and preventing consumer deception [14] |
| Honey | ~15% [14] | Adulteration with sugar syrups, mislabeling [14] | Ensuring purity and quality to maintain consumer trust [14] |
| Meat and Grain | Significant [18] [13] | Species substitution, mislabeling of geographical origin [18] [13] | Economic fraud prevention, ethical sourcing verification [18] [13] |

Non-Targeted Method (NTM) Fundamentals and Advantages

NTMs comprise two core components: a "wet lab" procedure for analytical measurement and a "dry lab" procedure for statistical modeling and data evaluation [18]. The fundamental advantage of NTMs is their ability to conduct a comprehensive analysis without prior knowledge of potential adulterants, making them uniquely suited for detecting sophisticated and evolving fraud.

Key advantages include:

- Detection of Unknowns: Capable of identifying unexpected contaminants or adulterants, as illustrated by the melamine milk scandal [16].

- Retrospective Analysis: Data can be archived and re-interrogated later when new information about a potential adulterant emerges [16].

- Broad Applicability: Useful for multiple applications, including food authenticity, foodomics, and nutrient analysis, by providing a complete view of sample composition [16].

Detailed Experimental Protocol: LC-HRMS for Food Authentication

The following protocol details a specific NTM application for distinguishing spelt from wheat, a common fraud driven by the price premium of spelt [18]. The same workflow can be adapted to other grain and food matrices.

Research Reagent Solutions and Essential Materials

Table 2: Essential Materials and Reagents for LC-HRMS Non-Targeted Analysis

| Item | Specification/Function |
|---|---|
| Liquid Chromatograph | System capable of high-pressure gradient separations. |
| Mass Spectrometer | High-resolution accurate mass (HRAM) analyzer (e.g., Time-of-Flight or Orbitrap). |
| Chromatography Column | Reversed-phase C18 column, 100 × 2.1 mm, 1.8 µm particle size. |
| Mobile Phase A | 0.1% Formic acid in water. Aids in protonation for positive electrospray ionization. |
| Mobile Phase B | 0.1% Formic acid in acetonitrile or methanol. |
| Quality Control Mixture | Non-targeted Standard Quality Control (NTS/QC) mixture containing ~89 compounds with diverse physicochemical properties to monitor instrument performance [16]. |
| Sample Solvent | Appropriate solvent compatible with LC-MS (e.g., water, acetonitrile, methanol). |
| Isotopically Labeled Standards | For checking retention time stability and ionization efficiency [19]. |

Step-by-Step Workflow

Figure 1: Experimental workflow for non-targeted food authentication using LC-HRMS and machine learning.

Sample Preparation:

- Collect authentic reference samples (e.g., 11 cultivars each of verified spelt and wheat) [18].

- For solid samples, homogenize and perform a simple extraction (e.g., with methanol/water) to capture a wide range of metabolites. The goal is a non-selective extraction to support the non-targeted approach [13].

- Include quality control (QC) samples, such as a pooled sample from all individual samples, and analyze them intermittently throughout the sequence to monitor instrument stability [16].

LC-HRMS Analysis:

- Chromatography: Use a reversed-phase C18 column with a gradient elution from 5% to 100% organic mobile phase over 20-30 minutes. The specific gradient should be optimized for the sample matrix.

- Mass Spectrometry: Acquire data in data-dependent acquisition (DDA) or data-independent acquisition (DIA, e.g., SWATH) mode. Ensure mass accuracy is maintained within 3 ppm [19]. Collect both MS1 (precursor) and MS/MS (fragmentation) data.
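The 3 ppm mass-accuracy criterion refers to the relative mass error, (m/z measured − m/z theoretical) / m/z theoretical × 10⁶. A minimal sketch of that check follows. The function names and the m/z values in the usage note are illustrative, not taken from the cited studies.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Relative mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def within_tolerance(measured_mz, theoretical_mz, tol_ppm=3.0):
    """True if the absolute mass error does not exceed `tol_ppm`."""
    return abs(ppm_error(measured_mz, theoretical_mz)) <= tol_ppm
```

For instance, take a reference ion expected at m/z 200.0000. A measurement of m/z 200.0005 has an error of 2.5 ppm and passes, while a reading of m/z 200.0008 (4.0 ppm) fails.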

Data Pre-processing:

- Convert raw data files to an open format (e.g., mzML).

- Use software (vendor-specific or open-source like XCMS) for peak picking, alignment across samples, and intensity normalization.

- The output is a data matrix of molecular features (defined by m/z and retention time) and their intensities across all samples.
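To make the shape of that output concrete, the sketch below groups peaks from several samples into features using m/z (ppm) and retention-time tolerances. It then fills a feature-by-sample intensity matrix. This is a deliberately simplified, greedy stand-in for the alignment performed by tools such as XCMS, without the retention-time correction and gap filling those tools provide. The function name and default tolerances are assumptions.

```python
def build_feature_matrix(peak_lists, mz_tol_ppm=5.0, rt_tol_min=0.1):
    """Greedily group peaks into features and build a feature x sample matrix.

    peak_lists: one list per sample of (mz, rt_min, intensity) tuples.
    Returns (features, matrix): features is a list of (mean_mz, mean_rt) and
    matrix[i][j] is the intensity of feature i in sample j (0.0 if absent).
    """
    centroids = []    # per feature: [mean_mz, mean_rt, n_peaks]
    assignments = []  # (feature_index, sample_index, intensity)
    for j, peaks in enumerate(peak_lists):
        for mz, rt, intensity in sorted(peaks):
            match = None
            for i, (f_mz, f_rt, _) in enumerate(centroids):
                if abs(mz - f_mz) / f_mz * 1e6 <= mz_tol_ppm and abs(rt - f_rt) <= rt_tol_min:
                    match = i
                    break
            if match is None:
                centroids.append([mz, rt, 1])
                match = len(centroids) - 1
            else:
                f_mz, f_rt, n = centroids[match]
                # Update the running mean position of the matched feature.
                centroids[match] = [(f_mz * n + mz) / (n + 1), (f_rt * n + rt) / (n + 1), n + 1]
            assignments.append((match, j, intensity))

    matrix = [[0.0] * len(peak_lists) for _ in centroids]
    for i, j, intensity in assignments:
        matrix[i][j] += intensity  # duplicate matches within one sample are summed
    features = [(f_mz, f_rt) for f_mz, f_rt, _ in centroids]
    return features, matrix
```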

Data Analysis and Modeling:

- Chemometrics: Perform unsupervised analysis like Principal Component Analysis (PCA) to visualize natural clustering and identify outliers.

- Machine Learning: Employ supervised methods. For spectral data, a Convolutional Neural Network (CNN) can be highly effective.

- Input: Use the entire pre-processed dataset as a 2D image, with intensity mapped over the m/z and retention-time axes [18].

- Training: Train the CNN on a "calibration set" of known spelt and wheat samples using a nested cross-validation (NCV) approach to avoid overfitting [18].

- Validation: Validate the model with an external set of samples, including artificially mixed samples and processed goods (e.g., spelt bread with 10% wheat), to test real-world applicability [18].
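The nested cross-validation idea can be illustrated without a CNN. In the sketch below, a simple k-nearest-neighbor classifier stands in for the CNN, a deliberate substitution to keep the example self-contained. The inner loop chooses k using only the outer training folds. The outer loop then estimates performance on folds never used for that choice. Function names, fold counts and the k grid are assumptions, and the usage data are synthetic, not real spectra.

```python
import math
import random
from collections import Counter


def knn_predict(train_X, train_y, x, k):
    """Majority vote among the k nearest training samples (Euclidean distance)."""
    nearest = sorted(range(len(train_X)), key=lambda i: math.dist(train_X[i], x))[:k]
    return Counter(train_y[i] for i in nearest).most_common(1)[0][0]


def accuracy(X, y, fit_idx, test_idx, k):
    """Fit on fit_idx, return the fraction of test_idx predicted correctly."""
    fit_X = [X[i] for i in fit_idx]
    fit_y = [y[i] for i in fit_idx]
    hits = sum(knn_predict(fit_X, fit_y, X[i], k) == y[i] for i in test_idx)
    return hits / len(test_idx)


def folds(indices, n_folds, seed):
    """Shuffle indices and deal them into n_folds roughly equal folds."""
    shuffled = list(indices)
    random.Random(seed).shuffle(shuffled)
    return [shuffled[f::n_folds] for f in range(n_folds)]


def nested_cv(X, y, k_grid=(1, 3, 5), n_outer=5, n_inner=3, seed=0):
    """Mean outer-fold accuracy with k tuned inside each outer training set."""
    outer_scores = []
    for test_idx in folds(range(len(X)), n_outer, seed):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(X)) if i not in held_out]

        def inner_score(k):
            # Inner cross-validation restricted to the outer training set.
            scores = []
            for val_idx in folds(train_idx, n_inner, seed + 1):
                val = set(val_idx)
                fit_idx = [i for i in train_idx if i not in val]
                scores.append(accuracy(X, y, fit_idx, val_idx, k))
            return sum(scores) / len(scores)

        best_k = max(k_grid, key=inner_score)  # chosen without seeing test_idx
        outer_scores.append(accuracy(X, y, train_idx, test_idx, best_k))
    return sum(outer_scores) / len(outer_scores)
```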

Validation Considerations for Non-Targeted Methods

Validating NTMs requires a different approach than targeted methods, focusing on fit-for-purpose performance characteristics [15]. Key validation considerations include:

- Data Quality: The foundation of any NTM. Critical parameters include mass accuracy (e.g., < 3 ppm), isotopic ratio accuracy, and peak height reproducibility (e.g., < 20% RSD) [16]. These are monitored using a quality control mixture like the NTS/QC [16].

- Robustness and Reproducibility: The model must be tested against seasonal variation, different geographic origins, and varying agricultural practices to ensure it does not generate false positives/negatives when conditions change [20].

- Veracity of Training Set: The model is only as good as its training data. Absolute certainty about the authenticity of samples used to build the model is crucial; otherwise, fraud is "baked in" from the start [20].

- Thorough Reporting: Use tools like the NTA Study Reporting Tool (SRT) developed by the Benchmarking and Publications for Non‑Targeted Analysis (BP4NTA) group to ensure transparent and complete reporting of methods and results [16].
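The peak-height reproducibility criterion can be checked per feature across repeated QC injections. The sketch below is minimal and assumes intensities have already been extracted for each feature in every QC run. The function name and dictionary layout are hypothetical, and the 20% limit is the example value given above.

```python
from statistics import mean, stdev


def qc_rsd_report(qc_intensities, max_rsd=20.0):
    """Percent relative standard deviation of each feature across QC injections.

    qc_intensities: dict mapping feature ID -> list of intensities (>= 2 runs).
    Returns (rsd_by_feature, failing_ids), using the sample standard deviation.
    """
    rsd = {}
    for feature_id, values in qc_intensities.items():
        avg = mean(values)
        rsd[feature_id] = stdev(values) / avg * 100.0 if avg > 0 else float("inf")
    failing = sorted(fid for fid, value in rsd.items() if value > max_rsd)
    return rsd, failing
```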

Data Processing and Advanced Analytics

The complex, high-dimensional data generated by NTMs requires advanced processing tools to extract meaningful information.

- Suspect Screening Analysis (SSA): A rapid method for putative identification by screening detected masses and fragmentation spectra against chemical databases. This can be refined using retention time prediction models based on log Kow values to reduce false positives [16] [19].

- Molecular Networking: Groups detected compounds into molecular families based on the similarity of their MS/MS fragmentation spectra. This is particularly useful for identifying unknown compounds that are not in existing spectral libraries [16].

- Challenges in Standardization: A significant challenge is the lack of standardization in data processing. Different software tools can report only ~10% overlap of compounds from the same dataset [16]. Efforts by groups like the Metabolomics Quality Assurance and Quality Control Consortium (mQACC) are ongoing to harmonize QA/QC best practices [16].
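The spectral-similarity idea behind molecular networking can be sketched as a cosine score between two MS/MS spectra. This version is a plain cosine with greedy one-to-one peak matching within a fixed m/z tolerance. It is not the modified cosine used in GNPS-style networking, which also matches peaks shifted by the precursor mass difference. The function name and the 0.02 Da tolerance are assumptions.

```python
import math


def cosine_similarity(spec_a, spec_b, mz_tol=0.02):
    """Cosine score between two MS/MS spectra.

    Each spectrum is a list of (fragment_mz, intensity). Peaks are paired
    one-to-one within `mz_tol` (Da), taking the largest intensity products first.
    """
    candidates = []
    for i, (mz_a, int_a) in enumerate(spec_a):
        for j, (mz_b, int_b) in enumerate(spec_b):
            if abs(mz_a - mz_b) <= mz_tol:
                candidates.append((int_a * int_b, i, j))
    candidates.sort(reverse=True)

    used_a, used_b, dot = set(), set(), 0.0
    for product, i, j in candidates:
        if i not in used_a and j not in used_b:
            used_a.add(i)
            used_b.add(j)
            dot += product

    norm_a = math.sqrt(sum(x * x for _, x in spec_a))
    norm_b = math.sqrt(sum(x * x for _, x in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)
```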

The convergence of increasing food fraud incidents and stringent consumer demand for transparency makes the adoption of robust, scientifically validated analytical strategies imperative. Non-targeted methods, particularly when built on LC-HRMS platforms and supported by advanced data processing and machine learning, provide a powerful solution to verify food authenticity. Successful implementation requires a holistic approach that integrates rigorous experimental protocols, comprehensive method validation, and sophisticated data analytics. By adopting this framework, researchers, testing laboratories, and food producers can better safeguard the integrity of the global food supply chain, ensure regulatory compliance, and build consumer trust.

In the ongoing effort to combat food fraud, analytical testing serves as a critical line of defense. Traditional targeted analysis is a reactive approach designed to detect specific, predefined adulterants [21]. While highly sensitive for known compounds, this method offers no protection against unexpected or novel fraud, creating a significant vulnerability in food authenticity programs [13] [21].

In contrast, non-targeted analysis (NTA) represents a paradigm shift towards proactive surveillance. Instead of hunting for specific molecules, NTA acquires a comprehensive chemical "fingerprint" of a sample, capturing a wide array of data points without pre-selection [13] [21]. This fundamental difference allows NTA to screen for deviations from an authentic profile, making it uniquely capable of revealing the presence of unknown or unexpected adulterants, thereby offering a powerful strategic advantage in protecting food integrity [13].

Comparative Analysis: Targeted vs. Non-Targeted Approaches

The distinction between targeted and non-targeted methods dictates their respective applications, strengths, and limitations within a food fraud mitigation strategy. The following table summarizes their core characteristics.

Table 1: Fundamental Comparison of Targeted and Non-Targeted Analytical Approaches

| Feature | Targeted Analysis | Non-Targeted Analysis |
|---|---|---|
| Analytical Focus | Pre-defined individual analytes or markers [21] | Global, comprehensive fingerprint [13] [21] |
| Primary Goal | Confirm or deny the presence/quantity of a specific substance [21] | Detect deviations from a reference database of authentic samples [21] |
| Detection Capability | Known, anticipated adulterants | Known and unknown adulterants [13] [21] |
| Sample Preparation | Often complex, optimized for specific analytes [13] | Generally simple, to capture a wide range of components [13] |
| Data Output | Quantitative data on specific compounds | Multivariate data patterns (e.g., spectra, chromatograms) [21] |
| Result Interpretation | Direct comparison to reference standards | Statistical, probabilistic (e.g., Chemometrics, Machine Learning) [13] [21] |
| Strategic Role | Reactive testing; compliance checks | Proactive screening; hypothesis generation [21] |

The core advantage of NTA is its ability to detect fraud for which no specific test exists. As noted by the Institute of Food Science & Technology (IFST), "if an issue is not sought then it will not be found" in a targeted paradigm [21]. NTA overcomes this limitation by casting a wide net. Its power is further demonstrated by its ability to detect adulterations that elude targeted methods. For instance, in a study on turkey meat adulterated with protein hydrolysates, traditional amino acid profiling (targeted) failed to detect partial hydrolysates, whereas non-targeted metabolite profiling via GC-MS and NMR spectroscopy successfully identified the fraud [13].

Key Methodologies and Experimental Protocols

Non-targeted methods leverage a suite of advanced analytical platforms, each providing a different perspective on a sample's chemical composition. The workflow is fundamentally different from targeted analysis, emphasizing comprehensive data acquisition and pattern recognition.

General Workflow for Non-Targeted Analysis

The following diagram outlines the generalized logical workflow for applying non-targeted analysis to food authenticity problems.

Detailed Experimental Protocols

Protocol 1: Non-Targeted Metabolomics for Geographical Origin Authentication

This protocol is adapted from research aimed at discriminating the geographical origin of seeds and honey using metabolite profiling [13].

- 1. Objective: To differentiate perilla and sesame seeds from China and Korea based on their hydrophilic and lipophilic metabolite profiles.

- 2. Sample Preparation:

- Grind seeds to a homogeneous powder.

- Weigh 100 mg of powder into an extraction vial.

- Add 1 mL of a methanol:water:chloroform (2.5:1:1 v/v/v) extraction solvent.

- Vortex vigorously for 2 minutes, then sonicate for 15 minutes in an ice bath.

- Centrifuge at 14,000 × g for 10 minutes at 4°C.

- Transfer the upper (hydrophilic) and lower (lipophilic) layers to separate vials.

- Dry the extracts under a gentle stream of nitrogen.

- Derivatize for GC-MS analysis using a standard methoxyamination and silylation procedure.

- 3. Instrumental Analysis:

- Technique: Gas Chromatography-Mass Spectrometry (GC-MS)

- GC Conditions: Use a non-polar capillary column (e.g., DB-5MS). Employ a temperature gradient from 60°C to 330°C.

- MS Conditions: Electron Impact (EI) ionization at 70 eV; full scan mode from m/z 50 to 600.

- 4. Data Processing & Analysis:

- Deconvolute raw GC-MS data to align peaks and identify features across all samples.

- Create a data matrix of peak areas (features) versus samples.

- Import the matrix into a chemometric software package.

- Apply Orthogonal Partial Least Squares-Discriminant Analysis (OPLS-DA) to build a classification model.

- Identify potential biomarker metabolites (e.g., glycolic acid for perilla seeds) that drive the separation between groups.
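Candidate markers can also be ranked univariately, by log2 fold change and Welch's t statistic. This is a quick complement to OPLS-DA loadings or VIP scores, not a replacement for them. The sketch below uses toy numbers and hypothetical feature names and does not reproduce the cited study's data.

```python
from math import log2, sqrt
from statistics import mean, variance


def rank_candidate_markers(matrix, groups, group_a, group_b):
    """Rank features by the absolute Welch t statistic between two groups.

    matrix: dict mapping feature -> peak areas, one per sample, in the same
    order as `groups`. Each group needs at least two samples.
    Returns (feature, log2 fold change a/b, t) tuples, largest |t| first.
    """
    results = []
    for feature, values in matrix.items():
        a = [v for v, g in zip(values, groups) if g == group_a]
        b = [v for v, g in zip(values, groups) if g == group_b]
        se = sqrt(variance(a) / len(a) + variance(b) / len(b))
        t = (mean(a) - mean(b)) / se if se > 0 else 0.0
        fold = log2(mean(a) / mean(b)) if mean(a) > 0 and mean(b) > 0 else float("nan")
        results.append((feature, fold, t))
    return sorted(results, key=lambda r: abs(r[2]), reverse=True)
```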

Protocol 2: Non-Targeted Spectroscopy for Quality Grade Screening

This protocol is based on studies that used rapid fingerprinting techniques, Flash GC and FT-NIR spectroscopy, to authenticate olive oil quality and truffle species [13].

- 1. Objective: To develop a classification model for predicting the commercial category of virgin olive oils (extra virgin, virgin, lampante).

- 2. Sample Preparation:

- No extensive preparation is required.

- Ensure samples are homogeneous and at a consistent temperature before analysis.

- 3. Instrumental Analysis:

- Technique: Flash Gas Chromatography (Flash GC) or Fourier Transform Near-Infrared (FT-NIR) Spectroscopy.

- Flash GC Conditions: Inject 1 µL of sample; use a short, non-polar column; rapid temperature program to separate the volatile fraction.

- FT-NIR Conditions: Use an integrating sphere or fiber optic probe; collect spectra in the range of 800-2500 nm; average 32 scans per sample at a resolution of 8 cm⁻¹.

- 4. Data Processing & Analysis:

- For Flash GC, use the entire chromatogram as the fingerprint; for FT-NIR, use the full spectrum as acquired.

- Apply necessary pre-processing to reduce noise and correct baseline effects (e.g., Standard Normal Variate (SNV), multiplicative scatter correction, Savitzky-Golay derivatives).

- Use Partial Least Squares-Discriminant Analysis (PLS-DA) to build a predictive model using a large and diversified set of pre-classified authentic samples (n > 300 is ideal).

- Validate the model's robustness using cross-validation and an external validation set.
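Of the pre-processing options listed above, Standard Normal Variate (SNV) is the simplest to show. Each spectrum is centered on its own mean and divided by its own standard deviation, which removes additive offsets and multiplicative scaling caused by scatter. The minimal sketch below uses a hypothetical function name. It uses the sample standard deviation, though some implementations use the population form.

```python
from statistics import mean, stdev


def snv(spectrum):
    """Standard Normal Variate transform of a single spectrum."""
    mu = mean(spectrum)
    sd = stdev(spectrum)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("SNV is undefined for a flat spectrum")
    return [(value - mu) / sd for value in spectrum]
```

SNV works row by row, so it is applied to each spectrum independently before PLS-DA. An offset-and-scaled copy of a spectrum, such as `[2 * x + 5 for x in s]`, maps to the same SNV output as `s`.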

The Researcher's Toolkit: Essential Reagents and Materials

Successful implementation of non-targeted methods relies on a foundation of specific reagents, instrumentation, and software.

Table 2: Key Research Reagent Solutions for Non-Targeted Analysis

| Item | Function/Description | Application Example |
|---|---|---|
| Methoxyamine hydrochloride | Protects carbonyl groups during derivatization for GC-MS analysis. | Metabolite profiling in seeds, honey, and meat [13]. |
| N-Methyl-N-(trimethylsilyl)trifluoroacetamide (MSTFA) | Silylation reagent that volatilizes polar metabolites for GC-MS separation. | Metabolite profiling [13]. |
| Deuterated Solvent (e.g., CD₃OD, D₂O) | Provides a locking signal for NMR spectroscopy and enables sample dissolution without interference. | Metabolite profiling for detecting adulteration in turkey meat [13]. |
| Chemometric Software (e.g., SIMCA, PLS_Toolbox) | Software for multivariate statistical analysis, including PCA, PLS-DA, and OPLS-DA. | Essential for building classification and discrimination models from complex data [13]. |
| Authentic Reference Materials | Certified, well-characterized samples used to build the foundational reference database. | Critical for calibrating and validating any non-targeted model for any matrix [21]. |
| C18 / Normal Phase Solid-Phase Extraction (SPE) Cartridges | For selective cleanup or fractionation of complex samples to reduce matrix effects. | Can be used in lipidomics or targeted metabolite analysis within a non-targeted workflow [22]. |

Non-targeted analysis represents a transformative advance in food authenticity research, shifting the paradigm from reactive detection to proactive surveillance. Its principal advantage is the capacity to uncover unknown adulterants by identifying anomalous patterns against a background of authentic product profiles. While challenges remain—including the need for robust reference databases and sophisticated data analysis—the integration of NTA into food fraud mitigation strategies provides a powerful, forward-looking tool. It empowers scientists and regulators to not only confront known threats but also to build a more resilient food system capable of adapting to the evolving tactics of fraud.

Methodologies in Action: Analytical Platforms and Applications for Food Forensics

Within the framework of non-targeted methods (NTM) for food authenticity research, rapid fingerprinting techniques have emerged as powerful tools for addressing global challenges in food fraud and mislabeling. Fourier Transform Near-Infrared (FT-NIR) spectroscopy and Nuclear Magnetic Resonance (NMR) spectroscopy represent two leading analytical approaches that fulfill the critical need for efficient, high-throughput authentication capable of verifying geographical origin, botanical source, and processing methods without prior knowledge of potential adulterants [23] [15]. The growing implementation of these techniques stems from their ability to provide a comprehensive molecular snapshot of food matrices, enabling researchers to detect subtle compositional differences indicative of authenticity breaches. Unlike targeted methods that focus on specific analytes, non-targeted fingerprinting exploits the entire spectral profile, offering a more holistic approach to authenticity verification that can identify unexpected adulterants [23] [13]. This application note details the practical implementation, experimental protocols, and performance validation of FT-NIR and NMR spectroscopy within the context of NTM validation for food authenticity research.

Key Principles and Technological Comparison

FT-NIR and NMR spectroscopy, while both serving as non-targeted fingerprinting tools, operate on distinct physical principles that dictate their specific applications, strengths, and limitations in food authentication.

FT-NIR spectroscopy measures the absorption of near-infrared light (780-2500 nm) arising from overtones and combination bands of fundamental molecular vibrations, primarily of O-H, C-H, and N-H bonds [24]. These interactions provide information on the organic composition of samples, making the technique particularly sensitive to differences in protein, fat, and moisture content. The technique generates complex, high-dimensional data that is inherently non-linear and requires sophisticated chemometric analysis for interpretation [25].

NMR spectroscopy, in contrast, exploits the magnetic properties of certain atomic nuclei (e.g., ¹H, ¹³C) when placed in a strong magnetic field. The technique detects the resonance frequencies of these nuclei, which are exquisitely sensitive to their local chemical environment. This provides a definitive, reproducible fingerprint that can simultaneously identify and quantify a wide range of metabolites, from organic acids and amino acids to sugars and lipids, in a single experiment [23]. NMR's exceptional quantitative capabilities and high repeatability make it particularly valuable for building standardized databases and for regulatory applications [23] [26].

Table 1: Comparative Analysis of FT-NIR and NMR Spectroscopy for Food Authenticity

| Parameter | FT-NIR Spectroscopy | NMR Spectroscopy |
|---|---|---|
| Analytical Principle | Overtone/vibrational spectroscopy [24] | Magnetic nuclear spin transitions [23] |
| Sample Preparation | Minimal; often non-destructive [27] | May require extraction or dissolution [28] |
| Speed of Analysis | Very rapid (seconds to minutes) [25] [29] | Moderate (several minutes per sample) [23] |
| Metabolite Coverage | Broad, based on functional groups | Comprehensive, with specific identification [23] |
| Quantitative Nature | Semi-quantitative (requires calibration) | Highly quantitative and reproducible [23] |
| Primary Strengths | Portability, low cost, high-throughput screening | High specificity, structural elucidation, database building [23] [26] |
| Key Limitations | Indirect measurements, complex data interpretation | High initial cost, lower sensitivity than MS techniques [23] |
| Ideal Use Case | Rapid in-line screening and origin classification [25] [27] | Definitive authentication and regulatory testing [26] |

Experimental Protocols

FT-NIR Protocol for Geographical Origin Authentication

This protocol outlines the procedure for authenticating the geographical origin of almonds using FT-NIR spectroscopy, adaptable for other solid food matrices [29].

Materials and Equipment

- FT-NIR spectrometer equipped with a reflectance probe or integration sphere

- Cryogenic grinder with liquid nitrogen cooling

- Freeze-dryer

- Single-use sample cups or quartz windows

- Certified reference materials for instrument validation

Sample Preparation Steps

- Homogenization: For solid samples like almonds, grind representative portions using a cryogenic grinder to achieve a uniform particle size of <500 µm [29].

- Freeze-Drying: Transfer the ground sample to a freeze-dryer for approximately 72 hours to remove interfering water signals from the NIR spectrum [29].

- Presentation: Place the prepared sample in a consistent orientation in the sample cup, ensuring uniform packing density and surface topography for reproducible reflectance measurements.

Instrumental Analysis

- System Calibration: Verify instrument performance using certified white reference standards prior to analysis.

- Spectral Acquisition: Collect spectra in the range of 780-2500 nm (12,820-4,000 cm⁻¹) with a resolution of 4-16 cm⁻¹. Accumulate 32-64 scans per spectrum to optimize the signal-to-noise ratio [29] [24].

- Quality Control: Include control samples from known origins in each analytical batch to monitor system stability and performance.
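The wavelength range and the resolution quoted in wavenumbers above are related by ν̃ (cm⁻¹) = 10⁷ / λ (nm). A constant resolution in cm⁻¹ therefore corresponds to a wavelength-dependent resolution in nm, approximately Δλ ≈ λ² Δν̃ / 10⁷. The helper names below are illustrative.

```python
def nm_to_wavenumber(wavelength_nm):
    """Convert wavelength (nm) to wavenumber (cm^-1); 1 cm = 1e7 nm."""
    return 1e7 / wavelength_nm


def resolution_in_nm(wavelength_nm, resolution_cm1):
    """First-order estimate of the bandwidth in nm at a given wavelength
    for a fixed resolution in cm^-1: d(lambda) ~ lambda**2 * d(nu) / 1e7."""
    return wavelength_nm**2 * resolution_cm1 / 1e7
```

For example, 780 nm and 2500 nm map to about 12,820 and 4,000 cm⁻¹. An 8 cm⁻¹ resolution corresponds to roughly 0.5 nm at 780 nm but 5 nm at 2500 nm.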

Data Processing and Analysis

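- Spectral Preprocessing: Apply mathematical treatments to reduce scattering effects and enhance spectral features. Common techniques include Standard Normal Variate (SNV), multiplicative scatter correction (MSC), and Savitzky-Golay derivatives.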

- Chemometric Modeling:

- Utilize Support Vector Machine (SVM) algorithms, particularly non-linear variants like Polynomial-SVM, which have demonstrated >95% classification accuracy for geographical origin determination [25] [29].

- Validate models using independent test sets and cross-validation to ensure robustness and prevent overfitting.
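Savitzky-Golay derivatives are a common way to suppress baseline offsets before SVM or PLS-DA modeling. The sketch below hard-codes the standard 5-point, quadratic first-derivative coefficients (−2, −1, 0, 1, 2)/10 and simply drops the two points at each edge. Window size, polynomial order and edge handling are assumptions that would normally be tuned. In practice, `scipy.signal.savgol_filter` with `deriv=1` provides a general implementation.

```python
def savgol_first_derivative(spectrum, spacing=1.0):
    """5-point Savitzky-Golay first derivative (quadratic fit).

    Uses coefficients (-2, -1, 0, 1, 2) / 10, divided by the point spacing.
    The two points at each edge are dropped rather than extrapolated.
    """
    coeffs = (-2, -1, 0, 1, 2)
    if len(spectrum) < len(coeffs):
        raise ValueError("need at least 5 points")
    return [
        sum(c * spectrum[i + k - 2] for k, c in enumerate(coeffs)) / (10 * spacing)
        for i in range(2, len(spectrum) - 2)
    ]
```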

NMR Protocol for Honey Authenticity and Metabolite Profiling

This protocol describes the procedure for using NMR spectroscopy to authenticate honey origin and detect syrup adulteration, adaptable to other liquid food matrices [23] [26].

Materials and Equipment

- High-field NMR spectrometer (≥400 MHz)

- NMR tubes (e.g., 5 mm diameter)

- Precision analytical balance

- pH meter

- Buffer solution (e.g., phosphate buffer, pH 7.0)

- Deuterated solvent (e.g., D₂O)

- Internal standard (e.g., TSP-d₄ or DSS for chemical shift referencing)

Sample Preparation

- Weighing: Accurately weigh 40-50 mg of honey into a clean vial.

- Solution Preparation: Add 600 µL of phosphate buffer (pH 7.0) and 60 µL of D₂O containing 0.1% TSP-d₄ as an internal reference standard. The buffer minimizes pH-induced chemical shift variations [23].

- Mixing: Vortex the mixture for 30-60 seconds until the honey is completely dissolved.

- Transfer: Pipette 550-600 µL of the prepared solution into a clean 5 mm NMR tube.

NMR Data Acquisition

- Instrument Setup: Lock on the D₂O signal, tune and match the probe, and shim to optimize magnetic field homogeneity.

- Pulse Program Selection: Employ standard one-dimensional (1D) ¹H NMR pulse sequences with water suppression (e.g., NOESYPRESAT or zgpr) [23].

- Parameter Setting:

- Spectral width: 12-14 ppm

- Number of scans: 64-128

- Relaxation delay: 4 seconds

- Acquisition temperature: 298 K

- Data Collection: Run the experiment, which typically requires 5-10 minutes per sample.
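The run time quoted above follows from the acquisition parameters. In a rough sketch, each scan lasts the relaxation delay plus the acquisition time. The acquisition time equals the number of complex points divided by the spectral width in Hz, where width in Hz = width in ppm × spectrometer frequency in MHz. The point count (16k) and the four dummy scans are hypothetical values, and pulse and processing overheads are ignored.

```python
def acquisition_time_s(n_complex_points: int, sweep_width_ppm: float, spectrometer_mhz: float) -> float:
    """AQ = N / SW, where SW in Hz = SW in ppm x spectrometer frequency in MHz."""
    sweep_width_hz = sweep_width_ppm * spectrometer_mhz
    return n_complex_points / sweep_width_hz


def experiment_time_s(n_scans: int, relaxation_delay_s: float, acq_time_s: float,
                      dummy_scans: int = 4) -> float:
    """Approximate total time: every dummy and recorded scan lasts D1 + AQ (pulses ignored).

    The default of 4 dummy scans is a hypothetical value.
    """
    return (n_scans + dummy_scans) * (relaxation_delay_s + acq_time_s)
```

With 64 scans, a 4 s relaxation delay, and a 12 ppm window at 400 MHz (16k points, AQ of about 3.4 s), the estimate is about 8.4 minutes. Because the time scales linearly with the number of scans, 128 scans roughly doubles it.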

Data Processing and Multivariate Analysis

- Spectral Processing:

- Apply Fourier transformation to convert time-domain data to frequency-domain spectra.

- Perform phase correction and baseline correction.

- Calibrate the chemical shift scale to the internal standard (TSP-d₄ at 0.0 ppm).

- Data Reduction:

- Segment spectra into small regions (buckets/bins) of equal width (e.g., 0.04 ppm).

- Integrate the signal intensity within each bucket.

- Normalize the data to the total integral or a reference standard to account for concentration differences.

- Pattern Recognition:

- Apply unsupervised methods like Principal Component Analysis (PCA) for initial data exploration and outlier detection.

- Use supervised methods such as Orthogonal Partial Least Squares-Discriminant Analysis (OPLS-DA) to build classification models that differentiate authentic and adulterated samples or determine geographical origin [23].
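The data-reduction and exploration steps above can be sketched in NumPy. The sketch covers equal-width bucketing (0.04 ppm), normalization to the total integral, and PCA computed from a singular value decomposition of the mean-centred bucket table. The bucket range is left to the caller, and a uniformly sampled ppm axis is assumed. OPLS-DA is omitted because NumPy does not provide it; dedicated chemometrics software would normally handle the supervised step.

```python
import numpy as np


def bucket_spectrum(ppm, intensity, start, stop, width=0.04):
    """Sum intensities into equal-width ppm buckets, scaled by point spacing (a simple integral)."""
    ppm = np.asarray(ppm, dtype=float)
    intensity = np.asarray(intensity, dtype=float)
    n_buckets = int(round((stop - start) / width))
    edges = np.linspace(start, stop, n_buckets + 1)
    sums, _ = np.histogram(ppm, bins=edges, weights=intensity)
    spacing = abs(ppm[1] - ppm[0])  # assumes a uniformly sampled ppm axis
    centres = (edges[:-1] + edges[1:]) / 2
    return centres, sums * spacing


def normalize_total(X):
    """Divide each bucketed spectrum (row) by its total integral."""
    X = np.asarray(X, dtype=float)
    return X / X.sum(axis=1, keepdims=True)


def pca(X, n_components=2):
    """PCA via SVD of the mean-centred matrix: returns scores, loadings, explained variance ratios."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    loadings = Vt[:n_components].T
    explained = S**2 / np.sum(S**2)
    return scores, loadings, explained[:n_components]
```

Plotting the first two score columns gives the usual PCA overview. Samples far from the main cluster are candidates for closer inspection as outliers.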

Applications and Performance Data

FT-NIR Applications

FT-NIR spectroscopy has demonstrated high classification performance across diverse food authentication scenarios:

- Mediterranean Anchovy Authentication: FT-NIR coupled with Polynomial-SVM and Random Forest algorithms achieved 95.7% and 95.5% accuracy, respectively, in classifying anchovies from the Adriatic, Balearic, and Tyrrhenian Seas. Savitzky-Golay derivative preprocessing combined with Standard Normal Variate was critical for this high performance [25].

- Paprika Adulteration Detection: FT-NIR successfully differentiated pure paprika from samples adulterated with illegal synthetic dyes (Sudan II-IV, Congo Red) at levels as low as 0.1%. The method achieved 96.8% accuracy in identifying adulterated samples and 100% accuracy in classifying Protected Designation of Origin (PDO) labels using OPLS-DA models [27].

- Almond Geographical Origin: A systematic comparison of sample preparation techniques showed that freeze-drying after grinding produced the most reliable classification models (80.2% accuracy), although analysis of whole almonds remained valuable for rapid screening with a lower workload [29].

NMR Applications

NMR spectroscopy provides robust solutions for challenging authentication problems:

- Honey Authenticity: NMR has been implemented as an official testing method in Estonia, effectively detecting adulteration with cheap sugar syrups and clearing the market of fraudulent products. The technique provides a comprehensive metabolite profile that enables verification of both authenticity and botanical/geographical origin [26].

- Food Metabolite Profiling: A review of 43 NMR-based food authentication studies (2019-2024) highlighted its extensive application in differentiating truffle species, discriminating olive oil categories, and determining the geographical origin of seeds and dairy products based on their distinct metabolic fingerprints [23].

- Turkey Meat Adulteration: NMR metabolomics successfully detected the injection of protein hydrolysates into turkey meat, even at low hydrolysis degrees where traditional amino acid analysis failed, demonstrating its advantage for identifying sophisticated adulteration practices [13].

Table 2: Quantitative Performance Metrics of Featured Applications

| Application | Technique | Classification Accuracy / Outcome | Key Analytes/Markers | Reference |
|---|---|---|---|---|
| Anchovy Origin | FT-NIR + P-SVM | 95.7% (test set) | Non-linear spectral patterns | [25] |
| Paprika PDO Verification | FT-NIR + OPLS-DA | 100% | Spectral fingerprints of geographic origin | [27] |
| Paprika Adulteration Detection | FT-NIR + PLS | 96.8% | Sudan dyes, Congo Red | [27] |
| Almond Origin (Freeze-Dried) | FT-NIR + SVM | 80.2% | Moisture-free spectral features | [29] |
| Honey Authenticity | NMR + PCA/OPLS-DA | Official method (Estonia) | Comprehensive metabolite profile | [26] |
| Turkey Meat Adulteration | NMR Metabolomics | Successful detection | Sugars, hydrolysis by-products | [13] |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of FT-NIR and NMR fingerprinting requires specific reagents and materials to ensure analytical rigor and reproducibility.

Table 3: Essential Research Reagents and Materials

| Item | Function/Application | Technical Considerations |
|---|---|---|
| Cryogenic Mill | Homogenizes solid samples to uniform particle size | Liquid nitrogen cooling prevents degradation of heat-sensitive compounds [29] |
| Freeze-Dryer | Removes water from samples for FT-NIR | Eliminates strong O-H absorption bands that can mask other spectral features [29] |
| Certified Reference Materials | Validates instrument performance and method accuracy | Essential for quality control and measurement traceability [15] |
| Deuterated Solvents (D₂O) | Provides lock signal for NMR spectroscopy | Enables stable magnetic field regulation during extended experiments [23] |
| Internal Standards (TSP-d₄) | Chemical shift reference for NMR | Defines the δ = 0.0 ppm reference point; its shift is slightly pH-dependent, so samples should be buffered [23] |
| Buffer Solutions | Controls pH in NMR samples | Minimizes pH-induced chemical shift variations in metabolomic analyses [23] |
| SVM and RF Algorithms | Non-linear classification of spectral data | Suited to the non-linear patterns found in complex NIR spectra [25] |
| OPLS-DA Models | Supervised multivariate analysis for NMR data | Handles correlated X-variables and improves interpretation [23] [27] |


FT-NIR and NMR spectroscopy have established themselves as cornerstone analytical techniques in the validation of non-targeted methods for food authenticity research. FT-NIR excels as a rapid, cost-effective screening tool for high-throughput applications such as geographical origin verification and adulteration detection, particularly when coupled with robust non-linear machine learning algorithms [25] [27]. NMR provides definitive, multi-parametric metabolite profiling with exceptional reproducibility, making it invaluable for building standardized databases and for regulatory decision-making [23] [26]. The continuing development of both techniques hinges on addressing key challenges, including the standardization of sample preparation protocols, the expansion of comprehensive spectral databases, and the establishment of harmonized validation guidelines specifically designed for non-targeted approaches [23] [15]. As the field advances, the integration of FT-NIR and NMR data with other analytical platforms, alongside the refinement of chemometric models, will further enhance the capability to safeguard food integrity and combat sophisticated fraud throughout the global supply chain.

The globalization of the food supply chain has significantly increased the complexity of ensuring food authenticity, driving the need for high-throughput, accurate, and rapid analytical techniques [22]. Food authenticity verification now extends beyond simple adulteration detection to encompass quality evaluation, label compliance, traceability determination, and other quality-related aspects [22]. Non-targeted methods (NTMs) have emerged as powerful analytical strategies for detecting food fraud and authenticating food substances, as they can capture subtle differences in sample composition without focusing on predetermined analytes [8]. These methods typically combine highly resolved analytical fingerprints with advanced statistical modeling and machine learning for data evaluation [8].

Chromatography coupled with mass spectrometry represents the cornerstone of modern food authenticity testing. Among these platforms, GC-MS, LC-MS, and high-resolution accurate mass (HRAM) Orbitrap systems offer complementary capabilities for analyzing diverse food matrices. GC-MS excels in separating volatile and semi-volatile compounds, LC-MS handles non-volatile and thermally labile substances, while HRAM Orbitrap instrumentation provides superior mass accuracy and resolution for confident compound identification [30]. The integration of these platforms within a foodomics framework—combining proteomics, lipidomics, flavoromics, metabolomics, and genomics with biostatistics and bioinformatics—has revolutionized food authentication from field to table [22].

This application note details experimental protocols and technical considerations for implementing these platforms in non-targeted food authenticity research, with a specific focus on method validation parameters essential for generating defensible scientific data.

Experimental Protocols

Non-Targeted LC-HRMS Method for Distinguishing Spelt and Wheat

Principle: This protocol utilizes liquid chromatography coupled to high-resolution mass spectrometry (LC-HRMS) to obtain highly resolved spectral fingerprints of spelt and wheat cultivars, followed by convolutional neural network (CNN) modeling for classification [8].

Materials and Reagents:

- Spelt and wheat cultivars (minimum eleven each for model training)

- Acetonitrile (LC-MS grade)

- Methanol (LC-MS grade)

- Formic acid (LC-MS grade)

- Deionized water (18.2 MΩ·cm)

Instrumentation:

- Liquid chromatography system (UHPLC capable)

- High-resolution mass spectrometer (Time-of-Flight or Orbitrap based)

- Chromatographic column: C18 column (e.g., 2.1 × 100 mm, 1.7 μm)

Sample Preparation:

- Grind grain samples to a fine powder using a laboratory mill.

- Weigh 100 ± 5 mg of homogenized sample into a 2 mL microcentrifuge tube.

- Add 1 mL of extraction solvent (acetonitrile:water:formic acid, 80:19:1 v/v/v).

- Vortex vigorously for 1 minute, then shake for 10 minutes.

- Centrifuge at 14,000 × g for 10 minutes at room temperature.

- Transfer supernatant to an autosampler vial for LC-HRMS analysis.

LC Conditions:

- Mobile Phase A: 0.1% formic acid in water

- Mobile Phase B: 0.1% formic acid in acetonitrile

- Flow Rate: 0.3 mL/min

- Column Temperature: 40°C

- Injection Volume: 5 μL

- Gradient Program:

- 0-2 min: 5% B

- 2-15 min: 5-95% B

- 15-18 min: 95% B

- 18-18.1 min: 95-5% B

- 18.1-21 min: 5% B (column re-equilibration)
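Expressing the gradient table as data makes it easy to check the mobile-phase composition at any time point and the solvent consumed per injection. The sketch below assumes linear ramps between the listed points. It ignores the system dwell volume, so in practice the composition reaching the column lags the programmed values.

```python
import numpy as np

# Gradient programme from the protocol: (time in min, %B)
GRADIENT = [(0.0, 5.0), (2.0, 5.0), (15.0, 95.0), (18.0, 95.0), (18.1, 5.0), (21.0, 5.0)]
FLOW_ML_MIN = 0.3


def percent_b(t_min):
    """Programmed %B at time t, assuming linear ramps between the listed points."""
    times, composition = zip(*GRADIENT)
    return np.interp(t_min, times, composition)


def solvent_volumes_ml(gradient=GRADIENT, flow=FLOW_ML_MIN):
    """Trapezoidal integration of %B over the run; returns (mL of A, mL of B) per injection."""
    b_percent_min = 0.0
    for (t0, b0), (t1, b1) in zip(gradient[:-1], gradient[1:]):
        b_percent_min += (t1 - t0) * (b0 + b1) / 2
    total = flow * (gradient[-1][0] - gradient[0][0])
    vol_b = flow * b_percent_min / 100
    return total - vol_b, vol_b
```

For this programme, each 21-minute run consumes 6.3 mL of mobile phase at 0.3 mL/min, of which about 2.89 mL is mobile phase B.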

HRMS Conditions:

- Ionization Mode: Electrospray ionization (ESI), positive and negative modes

- Mass Range: m/z 100-1500

- Resolution: >30,000 FWHM

- Sheath Gas Flow: 40 arb

- Aux Gas Flow: 10 arb

- Spray Voltage: 3.5 kV

- Capillary Temperature: 320°C
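Resolving power is defined as R = m/Δm, where Δm is the peak width at half maximum, and mass accuracy is usually reported in ppm. The helper functions below illustrate both. Two details are assumptions added for illustration: the √(m/z) scaling for Orbitrap analysers and the reference m/z of 200 at which Orbitrap resolution is often quoted. The protocol itself states only >30,000 FWHM, and time-of-flight resolving power varies much less with m/z.

```python
import math


def fwhm_peak_width(mz: float, resolution: float) -> float:
    """Peak width at half maximum (m/z units) implied by resolving power R = m / delta_m."""
    return mz / resolution


def orbitrap_resolution(mz: float, r_ref: float, mz_ref: float = 200.0) -> float:
    """Approximate Orbitrap resolving power, which falls with sqrt(m/z) at fixed transient length."""
    return r_ref * math.sqrt(mz_ref / mz)


def mass_error_ppm(measured_mz: float, theoretical_mz: float) -> float:
    """Relative mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6
```

At R = 30,000, a peak at m/z 300 is about 0.01 m/z units wide. A measured m/z of 300.003 against a theoretical 300.000 is a 10 ppm error.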

Data Processing and CNN Modeling:

- Convert raw files to standardized format (e.g., mzML).

- Perform peak picking, alignment, and retention time correction.

- Normalize peak intensities using total ion current or probabilistic quotient normalization.

- Format 2D spectral data (m/z vs. retention time) as images for CNN input.

- Implement CNN architecture with convolutional, pooling, and fully connected layers.

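- Train models using nested cross-validation, which keeps hyperparameter tuning separate from performance estimation and so guards against overfitting and optimistic accuracy estimates.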

- Validate model performance using an independent sample set including artificially mixed spectra and processed goods.
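The normalization and image-formatting steps can be sketched as follows. PQN here uses the median feature profile as the reference and divides each sample by the median of its quotients against that reference; TIC normalization can optionally be applied first. The rasterization step bins (retention time, m/z, intensity) feature triples into a fixed grid with `numpy.histogram2d`. The 0.1 min × 1 m/z grid, the log scaling, and the function names are hypothetical choices, not the CNN input format used in [8].

```python
import numpy as np


def tic_normalize(X):
    """Total ion current normalization: each sample (row) is scaled to sum to 1."""
    X = np.asarray(X, dtype=float)
    return X / X.sum(axis=1, keepdims=True)


def pqn_normalize(X, eps=1e-12):
    """Probabilistic quotient normalization of a samples x features intensity matrix.

    Reference = median feature profile; each sample is divided by the median of its
    feature-wise quotients against that reference. Returns (normalized X, dilution factors).
    """
    X = np.asarray(X, dtype=float)
    reference = np.median(X, axis=0)
    usable = reference > eps  # skip features absent from the reference profile
    quotients = X[:, usable] / reference[usable]
    factors = np.median(quotients, axis=1)
    return X / factors[:, None], factors


def rasterize_features(rt_min, mz, intensity, rt_range=(0.0, 21.0),
                       mz_range=(100.0, 1500.0), shape=(210, 1400)):
    """Bin feature triples into a 2D retention-time x m/z grid scaled to [0, 1] (hypothetical layout)."""
    grid, _, _ = np.histogram2d(rt_min, mz, bins=shape, range=[rt_range, mz_range],
                                weights=intensity)
    image = np.log1p(grid)  # compress the dynamic range of peak intensities
    peak = image.max()
    return image / peak if peak > 0 else image
```

The median-based quotient makes PQN robust to a minority of features that genuinely differ between samples. A plain TIC scaling would be distorted by a few very intense peaks.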

Validation Parameters:

- Calculate D-score metric for classification decisions

- Assess specificity and sensitivity across multiple cultivars

- Test model robustness with untypical spelt and old wheat cultivars not included in training
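Once one class (here spelt) is designated positive, sensitivity and specificity follow directly from the confusion counts. A per-cultivar correct-classification rate shows whether particular cultivars drive the errors. The D-score defined in [8] is not reproduced here, and the labels and cultivar grouping in this sketch are hypothetical.

```python
from collections import Counter


def confusion_counts(y_true, y_pred, positive):
    """Count TP, FN, TN, FP for a binary task in which `positive` is the class of interest."""
    counts = Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == positive:
            counts["tp" if pred == positive else "fn"] += 1
        else:
            counts["fp" if pred == positive else "tn"] += 1
    return counts


def sensitivity(counts):
    """True positive rate; raises ZeroDivisionError if there are no positive samples."""
    return counts["tp"] / (counts["tp"] + counts["fn"])


def specificity(counts):
    """True negative rate; raises ZeroDivisionError if there are no negative samples."""
    return counts["tn"] / (counts["tn"] + counts["fp"])


def per_group_accuracy(y_true, y_pred, groups):
    """Fraction of correctly classified samples within each group (e.g. cultivar)."""
    totals, correct = Counter(), Counter()
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        correct[group] += truth == pred
    return {g: correct[g] / totals[g] for g in sorted(totals)}
```

Reporting per-cultivar rates alongside the overall figures makes it visible when an untypical spelt or old wheat cultivar is systematically misclassified.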

LC-Orbitrap-HRMS Screening Method for Antibiotics in Milk

Principle: This protocol describes a multiresidue screening method for 57 antibiotic compounds in bovine, ovine, and goat milk using LC-Orbitrap-HRMS, compliant with Commission Implementing Regulation (EU) 2021/808 [30].

Materials and Reagents:

- Milk samples (bovine, ovine, goat)

- Mixed antibiotic standards (57 compounds including beta-lactams, tetracyclines, sulfonamides, quinolones, pleuromutilins, macrolides, lincosamides)

- Acetonitrile (LC-MS grade)

- Methanol (LC-MS grade)

- Ethylenediaminetetraacetic acid (EDTA)

- Formic acid (LC-MS grade)

- Deionized water (18.2 MΩ·cm)

- HLB PRiME solid-phase extraction cartridges

Instrumentation:

- Liquid chromatography system