Validating New Texture Methods: A Strategic Framework for Biomedical and Pharmaceutical Innovation

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating novel texture analysis methods against established protocols. It covers the foundational principles of texture analysis, explores its cutting-edge applications from AI-driven ultrasound to material characterization, addresses common troubleshooting and optimization challenges, and outlines robust validation and comparative frameworks. By synthesizing current trends and regulatory expectations, this resource aims to equip scientists with the knowledge to ensure their texture methods are accurate, reproducible, and ready for clinical or industrial implementation.

The Principles and Expanding Role of Texture Analysis in Modern Science

Texture analysis represents a critical analytical paradigm across scientific disciplines, enabling the quantitative assessment of structural and compositional properties that are often imperceptible to human senses. In mechanical testing, texture analysis characterizes the physical properties of materials—such as hardness, cohesiveness, and adhesiveness—through direct physical interaction with probes and fixtures [1]. In medical imaging, a separate but conceptually related field known as radiomics or image texture analysis has emerged to extract sub-visual quantitative data from medical images [2] [3]. This high-throughput extraction of mineable data from radiographic medical images allows researchers to uncover disease characteristics that extend beyond semantic descriptors or size measurements [3]. While these applications differ in their fundamental approaches—mechanical testing through physical interaction versus image analysis through computational algorithms—they share a common objective: converting qualitative structural properties into quantitative, actionable data to guide decision-making in product development and clinical practice.

This guide provides a systematic comparison of these methodological approaches, focusing on the experimental frameworks for validating novel texture analysis techniques against established protocols. For researchers and drug development professionals, understanding this cross-disciplinary landscape is essential for advancing method validation and establishing robust analytical pipelines that bridge material science and medical diagnostics.

Methodological Comparison: Mechanical Testing vs. Image-Based Radiomics

Table 1: Fundamental Comparison Between Mechanical and Image-Based Texture Analysis

| Analytical Aspect | Mechanical Texture Analysis | Image-Based Radiomics Texture Analysis |
|---|---|---|
| Core Principle | Measures response to physical forces (compression, puncture, extrusion, tension, bending) [1] | Extracts quantitative features from digital images using data-characterisation algorithms [3] |
| Primary Output | Force-distance curves quantifying mechanical properties [1] | Image biomarkers (IBMs) quantifying tissue heterogeneity [2] |
| Sample Interaction | Direct physical contact (destructive or non-destructive) [1] | Non-invasive computational analysis [2] |
| Key Parameters | Hardness, cohesiveness, adhesiveness, elasticity, fracturability [1] | First-order statistics, shape features, and texture features (GLCM, GLRLM, GLSZM) [2] [4] |
| Data Acquisition | Texture analyzer with specialized probes/fixtures [1] | Medical imaging modalities (CT, MRI, US, PET) [2] |
| Standardization | ISO, AACC, ASTM methods; imitative tests [1] | Image Biomarker Standardisation Initiative (IBSI) [5] |
| Primary Applications | Food science, pharmaceuticals, material science [1] | Oncology, neurology, drug development [2] [4] |

Experimental Protocols in Image-Based Radiomics

The radiomics workflow follows a standardized pipeline that transforms medical images into quantitative biomarkers, with several critical stages requiring meticulous protocol implementation.

Image Acquisition and Preprocessing

The initial stage involves acquiring medical images using standardized protocols. Different imaging modalities, including computed tomography (CT), magnetic resonance imaging (MRI), ultrasound (US), and positron emission tomography (PET), provide different contrast mechanisms that capture distinct tissue properties [2] [4]. For quantitative analysis, preprocessing steps are essential to minimize technical variability. These include artifact correction, image registration for multi-modal studies, intensity normalization to account for variations in acquisition parameters, and noise reduction [4]. In CT imaging, radiodensity standardization using internal references (e.g., the aortic lumen) helps mitigate interscanner variability while preserving tissue characteristics [5].
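The internal-reference idea can be sketched as follows. This is a minimal illustration, assuming ROI and reference-region intensities (for example, aortic-lumen HU values) are already available as lists of numbers; the function name `normalize_to_reference` and its two modes are hypothetical and are not claimed to reproduce the exact procedure used in [5].

```python
from statistics import mean


def normalize_to_reference(roi_values, reference_values, mode="ratio"):
    """Express ROI intensities relative to an internal reference region.

    Hypothetical helper for illustration only:
    - "ratio" divides each ROI value by the reference mean
    - "difference" subtracts the reference mean from each ROI value
    """
    ref_mean = mean(reference_values)
    if mode == "ratio":
        if ref_mean == 0:
            raise ValueError("reference mean is zero; ratio is undefined")
        return [v / ref_mean for v in roi_values]
    if mode == "difference":
        return [v - ref_mean for v in roi_values]
    raise ValueError(f"unknown mode: {mode!r}")


# Example: tumour HU values scaled by a mean aortic-lumen value of 200 HU
print(normalize_to_reference([100, 120, 140], [190, 210]))
```

A ratio is unit-free, whereas a difference keeps the original HU scale; which suits a given study is a design decision rather than something this sketch settles.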

Tumor Segmentation and Region of Interest (ROI) Identification

Segmentation defines the region of interest (ROI) to be analyzed and can be manual, semi-automatic, or automatic [2]. Manual segmentation is time-consuming but remains central to the radiomics workflow, because every radiomic feature is extracted from the segmented region [2]. Commonly used tools include 3D Slicer, ITK-SNAP, and commercial solutions, though variations in segmentation approach can significantly affect feature reproducibility [4] [5]. Automatic and semi-automatic techniques are increasingly studied as ways to minimize manual input and improve consistency in delineating regions of interest [2].
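Agreement between two segmentations (for example, manual versus semi-automatic, or two readers) is often summarised with the Dice similarity coefficient, 2|A∩B| / (|A| + |B|). The sketch below is an illustrative assumption rather than a procedure taken from [2], [4] or [5]; masks are represented as sets of voxel coordinates to keep it dependency-free.

```python
def dice_coefficient(mask_a, mask_b):
    """Dice similarity coefficient between two binary masks.

    Each mask is an iterable of voxel coordinates (e.g. (z, y, x) tuples)
    belonging to the segmented region.
    """
    a, b = set(mask_a), set(mask_b)
    if not a and not b:
        # Both masks empty: treated as perfect agreement (a convention, not a rule)
        return 1.0
    return 2 * len(a & b) / (len(a) + len(b))


reader_1 = {(0, 0), (0, 1), (1, 0), (1, 1)}
reader_2 = {(0, 1), (1, 1), (2, 1)}
print(round(dice_coefficient(reader_1, reader_2), 3))
```

A Dice value of 1 means identical masks and 0 means no overlap; features extracted from low-agreement masks deserve extra scrutiny.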

Feature Extraction and Quantification

Feature extraction converts the ROI into quantitative data using mathematical transformations. The PyRadiomics open-source Python package has emerged as a standardized tool for this purpose, following Image Biomarker Standardisation Initiative (IBSI) guidelines [4] [5]. Extracted features fall into three main categories:

- Shape-based features: Describe geometric properties of the ROI (e.g., volume, sphericity, surface area) [4]

- First-order statistics: Derived from histogram of pixel intensities (e.g., mean, median, skewness, kurtosis, entropy) [2] [4]

- Second- and higher-order texture features: Quantify spatial relationships between pixels using matrices including:

  - Gray Level Co-occurrence Matrix (GLCM): How often pairs of gray levels co-occur in pixel/voxel pairs at a given distance and direction [4]

  - Gray Level Run Length Matrix (GLRLM): Distribution of runs of consecutive pixels sharing the same gray level [4]

  - Gray Level Size Zone Matrix (GLSZM): Zones of connected pixels sharing the same gray level [4]

  - Neighborhood Gray-Tone Difference Matrix (NGTDM): Difference between a pixel's gray level and the mean gray level of its neighbors [4]
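Several of the first-order statistics listed above can be computed directly from ROI intensities. This pure-Python sketch assumes the values are already discretized (entropy is computed from the frequency of each distinct value), uses population moments, and reports non-excess kurtosis (3 for a normal distribution). PyRadiomics and the IBSI each document their own conventions (kurtosis versus excess kurtosis, for instance), so results from this sketch should not be assumed to match either exactly.

```python
import math
import statistics
from collections import Counter


def first_order_features(values):
    """Selected first-order features of ROI intensities (population moments)."""
    n = len(values)
    mu = sum(values) / n
    var = sum((v - mu) ** 2 for v in values) / n
    sd = math.sqrt(var)
    # Skewness and kurtosis are undefined for a constant ROI; 0.0 is reported here
    skewness = sum((v - mu) ** 3 for v in values) / n / sd**3 if sd > 0 else 0.0
    kurtosis = sum((v - mu) ** 4 for v in values) / n / var**2 if var > 0 else 0.0
    # Shannon entropy (bits) of the distribution of discretized values
    counts = Counter(values)
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    return {
        "mean": mu,
        "median": statistics.median(values),
        "skewness": skewness,
        "kurtosis": kurtosis,  # non-excess: a normal distribution gives 3
        "entropy": entropy,
    }


print(first_order_features([1, 2, 2, 3]))
```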

Table 2: Quantitative Texture Features and Their Clinical Correlations in Validation Studies

| Texture Feature Category | Specific Features | Representative Application | Performance Metrics |
|---|---|---|---|
| First-Order Statistics | Skewness [5] | Predicting ACC mitotic activity (venous phase CT) | AUC = 0.924 [5] |
| GLCM Features | Contrast, Correlation, Homogeneity, Energy [6] [7] | Early prediction of breast cancer chemotherapy response [6] | Accuracy = 86%, Specificity = 91% [6] |
| GLCM Texture Parameters | Autocorrelation, Cluster Prominence, Sum Average [7] | Detecting early retinal changes in diabetes [7] | 8/20 features showed significant changes (p<0.05) [7] |
| Multi-Parametric Signatures | 18 selected radiomic features [2] | Stratifying glioblastoma multiforme patients [2] | Improved patient stratification beyond clinical/genetic profiles [2] |
| Wavelet-Based Features | Transformed first-order statistics [4] | Characterizing tumor heterogeneity [4] | Enhanced prediction of treatment response [4] |

Statistical Analysis and Model Validation

The final stage involves statistical analysis to explore relationships between radiomic features and outcomes. This ranges from correlation analysis and group comparisons to building predictive models using machine learning algorithms [4]. Feature selection techniques are crucial to avoid overfitting, particularly in preclinical studies with limited sample sizes [4]. Validation in independent cohorts represents a critical step for clinical translation, as demonstrated in a 2025 breast cancer study where a QUS-based prediction model achieved 86% accuracy when validated in a separate patient cohort [6].
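One simple form of feature selection is a correlation-based redundancy filter. The sketch below is a hypothetical example, not the pipeline used in [4]: it walks through features in a given priority order and drops any feature whose absolute Pearson correlation with an already-kept feature reaches a threshold (0.9 here, an arbitrary illustrative choice). To avoid information leakage, such a filter should be fitted on the training data only and then applied unchanged to the validation cohort.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if one is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def drop_redundant(features, threshold=0.9):
    """Greedy redundancy filter.

    features: dict mapping feature name -> values (one per patient),
    iterated in priority order. A feature is kept only if its |r| with
    every previously kept feature is below the threshold.
    """
    kept = []
    for name, values in features.items():
        if all(abs(pearson(values, features[k])) < threshold for k in kept):
            kept.append(name)
    return kept


features = {
    "a": [1, 2, 3, 4],
    "b": [2, 4, 6, 8],  # perfectly correlated with "a" -> dropped
    "c": [4, 1, 3, 2],
}
print(drop_redundant(features))
```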

Validation Framework for Novel Texture Analysis Methods

Method Comparison Studies

Validating new texture analysis methods requires systematic comparison against established protocols. A 2016 study compared various texture classification methods using multiresolution analysis tools, including Discrete Wavelet Transform (DWT), Discrete Wavelet Packet Transform (DWPT), and Dual Tree Complex Wavelet Packet Transform (DTCWPT) combined with linear regression modeling [8]. The study found that DWT outperformed DWPT and DTCWPT in most classification scenarios, highlighting the importance of direct methodological comparisons when validating new analytical approaches [8].
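To make the multiresolution idea concrete, the sketch below performs a single-level 2D Haar DWT and summarises each subband by its mean energy, a common kind of wavelet texture descriptor. It is an illustrative assumption, not the feature set or regression model of [8]; in practice a library such as PyWavelets would normally be used instead of this hand-written transform.

```python
import math

_S = 1 / math.sqrt(2)  # orthonormal Haar scaling factor


def haar_1d(seq):
    """One Haar step on an even-length sequence: (approximation, detail)."""
    approx = [(seq[i] + seq[i + 1]) * _S for i in range(0, len(seq), 2)]
    detail = [(seq[i] - seq[i + 1]) * _S for i in range(0, len(seq), 2)]
    return approx, detail


def _transform_columns(matrix):
    """Apply haar_1d down every column; return (low, high) as lists of rows."""
    lows, highs = zip(*(haar_1d(list(col)) for col in zip(*matrix)))
    return [list(r) for r in zip(*lows)], [list(r) for r in zip(*highs)]


def haar_2d(image):
    """Single-level 2D Haar DWT of an image with even height and width.

    Subband names give the filter applied along rows, then along columns
    (L = low-pass, H = high-pass); naming conventions differ between tools.
    """
    row_low, row_high = zip(*(haar_1d(row) for row in image))
    ll, lh = _transform_columns(row_low)
    hl, hh = _transform_columns(row_high)
    return {"LL": ll, "LH": lh, "HL": hl, "HH": hh}


def subband_energies(image):
    """Mean squared coefficient value of each subband."""
    return {
        name: sum(v * v for row in band for v in row) / (len(band) * len(band[0]))
        for name, band in haar_2d(image).items()
    }


print(subband_energies([[1, 2], [3, 4]]))
```

Because the orthonormal Haar transform preserves total energy, the subband energies redistribute, rather than change, the image's overall signal power; this makes them easy to sanity-check.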

Technical Validation and Reproducibility Assessment

Technical validation must address multiple factors influencing feature robustness. These include gray-level discretization, isotropic resampling, ROI identification methods (manual vs. automatic), image reconstruction algorithms, and acquisition parameter variations [9]. The IBSI has emerged to standardize feature extraction and nomenclature, providing critical guidelines for improving reproducibility across institutions [9] [5].
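Test-retest or inter-scanner agreement for an individual feature is commonly summarised with an agreement index such as the intraclass correlation coefficient or Lin's concordance correlation coefficient (CCC). The sketch below computes CCC for paired measurements of one feature. The choice of CCC, and any acceptance threshold, are assumptions to be justified per study rather than requirements stated in [9] or [5]. Unlike Pearson correlation, CCC also penalises systematic offsets between the two measurements.

```python
def lins_ccc(x, y):
    """Lin's concordance correlation coefficient for paired measurements."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with >= 2 values")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    # Population (1/n) moments, as in Lin's original definition
    sxx = sum((a - mx) ** 2 for a in x) / n
    syy = sum((b - my) ** 2 for b in y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    denom = sxx + syy + (mx - my) ** 2
    if denom == 0:
        return 1.0  # both series constant and identical
    return 2 * sxy / denom


scan = [1.0, 2.0, 3.0]
rescan_shifted = [2.0, 3.0, 4.0]  # perfectly correlated but offset by +1
print(round(lins_ccc(scan, scan), 3), round(lins_ccc(scan, rescan_shifted), 3))
```

In the shifted example the Pearson correlation would be 1, yet CCC drops well below 1 because of the constant offset, which is exactly the kind of scanner bias a reproducibility study needs to expose.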

Clinical Validation Pathways

Clinical validation requires demonstrating correlation between texture features and clinically relevant endpoints. In oncology, this includes correlating radiomic features with pathological complete response, overall survival, tumor grade, and genetic markers [2] [10]. For example, a 2025 study validated quantitative ultrasound (QUS) texture features for predicting neoadjuvant chemotherapy response in breast cancer, achieving 86% accuracy in an independent cohort when applied in the first week of treatment [6].

Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Texture Analysis Studies

| Category | Specific Tools/Software | Primary Function | Application Context |
|---|---|---|---|
| Feature Extraction | PyRadiomics [4] [5] | Open-source feature extraction | Clinical & preclinical radiomics |
| | IBEX [2] | Imaging biomarker exploration | Research environments |
| | LIFEx [2] | Radiomic feature analysis | Clinical research |
| Image Segmentation | 3D Slicer [4] [5] | Manual 3D segmentation | Multi-modal imaging |
| | ITK-SNAP [4] | Semi-automatic segmentation | Preclinical studies |
| | VivoQuant [4] | ROI delineation | Preclinical imaging |
| Medical Imaging | CT Scanner [5] | Anatomical imaging | Tumor characterization |
| | MRI Scanner [2] | Soft tissue contrast | Neuroimaging, oncology |
| | Ultrasound System [6] | Quantitative ultrasound | Treatment response monitoring |
| Statistical Analysis | R/Python [10] | Statistical modeling | Feature selection, validation |
| | Matlab [4] | Custom algorithm development | Methodological research |

Texture analysis, spanning from mechanical property testing to image-based radiomics, provides powerful quantitative frameworks for material and medical sciences. Validation of new texture analysis methods requires rigorous comparison against established protocols across technical, biological, and clinical domains. The exponential growth of radiomics in medical applications, particularly in oncology, underscores its potential to provide non-invasive biomarkers for personalized medicine. As the field advances, priorities should emphasize standardization, large-scale multicenter validation, and clinical translation to maximize the potential of texture analysis in both industrial and healthcare applications.

Texture Profile Analysis (TPA) and Grey Level Co-occurrence Matrix (GLCM) represent two established pillars of texture quantification across scientific disciplines. TPA provides direct mechanical quantification of material properties through controlled deformation, simulating the biting action [11]. In parallel, GLCM offers image texture characterization by statistically analyzing the spatial relationship of pixel intensities, revealing patterns not discernible to the human eye [12]. While TPA originated from material science and food technology, GLCM emerged from digital image processing and remote sensing [12] [11]. Despite their different origins, both methods have evolved into standardized protocols for objective texture assessment across pharmaceuticals, food science, medical imaging, and materials science. This guide provides a comparative analysis of their performance, experimental protocols, and applications, supporting researchers in selecting appropriate methodologies for validating new texture analysis techniques against these established standards.

Parameter Comparison: Quantitative Descriptors

Core Parameters of TPA and GLCM

Table 1: Fundamental Parameters of Texture Profile Analysis (TPA)

| Parameter | Definition | Interpretation | Representative Values |
|---|---|---|---|
| Hardness | Peak force during first compression cycle | Material firmness or resistance to deformation | Expressed in force units; [13] reports related stiffness values of 56.7 kPa (tofu) to 418.9 kPa (plant-based turkey) |
| Springiness | Ratio of time difference during second vs. first compression | Degree to which a deformed material springs back toward its original state | Time diff 4:5 / Time diff 1:2 [11] |
| Cohesiveness | Ratio of positive force area during second vs. first compression | Internal bonding strength of the material | Area 4:6 / Area 1:3 [11] |
| Gumminess | Product of Hardness × Cohesiveness | Energy required to disintegrate a semisolid food for swallowing | Calculated parameter [11] |
| Chewiness | Product of Hardness × Cohesiveness × Springiness | Energy required to masticate a solid food for swallowing | Calculated parameter [11] |
| Adhesiveness | Negative force area during probe withdrawal | Work necessary to overcome attractive forces between food surfaces | Measured from negative area of curve [11] |
| Resilience | Ratio of 1st cycle decompression area to compression area | How quickly material recovers from deformation | Area 2:3 / Area 1:2 [11] |

Table 2: Fundamental Haralick Features from Grey Level Co-occurrence Matrix (GLCM)

| Parameter | Mathematical Definition | Interpretation | Application Example |
|---|---|---|---|
| Contrast | $\sum_{i,j}(i-j)^2g(i,j)$ | Measures local intensity variations, representing texture clarity | Prognostic for esophageal adenocarcinoma survival (with GLCM_Correlation) [14] [15] |
| Correlation | $\sum_{i,j}\frac{(i-\mu)(j-\mu)g(i,j)}{\sigma^2}$ | Measures linear dependency of gray levels, representing pattern regularity | Independent prognostic value in cancer CT imaging [12] [15] |
| Energy | $\sum_{i,j}g(i,j)^2$ | Also called Angular Second Moment; measures textural uniformity | Used in crop classification from drone imagery [16] |
| Homogeneity | $\sum_{i,j}\frac{1}{1+(i-j)^2}g(i,j)$ | Also called Inverse Difference Moment; measures local homogeneity | Extracted from hyperspectral images of salmon fillets [17] |
| Entropy | $-\sum_{i,j}g(i,j)\log_2 g(i,j)$ | Measures randomness and complexity of texture | Feature in salmon texture assessment [17]; Shannon entropy below 6.8 for cooked meat products [18] |
| Cluster Shade | $\sum_{i,j}((i-\mu)+(j-\mu))^3g(i,j)$ | Measures skewness of the matrix, representing texture uniformity | Included in standard GLCM feature sets [12] |
| Cluster Prominence | $\sum_{i,j}((i-\mu)+(j-\mu))^4g(i,j)$ | Measures kurtosis of the matrix, representing texture asymmetry | Included in standard GLCM feature sets [12] |

Performance Comparison in Validation Studies

Table 3: Comparative Performance of TPA and GLCM Across Applications

| Field | TPA Performance | GLCM Performance | Comparative Efficacy |
|---|---|---|---|
| Food Science | Direct measurement of mechanical properties (hardness, chewiness) [13] | Indirect prediction of TPA parameters from images (R² = 0.62-0.89 for salmon) [17] | TPA: Gold standard for direct measurement; GLCM: Effective for non-destructive prediction |
| Medical Imaging | Not applicable to medical imaging | GLCM_Contrast + GLCM_Correlation improved survival prediction (AUC: 0.68 vs 0.56 for stage alone) [15] | GLCM provides unique prognostic value unavailable through other methods |
| Agriculture | Limited application for field use | 13.65% improvement in crop classification accuracy vs. spectral data alone [16] | GLCM enables superior pattern recognition in heterogeneous environments |
| Materials Science | Standard for viscoelastic characterization | Limited application to surface texture analysis | TPA remains primary method for bulk mechanical properties |

Experimental Protocols: Methodological Approaches

Standard TPA Protocol for Solid Foods

The double compression test serves as the gold standard for TPA characterization. The protocol involves:

Sample Preparation: Samples are typically cut into uniform bite-sized pieces (commonly cylinders with a 1:1 height-to-diameter ratio). For plant-based and animal meats, samples are cut to 15 mm height × 15 mm diameter [13].

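Instrument Settings: A texture analyzer (e.g., TA-XT Plus, Stable Micro Systems) equipped with a compression platen is used. The test speed, compression strain, and pause between the two compression cycles should be fixed in advance and reported, because they directly affect the calculated parameters.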

Data Collection: The force-time curve is recorded throughout two complete compression-decompression cycles, with key parameters extracted from the resulting curve peaks and areas [11].

Parameter Calculation: The seven primary TPA parameters are calculated from the force-time curve according to established formulas [11].

Figure 1: TPA Force-Time Curve Analysis. Diagram shows key parameters extracted from a typical double compression test, including hardness (peak force), adhesiveness (negative area), and the various areas used to calculate cohesiveness and resilience [11].
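The parameter formulas from the TPA table can be sketched in code. This is a minimal illustration, assuming the force-time curve is available as sampled lists and that six landmark indices (start, peak and end of the positive-force region of each compression, matching the 1:2, 2:3, 4:5 and 4:6 notation in the table) have already been identified. Landmark detection, the trapezoidal integration and the sign convention for adhesiveness are assumptions, and commercial software may define some parameters slightly differently.

```python
def _area(t, f, i0, i1, clip):
    """Trapezoidal area of clip(f) between sample indices i0 and i1."""
    return sum(
        0.5 * (clip(f[k]) + clip(f[k + 1])) * (t[k + 1] - t[k]) for k in range(i0, i1)
    )


def _positive(v):
    return max(v, 0.0)


def _negative(v):
    return min(v, 0.0)


def tpa_parameters(t, f, p1, p2, p3, p4, p5, p6):
    """Seven TPA parameters from a double-compression force-time curve.

    p1..p6 are sample indices of the landmarks (hypothetical labelling):
    p1/p4 start of compressions 1/2, p2/p5 force peaks,
    p3/p6 end of the positive-force region of each cycle.
    """
    hardness = f[p2]
    area_12 = _area(t, f, p1, p2, _positive)  # first compression stroke
    area_23 = _area(t, f, p2, p3, _positive)  # first withdrawal stroke
    area_46 = _area(t, f, p4, p6, _positive)  # whole second cycle
    cohesiveness = area_46 / (area_12 + area_23)
    springiness = (t[p5] - t[p4]) / (t[p2] - t[p1])
    gumminess = hardness * cohesiveness
    return {
        "hardness": hardness,
        "springiness": springiness,
        "cohesiveness": cohesiveness,
        "gumminess": gumminess,
        "chewiness": gumminess * springiness,
        "adhesiveness": _area(t, f, p3, p4, _negative),  # negative area, <= 0
        "resilience": area_23 / area_12,
    }


# Synthetic triangular curve (force in N, time in s), for illustration only
t = [0, 2, 4, 5, 6, 7, 8]
f = [0, 10, 0, -1, 0, 6, 0]
print(tpa_parameters(t, f, 0, 1, 2, 4, 5, 6))
```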

Standard GLCM Extraction and Analysis Protocol

GLCM analysis follows a systematic procedure for quantifying texture patterns:

Image Acquisition: Obtain images using an appropriate modality (satellite imagery, CT scans, hyperspectral imaging, etc.). For medical CT, the esophageal study in [15] used arterial-phase images with slice thickness interpolated to 2 mm.

Image Preprocessing:

- Convert to grayscale if working with color images

- Apply intensity normalization (e.g., Hounsfield units for CT)

- Discretize gray levels (typically 8-bit, 256 levels)

- For radiomics, follow Image Biomarker Standardisation Initiative (IBSI) recommendations [15]
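The discretization step can be made explicit in code. The sketch below implements the two schemes described in the IBSI reference manual, a fixed bin number (as in the 256-level example above) and a fixed bin size, using 1-based bin indices. It is a simplified illustration: the function names are hypothetical, and details such as re-segmentation ranges are omitted.

```python
import math


def discretize_fixed_bin_number(values, n_bins):
    """Map intensities to bins 1..n_bins spread over the ROI's own range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1] * len(values)  # constant ROI: everything in one bin
    return [
        n_bins if v >= hi else math.floor(n_bins * (v - lo) / (hi - lo)) + 1
        for v in values
    ]


def discretize_fixed_bin_size(values, bin_width, lower_bound=None):
    """Map intensities to bins of constant width starting at lower_bound."""
    lo = min(values) if lower_bound is None else lower_bound
    return [math.floor((v - lo) / bin_width) + 1 for v in values]


hu = [0, 10, 20, 30, 40]
print(discretize_fixed_bin_number(hu, 4))  # bins relative to this ROI's range
print(discretize_fixed_bin_size(hu, 25))   # bins of 25 units from the minimum
```

A fixed bin number adapts to each ROI's intensity range, whereas a fixed bin size keeps bin edges comparable across patients when intensities are calibrated (as with HU); because GLCM features depend on the chosen scheme, the choice should be reported.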

GLCM Construction: Calculate the matrix by defining:

- Spatial relationship (distance, d): Typically 1 pixel

- Orientation (θ): Often computed at 0°, 45°, 90°, and 135°, then averaged

- Matrix size: Corresponding to number of gray levels (typically 256×256) [12]

Feature Extraction: Calculate Haralick features from the normalized GLCM using standard mathematical formulas [12].

Validation: Assess feature stability with respect to imaging parameters and scanner variations [15].

Figure 2: GLCM Analysis Workflow. Diagram illustrates the sequential process from image input through feature calculation, highlighting key parameters that must be defined at each stage [12] [15] [16].
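The GLCM steps above (symmetric matrix, distance d = 1, four orientations averaged) and several of the Haralick features from the GLCM table can be sketched in pure Python. This assumes the image is already discretized to integers 0 to levels-1; using 0-based gray levels leaves contrast, correlation, energy, homogeneity and entropy unchanged. The offsets follow the row/column convention of scikit-image's `graycomatrix`, and the flat-region value for correlation is a convention chosen here. For real studies, an IBSI-aligned tool such as PyRadiomics is preferable.

```python
import math

# (row, col) offsets for d = 1; rows grow downwards
OFFSETS = {0: (0, 1), 45: (-1, 1), 90: (-1, 0), 135: (-1, -1)}


def glcm(image, levels, offset):
    """Symmetric, normalized GLCM for one offset; image holds ints in 0..levels-1."""
    dr, dc = offset
    counts = [[0] * levels for _ in range(levels)]
    rows, cols = len(image), len(image[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dr, c + dc
            if 0 <= r2 < rows and 0 <= c2 < cols:
                i, j = image[r][c], image[r2][c2]
                counts[i][j] += 1
                counts[j][i] += 1  # count the reverse pair too (symmetry)
    total = sum(map(sum, counts))
    if total == 0:
        raise ValueError("image too small for this offset")
    return [[v / total for v in row] for row in counts]


def haralick_features(p):
    """Selected Haralick features of a normalized, symmetric GLCM p."""
    cells = [(i, j, g) for i, row in enumerate(p) for j, g in enumerate(row)]
    mu = sum(i * g for i, _, g in cells)  # row mean (= column mean by symmetry)
    var = sum((i - mu) ** 2 * g for i, _, g in cells)
    cov = sum((i - mu) * (j - mu) * g for i, j, g in cells)
    return {
        "contrast": sum((i - j) ** 2 * g for i, j, g in cells),
        "correlation": cov / var if var > 0 else 1.0,  # flat region: set to 1
        "energy": sum(g * g for _, _, g in cells),
        "homogeneity": sum(g / (1 + (i - j) ** 2) for i, j, g in cells),
        "entropy": -sum(g * math.log2(g) for _, _, g in cells if g > 0),
    }


def glcm_features(image, levels, angles=(0, 45, 90, 135)):
    """Average each feature over the requested orientations."""
    per_angle = [haralick_features(glcm(image, levels, OFFSETS[a])) for a in angles]
    return {k: sum(feat[k] for feat in per_angle) / len(per_angle) for k in per_angle[0]}


# Classic 4x4 test image with four gray levels
img = [[0, 0, 1, 1], [0, 0, 1, 1], [0, 2, 2, 2], [2, 2, 3, 3]]
print(glcm_features(img, levels=4))
```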

Comparative Applications Across Fields

Food Science and Quality Control

In food science, TPA serves as the reference method for direct mechanical characterization, while GLCM provides non-destructive alternatives through image analysis:

Meat Product Analysis: TPA quantified plant-based meats successfully replicating the full viscoelastic texture spectrum of processed animal meat, with stiffness values ranging from 56.7±14.1 kPa (tofu) to 418.9±41.7 kPa (plant-based turkey) [13]. GLCM analysis of confocal laser scanning microscopy images revealed microstructural differences in cooked meat products, with Shannon entropy values below 6.8 for all products and strong correlations to sensory attributes [18].

Salmon Quality Assessment: Hyperspectral imaging with GLCM texture features successfully predicted TPA parameters non-invasively, with spectral features outperforming image texture features for prediction accuracy. The method enabled spatial mapping of texture distribution across fillets, addressing sampling heterogeneity issues [17].

Mastication Studies: The ChewNet dataset integrated TPA measurements with robotic chewing simulations and image analysis, enabling correlation between mechanical properties, chewing cycles, and visual changes in food boluses [19].

Medical Imaging and Radiomics

GLCM has demonstrated significant value in medical imaging for prognostic assessment and disease characterization:

Esophageal Adenocarcinoma: In a multicenter validation study, GLCM_Correlation and GLCM_Contrast provided incremental prognostic information for 3-year overall survival beyond clinical staging. A clinicoradiomic model (ClinRad) incorporating these features achieved an AUC of 0.68, significantly outperforming staging alone (ΔAUC=0.12, p=0.04) [15].
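An AUC can be read as the probability that a randomly chosen patient with the outcome receives a higher model score than a randomly chosen patient without it. The sketch below computes that pairwise (Mann-Whitney) estimate directly. The scores and labels are made-up illustrative data, not values from [15], and testing whether a ΔAUC between two models is significant requires an additional paired test that is not shown here.

```python
def auc(scores, labels):
    """Pairwise (Mann-Whitney) AUC estimate; labels are 1 (outcome) or 0."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ordering
    return wins / (len(pos) * len(neg))


# Illustrative data only
print(auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))
```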

Tumor Characterization: GLCM features quantify tumor heterogeneity, which correlates with pathological progression and treatment response. The method requires strict standardization of image acquisition parameters and following IBSI guidelines for reproducible radiomic analysis [14].

Remote Sensing and Agriculture

GLCM enables improved pattern recognition in satellite and aerial imagery:

Crop Classification: GLCM texture features improved classification accuracy of crops from drone imagery by 13.65% compared to using spectral information alone. Random Forest classification on GLCM features achieved 86.3% accuracy for distinguishing maize, bare soil, sugar beet, winter wheat, and grassland [16].

Land Use Classification: Studies comparing texture analysis methods found selected GLCM features and granulometric analysis effective for improving land use/cover classification in satellite imagery, with texture being more important for higher resolution images [12].

Research Reagent Solutions and Essential Materials

Table 4: Essential Research Materials for Texture Analysis Protocols

| Category | Specific Products/Techniques | Function & Application |
|---|---|---|
| TPA Instruments | TA-XT Plus (Stable Micro Systems) | Universal testing machine for standardized TPA measurements [18] |
| GLCM Software | PyRadiomics (v3.0.1) | Open-source platform for standardized radiomic feature extraction [15] |
| Image Acquisition | Hyperspectral Imaging (400-1758 nm) | Captures spatial and spectral data for non-destructive texture prediction [17] |
| Medical Imaging | Contrast-enhanced CT (arterial phase) | Provides source images for radiomic feature extraction in medical applications [15] |
| Compression Accessories | Cylindrical probes (35-75 mm diameter) | Standardized compression surfaces for TPA testing [13] |
| Sample Preparation | Custom cutting jigs | Ensures uniform sample dimensions (e.g., 15 mm height × 15 mm diameter) [13] |

TPA and GLCM serve complementary roles in texture analysis, with selection dependent on research objectives, sample type, and available resources. TPA remains the gold standard for direct mechanical characterization of material properties, providing fundamental measurements of hardness, cohesiveness, and elasticity that correlate well with sensory perception. GLCM offers powerful pattern quantification capabilities for image-based texture assessment, enabling non-destructive analysis, prognostic modeling in medical imaging, and improved classification in remote sensing.

For method validation studies, TPA provides reference measurements for calibrating and validating indirect GLCM approaches. The integration of both methods in multimodal assessment frameworks, as demonstrated in food science and medical imaging applications, provides comprehensive texture characterization across multiple scales. When implementing these established protocols, researchers should adhere to standardized procedures, report critical parameters, and validate method performance against domain-specific standards to ensure reproducible and interpretable results.

In the rapidly advancing field of precision oncology, the development of novel diagnostic and monitoring methods is accelerating. These new techniques, particularly those based on advanced imaging textures and genomic profiling, promise to enhance the personalization of cancer care. However, their promise can only be realized through rigorous validation against established protocols. This process is not merely an academic exercise but a critical step that directly impacts the core drivers of modern oncology: the speed of clinical decision-making, the cost-effectiveness of care, and the ultimate precision of therapeutic interventions. Validation ensures that new methods are reliable, reproducible, and truly contributory to patient outcomes before they are integrated into routine clinical practice and clinical trials [20] [21].

This guide objectively compares emerging methodologies with established protocols, providing a framework for researchers and drug development professionals to evaluate new techniques within the broader context of validating new texture methods against established protocols.

The Critical Role of Method Validation in Oncology

Validation serves as the essential bridge between innovative research and actionable clinical application. In precision oncology, where treatments are increasingly tailored to individual molecular and cellular profiles, the reliability of the data guiding these decisions is paramount. The primary goals of method validation include:

- Establishing Reliability and Reproducibility: Ensuring a new method produces consistent results across different instruments, operators, and time, which is a foundational requirement for multi-center clinical trials [21].

- Demonstrating Clinical Utility: Proving that the method provides information that can lead to better patient outcomes, more efficient use of resources, or both. This is especially crucial for novel digital measures where established reference standards may not exist [21].

- Guiding Implementation in Routine Care: Validation determines which parts of a new concept are mature enough for cost-effective implementation in routine healthcare, separating true advancement from premature translation [20].

Without robust validation, there is a risk of adopting technologies that may not deliver meaningful clinical benefit, potentially wasting scarce resources and, more importantly, leading to suboptimal patient care. This is particularly relevant for complex "omics"-based approaches, where the gap between a scientifically interesting finding and a clinically actionable result can be wide [20].

Comparative Analysis of Emerging vs. Established Methods

The following sections provide a detailed, data-driven comparison of two key areas where new methods are undergoing validation: treatment response assessment and genomic profiling.

Validating a Novel Imaging Method for Early Response Assessment

A significant challenge in oncology is the delay in assessing whether a patient is responding to treatment. An established protocol, like waiting to measure tumor shrinkage on a post-surgical specimen, can take months. A novel method using Quantitative Ultrasound (QUS) with texture analysis and machine learning aims to detect microstructural changes in tumors as early as one week after starting treatment [6].

Experimental Protocol for QUS Validation [6]:

- Objective: To validate a previously developed machine learning model for predicting response to neoadjuvant chemotherapy (NAC) in breast cancer patients using QUS texture features.

- Patient Cohort: 51 patients with breast cancer (median tumor size 3.6 cm) eligible for NAC.

- Methodology: Radio frequency ultrasound data were acquired volumetrically from breast tumors before treatment (week 0) and during the first week of treatment. Quantitative ultrasound parametric maps (e.g., mid-band fit, spectral slope) were generated. A Gray-Level Co-occurrence Matrix (GLCM) texture analysis was then applied to these maps to extract features like contrast, correlation, homogeneity, and energy. The changes in these features at week 1 were fed into a pre-trained support vector machine (SVM) classification model.

- Response Definition: Tumor response was defined as a ≥30% reduction in target lesion size on the resection specimen compared to baseline imaging.

- Validation Metric: The model's performance was assessed in this independent cohort using accuracy, sensitivity, specificity, and negative predictive value (NPV).
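These validation metrics follow directly from a 2×2 confusion matrix. In the sketch below the counts are hypothetical, not those of [6]. Which class is treated as positive (responders or non-responders) must be fixed before computing the metrics, because sensitivity and specificity swap, as do the two predictive values, when the labels are reversed.

```python
def _ratio(num, den):
    # Return NaN rather than raising when a metric is undefined
    return num / den if den else float("nan")


def diagnostic_metrics(tp, fp, tn, fn):
    """Standard classification metrics from confusion-matrix counts."""
    return {
        "accuracy": _ratio(tp + tn, tp + fp + tn + fn),
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "ppv": _ratio(tp, tp + fp),
        "npv": _ratio(tn, tn + fn),
    }


# Hypothetical counts for illustration only
print(diagnostic_metrics(tp=8, fp=2, tn=30, fn=10))
```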

The table below summarizes the performance of this novel QUS method against the established protocol of pathological assessment post-surgery.

Table 1: Performance Comparison of Early Response Assessment Methods in Breast Cancer

| Method Characteristic | Established Protocol (Pathological Assessment) | Novel Method (QUS with Machine Learning) |
|---|---|---|
| Primary Technology | Histopathological examination of resected tumor | Quantitative ultrasound (QUS) texture analysis & machine learning |
| Time to Result | Several months (after surgery) | 1 week after treatment start |
| Key Performance Metrics | Considered the reference standard | Accuracy: 86%; Specificity: 91%; Sensitivity: 50%; Negative Predictive Value (NPV): 93% |
| Main Advantage | Direct examination of tumor tissue | Ultra-early assessment, non-invasive |
| Main Limitation | Results come too late to change neoadjuvant therapy | Lower sensitivity; challenges with poorly defined tumors |

The high specificity (91%) and NPV (93%) of the QUS method are clinically significant. The NPV indicates that when the model predicts a response, that prediction is very likely to be correct, potentially allowing clinicians to continue effective regimens with confidence. Conversely, the 50% sensitivity means that about half of the positive-class cases (in this framing, non-responders) are missed, highlighting an area for further model refinement [6].

The Workflow of Validating a Novel Diagnostic Method

The process of validating a new method like QUS follows a structured pathway to ensure its conclusions are robust. The following diagram visualizes the key stages from initial development to the final assessment of clinical value.

Cost Analysis: Genomic Profiling in Precision Oncology

Precision oncology is often synonymous with genomic profiling. While established gene panels are widely used, understanding the true cost of these complex methods is essential for their sustainable implementation. A microcosting study from Norway provides a detailed breakdown of the costs associated with comprehensive genomic profiling using the TruSight Oncology 500 panel within a molecular tumor board infrastructure [22].

Experimental Protocol for Cost Analysis [22]:

- Objective: To conduct a microcosting of genomic profiling in precision cancer medicine, calculating costs per sample, by workflow steps, and by cost categories.

- Methodology: The study used a flexible costing framework informed by site visits and staff discussions at a major university hospital. Resource use was tracked for each step of the workflow, from sample preparation to data analysis and reporting. Sensitivity analyses were performed to account for uncertainties in estimates, different batch sizes, and the impact of automating specific steps.

- Cost Calculation: Total costs were calculated by summing the inputs across all workflow steps and cost categories (e.g., personnel, consumables, equipment).
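The summation described above can be sketched as a small costing model in which some inputs are incurred per sample and others per batch run, so that batch size affects the cost per sample. Every step name, category and amount below is a hypothetical placeholder, not a figure from [22].

```python
from dataclasses import dataclass


@dataclass
class CostInput:
    step: str        # workflow step, e.g. "library preparation"
    category: str    # cost category, e.g. "consumables" or "personnel"
    per_sample: float = 0.0  # incurred once for every sample
    per_run: float = 0.0     # incurred once per batch, shared by its samples


def cost_per_sample(inputs, batch_size):
    """Return (total cost per sample, cost per sample by category)."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    by_category = {}
    for item in inputs:
        share = item.per_sample + item.per_run / batch_size
        by_category[item.category] = by_category.get(item.category, 0.0) + share
    return sum(by_category.values()), by_category


# Hypothetical inputs (USD) for illustration only
inputs = [
    CostInput("library preparation", "consumables", per_sample=100.0),
    CostInput("library preparation", "personnel", per_run=400.0),
    CostInput("sequencing", "equipment", per_run=800.0),
]
for batch in (8, 16):
    total, _ = cost_per_sample(inputs, batch)
    print(batch, total)
```

A sensitivity analysis in this framework amounts to re-running the calculation with varied inputs and batch sizes.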

Table 2: Microcosting Analysis of Genomic Profiling per Sample

| Cost Category | Cost (USD) | Percentage of Total Cost | Key Drivers |
|---|---|---|---|
| Total Cost per Sample | $2,944 | 100% | Sum of all categories |
| Consumables | - | Major category | Sequencing reagents, kits |
| Personnel | - | Major category | Technologist, bioinformatician, and analyst time |
| Equipment & Overhead | - | Significant category | Depreciation of sequencers, IT infrastructure, facility costs |
| Total Cost (Range) | $2,366 - $4,307 | - | Reflects uncertainties in resource estimates |

The study found that consumables and personnel were the most resource-intensive cost categories. A key finding was that automating the library preparation step could allow for a higher weekly batch size with a slightly lower cost per sample ($2,881), despite the initial investment in equipment. This detailed costing highlights that beyond the sticker price of a test, factors like workflow optimization, batch size, and personnel bottlenecks are critical drivers of the final cost and scalability of precision oncology methods [22].

The Scientist's Toolkit: Essential Reagents & Materials

The validation of new methods relies on a suite of specialized reagents and technological solutions. The following table details key resources mentioned in the featured research.

Table 3: Key Research Reagent Solutions for Validation Studies

| Item Name | Function / Description | Application Context |
|---|---|---|
| TruSight Oncology 500 | A comprehensive gene panel for genomic profiling of solid tumors. | Used in the microcosting study to identify actionable mutations and guide therapy [22]. |
| Gray-Level Co-occurrence Matrix (GLCM) | An image analysis technique that quantifies texture by statistically analyzing the spatial relationship of pixel intensities. | Used to extract texture features (e.g., contrast, homogeneity) from QUS parametric maps for machine learning [6]. |
| Support Vector Machine (SVM) | A type of supervised machine learning algorithm used for classification and regression analysis. | The classification algorithm used to differentiate treatment responders from non-responders based on QUS texture features [6]. |
| Molecular Tumor Board (MTB) | A multidisciplinary panel of specialists (oncologists, pathologists, geneticists) that interprets complex genomic data to guide therapy. | The clinical infrastructure for which the cost of genomic profiling was analyzed [22]. |
| Sensor-based Digital Health Technology (sDHT) | Technologies (e.g., accelerometers, smartphone apps) that use sensors to capture physiological and behavioral data. | Subject of analytical validation frameworks to ensure they are fit for purpose in clinical decision-making [21]. |

The driving forces behind method validation—speed, cost, and precision—are interconnected. As the comparisons show, a successfully validated method like the QUS-based predictor can dramatically increase the speed of clinical decision-making, creating a window for early treatment adjustment. Meanwhile, detailed cost analyses are indispensable for the practical and equitable implementation of precision oncology, ensuring that advanced diagnostics remain financially sustainable [20] [22].

The future of method validation lies in moving beyond single-marker approaches. As one editorial notes, precision oncology must evolve to integrate multiple layers of biomarkers—including other 'omics', pharmacogenomics, and imaging—to create truly personalized predictions, likely powered by AI [20]. The ultimate validation of any new method will be its ability to integrate into clinical workflows, improve patient access to cutting-edge care, and demonstrably enhance outcomes through rigorous, evidence-based medicine [23].

The development of new pharmaceutical dosage forms traditionally relies on extensive lab experimentation and animal testing, a process that is both time-consuming and costly. The emergence of AI-generated textures and in silico validation models represents a paradigm shift in this field. These technologies enable researchers to create and analyze digital versions of drug products, dramatically accelerating development cycles and reducing reliance on physical experiments [24]. This guide provides an objective comparison of the current landscape of these technologies, with a specific focus on their validation against established physical protocols—a critical concern for research scientists and drug development professionals adopting these tools.

Comparative Analysis of AI Texture Generation & Validation Platforms

The following table summarizes the core performance metrics, technological approaches, and validation status of leading and emerging platforms in this domain.

Table 1: Performance Comparison of AI Texture Generation and In Silico Models

| Technology / Platform | Primary Application | Reported Performance / Accuracy | Key Strengths | Validation Status vs. Established Protocols |
|---|---|---|---|---|
| Generative AI for Pharmaceutical Formulations [24] | Oral tablet & long-acting implant optimization | Accurately predicted percolation threshold of 4.2% w/w MCC; Generated implants with controlled drug loading & particle size. | Creates realistic digital product variations from exemplar images; Guided by Critical Quality Attributes (CQAs). | High Fidelity: Synthesized structures showed comparable particle size distributions and transport properties in release media to real samples. |
| MorphDiff (Cellular Imaging) [25] | Predicting cell morphology post-perturbation | Top-k mechanism-of-action (MOA) retrieval improved by 16.9% over prior baselines and 8.0% over transcriptome-only retrieval. | Generates biologically faithful cell images from gene expression data (L1000 profile). | High Biological Concordance: >70% of generated feature distributions were statistically indistinguishable from real data; preserves correlation between gene expression and morphology. |
| MolEdit (3D Molecular Generation) [26] | De novo molecular design & lead optimization | Generates valid molecules with comprehensive symmetry; effective for zero-shot lead optimization and linker design. | Physics-aligned diffusion model; obeys physical laws and suppresses hallucinations; handles complex 3D scaffolds. | Physics-Informed: Incorporates Boltzmann-Gaussian Mixture (BGM) kernel to align with force-field energies and physical constraints, ensuring stable and realistic molecular configurations. |
| TextureGram (TXG) for RGB Image Analysis [27] | Predicting anthocyanin content in grapes | Improved model performance when fused with colourgrams (CLG) in PLS models. | Novel data reduction method codifying texture into a 1D signal; hybrid nature explores both colour and texture. | Empirically Validated: Performance statistically evaluated via ANOVA and PCA on a benchmark dataset, showing advantage in predicting chemical content. |
| AI 3D Generators (e.g., Tripo, Rodin) [28] | 3D asset creation for simulation & visualization | ~1 in 10 generations are client-ready without rework; best for simple geometry/background props. | Fast iteration for non-critical assets; Tripo offers best workflow/editability. | Limited for Precision Work: Not reliable for "hero assets" or complex geometry; requires manual QC and fixes, limiting validation against high-precision physical standards. |

Detailed Experimental Protocols for Key Technologies

Protocol: Generative AI for Pharmaceutical Formulation Structure Synthesis

This protocol outlines the methodology for creating and validating digital dosage forms, as validated in recent peer-reviewed literature [24].

- 1. Objective: To synthesize realistic, variable digital structures of pharmaceutical dosage forms in silico from exemplar images, guided by Critical Quality Attributes (CQAs) like particle size and drug loading.

- 2. Core Technology: A Continuous-Conditional Generative Adversarial Network (ccGAN) combined with an On-Demand Solid Texture Synthesis (STS) model architecture. The model is augmented with Feature-wise Linear Modulation (FiLM) layers to enable precise, interpretable control over output attributes [24].

- 3. Input Data Preparation:

- Exemplar Images: Acquire high-resolution 2D or 3D microstructure images of the sub-optimal formulation product (e.g., via microscopy).

- Conditioning Parameters: Define and quantify the CQAs (e.g., porosity, drug loading percentage, excipient concentration) for the exemplars.

- 4. Model Training & Synthesis:

- The ccGAN is trained on exemplar images, learning the underlying texture and structure.

- The conditioning parameters are fed via FiLM layers to steer the generative process, allowing synthesis of new digital structures that match desired CQAs, even outside the range of the original exemplar data.

- The On-Demand STS architecture allows for the deterministic generation of large, contiguous 3D texture volumes from smaller, independently computed chunks, reducing computational load [24].

- 5. In Silico Validation & Analysis:

- Percolation Analysis: For the case study on an oral tablet, the digital structures were analyzed to determine the minimum weight percentage (4.2%) of microcrystalline cellulose (MCC) required to form a continuous network.

- Drug Release Modeling: For the long-acting implant, the transport properties of the generated structures in release media were simulated and computed.

- 6. Physical Validation:

- The key predictions from the digital models (e.g., percolation threshold, release profile) are validated by manufacturing physical samples with the same CQAs and conducting traditional in vitro tests (e.g., dissolution testing).

- The particle size distributions and transport properties of the physical samples are compared quantitatively with those of the synthesized digital structures to confirm fidelity [24].
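The percolation check in step 5 can be illustrated in 2D: a phase percolates if a connected cluster of its pixels spans the structure from one face to the opposite face. The sketch below assumes a binary grid in which 1 marks MCC pixels, uses 4-connectivity, and searches with breadth-first search. The grid, the connectivity rule, and the `percolates` function are illustrative assumptions rather than the method of [24]; real analyses would operate on 3D volumes and would need to convert pixel (volume) fractions to weight fractions.

```python
from collections import deque


def percolates(grid: list[list[int]]) -> bool:
    """Return True if phase-1 cells connect the top row to the bottom row.

    Uses 4-connectivity (up/down/left/right) and breadth-first search.
    """
    rows, cols = len(grid), len(grid[0])
    queue = deque((0, c) for c in range(cols) if grid[0][c] == 1)
    seen = set(queue)
    while queue:
        r, c = queue.popleft()
        if r == rows - 1:
            return True  # reached the opposite face
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 1 and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append((nr, nc))
    return False


# Toy microstructures: 1 = MCC pixel, 0 = other phases (hypothetical data).
connected = [
    [0, 1, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 0],
    [0, 0, 1, 1],
]
isolated = [
    [1, 0, 0, 0],
    [1, 0, 0, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 0],
]
print(percolates(connected), percolates(isolated))  # True False
```

In a threshold study, such a check would be applied to structures generated at increasing MCC fractions, and the lowest fraction at which a spanning cluster appears would be reported (4.2% w/w in the cited case study).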

Protocol: MorphDiff for In Silico Cell Morphology Prediction

This protocol describes the workflow for using the MorphDiff diffusion model to predict cell morphology from transcriptomic data, a method with applications in toxicology and drug safety assessment [25].

- 1. Objective: To generate accurate, high-fidelity images of cellular morphology that would result from a specific genetic or chemical perturbation, using only gene expression data (L1000 profile) as input.

- 2. Core Technology: A latent diffusion model guided by a transcriptomic vector (L1000) via attention mechanisms. It uses a Morphology Variational Autoencoder (MVAE) to compress and reconstruct microscope images [25].

- 3. Input Data:

- L1000 Gene Expression Profile: A vector representing the expression levels of 978 landmark genes after a perturbation.

- (Optional) Control Image: For the image-to-image (I2I) transformation mode, a control cell image can be provided to be transformed into its perturbed state.

- 4. Image Generation:

- For Gene-to-Image (G2I) generation, the model starts from noise and denoises it over multiple steps, with each step conditioned on the L1000 transcriptome vector.

- For Image-to-Image (I2I) transformation, an SDEdit-style procedure is used, where noise is added to a control image and then denoised, guided by the transcriptome of the target perturbation [25].

- 5. Validation & Phenotypic Profiling:

- Feature Extraction: Hundreds of morphological features (texture, intensity, granularity) are extracted from both real and generated images using CellProfiler.

- Distribution Analysis: The distributions of these features are statistically compared (e.g., using the CMMD metric) to assess whether the generated data are indistinguishable from the ground truth.

- Mechanism-of-Action (MOA) Retrieval: The ultimate validation involves using DeepProfiler embeddings from the generated images to retrieve drugs with known MOAs from a database. The accuracy of this retrieval is benchmarked against using real images and transcriptome data alone [25].
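As a simpler stand-in for the distribution comparison in step 5 (the CMMD metric used in [25] is not reproduced here), the sketch below applies the two-sample Kolmogorov-Smirnov statistic to a single morphological feature from real and generated images. The feature values and function names are hypothetical, and the critical value uses the common large-sample approximation with c(0.05) = 1.358; for small samples, exact tables or `scipy.stats.ks_2samp` would be preferable.

```python
import math
from bisect import bisect_right


def ks_statistic(a: list[float], b: list[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic D = max |F_a(x) - F_b(x)|."""
    a_sorted, b_sorted = sorted(a), sorted(b)
    d = 0.0
    # The supremum of the ECDF difference is attained at an observed value.
    for x in a_sorted + b_sorted:
        fa = bisect_right(a_sorted, x) / len(a_sorted)
        fb = bisect_right(b_sorted, x) / len(b_sorted)
        d = max(d, abs(fa - fb))
    return d


def ks_critical_value(n: int, m: int, c_alpha: float = 1.358) -> float:
    """Large-sample critical value; c_alpha = 1.358 corresponds to alpha = 0.05."""
    return c_alpha * math.sqrt((n + m) / (n * m))


# Hypothetical values of one texture feature from real and generated images.
real = [0.42, 0.51, 0.47, 0.55, 0.49, 0.53]
generated = [0.44, 0.50, 0.48, 0.56, 0.52, 0.46]
d = ks_statistic(real, generated)
crit = ks_critical_value(len(real), len(generated))
print(f"D = {d:.2f}, critical = {crit:.2f}, indistinguishable: {d < crit}")
# D = 0.17, critical = 0.78, indistinguishable: True
```

Repeating such a test across hundreds of CellProfiler features and reporting the share that pass is one way to arrive at summary statements such as "the majority of feature distributions were statistically indistinguishable".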

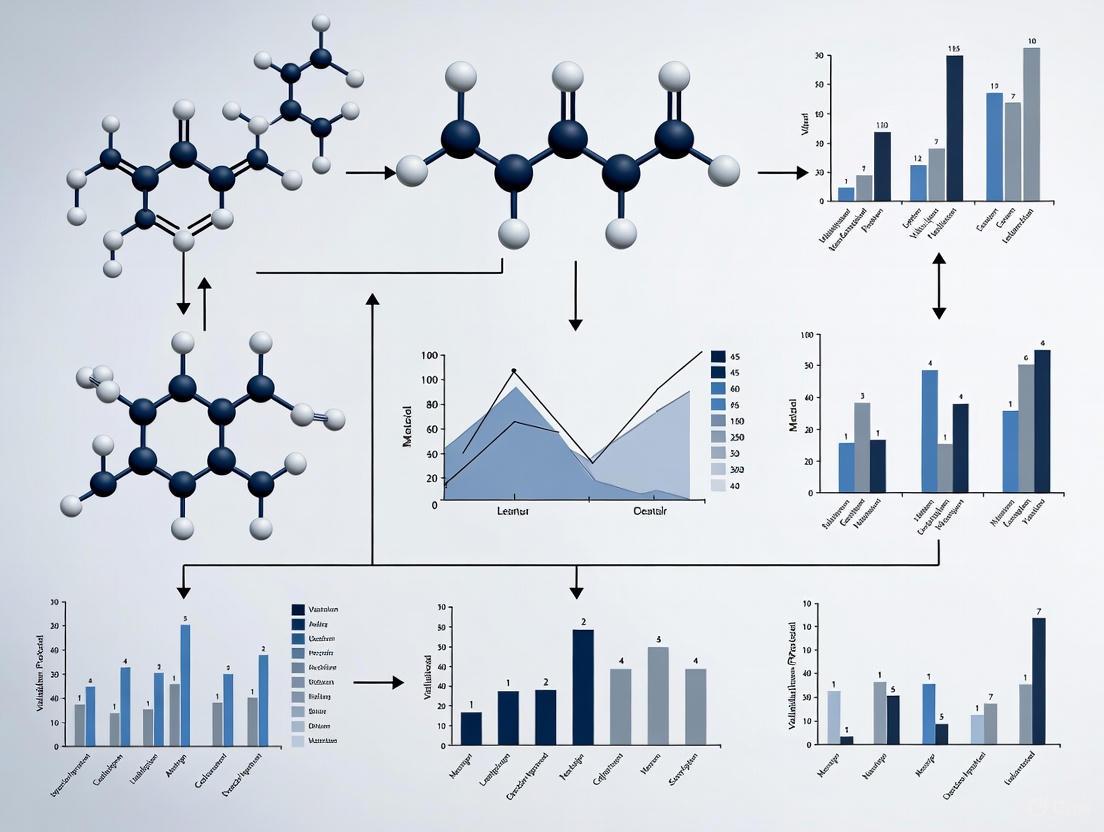

Workflow Visualization of In Silico Validation

The following diagram illustrates the core logical workflow for developing and validating an AI-based texture or structure generation model in a pharmaceutical context, integrating the protocols above.

In Silico Model Development and Validation Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

The implementation and validation of AI-generated textures require a combination of digital and physical tools. The following table details key resources mentioned in the featured research.

Table 2: Essential Reagents and Solutions for AI Texture and In Silico Model Research

| Item / Solution | Function / Purpose | Application Context |
|---|---|---|
| High-Resolution Microscope Images | Serve as the foundational "exemplar data" for training generative AI models to understand material microstructure. | Pharmaceutical formulation optimization [24]; Wear particle classification [29]. |
| Critical Quality Attributes (CQAs) | Quantifiable properties (e.g., particle size, drug loading) used as conditional inputs to steer AI generation toward desired outputs. | Guided synthesis of digital dosage forms [24]. |
| L1000 Gene Expression Profile | A standardized molecular readout used as a conditional input to predict changes in cellular morphology. | MorphDiff model for predicting cell response to perturbations [25]. |
| Texture Analyser | An instrument that provides objective, quantitative measurements of material physical properties (e.g., hardness, firmness, adhesion). | Serves as the gold standard for empirical and imitative testing, providing ground-truth data for validating AI-generated physical predictions [30]. |
| CellProfiler / DeepProfiler | Open-source software for extracting quantitative features from biological images. Used to create a morphological "fingerprint." | Translating AI-generated cell images into quantifiable, biologically meaningful data for validation and MOA analysis [25]. |
| Generative AI Model Architectures (e.g., ccGAN, Diffusion Models) | The core computational engine that learns the distribution of real-world structures and generates novel, realistic digital counterparts. | Creating digital formulations, molecular structures, or cell images for preliminary in silico screening [24] [25] [26]. |
| Physics-Informed Kernel (e.g., BGM Kernel) | A computational module that incorporates physical laws (e.g., force-field energies) into the AI's generation process. | Suppresses physically impossible "hallucinations" in generated 3D molecular structures, ensuring thermodynamic stability and realism [26]. |

The frontier of AI-generated textures and in silico models is rapidly advancing, with technologies like ccGANs and diffusion models demonstrating high fidelity against established physical and biological validation protocols. While tools for 3D molecular generation and cellular imaging are showing remarkable accuracy in specialized tasks, the broader field of general 3D asset creation remains less mature for precision-critical applications. The ongoing challenge and focus of current research is the tight integration of physics-informed constraints and robust, standardized experimental benchmarking to ensure these powerful in silico tools are both predictive and reliable for accelerating scientific discovery and drug development.

Implementing Novel Texture Methods: From Bench to Bedside

For patients with locally advanced breast cancer (LABC), neoadjuvant chemotherapy (NAC) is a standard initial treatment aimed at reducing tumour size before surgery. However, tumour response to NAC is highly variable, with only 15-40% of patients achieving a complete pathological response, while approximately 30% demonstrate little to no response [31] [32]. The critical clinical challenge lies in the timing of response assessment; determining whether a patient is responding typically relies on post-surgical pathological evaluation or anatomical imaging months after treatment initiation. This delay in identifying non-responders prevents timely adjustment of therapy and may compromise patient outcomes [6].

This case study examines the validation of Quantitative Ultrasound (QUS) as a non-invasive method for early prediction of chemotherapy response. Unlike conventional B-mode ultrasound, which primarily images anatomical structures, QUS analyzes raw radiofrequency (RF) signals to quantify microstructural tissue properties. The core hypothesis is that QUS can detect chemotherapy-induced cellular changes—such as alterations in cell density, size, and organization—that precede macroscopic tumour shrinkage [6] [33]. We frame this investigation within the broader thesis of validating novel texture analysis methods against established clinical and pathological protocols, assessing whether QUS-derived biomarkers can reliably predict treatment outcomes earlier than current standards.

Comparative Performance of Imaging Modalities

Multiple imaging modalities have been investigated for predicting and monitoring chemotherapy response. The table below summarizes the reported performance metrics of QUS alongside other prominent imaging techniques.

Table 1: Performance Comparison of Imaging Modalities for Predicting NAC Response in Breast Cancer

| Imaging Modality | Prediction Timing | Key Predictive Features | Accuracy | Sensitivity | Specificity | AUC |
|---|---|---|---|---|---|---|
| Quantitative Ultrasound (QUS) with Machine Learning [6] | Week 1 of NAC | QUS texture features (contrast, correlation, homogeneity, energy) from parametric maps | 86% | 50% | 91% | 0.71 |
| QUS with Texture Derivative & Molecular Subtypes [32] | Pre-treatment (Baseline) | QUS texture derivatives from tumor core & margin combined with molecular subtype | 83% | 79%* | 86%* | 0.87 |
| Deep Learning of QUS Multi-parametric Images [33] | Pre-treatment (Baseline) | Deep convolutional neural network features from QUS parametric maps of tumor core and margin | 88% | N/A | N/A | 0.86 |
| CT Texture Analysis with Machine Learning [31] | Pre-treatment (Baseline) | 851 textural biomarkers from original and wavelet-transformed images | 77% | 56% | 80% | N/A |
| Conventional Ultrasound (B-mode) [34] | N/A | Anatomical changes (tumor shrinkage) | N/A | 93% | 52% | 0.84 |
| Conventional US + Elastography [34] | N/A | Tissue stiffness characteristics | N/A | 93% | 71% | 0.93 |
| Conventional US + Contrast-Enhanced US (CEUS) [34] | N/A | Microvascular perfusion patterns | N/A | 91% | 79% | 0.91 |

Note: Metrics estimated from available data in source publications. N/A indicates data not available in the searched literature.

QUS demonstrates competitive performance, particularly in specificity, which is crucial for minimizing false positives in non-responder identification. The integration of QUS with machine learning and molecular subtyping enhances its predictive power, potentially offering a cost-effective and rapid alternative to MRI-based approaches [32].

Experimental Protocols: Validating QUS Methodology

Patient Cohort and Study Design

Validation studies for QUS typically employ a prospective observational design. For example, one cited study [6] recruited breast cancer patients with tumours larger than 1.5 cm who were scheduled for NAC. Patients underwent QUS imaging at baseline (before treatment) and at predefined intervals during chemotherapy (e.g., week 1). The study's primary goal was to validate a previously developed QUS-based machine learning model in an independent cohort of 51 patients. The ground truth for treatment response was determined post-surgery using a modified response grading system, classifying patients as responders (≥30% reduction in tumour size or cellularity <5%) or non-responders (<30% reduction in size) [6] [35]. This rigorous pathological correlation is essential for validating any new predictive biomarker.
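The response criterion described above can be written as a small decision rule, which makes the "or" explicit: either criterion alone qualifies a patient as a responder. The function below is a hypothetical encoding for illustration only; its thresholds follow the text (≥30% size reduction or residual cellularity <5%), and the full grading system used in [6] [35] may include further categories.

```python
def classify_response(size_reduction_pct: float,
                      residual_cellularity_pct: float) -> str:
    """Label a patient as 'responder' or 'non-responder' (hypothetical helper).

    Responder: >= 30% reduction in tumour size OR residual cellularity < 5%.
    Otherwise: non-responder.
    """
    if size_reduction_pct >= 30.0 or residual_cellularity_pct < 5.0:
        return "responder"
    return "non-responder"


print(classify_response(45.0, 20.0))  # responder (size criterion)
print(classify_response(10.0, 2.0))   # responder (cellularity criterion)
print(classify_response(10.0, 40.0))  # non-responder
```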

QUS Data Acquisition and Processing

The technical workflow for QUS involves specialized data acquisition and processing steps that differentiate it from conventional ultrasound:

Table 2: Key Research Reagent Solutions for QUS Experiments

| Item Name | Function in QUS Protocol |
|---|---|
| Sonix RP Clinical Research System [6] [32] | Research-grade ultrasound machine capable of capturing raw Radiofrequency (RF) data, which contains more information than processed B-mode images. |
| L14-5/60 Linear Transducer [6] [33] | High-frequency linear array probe (central frequency 6.5 MHz) used for breast imaging, providing the necessary bandwidth for spectral analysis. |
| Reference Phantom [6] | Used with the "reference phantom method" to remove system-dependent effects from the QUS parameters, ensuring quantifiable and reproducible measurements. |
| GLCM-based Texture Analysis [6] [32] | Computational method applied to QUS parametric maps to extract features like Contrast, Correlation, Homogeneity, and Energy, which quantify tissue microstructure patterns. |

- RF Data Acquisition: Using a clinical research system (e.g., Sonix RP) with a linear transducer, volumetric RF data is acquired from the breast tumour across multiple image planes. The transducer focus is set to the mid-depth of the tumour [32] [33].

- Parametric Map Generation: A Fast Fourier Transform (FFT)-based algorithm is applied to the RF data to construct quantitative parametric images. These maps represent microstructural properties derived from the backscattered ultrasound signal [6]. Key parameters include:

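- Mid-band fit (MBF): The value of the spectral regression line at the centre of the analysis bandwidth; related to the overall backscatter signal intensity.

- Spectral slope (SS): The slope of the regression line; related primarily to the effective scatterer size.

- Spectral intercept (SI): The value of the regression line extrapolated to 0 MHz; related to scatterer concentration as well as effective scatterer size and acoustic impedance mismatch.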

- Average scatterer diameter (ASD) and Average acoustic concentration (AAC): Estimated by fitting a scattering model to the backscatter coefficient [6] [32] [33].

- Texture Feature Extraction: A Grey-Level Co-occurrence Matrix (GLCM) analysis is applied to the QUS parametric maps. This second-order statistical method extracts textural features (e.g., contrast, correlation, energy, homogeneity) that quantify the spatial relationships between pixels, capturing intra-tumoural heterogeneity [6]. More advanced studies also compute texture-derivative parameters by creating GLCM-based texture maps, which provide an even more refined representation of tissue heterogeneity [32].

- Machine Learning Model Development and Validation: The extracted features (mean QUS parameters and texture features) are used to train machine learning classifiers, such as Support Vector Machines (SVM) or k-Nearest Neighbours (KNN). The model is trained to differentiate between responders and non-responders. Crucially, its performance is then validated on a separate, independent cohort of patients to assess real-world generalizability [6] [32].
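The spectral parameters used to build the parametric maps come from a straight-line fit to the normalized power spectrum over the transducer's usable bandwidth: SS is the fitted slope, SI the fitted value at 0 MHz, and MBF the fitted value at the band centre. The sketch below assumes the spectrum has already been normalized against the reference phantom (sample minus reference, in dB) and corrected for attenuation; the frequency band, centre frequency, spectrum values, and `spectral_parameters` helper are hypothetical, and an ordinary least-squares fit is used.

```python
def spectral_parameters(freqs_mhz: list[float], power_db: list[float],
                        f_center_mhz: float) -> tuple[float, float, float]:
    """Least-squares line fit to a normalized power spectrum.

    Returns (SS in dB/MHz, SI in dB at 0 MHz, MBF in dB at f_center_mhz).
    """
    n = len(freqs_mhz)
    mean_f = sum(freqs_mhz) / n
    mean_p = sum(power_db) / n
    sxy = sum((f - mean_f) * (p - mean_p) for f, p in zip(freqs_mhz, power_db))
    sxx = sum((f - mean_f) ** 2 for f in freqs_mhz)
    slope = sxy / sxx                       # spectral slope (SS)
    intercept = mean_p - slope * mean_f     # spectral intercept (SI)
    mbf = intercept + slope * f_center_mhz  # mid-band fit (MBF)
    return slope, intercept, mbf


# Hypothetical normalized spectrum over a 3-8 MHz band, exactly linear for clarity.
freqs = [3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
spectrum = [-20.0 - 1.5 * f for f in freqs]  # -24.5 ... -32.0 dB
ss, si, mbf = spectral_parameters(freqs, spectrum, f_center_mhz=5.5)
print(f"SS={ss:.2f} dB/MHz, SI={si:.2f} dB, MBF={mbf:.2f} dB")
# SS=-1.50 dB/MHz, SI=-20.00 dB, MBF=-28.25 dB
```

In a parametric-map workflow, a fit like this would be repeated for each sliding analysis window across the tumour region, and each window's SS, SI, and MBF would become one pixel in the corresponding map.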

Diagram 1: QUS data analysis workflow for response prediction.
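The GLCM texture step can be sketched in a few lines of pure Python. This minimal version assumes the parametric map has already been quantized to a small number of grey levels, and it uses a single offset (one pixel to the right) with a symmetric, normalized matrix. Energy follows scikit-image's convention (the square root of the angular second moment); some papers report the angular second moment itself. The toy map and function names are illustrative; scikit-image's `graycomatrix`/`graycoprops` would be the usual library choice.

```python
import math


def glcm(image: list[list[int]], levels: int,
         dx: int = 1, dy: int = 0) -> list[list[float]]:
    """Symmetric, normalized grey-level co-occurrence matrix for one offset."""
    counts = [[0.0] * levels for _ in range(levels)]
    rows, cols = len(image), len(image[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dy, c + dx
            if 0 <= r2 < rows and 0 <= c2 < cols:
                i, j = image[r][c], image[r2][c2]
                counts[i][j] += 1
                counts[j][i] += 1  # symmetric: count both directions
    total = sum(map(sum, counts))
    if total == 0:
        raise ValueError("offset produces no pixel pairs")
    return [[v / total for v in row] for row in counts]


def glcm_features(p: list[list[float]]) -> dict[str, float]:
    """Contrast, homogeneity, energy and correlation of a normalized GLCM."""
    n = len(p)
    idx = [(i, j) for i in range(n) for j in range(n)]
    mu_i = sum(i * p[i][j] for i, j in idx)
    mu_j = sum(j * p[i][j] for i, j in idx)
    sd_i = math.sqrt(sum((i - mu_i) ** 2 * p[i][j] for i, j in idx))
    sd_j = math.sqrt(sum((j - mu_j) ** 2 * p[i][j] for i, j in idx))
    cov = sum((i - mu_i) * (j - mu_j) * p[i][j] for i, j in idx)
    return {
        "contrast": sum((i - j) ** 2 * p[i][j] for i, j in idx),
        "homogeneity": sum(p[i][j] / (1 + (i - j) ** 2) for i, j in idx),
        "energy": math.sqrt(sum(p[i][j] ** 2 for i, j in idx)),
        # Constant images have zero variance; report correlation as 1 by convention.
        "correlation": cov / (sd_i * sd_j) if sd_i > 0 and sd_j > 0 else 1.0,
    }


# Hypothetical 4-level quantized parametric map (e.g., MBF values binned).
toy_map = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 2, 2, 2],
    [2, 2, 3, 3],
]
print(glcm_features(glcm(toy_map, levels=4)))
```

In practice, such features are typically computed for each parametric map and often averaged over several offsets or angles before being passed to the classifier.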

Validation Pathway and Integration with Established Protocols

The validation of QUS as a predictive biomarker follows a structured pathway aligned with the principles of analytic validation, clinical validation, and clinical utility [35] [36].

Diagram 2: Key stages in the validation of QUS for clinical use.

Key stages in this pathway include:

- Independent Cohort Validation: A critical step involves testing a pre-specified QUS model in a new, prospective patient cohort. One study validated a week-1 prediction model in 51 independent patients, achieving 86% accuracy, which confirms the model's robustness beyond its development cohort [6].

- Benchmarking Against Established Protocols: QUS performance is consistently compared with the reference standard of post-surgical pathological evaluation. Its potential incremental value over conventional ultrasound is suggested by its higher specificity in identifying non-responders (91% for QUS in [6] vs. 52% for conventional US in [34]), although these figures come from different studies and cohorts and are not directly comparable.

- Integration with Multi-Parametric Data: The most advanced QUS models combine QUS features with other clinically established data, such as tumour molecular subtypes (e.g., HER2-positive, triple-negative). This integration recognizes that response biology is multifaceted and leads to improved predictive performance (accuracy improved from 80% to 83% in one model) [32].
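The development-versus-independent-cohort split can be sketched with a k-nearest-neighbours classifier, one of the classifier families named in the processing workflow. The feature vectors, labels ("R" = responder, "NR" = non-responder), k = 3, and helper names are hypothetical. A real study would standardize features, perform feature selection within the development cohort only, and typically use a library implementation such as scikit-learn's `SVC` or `KNeighborsClassifier`.

```python
import math
from collections import Counter


def knn_predict(train_x, train_y, x, k=3):
    """Majority vote among the k nearest training points (Euclidean distance)."""
    nearest = sorted(zip(train_x, train_y),
                     key=lambda pair: math.dist(pair[0], x))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def evaluate(train_x, train_y, test_x, test_y, k=3):
    """Fit on the development cohort only, then score on the independent cohort."""
    predictions = [knn_predict(train_x, train_y, x, k) for x in test_x]
    correct = sum(p == y for p, y in zip(predictions, test_y))
    return correct / len(test_y)


# Hypothetical 2-feature vectors (e.g., a mean QUS parameter and a GLCM feature).
dev_x = [(0.2, 0.8), (0.3, 0.7), (0.25, 0.9), (0.8, 0.2), (0.7, 0.3), (0.9, 0.25)]
dev_y = ["R", "R", "R", "NR", "NR", "NR"]
indep_x = [(0.22, 0.85), (0.75, 0.28), (0.6, 0.4)]
indep_y = ["R", "NR", "R"]
print(f"independent-cohort accuracy: {evaluate(dev_x, dev_y, indep_x, indep_y):.2f}")
# independent-cohort accuracy: 0.67
```

Because the independent cohort never influences training, its accuracy is an estimate of generalizability; scoring on the development data instead would inflate it.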

Challenges and Future Research Directions

Despite promising results, several challenges remain before QUS can be widely adopted in clinical practice.

- Technical and Clinical Hurdles: Model misclassifications have been associated with poorly defined tumour borders, which complicate accurate region-of-interest identification [6]. The presence of ductal carcinoma in situ (DCIS) within tumours can also confound QUS analysis, as its microstructure differs from invasive carcinoma [35].

- Path to Clinical Integration: Future work must focus on standardizing acquisition protocols across different ultrasound systems and operators to ensure reproducibility [36]. Furthermore, the ultimate test of clinical utility requires prospective interventional trials where treatment is modified based on QUS predictions. The critical question is whether switching non-responders to alternative therapies based on early QUS assessment ultimately improves survival and patient outcomes [35].

This validation case study demonstrates that Quantitative Ultrasound, particularly when enhanced with texture analysis and machine learning, represents a highly promising non-invasive tool for the early prediction of breast cancer response to chemotherapy. Its ability to detect microstructural changes within the first week of treatment offers a significant temporal advantage over established anatomical imaging protocols. Reported accuracies range from 83% to 88% in pre-treatment and early-treatment settings, and the week-1 model retained 86% accuracy when tested in an independent cohort, providing encouraging evidence of its robustness [6] [32] [33].

Framed within the broader thesis of validating new texture methods, QUS exemplifies a modern approach that moves beyond simple anatomical visualization to quantify tissue texture properties as clinically actionable biomarkers. Its integration with established pathological and molecular protocols creates a powerful, multi-parametric predictive framework. Future research aimed at overcoming technical challenges and definitively proving its utility in guiding treatment decisions will be the final step in establishing QUS as a new standard in personalized oncology.

The efficacy, safety, and stability of transdermal and topical products (TTPs) are paramount to consumer acceptance and compliance [37]. Within pharmaceutical development, characterizing these products extends beyond basic formulation to encompass critical textural and physical properties that directly influence application, drug release, and patient experience [38]. Texture analysis provides a comprehensive approach to quantifying these properties, offering objective data that complements traditional efficacy testing [39]. For researchers validating new texture methods against established protocols, understanding these measurable characteristics—such as spreadability, adhesiveness, and hardness—is essential for demonstrating methodological robustness and equivalence [37] [40].

The European Medicines Agency (EMA) emphasizes the importance of characterizing Critical Quality Attributes (CQAs) throughout a product's lifecycle [40]. As the industry moves toward more sophisticated and patient-centric delivery systems, including microneedles and nano-carriers, the tools for characterizing these systems must similarly evolve [41] [42]. This guide objectively compares the performance of various characterization techniques and products, providing researchers with a framework for the rational design and optimization of transdermal delivery systems [41].

Characterizing Topical & Transdermal Products: A Comparative Analysis

The following sections compare key product types and the analytical techniques used to characterize their performance, providing supporting experimental data where applicable.

Performance Comparison of Topical Formulations

Table 1: Comparative Analysis of Topical Semisolid Formulations

| Formulation Type | Key Textural Properties | Typical Measurement Techniques | Influencing Factors | Consumer/Sensory Perception |
|---|---|---|---|---|
| Creams & Lotions [38] | Firmness, Spreadability, Stickiness, Consistency [39] [38] | Compression, Extrusion, Tension Testing [38] | Emulsion stability, viscosity, oil/water phase ratio [39] | Light, non-greasy, smooth, creamy, rich texture [38] |
| Gels [38] | Gel Strength, Firmness, Adhesiveness, Stickiness [39] [37] | Gel Strength Testing, Compression, Texture Profile Analysis (TPA) [38] | Type and concentration of gelling agent, ionic strength [39] | Cool, slick, can be sticky or non-sticky [38] |
| Ointments | Hardness, Adhesiveness, Spreadability, Viscosity [39] | Extrusion, Compression, Spreadability Fixtures [39] | Base composition (e.g., hydrocarbon vs. absorption bases) | Greasy, occlusive, protective [42] |
| Solid Sticks (e.g., Lipstick, Deodorant) [38] | Hardness, Fracture Strength, Break Resistance [39] [38] | Penetration/Puncture, Shear/Snap/Break Testing [38] | Wax composition, oil content, powder fillers [39] | Smooth application, no crumbling or flaking [38] |
| Pressed Powders (e.g., Makeup) [38] | Cake Strength, Compaction, Fracturability, Flowability [39] [38] | Penetration/Puncture Testing, Visual Clump Inspection [38] | Binder type and ratio, compression force, particle size | Even, blendable, no caking or clumping [38] |

Advanced Transdermal Delivery Systems

Table 2: Performance Comparison of Enhanced Transdermal Delivery Systems

| Delivery Technology | Mechanism of Action | Key Characterization Parameters | Experimental Findings | Advantages & Challenges |
|---|---|---|---|---|
| Chemical Penetration Enhancers [41] | Disrupts stratum corneum lipid bilayer to increase permeability [41] | Permeation flux, Lag time, Enhancement ratio (via Franz cell) [41] | Enhancers like ethanol, terpenes, fatty acids can increase permeability 2 to 10-fold for small molecules [41] | + Simple to formulate; - Potential for skin irritation, non-specific action [41] |
| Microneedles (µNDs) [37] [42] | Creates micro-scale conduits in stratum corneum for direct drug access [42] | Insertion/Puncture Force, Fracture Force, Mechanical Strength [37] | Texture analysis quantifies force required for skin insertion (e.g., 0.1-1.0 N per needle), ensuring penetration without fracture [37] | + Painless, bypasses barrier; - Manufacturing complexity, potential for breakage [37] [42] |
| Nanocarriers (e.g., Liposomes, Ethosomes) [41] | Encapsulates drug, facilitating transport through skin layers [41] | Particle size, Zeta potential, Entrapment efficiency, Deformation index [41] | Ethosomes shown to deliver drugs 5-10 times more effectively than standard liposomes into deeper skin layers [41] | + Targeted delivery, reduced irritation; - Stability issues, complex scale-up [41] |
| Iontophoresis [41] [42] | Uses low electrical current to drive charged molecules across skin [41] | Current density, Duration of application, Drug flux [41] | Enables delivery of small ions and peptides (e.g., < 10 kDa) with linear relationship between current and flux [41] | + Controlled, active delivery; - Requires power source, for charged molecules only [41] |
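The Franz-cell parameters listed for chemical penetration enhancers are usually derived from the cumulative amount permeated per unit area plotted against time: steady-state flux is the slope of the linear portion, lag time is that line's intercept with the time axis, and the enhancement ratio is the flux with enhancer divided by the control flux. The sketch below assumes the steady-state points have already been selected; the data and helper names are hypothetical.

```python
def linear_fit(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx


def flux_and_lag(times_h: list[float],
                 cumulative_ug_cm2: list[float]) -> tuple[float, float]:
    """Steady-state flux (ug/cm^2/h) and lag time (h) from the linear region."""
    slope, intercept = linear_fit(times_h, cumulative_ug_cm2)
    return slope, -intercept / slope  # lag time = time-axis intercept


# Hypothetical steady-state data: time (h) vs. cumulative amount (ug/cm^2).
t = [4.0, 6.0, 8.0, 10.0]
control = [2.0, 4.0, 6.0, 8.0]        # flux 1.0, lag 2.0 h
enhanced = [10.0, 16.0, 22.0, 28.0]   # flux 3.0, lag ~0.67 h
j_ctrl, lag_ctrl = flux_and_lag(t, control)
j_enh, lag_enh = flux_and_lag(t, enhanced)
print(f"ER = {j_enh / j_ctrl:.1f}")  # ER = 3.0
```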

Experimental Protocols for Key Characterization Methods

Texture Profile Analysis (TPA) of Semisolid Formulations

Texture Profile Analysis (TPA) is a two-cycle compression test that mimics the sensory evaluation of a product in the mouth or between the fingers, providing insights into the structure and sensory attributes of semisolid formulations [37].

- Objective: To mechanically characterize key texture parameters of semisolid formulations such as creams, gels, and ointments.

- Equipment: Texture Analyzer equipped with a load cell, a flat-ended cylindrical probe (e.g., 25-50 mm diameter), and TPA software [37] [38].

- Procedure:

- Sample Preparation: Fill a standard container with the test formulation, ensuring a smooth, level surface. Allow the sample to rest at a controlled temperature (e.g., 25°C) to equilibrate.

- Test Setup: Set the probe to compress the sample to a predefined strain level (e.g., 30-50% of original height) at a fixed test speed (e.g., 1-2 mm/s).

- Test Run: The probe performs two consecutive compression cycles with a brief pause between them (e.g., 5 seconds). The force-time curve is recorded throughout the test [37].

- Data Analysis: The resulting force-time curve is analyzed for key parameters [37]:

- Hardness: The peak force during the first compression cycle.

- Adhesiveness: The negative force area recorded as the probe withdraws after the first compression, representing the work required to overcome the attractive forces between the sample and the probe (stickiness).

- Cohesiveness: The ratio of the area under the second compression cycle to the area under the first compression cycle, indicating how well the product withstands a second deformation.

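- Springiness: How far the sample recovers in height between the end of the first compression and the start of the second. It is often reported as the ratio of the second-cycle to first-cycle contact distance (or, at constant probe speed, contact time).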
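The four parameters above can be computed from a recorded force-time trace. The sketch below is hedged: it assumes the time at which the second compression begins (`cycle2_start`) is known from the test settings, it treats force below a small contact threshold as "no contact", and it uses the time-ratio convention for springiness. The function names and the 0.01 N threshold are hypothetical, not defaults of any instrument software. Areas are in N·s because the curve is force versus time.

```python
import numpy as np


def _trapezoid(y, x):
    """Trapezoidal integral of y over x (written out to avoid NumPy version differences)."""
    return float(np.sum((y[1:] + y[:-1]) * np.diff(x)) / 2.0)


def _contact_time(t, f, threshold):
    """Total time during which the force exceeds the contact threshold."""
    return float(np.sum(np.diff(t)[f[:-1] > threshold]))


def tpa_parameters(t, force, cycle2_start, contact_threshold=0.01):
    """Basic TPA parameters from a two-cycle force-time trace.

    t: time in s; force: force in N (negative while the sample pulls on the probe);
    cycle2_start: time at which the second compression begins (assumed known).
    """
    t = np.asarray(t, dtype=float)
    force = np.asarray(force, dtype=float)
    first = t < cycle2_start
    t1, f1 = t[first], force[first]
    t2, f2 = t[~first], force[~first]

    # Hardness: peak force of the first compression.
    hardness = float(f1.max())
    # Adhesiveness: magnitude of the negative area in the first cycle (withdrawal).
    adhesiveness = _trapezoid(np.clip(-f1, 0.0, None), t1)
    # Cohesiveness: positive area of cycle 2 divided by positive area of cycle 1.
    area1 = _trapezoid(np.clip(f1, 0.0, None), t1)
    area2 = _trapezoid(np.clip(f2, 0.0, None), t2)
    cohesiveness = area2 / area1
    # Springiness (time-ratio convention): contact time in cycle 2 / cycle 1.
    springiness = (_contact_time(t2, f2, contact_threshold)
                   / _contact_time(t1, f1, contact_threshold))
    return {
        "hardness": hardness,
        "adhesiveness": adhesiveness,
        "cohesiveness": cohesiveness,
        "springiness": springiness,
    }
```

Calling `tpa_parameters(t, force, cycle2_start=10.0)` on an exported trace returns a dictionary with the four parameters; commercial software may define areas and springiness slightly differently, so values should be cross-checked before comparing against an established protocol.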

In Vitro Release Test (IVRT) for Product Equivalence

IVRT is a critical quality control tool used to demonstrate product sameness and equivalence, especially for locally applied, locally acting cutaneous products [40].

- Objective: To measure the rate and amount of drug release from a topical formulation using an artificial membrane, supporting quality assessments and equivalence claims.

- Equipment: Vertical diffusion cell system (e.g., Franz cells, manual or automated), synthetic membrane (e.g., cellulose ester or polysulfone), receptor medium, and HPLC/UV for assay [40].

- Procedure:

- Assembly: The selected membrane is placed between the donor and receptor compartments of the Franz cell. The receptor compartment is filled with a suitable medium (e.g., phosphate buffer) that maintains sink conditions.

- Dosing: A fixed, effectively infinite dose of the test product (large enough that the donor is not appreciably depleted during the test) is applied uniformly to the membrane surface in the donor compartment.

- Sampling: The receptor fluid is stirred continuously and temperature-controlled so that the membrane surface is held at 32°C ± 1°C. Aliquots are withdrawn from the receptor compartment at predetermined time intervals (e.g., 1, 2, 4, 6, 8 hours) and replaced with fresh medium.

- Analysis: The withdrawn samples are analyzed using a validated analytical method (e.g., HPLC) to determine the cumulative amount of drug released per unit area over time [40].

- Data Analysis: The release rate is typically calculated from the linear portion of the cumulative amount released versus the square root of time plot, as described by the Higuchi model. The 90% confidence interval for the ratio of the release rates of the test and reference products should fall within predefined limits (e.g., 75-133.33%) to demonstrate equivalence [40].
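The data-analysis step can be sketched as follows. The code assumes cumulative release per unit area has already been computed for each cell and that only the linear portion is passed in; it fits the slope of Q against the square root of time for each cell, then forms the 36 test/reference slope ratios for a six-versus-six comparison. Taking the 8th and 29th ordered ratios as the 90% confidence limits reflects our reading of the nonparametric (Mann-Whitney) procedure in FDA's SUPAC-SS guidance; treat it as an assumption and verify against the guidance before use. All function names are hypothetical.

```python
import numpy as np


def higuchi_release_rate(t_hours, q_per_area):
    """Slope of cumulative release per unit area vs sqrt(time) (Higuchi plot).

    Pass only the points on the linear portion of the plot.
    """
    t = np.asarray(t_hours, dtype=float)
    q = np.asarray(q_per_area, dtype=float)
    slope, _ = np.polyfit(np.sqrt(t), q, 1)
    return float(slope)


def supac_ss_ci(test_rates, ref_rates):
    """90% CI for the T/R release-rate ratio from 6 test and 6 reference cells.

    Assumed rule: sort the 36 pairwise T/R ratios and take the 8th and 29th.
    """
    test = np.asarray(test_rates, dtype=float)
    ref = np.asarray(ref_rates, dtype=float)
    if test.size != 6 or ref.size != 6:
        raise ValueError("this sketch only covers 6 test vs 6 reference cells")
    ratios = np.sort((test[:, None] / ref[None, :]).ravel())  # 36 ratios
    return float(ratios[7]), float(ratios[28])  # 8th and 29th (1-based)


def is_equivalent(ci, lower=0.75, upper=1.3333):
    """True if the whole confidence interval lies within the acceptance limits."""
    return lower <= ci[0] and ci[1] <= upper
```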

Mechanical Strength Testing of Microneedles

Ensuring microneedles possess sufficient mechanical strength to penetrate the skin without breaking is a critical quality attribute for both safety and efficacy [37].

- Objective: To determine the force required to pierce the skin and the fracture force of individual microneedles or arrays.

- Equipment: Texture Analyzer with a small, flat-ended cylindrical probe (smaller than the microneedle spacing) and a rigid, flat substrate [37].

- Procedure:

- Insertion Force Test: A skin model (excised skin or a synthetic simulant) is placed on the base. The microneedle or array, mounted on the probe, is aligned and driven downward at a constant speed (e.g., 0.5-1.0 mm/s) until a predetermined displacement is reached. The force-displacement curve is recorded.

- Fracture Force Test: The microneedle or array is placed needles-up on the rigid base, and the flat probe compresses the needle tips axially at a constant speed, continuing until a sharp drop in force is observed, indicating needle fracture [37].

- Data Analysis:

- Puncture/Insertion Force: The force required to initially pierce a substrate (or simulated skin model) is determined from the curve.

- Fracture Force: The maximum force sustained by the microneedle before breakage is recorded. These data show whether the fracture force sufficiently exceeds the insertion force, so that needles penetrate the stratum corneum (typically 10-20 µm thick) intact; dissolving or biodegradable designs must still dissolve or degrade appropriately after insertion [37].
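Both tests come down to locating a characteristic force event on a force-displacement curve. As a sketch, the hypothetical helper below flags the first point where force falls below a fixed fraction of its running maximum (a 20% drop by default, an arbitrary assumption to be tuned for each system) and reports the preceding peak. Applied to an insertion curve it approximates the puncture force; applied to an axial compression curve it approximates the fracture force. The ratio of fracture to insertion force is sometimes reported as a margin of safety.

```python
import numpy as np


def first_force_drop(displacement, force, drop_fraction=0.2, min_force=0.01):
    """Return (displacement, force) at the peak preceding the first sharp drop.

    A 'sharp drop' is assumed to mean force falling below
    (1 - drop_fraction) times its running maximum, once that maximum
    exceeds min_force. Returns None if no such drop occurs.
    """
    d = np.asarray(displacement, dtype=float)
    f = np.asarray(force, dtype=float)
    running_max = np.maximum.accumulate(f)
    dropped = (f < (1.0 - drop_fraction) * running_max) & (running_max > min_force)
    if not dropped.any():
        return None
    first = int(np.argmax(dropped))  # index of the first dropped sample
    peak = int(np.argmax(f[:first]))  # highest force before the drop
    return float(d[peak]), float(f[peak])


def safety_margin(fracture_force, insertion_force):
    """Fracture force divided by insertion force; values well above 1 are desirable."""
    return fracture_force / insertion_force
```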

Research Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Transdermal Product Characterization

| Item Name | Function/Application | Example Use Case |
|---|---|---|
| Franz Diffusion Cell [41] [40] | Standard apparatus for in vitro permeation (IVPT) and release (IVRT) testing [41] | Measuring the rate and extent of drug permeation through excised human skin or synthetic membranes [40] |
| Texture Analyzer [39] [37] | Instrument for quantifying mechanical and textural properties of formulations and delivery systems [38] | Performing TPA on creams, adhesion tests on patches, and fracture force tests on microneedles [37] [38] |
| Chemical Penetration Enhancers [41] | Substances that temporarily reduce the barrier function of the stratum corneum [41] | Added to formulations to improve the skin permeation of poorly absorbed active pharmaceutical ingredients (APIs) [41] |
| Pressure-Sensitive Adhesives (PSAs) [37] | Key component of transdermal patches that ensures contact with the skin [37] | Formulating transdermal patches; characterized for peel, tack, and shear strength using texture analysis [37] |
| Synthetic Membranes [40] | Used in IVRT as a more reproducible alternative to biological tissue [40] | Discriminatory release testing of topical products for quality control and equivalence assessment [40] |

Visualizing Method Validation and Product Characterization

The following diagrams illustrate the logical workflow for method validation and the key relationships in product characterization.

[Diagram: Transdermal Product Characterization Workflow]

[Diagram: Texture Analysis Parameter Relationships]

Texture feature analysis represents a critical frontier in computational image analysis, enabling the quantification of subtle patterns and spatial relationships within digital images that are often imperceptible to the human eye. In recent years, artificial intelligence (AI) has dramatically transformed this field, moving from traditional handcrafted feature extraction to deep learning models that automatically learn discriminative texture representations directly from data [10]. This evolution has particular significance for biomedical research and drug development, where texture analysis serves as a non-invasive method for detecting pathological changes, assessing treatment efficacy, and understanding disease mechanisms at microstructural levels.

The validation of new texture analysis methods against established protocols remains a fundamental requirement for scientific acceptance and clinical translation. As researchers and drug development professionals increasingly adopt AI-driven approaches, understanding the comparative performance, methodological requirements, and application-specific suitability of different machine learning models becomes paramount. This review systematically compares contemporary AI methodologies for texture feature analysis, providing experimental data and protocols to guide model selection and implementation within rigorous scientific workflows.

Traditional vs. AI-Enhanced Texture Analysis: Methodological Foundations

Texture analysis encompasses multiple technical approaches for quantifying the spatial distribution of pixel intensities in digital images. Traditional methods typically rely on handcrafted feature extraction followed by classification using machine learning algorithms, while deep learning approaches integrate feature extraction and classification into end-to-end trainable architectures.

Traditional Texture Feature Extraction Methods

Traditional texture analysis employs mathematical frameworks to quantify spatial patterns, with several distinct methodological families:

Statistical-based methods: Analyze the spatial distribution of pixel values using approaches like Gray-Level Co-occurrence Matrices (GLCM), which count how often pairs of pixels with given gray levels occur at a specified offset (distance and direction) and derive statistical measures from those counts [43]. These methods extract features such as contrast, correlation, energy, and homogeneity to quantify texture properties [44].

Transform-based methods: Convert images into alternative representations using Fourier or wavelet transforms, or Gabor filters, to capture frequency- and scale-dependent texture characteristics.

Structural-based methods: Model texture as arrangements of primitive textural elements according to specific placement rules, effectively capturing regular, pattern-like textures.

Model-based methods: Use stochastic models or fractal analysis to represent textures, with fractal dimension calculations particularly effective for quantifying complexity and self-similarity in biological and material structures [44].
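The GLCM approach described under statistical-based methods can be sketched in a few lines of NumPy. The code below assumes the image is already quantized to integer gray levels in [0, levels), counts each pixel pair in both directions (a symmetric matrix), normalizes the counts to joint probabilities, and derives the four features named above using common Haralick-style definitions. Conventions differ between packages (for example, some report energy as the square root of the angular second moment), so values may not match a given library exactly; the function names are illustrative.

```python
import numpy as np


def glcm(image, dx=1, dy=0, levels=8):
    """Symmetric, normalized GLCM for the pixel offset (dx columns, dy rows).

    `image` must already be quantized to integers in [0, levels).
    """
    img = np.asarray(image, dtype=int)
    rows, cols = img.shape
    # Crop so that every reference pixel has an in-bounds neighbor at the offset.
    r0, r1 = max(0, -dy), rows - max(0, dy)
    c0, c1 = max(0, -dx), cols - max(0, dx)
    ref = img[r0:r1, c0:c1]
    nbr = img[r0 + dy:r1 + dy, c0 + dx:c1 + dx]
    counts = np.zeros((levels, levels), dtype=float)
    np.add.at(counts, (ref.ravel(), nbr.ravel()), 1.0)
    counts = counts + counts.T  # count each pair in both directions
    return counts / counts.sum()


def glcm_features(p):
    """Contrast, energy (angular second moment), homogeneity and correlation."""
    i, j = np.indices(p.shape)
    mu_i, mu_j = np.sum(i * p), np.sum(j * p)
    sd_i = np.sqrt(np.sum(p * (i - mu_i) ** 2))
    sd_j = np.sqrt(np.sum(p * (j - mu_j) ** 2))
    cov = np.sum(p * (i - mu_i) * (j - mu_j))
    return {
        "contrast": float(np.sum(p * (i - j) ** 2)),
        "energy": float(np.sum(p ** 2)),
        "homogeneity": float(np.sum(p / (1.0 + (i - j) ** 2))),
        # Correlation is undefined for a constant image (zero variance).
        "correlation": float(cov / (sd_i * sd_j)) if sd_i * sd_j > 0 else float("nan"),
    }
```

In practice, features are often averaged over several offsets (for example 0°, 45°, 90° and 135°) to reduce sensitivity to texture orientation.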

AI-Enhanced Texture Analysis Approaches

Artificial intelligence has expanded texture analysis capabilities through several paradigm-shifting approaches:

Deep Learning Feature Extraction: Convolutional Neural Networks (CNNs) automatically learn hierarchical texture representations from raw pixel data, with pre-trained models like DenseNet201, ResNet50, and Inceptionv3 serving as powerful feature extractors. These CNN features can be coupled with classifiers like Support Vector Machines (SVM) for texture classification tasks, achieving accuracies of 85%-95% across various texture databases [45].

End-to-End Deep Learning: Architectures like U-Net integrate feature extraction and segmentation in a unified framework, particularly effective for medical image analysis tasks such as multiple sclerosis lesion segmentation [46].

Radiomics and AI Integration: The field of radiomics exemplifies the transition from basic texture analysis to AI-driven approaches, extracting numerous quantitative features from medical images to develop predictive models for disease diagnosis, prognosis, and treatment response assessment [10].

Table 1: Comparison of Texture Analysis Methodological Approaches

| Method Category | Key Techniques | Representative Features | Primary Applications |
|---|---|---|---|
| Statistical | GLCM, Fractal Analysis | Contrast, Correlation, Energy, Homogeneity, Fractal Dimension | Medical imaging [46], Materials science [44] |
| Structural | Mathematical Morphology | Primitive elements, Placement rules | Regular pattern analysis, Industrial inspection |
| Transform-based | Gabor filters, Wavelets | Frequency-domain coefficients | Texture segmentation, Multi-scale analysis |
| Deep Learning | CNNs, Autoencoders | Hierarchical feature maps | Complex texture classification [45], Segmentation [46] |
| Radiomics/AI | Feature extraction + ML | High-dimensional feature sets | Disease diagnosis [10], Treatment response prediction |
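Table 1 lists fractal analysis alongside GLCM. One common way to estimate a fractal dimension from a binary structure is box counting: cover the image with boxes of side s, count the boxes N(s) that contain any foreground, and take the slope of log N(s) against log(1/s). The NumPy sketch below assumes a 2-D binary mask and a default set of power-of-two box sizes (an illustrative choice); each size must leave at least one occupied box.

```python
import numpy as np


def box_counting_dimension(mask, box_sizes=(1, 2, 4, 8, 16, 32)):
    """Estimate the box-counting (fractal) dimension of a 2-D binary mask.

    For each box size s, the mask is cropped to a multiple of s, tiled into
    s x s boxes, and N(s) is the number of boxes containing any foreground.
    The dimension is the least-squares slope of log N(s) vs log(1/s).
    """
    mask = np.asarray(mask, dtype=bool)
    counts = []
    for s in box_sizes:
        h, w = (mask.shape[0] // s) * s, (mask.shape[1] // s) * s
        tiles = mask[:h, :w].reshape(h // s, s, w // s, s)
        counts.append(np.count_nonzero(tiles.any(axis=(1, 3))))
    counts = np.asarray(counts, dtype=float)
    if np.any(counts == 0):
        raise ValueError("every box size must cover at least one foreground pixel")
    inv_sizes = 1.0 / np.asarray(box_sizes, dtype=float)
    slope, _ = np.polyfit(np.log(inv_sizes), np.log(counts), 1)
    return float(slope)
```

A filled square gives a dimension of 2 and a straight line gives 1; irregular biological or material boundaries typically fall in between, which is what makes the measure useful for quantifying complexity.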

Comparative Analysis of Machine Learning Models for Texture Classification

The performance of machine learning models for texture analysis varies significantly based on dataset characteristics, feature extraction methods, and application domains. Below we systematically compare the quantitative performance and computational requirements of prominent approaches.

Performance Metrics Across Models and Datasets

Table 2: Comparative Performance of Machine Learning Models on Texture Classification Tasks

| Model Category | Specific Model | Feature Extraction Method | Dataset | Reported Accuracy | Key Strengths | Limitations |
|---|---|---|---|---|---|---|
| Traditional ML | Support Vector Machine (SVM) | GLCM [44] | Medical Images | 80-90% (varies by application) | Effective with good features, Less data hungry | Dependent on feature engineering |
| Traditional ML | Random Forest | GLCM [44] | Medical Images | 80-90% (varies by application) | Handles non-linear relationships, Robust to outliers | May overfit without careful tuning |
| Traditional ML | K-Nearest Neighbors | GLCM [44] | Medical Images | 80-90% (varies by application) | Simple implementation, No training phase | Computationally intensive for large datasets |
| Deep Learning | CNN + SVM | DenseNet201 features [45] | KTH-TIPS, CURET | 85-95% | Superior accuracy, Automatic feature learning | High computational requirements |
| Deep Learning | CNN + SVM | ResNet50 features [45] | KTH-TIPS, CURET | 85-95% | Balance of accuracy and efficiency | Requires large datasets for training |
| Deep Learning | CNN + SVM | Inceptionv3 features [45] | KTH-TIPS, CURET | 85-95% | Multi-scale processing | Complex architecture |
| Deep Learning | U-Net | End-to-end learning [46] | Medical images (MS lesions) | High Dice score reported | Excellent for segmentation, Preserves spatial context | Primarily for segmentation tasks |
| Deep Learning | Autoencoders | Latent space representation [47] | Various | Varies by application | Dimensionality reduction, Unsupervised learning | May miss discriminative features |

Model Selection Guidelines for Research Applications

Choosing the appropriate machine learning model for texture analysis requires careful consideration of multiple factors:

Data availability: Deep learning models typically require large datasets (thousands of samples) for optimal performance, while traditional ML models can achieve good results with smaller datasets [45].

Computational resources: CNNs and other deep learning architectures demand significant memory and processing power, making traditional ML with handcrafted features more practical for resource-constrained environments.

Interpretability requirements: Traditional ML models with explicit feature extraction (e.g., GLCM with SVM) offer greater interpretability for scientific validation compared to the "black box" nature of deep neural networks.
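For the small datasets typical of traditional ML workflows, leave-one-out cross-validation lets every sample serve once as the test case. The sketch below pairs it with a plain NumPy k-nearest-neighbors classifier (the "no training phase" model in Table 2) and re-estimates feature standardization without the held-out sample at each step, so no information from that sample leaks into preprocessing. It is illustrative only: the function name, the k=3 default, Euclidean distance, and the tie-breaking rule (lowest label wins) are assumptions.

```python
import numpy as np


def knn_loocv_accuracy(X, y, k=3):
    """Leave-one-out accuracy of a Euclidean k-NN classifier on feature matrix X."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    n = len(y)
    correct = 0
    for i in range(n):
        train = np.arange(n) != i
        # Standardize with statistics from the training samples only.
        mu = X[train].mean(axis=0)
        sd = X[train].std(axis=0)
        sd[sd == 0] = 1.0  # leave constant features unscaled
        Xt = (X[train] - mu) / sd
        xq = (X[i] - mu) / sd
        dist = np.linalg.norm(Xt - xq, axis=1)
        neighbors = y[train][np.argsort(dist, kind="stable")[:k]]
        labels, votes = np.unique(neighbors, return_counts=True)
        prediction = labels[np.argmax(votes)]  # ties go to the lowest label
        correct += int(prediction == y[i])
    return correct / n
```

The same loop structure can wrap any classifier from Table 2, which makes it a simple way to compare a new feature set against an established one on identical splits.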