Targeted vs. Non-Targeted Method Validation: A Strategic Guide for Analytical Scientists



Abstract

This article provides a comprehensive comparison of targeted and non-targeted analytical method validation for researchers and drug development professionals. It explores the foundational principles, strategic applications, and distinct validation pathways for each approach, grounded in current regulatory frameworks like ICH Q14 and leveraging advancements in high-resolution mass spectrometry. The content addresses common troubleshooting scenarios and offers a practical framework for selecting and optimizing methods based on project goals, whether for precise quantification or comprehensive biomarker discovery. By synthesizing key challenges and comparative strengths, this guide aims to empower scientists in making informed decisions to ensure robust, reliable, and fit-for-purpose analytical procedures in biomedical and clinical research.

Core Principles: Defining Targeted and Non-Targeted Analytical Strategies

Targeted analytical paradigms are fundamental to hypothesis-driven research, enabling the precise and accurate quantification of predefined analytes in complex biological matrices. Unlike non-targeted approaches that screen for unknown compounds, targeted methods focus on specific molecules of interest, utilizing advanced instrumentation like triple quadrupole mass spectrometry to achieve exceptional sensitivity and specificity. This approach is particularly critical in pharmaceutical development, clinical diagnostics, and metabolic research where precise quantification of known biomarkers, therapeutics, or pathway metabolites is required for decision-making. The core strength of targeted methodologies lies in their ability to provide absolute quantification through calibration curves and isotopically labeled standards, delivering the rigorous data quality demanded in regulated environments [1].

This guide objectively compares the performance of targeted analytical approaches against non-targeted alternatives, providing experimental data and detailed methodologies to illustrate their respective capabilities in life sciences research.

Performance Comparison: Targeted vs. Non-Targeted Methods

A systematic comparison of targeted and non-targeted metabolomics methods reveals distinct performance characteristics suited to different research objectives [2]. The targeted approach demonstrates superior performance for quantitative precision, while non-targeted methods provide broader coverage for biomarker discovery.

Table 1: Analytical Performance Comparison Between Targeted and Non-Targeted Metabolomics

| Performance Characteristic | Targeted Metabolomics | Non-Targeted Metabolomics |
|---|---|---|
| Primary Objective | Quantification of known metabolites | Discovery of unknown biomarkers |
| Number of Metabolites | 181 metabolites (39 quantitative, 142 semi-quantitative) [2] | Thousands of chromatographic features |
| Quantification Capability | Absolute quantification using native & isotopic standards [2] | Relative quantification (peak area) |
| Analytical Precision | Superior precision in replicate analyses [2] | Lower precision, requires drift correction |
| Metabolite Identification | Definitive identification with standards | Tentative identification via databases |
| Data Quality | Reduced false positives; accounts for matrix effects [2] | Higher risk of false identifications |

Table 2: Method Validation Parameters for Targeted Proteomics in Clinical Applications

| Validation Parameter | Performance Requirement | Example: Chromogranin A Assay [1] |
|---|---|---|
| Dynamic Range | 4 orders of magnitude | Quantification over 4 orders of magnitude |
| Accuracy | Comparison to reference methods | Wider dynamic range vs. immunoassay |
| Precision | High inter-laboratory concordance | Demonstrated in monoclonal antibody assays |
| Specificity | Monitor multiple ion transitions | Minimum 2 transitions per analyte (quantifier & qualifier) |
| Throughput | Comparable or improved vs. alternatives | Increased throughput per batch vs. immunoassay |

Experimental Protocols for Method Comparison

Protocol for Targeted Metabolomics

Sample Preparation:

- Protein precipitation using cold acetonitrile

- Addition of stable isotope-labeled internal standards (SIS) for each analyte

- Derivatization for certain metabolite classes (e.g., amino acids)

- Reconstitution in mobile phase-compatible solvent [2]

Instrumental Analysis:

- Quantitative Analysis: UPLC-MS/MS with scheduled MRM for 39 metabolites (amino acids, biogenic amines, neurotransmitters, nucleobases)

- Semi-Quantitative Analysis: Flow injection-MS/MS for 142 lipids (50 carnitines, 83 phosphatidylcholines, 9 sphingomyelins)

- Chromatographic separation: HILIC or C18 columns depending on metabolite class

- Calibration curves using authentic standards covering expected physiological ranges [2]

Data Processing:

- Peak integration and review using vendor software

- Concentration calculation based on standard curves and internal standard correction

- Quality assessment using quality control (QC) samples [2]
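The concentration calculation described above can be sketched as follows. This is a minimal illustration, assuming a linear, unweighted calibration on the analyte-to-internal-standard peak-area ratio; the calibrator values and the function names `fit_line` and `quantify` are hypothetical and are not taken from the cited study [2]. Regulated assays often use weighted regression instead.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def quantify(analyte_area, is_area, slope, intercept):
    """Back-calculate concentration from the analyte/internal-standard area ratio."""
    return (analyte_area / is_area - intercept) / slope

# Hypothetical calibrators: (concentration in µM, analyte area, internal-standard area)
calibrators = [(1.0, 2100, 10000), (5.0, 10400, 10200),
               (10.0, 19800, 9900), (50.0, 101000, 10100)]
conc = [c for c, _, _ in calibrators]
ratio = [a / i for _, a, i in calibrators]
slope, intercept = fit_line(conc, ratio)

# Unknown sample with ~50% matrix suppression: both areas halve, so the ratio is unchanged
unknown = quantify(analyte_area=10000, is_area=5000, slope=slope, intercept=intercept)
print(f"Estimated concentration: {unknown:.2f} µM")
```

Because the internal standard co-elutes with the analyte and experiences the same suppression, the area ratio (and therefore the reported concentration) is unaffected by the signal loss in this example.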

Protocol for Non-Targeted Metabolomics

Sample Preparation:

- Protein precipitation with methanol:acetonitrile (1:1) for broad metabolite coverage

- Pooled QC samples for monitoring instrumental performance

- No internal standards for unknown compounds [2]

Instrumental Analysis:

- UPLC-HRMS (Orbitrap platform) with positive electrospray ionization

- Data-dependent acquisition (DDA) for MS/MS fragmentation

- Testing of ionization modes and stationary phases (C18 vs. HILIC) to maximize feature detection [2]

Data Processing:

- Feature detection, alignment, and integration using software (e.g., Compound Discoverer)

- Signal drift correction using algorithms (e.g., batchCorr)

- Compound identification using MS2 libraries (e.g., mzCloud)

- Multivariate statistical analysis for biomarker discovery [2]
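To make the drift-correction step concrete, the sketch below fits a straight line to pooled-QC intensities against injection order and rescales every injection by that trend. This is a deliberately simple stand-in and not the algorithm implemented in batchCorr; the function names and intensity values are hypothetical.

```python
from statistics import mean, median

def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def correct_drift(order, intensity, is_qc):
    """Divide each intensity by the QC trend at its injection position,
    then rescale to the median QC intensity."""
    qc_x = [o for o, q in zip(order, is_qc) if q]
    qc_y = [v for v, q in zip(intensity, is_qc) if q]
    slope, intercept = fit_line(qc_x, qc_y)
    target = median(qc_y)
    return [v * target / (intercept + slope * o) for o, v in zip(order, intensity)]

# Hypothetical single feature: pooled QC injected at positions 1, 5, and 9
order = list(range(1, 10))
is_qc = [o in (1, 5, 9) for o in order]
intensity = [1000.0, 1200.0, 640.0, 1100.0, 900.0, 1050.0, 580.0, 990.0, 800.0]

corrected = correct_drift(order, intensity, is_qc)
qc_after = [c for c, q in zip(corrected, is_qc) if q]
print([round(v, 1) for v in qc_after])
```

In practice the correction is applied feature by feature, and nonlinear trends usually call for a smoother than a straight line.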

Protocol for Comparison of Methods Experiment

Experimental Design:

- Analysis of a minimum of 40 patient specimens covering the entire working range [3]

- Selection of specimens representing the spectrum of diseases expected in routine application

- Analysis over a minimum of 5 days to minimize systematic errors from a single run [3]

- Specimens analyzed within two hours of each other by the test and comparative methods to ensure stability [3]

Statistical Analysis:

- Graphical analysis using difference plots (test minus comparative result vs. comparative result)

- Calculation of linear regression statistics (slope, y-intercept, standard deviation about the line)

- Estimation of systematic error (SE) at medical decision concentrations: Yc = a + bXc; SE = Yc - Xc [3]

- Correlation coefficient (r) calculation to assess data range adequacy [3]
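The statistical steps above can be sketched as a single function. The paired results below are hypothetical and far fewer than the recommended 40 specimens; the function name `compare_methods` and the dictionary keys are illustrative choices, with `x` holding comparative-method results and `y` holding test-method results.

```python
from math import sqrt
from statistics import mean

def compare_methods(x, y, decision_level):
    """Regression statistics and systematic error at a medical decision level.
    x: comparative-method results, y: test-method results (paired)."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b = sxy / sxx                      # slope
    a = y_bar - b * x_bar              # y-intercept
    residual_ss = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    s_yx = sqrt(residual_ss / (n - 2)) # standard deviation about the line
    r = sxy / sqrt(sxx * syy)          # correlation coefficient
    yc = a + b * decision_level        # Yc = a + b*Xc
    return {
        "slope": b,
        "intercept": a,
        "s_yx": s_yx,
        "r": r,
        "systematic_error": yc - decision_level,  # SE = Yc - Xc
        "differences": [yi - xi for xi, yi in zip(x, y)],  # for the difference plot
    }

# Hypothetical paired results (arbitrary concentration units)
comparative = [50.0, 100.0, 150.0, 200.0, 250.0, 300.0]
test = [53.5, 105.0, 156.5, 208.0, 259.5, 311.0]
result = compare_methods(comparative, test, decision_level=200.0)
print(f"slope={result['slope']:.3f}, intercept={result['intercept']:.2f}, "
      f"SE at Xc=200: {result['systematic_error']:.2f}")
```

The `differences` list holds the test-minus-comparative values that would be plotted against the comparative result in a difference plot.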

Targeted Analysis Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for Targeted Mass Spectrometry

| Reagent/Material | Function | Application Example |
|---|---|---|
| Stable Isotope-Labeled Standards (SIS) | Internal standards for precise quantification; correct for matrix effects and recovery [1] | SIS peptides/proteins in targeted proteomics [1] |
| Authentic Chemical Standards | Calibration curve generation; definitive metabolite identification [2] | Native standards for amino acids, lipids, etc. [2] |
| Quality Control Materials | Monitor analytical performance across batches; assess precision and accuracy [4] | Pooled QC (PQC) or surrogate QC (sQC) samples [4] |
| Immunoaffinity Enrichment Reagents | Enrich target analytes from complex matrices; improve sensitivity [1] | Anti-peptide antibodies for thyroglobulin assay [1] |
| Sample Preparation Consumables | Deplete high-abundance proteins; clean up samples [1] | Solid-phase extraction cartridges; precipitation reagents [1] |

Analytical Method Selection Logic

Analytical and Regulatory Requirements for Clinical Translation

The translation of targeted assays into clinical practice requires meeting stringent regulatory requirements and analytical performance metrics. For protein biomarker assays using targeted proteomics, this involves establishing test characteristics, defining intended use, and demonstrating clinical benefit during feasibility assessment [1]. Currently, targeted proteomics assays fall under Laboratory Developed Tests (LDTs), requiring individual laboratories to develop, validate, and implement methods on approved instrumentation while maintaining compliance with quality management systems [1].

Key validation parameters include accuracy, precision, sensitivity, specificity, and reproducibility. The comparison of methods experiment is particularly critical for assessing systematic error when implementing new clinical methods [3]. This involves analyzing patient specimens by both new and comparative methods, then estimating systematic errors based on observed differences, with particular attention to errors at critical medical decision concentrations [3].

Targeted analytical paradigms provide the precision, accuracy, and reproducibility required for quantitative analysis of known analytes in complex biological systems. While non-targeted approaches offer advantages for discovery-phase research, targeted methods deliver the rigorous quantification necessary for clinical application, therapeutic monitoring, and hypothesis-driven research. The selection between these approaches should be guided by research objectives, with targeted methods providing optimal performance for quantification of predefined analytes and non-targeted methods excelling at comprehensive biomarker discovery. Systematic comparisons show that targeted methodologies consistently deliver superior precision and quantitative capability, making them indispensable for applications requiring high data quality and reproducibility.

The field of chemical analysis is undergoing a fundamental transformation, moving from a focused, hypothesis-driven approach to a comprehensive, discovery-oriented paradigm. Targeted analysis has long been the gold standard for quantitative analytical chemistry, focusing on predefined compounds with established methods and reference standards. In contrast, non-targeted analysis (NTA) represents a paradigm shift toward hypothesis-generating exploration that comprehensively characterizes samples without predefined targets [5]. This methodological evolution is driven by the recognition that targeted methods inherently miss unexpected or unknown chemicals present in complex samples [6]. The growing importance of NTA stems from its capacity to detect both familiar components and completely uninvestigated compounds, providing crucial insights into sample composition that would otherwise remain obscured by traditional targeted frameworks [5].

The fundamental distinction between these approaches lies in their core objectives: targeted methods confirm presence or absence of known analytes, while NTA aims to discover previously unidentified chemicals [7]. This comparative analysis examines the current landscape of both methodologies, their validation frameworks, performance characteristics, and practical applications to guide researchers in selecting appropriate strategies for their analytical challenges.

Fundamental Principles and Comparative Workflows

Core Conceptual Differences

The conceptual foundations of targeted and non-targeted methodologies reflect their divergent analytical purposes:

Targeted Analysis operates within a closed-list framework where analysts determine beforehand which specific compounds to monitor. This approach relies on reference standards for each target compound to generate calibration curves and establish retention times [8]. The targeted paradigm provides excellent sensitivity and quantification for known compounds but offers no capability to detect analytes outside its predefined scope [6].

Non-Targeted Analysis employs an open-list framework designed to capture as many chemical features as possible without prior knowledge of what might be present [5]. Rather than confirming predetermined hypotheses, NTA generates new hypotheses about sample composition through comprehensive data acquisition and advanced data mining techniques [9]. This makes NTA particularly valuable for discovering emerging contaminants, transformation products, and unexpected chemicals in complex matrices [10].

Workflow Comparison

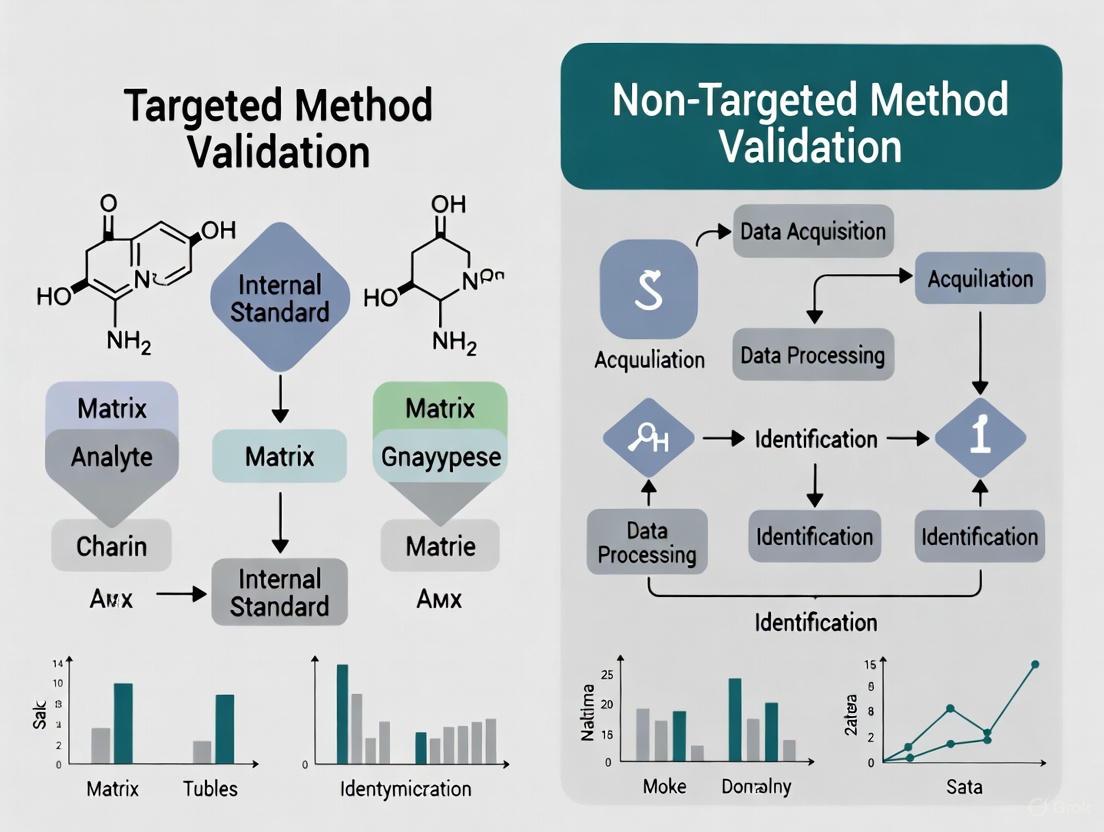

The operational workflows for targeted and non-targeted approaches differ significantly in sample preparation, instrumentation, data acquisition, and processing requirements. The following diagram illustrates the core workflow of the non-targeted paradigm:

Table 1: Core Workflow Differences Between Targeted and Non-Targeted Approaches

| Workflow Component | Targeted Analysis | Non-Targeted Analysis |
|---|---|---|
| Sample Preparation | Selective extraction optimized for specific analytes | Minimal/generic preparation to preserve chemical diversity [5] |
| Extraction Techniques | Solid-phase extraction (SPE) with selective sorbents | Multi-sorbent SPE, QuEChERS, or dilute-and-shoot [9] |
| Chromatography | Optimized separation for target compounds | Generic gradients for broad coverage [11] |
| Mass Spectrometry | Low-resolution (triple quadrupole) with MRM | High-resolution MS (Orbitrap, Q-TOF) with full-scan acquisition [10] [5] |
| Data Acquisition | Multiple reaction monitoring (MRM) | Data-independent (DIA) or data-dependent (DDA) acquisition [12] [11] |
| Data Processing | Targeted integration using predefined transitions | Untargeted peak picking, alignment, and compound identification [9] |
| Compound Identification | Matching retention time and MRM transition to standards | Spectral library matching, in silico fragmentation, retention time prediction [10] |

Performance Metrics and Validation Frameworks

Quantitative Performance Comparison

Rigorous performance assessment reveals fundamental trade-offs between targeted and non-targeted approaches. A systematic comparison of quantitative performance using per- and polyfluoroalkyl substances (PFAS) as a model system demonstrated distinct characteristics for each method [6]:

Table 2: Quantitative Performance Comparison Between Targeted and Non-Targeted Approaches

| Performance Metric | Targeted Analysis | qNTA with Expert-Selected Surrogates | qNTA with Global Surrogates |
|---|---|---|---|
| Relative Accuracy | Benchmark (1×) | ~1.5× decrease | ~4× decrease |
| Uncertainty | Lowest | ~70× increase | ~1000× increase |
| Reliability | Highest | ~5% decrease | ~5% decrease |
| Calibration Approach | Compound-specific calibration curves | Surrogate calibration using similar compounds | Bootstrap-sampled calibration from all available surrogates |
| Internal Standard Use | Matched isotope-labeled standards | Limited or class-based internal standards | Limited or class-based internal standards |

The data reveal that while targeted approaches provide superior accuracy and precision for known compounds, quantitative non-targeted analysis (qNTA) strategies offer viable semi-quantitative estimates when reference standards are unavailable [6]. The performance degradation in qNTA stems primarily from response factor variability between structurally diverse compounds, highlighting the critical importance of surrogate selection strategies.
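The effect of surrogate selection on uncertainty can be illustrated with a small bootstrap. The sketch below is a simplified illustration and not the procedure from the cited study [6]: it assumes concentration is estimated as peak area divided by the median surrogate response factor, and all response-factor values and names are hypothetical.

```python
import random
from statistics import median

def qnta_estimate(peak_area, surrogate_rfs, n_boot=2000, seed=0):
    """Point estimate and 95% bootstrap interval for a concentration,
    using surrogate response factors (area per unit concentration)."""
    rng = random.Random(seed)
    estimates = sorted(
        peak_area / median(rng.choices(surrogate_rfs, k=len(surrogate_rfs)))
        for _ in range(n_boot)
    )
    lower = estimates[int(0.025 * n_boot)]
    upper = estimates[int(0.975 * n_boot) - 1]
    return peak_area / median(surrogate_rfs), lower, upper

# Hypothetical response factors: structurally similar vs. all available surrogates
expert_rfs = [800.0, 950.0, 1000.0, 1100.0, 1250.0]
global_rfs = [50.0, 400.0, 1000.0, 3000.0, 20000.0]

expert = qnta_estimate(50000.0, expert_rfs)
global_ = qnta_estimate(50000.0, global_rfs)
print("expert-selected:", [round(v, 1) for v in expert])
print("global:         ", [round(v, 1) for v in global_])
```

Both surrogate sets give the same point estimate here, but the interval from the structurally diverse set is far wider, which mirrors the uncertainty trend in Table 2.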

Validation Parameters

Traditional method validation follows established guidelines with clearly defined parameters, while NTA validation requires more flexible, fit-for-purpose approaches:

Table 3: Validation Parameters for Targeted Versus Non-Targeted Methods

| Validation Parameter | Targeted Analysis | Non-Targeted Analysis |
|---|---|---|
| Specificity | Demonstrated for each target analyte | Method capability to detect diverse chemical classes |
| Accuracy | Spike recovery with reference standards | Limited to identified compounds with available standards |
| Precision | Repeatability and intermediate precision | System stability and feature detection reproducibility |
| Detection Limit | Established for each target | Variable across chemical space; depends on ionization efficiency |
| Linearity | Demonstrated for each target | Typically assessed using quality control samples |
| Identification Confidence | Based on retention time and transition matching | Tiered system (Level 1-5) based on spectral matching and standards [9] |

Traditional validation parameters defined in guidelines like ICH Q2(R1) apply well to targeted methods but require adaptation for NTA [8]. The tiered confidence-level system for identification (Level 1: structure confirmed with a reference standard; Level 5: exact mass only, with no structural information) has emerged as a crucial validation framework for NTA [9].
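A tiered identification scheme of this kind can be expressed as a simple decision cascade. The sketch below assumes the widely used five-level scheme in which the intermediate levels are probable structure (Level 2), tentative candidates (Level 3), and unequivocal molecular formula (Level 4); the source text only spells out Levels 1 and 5, so the intermediate tiers and the `Evidence` field names are assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass
class Evidence:
    """Evidence collected for one detected feature (field names are hypothetical)."""
    molecular_formula: bool = False         # unequivocal formula from accurate mass/isotopes
    candidate_structures: bool = False      # one or more plausible structures proposed
    library_spectrum_match: bool = False    # MS/MS spectrum matches a library entry
    reference_standard_match: bool = False  # RT and MS/MS confirmed with a standard

def confidence_level(e: Evidence) -> int:
    """Return the identification confidence level (1 = highest, 5 = lowest)."""
    if e.reference_standard_match:
        return 1
    if e.library_spectrum_match:
        return 2
    if e.candidate_structures:
        return 3
    if e.molecular_formula:
        return 4
    return 5  # only an exact mass is available

features = {
    "feature_A": Evidence(molecular_formula=True, library_spectrum_match=True),
    "feature_B": Evidence(molecular_formula=True),
    "feature_C": Evidence(),
}
for name, evidence in features.items():
    print(name, "-> Level", confidence_level(evidence))
```

Reporting the level alongside each annotation lets readers distinguish confirmed identifications from tentative ones.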

Experimental Protocols and Applications

Representative Methodologies

Non-Targeted Analysis of Emerging Contaminants

Protocol Overview: This methodology enables comprehensive screening of unknown environmental contaminants through high-resolution mass spectrometry and advanced data processing [10].

Sample Preparation:

- Minimal Processing: Protein precipitation with methanol/acetonitrile (1:1) for biological samples [13]

- Broad-Spectrum Extraction: Multi-sorbent solid-phase extraction (Oasis HLB + ISOLUTE ENV+) for water samples [9]

- Quality Controls: Include pooled quality control samples and procedural blanks

Instrumental Analysis:

- Platform: UHPLC coupled with Q-TOF or Orbitrap mass spectrometer

- Chromatography: Reversed-phase (C18) or HILIC with generic gradient

- Acquisition: Data-independent acquisition (DIA) for comprehensive fragmentation data

Data Processing:

- Peak Picking: Using software like XCMS, MS-DIAL, or OpenMS

- Compound Identification: Spectral library searching (GNPS, NIST) and in silico fragmentation

- Statistical Analysis: Multivariate analysis (PCA, PLS-DA) and machine learning classification

Validation Approach:

- Reference Materials: Use of certified reference materials when available

- Cross-Platform Comparison: Analysis of same samples with complementary techniques

- Tiered Identification: Reporting confidence levels based on available evidence [9]

Targeted Pharmaceutical Validation

Protocol Overview: This approach provides validated quantification of specific pharmaceutical compounds according to regulatory standards [8] [11].

Sample Preparation:

- Selective Extraction: Optimized solid-phase extraction or liquid-liquid extraction

- Internal Standards: Deuterated or stable isotope-labeled analogs for each target

Instrumental Analysis:

- Platform: HPLC coupled with triple quadrupole mass spectrometer

- Chromatography: Optimized isocratic or gradient separation

- Acquisition: Multiple reaction monitoring (MRM) with compound-specific transitions

Method Validation:

- Specificity: No interference at retention times of target analytes

- Linearity: Minimum of 5 concentration points across working range

- Accuracy and Precision: Within 15% of nominal values for all targets

- Stability: Evaluation under various storage and processing conditions

Application Case Studies

Rheumatoid Arthritis Biomarker Discovery

A comprehensive multi-center study exemplifies the integration of non-targeted discovery with targeted validation [13]. Researchers analyzed 2,863 blood samples across seven cohorts using:

- Non-Targeted Discovery: UHPLC-HRMS profiling identified potential metabolite biomarkers distinguishing rheumatoid arthritis from osteoarthritis and healthy controls

- Targeted Validation: Six promising biomarkers were validated using targeted LC-MS/MS with stable isotope-labeled internal standards

- Model Performance: The metabolite-based classifiers achieved AUC values of 0.8375-0.9280 for RA vs. healthy controls and 0.7340-0.8181 for RA vs. osteoarthritis across independent validation cohorts

This integrated approach demonstrates how non-targeted discovery generates hypotheses that can be rigorously validated using targeted methods for clinical application.
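For readers less familiar with the AUC metric reported above, the following sketch computes it directly from its rank-based definition: the probability that a randomly chosen case scores higher than a randomly chosen control, with ties counted as one half. The scores are hypothetical and unrelated to the cited study's data [13].

```python
def roc_auc(case_scores, control_scores):
    """Area under the ROC curve via pairwise comparison of case and control scores."""
    wins = sum(
        (c > k) + 0.5 * (c == k)
        for c in case_scores
        for k in control_scores
    )
    return wins / (len(case_scores) * len(control_scores))

# Hypothetical classifier scores for cases and healthy controls
cases = [0.9, 0.8, 0.4]
controls = [0.5, 0.3, 0.2]
auc = roc_auc(cases, controls)
print(f"AUC = {auc:.3f}")
```

An AUC of 0.5 corresponds to chance-level discrimination and 1.0 to perfect separation of the two groups.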

Food Contact Material Safety Assessment

Non-targeted analysis has proven particularly valuable for identifying non-intentionally added substances (NIAS) in plastic food contact materials, where the complete chemical composition is unknown [12]. The workflow includes:

- Migration Testing: Exposure of materials to food simulants under controlled conditions

- Comprehensive Screening: UHPLC-HRMS analysis with data-independent acquisition

- Risk Assessment: Application of Threshold of Toxicological Concern (TTC) and Cramer classification to prioritize unidentified features

This application highlights the unique capability of NTA to address analytical challenges where the targets are fundamentally unknown.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Research Reagents and Solutions for Non-Targeted Analysis

| Category | Specific Products/Techniques | Function and Application |
|---|---|---|
| Sample Preparation | QuEChERS, Oasis HLB SPE, ISOLUTE ENV+ | Broad-spectrum extraction with minimal analyte discrimination [9] |
| Chromatography | Acquity BEH C18, HSS T3, Accucore Phenyl Hexyl columns | Separation of diverse chemical classes with different selectivity [11] |
| Mass Spectrometry | Orbitrap Exploris, Q-TOF systems | High-resolution accurate mass measurement for elemental composition assignment [10] [13] |
| Data Processing | XCMS, MS-DIAL, Compound Discoverer | Untargeted peak detection, alignment, and feature reduction [9] |
| Compound Identification | NIST, GNPS, mzCloud libraries | Spectral matching for structural elucidation [12] |
| Quantitative Surrogates | Perdeuterated internal standards, class-based surrogates | Response factor estimation for quantitative NTA [6] |
| Quality Control | Pooled QC samples, NIST SRM 1950 | Monitoring system stability and data quality [9] |

Integrated Workflow for Contemporary Analytical Challenges

The complementary strengths of targeted and non-targeted approaches suggest an integrated workflow that leverages the advantages of both paradigms:

This integrated approach begins with non-targeted screening to characterize sample composition and identify potential compounds of interest, followed by targeted method development for priority substances requiring precise quantification [13]. The workflow creates a virtuous cycle where non-targeted analysis discovers new relevant compounds that can be incorporated into future targeted methods.

The analytical landscape continues to evolve from purely targeted approaches toward integrated strategies that leverage the discovery power of non-targeted analysis with the quantitative rigor of targeted methods. While targeted analysis remains essential for regulatory compliance and precise quantification, non-targeted approaches provide unprecedented capability to discover novel contaminants, transformation products, and unexpected chemicals in complex matrices [10] [12].

The choice between these paradigms depends fundamentally on the analytical question: targeted methods answer "how much is there of these specific compounds?" while non-targeted approaches address "what is in this sample?" [5] [7]. As analytical technologies advance and computational tools become more sophisticated, the integration of both approaches will increasingly drive innovation in environmental monitoring, pharmaceutical development, food safety, and clinical diagnostics [13] [9].

Future directions will likely focus on improving quantitative performance of NTA through better response prediction models [10], expanding spectral libraries for compound identification [12], and developing harmonized validation frameworks that accommodate the unique characteristics of non-targeted methods [7]. By understanding the complementary strengths and limitations of each approach, researchers can design more comprehensive analytical strategies that address the complex chemical characterization challenges of the future.

The International Council for Harmonisation (ICH) Q14 guideline, entitled "Analytical Procedure Development," provides a modernized, science-based framework for the development and lifecycle management of analytical procedures used in the assessment of drug substance and drug product quality [14] [15]. Effective in March 2024, this guideline, together with the revised ICH Q2(R2), aims to facilitate more efficient, science-based, and risk-based post-approval change management [15]. The core principle of ICH Q14 is the introduction of an Analytical Procedure Lifecycle approach, which encourages a structured path from initial development through continuous monitoring and improvement, ensuring methods remain fit-for-purpose over their entire use [16]. This foundational framework is critical for the effective validation of both traditional targeted methods and the increasingly prevalent non-targeted methods.

The following diagram illustrates the key stages and decision points in the analytical procedure lifecycle as guided by ICH Q14.

Core Principles of ICH Q14 and Their Impact on Method Validation

ICH Q14 emphasizes a systematic, knowledge-driven approach to analytical procedure development, which directly enhances the validation process. The guideline outlines two approaches for development: the traditional (minimal) approach and the enhanced approach. The enhanced approach is strongly recommended as it builds a deeper understanding of the procedure's performance, directly contributing to a more robust and reliable validation [14] [15]. A cornerstone of this enhanced approach is the establishment of an Analytical Target Profile (ATP), which is a predefined objective that articulates the required quality of the analytical data the procedure must produce [16]. The ATP fundamentally shapes validation by defining the specific performance criteria, such as accuracy and precision, that the method must demonstrate to be deemed suitable for its intended use.

Furthermore, ICH Q14 promotes the use of risk management and multivariate studies during development to understand the impact of various procedure parameters on the results [15]. This knowledge is critical for defining the method's robustness during validation—a key characteristic that ensures the method remains unaffected by small, deliberate variations in method parameters [17]. By integrating these principles, the transition from method development to validation becomes a seamless, predictable process where the performance characteristics are thoroughly understood and confirmed, rather than being investigated for the first time.

Comparative Analysis: Targeted vs. Non-Targeted Method Validation

The analytical landscape is broadly divided into targeted and non-targeted methods. Targeted methods are designed to accurately measure one or a few predefined analytes, while non-targeted methods aim to detect a wide range of unknown compounds or patterns in a sample, often for screening or discovery purposes [18] [7]. The validation of these two approaches differs significantly in its objectives and execution, a distinction that becomes clear when framed within the ICH Q14 lifecycle.

Table 1: Core Comparison of Targeted vs. Non-Targeted Method Validation

| Validation Characteristic | Targeted Methods (e.g., HPLC, LC-MS/MS) | Non-Targeted Methods (e.g., HRMS, NMR) |
|---|---|---|
| Primary Objective | Quantify or identify specific, known analytes [19] | Detect patterns or differences; identify unknown compounds [7] [20] |
| Specificity | High specificity for target analyte(s), free from interference [17] | Ability to discriminate between sample classes; not tied to a single analyte [20] |
| Accuracy & Precision | Formally demonstrated using reference standards [17] | Focus on model/prediction precision and stability; often lacks a true reference [18] [20] |
| Linearity & Range | Established for target analytes over a defined concentration range [17] | Not applicable in the same way; focus is on the "chemical coverage" of the method [18] |
| Sensitivity (LOD/LOQ) | Defined Limit of Detection (LOD) and Limit of Quantification (LOQ) [17] | System sensitivity is linked to signal-to-noise and ability to detect meaningful markers [7] |
| Robustness | Tested against variations in key method parameters (e.g., pH, temperature) [17] | Critical to ensure model performance is stable over time and across instrument platforms [7] |
| Key Challenge | Ensuring selectivity in complex matrices | Data handling, model validation, and proving fitness-for-purpose [7] [20] |

Experimental Protocols and Workflow

The experimental workflow for validating these methods highlights their fundamental differences. The targeted method workflow is a linear, confirmatory process, whereas the non-targeted workflow is iterative and exploratory, heavily reliant on multivariate statistics and model building.

The following diagram contrasts the generalized experimental workflows for the validation of targeted and non-targeted methods.

Detailed Protocol for Targeted Method Validation [19] [17]:

- Define Objectives and ATP: Specify the analyte, matrix, acceptance criteria (e.g., precision ≤15% RSD), and the intended use.

- Specificity Testing: Inject blank matrix, standard, and spiked matrix to demonstrate no interference at the retention time of the analyte.

- Linearity and Range: Prepare and analyze a minimum of 5 concentration levels across the expected range. Calculate the correlation coefficient (R²) and y-intercept.

- Accuracy and Precision: Prepare QC samples at Low, Mid, and High concentrations (n≥5 each). Analyze and report %Bias (accuracy) and %RSD (precision).

- Robustness Testing: Deliberately vary key parameters (e.g., column temperature ±2°C, mobile phase pH ±0.1) and evaluate impact on system suitability criteria.
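The accuracy and precision step of this protocol can be sketched as a short calculation. The QC replicate values are hypothetical, and the 15% limit is taken from the example acceptance criterion above; actual limits should come from the method's ATP.

```python
from statistics import mean, stdev

def qc_summary(measured, nominal, limit_pct=15.0):
    """Percent bias (accuracy) and percent RSD (precision) for one QC level."""
    avg = mean(measured)
    bias_pct = 100.0 * (avg - nominal) / nominal
    rsd_pct = 100.0 * stdev(measured) / avg
    return {
        "bias_pct": bias_pct,
        "rsd_pct": rsd_pct,
        "passes": abs(bias_pct) <= limit_pct and rsd_pct <= limit_pct,
    }

# Hypothetical QC replicates (n = 5 per level), keyed by nominal concentration
qc_levels = {
    5.0: [4.8, 5.1, 5.3, 4.9, 5.2],        # Low
    50.0: [51.0, 49.5, 50.8, 48.9, 50.3],  # Mid
    400.0: [470.0, 482.0, 465.0, 478.0, 490.0],  # High (biased high in this example)
}
summaries = {nominal: qc_summary(values, nominal) for nominal, values in qc_levels.items()}
for nominal, s in summaries.items():
    print(f"nominal={nominal}: bias={s['bias_pct']:.1f}%, "
          f"RSD={s['rsd_pct']:.1f}%, pass={s['passes']}")
```

In this example the high QC is precise but fails on bias, which illustrates why accuracy and precision are assessed separately.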

Detailed Protocol for Non-Targeted Method Workflow [7] [20]:

- Experimental Design: Assemble a large and diverse set of authentic samples representing the different classes to be discriminated (e.g., authentic vs. adulterated food).

- Data Acquisition: Use high-resolution mass spectrometry (HRMS) or NMR with minimal sample prep to maximize chemical coverage. Include quality control (QC) pooled samples.

- Data Pre-processing: Use software for peak picking, alignment, and normalization to create a data matrix of features (m/z, retention time, intensity).

- Chemometric Modeling: Apply unsupervised (e.g., PCA) and supervised (e.g., PLS-DA, Random Forests) methods to build a classification model.

- Model Validation: Use strict validation techniques such as cross-validation, test-set validation, or external validation to confirm the model's predictive ability and avoid overfitting.
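The model-validation step can be illustrated with k-fold cross-validation. To keep the sketch dependency-free, it uses a nearest-centroid classifier as a stand-in for PLS-DA or Random Forests, and the feature matrix is simulated; the class centers, noise level, and function names are all assumptions made for illustration.

```python
import random
from statistics import mean

def centroid(rows):
    """Column-wise mean of a list of feature vectors."""
    return [mean(col) for col in zip(*rows)]

def predict(sample, centroids):
    """Assign the label whose class centroid is closest (squared Euclidean distance)."""
    def dist2(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return min(centroids, key=lambda label: dist2(sample, centroids[label]))

def cross_validate(X, y, k=5, seed=0):
    """k-fold cross-validated accuracy; the model is refit on each training split."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    correct = 0
    for held_out in folds:
        held = set(held_out)
        train = [i for i in idx if i not in held]
        centroids = {
            label: centroid([X[i] for i in train if y[i] == label])
            for label in {y[i] for i in train}
        }
        correct += sum(predict(X[i], centroids) == y[i] for i in held_out)
    return correct / len(X)

# Simulated feature intensities for two sample classes (three features each)
rng = random.Random(1)
def simulate(center, n=20):
    return [[rng.gauss(c, 0.5) for c in center] for _ in range(n)]

X = simulate([10.0, 5.0, 1.0]) + simulate([10.0, 8.0, 3.0])
y = ["authentic"] * 20 + ["adulterated"] * 20
accuracy = cross_validate(X, y)
print(f"Cross-validated accuracy: {accuracy:.2f}")
```

The key design point is that held-out samples never contribute to the centroids used to classify them, which is what guards against the overfitting mentioned above; an external test set would provide a stricter check.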

Essential Research Reagent Solutions and Materials

The execution of both targeted and non-targeted analyses relies on a suite of specialized reagents and materials. The table below details key items essential for experiments in this field.

Table 2: Key Research Reagent Solutions and Materials

| Item | Function & Application |
|---|---|
| Certified Reference Standards | Provides the "ground truth" for targeted method validation; used to establish accuracy, linearity, and range [19]. |
| Stable Isotope-Labeled Internal Standards | Corrects for matrix effects and analytical variability in quantitative targeted LC-MS/MS assays [19]. |
| High-Purity Solvents & Mobile Phase Additives | Essential for achieving high sensitivity and specificity; minimizes background noise in chromatographic systems [17]. |
| Characterized Column Chemistry | Provides reproducible selectivity; critical for both targeted separations and the consistent retention times required in non-targeted workflows [17]. |
| Quality Control (QC) Reference Materials | A characterized control sample run in sequence to monitor system stability and data quality over time, crucial for both method types [7]. |
| Well-Characterized Sample Sets | For non-targeted methods, a set of authentic samples with verified class labels is the primary reagent for building and validating models [20]. |

The Analytical Procedure Lifecycle described in ICH Q14 provides a modernized structure that strengthens the regulatory foundation for analytical science. By promoting a science- and risk-based approach from development through continuous monitoring, it helps ensure that methods are robust and fit for purpose. The comparative analysis shows that targeted method validation is a mature, quantitative paradigm focused on predefined performance characteristics for known analytes, whereas non-targeted validation is an evolving, largely qualitative paradigm centered on model reliability and predictive power for unknown chemical patterns. Understanding these distinctions is essential for researchers, scientists, and drug development professionals who develop, validate, and maintain analytical procedures in a compliant and scientifically rigorous manner.

In analytical chemistry, particularly within pharmaceutical development and food authentication, the choice between targeted and non-targeted methods represents a fundamental strategic decision. A targeted method is designed to focus on predefined analytes, optimizing for the precise detection and quantification of specific "needles in a haystack" [21]. In contrast, a non-targeted method (NTM) aims to exploit a broader analytical signature, capturing a wide range of constituents without a predefined target, thus characterizing the entire "haystack" [22]. This guide provides an objective comparison of these approaches based on four key performance metrics: Precision, Coverage, Identification Confidence, and Throughput, framed within the context of analytical method validation research.

The following table summarizes the core performance characteristics of targeted versus non-targeted methods, highlighting their inherent trade-offs.

Table 1: Core Metric Comparison between Targeted and Non-Targeted Methods

| Metric | Targeted Methods | Non-Targeted Methods |

|---|---|---|

| Precision | High. Optimized for repeatability and reproducibility of specific analyte measurements [23]. | Moderate to Variable. Focuses on pattern recognition and relative comparison; absolute quantification can be less precise [22]. |

| Coverage | Narrow. Limited to a predefined set of analytes (e.g., known impurities, specific markers) [21]. | Broad. Capable of detecting a wide range of expected and unexpected components [22]. |

| Identification Confidence | High for target analytes, supported by reference standards [23]. Not designed for unknown identification. | Variable for unknowns. Highly dependent on database completeness and computational prediction accuracy [24]. |

| Throughput | Typically high for routine analysis once validated. Streamlined for specific targets [23]. | Often lower in data acquisition and significantly lower in data processing and interpretation due to complexity [22]. |

Detailed Metric Analysis and Experimental Data

Precision

Precision measures the closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions [23].

Targeted Method Performance: In regulated pharmaceutical development, targeted assays for potency or impurities are rigorously validated to demonstrate high precision. For instance, a typical HPLC-UV assay for a drug substance may achieve a %RSD (Relative Standard Deviation) of less than 2.0% for peak areas in repeatability experiments, ensuring reliable quantification of the active pharmaceutical ingredient (API) [23]. This high precision is achievable because the method is optimized around the chemical properties of a specific analyte.
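
As a worked illustration of the repeatability criterion, the following sketch computes %RSD as the sample standard deviation divided by the mean, times 100. The six peak areas are hypothetical values, not data from the cited source.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical peak areas from six replicate injections of one standard.
peak_areas = [10250.0, 10310.0, 10190.0, 10275.0, 10230.0, 10295.0]
rsd = percent_rsd(peak_areas)
print(f"%RSD = {rsd:.2f}, meets 2.0% criterion: {rsd < 2.0}")
```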

Non-Targeted Method Performance: The precision of NTMs is often assessed through the stability of the analytical fingerprint and the reproducibility of multivariate models. Performance is less about the precise quantification of a single compound and more about the consistent profile of a sample. Validation studies focus on the method's ability to consistently classify samples or detect deviations, which can be influenced by instrumental drift and sample preparation variability [22].

Coverage

Coverage defines the scope of analytes that a method can detect and is a primary differentiator between the two paradigms.

Targeted Analysis: Coverage is intrinsically limited to the list of analytes defined during method development. This is ideal for monitoring known compounds, such as in a stability-indicating method that tracks the API and its known degradation products [23]. Its strength is depth, not breadth.

Non-Targeted Analysis: NTMs are designed for breadth. Techniques like high-resolution mass spectrometry (HRMS) and NMR are used to capture data from thousands of features in a single run. This makes them powerful for discovery, such as identifying novel metabolites or detecting unknown food fraud [22]. A study on metabolomics noted that a key advantage of NTMs is their ability to move beyond the limitations imposed by the availability of authentic chemical standards, thereby expanding the "identifiable molecular universe" [24].

Identification Confidence

Confidence in identifying a compound is tied to the quality of the reference data used for comparison.

Targeted Analysis: Confidence is high because identification is based on direct comparison with authentic reference standards under validated conditions. For a chromatographic method, this involves matching the retention time and spectral data (e.g., UV, MS) of the sample to a certified standard [23]. This aligns with regulatory requirements for definitive identification [23].

Non-Targeted Analysis: Confidence is probabilistic and multi-layered. It relies on comparing analytical signatures (e.g., mass-to-charge ratio, fragmentation spectra, collision cross section) against reference databases [24]. A key challenge is that the number of potential annotations for an unknown feature is inversely related to the precision of the measurements. Research has shown that annotation confidence increases significantly when using multidimensional signatures (e.g., combining accurate mass, retention time, and CCS) as this reduces the search space and ambiguity [24]. The maturation of computational prediction tools is creating a "reference-free" paradigm, but gauging confidence in these predictions remains an active area of research [24].

Table 2: Impact of Multi-dimensional Data on Identification Confidence in Non-Targeted Analysis

| Properties Used for Identification | Effect on Search Space & Identification Confidence |

|---|---|

| Single Property (e.g., m/z only) | Large search space, low confidence, high risk of misidentification due to isomers. |

| Two Properties (e.g., m/z + RT) | Reduced search space, moderate confidence. |

| Three+ Properties (e.g., m/z + RT + MS/MS) | Significantly constrained search space, high confidence annotation [24]. |
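
The narrowing of the search space can be sketched as successive tolerance filters. In the example below the feature, the candidate entries, and the tolerances (5 ppm, 0.2 min, 2% CCS) are all hypothetical, and CCS is used as the third property, following the text above.

```python
def ppm_error(measured_mz, reference_mz):
    """Mass error in parts per million relative to the reference m/z."""
    return (measured_mz - reference_mz) / reference_mz * 1e6


def filter_candidates(feature, candidates, ppm_tol=5.0, rt_tol=None, ccs_tol_pct=None):
    """Keep candidates matching the feature within each supplied tolerance."""
    kept = []
    for cand in candidates:
        if abs(ppm_error(feature["mz"], cand["mz"])) > ppm_tol:
            continue
        if rt_tol is not None and abs(feature["rt"] - cand["rt"]) > rt_tol:
            continue
        if ccs_tol_pct is not None:
            ccs_dev = abs(feature["ccs"] - cand["ccs"]) / cand["ccs"] * 100.0
            if ccs_dev > ccs_tol_pct:
                continue
        kept.append(cand["name"])
    return kept


# Hypothetical unknown feature and database entries (three isomers plus one near mass).
feature = {"mz": 181.0707, "rt": 3.20, "ccs": 140.0}
candidates = [
    {"name": "candidate_A", "mz": 181.0707, "rt": 3.25, "ccs": 140.5},
    {"name": "candidate_B", "mz": 181.0707, "rt": 3.15, "ccs": 150.0},
    {"name": "candidate_C", "mz": 181.0707, "rt": 6.80, "ccs": 141.0},
    {"name": "candidate_D", "mz": 181.0850, "rt": 3.20, "ccs": 140.0},
]

mz_only = filter_candidates(feature, candidates)
mz_rt = filter_candidates(feature, candidates, rt_tol=0.2)
mz_rt_ccs = filter_candidates(feature, candidates, rt_tol=0.2, ccs_tol_pct=2.0)
print(len(mz_only), len(mz_rt), len(mz_rt_ccs))  # 3 2 1
```

Accurate mass alone cannot separate the three isomers; each added dimension removes candidates until one annotation remains.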

Throughput

Throughput considers the speed of analysis from sample preparation to final report.

Targeted Analysis: Once developed and validated, targeted methods are typically high-throughput for routine analysis. Sample preparation and instrumentation (e.g., LC-MS/MS) are optimized for a specific workflow, enabling fast cycle times and automated data processing with clear pass/fail criteria [23].

Non-Targeted Analysis: Throughput is often lower. Data acquisition itself can be longer due to the need for high-resolution, full-scan data. The most significant bottleneck is data processing and interpretation. The complex datasets require sophisticated bioinformatics pipelines, statistical analysis, and often manual validation, which drastically reduces overall throughput compared to targeted assays [22].

Experimental Protocols for Method Comparison

To objectively compare these methodologies, the following experimental protocols can be employed.

Protocol for Assessing Precision and Throughput

This protocol is designed to generate quantitative data for the metrics of precision and throughput.

- Sample Preparation: Prepare a set of identical samples spiked with a panel of 10 known analytes at relevant concentrations.

- Targeted Analysis:

- Instrumentation: Use a triple quadrupole LC-MS/MS system in MRM mode.

- Execution: Analyze the sample set in triplicate over three different days.

- Data Analysis: For each analyte, calculate the %RSD for retention time and peak area to determine intra-day and inter-day precision. Record the average instrument cycle time per sample.

- Non-Targeted Analysis:

- Instrumentation: Use a high-resolution LC-Q-TOF (Time-of-Flight) mass spectrometer in data-dependent acquisition (DDA) mode.

- Execution: Analyze the same sample set with the same replication schedule.

- Data Analysis: For the same 10 analytes, perform peak picking and alignment using computational software. Calculate the %RSD for retention time and peak area. Record the total instrument cycle time and the additional time required for data processing.

- Comparison: Compare the precision (%RSD) and total analysis time (sample-to-result) between the two methods for the 10 target analytes.
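
The data analysis in this protocol can be sketched for a single analyte as follows. The peak areas are hypothetical, and inter-day precision is taken here as the %RSD of all measurements pooled across days, which is a simplifying assumption; a variance-components (ANOVA) treatment is often preferred for intermediate precision.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


def precision_summary(areas_by_day):
    """Intra-day %RSD per day and a pooled inter-day %RSD for one analyte."""
    intra = {day: percent_rsd(vals) for day, vals in areas_by_day.items()}
    pooled = [v for vals in areas_by_day.values() for v in vals]
    return {"intra_day": intra, "inter_day": percent_rsd(pooled)}


def sample_to_result_minutes(cycle_min, processing_min, n_samples):
    """Total time: per-sample instrument cycles plus one data-processing step."""
    return n_samples * cycle_min + processing_min


# Hypothetical peak areas: triplicate injections on three days.
areas = {
    "day1": [100.0, 102.0, 98.0],
    "day2": [105.0, 107.0, 103.0],
    "day3": [95.0, 97.0, 93.0],
}
summary = precision_summary(areas)
print(summary)
print(sample_to_result_minutes(cycle_min=10, processing_min=30, n_samples=9))
```

Running the same functions on the targeted and non-targeted results for each of the 10 analytes gives directly comparable precision and time figures.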

Protocol for Assessing Coverage and Identification Confidence

This protocol evaluates the ability to identify both expected and unexpected components.

- Sample Preparation: Prepare a complex sample (e.g., plant extract, biological fluid) containing both known and unknown components. Spike it with a blinded compound not included in the initial targeted list.

- Targeted Analysis:

- Execution: Analyze the sample using the validated targeted LC-MS/MS method.

- Data Analysis: Report the detection and quantification of the pre-defined analytes. Note the failure to detect the spiked, unknown compound.

- Non-Targeted Analysis:

- Execution: Analyze the sample using the LC-Q-TOF method.

- Data Analysis: Process the data using untargeted workflows. Document the total number of detectable molecular features. Attempt to identify the spiked unknown compound by searching its accurate mass and MS/MS spectrum against public (e.g., HMDB, MassBank) and commercial databases.

- Comparison: Report the number of features detected by the NTM versus the number quantified by the targeted method. Report the confidence level of the identification for the spiked unknown (e.g., Level 1: Confirmed by standard, Level 2: Probable structure based on spectral library match) [24].

Workflow and Decision Pathways

[Diagram: logical decision process for selecting between targeted and non-targeted methods based on analytical goals]

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental protocols and applications described rely on a foundation of specific reagents, instruments, and computational tools.

Table 3: Essential Reagents, Instruments, and Software for Analytical Method Comparison

| Category | Item | Function in Research |

|---|---|---|

| Reference Standards | Authentic Chemical Standards | Provide definitive identification and calibration for targeted analysis; essential for validating non-targeted identifications [23]. |

| Chromatography | HPLC/UPLC System, C18 Columns, Buffers/Mobile Phases | Separates complex mixtures to reduce ion suppression and resolve isomers, critical for both analytical paradigms [23]. |

| Mass Spectrometry | Triple Quadrupole (QQQ) Mass Spectrometer | The workhorse for sensitive, quantitative targeted analysis (e.g., MRM) [23]. |

| Mass Spectrometry | Quadrupole-Time of Flight (Q-TOF) Mass Spectrometer | Provides high-resolution accurate mass (HRAM) measurements for untargeted discovery and confident molecular formula assignment [24]. |

| Data Analysis | CDS (Chromatography Data System) Software | Controls instrumentation and processes data for targeted methods (e.g., calculates peak area, %RSD) [23]. |

| Data Analysis | Bioinformatics Platforms (e.g., XCMS, MS-DIAL) | Processes complex HRMS data for non-targeted analysis, performing peak picking, alignment, and statistical analysis [24]. |

| Reference Databases | HMDB, MassBank, METLIN, NIST MS/MS Library | Spectral libraries used to query and identify unknown features detected in non-targeted analysis [24]. |

Strategic Implementation: Choosing the Right Tool for Your Research Question

The fundamental divide between targeted and untargeted approaches represents a critical strategic decision in analytical science, influencing every subsequent stage of experimental workflow and data acquisition. While Next-Generation Sequencing (NGS) offers a powerful illustration of this dichotomy, the core principles directly inform method validation research across fields, including non-targeted analysis (NTA). Targeted methods focus on predefined analytes with high sensitivity, whereas untargeted approaches provide a comprehensive, hypothesis-generating view of complex samples [25]. This guide objectively compares these paradigms through experimental data, detailed protocols, and analytical outcomes to inform researchers and drug development professionals in their methodological selections.

Methodological Foundations: Core Principles and Workflows

Defining Targeted and Untargeted Approaches

Targeted methods are characterized by their specificity for predetermined targets. These techniques employ probes, primers, or capture reagents designed to enrich specific analytes from a complex background. Examples include tiled-amplicon sequencing for specific viral genomes [25] and hybrid-capture enrichment using predefined probe panels [25]. The primary advantage lies in significantly enhanced sensitivity for low-abundance targets, making these methods indispensable for diagnostic applications and specific variant detection.

In contrast, untargeted (shotgun) methods undertake a comprehensive analysis of all components within a sample without prior selection. Shotgun metagenomic sequencing exemplifies this approach, theoretically enabling the detection of novel or unexpected analytes [25]. However, this breadth comes at the cost of sensitivity for specific targets, as sequencing depth is distributed across all sample constituents, potentially obscuring low-abundance targets amidst dominant background signals.

Generalized Workflow Architecture

The following diagram illustrates the core decision pathways and procedural steps in targeted versus untargeted methodologies:

Figure 1: Generalized workflow comparing targeted and untargeted methodological pathways.

Experimental Comparison: Performance Evaluation in Sequencing Applications

Study Design and Protocol Specifications

A 2023 study directly compared metagenomic (untargeted) and targeted methods for detecting viral pathogens in wastewater, providing robust experimental data on methodological performance [25]. The protocols were implemented as follows:

1. Untargeted Shotgun Metagenomic Sequencing Protocol [25]:

- Input Material: Total nucleic acid extracted from influent wastewater.

- Processing: Centrifugation to remove solids and bacterial cells, followed by ammonium sulfate precipitation to enrich viral particles.

- Library Preparation: Standard metagenomic library construction without target enrichment.

- Sequencing Parameters: Deep sequencing to a mean depth of 303 million (±9.6 million) 2×150 bp read pairs per library on Illumina platforms.

2. Targeted Hybrid-Capture Enrichment Protocol [25]:

- Input Material: Metagenomic libraries from the same wastewater extracts.

- Enrichment Method: Hybrid-capture using a Respiratory Virus Oligos Panel (RVOP).

- Processing: Library hybridization with target-specific probes, washing, and amplification of captured targets.

- Sequencing Parameters: Mean depth of 106 million (±3.4 million) 2×150 bp read pairs per library.

3. Targeted Tiled-PCR Sequencing Protocol [25]:

- Input Material: Same wastewater nucleic acid extracts.

- Primer Design: Novel primer schemes for tiled-PCR amplification of specific viral genomes (SARS-CoV-2, enterovirus D68, norovirus GII, human adenovirus F41).

- Amplification: Multiplex PCR amplification across entire target genomes.

- Sequencing: Standard Illumina library preparation from amplified products.

Quantitative Performance Metrics

The following table summarizes the key performance outcomes from the comparative study:

Table 1: Experimental performance comparison of sequencing methodologies for viral detection [25].

| Methodological Approach | Percentage of Viral Reads | Genome Coverage for Targets | Detection of Novel Variants | Sensitivity for Low-Abundance Targets |

|---|---|---|---|---|

| Untargeted Shotgun | <0.6% of total reads | Insufficient for robust genomics | Possible but limited by sensitivity | Poor (dominated by background bacteria) |

| Targeted Hybrid-Capture | Significantly increased vs. shotgun | 15/25 targets with significantly increased coverage | Enabled for panel targets | Good for enriched targets |

| Targeted Tiled-PCR | Highest among methods | Optimal for individual viruses | Limited to known target regions | Excellent (designed for low concentrations) |

Comparative Analysis of Methodological Biases

Beyond sensitivity, each method introduces distinct analytical biases that impact data interpretation:

GC Bias and Coverage Uniformity: Targeted methods employing PCR amplification can introduce GC bias, leading to uneven coverage across genomic regions with extreme GC content [26]. PCR-free protocols, such as Illumina's TruSeq DNA PCR-Free kit, demonstrate improved coverage uniformity for G-rich, high GC, and promoter regions compared to PCR-dependent methods [27].

Enrichment Efficiency and Cross-Reactivity: Hybrid-capture enrichment demonstrated notable cross-reactivity for genetically similar targets not explicitly included in the probe panel. For example, probes designed for HAdV-B and -C also enriched HAdV-A, -D, and -F, broadening detection capabilities beyond the intended targets [25].

The Scientist's Toolkit: Essential Research Reagent Solutions

Selecting appropriate reagent systems is critical for implementing either targeted or untargeted methodologies. The following table catalogs key commercial solutions referenced in the experimental literature:

Table 2: Key research reagent solutions for nucleic acid analysis workflows.

| Product Name | Supplier | Primary Function | Key Applications | Notable Features |

|---|---|---|---|---|

| TruSeq DNA PCR-Free Prep | Illumina | Library preparation for whole genome sequencing | De novo assembly, WGS | Eliminates PCR bias, input: 25 ng–300 ng [27] |

| TruSeq DNA Nano | Illumina | Library preparation from low-input samples | Genotyping, WGS | Requires only 100 ng input DNA [27] |

| xGen ssDNA & Low-Input DNA Library Prep Kit | Integrated DNA Technologies | Library preparation from challenging samples | Sequencing of low-quality degraded DNA/ssDNA | Compatible with 10 pg–250 ng input [27] |

| NEBNext Ultra DNA Kit | New England Biolabs | Library preparation for Illumina platforms | WGS, target enrichment | Slightly cheaper and faster workflow than comparable kits [26] |

| Respiratory Virus Oligos Panel (RVOP) | Illumina | Hybrid-capture enrichment | Targeted respiratory virus detection | Enables simultaneous genomic epidemiology of multiple pathogens [25] |

| AMPure XP Beads | Beckman-Coulter | Magnetic bead-based size selection | DNA library cleanup and size selection | Alternative to gel extraction [26] |

| Qubit Broad Range dsDNA Assay | Life Technologies | Accurate DNA quantification | Pre-library preparation quality control | Essential for accurate input normalization [26] |

Data Acquisition and Analytical Outcomes

Impact on Downstream Analytical Capabilities

The choice between targeted and untargeted approaches fundamentally shapes downstream analytical possibilities:

Genomic Epidemiology Resolution: In the wastewater surveillance study, only targeted methods (both hybrid-capture and tiled-PCR) generated sufficient genome coverage for robust phylogenetic analysis and variant calling [25]. The untargeted shotgun approach failed to provide the consistent >90% genome coverage required for confident variant identification.
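
A rough calculation helps show why shotgun data can fall short of the required genome coverage. The sketch below estimates mean depth as target-derived bases divided by genome length, and breadth with the idealized approximation 1 − e^(−depth), which assumes uniformly random reads. The read-pair count and read length come from the study description above; the target read fractions and the rounded 30 kb genome length are assumptions chosen for illustration.

```python
import math


def expected_mean_depth(read_pairs, target_fraction, read_len, genome_len):
    """Mean depth = bases from target-derived reads divided by genome length."""
    target_bases = read_pairs * 2 * read_len * target_fraction
    return target_bases / genome_len


def expected_breadth(mean_depth):
    """Fraction of the genome covered at least once, assuming uniform random reads."""
    return 1.0 - math.exp(-mean_depth)


GENOME_LEN = 30_000        # approximate SARS-CoV-2 genome length in bases
READ_PAIRS = 303_000_000   # mean shotgun depth reported in the study
for fraction in (1e-8, 1e-6):  # hypothetical fractions of reads from the target
    depth = expected_mean_depth(READ_PAIRS, fraction, 150, GENOME_LEN)
    print(f"fraction={fraction:g} depth={depth:.2f}x breadth={expected_breadth(depth):.1%}")
```

Real wastewater libraries are far from uniform, so this estimate is best read as an optimistic upper bound on breadth for a given target fraction.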

Multiplexing Capability: Hybrid-capture enrichment uniquely enabled simultaneous genomic epidemiology of multiple viral pathogens from a single sample, providing a balanced approach between specificity and target breadth [25]. This multiplexing capability is particularly valuable for surveillance applications where multiple pathogens may be of interest.

Operational Considerations: The NEBNext Ultra DNA kit demonstrated advantages in workflow efficiency, being both "slightly cheaper and faster" than the comparable TruSeq Nano kit while producing equivalent or superior sequencing data [26]. Such operational factors significantly impact practical implementation in both research and diagnostic settings.

Decision Framework for Method Selection

The following diagram outlines a systematic approach for selecting between methodological strategies based on research objectives and sample characteristics:

Figure 2: Decision framework for selecting between targeted and untargeted methodological strategies.

The comparative data demonstrate that method selection requires careful consideration of research priorities. Targeted approaches provide superior sensitivity and reliability for known targets, with tiled-PCR offering the highest sensitivity for individual targets and hybrid-capture providing an effective balance for multiple targets [25]. Untargeted methods retain value for discovery-phase research but require high target abundance or extensive sequencing depth for meaningful detection [25].
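
The selection logic summarized above can be written as a simple rule. This is only a sketch of the reasoning in this section: the function name, its arguments, and the one-target cutoff for tiled-PCR are illustrative assumptions, not thresholds reported in the study.

```python
def suggest_strategy(targets_known, n_targets=0, max_tiled_targets=1):
    """Map the summary above onto a simple rule; the cutoff is illustrative."""
    if not targets_known or n_targets == 0:
        # Discovery work: no predefined targets to enrich for.
        return "untargeted shotgun"
    if n_targets <= max_tiled_targets:
        # Highest sensitivity for an individual known target.
        return "targeted tiled-PCR"
    # Balance between specificity and breadth for a panel of targets.
    return "targeted hybrid-capture"


print(suggest_strategy(targets_known=False))
print(suggest_strategy(targets_known=True, n_targets=1))
print(suggest_strategy(targets_known=True, n_targets=12))
```

In practice the choice also depends on expected target abundance, available sequencing depth, and cost, which a rule this small does not capture.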

For researchers designing validation studies, these findings emphasize that method selection must align with explicit analytical goals. Targeted methods prove indispensable for clinical validations requiring high sensitivity and reproducibility, while untargeted strategies offer broader exploratory potential at the cost of sensitivity. As technological advancements continue to improve the sensitivity and efficiency of both approaches, their complementary application will further enhance analytical capabilities across basic research and drug development contexts.

The Analytical Target Profile (ATP) as a Guide for Method Selection

The Analytical Target Profile (ATP) is a foundational concept in modern analytical science, formally introduced in the ICH Q14 guideline, which was released as a draft in 2022 and adopted in 2023 [28]. It serves as a prospective summary of the quality characteristics an analytical procedure must possess to be fit for its intended purpose [28]. Fundamentally, the ATP defines what the method needs to achieve (the required specificity, accuracy, precision, and range) before deciding how to achieve it through specific technologies or methodologies [29]. This shift toward a goal-oriented approach provides a structured framework for selecting the most appropriate analytical method, whether targeted or non-targeted, based on predefined objective criteria rather than conventional practices alone.

Within the context of comparing targeted and non-targeted analytical methods, the ATP acts as a crucial neutral benchmark. It frames the method selection process within a systematic, science- and risk-based approach, ensuring the chosen technique—be it targeted for specific known analytes or non-targeted for broader chemical profiling—is appropriate for the decision-making need [28]. The ATP captures the measuring requirements for critical quality attributes (CQAs), establishing the performance characteristics necessary to ensure confidence in results that will guide development, quality control, or regulatory decisions [28].

ATP's Role in the Analytical Lifecycle and Regulatory Framework

The ATP is integral to the enhanced approach for analytical procedure development described in ICH Q14, which emphasizes science- and risk-based methodologies over traditional minimal approaches [28]. It forms the foundation for the entire analytical procedure lifecycle, from initial design and technology selection through procedure performance qualification and continued performance verification [29].

Regulatory Foundation and Lifecycle Management

The implementation of ATP is guided by key regulatory and standards documents:

- ICH Q14: Provides the framework for analytical procedure development and describes the ATP as a core component of the enhanced approach [28].

- ICH Q2(R2): Focuses on the validation of analytical procedures, with the ATP serving as the basis for defining validation parameters and acceptance criteria [28] [29].

- USP Chapter 1220: Embodies the analytical procedure lifecycle concept, aligning with ATP principles for ongoing method verification and management [29].

Throughout the analytical procedure lifecycle, methods inevitably undergo changes that require evaluation. The ATP provides the stable reference point against which the impact of any change is assessed, guiding whether revalidation is needed and which performance characteristics require reassessment [28]. This structured change management process, facilitated by the ATP, enhances regulatory interactions by clearly documenting the development rationale and control strategy [28].

Core Components of an Effective ATP

A well-constructed ATP contains several critical components that collectively define the analytical requirements. The table below outlines these essential elements and their functions in guiding method selection and development.

Table 1: Core Components of an Analytical Target Profile (ATP)

| ATP Component | Description | Role in Method Selection |

|---|---|---|

| Intended Purpose | Clear statement of what the procedure must measure (e.g., quantitation of active ingredient, impurity level, biological activity) [28]. | Defines the fundamental analytical need, guiding the choice between targeted quantification or non-targeted profiling. |

| Link to CQAs | Summary of how the procedure will provide reliable results about the specific Critical Quality Attributes being assessed [28]. | Ensures the selected method delivers data relevant to product quality and safety decisions. |

| Performance Characteristics | Key parameters including accuracy, precision, specificity, linearity, range, and robustness with defined acceptance criteria [28]. | Provides measurable benchmarks for evaluating candidate methods' capabilities. |

| Reportable Range | The range of concentrations or values over which the method must provide accurate and precise results [28]. | Determines whether a method's operational range suits the application's needs. |

| Technology Selection | Description and rationale for the selected analytical technology (e.g., HPLC, LC-MS, GC-MS) [28]. | Documents the justification for choosing a particular platform based on capability to meet ATP requirements. |
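
One way to make these components operational is to record the ATP as a small data structure and compare candidate methods against it. The sketch below is a minimal illustration: the class names, field names, and all numeric criteria are hypothetical, not values taken from ICH Q14.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    intended_purpose: str
    max_rsd_percent: float        # precision criterion
    max_abs_bias_percent: float   # accuracy criterion
    range_low: float              # reportable range, lower bound
    range_high: float             # reportable range, upper bound


@dataclass(frozen=True)
class CandidateMethod:
    name: str
    rsd_percent: float
    bias_percent: float
    range_low: float
    range_high: float


def atp_gaps(atp, method):
    """List the ATP criteria a candidate method fails; empty means conformance."""
    gaps = []
    if method.rsd_percent > atp.max_rsd_percent:
        gaps.append("precision")
    if abs(method.bias_percent) > atp.max_abs_bias_percent:
        gaps.append("accuracy")
    if method.range_low > atp.range_low or method.range_high < atp.range_high:
        gaps.append("reportable range")
    return gaps


# Hypothetical ATP for an assay and two hypothetical candidate methods.
atp = AnalyticalTargetProfile("API assay, % label claim", 2.0, 2.0, 80.0, 120.0)
method_a = CandidateMethod("method_a", 0.8, -0.5, 50.0, 150.0)
method_b = CandidateMethod("method_b", 3.5, 1.0, 90.0, 120.0)
print(atp_gaps(atp, method_a))  # []
print(atp_gaps(atp, method_b))  # ['precision', 'reportable range']
```

Because the criteria are fixed before any technology is chosen, the same check applies to every candidate platform.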

Targeted vs. Non-Targeted Analysis: An ATP-Driven Comparison

The choice between targeted and non-targeted analytical strategies is fundamentally guided by the ATP's defined "Intended Purpose." Each approach serves distinct needs, with the ATP providing the objective criteria for selection based on the specific analytical question.

Defining the Approaches

Targeted analysis is an analytical approach designed to identify and quantify a specific set of known compounds. It relies on available reference standards and mass information for confirmation, making it highly reliable and straightforward to implement for defined analytes [5]. In contrast, non-targeted analysis (NTA) is an analytical approach that aims to profile chemical mixtures by detecting and identifying both known and unknown compounds without prior knowledge of the sample's complete chemical composition [5]. NTA is particularly valuable for discovering previously unidentified substances in complex matrices.

Comparative Analysis Using ATP Criteria

[Diagram: how the ATP guides the decision between targeted and non-targeted approaches based on the analytical objectives and sample characteristics]

Table 2: Comparative Analysis: Targeted vs. Non-Targeted Methods Through the ATP Lens

| Evaluation Parameter | Targeted Analysis | Non-Targeted Analysis (NTA) |

|---|---|---|

| Primary Objective | Accurate identification and precise quantification of predefined analytes [5]. | Comprehensive profiling to detect and identify known and unknown compounds [5]. |

| Typical Applications | Routine quality control, stability testing, assay/potency determination, specified impurity testing [28]. | Extractables & Leachables (E&L) profiling, metabolomics, impurity discovery, environmental contaminant screening [5] [30]. |

| Reference Standards | Requires authentic standards for all target analytes [5]. | Uses a representative set of reference standards to estimate response factors for unknowns [30]. |

| Data Complexity | Lower complexity; focused data analysis [5]. | High complexity; requires advanced bioinformatics tools for data interpretation [5]. |

| Quantification Capability | Absolute quantification possible with appropriate standards [5]. | Typically limited to relative quantification; semi-quantitative without authentic standards [5]. |

| Throughput | Generally higher throughput for routine analysis. | Lower throughput due to extensive data acquisition and processing requirements [5]. |

| Key Strengths | High reliability, precision, and accuracy for known compounds; regulatory familiarity [5]. | Ability to discover unexpected compounds; comprehensive sample characterization [5]. |

| Main Limitations | Limited to predefined compounds; cannot detect unknown substances [5]. | Cannot guarantee complete identification of all components; complex data interpretation [5]. |

Case Study: ATP for IVRT Method Selection

A practical application of the ATP for method selection was demonstrated in a 2024 study comparing four different In Vitro Release Test (IVRT) apparatuses for diclofenac sodium topical formulations [29]. This case study exemplifies how the ATP provides objective criteria for selecting the most appropriate technology.

Experimental Design and Methodology

The study defined an ATP specifying the required performance characteristics for the IVRT method, including accuracy, precision, and robustness [29]. Researchers then evaluated four different technologies against these ATP criteria:

- USP Apparatus II with immersion cell

- USP Apparatus IV with semisolid adapter

- Static vertical diffusion cell (Franz cell)

- In-house-developed flow-through diffusion cell (FTDC) [29]

The experimental protocol involved testing diclofenac sodium hydrogel and cream formulations across all four apparatuses using standardized conditions: maintaining temperature at 32 ± 0.5°C, using pH 7.4 phosphate-buffered saline as receptor medium, and collecting samples at predetermined time points over 6 hours [29]. Samples were analyzed using validated UHPLC methods to determine drug release rates [29].

Comparative Performance Data

Table 3: Experimental Results Comparing IVRT Apparatus Performance Against ATP Criteria [29]

| IVRT Apparatus | Cumulative Release (6h) | Precision (%RSD) | Robustness | Overall ATP Conformance |

|---|---|---|---|---|

| USP II + Immersion Cell | 19.8% | <5% | High | Best - Selected for QC method development |

| USP IV + Semisolid Adapter | 15.2% | 5-10% | Moderate | Moderate - More variable performance |

| Static Vertical Diffusion Cell | 22.1% | <5% | Moderate | Good - Similar precision but more complex operation |

| Flow-Through Diffusion Cell | 18.5% | >10% | Low | Poor - Higher variability and operational challenges |

The comprehensive data generated through this ATP-driven comparison enabled the evidence-based selection of USP Apparatus II with immersion cell as the most appropriate technology for IVRT quality control testing of the evaluated formulations [29]. This outcome demonstrates how the ATP framework facilitates objective technology selection based on fitness for purpose rather than convention alone.
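
To illustrate how an ATP turns technology selection into a rule-based check, the following minimal sketch screens the Table 3 results against a set of acceptance criteria. The thresholds in `ATP` (a maximum %RSD of 5 and at least moderate robustness) are hypothetical values chosen for illustration and are not the criteria used in the cited study [29]. Precision is stored as the upper bound of each reported %RSD band.

```python
# Results transcribed from Table 3. "rsd_upper" is the upper bound of the
# reported %RSD band; None is used where the table only states ">10%".
RESULTS = {
    "USP II + Immersion Cell": {"rsd_upper": 5.0, "robustness": "High"},
    "USP IV + Semisolid Adapter": {"rsd_upper": 10.0, "robustness": "Moderate"},
    "Static Vertical Diffusion Cell": {"rsd_upper": 5.0, "robustness": "Moderate"},
    "Flow-Through Diffusion Cell": {"rsd_upper": None, "robustness": "Low"},
}

ROBUSTNESS_RANK = {"Low": 0, "Moderate": 1, "High": 2}

# Hypothetical ATP acceptance criteria (assumed for this sketch only).
ATP = {"max_rsd": 5.0, "min_robustness": "Moderate"}


def meets_atp(result, atp=ATP):
    """Return True if a result satisfies both the precision and robustness criteria."""
    precise = result["rsd_upper"] is not None and result["rsd_upper"] <= atp["max_rsd"]
    robust = ROBUSTNESS_RANK[result["robustness"]] >= ROBUSTNESS_RANK[atp["min_robustness"]]
    return precise and robust


conforming = [name for name, result in RESULTS.items() if meets_atp(result)]
print(conforming)
```

Under these assumed thresholds, only the two apparatuses that Table 3 rates as "Best" and "Good" pass the screen. Choosing between them still depends on factors such as operational complexity, which this sketch does not model.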

Special Considerations for Non-Targeted Analysis

Implementing non-targeted analysis presents unique challenges that require specific adaptations to the ATP framework. Unlike targeted methods, NTA must accommodate the inherent uncertainty of analyzing unknown compounds.

Reference Standard Selection for NTA

For NTA, establishing an appropriate set of reference standards is critical for semi-quantification. A 2025 study proposed a systematic approach for selecting reference standards for NTA of polymer additives in medical devices, establishing six key criteria [30]:

- Reference standard availability: Candidates should be widely commercially available [30].

- Chemical relevance: Standards should align with expected extractables and leachables (E&L) profiles [30].

- Chemical stability: Standards must be stable and readily detectable under analysis conditions [30].

- Toxicological coverage: Inclusion of chemicals with diverse toxicological profiles [30].

- Frequency of use: Prioritization of chemicals with broader applications and higher detection likelihood [30].

- Analytical compatibility: Physicochemical properties must fall within detectable ranges of GC-MS and LC-MS techniques [30].

This approach led to a curated set of 106 reference standards encompassing diverse physicochemical properties and toxicological concerns, enabling more reliable semi-quantification in NTA [30].

Uncertainty Factors in NTA

In NTA, the Uncertainty Factor (UF) is a critical parameter that addresses analytical variability when estimating concentrations of unknown compounds. The UF is calculated using the formula:

$$\mathrm{UF} = \frac{1}{1 - \mathrm{RSD}}$$

where RSD is the relative standard deviation of the response factors from the reference standard database, expressed as a fraction rather than a percentage [30]. The choice of reference standards directly determines the RSD value, which in turn affects the Analytical Evaluation Threshold (AET), the concentration above which E&L compounds must be identified and quantified [30]. An inappropriate reference standard set can yield an underestimated UF, potentially leading to underreporting of toxicologically significant compounds [30].
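
The UF calculation can be sketched in a few lines of Python. The response factors below are hypothetical, and the `adjusted_aet` helper assumes the common convention of dividing the unadjusted AET by the UF; that convention is not stated in the text above and should be checked against the applicable guidance.

```python
from statistics import mean, stdev


def uncertainty_factor(response_factors):
    """Return (RSD, UF), where RSD is a fraction and UF = 1 / (1 - RSD)."""
    rsd = stdev(response_factors) / mean(response_factors)
    if rsd >= 1:
        # The formula has no meaningful value once RSD reaches 100%.
        raise ValueError("RSD >= 1: UF is undefined for this reference standard set")
    return rsd, 1 / (1 - rsd)


def adjusted_aet(unadjusted_aet, uf):
    """Assumed convention: lower the AET by dividing it by the UF."""
    return unadjusted_aet / uf


# Hypothetical response factors for five reference standards.
response_factors = [0.8, 1.0, 1.2, 0.6, 1.4]
rsd, uf = uncertainty_factor(response_factors)
print(f"RSD = {rsd:.3f}, UF = {uf:.3f}")
print(f"Adjusted AET = {adjusted_aet(10.0, uf):.2f} (same units as the unadjusted AET)")
```

The sketch makes the dependence visible: a more variable set of response factors raises the RSD, which raises the UF and lowers the adjusted threshold.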

Essential Research Reagents and Materials

Successful implementation of analytical methods, whether targeted or non-targeted, requires specific high-quality reagents and materials. The following table details essential items for conducting these analyses based on the cited experimental work.

Table 4: Essential Research Reagents and Materials for Analytical Method Development

| Reagent/Material | Specification/Function | Application Context |

|---|---|---|

| Reference Standards | High-purity chemical substances for instrument calibration and quantification [30]. | Required for both targeted method validation and establishing response factors in NTA. |

| Internal Standards | Stable isotopically labeled compounds for signal normalization and improved accuracy [30]. | Used in both targeted and non-targeted LC-MS/GC-MS methods to correct for variability. |

| HPLC/MS Grade Solvents | High-purity solvents (methanol, acetonitrile, water) with minimal interference [29]. | Mobile phase preparation for chromatographic separation in LC-MS methods. |

| Artificial Membranes | MCE filters (0.22 µm pore size) for release rate studies [29]. | IVRT apparatus assembly for topical formulation testing. |

| Buffer Components | Salts for phosphate-buffered saline (PBS) at physiological pH [29]. | Receptor medium preparation for release tests and bio-relevant extraction studies. |

| Protein Precipitation Reagents | Solvents or agents for removing proteins from biological matrices [5]. | Sample preparation for complex matrices in bioanalytical NTA. |

| Solid Phase Extraction (SPE) Sorbents | Stationary phases for extracting, concentrating, and cleaning up analytes from complex samples [5]. | Sample preparation to enhance sensitivity and reduce matrix effects. |

The Analytical Target Profile provides a systematic, science-based framework that guides the selection of analytical methods with objective criteria rather than convention. By clearly defining requirements before selecting technologies, the ATP ensures that the chosen method, whether targeted for precise quantification of known entities or non-targeted for comprehensive profiling, is fit for purpose in its specific decision-making context.

The comparative analysis presented demonstrates that targeted and non-targeted approaches serve complementary roles in the analytical toolkit. The ATP serves as the crucial decision-making framework that aligns analytical capabilities with project goals, regulatory requirements, and ultimately, product quality and patient safety. As analytical science continues to evolve with increasingly complex challenges, the ATP provides the stable foundation for evaluating and selecting appropriate methodologies based on their ability to deliver reliable, meaningful data.

In modern analytical science, the choice between targeted and non-targeted methods represents a fundamental strategic decision for researchers. Targeted analysis focuses on the precise identification and quantification of a predefined set of analytes, delivering high precision for specific questions. In contrast, non-targeted approaches aim to comprehensively capture all measurable components in a sample without prior selection, enabling hypothesis-free discovery and the detection of unexpected patterns. This guide objectively compares these methodologies across three critical applications: biomarker discovery, exposomics, and food fraud detection, providing experimental data and protocols to inform methodological selection.

The core distinction lies in their analytical focus. Targeted methods validate known entities with high precision, answering "how much" of a specific substance is present. Non-targeted methods screen for unknown patterns or markers, answering "what is different" between sample groups. Each approach demands distinct validation strategies, with targeted methods requiring rigorous quantification standards and non-targeted methods needing robust model validation to ensure pattern recognition reliability.

Comparative Performance Data

Table 1: Performance Comparison of Targeted vs. Non-Targeted Methods Across Applications

| Performance Metric | Targeted Methods | Non-Targeted Methods |

|---|---|---|

| Analytical Focus | Predefined, specific analytes [31] | Comprehensive, hypothesis-free [32] [33] |

| Primary Application | Quantification, compliance testing [31] | Discovery, authenticity testing, fingerprinting [34] [32] |

| Typical Output | Concentration values [31] | Spectral patterns, statistical models [34] [32] |

| Sensitivity | Comprehensive LOD/LOQ determination [31] | Confirmatory of published sensitivity [31] |

| Quantification Accuracy | High precision [31] | Moderate assurance [31] |

| Implementation Speed | Slower (weeks/months) [31] | Rapid (days) [31] |

| Method Flexibility | Highly adaptable [31] | Limited to validated model scope [31] [34] |

| Data Complexity | Manageable, structured [31] | High-dimensional, requires specialized bioinformatics [35] [36] |

Table 2: Application-Specific Validation Criteria and Experimental Findings

| Application & Context | Validation Approach | Key Experimental Findings | Statistical Performance |

|---|---|---|---|

| Biomarker Discovery (Clinical Diagnostics) | Analytical validation per CLSI guidelines; Clinical validation for outcomes [37] | AI-powered discovery cuts timelines from 5+ years to 12-18 months [37] | Requires AUC ≥0.80, sensitivity/specificity typically ≥80% [37] |

| Exposomics (Dried Blood Spot Analysis) | Optimized LC-HRMS workflow evaluating extraction efficiency & matrix effects [38] | Acceptable recoveries (60–140%) and reproducibility (median RSD: 18%) for majority of >200 xenobiotics [38] | Matrix effects showed median value of 76% (median RSD: 14%) [38] |

| Food Fraud (NMR-based Food Authentication) | Validation of NMR-based non-targeted protocols for multi-lab reproducibility [32] | Technique allows comparison of spectra across different instruments and laboratories [32] | Collaborative datasets enable reliable classification models [32] |

Biomarker Discovery: From Analytical Validation to Clinical Utility

Experimental Protocols

Targeted Biomarker Validation Protocol:

- Precision Evaluation: Perform repeat measurements of each biomarker and confirm that the coefficient of variation is below 15% [37].

- Recovery Assessment: Spike samples with known biomarker quantities to demonstrate recovery rates between 80-120% [37].

- Correlation Analysis: Compare results against reference standards and confirm that correlation coefficients exceed 0.95 [37].

- Inter-laboratory Study: Validate assay performance across multiple sites with different equipment and technicians [37].
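
The three numerical acceptance checks in this protocol (CV below 15%, recovery of 80-120%, correlation above 0.95) can be expressed as a short sketch. All measurement values are hypothetical, and the recovery formula assumes the usual spike-recovery definition (spiked result minus unspiked result, divided by the amount added).

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation of replicate measurements, in percent."""
    return 100 * stdev(values) / mean(values)


def recovery_percent(measured_spiked, measured_unspiked, spiked_amount):
    """Spike recovery in percent (assumed definition, see lead-in)."""
    return 100 * (measured_spiked - measured_unspiked) / spiked_amount


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical data for one biomarker.
replicates = [10.1, 9.8, 10.3, 9.9, 10.0]
cv = cv_percent(replicates)
rec = recovery_percent(measured_spiked=14.8, measured_unspiked=10.0, spiked_amount=5.0)
r = pearson_r([1.1, 2.0, 2.9, 4.2, 5.0], [1.0, 2.0, 3.0, 4.0, 5.0])

checks = {
    "precision (CV < 15%)": cv < 15,
    "recovery (80-120%)": 80 <= rec <= 120,
    "correlation (r > 0.95)": r > 0.95,
}
for name, passed in checks.items():
    print(f"{name}: {'pass' if passed else 'fail'}")
```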

Non-Targeted Biomarker Discovery Protocol:

- Sample Preparation: Use minimally processed biological samples (e.g., plasma, urine) to preserve molecular integrity [36].

- LC-HRMS Analysis: Perform liquid chromatography-high resolution mass spectrometry with untargeted data acquisition [38].

- Data Preprocessing: Apply peak picking, alignment, and normalization to raw data [36].

- Multivariate Analysis: Utilize unsupervised (PCA, clustering) and supervised (ML algorithms) methods to identify discriminatory features [36].

- Biomarker Identification: Match significant features to chemical databases and validate identities with reference standards [36].
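
As a rough illustration of the preprocessing and feature-selection steps, the sketch below applies total-signal normalization and then ranks features by log2 fold change between two groups. This univariate ranking is a deliberately simple stand-in for the multivariate methods named above, not a replacement for them, and the intensity values and feature names are hypothetical.

```python
import math
from statistics import mean


def normalize_total_signal(sample):
    """Scale feature intensities so that each sample sums to 1."""
    total = sum(sample)
    return [x / total for x in sample]


def log2_fold_changes(cases, controls, pseudo=1e-9):
    """Per-feature log2 ratio of mean case intensity to mean control intensity."""
    n_features = len(cases[0])
    changes = []
    for j in range(n_features):
        case_mean = mean(s[j] for s in cases)
        control_mean = mean(s[j] for s in controls)
        # The small pseudo-count avoids division by zero for absent features.
        changes.append(math.log2((case_mean + pseudo) / (control_mean + pseudo)))
    return changes


# Hypothetical peak-intensity table: rows are samples, columns are features.
features = ["feature_1", "feature_2", "feature_3"]
controls = [normalize_total_signal(s) for s in ([100, 200, 700], [110, 190, 700])]
cases = [normalize_total_signal(s) for s in ([300, 200, 500], [310, 190, 500])]

lfc = log2_fold_changes(cases, controls)
ranked = sorted(zip(features, lfc), key=lambda pair: abs(pair[1]), reverse=True)
for name, change in ranked:
    print(f"{name}: log2 fold change = {change:+.2f}")
```

Features near the top of the ranking would then be carried forward to database matching and confirmation with reference standards.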

Workflow Visualization

[Figure: Biomarker Discovery Pathway]

Exposomics: Comprehensive Exposure Assessment

Experimental Protocols

Targeted Exposomics Protocol for Known Chemicals:

- Sample Extraction: Employ optimized extraction protocols for specific chemical classes (e.g., PFAS, pesticides, mycotoxins) [38].

- Internal Standard Addition: Spike samples with isotopically labeled standards before extraction to correct for matrix effects [38].

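- LC-HRMS Analysis with Targeted MS/MS: Use scheduled targeted MS/MS acquisition (on HRMS instruments, typically parallel reaction monitoring; multiple reaction monitoring is the triple quadrupole equivalent) for high-sensitivity quantification [38].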

- Matrix Effect Evaluation: Calculate matrix effects by comparing standards in solvent versus matrix extracts; acceptable range: 60-140% [38].
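
The matrix effect evaluation reduces to a simple ratio. The sketch below assumes the common convention in which the analyte response in a post-extraction spiked matrix is divided by the response in neat solvent, so that 100% means no matrix effect, values below 100% indicate suppression, and values above 100% indicate enhancement. Analyte names and peak areas are hypothetical.

```python
def matrix_effect_percent(response_in_matrix, response_in_solvent):
    """Matrix effect in percent; 100 means the matrix does not alter the signal."""
    return 100 * response_in_matrix / response_in_solvent


def within_range(value, low=60.0, high=140.0):
    """Check a matrix effect against the 60-140% acceptance range."""
    return low <= value <= high


# Hypothetical peak areas: (area in matrix extract, area in solvent).
areas = {
    "analyte_A": (7600, 10000),
    "analyte_B": (4500, 10000),
    "analyte_C": (12500, 10000),
}

results = {name: matrix_effect_percent(m, s) for name, (m, s) in areas.items()}
flags = {name: within_range(value) for name, value in results.items()}
for name in areas:
    status = "acceptable" if flags[name] else "outside range"
    print(f"{name}: {results[name]:.0f}% ({status})")
```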

Non-Targeted Exposomics Protocol:

- Comprehensive Sample Preparation: Use minimal sample cleanup to retain diverse chemical features [33].

- High-Resolution Mass Spectrometry: Acquire data in full-scan mode with fragmentation to enable compound identification [33].

- Wide Mass Range Analysis: Scan broad mass range (e.g., 50-1000 m/z) to capture diverse exposures [33].

- Data Processing with Feature Detection: Use software (e.g., XCMS, MS-DIAL) to detect chromatographic peaks and align across samples [33].

- Compound Annotation: Search experimental spectra against mass spectral libraries (e.g., NIST, HMDB) and apply in-silico fragmentation tools [33].
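
The first stage of compound annotation, matching an observed m/z to candidate structures within a mass tolerance, can be sketched as follows. The three-entry library, the 5 ppm tolerance, and the restriction to [M+H]+ ions are assumptions made for illustration. Real workflows query full spectral libraries and also compare fragmentation spectra, which this sketch omits.

```python
PROTON_MASS = 1.007276  # Da, added to the neutral mass to form [M+H]+

# Tiny illustrative library of neutral monoisotopic masses (Da, rounded).
LIBRARY = {
    "caffeine": 194.0804,
    "nicotine": 162.1157,
    "cotinine": 176.0950,
}


def ppm_error(observed, theoretical):
    """Signed mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def annotate(observed_mz, library=LIBRARY, tol_ppm=5.0):
    """Return (name, ppm error) candidates whose [M+H]+ m/z lies within tolerance."""
    hits = []
    for name, neutral_mass in library.items():
        theoretical_mz = neutral_mass + PROTON_MASS
        error = ppm_error(observed_mz, theoretical_mz)
        if abs(error) <= tol_ppm:
            hits.append((name, round(error, 2)))
    # Best match (smallest absolute error) first.
    return sorted(hits, key=lambda hit: abs(hit[1]))


print(annotate(195.0877))  # a feature close to the caffeine [M+H]+ ion
print(annotate(200.0000))  # no candidate within tolerance
```

A mass-only match like this is a tentative annotation. Confirming identity still requires MS/MS evidence and, ideally, a reference standard.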

Workflow Visualization

[Figure: Exposomics Research Workflow]

Food Fraud Detection: Authenticity and Traceability

Experimental Protocols

Targeted Food Authentication Protocol:

- Marker Selection: Identify specific chemical markers indicative of authenticity (e.g., isotopic ratios, specific compounds) [34].

- Reference Material Analysis: Establish expected ranges for authentic samples through analysis of verified reference materials [34].

- Method Validation: Determine accuracy, precision, LOD, LOQ, and linearity for each marker [31].

- Sample Classification: Compare sample results to established ranges to determine authenticity likelihood [34].
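
The reference-range and classification steps can be sketched as below. The range definition (mean ± 2 standard deviations of the authentic reference values) is an assumption chosen for illustration, since the protocol does not specify how expected ranges are derived. Marker names and values are hypothetical.

```python
from statistics import mean, stdev


def reference_range(values, k=2.0):
    """Expected range for a marker: mean ± k standard deviations (assumed rule)."""
    m, s = mean(values), stdev(values)
    return m - k * s, m + k * s


def classify(sample, ranges):
    """Flag a sample as suspect if any marker falls outside its expected range."""
    out_of_range = [
        marker for marker, value in sample.items()
        if not (ranges[marker][0] <= value <= ranges[marker][1])
    ]
    label = "consistent with authentic" if not out_of_range else "suspect"
    return label, out_of_range


# Hypothetical marker values measured in verified authentic reference materials.
reference_data = {
    "marker_1": [10.0, 10.4, 9.8, 10.2, 9.6],
    "marker_2": [50.0, 52.0, 48.0, 51.0, 49.0],
}
ranges = {marker: reference_range(values) for marker, values in reference_data.items()}

print(classify({"marker_1": 10.1, "marker_2": 49.5}, ranges))
print(classify({"marker_1": 12.0, "marker_2": 49.0}, ranges))
```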

Non-Targeted Food Authentication Protocol:

- Authentic Reference Collection: Assemble a comprehensive set of authentic samples with verified provenance [34] [32].

- Spectroscopic Fingerprinting: Acquire NMR or MS spectral data from reference samples using standardized protocols [32].

- Data Preprocessing: Apply spectral alignment, normalization, and scaling to minimize technical variation [32].

- Predictive Model Building: Use machine learning (e.g., PCA-LDA, random forests) to build classification models from reference data [34].

- Model Validation: Test model performance with independent sample sets and monitor ongoing performance with quality control samples [34] [32].
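
The scaling, model-building, and independent-validation steps can be illustrated with a small standard-library sketch. It uses a nearest-centroid classifier as a simple substitute for the PCA-LDA or random forest models named above, and the two-variable "fingerprints" and class labels are hypothetical. Real spectral data would have far more variables and would call for a dedicated machine learning library.

```python
from statistics import mean, stdev


def fit_scaler(rows):
    """Column means and standard deviations, estimated from training data only."""
    columns = list(zip(*rows))
    return [mean(c) for c in columns], [stdev(c) or 1.0 for c in columns]


def scale(row, means, sds):
    """Autoscale one sample using the training means and standard deviations."""
    return [(x - m) / s for x, m, s in zip(row, means, sds)]


def fit_centroids(rows, labels):
    """Mean scaled fingerprint per class."""
    centroids = {}
    for label in set(labels):
        members = [r for r, l in zip(rows, labels) if l == label]
        centroids[label] = [mean(c) for c in zip(*members)]
    return centroids


def predict(row, centroids):
    """Assign the class whose centroid is closest in squared Euclidean distance."""
    def dist2(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(centroids, key=lambda label: dist2(row, centroids[label]))


# Hypothetical reference fingerprints with verified provenance.
train_x = [[1.0, 5.0], [1.2, 5.2], [0.9, 4.8], [3.0, 2.0], [3.2, 2.1], [2.9, 1.8]]
train_y = ["authentic"] * 3 + ["adulterated"] * 3
# Independent samples held back from model building.
test_x = [[1.1, 5.1], [3.1, 2.0]]
test_y = ["authentic", "adulterated"]

means, sds = fit_scaler(train_x)
centroids = fit_centroids([scale(r, means, sds) for r in train_x], train_y)
predictions = [predict(scale(r, means, sds), centroids) for r in test_x]
accuracy = sum(p == t for p, t in zip(predictions, test_y)) / len(test_y)
print(predictions, accuracy)
```

Fitting the scaler on the training set alone and applying it unchanged to the held-out samples keeps the validation independent, which is the point of the final protocol step.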

Workflow Visualization

[Figure: Food Authentication Decision Path]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Targeted and Non-Targeted Applications

| Reagent/Material | Function | Application Examples |

|---|---|---|

| Stable Isotope-Labeled Standards | Internal standards for quantification; correct for matrix effects and recovery [38] | Targeted exposomics (quantification of xenobiotics); Targeted biomarker validation [38] [37] |