Single-Lab vs. Multi-Laboratory Validation: Ensuring Robustness in Food Safety Methods

Abstract

This article provides a comprehensive analysis for researchers and scientists on the critical roles of single-lab and multi-laboratory validation in food safety methods. It explores the foundational principles of method validation as outlined by regulatory bodies like the FDA, detailing the hierarchical validation levels from emergency use to full collaborative studies. The content covers practical applications across chemical and microbiological analyses, addresses common troubleshooting and optimization strategies, and presents a comparative analysis of effect sizes and methodological rigor between single and multi-laboratory studies. By synthesizing current validation guidelines, empirical research, and industry trends, this resource offers a strategic framework for selecting the appropriate validation pathway to ensure method reliability, regulatory compliance, and robust food safety outcomes.

The Validation Hierarchy: From Single-Lab to Multi-Laboratory Standards

Understanding FDA's Method Validation Guidelines and the MDVIP Framework

The Food and Drug Administration (FDA) Foods Program employs a rigorous, structured approach to analytical method validation governed by the Methods Development, Validation, and Implementation Program (MDVIP) Standard Operating Procedures. This framework ensures that FDA laboratories use properly validated methods to support the regulatory mission for food safety and public health protection. The MDVIP commits its members to collaborate on the development, validation, and implementation of analytical methods, with one of its main goals being to ensure the use of properly validated methods, and where feasible, methods that have undergone multi-laboratory validation (MLV) [1].

The MDVIP operates under the oversight of the FDA Foods Program Regulatory Science Steering Committee (RSSC), which includes members from FDA's Center for Food Safety and Applied Nutrition (CFSAN), Office of Regulatory Affairs (ORA), Center for Veterinary Medicine (CVM), and National Center for Toxicological Research (NCTR). The process of generating, validating, and approving methods is managed separately for chemistry and microbiology disciplines through Research Coordination Groups (RCGs) and Method Validation Subcommittees (MVS). The RCGs provide overall leadership and coordination in developing and updating guidelines, while MVSs are responsible for approving validation plans and evaluating validation results [1].

Single-Lab vs. Multi-Laboratory Validation: Key Concepts

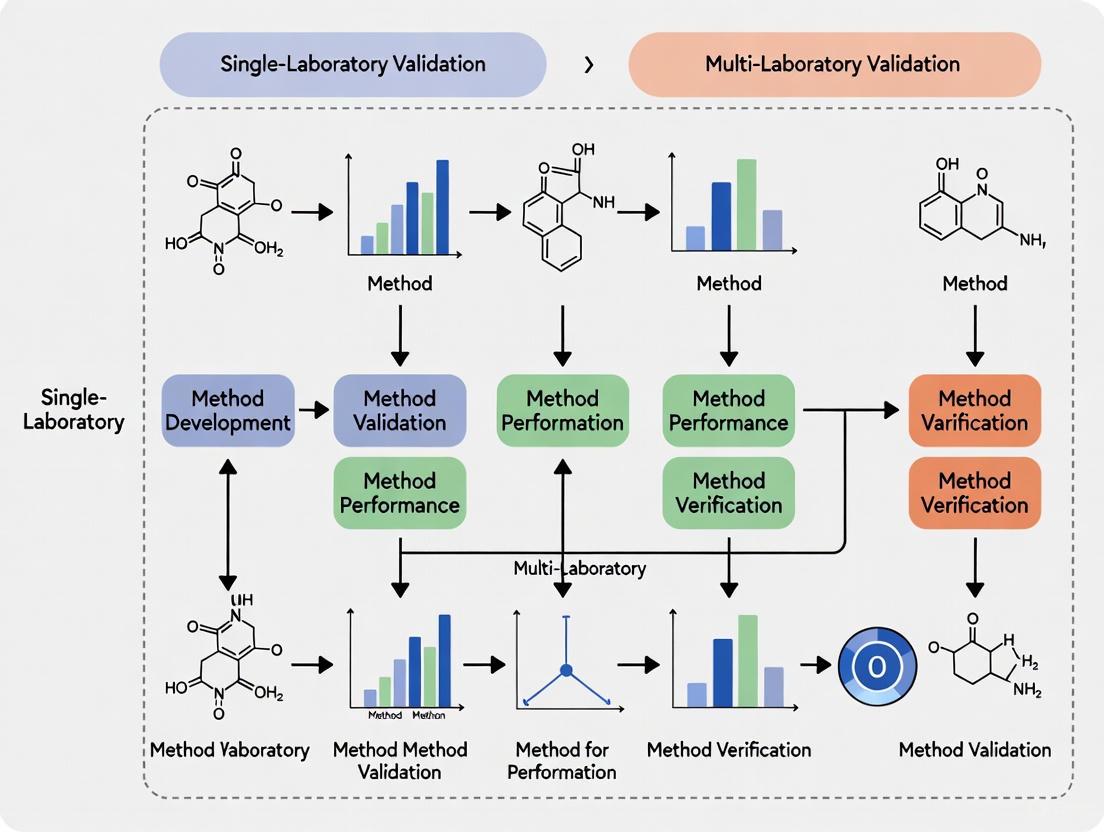

Within the MDVIP framework, method validation can proceed through two primary pathways: single laboratory validation (SLV) and multi-laboratory validation (MLV). Each approach serves distinct purposes in the method development and implementation continuum.

Single laboratory validation represents the initial phase where a method is developed and validated within a single laboratory setting. This establishes the foundational performance characteristics of the method before proceeding to broader validation. In contrast, multi-laboratory validation involves multiple laboratories following standardized protocols to evaluate method performance across different environments, equipment, and personnel. The MLV approach provides a more comprehensive assessment of method robustness, transferability, and real-world applicability [1] [2].

The MDVIP explicitly prioritizes multi-laboratory validation where feasible, recognizing that MLV provides superior evidence of method robustness and inter-laboratory reproducibility. This preference stems from the understanding that methods must perform consistently across the FDA's network of laboratories and those of its regulatory partners [1].

Comparative Performance Data: SLV vs. MLV

Case Study: Cyclospora cayetanensis Detection in Fresh Produce

A recent multi-laboratory validation study demonstrates the rigorous evaluation process for methods transitioning from SLV to MLV. Researchers validated a modified real-time PCR assay (Mit1C) for detecting Cyclospora cayetanensis in fresh produce, comparing it against the existing reference method (18S qPCR) across 13 laboratories [2].

Table 1: Performance Comparison of Mit1C qPCR vs. Reference Method in MLV Study

| Sample Type | Detection Rate (Mit1C qPCR) | Detection Rate (18S qPCR) | Relative Level of Detection (RLOD) |
|---|---|---|---|
| Samples with 200 oocysts | 100% (78/78) | 100% (78/78) | 1.00 (reference) |
| Samples with 5 oocysts | 69.23% (99/143) | 61.54% (88/143) | 0.81 (95% CI: 0.600, 1.095) |
| Un-inoculated samples | 1.1% (1/91) | 0% (0/91) | Not applicable |

The MLV study demonstrated that the new Mit1C qPCR method showed statistically equivalent performance to the reference method (since the confidence interval for RLOD included 1), with high specificity (98.9%) and nearly zero between-laboratory variance, confirming its suitability as an effective alternative analytical tool [2].
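The percentages in Table 1 follow directly from the reported counts. The minimal Python sketch below recomputes them. The function names are illustrative, and the Wilson score interval is included only as one common way to express uncertainty in a proportion; it is an assumption of this sketch, not the statistical method reported in the study [2].

```python
from math import sqrt

# Counts (positives, total) taken from Table 1 of the MLV study.
MIT1C_5_OOCYSTS = (99, 143)
REF_18S_5_OOCYSTS = (88, 143)
MIT1C_UNINOCULATED = (1, 91)  # positives among un-inoculated samples


def detection_rate(positives: int, total: int) -> float:
    """Fraction of samples reported positive."""
    return positives / total


def specificity(false_positives: int, negatives_tested: int) -> float:
    """Fraction of un-inoculated samples correctly reported negative."""
    return 1.0 - false_positives / negatives_tested


def wilson_interval(positives: int, total: int, z: float = 1.96):
    """Approximate 95% Wilson score interval for a proportion (sketch only)."""
    p = positives / total
    denom = 1.0 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half = z * sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half, centre + half


if __name__ == "__main__":
    print(f"Mit1C, 5 oocysts: {100 * detection_rate(*MIT1C_5_OOCYSTS):.2f}%")
    print(f"18S,   5 oocysts: {100 * detection_rate(*REF_18S_5_OOCYSTS):.2f}%")
    print(f"Mit1C specificity: {100 * specificity(*MIT1C_UNINOCULATED):.1f}%")
```

Running the script should reproduce the 69.23%, 61.54%, and 98.9% figures quoted above.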

Case Study: hERG Block Potency Assays

Beyond food safety applications, the principle of multi-laboratory validation extends to pharmaceutical development. A HESI-coordinated study evaluating hERG channel block potency—a critical cardiac safety assessment—across five laboratories revealed important insights about inter-laboratory variability [3].

Table 2: Inter-laboratory Variability in hERG Assay Performance

| Validation Metric | Findings | Implications for Method Validation |
|---|---|---|
| Systematic Differences | One laboratory showed systematic potency differences for first 21 drugs | Highlights need for standardized protocols and cross-lab calibration |
| Within-laboratory Variability | Most retests within 1.6X of initial testing | Establishes baseline for expected variability in validated methods |
| Data Distribution | Natural variability estimated at ~5X | Suggests potency values within 5X should not be considered different |
| Impact of Best Practices | Standardized protocols reduced inter-lab differences | Supports FDA emphasis on standardized approaches in MDVIP |

This study demonstrated that even when following best practices and standardized protocols, systematic differences between laboratories can emerge, emphasizing the importance of MLV studies in understanding methodological limitations and establishing appropriate acceptance criteria [3].

Experimental Protocols for Method Validation Studies

Protocol Design for Multi-Laboratory Validation

Well-designed experimental protocols are essential for generating meaningful validation data. The MLV study for Cyclospora detection employed a rigorous approach where each participating laboratory analyzed twenty-four blind-coded Romaine lettuce DNA test samples. The sample set included unseeded samples, samples seeded with five oocysts, and samples seeded with 200 oocysts, distributed across two testing rounds. This design allowed researchers to assess method sensitivity, specificity, and reproducibility across different contamination levels and laboratory environments [2].
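A blind-coded panel of this kind can be sketched in a few lines of Python. The per-laboratory split used here (7 unseeded, 11 seeded with five oocysts, 6 seeded with 200 oocysts) is inferred by dividing the totals in Table 1 by the 13 laboratories and is an assumption, as are the code format, the laboratory identifier, and the seeding scheme. The split across two testing rounds is not modeled.

```python
import random

# Assumed per-laboratory panel composition (inferred, not stated in the source).
PANEL = {"unseeded": 7, "5 oocysts": 11, "200 oocysts": 6}


def make_blind_panel(lab_id: str, panel: dict = PANEL, seed: int = 0) -> dict:
    """Return a mapping of blind sample code -> true seeding level.

    The mapping is the coordinator's decoding key; participants would
    only ever see the codes, never the levels.
    """
    # Seed per laboratory so each lab gets its own reproducible ordering.
    rng = random.Random(f"{seed}-{lab_id}")
    samples = [level for level, count in panel.items() for _ in range(count)]
    rng.shuffle(samples)
    return {f"{lab_id}-{i:02d}": level for i, level in enumerate(samples, start=1)}


if __name__ == "__main__":
    key = make_blind_panel("LAB01")  # hypothetical laboratory identifier
    for code in key:
        print(code)  # only the codes are shared with the laboratory
```

Keeping the decoding key with the coordinating laboratory is what allows sensitivity and specificity to be scored without analyst bias.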

For the hERG assay study, laboratories followed a standardized protocol using the same voltage waveform and solutions to record hERG current at near-physiological temperature. However, certain elements—including cell lines, drug sources, stock preparation procedures, and specific test concentrations—were not standardized, reflecting real-world variations that exist across laboratories. This approach provided valuable insights into how such variables might affect method performance in practice [3].

Statistical Analysis and Acceptance Criteria

Both studies employed robust statistical approaches to evaluate method performance. The Cyclospora detection study calculated relative levels of detection with confidence intervals to determine statistical equivalence between methods. The hERG study used descriptive statistics and meta-analysis to estimate natural data distributions and establish appropriate variability thresholds for considering results statistically different [2] [3].

Establishing predefined acceptance criteria is essential for objective method validation. The finding that hERG block potency values within 5X of each other should not be considered different (as they fall within natural data distribution) provides a scientifically-grounded benchmark for regulatory decision-making [3].
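As a minimal illustration of how such a benchmark could be applied, the Python sketch below compares two potency values by their fold difference. The function names and example values are hypothetical; only the 5X threshold comes from the study [3].

```python
def fold_difference(value_a: float, value_b: float) -> float:
    """Ratio of the larger to the smaller potency value (always >= 1)."""
    if value_a <= 0 or value_b <= 0:
        raise ValueError("potency values must be positive")
    return max(value_a, value_b) / min(value_a, value_b)


def within_natural_variability(value_a: float, value_b: float,
                               fold_threshold: float = 5.0) -> bool:
    """True when two values differ by no more than the fold threshold."""
    return fold_difference(value_a, value_b) <= fold_threshold


if __name__ == "__main__":
    # Hypothetical IC50 values (same units) from two laboratories.
    print(within_natural_variability(1.0, 4.0))  # 4-fold apart
    print(within_natural_variability(1.0, 6.0))  # 6-fold apart
```

Working with ratios rather than absolute differences reflects the fact that potency values are usually compared on a multiplicative scale.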

The Research Toolkit: Essential Materials and Reagents

Table 3: Key Research Reagent Solutions for Method Validation Studies

| Reagent/Material | Function in Validation Studies | Application Examples |
|---|---|---|
| Blind-coded test samples | Eliminates testing bias; assesses method accuracy and precision | Cyclospora detection study used 24 blind-coded samples per lab [2] |
| Standardized DNA extracts | Ensures consistency in molecular target availability across laboratories | Romaine lettuce DNA extracts spiked with known oocyst concentrations [2] |
| Reference standards and controls | Provides benchmarks for method comparison and quality control | hERG study used reference drugs with known block potency [3] |
| Cell lines with stable expression | Ensures consistent biological response across test systems | HEK 293 cells expressing hERG1a subunit used in 4 of 5 labs [3] |
| Standardized buffer solutions | Maintains consistent experimental conditions across laboratories | All hERG labs used identical internal/external solutions [3] |
| System suitability standards | Verifies instrument performance meets predefined criteria | PRTC synthetic peptide mixture for LC-MS system checks [4] |

Regulatory Implications and Future Directions

The MDVIP framework continues to evolve with advancements in analytical technologies. Regulatory agencies are increasingly recognizing that advanced analytical methods can sometimes provide more sensitive detection of differences between products than traditional clinical endpoints. For instance, recent FDA draft guidance on biosimilars proposes that comparative efficacy studies "may not be necessary" for certain therapeutic protein products when advanced analytical technologies can structurally characterize products with high specificity and sensitivity [5].

This evolving regulatory landscape emphasizes the growing importance of robust method validation frameworks like the MDVIP. As analytical technologies continue to advance—including increased automation and artificial intelligence in laboratories—the principles of single-lab and multi-laboratory validation will remain foundational for establishing method reliability and ensuring regulatory acceptance [6].

In the field of food science and analytical chemistry, the reliability of analytical methods is paramount for ensuring food safety, quality, and regulatory compliance. Method validation demonstrates that an analytical procedure is suitable for its intended purpose and generates reliable results. Within this framework, validation activities occur across distinct tiers, each with varying levels of rigor, scope, and applicability. These tiers can be conceptualized as single-laboratory validation, multi-laboratory validation, and scenarios resembling emergency validation, each addressing different needs within the research and regulatory landscape.

Single-laboratory validation, often referred to as in-house validation, is the foundational tier in which a laboratory establishes the performance characteristics of a method within its own environment. This process involves rigorous testing of parameters such as accuracy, precision, specificity, and sensitivity to ensure the method produces trustworthy results for its intended application. In contrast, multi-laboratory validation, also known as a collaborative study, is a more comprehensive tier in which multiple independent laboratories evaluate the same method using standardized protocols to establish its reproducibility across different environments, operators, and equipment. This tier provides a higher level of confidence in the method's robustness and transferability. In specific circumstances, such as responding to emerging food safety threats or analyzing unique sample matrices, modified validation approaches may be necessary, creating a tier that functions similarly to an emergency validation level, though this terminology is not formally standardized in the literature.

Comparative Analysis of Validation Tiers

Performance and Scope Comparison

The choice between single-lab and multi-laboratory validation strategies involves clear trade-offs between practicality, resource allocation, and the required level of evidence for method acceptability. The table below summarizes the core characteristics of each tier, illustrating their distinct roles within method validation.

Table 1: Comparative Analysis of Single-Lab and Multi-Laboratory Validation Tiers

| Comparison Factor | Single-Laboratory Validation | Multi-Laboratory Validation |
|---|---|---|
| Primary Objective | To confirm a method performs as expected under specific laboratory conditions [7]. | To establish the method's reproducibility and ruggedness across different environments [8] [9]. |
| Typical Scope | Limited testing of critical parameters like accuracy, precision, and detection limits [7]. | Comprehensive assessment of reproducibility (often reported as relative reproducibility standard deviation) and trueness (bias) across a defined dynamic range [8]. |
| Resource Requirements | Lower cost and time requirements; can be completed in days to weeks [7]. | High resource intensity; requires significant coordination, time, and financial investment [10]. |
| Regulatory Standing | Often sufficient for in-house use and when adopting standard methods; required for ISO/IEC 17025 accreditation [7]. | Frequently required for official standard methods and regulatory acceptance; provides higher confidence for method standardization [8] [10]. |
| Key Performance Metrics | Accuracy, precision, limit of detection, linearity, robustness [7] [10]. | Reproducibility precision, trueness (bias), collaborative study success rates [8]. |
| Example Performance Data | (Varies by method and laboratory) | Relative reproducibility standard deviation of 2.1% to 16.5% for a digital PCR method; bias well below 25% across the dynamic range [8]. |

Experimental Data from Validation Studies

Quantitative data from published studies highlights the performance achievable through rigorous multi-laboratory validation. A study on a droplet digital PCR (ddPCR) method for analyzing genetically modified organisms (GMO) in food and feed demonstrated the high reproducibility attainable through collaborative studies. The method showed relative repeatability standard deviations from 1.8% to 15.7%, while the relative reproducibility standard deviation between different labs was found to be between 2.1% and 16.5% over the dynamic range studied. Furthermore, the relative bias of the ddPCR methods was well below 25% across the entire dynamic range, satisfying the acceptance criteria set by EU and international guidelines like the Codex Committee on Methods of Analysis and Sampling (CCMAS) [8].
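The bias criterion mentioned above can be expressed as a simple check. The Python sketch below is illustrative only: the function names and the example measurements are hypothetical, and the 25% limit is taken from the acceptance criterion cited in the text [8].

```python
from statistics import mean


def relative_bias_percent(measured: list, reference: float) -> float:
    """Relative bias of the mean measured value against a reference value."""
    if reference == 0:
        raise ValueError("reference value must be non-zero")
    return 100.0 * (mean(measured) - reference) / reference


def meets_bias_criterion(measured: list, reference: float,
                         limit_percent: float = 25.0) -> bool:
    """True when the absolute relative bias is within the stated limit."""
    return abs(relative_bias_percent(measured, reference)) <= limit_percent


if __name__ == "__main__":
    # Hypothetical GMO content results (mass %) for a 1.0% reference material.
    results = [0.95, 1.05, 1.10, 0.98]
    print(f"bias = {relative_bias_percent(results, 1.0):+.1f}%")
    print("acceptable:", meets_bias_criterion(results, 1.0))
```

In a real study the check would be repeated at each concentration level across the dynamic range rather than at a single point.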

Another multi-laboratory assessment in proteomics using SWATH-mass spectrometry demonstrated that this method could consistently detect and reproducibly quantify over 4000 proteins from cell line samples across 11 participating laboratories worldwide. The study concluded that the sensitivity, dynamic range, and reproducibility established with the method were uniformly achieved across all sites, proving that acquiring reproducible quantitative data by multiple labs is achievable with this technology [9]. These examples underscore the level of confidence that multi-laboratory validation provides.

Experimental Protocols for Key Validation Tiers

Single-Laboratory (In-House) Validation Protocol

Single-laboratory validation is a systematic process that evaluates critical method performance parameters to ensure fitness for purpose. The protocol involves a sequence of experiments designed to characterize the method's behavior under the laboratory's specific conditions.

Table 2: Core Experimental Protocol for Single-Laboratory Validation

| Validation Parameter | Experimental Methodology | Data Analysis & Output |
|---|---|---|
| Accuracy/Trueness | Analysis of certified reference materials (CRMs) or spiked samples with known analyte concentrations [10]. | Calculation of recovery percentage (%) or bias between measured and known values [10]. |
| Precision | Repeated analysis (n≥6) of homogeneous samples at multiple concentration levels within the same day (repeatability) and over different days (intermediate precision) [10]. | Calculation of relative standard deviation (RSD, %) for repeatability and intermediate precision [10]. |
| Linearity & Range | Analysis of a series of standard solutions or spiked samples across the claimed analytical range (e.g., 5-7 concentration levels) [10]. | Linear regression analysis; calculation of the coefficient of determination (R²) and inspection of residual plots [10]. |
| Limit of Detection (LOD) / Limit of Quantification (LOQ) | Analysis of low-level analyte samples and blank matrices [7]. | LOD: 3.3 × (Standard Deviation of blank / Slope of calibration curve). LOQ: 10 × (Standard Deviation of blank / Slope of calibration curve) [7]. |
| Robustness | Deliberate, small variations of key method parameters (e.g., temperature, pH, flow rate) to assess method resilience [10]. | Observation of the impact on results; establishes acceptable operating ranges for each parameter [10]. |
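The linearity and LOD/LOQ rows of the table can be illustrated with a short Python sketch. It fits an ordinary least-squares calibration line and applies the 3.3 and 10 multipliers given above. The function names and the example data are hypothetical, and a real validation would also inspect residuals rather than rely on R² alone.

```python
from statistics import mean, stdev


def fit_line(x: list, y: list):
    """Ordinary least-squares fit; returns (slope, intercept, r_squared)."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


def lod_loq(blank_responses: list, slope: float):
    """LOD = 3.3 * SD(blank) / slope and LOQ = 10 * SD(blank) / slope."""
    sd_blank = stdev(blank_responses)
    return 3.3 * sd_blank / slope, 10.0 * sd_blank / slope


if __name__ == "__main__":
    # Hypothetical calibration data: concentration vs. instrument response.
    conc = [1.0, 2.0, 5.0, 10.0, 20.0]
    resp = [2.1, 3.9, 10.2, 19.8, 40.1]
    slope, intercept, r2 = fit_line(conc, resp)
    lod, loq = lod_loq([0.10, 0.14, 0.08, 0.12, 0.11, 0.09], slope)
    print(f"slope={slope:.3f} intercept={intercept:.3f} R2={r2:.4f}")
    print(f"LOD={lod:.3f} LOQ={loq:.3f} (concentration units)")
```

Because LOD and LOQ are expressed through the calibration slope, they come out in concentration units rather than in raw instrument response.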

The following workflow diagram illustrates the sequential process of a single-laboratory validation, from planning to final report.

Multi-Laboratory (Collaborative) Validation Protocol

Multi-laboratory validation is a complex, highly structured process designed to quantify a method's inter-laboratory reproducibility. The protocol is typically organized by a coordinating laboratory and follows international standards.

- Study Organization and Material Preparation: A coordinating laboratory designs the study, recruits participating laboratories (typically 8-15), and prepares homogeneous, stable test samples with known or assigned values. These samples should cover the method's specified concentration range and represent relevant sample matrices [8] [10].

- Distribution and Standardization: The sample sets, along with a detailed, standardized experimental protocol, are distributed to all participants. The protocol must be unambiguous, specifying every detail from sample preparation to data reporting format to minimize variability from procedural differences [8].

- Blinded Analysis: Participating laboratories analyze the samples in a randomized order under typical laboratory conditions (within the protocol's constraints), often including replicates. Spreading the analyses over different days captures realistic day-to-day variation within each laboratory in addition to the variation between laboratories [9].

- Data Collection and Statistical Analysis: The coordinating laboratory collects all raw data and results. Statistical analysis is performed according to established international standards (e.g., ISO 5725), typically involving the calculation of:

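  - Repeatability Standard Deviation (sr): Variability between replicate results obtained within a single laboratory under the same conditions.

  - Reproducibility Standard Deviation (sR): Variability between results obtained in different laboratories; it combines the between-laboratory and within-laboratory components.

  - Trueness (Bias): Difference between the overall mean and the accepted reference value [8].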

- Reporting and Evaluation: The final report includes all statistical outcomes, an assessment of the method's performance against pre-defined fitness-for-purpose criteria, and a statement on the method's suitability for standardization [10].
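The statistical step of the protocol above can be sketched as a one-way analysis of variance on a balanced design (the same number of replicates in every laboratory), which is the basic approach of ISO 5725-2. The Python code below is a simplified illustration under that assumption. The function name and example data are hypothetical, and the outlier screening that the standard prescribes (for example Cochran's and Grubbs' tests) is deliberately omitted.

```python
from math import sqrt
from statistics import mean, variance


def precision_estimates(results: dict, reference: float = None) -> dict:
    """Estimate s_r, s_R and (optionally) bias from {lab: [replicates]}."""
    labs = list(results.values())
    p, n = len(labs), len(labs[0])
    if p < 2 or n < 2 or any(len(lab) != n for lab in labs):
        raise ValueError("need >= 2 labs with the same number (>= 2) of replicates")

    # Repeatability variance: pooled within-laboratory variance.
    s_r2 = mean([variance(lab) for lab in labs])

    # Between-laboratory variance component, floored at zero.
    lab_means = [mean(lab) for lab in labs]
    ms_between = n * variance(lab_means)
    s_L2 = max(0.0, (ms_between - s_r2) / n)

    # Reproducibility variance combines both components.
    s_R2 = s_r2 + s_L2
    grand_mean = mean(lab_means)
    out = {"mean": grand_mean, "s_r": sqrt(s_r2), "s_R": sqrt(s_R2),
           "RSD_r_%": 100 * sqrt(s_r2) / grand_mean,
           "RSD_R_%": 100 * sqrt(s_R2) / grand_mean}
    if reference is not None:
        out["bias"] = grand_mean - reference
    return out


if __name__ == "__main__":
    # Hypothetical duplicate results from three laboratories.
    data = {"A": [10.0, 10.2], "B": [10.4, 10.6], "C": [9.8, 10.0]}
    for name, value in precision_estimates(data, reference=10.0).items():
        print(f"{name}: {value:.3f}")
```

By construction s_R can never be smaller than s_r, which matches the interpretation of reproducibility as repeatability plus a between-laboratory component.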

The logical relationship and workflow of a multi-laboratory study are summarized in the diagram below.

The Scientist's Toolkit: Essential Research Reagent Solutions

The execution of robust validation studies, particularly in food analysis, relies on a set of essential reagents and materials. The following table details key components of the research toolkit for methods like digital PCR in GMO analysis.

Table 3: Key Research Reagent Solutions for Food Method Validation

| Reagent/Material | Function in Validation | Application Example |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide a known, traceable analyte concentration to establish method accuracy (trueness) and evaluate bias [10]. | Quantification of GMO content in food samples; calibration of analytical instruments [8]. |
| DNA Extraction Kits | Isolate and purify target nucleic acids from complex food matrices. The efficiency and purity of extraction directly impact method accuracy and precision [8]. | Preparation of template DNA from processed food products for ddPCR or qPCR analysis of GMOs [8]. |
| Stable Isotope-Labeled Standards | Act as internal standards to correct for analyte loss during sample preparation and matrix effects, improving quantification accuracy [9]. | Used in proteomics (e.g., SWATH-MS) and increasingly in LC-MS/MS for small molecule analysis in food [9]. |
| Synthetic Oligonucleotides | Serve as positive controls, calibration standards, and for constructing dilution series to determine limits of detection and quantification [8]. | In ddPCR validation, used to create a defined dynamic range from 0.012 to 10,000 fmol on column [8]. |
| Proficiency Test Materials | Allow a laboratory to assess its performance by comparing its results with assigned values or results from other laboratories. | Used for ongoing verification of laboratory competency after a method has been validated [10]. |

The structured tiers of method validation—single-lab and multi-laboratory—serve complementary yet distinct roles in advancing reliable food methods research. Single-laboratory validation provides a time-efficient and cost-effective path for establishing method fitness in a specific setting, making it ideal for method development, transfer, and routine laboratory accreditation [7]. In contrast, multi-laboratory validation delivers a comprehensive assessment of reproducibility, generating robust statistical data on inter-laboratory performance that is essential for formal method standardization and widespread regulatory acceptance [8] [10].

The choice between these tiers is not a matter of superiority but of strategic alignment with the method's intended application. For novel methods or those intended for use in a single facility, a full single-laboratory validation is both necessary and sufficient. However, for methods destined to become official standards or for use in widespread market control, the resource investment of a multi-laboratory collaborative study is indispensable. As demonstrated by validation studies in digital PCR and proteomics, this top tier of validation provides the highest level of confidence that a method will perform reliably, wherever it is applied, ensuring the integrity and reproducibility of data critical to food safety and public health.

In the rigorously controlled realms of food safety and analytical science, the validity of a test method is paramount. Regulatory standards established by bodies like AOAC INTERNATIONAL and the U.S. Food and Drug Administration (FDA) provide the critical framework for ensuring that analytical methods are reliable, reproducible, and fit for purpose. A central and evolving debate in this field concerns the level of validation necessary to prove this reliability, pitting the expediency of single-laboratory validation against the comprehensive generalizability of multi-laboratory validation. This guide objectively compares these two validation pathways within the context of global compliance requirements, providing researchers and scientists with the data and protocols needed to navigate this complex landscape.

Understanding the Regulatory Landscape

The ecosystem of food testing method validation is supported by prominent organizations that set and enforce standards accepted by regulatory bodies worldwide.

AOAC INTERNATIONAL: An independent, non-profit scientific association that develops validated test methods through its Official Methods of Analysis (OMA) and Performance Tested Methods (PTM) programs [11]. AOAC methods are often adopted by regulatory agencies. A key function is the administration of Proficiency Testing (PT) Programs, which allow laboratories to prove their competency by analyzing provided samples for parameters like pathogens, nutrients, or pesticides and submitting results for evaluation [12].

U.S. Food and Drug Administration (FDA): A federal agency that protects public health by ensuring the safety of the food supply. The FDA's Bacteriological Analytical Manual (BAM) is a key resource, presenting the agency's preferred laboratory procedures for the microbiological analysis of foods and cosmetics [13]. The FDA also provides guidelines for the validation of analytical methods for the detection of microbial pathogens [13].

Collaboration between these entities strengthens the overall system. For instance, AOAC and the USDA Food Safety and Inspection Service (FSIS) have a Memorandum of Understanding to collaborate on method validation, ensuring regulatory testing is "backed by science, vigilance, and trusted methods" [11].

Single-Lab vs. Multi-Laboratory Validation: A Conceptual Framework

The choice between single-lab and multi-laboratory validation strategies has profound implications for the perceived rigor and applicability of a method's results.

Table 1: Core Concepts of Validation Approaches

| Feature | Single-Laboratory Validation | Multi-Laboratory Validation |
|---|---|---|
| Definition | Method validation is conducted within a single laboratory, using its equipment, personnel, and protocols. | Method validation is conducted concurrently across multiple, independent laboratories. |
| Primary Goal | To demonstrate that a method is fit for a specific purpose under controlled, internal conditions. | To demonstrate the method's ruggedness and reproducibility across different environments, operators, and equipment. |
| Key Advantage | Efficiency in terms of cost, time, and resource allocation; ideal for initial method development. | Generalizability; provides robust evidence that the method will perform reliably in other laboratories, a key requirement for regulatory acceptance. |
| Limitation | Results may be less transferable; the method's performance may be dependent on lab-specific conditions. | More resource-intensive, complex to organize, and time-consuming. |

A systematic assessment of preclinical studies highlights these differences, noting that multilaboratory studies "adhered to practices that reduce the risk of bias significantly more often than single lab studies" [14]. Furthermore, this rigor impacts outcomes, with multi-laboratory studies demonstrating significantly smaller effect sizes than single-lab studies, a trend well-recognized in clinical research where multicenter trials provide more conservative and reliable effect estimates [14].

Experimental Data and Performance Comparison

Quantitative data from validation studies provides clear evidence of the performance characteristics of each approach.

The following diagram illustrates the typical workflow for a multi-laboratory validation study, highlighting the phases that ensure robustness and reproducibility.

Diagram 1: Multi-Laboratory Validation Workflow

Case Study: Validation of a Digital PCR Method

A collaborative study validated a droplet digital PCR (dPCR) method for quantifying genetically modified organisms (GMOs) in food and feed [8]. The study assessed key performance parameters, including trueness (bias) and precision (repeatability and reproducibility), across its dynamic range.

Table 2: Performance Data from dPCR Multi-Laboratory Validation [8]

| Target & Format | Concentration Level | Relative Bias (%) | Relative Repeatability Standard Deviation (RSDr, %) | Relative Reproducibility Standard Deviation (RSDR, %) |
|---|---|---|---|---|
| MON810 (Duplex) | Across Dynamic Range | Well below 25% | 1.8% to 15.7% | 2.1% to 16.5% |

The data demonstrated that the dPCR method met the strict acceptance criteria set by EU and international guidance, such as the Codex Alimentarius [8]. The study also investigated factors influencing variability, finding that the DNA extraction step added only a limited contribution, while lower target ingredient content decreased precision, though it remained within acceptable limits [8].

Detailed Experimental Protocols

For scientists designing validation studies, understanding the core protocols is essential.

Protocol for a Multi-Laboratory Validation Study

The following steps outline a standardized protocol based on international standards [8] [14]:

- Protocol Development: A detailed, standardized experimental protocol is developed, specifying every aspect of the analysis, including sample preparation, DNA extraction methods (if applicable), equipment settings, and data analysis procedures.

- Participant Selection: Multiple independent laboratories (a median of four, according to one review [14]) are selected to participate.

- Sample Distribution: Identical sets of blinded samples, often including controls and samples at various concentration levels across the dynamic range, are distributed to all participating laboratories.

- Concurrent Analysis: Each laboratory performs the analysis according to the shared protocol within a defined timeframe.

- Data Submission: Laboratories submit their raw and processed data to a central coordinating body for statistical analysis.

- Statistical Evaluation: The centralized team calculates performance metrics like repeatability standard deviation (within-lab precision) and reproducibility standard deviation (between-lab precision), and assesses trueness (bias).

Protocol for a Single-Laboratory Validation

A robust single-laboratory validation should assess the same core performance characteristics as a collaborative study, using the following approach:

- Specificity: Demonstrate the method's ability to measure the analyte accurately in the presence of other components.

- Linearity and Dynamic Range: Establish the method's response across a range of analyte concentrations.

- Accuracy (Trueness): Assess via spike-recovery experiments, where a known amount of the analyte is added to the sample matrix and the percentage recovery is calculated.

- Precision: Determine repeatability by analyzing multiple replicates of the same sample within the same run, on the same day, with the same analyst and equipment.

- Limit of Detection (LOD) and Quantification (LOQ): Determine the lowest amount of analyte that can be detected and reliably quantified.
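The accuracy and precision steps above reduce to two short calculations, sketched here in Python. The function names and example values are hypothetical, and acceptable recovery and RSD ranges depend on the analyte, matrix, and concentration, so none are hard-coded.

```python
from statistics import mean, stdev


def recovery_percent(spiked_result: float, unspiked_result: float,
                     amount_added: float) -> float:
    """Spike recovery: share of the added analyte that the method finds."""
    if amount_added <= 0:
        raise ValueError("amount_added must be positive")
    return 100.0 * (spiked_result - unspiked_result) / amount_added


def rsd_percent(replicates: list) -> float:
    """Relative standard deviation of replicate results (repeatability)."""
    if len(replicates) < 2:
        raise ValueError("at least two replicates are required")
    return 100.0 * stdev(replicates) / mean(replicates)


if __name__ == "__main__":
    # Hypothetical example: 10.0 units spiked into a matrix already at 5.0.
    print(f"recovery = {recovery_percent(14.5, 5.0, 10.0):.1f}%")
    # Hypothetical replicates from one analyst, one run, one instrument.
    print(f"RSD = {rsd_percent([9.8, 10.1, 10.0, 9.9, 10.2, 10.0]):.2f}%")
```

Subtracting the unspiked result matters whenever the blank matrix already contains the analyte; otherwise recovery would be overstated.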

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents essential for conducting method validation studies, particularly in food and microbiological analysis.

Table 3: Essential Reagents and Materials for Validation Studies

| Item | Function in Validation | Example Use Cases |
|---|---|---|
| Proficiency Testing (PT) Samples | Commercially available samples with known or assigned values used to objectively assess a laboratory's analytical performance and comparability to other labs [12]. | AOAC's PT programs provide samples for pathogens, nutrients, pesticides, and more. Labs analyze them and report results for external evaluation [12]. |
| Certified Reference Materials (CRMs) | Matrix-based materials with certified values for specific analytes, used to establish method accuracy and for calibration. | Used in spike-recovery experiments to determine trueness in both single and multi-laboratory studies. |
| Selective Culture Media & Agar Plates | Used to isolate, identify, and enumerate specific microorganisms in food samples. | Essential for cultural methods described in the FDA's BAM for pathogens like Salmonella, Listeria, and E. coli [13]. |
| PCR Reagents & Kits | Kits containing enzymes, primers, and probes for the detection and quantification of specific DNA targets. | Used in modern methods like the dPCR validation for GMOs [8] and real-time PCR for Cyclospora in the BAM [13]. |
| DNA Extraction Kits | Standardized kits for isolating high-quality DNA from complex food matrices, critical for molecular methods. | The performance of these kits can be a variable tested in validation studies, as noted in the dPCR study [8]. |

Pathways to Regulatory Compliance and Adoption

The journey of a method from development to regulatory acceptance follows a logical pathway, influenced heavily by the type of validation it undergoes.

Diagram 2: Pathway from Method Development to Regulatory Acceptance

As shown in Diagram 2, multi-laboratory validation is the critical step for methods seeking broad regulatory acceptance. Once methods are validated through programs such as AOAC's Official Methods of Analysis (OMA) or Performance Tested Methods (PTM), they are recognized as "fit for purpose" and can be adopted by agencies like the USDA FSIS for regulatory testing [11]. The FDA's BAM also directs users to AOAC Official Methods for analyzing organisms or toxins not covered in its manual [13].

The choice between single-lab and multi-laboratory validation is not a matter of which is inherently superior, but which is fit for purpose. Single-lab validation offers a vital and efficient first step for method development and internal verification. However, the empirical evidence is clear: multi-laboratory validation produces more generalizable, robust, and conservative estimates of a method's performance, making it the undisputed gold standard for methods seeking global regulatory compliance. For researchers and drug development professionals, designing a validation strategy that culminates in a successful multi-laboratory study is the most reliable pathway to demonstrating scientific rigor and ensuring public health protection.

Method validation serves as the cornerstone of reliable food safety testing, public health protection, and sound regulatory decisions. It provides the critical evidence that analytical methods perform as intended for their specific purpose, ensuring that measurements of contaminants, pathogens, nutrients, and genetically modified organisms (GMOs) can be trusted for decision-making. In food safety and public health contexts, the choice between single-laboratory and multi-laboratory validation approaches carries significant implications for method reliability, reproducibility, and ultimate regulatory acceptance. Single-laboratory validation (SLV) represents the essential first step where a method is developed and characterized within one laboratory, while multi-laboratory validation (MLV) rigorously tests the method across multiple independent facilities to establish its generalizability and robustness under different conditions, operators, and equipment [8] [1].

The validation paradigm in food science directly impacts public health outcomes. Regulatory agencies such as the FDA Foods Program explicitly emphasize that "properly validated methods" are fundamental to their regulatory mission, with a preference for methods that have undergone multi-laboratory validation where feasible [1]. This preference stems from the demonstrated capacity of MLV to identify limitations and biases that may not be apparent in single-laboratory studies, thereby providing greater confidence in methods used to detect foodborne pathogens, allergens, chemical contaminants, and other public health risks. The rigorous evaluation of trueness, precision, selectivity, and robustness through systematic validation protocols ensures that food safety testing produces consistent, reliable results across the diverse laboratory landscape responsible for protecting the food supply [8].

Single vs. Multi-Laboratory Validation: A Systematic Comparison

Performance Metrics and Experimental Evidence

Table 1: Quantitative Comparison of Single vs. Multi-Laboratory Validation Performance

| Performance Characteristic | Single-Laboratory Validation | Multi-Laboratory Validation | Regulatory Acceptance Criteria |

|---|---|---|---|

| Trueness (Relative Bias) | Variable; lab-dependent | Consistently <25% for dPCR GMO methods [8] | Below 25% threshold [8] |

| Repeatability Precision | Optimized under ideal conditions | RSD: 1.8%-15.7% (dPCR for MON810) [8] | Meets international guidelines [8] |

| Reproducibility Precision | Not assessed | RSD: 2.1%-16.5% (dPCR for MON810) [8] | Meets EU/Codex requirements [8] |

| Risk of Bias | Higher risk of methodological shortcomings [14] | Significantly lower risk of bias [14] | Reduced bias per SYRCLE tool [14] |

| Effect Size Estimation | Often overestimates effects [14] | More accurate, realistic effect sizes [14] | Closer to true biological effect |

| Generalizability | Limited to specific conditions | Tested across diverse environments [14] | Required for broad implementation |

Table 2: Practical Considerations for Validation Approaches

| Consideration | Single-Laboratory Validation | Multi-Laboratory Validation |

|---|---|---|

| Time Requirements | Shorter implementation timeline | Extended timeline for coordination |

| Financial Cost | Lower direct costs | Higher costs but reduced long-term waste [14] |

| Infrastructure Needs | Minimal coordination requirements | Significant coordination infrastructure |

| Sample Size | Median: 19 animals (preclinical) [14] | Median: 111 animals (preclinical) [14] |

| Regulatory Standing | Preliminary assessment | Preferred for definitive regulatory decisions [1] |

| Error Detection | Limited to internal error sources | Identifies inter-laboratory variation [8] |

Empirical evidence points to systematic differences between single- and multi-laboratory study outcomes. A systematic assessment of preclinical multilaboratory studies found that they report significantly smaller effect sizes than single-laboratory studies (difference in standardized mean differences: 0.72 [95% CI: 0.43-1.0]), reflecting more realistic treatment effect estimates [14]. Although this evidence comes from preclinical research rather than analytical method validation, the trend mirrors well-established patterns in clinical research, where multicenter trials typically produce more conservative and generalizable effect estimates than single-center studies.

The methodological rigor in MLV also appears superior. The same systematic review found that multilaboratory studies "adhered to practices that reduce the risk of bias significantly more often than single lab studies," including more robust blinding procedures, allocation concealment, and statistical planning [14]. This enhanced rigor directly addresses recognized reproducibility challenges in laboratory science and provides regulatory agencies with higher confidence in the resulting data.

Experimental Protocols for Validation Studies

Single-Laboratory Validation Protocol typically follows a structured approach assessing fundamental performance parameters:

- Precision Evaluation: Repeated analysis of homogeneous samples to determine method repeatability under stable conditions [8]

- Trueness Assessment: Analysis of certified reference materials or comparison with reference methods to establish accuracy [15]

- Selectivity/Specificity: Demonstration that the method accurately measures the analyte in the presence of potential interferents

- Linearity and Dynamic Range: Establishment of the concentration interval over which the method provides proportional results [8]

- Limit of Detection/Quantification: Determination of the lowest analyte concentration that can be reliably detected and quantified [15]

- Robustness Testing: Deliberate variations of method parameters to evaluate method resilience to minor changes [15]
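
To make the linearity and detection-limit items more concrete, the sketch below fits a straight-line calibration by ordinary least squares and derives LOD and LOQ from the residual standard deviation and the slope. The 3.3 and 10 multipliers are one widely used convention and are an assumption here, since the cited sources do not state which estimator they used; the calibration data are hypothetical.

```python
from math import sqrt


def fit_calibration(conc, response):
    """Least-squares line; returns slope, intercept, R^2, residual SD."""
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(conc, response))
    ss_tot = sum((y - mean_y) ** 2 for y in response)
    r_squared = 1.0 - ss_res / ss_tot
    residual_sd = sqrt(ss_res / (n - 2))  # two fitted parameters
    return slope, intercept, r_squared, residual_sd


# Hypothetical five-point calibration (concentration vs. instrument response).
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
response = [10.2, 19.8, 50.5, 99.0, 201.0]

slope, intercept, r_squared, residual_sd = fit_calibration(conc, response)
lod = 3.3 * residual_sd / slope   # assumed convention, not from the source
loq = 10.0 * residual_sd / slope  # assumed convention, not from the source

print(f"R^2 = {r_squared:.4f}, LOD = {lod:.2f}, LOQ = {loq:.2f}")
```

Other estimators (for example, signal-to-noise ratios or replicate analysis of low-level spikes) are equally common, so the choice should follow the guideline governing the method.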

Multi-Laboratory Validation Protocol expands upon SLV through collaborative study:

- Protocol Harmonization: Development of standardized experimental protocols, measurement procedures, and data reporting formats across participating laboratories [8] [14]

- Sample Homogenization and Distribution: Preparation of identical, homogeneous test materials with centralized distribution to all participants [8]

- Blinded Analysis: Independent analysis of coded samples without knowledge of expected values to prevent conscious or unconscious bias [14]

- Data Collection and Statistical Analysis: Centralized collection of raw data with statistical analysis using internationally accepted methods (e.g., ISO 5725) [8]

- Outlier Assessment: Application of standardized statistical tests (e.g., Cochran's test, Grubbs' test) to identify between-laboratory outliers [8]

- Precision Calculation: Separate calculation of repeatability standard deviation (within-laboratory precision) and reproducibility standard deviation (between-laboratory precision) [8]
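
The outlier assessment step can be sketched as follows. The code computes Cochran's C statistic (largest within-laboratory variance divided by the sum of all variances) and a single-outlier Grubbs' statistic on laboratory means. It returns only the test statistics; the critical values against which they are compared come from published tables and are not reproduced here. Function names and data are hypothetical.

```python
from statistics import mean, stdev, variance


def cochran_c(results_by_lab):
    """Return (suspect lab, C), where C = max variance / sum of variances."""
    variances = {lab: variance(reps) for lab, reps in results_by_lab.items()}
    suspect = max(variances, key=variances.get)
    return suspect, variances[suspect] / sum(variances.values())


def grubbs_g(results_by_lab):
    """Return (suspect lab, G), where G = max |lab mean - grand mean| / s."""
    lab_means = {lab: mean(reps) for lab, reps in results_by_lab.items()}
    values = list(lab_means.values())
    grand_mean = mean(values)
    spread = stdev(values)
    suspect = max(lab_means, key=lambda lab: abs(lab_means[lab] - grand_mean))
    return suspect, abs(lab_means[suspect] - grand_mean) / spread


# Hypothetical duplicates; lab_C has visibly poorer within-lab agreement.
data = {
    "lab_A": [10.1, 9.9],
    "lab_B": [10.6, 10.4],
    "lab_C": [9.6, 10.6],
}
c_lab, c_value = cochran_c(data)
g_lab, g_value = grubbs_g(data)
print(f"Cochran: {c_lab}, C = {c_value:.3f}")
print(f"Grubbs:  {g_lab}, G = {g_value:.3f}")
```

Note that the two tests look at different things: Cochran's test flags a laboratory with unusually large within-laboratory scatter, while Grubbs' test flags a laboratory whose mean sits far from the others.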

Impact on Food Safety and Public Health

Enhanced Detection Capabilities

The rigorous evaluation of methods through multi-laboratory validation directly strengthens food safety systems by ensuring reliable detection of hazards. For instance, validated droplet digital PCR (dPCR) methods for detecting genetically modified organisms (GMOs) have met international precision requirements in collaborative trials, with relative repeatability standard deviations from 1.8% to 15.7% and relative reproducibility standard deviations from 2.1% to 16.5% over the dynamic range studied [8]. This level of confirmed performance gives regulatory agencies confidence in enforcement testing for GMO labeling requirements and environmental safety assessments.

Similarly, validation protocols for kinetic models in food science ensure accurate prediction of microbial growth, toxin formation, and nutrient degradation – critical factors in determining food shelf life and safety. Proper validation must assess both residual analysis (differences between observed and predicted values) and parameter uncertainty relative to data uncertainty, as the latter directly influences model predictions for food safety decisions [15]. Without appropriate validation, models may provide misleading conclusions that compromise public health protection.
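
The two checks named above, residual analysis and parameter uncertainty, can be illustrated with a deliberately simple model. The sketch below assumes a log-linear growth model (log10 count versus time) fitted by least squares; this model choice, the function name, and the data are illustrative assumptions and are not drawn from [15].

```python
from math import sqrt


def fit_with_uncertainty(times, log_counts):
    """Fit log10(N) = intercept + slope * t; return fit, residuals, SE(slope)."""
    n = len(times)
    mean_t = sum(times) / n
    mean_y = sum(log_counts) / n
    stt = sum((t - mean_t) ** 2 for t in times)
    slope = sum((t - mean_t) * (y - mean_y)
                for t, y in zip(times, log_counts)) / stt
    intercept = mean_y - slope * mean_t

    # Residuals: observed minus predicted values.
    residuals = [y - (intercept + slope * t)
                 for t, y in zip(times, log_counts)]
    residual_sd = sqrt(sum(r * r for r in residuals) / (n - 2))

    # Standard error of the slope: uncertainty of the rate parameter.
    se_slope = residual_sd / sqrt(stt)
    return slope, intercept, residuals, se_slope


# Hypothetical growth data: hours vs. log10 CFU/g.
hours = [0.0, 1.0, 2.0, 3.0, 4.0]
log_cfu = [3.0, 3.5, 3.9, 4.6, 5.0]

slope, intercept, residuals, se_slope = fit_with_uncertainty(hours, log_cfu)
print(f"rate = {slope:.2f} +/- {se_slope:.3f} log10 CFU/g per hour")
print("residuals:", [round(r, 2) for r in residuals])
```

A residual pattern that trends with time, or a parameter standard error that is large relative to the scatter in the data, would both be reasons to question the model before using it for safety decisions.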

Regulatory Compliance and Harmonization

Table 3: Regulatory Validation Requirements Across Jurisdictions

| Regulatory Body | Validation Approach | Key Performance Criteria | Reference Method |

|---|---|---|---|

| European Union (GMO Analysis) | SLV + MLV preferred | Trueness, precision, dynamic range, compliance with EU performance requirements [8] | Real-time PCR/dPCR methods |

| FDA Foods Program | MLV preferred where feasible | Properly validated methods supporting regulatory mission [1] | Chemistry, microbiology, DNA-based methods |

| Codex Alimentarius | International harmonization | Method performance parameters across collaborative studies [8] | Internationally recognized standards |

| Academic Research | Increasing MLV emphasis | Reduced risk of bias, generalizability of findings [14] | Systematic assessment guidelines |

Validation serves as the bridge between scientific innovation and regulatory implementation. The FDA Foods Program operates under a Methods Development, Validation, and Implementation Program (MDVIP) that commits its members "to collaborate on the development, validation, and implementation of analytical methods to support the Foods Program regulatory mission" [1]. This structured approach ensures that methods used for enforcement activities have demonstrated reliability through proper validation, with separate validation guidelines established for chemical, microbiological, and DNA-based methods.

Internationally, validation against recognized standards enables harmonization of food safety measures and trade facilitation. Methods that demonstrate compliance with EU and Codex Committee on Methods of Analysis and Sampling (CCMAS) performance requirements through multi-laboratory validation [8] gain broader international acceptance, reducing technical barriers to trade while maintaining high levels of consumer protection.

Visualization of Validation Pathways and Methodological Rigor

Diagram: Validation Decision Pathway

Diagram: Methodological Rigor Comparison

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Essential Research Reagents and Materials for Validation Studies

| Reagent/Material | Function in Validation | Application Examples | Critical Quality Parameters |

|---|---|---|---|

| Certified Reference Materials (CRMs) | Establish trueness and accuracy through analysis of materials with known analyte concentrations | GMO quantification, nutrient analysis, contaminant testing [8] | Certified uncertainty, stability, commutability with test samples |

| DNA Extraction Kits | Isolate high-quality DNA for molecular methods; minimal variation between laboratories affects final results | dPCR for GMO detection, pathogen identification [8] | Yield, purity, inhibition removal, consistency across batches |

| Digital PCR Reagents | Enable absolute quantification of nucleic acid targets without standard curves | GMO quantification in food and feed [8] | Polymerase fidelity, probe specificity, minimal batch-to-batch variation |

| Enzyme Immunoassay Kits | Detect and quantify proteins, allergens, or contaminants through antibody-based methods | Allergen testing, mycotoxin detection, pathogen identification | Antibody specificity, minimal cross-reactivity, consistent calibration |

| Microbiological Media | Support growth of target microorganisms for cultural methods | Pathogen detection, spoilage organism enumeration, probiotic quantification | Selectivity, productivity, stability, composition consistency |

| Kinetic Model Calibrants | Provide reference points for validating predictive models of microbial growth or chemical degradation | Shelf-life prediction, safety assessment, quality optimization [15] | Purity, stability, relevance to food matrix |

The evidence clearly demonstrates that validation approach selection has profound implications for food safety, public health protection, and regulatory decision-making. While single-laboratory validation provides essential preliminary method characterization, multi-laboratory validation offers superior assessment of method robustness, generalizability, and real-world performance. The demonstrated tendency of MLV to produce more realistic effect estimates and adhere more rigorously to bias-reduction practices makes it particularly valuable for high-stakes applications where erroneous results could compromise public health or economic interests.

The future of food safety testing will likely see increased emphasis on multi-laboratory validation approaches, particularly as global harmonization of analytical methods facilitates trade while protecting consumers. Emerging technologies, including digital PCR and sophisticated kinetic modeling, will require robust validation protocols to establish their reliability for regulatory applications. By embracing rigorous validation frameworks that include multi-laboratory assessment where appropriate, the scientific community can strengthen the foundation upon which food safety systems and public health protections are built.

Practical Applications: Implementing Validation in Chemical and Microbiological Testing

{ article }

Chemical Method Validation: LC-MS/MS for Veterinary Drugs, Mycotoxins, and PFAS

The accurate measurement of chemical contaminants in food, such as veterinary drugs (VDs), mycotoxins, and per- and polyfluoroalkyl substances (PFAS), is a cornerstone of food safety and public health protection. Liquid chromatography-tandem mass spectrometry (LC-MS/MS) has emerged as a powerful technique for the simultaneous analysis of these diverse compounds. However, the reliability of any analytical method hinges on the rigor of its validation. A pivotal, yet often overlooked, choice in validation practice is whether to rely on single-laboratory validation (SLV) or to proceed to multi-laboratory validation (MLV). This guide compares the performance of analytical methods for VDs, mycotoxins, and PFAS in light of that choice, synthesizing current experimental data to illustrate how validation design affects the robustness and generalizability of results for researchers and drug development professionals.

The choice between SLV and MLV represents a fundamental trade-off between practicality and generalizability. SLV involves the assessment of method performance parameters within a single facility. While more accessible and cost-effective, its findings may be influenced by laboratory-specific conditions, reagents, and equipment. In contrast, MLV, or collaborative study, formally evaluates the method across multiple independent laboratories. This design inherently tests the method's robustness against variations in operators, instruments, and environments, providing a more realistic estimate of its real-world performance [8] [14].

A systematic assessment of preclinical studies found that multilaboratory studies consistently report smaller effect sizes and adhere to bias-reducing practices significantly more often than single-lab studies [14]. This trend mirrors long-standing observations in clinical research, where multicenter trials are valued for their methodological rigor and more conservative effect estimates. For food safety methods, which form the basis for regulatory compliance and public health decisions, the enhanced credibility and transferability offered by MLV are of paramount importance.

Comparative Performance Data for Contaminant Classes

The following tables summarize key validation parameters from recent studies for multi-residue LC-MS/MS methods, illustrating the performance achievable for each class of contaminant.

Table 1: Validation Data for a Multi-Residue LC-MS/MS Method for Veterinary Drugs and Pesticides in Urine

| Parameter | Veterinary Drugs & Pesticides (72 analytes) [16] | Expanded Exposome Method (120+ analytes) [17] |

|---|---|---|

| Matrix | Bovine Urine | Human Urine |

| Linearity (R²) | 0.991 – 0.999 | Not Specified |

| LOD Range | 0.01 – 2.71 µg/L | Median: 0.10 ng/mL |

| LOQ Range | 0.05 – 7.52 µg/L | Median: 0.31 ng/mL |

| Accuracy (Recovery %) | 71.0 – 117.0% | 81 – 120% |

| Precision (CV %) | < 21.38% | < 20% |

Table 2: Validation Data for an LC-MS/MS Method for PFAS in Food

| Parameter | PFAS in Salmon (16 analytes) [18] |

|---|---|

| Matrix | Salmon |

| Method Basis | FDA Method C-010.02 |

| Linearity (R²) | ≥ 0.99 (for 15/16 analytes) |

| LOQ | 0.02 ng/g (in food) |

| Accuracy (Recovery %) | Within 40-120% (FDA acceptable range) |

| Precision (RSD %) | < 22% |

The data in Table 1 demonstrate that well-optimized SLV methods can achieve excellent performance for complex mixtures. The study on bovine urine, validating 72 analytes, met the stringent criteria of Commission Implementing Regulation (EU) 2021/808 [16]. Similarly, the "exposome" method for human urine shows that SLV can be successfully scaled to cover over 120 diverse xenobiotics with high sensitivity and accuracy [17]. Table 2 shows the application of a validated SLV method for PFAS in a challenging food matrix, salmon, achieving the low detection limits required for modern food safety monitoring [18].

However, these single-lab performance characteristics, while impressive, do not guarantee the same results in other laboratories. As highlighted in a collaborative study on digital PCR methods, MLV assesses trueness and precision across different sites, providing metrics like reproducibility standard deviation that are crucial for understanding method transferability [8].

Detailed Experimental Protocols

Multi-Residue Analysis of Veterinary Drugs and Pesticides

A 2023 study developed a quantitative LC-MS/MS method for 72 residues (42 VDs, 28 pesticides, 2 mycotoxins) in bovine urine, following Commission Implementing Regulation (EU) 2021/808 [16].

- Sample Preparation: The optimal protocol involved enzymatic hydrolysis using β-glucuronidase to free conjugated analytes, followed by a solid-phase extraction (SPE) clean-up using OASIS HLB cartridges.

- LC-MS/MS Analysis: Analysis was performed in both positive and negative ionization modes with multiple reaction monitoring (MRM). The total run time was 12 minutes.

- Validation Protocol: The method was validated for linearity, LOD, LOQ, decision limit (CCα), detection capability (CCβ), accuracy, and precision. The use of stable isotopically labelled internal standards for each analyte was critical for achieving accurate quantification and compensating for matrix effects [16].

Expanding the Exposome with Veterinary Drugs and Pesticides

A 2024 study scaled up a targeted human biomonitoring method by incorporating over 40 VDs/antibiotics and pesticides into an existing method for >80 xenobiotics [17].

- Sample Preparation: A generic sample preparation workflow was investigated to determine its suitability for the new analytes.

- LC-MS/MS Analysis: The expanded method was designed to detect and quantify more than 120 analytes in a single analytical run.

- Validation Protocol: The method underwent in-house SLV, evaluating specificity, matrix effects, linearity, intra-/inter-day precision, accuracy, and limits of quantification. Most added analytes showed satisfactory recovery (81–120%) and acceptable precision (RSD < 20%). Matrix effects for most new analytes were within 50–140% [17].
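
The matrix-effect window quoted above can be checked with a short calculation. The sketch below assumes the common definition in which the matrix effect is the analyte response in post-extraction spiked matrix expressed as a percentage of the response in neat solvent, so that 100% means no effect; the cited study's exact formula is not given here, and the analyte names and peak areas are hypothetical.

```python
def matrix_effect_percent(area_in_matrix, area_in_solvent):
    """Matrix effect (%): 100 = none, <100 suppression, >100 enhancement."""
    return 100.0 * area_in_matrix / area_in_solvent


def within_window(value, low=50.0, high=140.0):
    """True if the value lies inside the 50-140% window quoted in the text."""
    return low <= value <= high


# Hypothetical peak areas: (post-extraction spiked matrix, neat solvent).
peak_areas = {
    "analyte_1": (8200.0, 10000.0),
    "analyte_2": (4500.0, 10000.0),
    "analyte_3": (12500.0, 10000.0),
}

effects = {name: matrix_effect_percent(matrix, solvent)
           for name, (matrix, solvent) in peak_areas.items()}
flagged = [name for name, value in effects.items()
           if not within_window(value)]

for name, value in effects.items():
    print(f"{name}: {value:.0f}%")
print("outside window:", flagged)
```

Analytes flagged in this way are typical candidates for isotopically labelled internal standards or additional clean-up.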

PFAS Analysis in Food Using QuEChERS

An application note based on FDA Method C-010.02 detailed the analysis of 16 PFAS in salmon [18].

- Sample Preparation: The method used a modified QuEChERS (Quick, Easy, Cheap, Effective, Rugged, and Safe) procedure. A 5 g homogenized salmon sample was spiked with internal standards, extracted with acidified acetonitrile, and partitioned using salts. A dual clean-up step was employed: first with a dispersive SPE (dSPE) tube (PSA/ENVI-Carb), followed by a more specific weak anion exchange (WAX) SPE cartridge.

- LC-MS/MS Analysis: To prevent background contamination, a delay column was installed in the LC system. Analysis used a C-18 analytical column and a triple quadrupole mass spectrometer.

- Validation Protocol: The method was validated at three fortification levels (0.05, 0.5, and 2.0 ng/g). Recovery and precision (%RSD) were calculated and shown to meet FDA guidelines [18].

Visualizing the Validation Workflow

The following diagram illustrates the critical steps in developing and validating a multi-residue LC-MS/MS method, from initial setup to the pivotal decision between single and multi-laboratory validation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Multi-Residue LC-MS/MS Analysis

| Item | Function / Application | Example from Literature |

|---|---|---|

| Stable Isotopically Labelled Internal Standards (SIL-IS) | Correct for analyte loss during preparation and quantify accurately by compensating for matrix effects and signal suppression. | Used for all 72 analytes in the bovine urine method [16]. |

| Solid-Phase Extraction (SPE) Cartridges | Clean-up and concentrate samples to remove interfering matrix components and enhance sensitivity. | OASIS HLB cartridges for veterinary drugs [16]; ENVI-WAX for PFAS clean-up [18]. |

| QuEChERS Kits | Provide a streamlined, efficient protocol for extracting a wide range of analytes from food matrices. | Used with salt mixtures and dSPE clean-up for PFAS in salmon [18]. |

| LC Delay Column | Placed before the autosampler to trap PFAS background contamination leaching from the LC system itself. | Critical for achieving low LODs in PFAS analysis [18]. |

| β-Glucuronidase Enzyme | Hydrolyze conjugated metabolites (e.g., glucuronides) in urine back to the parent compound for accurate measurement of total burden. | Used in the sample preparation for veterinary drugs in urine [16]. |

The development of robust LC-MS/MS methods for monitoring veterinary drugs, mycotoxins, and PFAS is critically important for ensuring food safety. While single-laboratory validation can demonstrate excellent performance characteristics for increasingly complex multi-residue methods, the evidence strongly indicates that multi-laboratory validation provides a superior assessment of a method's trueness, precision, and real-world robustness. The choice between SLV and MLV should be guided by the intended use of the method. For internal monitoring and initial development, SLV is a powerful tool. However, for methods intended for regulatory compliance, standardization across laboratories, or to inform high-stakes public health decisions, the investment in multi-laboratory validation is indispensable for establishing credibility and ensuring reliable results across the wider scientific and regulatory community.

{ /article }

Method validation is a critical gateway for any new microbiological detection technique seeking acceptance in food safety and pharmaceutical development. It provides the scientific and regulatory evidence that an alternative method is at least as reliable as the traditional, compendial methods it aims to replace. This process is governed by a fundamental question: will the new method yield results equivalent to, or better than, the results generated by the conventional method? [19] In the context of pathogen detection and allergen screening, this ensures the safety of products and protects public health.

A central theme in this field is the contrast between single-laboratory validation (SLV) and the more comprehensive multi-laboratory validation (MLV). While an SLV can provide initial performance data, regulatory guidelines often require an MLV to fully demonstrate a method's robustness, reproducibility, and transferability across different laboratory environments, instruments, and analysts [20] [21]. This guide compares the performance of different validation approaches and the methods they evaluate, providing researchers with a clear framework for selecting and implementing validated microbiological techniques.

Core Principles and Regulatory Frameworks

Defining Validation Parameters

The validation of microbiological methods is distinctly different from chemical assay validation due to the inherent variability of working with biological systems. Parameters are tailored to the type of test—qualitative or quantitative. [21]

Table 1: Validation Parameters for Qualitative vs. Quantitative Microbiological Tests

| Validation Parameter | Qualitative Tests | Quantitative Tests |

|---|---|---|

| Accuracy | Not Required | Required |

| Precision | Not Required | Required |

| Specificity | Required | Required |

| Limit of Detection (LOD) | Required | Required |

| Limit of Quantification (LOQ) | Not Required | Required |

| Linearity | Not Required | Required |

| Range | Not Required | Required |

| Robustness | Required | Required |

| Equivalence | Required | Required |

For qualitative tests, such as those detecting the presence or absence of Salmonella, specificity ensures that the method detects the target microorganism and does not give false positives from non-target material. The limit of detection is the lowest number of microorganisms that can be reliably detected in a sample [19]. For quantitative tests, which enumerate microorganisms, additional parameters like precision (the agreement between repeated measurements) and accuracy (the closeness to the true value) are critical [21].

The Role of Multi-Laboratory Validation

An MLV study, as exemplified by a recent investigation of a Salmonella qPCR method, is designed to demonstrate that a method's performance is consistent and reproducible across multiple, independent laboratories. These studies follow strict international protocols, such as ISO 16140-2:2016, and assess key statistical measures of agreement between the new and reference methods [20]:

- Negative Deviation (ND) and Positive Deviation (PD): Measure the rate of false negatives and false positives compared to the reference method.

- Relative Level of Detection (RLOD): Compares the sensitivity of the new method to the reference method. An RLOD of approximately 1 indicates equivalent performance. [20]

- Fractional Positive Rate: The percentage of positive results should fall within a specified range (e.g., 25–75%) to ensure a valid challenge level. [20]
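
The agreement measures listed above start from a simple tally of paired results. The sketch below classifies each test portion as positive agreement (PA), negative agreement (NA), negative deviation (ND), or positive deviation (PD), and checks whether the reference-method positive rate falls in the 25–75% fractional range. The function names and the results are hypothetical, and the acceptability limits and the RLOD calculation defined in ISO 16140-2 are not reproduced.

```python
def compare_paired(reference, alternative):
    """Tally paired presence/absence results for the two methods."""
    counts = {"PA": 0, "NA": 0, "ND": 0, "PD": 0}
    for ref_pos, alt_pos in zip(reference, alternative):
        if ref_pos and alt_pos:
            counts["PA"] += 1   # both methods positive
        elif not ref_pos and not alt_pos:
            counts["NA"] += 1   # both methods negative
        elif ref_pos:
            counts["ND"] += 1   # reference positive, alternative negative
        else:
            counts["PD"] += 1   # reference negative, alternative positive
    return counts


def positive_rate(results):
    """Fraction of test portions reported positive."""
    return sum(results) / len(results)


def is_fractional(rate, low=0.25, high=0.75):
    """True if the positive rate lies in the fractional range."""
    return low <= rate <= high


# Hypothetical results for eight test portions (True = detected).
reference = [True, True, False, False, True, False, False, False]
alternative = [True, False, False, True, True, False, False, False]

counts = compare_paired(reference, alternative)
ref_rate = positive_rate(reference)
print(counts, f"reference positive rate = {ref_rate:.3f}",
      "fractional" if is_fractional(ref_rate) else "not fractional")
```

In a real study these tallies would be pooled across laboratories and inoculation levels before being compared with the acceptability limits.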

Performance Comparison of Validated Methods

Validated Pathogen Detection Methods

Certification bodies such as AFNOR Certification periodically award NF VALIDATION marks to methods that successfully complete the validation process according to international standards. The following table summarizes a selection of recently validated or renewed methods for pathogen detection.

Table 2: Comparison of Select Validated Pathogen Detection Methods (2025)

| Method Name | Technology | Target | Key Validation Updates / Scope |

|---|---|---|---|

| EZ-Check Salmonella spp. (BIO-RAD) | PCR | Salmonella spp. | New enrichment protocol for chocolates; addition of automated instrument (iQ-Check Prep System v5) [22] |

| Thermo Scientific SureTect (OXOID) | PCR | Listeria monocytogenes, Listeria species | Scope extended to 125g samples for dairy and multi-composite foods [23] |

| REBECCA (bioMérieux) | Chromogenic Media | E. coli, Coliforms | Extension to allow colony counting on a single plate [22] |

| Assurance GDS (Millipore Sigma) | Molecular / Immunoassay | STEC, E. coli O157:H7 | Extended application to raw meats, poultry, dairy, and environmental samples [23] |

| FDA Salmonella qPCR Method | qPCR | Salmonella | Validated for frozen fish; high reproducibility, specificity, and sensitivity [20] |

Comparative Experimental Data: qPCR vs. Culture Method

A multi-laboratory validation study provides robust, quantitative data for a direct comparison between an alternative method and the reference culture method. The study design and results for an FDA qPCR method for detecting Salmonella in frozen fish are summarized below.

Table 3: Experimental Data from MLV Study of FDA qPCR Method for Salmonella in Frozen Fish

| Performance Metric | qPCR Method | BAM Culture Method | Acceptance Criterion |

|---|---|---|---|

| Positive Rate | ~39% | ~40% | Within 25%-75% (Met) |

| Relative Level of Detection (RLOD) | ~1 | (Reference) | Approx. 1 (Met) |

| Negative Deviation (ND) | Statistically insignificant | -- | Below acceptability limit (Met) |

| Positive Deviation (PD) | Statistically insignificant | -- | Below acceptability limit (Met) |

| Time to Result | ~24 hours | 4-5 days | -- |

| DNA Extraction Impact | Automatic extraction improved sensitivity and DNA quality | -- | -- |

Experimental Protocol Summary [20]:

- Participating Laboratories: 14 (7 FDA labs, 7 state regulatory labs)

- Sample Matrix: 24 blind-coded test portions of frozen fish per lab.

- Challenge Strains: Inoculated with low (0.58 MPN/25g) and high (4.27 MPN/25g) levels of Salmonella.

- Reference Method: FDA BAM culture method.

- Test Method: FDA qPCR method targeting the invA gene.

- Statistical Analysis: Performance was assessed against FDA and ISO 16140-2:2016 guidelines for ND, PD, and RLOD.

The conclusion was that the qPCR and BAM culture methods "performed equally well" for detection, with the qPCR offering a significant advantage in speed. [20]
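
To see why inoculation levels like those in the protocol summary above are chosen, it helps to estimate how often a test portion would be expected to contain at least one cell. The sketch below assumes that cells are Poisson-distributed across portions and that a single viable cell is enough for a positive result; both are simplifying assumptions for illustration and are not statements from the study. The function name is hypothetical.

```python
from math import exp


def probability_positive(mean_cells_per_portion):
    """P(at least one cell in a portion) under a Poisson assumption."""
    return 1.0 - exp(-mean_cells_per_portion)


low_level = 0.58    # MPN per 25 g portion, from the protocol summary
high_level = 4.27   # MPN per 25 g portion, from the protocol summary

p_low = probability_positive(low_level)
p_high = probability_positive(high_level)

print(f"expected positive fraction, low level:  {p_low:.2f}")
print(f"expected positive fraction, high level: {p_high:.2f}")
```

Under these assumptions the low level is expected to give roughly 44% positive portions, inside the 25–75% fractional window, while the high level is expected to give nearly all positives (about 99%).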

The Validation Workflow: From Single-Lab to Multi-Lab

The path from method development to regulatory acceptance follows a logical sequence, moving from internal verification to external, multi-laboratory assessment. The following diagram illustrates this workflow and the key questions answered at each stage.

The Scientist's Toolkit: Essential Reagents and Materials

Successful method validation relies on a suite of critical reagents and materials. The following table details key components used in the validation of rapid methods, such as the qPCR method featured in the MLV study.

Table 4: Key Research Reagent Solutions for Microbiological Method Validation

| Item / Solution | Function in Validation | Example from MLV Study |
|---|---|---|
| Enrichment Broths | Supports the recovery and growth of target pathogens from the sample matrix, crucial for detection sensitivity. | Buffered Peptone Water used for pre-enrichment. [20] |
| DNA Extraction Kits | Isolates high-quality DNA for PCR-based methods; automated systems enhance throughput and reproducibility. | Automatic DNA extraction methods were compared with manual kits and shown to improve qPCR sensitivity. [20] |
| Primers & Probes | Specifically amplifies and detects the target organism's genetic material in qPCR assays. | Custom-designed primers and a TaqMan probe targeting the Salmonella invA gene. [20] |
| Chromogenic Media | Allows visual enumeration and presumptive identification based on enzyme-specific color reactions. | Used in reference culture methods and alternative methods like REBECCA and COMPASS. [22] [23] |
| Reference Strains | Provides a known, traceable positive control to challenge the method's accuracy and LOD. | ATCC strains used for inoculation in the MLV study. [20] |
| Neutralizing Agents | Critical for testing antimicrobial products; inactivates preservatives to allow microbial recovery. | Chemical agents, dilution, or filtration used per USP <1227>. [21] |

The rigorous, data-driven process of microbiological method validation ensures that new, faster technologies like qPCR can be trusted to perform as reliably as traditional culture methods. The evidence from multi-laboratory validation studies provides the strongest foundation for this trust, demonstrating that a method is not only effective in a single, controlled environment but is also reproducible and rugged across the wider scientific community.

The continuous cycle of validation and renewal, as seen with the NF VALIDATION updates, drives innovation in food safety and pharmaceutical development. By adhering to structured protocols and international standards, researchers and industry professionals can confidently adopt advanced methods, enhancing our ability to rapidly and accurately screen for pathogens and allergens, thereby safeguarding public health.

The global food industry requires robust analytical methods to ensure that animal-derived products are free from harmful levels of veterinary drug residues. This case study examines the rigorous multi-laboratory validation of a comprehensive screening method for 152 veterinary drug residues in diverse food matrices, officially designated as AOAC Official Method 2020.04 [24] [25]. The method represents a significant advancement in food safety testing, providing a harmonized approach for regulatory and commercial laboratories worldwide.

This analysis places particular emphasis on the critical distinction between single-laboratory validation (SLV) and multi-laboratory validation (MLV), demonstrating how collaborative studies establish superior method reliability, reproducibility, and fitness-for-purpose across different laboratory environments, instruments, and personnel.

Method Scope and Configuration

The screening method was designed to detect a wide spectrum of veterinary drug residues across various food commodities. To achieve optimal performance for different drug classes, the method is divided into four analytical streams [25]:

- Stream A: Targets 105 veterinary drugs, including antibiotics, anti-inflammatory drugs, and antiparasitic agents, using a generic QuEChERS procedure.

- Stream B: Focuses on 23 beta-lactam antibiotics with a modified QuEChERS procedure using basic buffer extraction.

- Stream C: Optimized for 14 aminoglycoside antibiotics employing molecularly imprinted polymers for selective clean-up.

- Stream D: Designed for 10 tetracycline antibiotics with protocols to reduce chelation during analysis.

This division allows for tailored extraction and analysis procedures specific to each drug class's chemical properties, maximizing detection sensitivity while accounting for complex matrix effects present in different food commodities [25].
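The four-stream layout described above can be written down as a small lookup table, which also makes it easy to confirm that the streams add up to the 152 compounds in scope. This is only an illustrative sketch: the dictionary keys and field names are hypothetical, while the counts and procedures are those listed above.

```python
# Stream layout as described in the text; field names are illustrative.
STREAMS = {
    "A": {"analytes": 105, "procedure": "generic QuEChERS"},
    "B": {"analytes": 23, "procedure": "modified QuEChERS with basic buffer extraction"},
    "C": {"analytes": 14, "procedure": "molecularly imprinted polymer clean-up"},
    "D": {"analytes": 10, "procedure": "protocol designed to reduce chelation"},
}

total_analytes = sum(stream["analytes"] for stream in STREAMS.values())
print(f"Total analytes across streams: {total_analytes}")
```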

Sample Preparation and Analysis

The method utilizes a distinctive "unspiked-spiked" approach where each sample is prepared in two test portions [25]:

- Test Portion 1: Unspiked portion to check for native residues

- Test Portion 2: Spiked with target veterinary drugs at the Screening Target Concentration (STC)

This paired approach compensates for losses during extraction and matrix effects during mass spectrometry analysis. Sample preparation employs QuEChERS-based extraction with variations tailored to different drug classes, followed by LC-MS/MS analysis using SCIEX triple quadrupole instruments (5500, 6500, and 6500+ systems) [25]. Data processing utilizes MultiQuant software (v3.0) with side-by-side peak review to efficiently compare unspiked versus spiked samples.
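The reason the paired portions compensate for recovery losses and matrix effects can be shown with generic single-point standard addition. The sketch below assumes a linear detector response and that both portions experience the same recovery and matrix effect, so these factors cancel in the ratio. It is not the method's published decision rule, which the text above does not specify, and the function name and peak areas are hypothetical.

```python
def estimate_native_concentration(area_unspiked: float,
                                  area_spiked: float,
                                  stc: float) -> float:
    """Estimate the native residue level by single-point standard addition.

    With a linear response, area_unspiked = k * C and
    area_spiked = k * (C + STC), so C = STC * area_unspiked / (area_spiked - area_unspiked).
    The factor k (recovery and matrix effect) cancels out.
    """
    added_response = area_spiked - area_unspiked
    if added_response <= 0:
        raise ValueError("spiked portion shows no additional response; check the spike")
    return stc * area_unspiked / added_response


# Hypothetical peak areas for one compound with an STC of 10 (arbitrary units).
estimate = estimate_native_concentration(area_unspiked=2000.0,
                                         area_spiked=12000.0,
                                         stc=10.0)
print(f"Estimated native concentration: {estimate:.1f}")
```

In this hypothetical case the unspiked response is one fifth of the response added by the spike, so the estimated native level is one fifth of the STC.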

Single-Laboratory Validation Findings

Initial single-laboratory validation demonstrated the method's capability to reliably detect veterinary drug residues across a wide range of food products, including dairy, meat, fish, egg-based foods, animal fat, and byproducts [25]. The SLV established foundational performance characteristics, confirming that the method was sufficiently robust to proceed to multi-laboratory validation.

Multi-Laboratory Validation Study Design

Experimental Protocol

The multi-laboratory validation study was conducted following AOAC Standard Method Performance Requirement (SMPR) 2018.010 [24] [26]. Five independent laboratories located across Europe, Asia, and America participated in the validation, applying the method to various food matrices under reproducibility conditions [25]. This geographical diversity helped ensure the method's performance across different operational environments.

The core validation metric was the Probability of Detection (POD), calculated for both blank test samples and samples spiked at the Screening Target Concentration (STC). Acceptance criteria required PODs ≤10% in blank samples and ≥90% in spiked samples [24]. The STC was defined as the lowest concentration for which a compound can be detected in at least 95% of samples, typically set at or below the Maximum Residue Limits (MRLs) established by regulatory bodies [25] [27].
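The POD acceptance check can be sketched in a few lines. The thresholds are the ones stated above (blank POD ≤10%, POD at the STC ≥90%). The function names and the example counts are hypothetical and do not come from the study.

```python
def pod(detected: int, total: int) -> float:
    """Probability of Detection: share of test portions reported as detected."""
    if total <= 0 or not 0 <= detected <= total:
        raise ValueError("need 0 <= detected <= total and total > 0")
    return detected / total


def meets_screening_criteria(blank_detected: int, blank_total: int,
                             spiked_detected: int, spiked_total: int,
                             max_blank_pod: float = 0.10,
                             min_spiked_pod: float = 0.90) -> bool:
    """Apply both acceptance criteria for one compound/matrix combination."""
    blank_ok = pod(blank_detected, blank_total) <= max_blank_pod
    spiked_ok = pod(spiked_detected, spiked_total) >= min_spiked_pod
    return blank_ok and spiked_ok


# Hypothetical counts: 1 of 20 blanks flagged, 19 of 20 spiked portions detected.
print(meets_screening_criteria(blank_detected=1, blank_total=20,
                               spiked_detected=19, spiked_total=20))
```

Both conditions have to hold at once: a method that detects every spiked portion but also flags too many blanks would still fail the check.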

Food Matrices and Veterinary Drugs Covered

The validation encompassed a wide variety of food commodities to demonstrate method robustness across different matrix types [24]:

- Milk-based ingredients and related products (milk fractions, infant formula, infant cereals, and baby foods)

- Meat- and fish-based ingredients and related products (fresh, powdered, cooked, infant cereals, and baby foods)

- Other ingredients based on eggs, animal fat, and animal byproducts

The 152 veterinary drugs covered included antibiotics, anti-inflammatory agents, antiparasitics, and tranquilizers from multiple therapeutic classes, making it one of the most extensive multi-residue detection platforms available [25].

Validation Results and Performance Data

Multi-Laboratory Validation Outcomes

The collaborative validation study yielded exemplary results, confirming the method's robustness and transferability across different laboratory settings:

- POD at STC: ≥94% across all participating laboratories, exceeding the target of ≥90% [24] [25]

- POD in blank samples: ≤9%, meeting the acceptance criterion of ≤10% [24] [25]

- Proficiency testing: Method effectiveness was further evaluated through participation in 92 proficiency tests, yielding >99% satisfactory submitted results (n=784) [24]

These results demonstrated that the method consistently met acceptance criteria under reproducibility conditions, with low false-positive and false-negative rates across all five laboratories.

Comparative Performance: Single vs. Multi-Laboratory Validation

Table 1: Comparison of Single-Lab vs. Multi-Lab Validation Metrics

| Performance Parameter | Single-Lab Validation | Multi-Lab Validation | Significance |
|---|---|---|---|
| False Compliant Rates | Not fully established | <5% confirmed | Critical for regulatory decisions |
| Matrix Effects Understanding | Limited to one lab's experience | Comprehensive across multiple matrices | Reveals method robustness |
| Reproducibility Assessment | Not measurable | Quantified across 5 labs | Confirms transferability |
| Operational Variability | Not assessed | Accounted for different operators, equipment, environments | Demonstrates real-world applicability |
| Regulatory Acceptance | Preliminary | AOAC Final Action Status [24] | Gold standard for compliance |

The multi-laboratory approach provided a more comprehensive assessment of method robustness, accounting for variables that cannot be captured in single-laboratory studies, including different operators, equipment, reagents, and environmental conditions [24] [25]. This comprehensive validation directly supported the method's approval for AOAC Final Action status, representing the highest level of methodological recognition [24].
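What a multi-laboratory study adds over a single-laboratory one can be seen in a simple per-laboratory summary. The sketch below is descriptive only and is not the AOAC statistical model for collaborative studies. The laboratory names and counts are hypothetical placeholders chosen to be consistent with a POD of at least 94% at the STC.

```python
from statistics import mean, stdev

# Hypothetical (detected, total) counts at the STC for five laboratories.
lab_counts = {
    "lab_1": (19, 20),
    "lab_2": (20, 20),
    "lab_3": (19, 20),
    "lab_4": (20, 20),
    "lab_5": (19, 20),
}

# POD per laboratory and pooled POD over all test portions.
lab_pods = {lab: detected / total for lab, (detected, total) in lab_counts.items()}
pooled_pod = (sum(d for d, _ in lab_counts.values())
              / sum(n for _, n in lab_counts.values()))

summary = {
    "min_pod": min(lab_pods.values()),
    "max_pod": max(lab_pods.values()),
    "mean_pod": mean(lab_pods.values()),
    "between_lab_sd": stdev(lab_pods.values()),  # spread of lab-level PODs
    "pooled_pod": pooled_pod,
}

for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

A single laboratory can only report one of these POD values. The minimum and the spread between laboratories are quantities that exist only once several laboratories have run the same protocol.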

Method Workflow and Application

The following diagram illustrates the comprehensive workflow for veterinary drug residue screening, from sample preparation to final analysis:

The Scientist's Toolkit: Essential Research Reagents and Equipment

Successful implementation of the multi-residue screening method requires specific analytical technologies and reagents optimized for veterinary drug analysis.

Table 2: Essential Research Tools for Veterinary Drug Residue Analysis

| Tool/Category | Specific Examples/Formats | Function in Analysis |
|---|---|---|
| LC-MS/MS Instrumentation | SCIEX 5500, 6500, 7500+ systems [25] [28] | High-sensitivity detection and quantification of target analytes |
| Liquid Chromatography Columns | HSS T3 Column (1.8 µm, 2.1 × 100 mm) [27] | Separation of complex mixtures with excellent retention and peak shape |
| Sample Preparation Sorbents | EMR-Lipid, dSPE, MIP [27] [29] | Removal of matrix interferents (lipids, pigments) while retaining target analytes |
| Extraction Solvents | Oxalic acid in acetonitrile, Basic buffers [27] | Efficient analyte extraction while minimizing chelation (tetracyclines) |
| Data Processing Software | MultiQuant, SCIEX OS [25] | Automated peak integration, comparison of unspiked vs. spiked samples |
| Quality Control Materials | Matrix-matched standards, Spiked samples [25] [27] | Method performance verification, calibration, and quality assurance |

Impact on Food Safety and Public Health

The validated screening method addresses significant public health concerns associated with veterinary drug residues in food, including:

- Antimicrobial Resistance (AMR): Comprehensive monitoring helps combat the emergence and spread of AMR by ensuring food products are free from excessive antibiotic levels [25] [30].

- Allergic Reactions and Toxicity: Detection of residues at or below MRLs protects consumers from potential allergic reactions and from carcinogenic and teratogenic effects [30].

- Regulatory Compliance: The method supports compliance with diverse international standards, facilitating global trade and ensuring consistent food safety monitoring [25] [27].

The method's validation across multiple laboratories makes it particularly valuable for establishing harmonized monitoring programs that yield comparable results across different jurisdictions and testing facilities.

The multi-laboratory validation of AOAC Official Method 2020.04 sets a high standard for methodological robustness in veterinary drug residue screening. The collaborative validation showed that the method delivers:

- Consistent Performance: With PODs ≥94% at STC and ≤9% in blanks across five independent laboratories [24] [25]

- Broad Applicability: Across diverse food matrices and veterinary drug classes [24]

- Regulatory Readiness: As evidenced by AOAC Final Action status [24]

This case study underscores the critical importance of multi-laboratory validation over single-laboratory studies for methods intended for widespread regulatory and commercial use. The comprehensive data generated through collaborative validation provides greater confidence in method performance under real-world conditions, ultimately strengthening the global food safety system and protecting consumer health.

For researchers and laboratories implementing veterinary drug residue testing programs, the validated multi-laboratory approach offers a proven framework for ensuring result reliability, regulatory acceptance, and meaningful public health protection.