Precision Testing in Food Analysis: A Practical Guide to Repeatability and Intermediate Precision

Abstract

This article provides a comprehensive guide to precision testing for food analytical methods, with a focused exploration of repeatability and intermediate precision. Tailored for researchers and scientists, it covers foundational definitions, step-by-step calculation methodologies, strategies for troubleshooting common variability issues, and protocols for integrating precision into method validation to ensure compliance and data reliability. The content synthesizes regulatory guidelines and practical applications to deliver actionable insights for developing robust quality control systems in food science and development.

Understanding Precision: The Pillars of Reliable Food Analysis

Defining Precision and Its Critical Role in Method Validation

Precision is a fundamental validation parameter that quantifies the degree of scatter among a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions. It provides a critical measure of a method's reliability and reproducibility, serving as a cornerstone for building confidence in analytical data used for drug development, quality control, and regulatory compliance. For researchers and scientists developing analytical methods, demonstrating acceptable precision is mandatory for method validation, confirming that the procedure will yield consistent results throughout its routine use. The International Council for Harmonisation (ICH) and regulatory bodies like the FDA provide frameworks for precision validation, with the recent ICH Q2(R2) revision emphasizing its continued importance alongside accuracy [1].

Within the broader context of food methods research, precision testing ensures that methods can reliably detect contaminants, verify nutritional composition, and confirm the absence of prohibited substances in complex matrices. The increasing complexity of food safety challenges demands robust analytical systems where precision is not just a statistical requirement but a practical necessity for protecting public health and maintaining consumer trust, particularly in fast-growing sectors like organic foods and novel food ingredients [2]. This application note delineates the components of precision, provides experimental protocols for its determination, and establishes its indispensable role in method validation.

Components of Precision: Repeatability and Intermediate Precision

Precision is evaluated at three levels: repeatability, intermediate precision, and reproducibility. Repeatability and intermediate precision are the core components typically assessed during method validation for single-laboratory use.

Repeatability (Intra-assay Precision)

Repeatability, also known as intra-assay precision, expresses the precision under the same operating conditions over a short interval of time. It represents the best-case precision that the method can achieve. USP General Chapter <1225> and the ICH Q2(R1) guideline specify that repeatability should be assessed using a minimum of 6 determinations at 100% of the test concentration, or a minimum of 9 determinations covering the specified range for the procedure (e.g., 3 concentrations with 3 replicates each) [3]. For example, in a study evaluating emulsifier testing methods, sodium gluconate demonstrated an intra-day precision of 2.07% RSD (Relative Standard Deviation), while sodium lactate achieved 2.7% RSD in repeated experiments (n=5) [4].

Intermediate Precision

Intermediate precision expresses within-laboratory variations, such as different days, different analysts, different equipment, and different reagent lots. The FDA's updated guidance emphasizes that precision, including its intermediate precision component, must be established across the method's range [1]. Investigating the effects of these variables establishes whether an analytical procedure will provide reliable results during normal, expected operational variations. In the emulsifier study, the inter-day precision (n=3) for sodium gluconate was 2.95% RSD, demonstrating consistent performance across different analysis times [4]. The term "ruggedness" was historically used by the USP to describe this reproducibility under a variety of conditions but is being phased out in favor of "intermediate precision" to harmonize with ICH terminology [3].

Table 1: Precision Results from Emulsifier Method Evaluation Study

| Emulsifier | Intra-Day Precision (%RSD, n=5) | Inter-Day Precision (%RSD, n=3) | Recovery Rate (%) |
|---|---|---|---|
| Sodium Gluconate | 2.07 | 2.95 | 94.93 |
| Sodium Lactate | 2.7 | 1.55 | 99.52 |
| Propylene Glycol | 4.26 | 1.47 | 78.73 |
| Calcium Stearate | 0.5 | 0.92 | 40.22-72.17 |

Regulatory Framework and Current Guidelines

Recent updates to regulatory guidance have refined the expectations for precision validation. The FDA has updated its decades-old guidance on analytical test method validation based on revisions of the ICH Q2(R2) guidelines. While the fundamental requirement for precision demonstration remains, the updated approach provides flexibility for new types of analytical methods and focuses on the most critical validation parameters [1].

A significant change in the new guidance is the integrated evaluation of accuracy and precision. These parameters can now be evaluated independently or in a single study, with the requirement that accuracy must be established across the entire range of the analytical procedure. For multivariate analytical procedures, which are now explicitly addressed, the test method should be evaluated for metrics such as the root mean square error of prediction (RMSEP). If RMSEP is comparable to acceptable root mean square error of calibration, it indicates the model is sufficiently accurate when tested with an independent test set [1].
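
As an illustrative sketch, RMSEP can be computed from an independent test set as the square root of the mean squared difference between predicted and reference values. The data below are hypothetical and the helper name `rmse` is an assumption made for illustration; the guidance does not prescribe an implementation, and the divisor used for the calibration error may differ between software packages.

```python
import math

def rmse(reference, predicted):
    """Root mean square error between reference and predicted values."""
    if len(reference) != len(predicted) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(p - r) ** 2 for r, p in zip(reference, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical reference values and model predictions for an independent test set
test_reference = [10.0, 12.0, 14.0, 16.0]
test_predicted = [10.5, 11.5, 14.5, 15.5]

rmsep = rmse(test_reference, test_predicted)
print(f"RMSEP = {rmsep:.3f}")
```

The same function applied to the calibration set would give the calibration error that RMSEP is compared against.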

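The guidance calls for precision validation of quantitative procedures:

- Assay of drug substance or drug product

- Quantitative impurity (purity) tests

Identification tests and limit tests for impurities are generally validated for specificity (and, for limit tests, detection limit) rather than precision.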

The landscape of analytical tools is quickly evolving, with testing methodologies becoming more precise. This evolution necessitates that businesses implement effective testing programs at various stages of the supply chain that rely on science-based information and product-specific attributes [2].

Experimental Protocol for Determining Precision

Protocol for Repeatability Assessment

Objective: To determine the repeatability (intra-assay precision) of the analytical method under the same operating conditions.

Materials and Reagents:

- Homogeneous sample aliquot (standard or spiked matrix)

- Reference standards

- Appropriate solvents and mobile phases

- Calibrated analytical instrument (HPLC, GC, MS, etc.)

Procedure:

- Prepare a single homogeneous sample at 100% of the test concentration.

- Perform a minimum of 6 independent sample preparations and analyses using the same analytical procedure.

- Alternatively, prepare samples at three different concentrations (e.g., 80%, 100%, 120%) covering the specified range with 3 replicates each (total 9 determinations).

- All preparations and analyses should be performed by the same analyst using the same instrument within the same day.

- Calculate the mean, standard deviation, and relative standard deviation (%RSD) for the results.

Acceptance Criteria: The %RSD should be predefined based on method requirements and typical industry standards. For assay of active pharmaceuticals, RSD is typically ≤1-2%, while for impurities at lower levels, higher RSD may be acceptable.
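
The calculation in the final step can be sketched as follows. The six results and the 2.0% limit are hypothetical values chosen for illustration, and `percent_rsd` is an assumed helper name; the sample standard deviation (n - 1 divisor) is used.

```python
import statistics

def percent_rsd(values):
    """Relative standard deviation in percent, using the sample SD (n - 1)."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("mean is zero; %RSD is undefined")
    return statistics.stdev(values) / mean * 100

# Hypothetical results (% of target) from 6 independent preparations,
# same analyst, same instrument, same day
results = [99.8, 100.4, 99.5, 100.9, 100.1, 99.3]
limit = 2.0  # hypothetical predefined acceptance limit (%RSD)

mean = statistics.mean(results)
sd = statistics.stdev(results)
rsd = percent_rsd(results)
print(f"mean = {mean:.2f}, SD = {sd:.3f}, %RSD = {rsd:.2f}")
print("Repeatability acceptable" if rsd <= limit else "Repeatability not acceptable")
```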

Protocol for Intermediate Precision Assessment

Objective: To establish the impact of random events within the same laboratory on the analytical results.

Materials and Reagents:

- Same as for repeatability assessment

Procedure:

- Design the experiment to incorporate variations including:

  - Different analysts (at least 2)

  - Different instruments (same type and model)

  - Different days

  - Different reagent lots

- Prepare homogeneous samples at 80%, 100%, and 120% of test concentration with 3 replicates at each level (total 9 determinations per variation).

- Execute the analysis following the same procedure but incorporating the planned variations.

- Calculate the mean, standard deviation, and %RSD for the combined results from all variations.

Acceptance Criteria: The overall %RSD from the intermediate precision study should be predefined and will typically be slightly higher than for repeatability alone but within acceptable limits for the method's intended use.
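
One way to lay out such a study is to enumerate every planned run before starting. The sketch below assumes two analysts and two days (hypothetical labels) crossed with the three concentration levels and three replicates from the procedure above; instruments and reagent lots could be added as further factors in the same way.

```python
from itertools import product

# Hypothetical factor levels for the planned variations
analysts = ["Analyst A", "Analyst B"]
days = ["Day 1", "Day 2"]
levels_percent = [80, 100, 120]
replicates = 3

# Fully crossed run sheet: one entry per determination
run_sheet = [
    {"analyst": a, "day": d, "level_percent": lvl, "replicate": r}
    for a, d, lvl, r in product(analysts, days, levels_percent,
                                range(1, replicates + 1))
]

print(f"Total determinations: {len(run_sheet)}")
for run in run_sheet[:3]:
    print(run)
```

Each analyst/day combination receives 9 determinations, matching the 3 levels x 3 replicates scheme.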

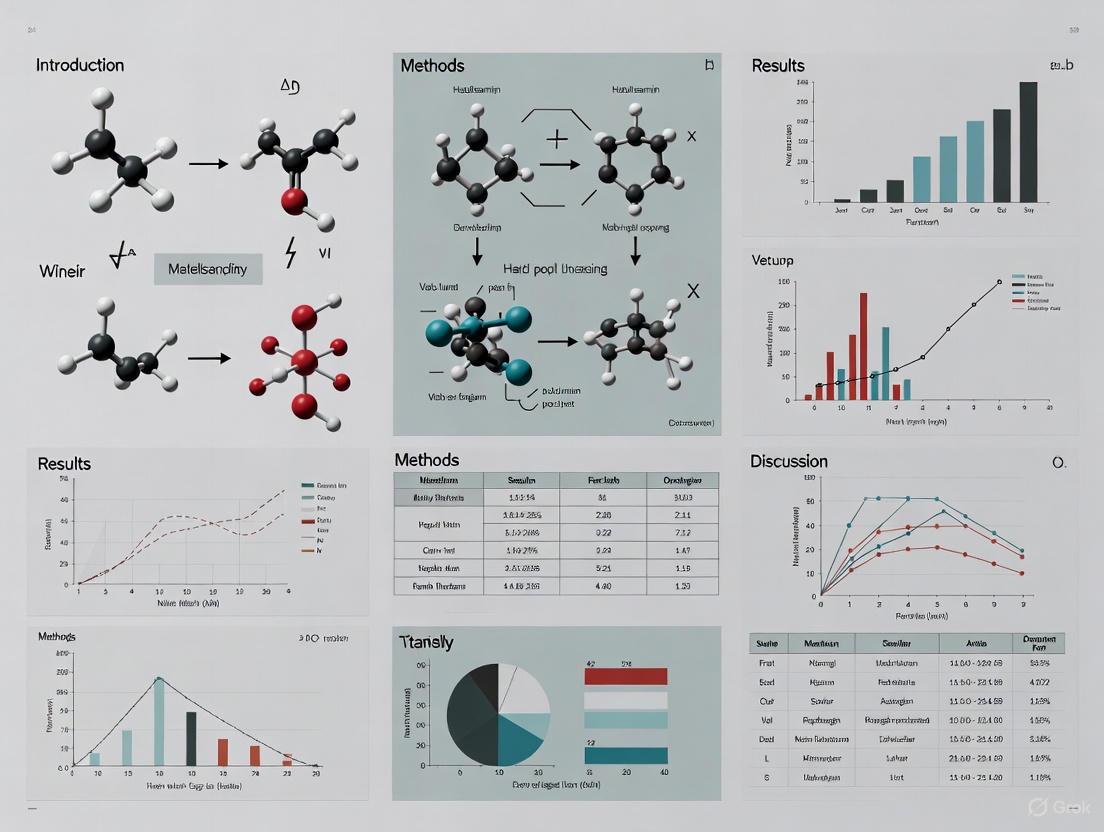

Diagram 1: Precision Assessment Workflow illustrating the sequential process for evaluating repeatability and intermediate precision in method validation.

Essential Research Reagent Solutions for Precision Studies

Table 2: Key Research Reagents and Materials for Precision Experiments

| Reagent/Material | Function in Precision Studies | Application Notes |
|---|---|---|
| Certified Reference Standards | Provides known concentration for accuracy and precision determination | Essential for recovery studies; should be traceable to national/international standards |
| HPLC-Grade Solvents | Mobile phase preparation for chromatographic methods | Different lots should be used in intermediate precision studies |
| Buffer Components (e.g., phosphate, acetate) | Mobile phase modification for pH control | pH and concentration variations test method robustness |
| Stable Homogeneous Sample | Test matrix for repeated measurements | Ensures variability comes from the method, not sample heterogeneity |
| Column Batches (multiple) | Stationary phase for separation | Different column lots evaluate separation robustness |

Robustness and Its Relationship to Precision

While precision addresses the random variation of a method under normal operating conditions, robustness tests a method's capacity to remain unaffected by small, deliberate variations in method parameters. According to ICH and USP guidelines, robustness is defined as "a measure of its capacity to remain unaffected by small but deliberate variations in procedural parameters listed in the documentation, providing an indication of the method's or procedure's suitability and reliability during normal use" [3].

Robustness is traditionally investigated during method development rather than formal validation, as identifying parameters that affect the method early can prevent issues during validation and transfer. In liquid chromatography, typical variations examined in robustness studies include:

- Mobile phase composition and pH

- Flow rate

- Temperature

- Detection wavelength

- Different column lots

- Gradient variations [3]

Experimental designs for robustness studies often employ multivariate approaches such as full factorial, fractional factorial, or Plackett-Burman designs, which allow multiple variables to be studied simultaneously rather than one variable at a time. These efficient screening designs help identify critical factors that affect method performance and help establish system suitability parameters [3].
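
As a minimal sketch of the simplest of these designs, a two-level full factorial can be generated by crossing the low and high setting of each factor. The factor names and settings below are hypothetical; fractional factorial and Plackett-Burman designs use a subset of these runs and are not shown here.

```python
from itertools import product

# Hypothetical factors with (low, high) settings around nominal conditions
factors = {
    "flow_rate_mL_min": (0.9, 1.1),
    "mobile_phase_pH": (2.9, 3.1),
    "column_temp_C": (28, 32),
}

names = list(factors)
# Every combination of low/high settings: 2**3 = 8 runs
design = [dict(zip(names, combo))
          for combo in product(*(factors[n] for n in names))]

for run_number, run in enumerate(design, start=1):
    print(run_number, run)
```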

Case Study: Precision in Food Additive Analysis

A 2025 study on emulsifier testing methods provides a practical illustration of precision assessment in food additive analysis. The study compared domestic and international analytical methods to address the limited reproducibility and efficiency of the emulsifier testing methods registered in the Korean Food Code. Precision (%RSD) and recovery rates were evaluated by conducting intra-day (n=5) and inter-day (n=3) repeated experiments on 20 types of emulsifiers [4].

The results demonstrated varying precision performance across different emulsifiers:

- Sodium gluconate showed excellent precision with intra-day 2.07% and inter-day 2.95% RSD, with a recovery rate of 94.93% within the standard range (90-110%).

- Sodium lactate achieved intra-day 2.7% and inter-day 1.55% RSD precision with a 99.52% recovery rate.

- Propylene glycol met the precision acceptability criterion (≤5%) with intra-day 4.26% and inter-day 1.47% RSD, but its recovery rate decreased to 78.73% due to blank test background interference.

- Calcium stearate recorded outstanding precision with intra-day 0.5% and inter-day 0.92% RSD; however, its recovery rate diminished to 40.22-72.17% due to matrix effects and calculation formula errors [4].

This case study highlights that precision is necessary but not sufficient: accuracy (recovery rate) must also be acceptable for a method to be fit-for-purpose. By comparing the methods with Codex Alimentarius standards, the study derived plans for simplifying them and underscored the need for testing methods tailored to the characteristics of each emulsifier [4].
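
The distinction can be made concrete with the four results reported above. The sketch below applies the ≤5% RSD criterion and the 90-110% recovery range mentioned in the case study to every emulsifier; applying both limits uniformly is an assumption made for illustration, and the function name `assess` is hypothetical.

```python
# Values taken from the case study; recovery is stored as a (low, high) range
emulsifiers = {
    "Sodium gluconate": {"intra": 2.07, "inter": 2.95, "recovery": (94.93, 94.93)},
    "Sodium lactate": {"intra": 2.7, "inter": 1.55, "recovery": (99.52, 99.52)},
    "Propylene glycol": {"intra": 4.26, "inter": 1.47, "recovery": (78.73, 78.73)},
    "Calcium stearate": {"intra": 0.5, "inter": 0.92, "recovery": (40.22, 72.17)},
}

RSD_LIMIT = 5.0                  # %RSD criterion cited for propylene glycol
RECOVERY_RANGE = (90.0, 110.0)   # recovery range cited for sodium gluconate

def assess(data):
    """Return (precision_ok, accuracy_ok) for one emulsifier."""
    precision_ok = data["intra"] <= RSD_LIMIT and data["inter"] <= RSD_LIMIT
    low, high = data["recovery"]
    accuracy_ok = RECOVERY_RANGE[0] <= low and high <= RECOVERY_RANGE[1]
    return precision_ok, accuracy_ok

verdicts = {name: assess(data) for name, data in emulsifiers.items()}
for name, (precision_ok, accuracy_ok) in verdicts.items():
    status = "fit for purpose" if precision_ok and accuracy_ok else "needs review"
    print(f"{name}: precision_ok={precision_ok}, accuracy_ok={accuracy_ok} -> {status}")
```

All four emulsifiers pass the precision check, but only sodium gluconate and sodium lactate also pass the recovery check.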

Precision remains a cornerstone of analytical method validation, with repeatability and intermediate precision providing essential metrics for assessing method reliability. Recent regulatory updates have refined the approach to precision validation, particularly for novel analytical technologies and multivariate methods. The thorough evaluation of precision, alongside accuracy and robustness, provides the scientific foundation for reliable analytical methods that ensure product quality, consumer safety, and regulatory compliance across the pharmaceutical and food industries. As analytical challenges continue to evolve with novel foods and complex matrices, the principles of precision validation will remain essential for generating trustworthy data and maintaining confidence in analytical results.

In the realm of analytical chemistry, food methods research, and drug development, demonstrating the reliability of analytical methods is paramount. Precision, a critical component of method validation, assesses the variability in a series of measurements obtained from multiple sampling of the same homogeneous sample. Within precision testing, repeatability and intermediate precision represent two distinct hierarchical levels of variability measurement. A clear understanding of their differences is essential for researchers and scientists to properly design validation protocols, interpret results, and ensure data integrity for regulatory compliance. This document delineates the conceptual and practical distinctions between these two precision parameters, providing structured experimental protocols and data analysis frameworks tailored for professionals in food science and pharmaceutical development.

Theoretical Foundations and Definitions

The Precision Hierarchy

Precision in analytical methodology is not a single characteristic but a spectrum of variability under different experimental conditions. The International Council for Harmonisation (ICH) guidelines formalize this spectrum into a hierarchy, with repeatability and intermediate precision occupying distinct levels based on the sources of variation they encompass [5].

Repeatability expresses the precision under the same operating conditions over a short interval of time. It represents the best-case scenario for method performance, capturing the minimal expected variation when a method is executed by the same analyst, using the same equipment, reagents, and laboratory, within a short time frame. It is also termed intra-assay precision [5].

Intermediate Precision measures the variation in test results when the same method is performed under different but normal operating conditions within a single laboratory over time. It introduces controlled changes that would be expected during routine operation, such as different days, different analysts, or different equipment. It reflects real-world internal lab consistency while maintaining the same analytical method [6] [5].

Reproducibility (not the focus of this document) represents the highest level of variability, assessed through collaborative studies across different laboratories. It captures the maximum expected method variability and is crucial for method standardization [6] [5].

Table 1: Core Definitions and Characteristics of Precision Measures

| Precision Measure | Scope of Variability Assessment | Experimental Conditions | Primary Use Case |
|---|---|---|---|
| Repeatability | Variation under identical conditions | Same analyst, same equipment, same day, same reagents | Establishes the fundamental capability of the method |
| Intermediate Precision | Variation within a single laboratory | Different days, different analysts, different equipment | Verifies method robustness for routine use in a lab |
| Reproducibility | Variation between different laboratories | Different labs, different equipment, different personnel | Method standardization and transfer |

Conceptual Relationship and Distinction

The relationship between repeatability, intermediate precision, and reproducibility is fundamentally hierarchical. Repeatability forms the base level of precision, as it quantifies the inherent noise of the method under ideal circumstances. Intermediate precision builds upon this by incorporating additional, expected sources of intra-laboratory variation. The total variance observed in intermediate precision (\( \sigma_{IP}^2 \)) can be conceptualized as the sum of the variance from repeatability (\( \sigma_{within}^2 \)) and the variance introduced by the changing conditions (\( \sigma_{between}^2 \)) [6].

The formula for calculating intermediate precision is: \( \sigma_{IP} = \sqrt{\sigma_{within}^2 + \sigma_{between}^2} \) [6]

This statistical relationship implies that the intermediate precision variance is at least as large as the repeatability variance, because it adds a non-negative between-condition term. In practice, estimates from small studies can occasionally show the reverse because of sampling error. The following diagram illustrates this hierarchical relationship and the expanding scope of variability.

Diagram 1: The Precision Hierarchy: Expanding Scope of Variability.
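
A short numerical sketch of the quadrature sum described above, using hypothetical standard deviations and a hypothetical mean result (the function name is an assumption for illustration):

```python
import math

def intermediate_precision_sd(sd_within, sd_between):
    """Combine repeatability and between-condition SDs in quadrature."""
    return math.hypot(sd_within, sd_between)  # sqrt(sd_within**2 + sd_between**2)

# Hypothetical values in the units of the measurement
sd_within = 0.6    # repeatability SD
sd_between = 0.8   # SD contributed by changing days/analysts/equipment
mean_result = 50.0

sd_ip = intermediate_precision_sd(sd_within, sd_between)
rsd_ip = sd_ip / mean_result * 100
print(f"sigma_IP = {sd_ip:.2f}, RSD_IP = {rsd_ip:.1f}%")
```

Because the between-condition term is squared before it is added, it can only increase the combined standard deviation relative to the repeatability value.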

Experimental Protocols and Data Analysis

Accurately determining repeatability and intermediate precision requires carefully designed experiments and appropriate statistical analysis. The following protocols are aligned with ICH guidelines and can be adapted for various analytical methods in food and pharmaceutical research.

Protocol for Assessing Repeatability

The goal of this protocol is to quantify the method's variability under the best possible, most controlled conditions.

1. Experimental Design:

- Prepare at least 6 (typically 6-12) identical samples from a single, homogeneous sample source [6] [7].

- All samples must be analyzed by the same analyst.

- All analyses must be performed using the same instrument.

- All analyses must be completed within a short time frame (e.g., the same day or same session) to minimize temporal drift [5].

- The analyte concentration should cover the relevant range, ideally testing at least three different concentrations (low, medium, high) with three replicates each [5].

2. Data Analysis:

- Calculate the mean (\( \bar{x} \)) and standard deviation (\( s \)) of the measurements.

- Compute the Relative Standard Deviation (RSD%), also known as the Coefficient of Variation (CV), using the formula: \( \mathrm{RSD\%} = (s / \bar{x}) \times 100\% \)

- The resulting RSD% is a direct measure of the method's repeatability [6].
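
When the three-concentration design is used, RSD% can be reported per level and, if a single figure is wanted, pooled across levels. The sketch below uses hypothetical data and one common pooling approach (a degrees-of-freedom-weighted root mean square of the per-level RSD% values); the cited guidelines do not mandate this particular formula.

```python
import math
import statistics

def percent_rsd(values):
    """RSD% = (s / mean) * 100, using the sample standard deviation."""
    return statistics.stdev(values) / statistics.mean(values) * 100

# Hypothetical results at low, medium, and high concentration (3 replicates each)
levels = {
    "low": [8.1, 7.9, 8.0],
    "medium": [10.1, 9.9, 10.0],
    "high": [12.1, 11.9, 12.0],
}

rsd_by_level = {name: percent_rsd(vals) for name, vals in levels.items()}
dof = {name: len(vals) - 1 for name, vals in levels.items()}

# Pooled RSD%: weight each squared RSD% by its degrees of freedom
pooled_rsd = math.sqrt(
    sum(dof[name] * rsd_by_level[name] ** 2 for name in levels) / sum(dof.values())
)

for name, value in rsd_by_level.items():
    print(f"{name}: RSD% = {value:.2f}")
print(f"pooled RSD% = {pooled_rsd:.2f}")
```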

Protocol for Assessing Intermediate Precision

This protocol is designed to capture the additional variability introduced by normal, within-lab operational changes.

1. Experimental Design:

- The study should span a minimum of two different days [6] [5].

- Involve a minimum of two different analysts [5].

- If available, use different pieces of equipment of the same type and calibration.

- For each combination of conditions (e.g., Day 1/Analyst A, Day 2/Analyst B), analyze a minimum of three replicates of the same homogeneous sample at each concentration level [5].

- A balanced design with at least 6 (typically 6-12) measurements across the varying conditions is recommended [6].

2. Data Analysis:

- The data can be analyzed using Analysis of Variance (ANOVA) to decompose the different sources of variation (e.g., analyst, day) [5].

- Calculate the pooled standard deviation or use the variance components from ANOVA to compute the overall intermediate precision standard deviation (\( \sigma_{IP} \)) [6].

- The RSD% is then calculated from \( \sigma_{IP} \) to express intermediate precision as a percentage.
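
The variance-component route can be sketched with a balanced one-way ANOVA, treating each analyst/day combination as one group. The data are hypothetical, the function name is an assumption, and a real study with several crossed or nested factors would need a fuller model than this single grouping factor.

```python
import math
import statistics

def variance_components(groups):
    """Balanced one-way ANOVA: return (grand_mean, var_within, var_between)."""
    k = len(groups)
    n = len(groups[0])
    if k < 2 or n < 2 or any(len(g) != n for g in groups):
        raise ValueError("need at least 2 groups of equal size (>= 2 replicates)")
    group_means = [statistics.mean(g) for g in groups]
    grand_mean = statistics.mean(group_means)
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    ms_within = ss_within / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in group_means) / (k - 1)
    # A negative estimate is truncated to zero (between-group effect not detectable)
    var_between = max(0.0, (ms_between - ms_within) / n)
    return grand_mean, ms_within, var_between

# Hypothetical results: one list per analyst/day combination, 3 replicates each
groups = [
    [100.0, 101.0, 99.0],   # Day 1 / Analyst A
    [102.0, 103.0, 101.0],  # Day 1 / Analyst B
    [99.0, 100.0, 98.0],    # Day 2 / Analyst A
    [101.0, 102.0, 100.0],  # Day 2 / Analyst B
]

grand_mean, var_within, var_between = variance_components(groups)
sigma_ip = math.sqrt(var_within + var_between)
rsd_repeatability = math.sqrt(var_within) / grand_mean * 100
rsd_ip = sigma_ip / grand_mean * 100
print(f"repeatability RSD% = {rsd_repeatability:.2f}")
print(f"intermediate precision RSD% = {rsd_ip:.2f}")
```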

Table 2: Summary of Experimental Protocols for Precision Assessment

| Protocol Component | Repeatability | Intermediate Precision |
|---|---|---|
| Sample | Homogeneous, single source | Homogeneous, single source |
| Analysts | 1 | Minimum of 2 |
| Time Frame | Single day/session | Minimum of 2 different days |
| Equipment | Single instrument | Different instruments (if available) |
| Replicates | Minimum 6 (typically 6-12) total | Minimum 3 per condition (e.g., per analyst/day) |
| Key Calculation | RSD% from all replicates | \( \sigma_{IP} = \sqrt{\sigma_{within}^2 + \sigma_{between}^2} \), then RSD% |
| Statistical Method | Descriptive statistics | Analysis of Variance (ANOVA) |

Practical Application and Data Interpretation

Establishing Acceptance Criteria

The interpretation of repeatability and intermediate precision results is not universal; it depends on the method's intended purpose and the industry-specific standards. The RSD% values obtained from the experiments must be compared against pre-defined acceptance criteria [6].

- For pharmaceutical assays of active ingredients, intermediate precision RSD% is often expected to be ≤ 2.0% for excellent precision, though methods for trace analysis may allow for higher variability [6].

- There are no universal RSD% limits; they should be scientifically justified based on the method's application. The acceptance criteria should align with the method's intended purpose [6].

Table 3: Example Framework for Interpreting Intermediate Precision RSD%

| RSD% Result | Interpretation | Typical Action |
|---|---|---|
| ≤ 2.0% | Excellent precision | Method is suitable for its intended use. |
| 2.1% - 5.0% | Acceptable precision | Method is likely acceptable; consider monitoring. |
| 5.1% - 10.0% | Marginal precision | Investigate sources of variability; method may require improvement. |
| > 10.0% | Unacceptable precision | Method is not suitable; requires re-development or re-optimization. |
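
The example framework in Table 3 can be expressed as a small lookup. This sketch uses only the upper bound of each band, so values that fall between the tabulated bands (for example 2.05%) are assigned to the next band up; that choice, like the function name, is an assumption made for illustration.

```python
def interpret_rsd(rsd_percent):
    """Map an intermediate precision RSD% onto the example bands of Table 3."""
    if rsd_percent < 0:
        raise ValueError("RSD% cannot be negative")
    if rsd_percent <= 2.0:
        return "Excellent precision"
    if rsd_percent <= 5.0:
        return "Acceptable precision"
    if rsd_percent <= 10.0:
        return "Marginal precision"
    return "Unacceptable precision"

for value in (0.92, 2.95, 7.5, 12.0):
    print(f"{value:.2f}% -> {interpret_rsd(value)}")
```

These bands are only an example; the limits actually applied should be the scientifically justified criteria defined for the method.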

Case Study Context: Precision in Food and Nutrition Research

The concepts of repeatability and intermediate precision are transferable to modern food and nutrition research. For instance, in the development of precision nutrition applications, AI-based systems recommend meals based on individual diner profiles and the precise nutritional content of food [8]. The reliability of the underlying nutritional analysis is paramount.

- Scenario: A laboratory validates a method for quantifying macronutrients in restaurant meals for a dietary app.

- Repeatability ensures that when the same lab technician analyzes the same meal sample multiple times in one sitting, the results are consistent.

- Intermediate Precision ensures that different technicians analyzing the same meal on different days, or after a reagent batch change, obtain consistent results. This is critical for ensuring the AI system's recommendations remain accurate over time and across different operational contexts [8].

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key materials and reagents crucial for conducting robust precision studies in analytical method validation.

Table 4: Key Research Reagent Solutions for Precision Studies

| Item | Function & Importance in Precision Testing |
|---|---|
| Certified Reference Materials (CRMs) | Provides a sample with a known and traceable analyte concentration. Serves as the "true value" for calculating accuracy and is the foundational material for all precision experiments. |
| High-Purity Solvents & Reagents | Minimizes variability introduced by impurities in reagents. Consistent reagent quality is essential for achieving low RSD% in both repeatability and intermediate precision. |
| In-House Reference Materials | A well-characterized, homogeneous sample produced internally. Used for routine system suitability tests and long-term precision monitoring, as shown in a method for quantifying plastic additives [9]. |
| Stable, Homogeneous Test Samples | The test sample must be homogeneous and stable throughout the testing period. A lack of homogeneity can artificially inflate variability measurements, invalidating the study. |
| Calibrated Volumetric Equipment | Ensures accurate and precise measurement of volumes. Miscalibrated pipettes, flasks, and syringes are a significant source of systematic error and poor intermediate precision. |
| Quality Control (QC) Samples | Samples with known expected values analyzed alongside test samples. QC charts tracking precision over time are a practical application of intermediate precision monitoring. |

In the structured environment of analytical method validation, a clear distinction between repeatability and intermediate precision is non-negotiable. Repeatability defines the inherent, best-case variability of a method, serving as a benchmark for its fundamental performance. Intermediate precision provides a realistic estimate of the variability a laboratory can expect during routine use, incorporating the inevitable small changes in operational parameters. A method cannot be considered robust or fit-for-purpose without a thorough assessment of both. By implementing the defined experimental protocols, utilizing appropriate statistical tools, and adhering to scientifically justified acceptance criteria, researchers and drug development professionals can ensure the generation of reliable, high-quality data that stands up to regulatory scrutiny.

In analytical chemistry, particularly within food methods research and drug development, precision is a fundamental validation parameter that measures the closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions [10]. Precision assessment is not a single measurement but rather a hierarchical concept that evaluates variability under different experimental conditions, providing scientists with crucial information about method reliability during routine use. The precision hierarchy comprises three distinct levels: repeatability, intermediate precision, and reproducibility [6] [11]. Understanding this hierarchy is essential for researchers designing validation protocols, as it allows for systematic evaluation of random events that might affect the analytical procedure's performance in real-world scenarios, from internal quality control to collaborative studies between laboratories.

For food and pharmaceutical researchers, establishing precision is critical for ensuring consistent product quality, detecting variations in raw materials, and confirming that products meet regulatory specifications throughout their shelf life. The International Council for Harmonisation (ICH) guideline Q2(R1) and its recent update Q2(R2) provide the foundational framework for precision validation, with regulatory bodies like the FDA requiring complete precision assessment prior to New Drug Application submission and characterization of pivotal clinical trial materials [12] [1].

The Theoretical Framework of Precision Hierarchy

Conceptual Relationships and Definitions

The precision hierarchy represents increasing levels of variability incorporation, with each level providing information about method performance under different experimental conditions. These concepts exist on a continuum of variability sources, from the minimally variable conditions of repeatability to the extensively variable conditions of reproducibility.

Table 1: Key Characteristics of Precision Hierarchy Levels

| Precision Level | Experimental Conditions | Variability Sources | Typical Expression | Primary Application |
|---|---|---|---|---|
| Repeatability | Same procedure, operator, equipment, laboratory, short time interval [11] | Random variation under nearly identical conditions | Standard deviation (\( s_r \)) or Relative Standard Deviation (RSD%) [13] | Method capability under optimal conditions |
| Intermediate Precision | Within-laboratory variations: different days, analysts, equipment, calibrations [6] [10] | Random events within a single laboratory | Standard deviation (\( s_{RW} \)) or RSD% [11] | Routine laboratory performance expectations |
| Reproducibility | Different laboratories, procedures, operators, equipment [6] [13] | Inter-laboratory variations including different reagents, environmental conditions | Standard deviation (\( s_R \)) or RSD% [13] | Method standardization and transfer between sites |

The relationship between these precision levels can be visualized through the following conceptual diagram, which illustrates the increasing variability and scope of conditions at each level:

Statistical Foundations and Variance Components

The statistical foundation of precision hierarchy lies in variance components analysis, which partitions the total method variability into its constituent sources. The relationship between different precision levels follows a predictable pattern where variance increases as more sources of variability are introduced:

Repeatability Variance (\( \sigma_r^2 \)): Represents the minimum achievable variance under ideal conditions and forms the baseline for precision assessment [11].

Intermediate Precision Variance (\( \sigma_{IP}^2 \)): Incorporates additional within-laboratory variance components and is calculated using the formula \( \sigma_{IP} = \sqrt{\sigma_{within}^2 + \sigma_{between}^2} \), where \( \sigma_{within}^2 \) represents repeatability variance and \( \sigma_{between}^2 \) represents variance from changing conditions (different days, analysts, equipment) [6].

Reproducibility Variance (\( \sigma_R^2 \)): Includes all variance components from intermediate precision plus between-laboratory variations, representing the total method variability [13].

This variance component approach allows researchers to identify specific sources of variability that most significantly impact method performance and focus improvement efforts accordingly. The precision estimates are typically expressed as standard deviation (SD) or relative standard deviation (RSD%), with the latter being preferred for comparing variability across different concentration levels as it represents the coefficient of variation [13].
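
Under the additive model described above, the three levels can be written as nested sums of variance components. In this sketch, \( \sigma_{lab}^2 \) is an assumed symbol (not taken from the cited sources) for the between-laboratory component, and \( \sigma_r^2 \) plays the role of \( \sigma_{within}^2 \):

```latex
\begin{aligned}
\sigma_{IP}^2 &= \sigma_{r}^2 + \sigma_{between}^2 \\
\sigma_{R}^2  &= \sigma_{IP}^2 + \sigma_{lab}^2 \\
\sigma_{r}^2  &\le \sigma_{IP}^2 \le \sigma_{R}^2
\end{aligned}
```

The ordering in the last line holds for the true variances because each added component is non-negative; estimates from small data sets may not always respect it.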

Practical Implementation and Experimental Protocols

Experimental Design for Precision Assessment

Comprehensive precision assessment requires carefully designed experiments that systematically introduce variability sources while controlling for others. The following workflow illustrates a typical nested experimental design for evaluating all three levels of precision hierarchy:

Detailed Protocol for Intermediate Precision Assessment in Food Methods

Intermediate precision represents the most critical assessment for routine laboratory operations, as it reflects the realistic variability encountered during normal method use [6]. The following protocol provides a detailed approach for evaluating intermediate precision in food analytical methods, adapted from ICH guidelines and AOAC International recommendations [14] [12].

Sample Preparation and Experimental Design

Select a homogeneous representative sample of the food matrix containing the analyte of interest at a concentration level relevant to routine testing (typically 100% of the target concentration).

Prepare a minimum of six independent sample determinations across the variables being tested:

- Two different analysts (minimum)

- Three different days (minimum)

- Different equipment (if available)

- Different reagent batches

For accuracy assessment simultaneously with precision, prepare samples at three concentration levels (80%, 100%, 120% of target) with three replicates at each level, for a total of nine determinations [12] [13].

Ensure proper calibration using freshly prepared standards for each series of measurements to incorporate calibration variability into the assessment.

Data Collection and Analysis

Execute the analytical method following the standardized procedure, with each analyst performing the analysis independently using their own reagents and equipment.

Record all individual results along with the specific conditions (analyst, date, equipment, reagent lot numbers).

Calculate summary statistics for the complete data set:

- Mean (x̄) and standard deviation (SD)

- Relative standard deviation (RSD% = [SD/x̄] × 100)

- Confidence intervals (typically 95%)
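The summary statistics listed above can be sketched with the Python standard library alone. The six results are hypothetical, and the Student's t critical value is passed in by the caller (2.571 is the two-sided 95% value for 5 degrees of freedom), since the standard library has no t-distribution.

```python
import math
import statistics


def summarize(results, t_critical):
    """Mean, sample SD, RSD% and a t-based confidence interval for the mean."""
    n = len(results)
    mean = statistics.mean(results)
    sd = statistics.stdev(results)  # sample SD, divisor n - 1
    rsd = 100.0 * sd / mean
    half_width = t_critical * sd / math.sqrt(n)
    return {
        "n": n,
        "mean": mean,
        "sd": sd,
        "rsd_percent": rsd,
        "ci": (mean - half_width, mean + half_width),
    }


# Hypothetical data set: six independent determinations (e.g. mg/kg).
results = [10.1, 9.9, 10.2, 10.0, 9.8, 10.0]
T_CRIT_95_DF5 = 2.571  # two-sided 95% critical value, 5 degrees of freedom
summary = summarize(results, T_CRIT_95_DF5)
print(summary)
```

The critical value has to match the actual degrees of freedom (n - 1); for a different number of determinations, look it up in a t table or compute it with a statistics package.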

Perform variance component analysis using ANOVA to partition variability sources:

- Between-analyst variance

- Between-day variance

- Residual variance (repeatability)
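A simplified version of this partition is sketched below for a balanced one-way layout, in which every analyst/day combination is treated as one "run" with the same number of replicates. This is an assumption made for brevity: separating the analyst and day contributions individually would need a crossed or nested ANOVA model. The data are hypothetical.

```python
import math
import statistics


def one_way_variance_components(runs):
    """Balanced one-way ANOVA: split variance into within-run and between-run parts."""
    k = len(runs)
    n = len(runs[0])
    if k < 2 or n < 2 or any(len(r) != n for r in runs):
        raise ValueError("need at least 2 runs, each with the same number (>= 2) of replicates")
    run_means = [statistics.mean(r) for r in runs]
    grand_mean = statistics.mean(run_means)
    # Mean squares from the classical ANOVA table.
    ms_within = sum(statistics.variance(r) for r in runs) / k
    ms_between = n * sum((m - grand_mean) ** 2 for m in run_means) / (k - 1)
    var_within = ms_within  # repeatability variance
    # Negative estimates are truncated to zero, a common convention.
    var_between = max(0.0, (ms_between - ms_within) / n)
    sd_ip = math.sqrt(var_within + var_between)
    return {
        "grand_mean": grand_mean,
        "var_within": var_within,
        "var_between": var_between,
        "sd_ip": sd_ip,
        "rsd_ip_percent": 100.0 * sd_ip / grand_mean,
    }


# Hypothetical results: three runs (analyst/day combinations), three replicates each.
runs = [
    [10.0, 10.2, 9.8],
    [10.4, 10.6, 10.2],
    [9.7, 9.9, 9.5],
]
components = one_way_variance_components(runs)
print(components)
```

In this made-up data set the between-run component (0.11) is larger than the repeatability component (0.04), which would suggest looking at day-to-day or analyst-to-analyst effects before trying to tighten the measurement step itself.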

Acceptance Criteria Evaluation

Compare the calculated RSD% against pre-defined acceptance criteria based on method requirements and industry standards. For food and pharmaceutical methods, typical acceptance criteria for RSD% at the target concentration level are:

Table 2: Typical Precision Acceptance Criteria for Analytical Methods

| Analytical Method Type | Repeatability (RSD%) | Intermediate Precision (RSD%) | Reference |

|---|---|---|---|

| Assay of active ingredient | ≤ 1.0% | ≤ 2.0% | [6] |

| Impurity quantification | ≤ 5.0% | ≤ 8.0% | [12] |

| Food component analysis | ≤ 2.0% | ≤ 5.0% | [14] |

| Near-limit quantitation | ≤ 10.0% | ≤ 15.0% | [13] |

For the method to be considered successfully validated for intermediate precision, the RSD% should not exceed the pre-defined acceptance criteria, and no statistically significant differences should be observed between different analysts or days when tested using appropriate statistical methods (e.g., Student's t-test, F-test) [13].

Case Study: HPLC Analysis of Quercitrin in Capsicum annuum

A practical example of precision assessment comes from the validation of an HPLC method for quantifying quercitrin in Capsicum annuum cultivar Dangjo extracts [14]. This case study exemplifies the application of precision hierarchy in food methods research.

Experimental Conditions:

- Matrix: Pepper extracts

- Analyte: Quercitrin (flavonoid glycoside)

- Instrumentation: HPLC with diode array detection

- Concentration range: 2.5-15.0 μg/mL

Precision Assessment Results:

Table 3: Precision Data from Quercitrin HPLC Method Validation

| Precision Level | Conditions | RSD% Obtained | Acceptance Criteria | Assessment |

|---|---|---|---|---|

| Repeatability | Same analyst, same day, five replicates | 0.50% - 5.95% | ≤ 8.0% | Acceptable [14] |

| Intermediate Precision | Different days, different operators | Within 8.0% | ≤ 8.0% | Acceptable [14] |

| Reproducibility | Not assessed (single-laboratory validation) | N/A | N/A | Not required |

The validation demonstrated that the HPLC method produced precise results across different operators and days, with RSD values within the AOAC International criterion of ≤8% [14]. The study highlights how precision validation provides scientific evidence that a method will perform reliably during routine use in quality control laboratories.

The Scientist's Toolkit: Essential Materials for Precision Studies

Table 4: Essential Research Reagent Solutions and Materials for Precision Assessment

| Material/Reagent | Function in Precision Assessment | Specification Requirements | Critical Considerations |

|---|---|---|---|

| Certified Reference Materials | Provides accepted reference value for accuracy and precision assessment [10] | Certified purity with documented uncertainty | Traceability to national/international standards |

| HPLC-grade solvents | Mobile phase preparation in chromatographic methods | Low UV absorbance, high purity | Consistent supplier to minimize batch-to-batch variability |

| Standard compounds | Calibration standards and spike recovery studies | Documented purity and identity | Verify stability and storage conditions |

| Characterized sample matrix | Representative blank matrix for recovery studies | Similar to routine samples in composition | Homogeneity and stability documentation |

| Stable control samples | Monitoring precision over time | Homogeneous, stable, representative | Aliquoting for consistent long-term use |

| Column performance tests | HPLC system suitability testing | Documented efficiency, tailing factor | Consistent column lot or equivalent specifications |

The precision hierarchy of repeatability, intermediate precision, and reproducibility provides a systematic framework for evaluating the reliability of analytical methods across increasingly variable conditions. For food methods researchers and drug development professionals, understanding these interrelationships is essential for designing appropriate validation protocols that demonstrate method suitability for intended use. Recent updates to regulatory guidelines, including ICH Q2(R2), have further emphasized the importance of precision assessment while providing flexibility for novel analytical technologies [1]. By implementing the detailed experimental protocols outlined in this application note and utilizing appropriate materials from the scientist's toolkit, researchers can generate robust precision data that supports method validation, transfer, and ultimately ensures the quality and safety of food and pharmaceutical products.

Why Precision is Non-Negotiable in Food Quality and Safety Testing

In the field of food quality and safety testing, precision is a fundamental requirement rather than a mere desirable attribute. It represents the cornerstone of reliable analytical results, directly impacting public health, regulatory compliance, and brand integrity. The World Health Organization estimates that approximately 600 million people fall ill annually from eating contaminated food, underscoring the critical importance of accurate testing methodologies [15]. Precision in analytical chemistry ensures that food testing methods yield consistent, reproducible results across different laboratories, analysts, and equipment, forming the scientific foundation upon which food safety decisions are based.

The concept of precision extends beyond simple repeatability to encompass intermediate precision – a more comprehensive measure of variability within a laboratory under changing conditions such as different days, analysts, or equipment [6]. This distinction is crucial for understanding real-world performance of analytical methods in food testing environments where multiple variables can influence results. Without demonstrated precision, the validity of food safety testing becomes questionable, potentially allowing contaminated products to reach consumers or resulting in unnecessary product recalls that damage brand reputation and consumer trust.

Defining Precision Parameters in Food Testing

The Precision Hierarchy: Key Definitions

In analytical method validation for food testing, precision is systematically evaluated at multiple levels to ensure comprehensive reliability assessment. The precision hierarchy consists of three well-defined components, each serving a distinct purpose in method validation:

Repeatability (intra-assay precision): Measures the precision under the same operating conditions over a short time interval, performed by the same analyst using the same equipment [13]. This represents the best-case scenario for method performance, indicating the inherent variability of the method under controlled conditions.

Intermediate Precision: Measures within-laboratory variations due to random events such as different days, different analysts, or different equipment [6] [13]. Unlike repeatability, intermediate precision reflects real-world internal lab consistency while maintaining the same analytical method, making it particularly valuable for assessing routine operational performance.

Reproducibility (inter-laboratory precision): Represents the precision between different laboratories, typically assessed through collaborative studies [13]. This captures the maximum expected method variability and is especially important for standardized methods used across multiple facilities or for regulatory compliance.

Intermediate Precision: The Bridge Between Ideal and Real-World Conditions

Intermediate precision occupies a particularly important position in the precision hierarchy for food testing laboratories. It specifically measures an analytical method's variability within a single laboratory across different days, operators, or equipment [6]. Unlike repeatability (which uses identical conditions) or reproducibility (which involves different labs), intermediate precision reflects realistic internal lab variability that would be expected during routine operations.

The calculation of intermediate precision involves determining both within-run and between-run variability using the formula: σIP = √(σ²within + σ²between) [6]. This combined standard deviation provides a more comprehensive understanding of method performance under normal laboratory variations. The results are typically expressed as relative standard deviation (RSD%), with industry-specific acceptance criteria determining whether the precision is adequate for the intended purpose. Proper evaluation of intermediate precision requires structured experimental design and careful documentation to ensure all potential sources of variation are adequately captured and assessed.

Experimental Protocols for Precision Determination

Comprehensive Protocol for Assessing Intermediate Precision

Objective: To determine the intermediate precision of an analytical method for quantifying target analytes (e.g., contaminants, nutrients, or additives) in food matrices.

Scope: This protocol applies to chromatographic, spectroscopic, and other quantitative analytical methods used in food testing laboratories.

Experimental Design:

- Sample Preparation: Prepare a homogeneous bulk sample of the food matrix fortified with the target analyte at a concentration within the method's validated range. For contamination testing, this may involve spiking with known quantities of pathogens or chemical contaminants. Divide into aliquots for analysis under varying conditions [13].

Variable Conditions:

- Multiple Analysts: Involve at least two qualified analysts who independently prepare standards and samples using different reagent lots [13].

- Different Instruments: Utilize multiple instruments of the same type but with different calibrations and maintenance histories.

- Temporal Variation: Conduct analyses on different days (minimum of 3 non-consecutive days) to account for environmental fluctuations [6].

Data Collection:

- Each analyst should perform a minimum of 6 replicate determinations at 100% of the test concentration, or a minimum of 9 determinations across three concentration levels covering the specified range (three concentrations, three replicates each) [13].

- Document all relevant parameters including instrument conditions, reagent lots, preparation times, and environmental conditions (temperature, humidity) that might affect results.

Statistical Analysis:

- Calculate the mean, standard deviation, and relative standard deviation (%RSD) for each set of conditions.

- Apply the formula for intermediate precision: σIP = √(σ²within + σ²between) [6].

- Perform appropriate statistical tests (e.g., Student's t-test, ANOVA) to determine if significant differences exist between results obtained under different conditions.

Acceptance Criteria: Industry standards typically require %RSD values ≤2.0% for excellent precision, 2.1-5.0% for acceptable precision, 5.1-10.0% for marginal precision, and >10.0% for unacceptable precision, though these ranges may vary based on the specific application and analyte [6].
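The bands above can be turned into a small helper for reporting. This is only a sketch: the function name is hypothetical, and the thresholds are treated as continuous upper bounds (so 2.05% counts as "acceptable"), which is an assumption about how the gaps between the stated ranges should be handled.

```python
def classify_rsd(rsd_percent: float) -> str:
    """Map an RSD% value onto the precision bands quoted in the protocol."""
    if rsd_percent < 0:
        raise ValueError("RSD% cannot be negative")
    if rsd_percent <= 2.0:
        return "excellent"
    if rsd_percent <= 5.0:
        return "acceptable"
    if rsd_percent <= 10.0:
        return "marginal"
    return "unacceptable"


# Hypothetical RSD% values from four different methods.
for value in (1.4, 4.5, 7.0, 12.3):
    print(value, classify_rsd(value))
```

Because the appropriate limits depend on the analyte and concentration level, the thresholds would normally come from the validation plan rather than being hard-coded.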

Protocol for Establishing Repeatability (Intra-Assay Precision)

Objective: To determine the short-term precision of an analytical method under identical conditions.

Experimental Design:

- Sample Preparation: Prepare a minimum of six sample replicates at 100% of the test concentration or nine determinations across three concentration levels from a homogeneous sample source [13].

Analysis Conditions:

- Single analyst using the same instrument

- Same reagent lots and standards

- Consecutive analyses within a short time frame (typically within one day)

Data Analysis:

- Calculate mean, standard deviation, and %RSD of the results

- Compare %RSD to established acceptance criteria

Acceptance Criteria: The acceptance limit for repeatability %RSD should typically be more stringent than the limit for intermediate precision, often ≤2.0% for critical analytes [13].

Data Interpretation and Method Validation

The results from precision studies must be thoroughly evaluated to determine method suitability:

- Comparative Analysis: Compare precision metrics (%RSD) across different concentration levels to identify potential concentration-dependent effects.

- Trend Analysis: Examine data for systematic trends or outliers that might indicate specific sources of variability.

- Acceptance Criteria Evaluation: Assess whether precision metrics fall within predefined acceptance criteria based on the method's intended use and industry standards [6].

Precision Assessment Workflow: This diagram illustrates the systematic process for evaluating method precision, from initial planning through final validation decision.

Quantitative Data Presentation: Precision Metrics and Standards

Precision Acceptance Criteria for Food Testing Methods

Table 1: Precision Performance Standards for Analytical Methods in Food Testing

| Precision Level | Assessment Conditions | Typical Acceptance Criteria (%RSD) | Application Context |

|---|---|---|---|

| Repeatability | Same analyst, same day, same instrument | ≤ 2.0% | Method capability assessment; optimal performance baseline |

| Intermediate Precision | Different days, analysts, or equipment within same lab | ≤ 5.0% | Routine operational consistency; real-world variability assessment |

| Reproducibility | Different laboratories | ≤ 10.0% | Method transfer validation; multi-site studies |

Data compiled from analytical method validation guidelines [6] [13]

Example Precision Data for Food Contaminant Analysis

Table 2: Representative Intermediate Precision Data for Pathogen Detection in Dairy Products

| Analysis Condition | Sample Matrix | Target Pathogen | Spike Level (CFU/mL) | Mean Recovery (n=6) | Standard Deviation | %RSD |

|---|---|---|---|---|---|---|

| Analyst A, Day 1 | Pasteurized Milk | Listeria monocytogenes | 100 | 95.2 | 3.8 | 4.0 |

| Analyst B, Day 1 | Pasteurized Milk | Listeria monocytogenes | 100 | 92.7 | 4.1 | 4.4 |

| Analyst A, Day 2 | Pasteurized Milk | Listeria monocytogenes | 100 | 94.5 | 3.9 | 4.1 |

| Analyst B, Day 2 | Pasteurized Milk | Listeria monocytogenes | 100 | 93.1 | 4.3 | 4.6 |

| Intermediate Precision (all conditions combined) | Pasteurized Milk | Listeria monocytogenes | 100 | 93.9 | 4.2 | 4.5 |

Data adapted from dairy safety research and precision methodology guidelines [16] [6] [13]

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Precision Studies in Food Testing

| Reagent/Material | Function in Precision Assessment | Critical Quality Attributes | Application Example |

|---|---|---|---|

| Certified Reference Materials | Establish accuracy and precision baseline; method calibration | Certified purity, stability, traceability | Quantification of contaminants, nutrients, or additives |

| Matrix-Matched Standards | Account for matrix effects on precision; improve accuracy | Similar composition to sample matrix; minimal interference | Analysis of pesticides in produce; antibiotics in meat |

| Quality Control Materials | Monitor method performance over time; detect precision drift | Stability, homogeneity, representative concentration | Daily system suitability testing; batch quality verification |

| Stable Isotope-Labeled Internal Standards | Compensate for sample preparation variations; improve precision | Chemical similarity to analyte; minimal natural abundance | LC-MS/MS analysis of mycotoxins, veterinary drug residues |

| Proficiency Testing Samples | Assess inter-laboratory precision (reproducibility) | Homogeneity, stability, assigned values with uncertainty | Laboratory accreditation; method performance verification |

Information synthesized from analytical method validation guidelines and food testing practices [6] [13]

Regulatory Context and Industry Implications

Precision in food testing is not merely a scientific concern but a regulatory requirement with significant implications for public health protection. The FDA's Human Foods Program (HFP), launched in 2024, emphasizes a risk-based approach to food safety that relies heavily on precise analytical methods [17]. Their FY 2025 priorities include strengthening regulatory oversight through improved traceability tools and advancing scientific capabilities – both dependent on precise measurement systems.

The Global Food Safety Initiative (GFSI) and other international standards require demonstrated method precision as part of food safety management systems [15]. These standards acknowledge that without established precision, food safety testing cannot reliably detect contaminants, verify sanitation effectiveness, or ensure product composition matches labeling claims.

From an industry perspective, precision directly impacts business operations and brand protection. Surveys indicate over 40% of consumers would switch brands after a serious recall, highlighting the economic importance of precise testing methods that prevent such incidents [15]. Food manufacturers like Nestlé emphasize that "food safety is non-negotiable" and implement rigorous quality assurance programs that depend on precise testing methodologies throughout their supply chains [18].

Precision Hierarchy & Impact: This diagram illustrates the relationship between different precision levels and their significance in various food safety domains.

Precision in food quality and safety testing represents a fundamental requirement rather than an optional enhancement. As the foundation of reliable analytical results, precision – particularly intermediate precision – provides the scientific basis for detecting contaminants, verifying nutritional content, ensuring regulatory compliance, and protecting public health. The experimental protocols and quantitative assessments outlined in this document provide a framework for establishing and verifying the precision of food testing methods, while the regulatory context underscores their critical importance to food safety systems.

Without demonstrated precision across its multiple dimensions, food testing cannot fulfill its essential role in preventing foodborne illness and ensuring consumer confidence. The non-negotiable status of precision reflects its position as an indispensable component of modern food safety management, supporting the entire food supply chain from production to consumption. As testing technologies evolve and regulatory standards advance, the fundamental requirement for precise measurement will remain constant, continuing to serve as the bedrock of food safety science.

From Theory to Practice: Calculating and Establishing Precision for Food Methods

Designing a Robust Experiment for Repeatability Testing

Repeatability testing is a fundamental component of sensory evaluation in experimental foods, enabling researchers to determine whether a perceivable difference exists between two or more food products under identical conditions [19]. This methodology is essential in product development, quality control, and reformulation, as it helps food manufacturers identify the impact of changes in ingredients, processing, or packaging on the sensory characteristics of their products [19]. Within the broader context of precision testing for intermediate precision in food methods research, establishing robust repeatability protocols ensures that analytical measurements remain consistent when performed multiple times within the same laboratory using the same equipment, operators, and short time intervals. The importance of repeatability lies in its ability to provide objective and reliable data on the sensory characteristics of food products, which is critical for ensuring product quality and consistency across food and drug development industries [19].

The distinction between repeatability and reproducibility is crucial for research design. Repeatability refers to the likelihood that, having produced one result from an experiment, you can try the same experiment with the same setup and produce that exact same result [20]. Reproducibility, meanwhile, measures whether results in a paper can be attained by a different research team using the same methods [20]. For food methods research, this distinction is particularly important when validating analytical techniques for regulatory compliance or quality assurance protocols, where both internal consistency (repeatability) and external validity (reproducibility) must be established.

Foundational Concepts and Definitions

Key Terminology

- Repeatability: A measure of the likelihood that having produced one result from an experiment, you can try the same experiment with the same setup and produce that exact same result [20]. It's a way for researchers to verify that their own results are true and are not just chance artifacts.

- Intermediate Precision: Variation within the same laboratory under different operational conditions (different days, different analysts, different equipment).

- Reproducibility: Variation between different laboratories following the same methodology [20].

- Discrimination Testing: A type of sensory evaluation that aims to detect whether a difference exists between two or more food samples [19].

Standardized Protocols in Food Analysis

The development of standardized analytical protocols is revolutionizing food composition analysis. Initiatives like the Periodic Table of Food Initiative (PTFI) are addressing critical challenges in repeatability through four key areas of standardization [21]:

- Standardized multi-omics protocols that are publicly accessible

- Custom internal standards to accompany PTFI methods for data harmonization across labs

- Expanding cloud-based chemical libraries for confident annotation of features detected in foods

- Centralized data pipelines allowing labs to upload raw data for processing, retention time alignment, and final data assembly [21]

This standardized approach enables distributable analytical methods to labs worldwide, facilitating participation in food composition analysis and contribution to building comprehensive food biomolecular composition databases [21].

Experimental Design for Repeatability Assessment

Common Difference Testing Methods

Several difference testing methods are commonly used in experimental foods for repeatability assessment [19]:

- Triangle Test: Presenting three samples to a panelist, two of which are identical, and asking them to identify the odd sample

- Duo-Trio Test: Presenting three samples, one reference and two test samples, asking panelists to identify which test sample matches the reference

- Paired Comparison Test: Presenting two samples to a panelist and asking them to evaluate a specific attribute

Table 1: Comparison of Difference Testing Methods

| Method | Samples Presented | Task | Probability of Chance | Best Use Cases |

|---|---|---|---|---|

| Triangle Test | 3 samples (2 identical, 1 different) | Identify the odd sample | 1/3 | General difference detection |

| Duo-Trio Test | 3 samples (1 reference, 2 test samples) | Identify which matches reference | 1/2 | When reference standard is available |

| Paired Comparison Test | 2 samples | Evaluate specific attribute | 1/2 | Directed attribute comparison |

Statistical Foundations

The statistical basis for difference testing relies on binomial probability distributions. For the triangle test, the probability of a panelist correctly identifying the odd sample by chance is given by:

\[ P = \frac{1}{3} \]

The number of correct responses required to establish a significant difference between the samples can be determined using a binomial distribution. The probability of x correct responses out of n trials is given by:

\[ P(x) = \binom{n}{x} \left(\frac{1}{3}\right)^x \left(\frac{2}{3}\right)^{n-x} \]

For example, with 50 panelists participating in a triangle test, the number of correct responses required to establish a significant difference at a 5% significance level can be calculated using:

\[ P(X \geq x) = 1 - \sum_{i=0}^{x-1} \binom{50}{i} \left(\frac{1}{3}\right)^i \left(\frac{2}{3}\right)^{50-i} \leq 0.05 \]

Using this formula, the smallest x that satisfies the inequality is 23, so at least 23 correct responses out of 50 indicate a statistically significant difference between samples [19].
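The binomial calculation above can be reproduced in a few lines. The sketch below searches for the smallest number of correct responses whose upper-tail probability under pure guessing (p = 1/3) does not exceed the chosen significance level; the function names are illustrative.

```python
from math import comb


def upper_tail(n: int, x: int, p: float) -> float:
    """P(X >= x) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(x, n + 1))


def min_correct(n: int, alpha: float = 0.05, p_chance: float = 1 / 3):
    """Smallest count of correct responses that is significant at level alpha.

    Returns None when even n correct out of n is not significant (very small panels).
    """
    for x in range(n + 1):
        if upper_tail(n, x, p_chance) <= alpha:
            return x
    return None


print(min_correct(50, alpha=0.05))  # 23
print(min_correct(25, alpha=0.05))  # 13
```

Changing `p_chance` to 1/2 gives the corresponding critical values for duo-trio and paired comparison tests, which have a one-in-two chance of a correct guess.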

Detailed Experimental Protocols

Triangle Test Methodology

Objective: To determine whether a perceivable difference exists between two products.

Materials:

- Test samples (prepared identically except for the variable being tested)

- Neutral palate cleansers (unsalted crackers, water)

- Sample presentation containers (identical for all samples)

- Data collection sheets or electronic data capture system

Procedure:

- Sample Preparation: Prepare samples following standardized protocols, controlling for extraneous variables such as temperature, portion size, and presentation [19].

- Sample Coding: Label each sample with random three-digit codes.

- Presentation Order: Randomize the presentation order of the triplet (AAB, ABA, BAA, BBA, BAB, ABB) across panelists.

- Instructions: Provide panelists with clear instructions to identify the odd sample.

- Palate Cleansing: Mandate palate cleansing between samples.

- Data Collection: Record correct/incorrect identifications.

- Statistical Analysis: Compare results to binomial probability tables to determine significance.
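The coding and randomization steps of this procedure could be scripted along the following lines. This is a sketch under stated assumptions: the plan structure and function name are hypothetical, the six serving orders are balanced as evenly as the panel size allows, and each panelist receives three distinct random three-digit codes.

```python
import random

TRIPLETS = ["AAB", "ABA", "BAA", "BBA", "BAB", "ABB"]


def build_serving_plan(n_panelists: int, seed=None):
    """Assign a balanced, shuffled serving order and blinding codes to each panelist."""
    rng = random.Random(seed)
    # Cycle through the six orders so each is used (almost) equally often, then shuffle.
    orders = [TRIPLETS[i % len(TRIPLETS)] for i in range(n_panelists)]
    rng.shuffle(orders)
    plan = []
    for panelist, order in enumerate(orders, start=1):
        codes = rng.sample(range(100, 1000), k=3)  # three distinct 3-digit codes
        odd_product = "A" if order.count("A") == 1 else "B"
        plan.append(
            {
                "panelist": panelist,
                "order": order,
                "codes": codes,
                "odd_position": order.index(odd_product) + 1,  # 1-based answer key
            }
        )
    return plan


plan = build_serving_plan(12, seed=1)
for row in plan[:3]:
    print(row)
```

Fixing the seed makes the plan reproducible for the study record; the answer key (`odd_position`) should be stored separately from the sheets given to panelists.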

Statistical Analysis:

- For 25 panelists, minimum of 13 correct identifications for significance (α=0.05)

- For 50 panelists, minimum of 23 correct identifications for significance (α=0.05) [19]

Sample Preparation Guidelines for Optimal Repeatability

Proper sample preparation is crucial for ensuring testing conditions are optimal and results are reliable [19]:

- Control of Variables: Use identical samples except for the variable being tested

- Environmental Control: Control for extraneous variables such as temperature and humidity

- Consistent Presentation: Ensure samples are prepared and presented consistently

- Blinding: Implement blind testing conditions to prevent bias

- Replication: Include sufficient replicates to account for biological and technical variation

Recent advances in sample preparation techniques include compressed fluids and novel green solvents that enable sustainable extraction while maintaining analytical precision [22]. Methods such as Pressurized Liquid Extraction (PLE), Supercritical Fluid Extraction (SFE), and Gas-Expanded Liquid Extraction (GXL) offer high selectivity, shorter extraction times, and lower environmental impact compared to traditional solvent-based methods [22].

Data Analysis and Interpretation

Statistical Methods for Repeatability Assessment

The choice of statistical method for data analysis depends on the difference testing method used [19]:

- Binomial Distribution: Appropriate for triangle and duo-trio tests

- t-tests or ANOVA: Suitable for paired comparison tests with continuous data

- Confidence Intervals: Calculate 95% confidence intervals for difference thresholds

- Power Analysis: Determine appropriate sample sizes for detecting meaningful differences
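For the power analysis item, one common way to reason about triangle-test panel size is the guessing model, in which an assumed proportion of panelists truly detect the difference and the rest guess with probability 1/3. The sketch below uses that model; the proportion of discriminators, the target power, and the function names are all assumptions chosen for illustration.

```python
from math import comb


def upper_tail(n: int, x: int, p: float) -> float:
    """P(X >= x) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(x, n + 1))


def critical_value(n: int, alpha: float):
    """Smallest significant number of correct responses under chance (p = 1/3)."""
    for x in range(n + 1):
        if upper_tail(n, x, 1 / 3) <= alpha:
            return x
    return None


def triangle_power(n: int, p_discriminators: float, alpha: float = 0.05) -> float:
    """Power of a triangle test when a given proportion of panelists truly discriminate."""
    x_crit = critical_value(n, alpha)
    if x_crit is None:
        return 0.0
    # Discriminators always answer correctly; everyone else guesses with p = 1/3.
    p_correct = p_discriminators + (1 - p_discriminators) / 3
    return upper_tail(n, x_crit, p_correct)


def smallest_panel(p_discriminators: float, alpha: float = 0.05,
                   target_power: float = 0.80, n_max: int = 200):
    """First panel size reaching the target power (power is not strictly monotonic in n)."""
    for n in range(3, n_max + 1):
        if triangle_power(n, p_discriminators, alpha) >= target_power:
            return n
    return None


# Hypothetical planning question: 30% discriminators, alpha = 0.05, 80% power.
print(triangle_power(50, 0.30))
print(smallest_panel(0.30))
```

Because the binomial distribution is discrete, power rises in a sawtooth pattern as panelists are added, so it is worth checking a few panel sizes around the returned value rather than relying on a single number.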

Table 2: Minimum Number of Correct Responses for Statistical Significance in Triangle Tests

| Number of Panelists | Minimum Correct for Significance (α=0.05) | Minimum Correct for Significance (α=0.01) |

|---|---|---|

| 20 | 11 | 13 |

| 25 | 13 | 15 |

| 30 | 15 | 17 |

| 40 | 19 | 21 |

| 50 | 23 | 26 |

Interpretation Guidelines

When interpreting repeatability test results, consider [19]:

- The significance level (α) and the power of the test (1-β)

- The number of panelists and the number of correct responses

- The practical significance of the results in the context of the product and research question

- The sensitivity of the panel and testing conditions

- Comparison with historical data or predefined acceptability thresholds

Advanced Applications in Food Methods Research

Integration with Multi-Omics Approaches

Modern food analysis increasingly incorporates multi-omics data to understand food composition at a systems level. The PTFI approach demonstrates how standardized tools can map food quality through [21]:

- Untargeted metabolomics for comprehensive small molecule profiling

- Lipidomics for detailed fat composition analysis

- Ionomics for elemental composition mapping

- Fatty acid analysis for specific lipid characterization

- Proteomics and glycomics (in development) for protein and carbohydrate profiling

This integrated approach enables researchers to move beyond traditional nutrient analysis to understand the complex biomolecular composition of foods and how it varies based on agricultural practices, processing methods, and environmental factors [21].

Addressing the Reproducibility Crisis in Food Science

The broader scientific community has recognized a "reproducibility crisis" affecting many disciplines, including food science [20]. A 2015 paper by the Open Science Collaboration examined 100 psychology experiments published in high-ranking, peer-reviewed journals and found that only 36% of the replications produced statistically significant results; even when original and replication data were combined, only 68% of the effects remained statistically significant [20]. To enhance repeatability and reproducibility in food methods research, implement these evidence-based practices:

- Comprehensive Documentation: Maintain detailed records of all methodological details

- Method Standardization: Adopt and validate standardized protocols across laboratories

- Reference Materials: Incorporate certified reference materials for method validation

- Blinding and Randomization: Implement appropriate experimental controls

- Statistical Rigor: Conduct power calculations and report effect sizes with confidence intervals

- Collaborative Verification: Engage multiple researchers in data analysis and interpretation

Research Reagent Solutions for Repeatability Testing

Table 3: Essential Materials and Reagents for Repeatability Testing in Food Analysis

| Item | Function | Application Notes |
|---|---|---|
| Internal Standards | Enable data harmonization across different laboratories and instruments [21] | PTFI provides custom internal standards to accompany standardized methods |
| Deep Eutectic Solvents (DES) | Green extraction media for sample preparation [22] | Improve biodegradability and safety while maintaining extraction efficiency |
| Compressed Fluid Extraction Systems | Sustainable sample preparation using pressurized liquids [22] | PLE, SFE, and GXL systems reduce environmental impact while providing high selectivity |
| Reference Materials | Quality control and method validation | Certified reference materials with known composition for analytical calibration |
| Standardized Chemical Libraries | Confident annotation of features detected in foods [21] | Cloud-based libraries allow consistent compound identification across labs |
| Palate Cleansers | Neutralize sensory perception between samples | Unsalted crackers, water, or mild solutions to prevent cross-sample contamination |
| Sample Presentation Containers | Consistent sensory evaluation | Identical containers, covers, and serving utensils to minimize non-product cues |

Workflow Visualization

[Figure: Repeatability Testing Workflow]

[Figure: Multi-Omics Food Analysis Pipeline]

A Step-by-Step Guide to Calculating Intermediate Precision

In the realm of analytical chemistry, particularly within food methods research and drug development, the reliability of data is paramount. Intermediate precision is a critical validation parameter that measures the consistency of analytical results under varying conditions within a single laboratory over time [6]. It bridges the gap between repeatability (which measures variability under identical conditions) and reproducibility (which measures variability between different laboratories) [13] [6].

For researchers and scientists, establishing intermediate precision provides confidence that an analytical method will produce dependable results despite normal, expected fluctuations in day-to-day laboratory operations, such as changes in analysts, equipment, or calibration schedules [6]. This guide provides a detailed, step-by-step protocol for calculating intermediate precision, framed within the context of precision testing for food and pharmaceutical methods.

Key Definitions and Concepts

Understanding the hierarchy of precision terms is essential for proper method validation:

- Repeatability: Also known as intra-assay precision, it measures the precision under the same operating conditions over a short interval of time [13] [6]. It represents the best-case scenario for a method's variability.

- Intermediate Precision: Measures the within-laboratory variation, specifically the impact of changes such as different days, different analysts, or different equipment [13] [6]. It reflects the method's robustness to realistic internal lab variations.

- Reproducibility: Represents the precision obtained between different laboratories, typically assessed through collaborative studies [13] [6]. It captures the maximum expected method variability.

The following workflow illustrates how these precision parameters are sequentially determined during method validation and how their data feeds into the final calculation of intermediate precision:

Step-by-Step Calculation Protocol

Experimental Design and Data Collection

A structured experimental design is crucial for obtaining a meaningful intermediate precision estimate.

Define Variables: Systematically vary key factors such as:

- Day: Perform analysis on at least two different, non-consecutive days.

- Analyst: Involve at least two different qualified analysts.

- Instrument: Use different calibrated instruments of the same type, if available [6].

Prepare Samples: Analyze a minimum of six determinations at 100% of the test concentration, or a minimum of nine determinations covering the specified range (e.g., three concentration levels with three repetitions each) [13]. Ensure samples are homogeneous and stable throughout the study.

Execute the Study: Each analyst should prepare their own standards and solutions and perform the analysis according to the standardized procedure on their designated day and equipment [13]. Record all raw data values, not averages, to capture the true variability.
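The design steps above can be sketched as a small run plan. This is a minimal illustration, assuming a fully crossed design (every analyst measures on every day) with an equal number of replicates; the function name `build_run_plan` and the `minimum` parameter are hypothetical and only mirror the six-determination minimum quoted above.

```python
from itertools import product


def build_run_plan(days, analysts, replicates, minimum=6):
    """List every planned determination for a crossed day x analyst design.

    `minimum` mirrors the six-determination minimum mentioned in the text;
    it is an illustrative default, not a regulatory rule encoded here.
    """
    plan = [
        {"day": day, "analyst": analyst, "replicate": rep}
        for day, analyst, rep in product(days, analysts, range(1, replicates + 1))
    ]
    if len(plan) < minimum:
        raise ValueError(
            f"only {len(plan)} determinations planned; at least {minimum} expected"
        )
    return plan


# Two days, two analysts, three replicates each: 12 planned determinations.
plan = build_run_plan(days=[1, 2], analysts=["Anna", "Ben"], replicates=3)
for run in plan:
    print(run)
```

Writing the plan down before the study starts makes it easier to confirm that each raw value, not an average, is recorded against its day and analyst.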

The table below outlines a recommended data collection structure for a study involving two analysts over two days:

Table 1: Example Data Collection Structure for Intermediate Precision Study

| Day | Analyst | Sample Result 1 (%) | Sample Result 2 (%) | Sample Result 3 (%) |
|---|---|---|---|---|
| 1 | Anna | 98.7 | 99.0 | 98.5 |
| 1 | Ben | 99.1 | 98.8 | 99.2 |
| 2 | Anna | 98.5 | 98.9 | 98.6 |
| 2 | Ben | 98.9 | 98.4 | 98.7 |

Statistical Calculation

Intermediate precision is calculated by combining the variance components from within-group and between-group variations.

Calculate Variance Components:

- Within-group variance (σ²within): Calculate the variance of results obtained under identical conditions (e.g., all results from Analyst Anna on Day 1). Pool these variances across all groups.

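- Between-group variance (σ²between): Calculate the variance between the mean values of the different groups (e.g., the variance between the mean of Anna's results and the mean of Ben's results) [6]. Note that the raw variance of the group means still contains a share of the within-group scatter (σ²within/n for n replicates per group), so a one-way ANOVA estimates this component as (MSbetween − MSwithin)/n, set to zero if the result is negative.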

Apply the Formula: Combine the variance components using the following formula to obtain the intermediate precision standard deviation:

\[ \sigma_{IP} = \sqrt{\sigma^2_{within} + \sigma^2_{between}} \] [6]

Express as Relative Standard Deviation: For easier interpretation and comparison across methods and concentrations, convert the standard deviation to a Relative Standard Deviation (%RSD), also known as the Coefficient of Variation (CV).

\[ \%RSD = \frac{\sigma_{IP}}{\text{Overall Mean}} \times 100 \] [6]
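As a worked illustration, the calculation can be sketched in Python using the Table 1 values. This is one possible implementation, not the procedure prescribed by [6]: it assumes each day/analyst combination is one group, that all groups have the same number of replicates, and that the between-group component is estimated by one-way ANOVA as (MS_between − MS_within)/n, floored at zero. The function name `intermediate_precision` is hypothetical.

```python
import math
from statistics import mean, variance

# Raw results (%) from Table 1; each key is one (day, analyst) group.
results = {
    (1, "Anna"): [98.7, 99.0, 98.5],
    (1, "Ben"): [99.1, 98.8, 99.2],
    (2, "Anna"): [98.5, 98.9, 98.6],
    (2, "Ben"): [98.9, 98.4, 98.7],
}


def intermediate_precision(groups):
    """Return (sd_ip, rsd_percent) for equally sized groups."""
    data = list(groups.values())
    n = len(data[0])  # replicates per group
    if any(len(g) != n for g in data):
        raise ValueError("this sketch assumes equal replicates per group")

    # Pooled within-group variance; for balanced data this equals MS_within.
    var_within = mean(variance(g) for g in data)

    # MS_between for balanced data is n times the variance of the group means.
    ms_between = n * variance([mean(g) for g in data])

    # ANOVA estimate of the between-group component, floored at zero.
    var_between = max(0.0, (ms_between - var_within) / n)

    sd_ip = math.sqrt(var_within + var_between)
    overall_mean = mean(x for g in data for x in g)
    return sd_ip, 100.0 * sd_ip / overall_mean


sd_ip, rsd = intermediate_precision(results)
print(f"sigma_IP = {sd_ip:.3f}, %RSD = {rsd:.2f}")
```

For the Table 1 data this gives an intermediate precision of roughly 0.26 %RSD. Using the raw variance of the group means as σ²between instead would give a slightly larger figure, because that quantity also includes part of the within-group scatter.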

Interpretation and Acceptance Criteria

The calculated %RSD is evaluated against pre-defined, method-specific acceptance criteria. These criteria should be established based on the method's intended use and the typical performance standards for the analyte and matrix.

Table 2: General Guidelines for Interpreting Intermediate Precision (%RSD)

| % RSD Value | Interpretation | Typical Scenarios |
|---|---|---|
| ≤ 2.0% | Excellent precision | Suitable for assay determination of active ingredients. |
| 2.1% - 5.0% | Acceptable precision | Common for many pharmaceutical and food analysis methods. |
| 5.1% - 10.0% | Marginal precision | May be acceptable for impurity quantification or trace analysis. |
| > 10.0% | Unacceptable precision | Investigation required; method is not sufficiently robust. |
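The bands in Table 2 can be encoded as a simple lookup, sketched below. The function name `interpret_rsd` is hypothetical, and the sketch assumes the bands are contiguous (a value such as 2.05% falls into the "Acceptable" band). These are general guidelines only; method-specific acceptance criteria take precedence.

```python
def interpret_rsd(rsd_percent):
    """Map a %RSD value to the general interpretation bands of Table 2."""
    if rsd_percent < 0:
        raise ValueError("%RSD cannot be negative")
    if rsd_percent <= 2.0:
        return "Excellent precision"
    if rsd_percent <= 5.0:
        return "Acceptable precision"
    if rsd_percent <= 10.0:
        return "Marginal precision"
    return "Unacceptable precision"


for value in (0.26, 3.4, 7.5, 12.0):
    print(f"{value:5.2f}% -> {interpret_rsd(value)}")
```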

For statistical evaluation, results from different analysts can be subjected to a Student's t-test to determine if there is a statistically significant difference in the mean values obtained, which would indicate a significant analyst-induced bias [13].
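A minimal sketch of such a comparison, applied to the Table 1 results, is shown below. It assumes a two-sample Student's t-test with pooled variance and pools each analyst's results across both days, which ignores any day effect. The critical value 2.228 is the standard two-sided 5% t-table value for 10 degrees of freedom, hard-coded here to keep the example free of third-party libraries.

```python
import math
from statistics import mean, variance

# Table 1 results (%) pooled per analyst across both days.
anna = [98.7, 99.0, 98.5, 98.5, 98.9, 98.6]
ben = [99.1, 98.8, 99.2, 98.9, 98.4, 98.7]


def pooled_t_statistic(a, b):
    """Two-sample Student's t statistic with pooled variance, plus its df."""
    na, nb = len(a), len(b)
    df = na + nb - 2
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / df
    std_error = math.sqrt(pooled_var * (1 / na + 1 / nb))
    return (mean(a) - mean(b)) / std_error, df


T_CRIT_DF10 = 2.228  # two-sided, alpha = 0.05, 10 degrees of freedom

t_stat, df = pooled_t_statistic(anna, ben)
significant = abs(t_stat) > T_CRIT_DF10
print(f"t = {t_stat:.3f}, df = {df}, significant at 5%: {significant}")
```

For these data the statistic is about -1.03, well inside the critical range, so the example gives no evidence of a difference between the two analysts' means.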

The Scientist's Toolkit: Essential Reagent Solutions

Successful intermediate precision studies rely on high-quality materials and controls. The following table details key reagents and their functions:

Table 3: Essential Research Reagents and Materials for Precision Studies

| Reagent/Material | Function | Critical Quality Attributes |
|---|---|---|
| Certified Reference Standard | Serves as the primary benchmark for accuracy and calibration. | High purity, well-characterized identity and stability, traceable certification. |
| Homogeneous Sample Matrix | Provides a consistent test material for repeated measurements. | Representative of the actual test material, uniform composition, and stability. |
| High-Purity Solvents & Reagents | Used for sample preparation, dilution, and mobile phase preparation. | Appropriate grade (e.g., HPLC-grade), low background interference, consistent lot-to-lot quality. |
| Stable Control Samples | Monitors the performance of the analytical system over time. | Known concentration, behaves similarly to test samples, long-term stability. |
| Standardized Instrument Calibrators | Ensures all instruments used in the study are operating to the same standard. | Traceable, compatible with the analytical method, and stable. |

Factors Affecting Intermediate Precision and Best Practices

Several factors can significantly impact the outcome of an intermediate precision study. Proactive management of these factors is key to success.

- Staff Training and Competency: Analyst expertise is a major contributor to variability. Ensure all personnel are thoroughly trained on the specific analytical method and instruments, and their competency is assessed before the study [6].

- Environmental Control: Laboratory conditions such as temperature, humidity, and air quality can account for over 30% of result variability. Continuously monitor and control these parameters within specified ranges using calibrated devices [6].

- Standardized Procedures: Use well-documented, detailed procedures for every step, from sample preparation and instrument operation to data analysis. This minimizes technique variations between analysts [6].

- Instrument Calibration and Maintenance: Ensure all equipment is properly qualified, calibrated, and maintained according to a strict schedule. This prevents instrument drift from contributing to between-day or between-instrument variability [13].

By systematically addressing these factors and following the detailed protocol outlined in this guide, researchers and scientists can robustly determine the intermediate precision of their analytical methods, ensuring the generation of reliable and defensible data for food methods research and drug development.

Key Factors Influencing Intermediate Precision in the Laboratory

Within the framework of precision testing for food methods research, intermediate precision is a critical validation parameter that demonstrates the reliability of an analytical method under normal variations encountered in a single laboratory over time. It is defined as the precision obtained under varied conditions, such as different days, analysts, and equipment, within the same facility [11]. For researchers and drug development professionals, establishing robust intermediate precision is paramount for ensuring that analytical results are consistent and trustworthy, supporting method transfers and regulatory compliance. This application note delineates the key factors influencing intermediate precision and provides detailed protocols for its evaluation within the context of food analysis.

Defining Precision Tiers: Repeatability, Intermediate Precision, and Reproducibility

Understanding the hierarchy of precision measurements is fundamental. The three primary tiers are:

- Repeatability (intra-assay precision): Expresses the closeness of results obtained under identical conditions—same operator, equipment, and short time period [11]. It represents the smallest possible variation in results.

- Intermediate Precision (within-lab reproducibility): Accounts for random events within a single laboratory over a longer period (e.g., several months), including factors like different analysts, equipment calibration, reagent batches, and columns [13] [11]. Its standard deviation is typically larger than that of repeatability due to these additional variables.

- Reproducibility (between-lab reproducibility): Expresses the precision between measurement results obtained in different laboratories, often assessed during collaborative studies [13] [11].

The relationship between these concepts is hierarchical, with each tier encompassing a broader scope of variability. The following diagram illustrates this relationship and the key factors affecting intermediate precision.

Key Factors Influencing Intermediate Precision

Intermediate precision is affected by a range of laboratory variables. Effectively controlling these factors is essential for maintaining data integrity in food methods research.

Table 1: Key Factors and Their Impact on Intermediate Precision

| Factor Category | Specific Examples | Impact on Analytical Results |
|---|---|---|
| Personnel | Different analysts [13] [11] | Variation in sample preparation technique, interpretation of results, and operational skill. |
| Instrumentation | Different HPLC systems [13], columns [11], spray needles (for LC-MS) [11] | Changes in detector response, retention time, separation efficiency, and sensitivity. |
| Reagents & Consumables | Different calibrants [11], batches of reagents [11], solvents | Shifts in calibration curves, introduction of impurities, and altered reaction kinetics. |
| Temporal Effects | Different days [13] [11], weeks, or months | Long-term instrument drift, environmental fluctuations (temperature, humidity), and degradation of standards/reagents. |

The Scientist's Toolkit: Essential Materials for Precision Studies

Table 2: Key Research Reagent Solutions for Precision Evaluation

| Item | Function in Precision Studies |
|---|---|
| Certified Reference Materials (CRMs) | Provides an accepted reference value to establish accuracy and traceability for method validation [13]. |
| Chromatographic Columns | Different batches or brands are used to test the method's robustness to variations in stationary phase chemistry [11]. |
| High-Purity Solvents & Reagents | Different lots are used to assess their impact on baseline noise, retention time, and detector response [11]. |
| Stable Control Samples | A homogeneous sample, stored appropriately and analyzed over time, is essential for calculating precision metrics like %RSD [13]. |
| Calibration Standards | Different sets of calibrants, prepared independently by different analysts, are used to evaluate intermediate precision [13] [11]. |

Quantitative Assessment and Acceptance Criteria

The evaluation of intermediate precision involves specific statistical calculations based on experimental data. A standard approach involves two analysts independently performing the analysis on different days or with different instruments.

The data is used to calculate the Relative Standard Deviation (RSD), also known as the coefficient of variation, which is the primary metric for precision. The RSD is calculated as:

\[ \mathrm{RSD} = \frac{\text{Standard Deviation}}{\text{Mean}} \times 100\% \]
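A short sketch of this calculation is shown below. It assumes the sample standard deviation (n − 1 in the denominator), which is the usual choice for a small set of replicates; the function name `rsd_percent` and the replicate values are hypothetical and serve only as illustration.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("at least two results are needed")
    avg = mean(values)
    if avg == 0:
        raise ValueError("RSD is undefined for a mean of zero")
    return 100.0 * stdev(values) / avg


# Hypothetical replicate results (%) from one analyst on one day.
replicates = [98.7, 99.0, 98.5, 99.1, 98.8, 99.2]
print(f"%RSD = {rsd_percent(replicates):.2f}")
```

Applied to the results of a single analyst on a single day, this yields a repeatability estimate; intermediate precision additionally requires combining results across analysts, days, or instruments.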

The experimental workflow for determining intermediate precision, from study design to data analysis, is outlined below.