Navigating Inter-Individual Variability in Drug Absorption: From Foundational Causes to Advanced Study Designs

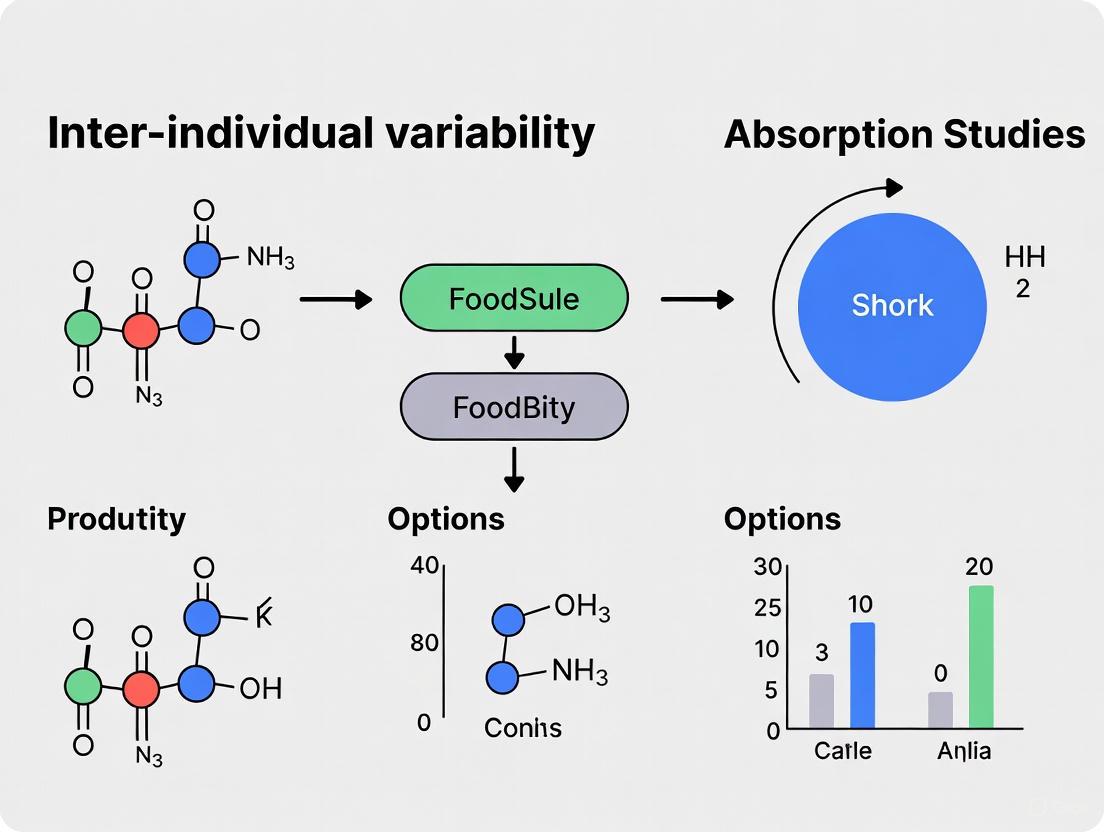

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical challenge of inter-individual variability (IIV) in oral drug absorption studies. It explores the foundational sources of IIV, including genetic polymorphisms, gut microbiota composition, and physiological factors. The content details advanced methodological approaches such as metabolomics and genomic analyses for characterizing variability, alongside practical strategies for optimizing study designs through metabotyping and crossover protocols. Furthermore, it examines validation techniques using case studies from polyphenol and pharmaceutical research, offering a holistic framework for improving prediction accuracy and developing personalized therapeutic strategies.

Unraveling the Core Drivers of Inter-Individual Variability in Drug Absorption

In the field of drug development and personalized medicine, inter-individual variability in drug response presents a significant challenge. A substantial portion of this variability originates from genetic polymorphisms, particularly Single Nucleotide Polymorphisms (SNPs), in genes governing the Absorption, Distribution, Metabolism, and Excretion (ADME) of pharmaceuticals [1] [2]. SNPs are variations at a single nucleotide position in the DNA sequence, and they represent approximately 78% of all genetic variations in the human genome [1]. When these polymorphisms occur in coding or regulatory regions of ADME-related genes, they can profoundly alter the activity of drug-metabolizing enzymes and transporter proteins, leading to unpredictable drug efficacy and safety profiles [1] [2]. Understanding and troubleshooting these genetic influences is therefore crucial for researchers designing absorption studies and developing new therapeutic agents.

The following technical guide addresses common experimental challenges and questions related to SNP-ADME research, providing a framework for handling genetic variability in pharmacological studies.

SNP & ADME: Core Concepts FAQ

What are SNPs and how common are they? SNPs are variations where a single DNA nucleotide (A, T, C, or G) differs between individuals. They occur every 100 to 300 bases along the 3-billion-nucleotide human genome, with an estimated 10-30 million SNPs in the human genome [1].

How can a single nucleotide change impact drug absorption and metabolism? SNPs can have functional consequences through several mechanisms. Non-synonymous SNPs in a gene's coding sequence can change an amino acid in the encoded protein, potentially rendering it inactive or with reduced function [1]. Regulatory SNPs in promoter regions can alter transcription factor binding, increasing or decreasing gene expression [1]. Finally, SNPs at exon-intron boundaries can disrupt mRNA splicing, leading to abnormal, non-functional proteins [1].

Which polymorphisms are most clinically relevant for drug safety? Polymorphisms in genes encoding cytochrome P450 (CYP) enzymes are among the most critical. For example, variants in CYP2D6 and CYP2C9 can create "poor metabolizer" or "ultrarapid metabolizer" phenotypes, dramatically affecting the activation and clearance of a wide range of drugs and leading to severe adverse reactions or therapeutic failure [2].

Why do allele frequencies for ADME genes vary across populations? The frequency of specific SNP alleles can differ significantly among ethnic groups. For instance, Amazonian Amerindian populations show a unique genetic profile for 32 ADME-related polymorphisms, with allele frequencies distinct from African, European, American, and Asian populations [3]. This highlights the importance of considering population-specific genetics in research and drug dosing.

Troubleshooting Guide: Common Experimental Challenges

Low Cell Viability in Hepatocyte Experiments

| Possible Cause | Recommendation |
|---|---|
| Improper thawing technique | Thaw cells for <2 minutes at 37°C. Review and adhere to detailed thawing, plating, and counting protocols [4]. |
| Sub-optimal thawing medium | Use recommended Hepatocyte Thawing Medium (HTM) during thawing to effectively remove cryoprotectant [4]. |
| Rough handling of cells | Mix cells slowly and use wide-bore pipette tips to minimize shear stress. Ensure a homogenous cell mixture before counting [4]. |
| Incorrect centrifugation | Check species-specific protocol for proper centrifugation speed and time. For human hepatocytes, this is typically 100 x g for 10 minutes at room temperature [4]. |

Sub-optimal Monolayer Confluency

| Possible Cause | Recommendation |
|---|---|
| Seeding density too low | Check the lot-specific characterization sheet for the appropriate seeding density. Observe cells under a microscope after seeding [4]. |
| Insufficient dispersion during plating | Disperse cells evenly by moving the plate slowly in a figure-eight and back-and-forth pattern immediately after plating [4]. |
| Not enough time for attachment | Allow sufficient time for cells to attach before overlaying with matrix. Compare culture morphology to lot-specific specification sheets [4]. |
| Poor-quality substratum | Use high-quality, collagen I-coated plates to improve cell attachment [4]. |

Inconsistent Transporter Assay Results

| Possible Cause | Recommendation |
|---|---|
| Hepatocyte lot not qualified | Always check cell lot specifications to ensure it is qualified and validated for transporter studies [4]. |
| Insufficient culture time | Bile canaliculi formation, critical for transporter function, generally requires at least 4–5 days in culture [4]. |
| Sub-optimal culture medium | Use recommended Williams Medium E with specialized Plating and Incubation Supplement Packs [4]. |

Key Methodologies & Experimental Protocols

Genotyping and SNP Detection Workflow

Several established methods are available for SNP detection and genotyping in ADME research [5]. The choice of method depends on throughput, cost, and the scale of the study.

- Direct DNA Sequencing: This is the gold standard and a high-throughput method for SNP discovery and validation. It involves PCR amplification of the target region, followed by a sequencing reaction and capillary electrophoresis [5].

- Real-Time PCR (qPCR): This method, used in recent pharmacogenetic studies, employs TaqMan probes for allelic discrimination. It is highly accurate and suitable for genotyping a predefined set of SNPs across many samples [3] [6].

- DNA Microarray Technology: Microarrays allow for the simultaneous genotyping of hundreds of thousands of SNPs across the genome. This is ideal for genome-wide association studies (GWAS) exploring novel genetic links to drug response [5].

- High-Performance Liquid Chromatography (HPLC): Heteroduplex analysis using HPLC (e.g., Transgenomic Wave system) can detect SNPs with a 95-100% success rate based on differential retention of DNA heteroduplexes on a column [5].

Analysis of ADME Pharmacogenetics Data

After genotyping, rigorous statistical analysis is essential.

- Quality Control: Ensure genotype data quality by testing for deviations from Hardy-Weinberg Equilibrium (HWE). Markers not in HWE (p ≤ 1.56E-03) should be excluded from further analysis [3].

- Allele Frequency Calculation: Determine allele frequencies by direct gene counting in the study population.

- Population Comparison: Compare allele frequencies and genotype distributions with other populations using Fisher’s exact test, applying corrections like Bonferroni for multiple comparisons [3].

- Genetic Differentiation: Assess inter-population variability using Wright’s fixation index (FST). Multidimensional scaling of FST values can provide a visual representation of genetic distance between populations [3].

- Phenotype Association: Finally, associate genetic variants (genotypes) with pharmacokinetic parameters (e.g., Cmax, AUClast, Tmax) or clinical outcomes (e.g., bleeding, thrombosis) using statistical models like linear regression [6].
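The first two steps of this list (allele frequencies by gene counting and the HWE quality-control filter) can be sketched in a few lines of Python. This is a minimal illustration, not the cited study's pipeline: the marker names and genotype counts are invented, the test is the textbook chi-square goodness-of-fit test with 1 degree of freedom (an exact test is often preferred for small counts), and reading the 1.56E-03 cutoff as 0.05 divided by 32 markers is an inference from the numbers rather than something the source states.

```python
import math

def allele_frequencies(n_hom_ref, n_het, n_hom_alt):
    """Allele frequencies by direct gene counting (two alleles per person)."""
    n = n_hom_ref + n_het + n_hom_alt
    p = (2 * n_hom_ref + n_het) / (2 * n)
    return p, 1.0 - p

def hwe_chi_square(n_hom_ref, n_het, n_hom_alt):
    """Chi-square test (1 df) of observed genotype counts against HWE expectations."""
    n = n_hom_ref + n_het + n_hom_alt
    p, q = allele_frequencies(n_hom_ref, n_het, n_hom_alt)
    expected = (n * p * p, 2 * n * p * q, n * q * q)
    observed = (n_hom_ref, n_het, n_hom_alt)
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected) if e > 0)
    # For 1 degree of freedom the chi-square survival function is erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2.0))
    return chi2, p_value

# Assumption: Bonferroni-style cutoff over 32 markers, 0.05 / 32 = 0.0015625.
threshold = 0.05 / 32

# Hypothetical genotype counts (hom-ref, het, hom-alt); not data from the source.
genotype_counts = {
    "rs_example_1": (50, 40, 10),
    "rs_example_2": (60, 10, 30),
}

# Keep only markers whose HWE p-value is above the cutoff.
kept = [
    marker
    for marker, counts in genotype_counts.items()
    if hwe_chi_square(*counts)[1] > threshold
]
print(kept)
```

In this toy input the second marker has far fewer heterozygotes than HWE predicts, so it falls below the cutoff and is dropped before any association analysis.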

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function in ADME/SNP Research |
|---|---|
| Cryopreserved Hepatocytes | In vitro model for studying hepatic metabolism and transporter-mediated uptake; must be transporter-qualified for specific assays [4]. |
| TaqMan OpenArray Genotyping System | A high-throughput technology platform for accurate genotyping of a customized panel of SNPs across many samples [3]. |
| Williams Medium E with Supplements | Specialized culture medium optimized for the plating and incubation of hepatocytes to maintain viability and function [4]. |
| Collagen I-Coated Plates | A substratum that promotes hepatocyte attachment and formation of a confluent monolayer, essential for reliable assay results [4]. |
| Physiologically-Based Pharmacokinetic (PBPK) Modeling Software | A computational tool to integrate mechanistic ADME data and simulate human pharmacokinetics, accounting for genetic polymorphisms [7]. |

Advanced Framework for Handling Inter-individual Variability

To systematically dissect inter-individual variability in ADME studies, a multi-faceted framework is recommended [8].

- Expand Study Cohorts: Include a larger number of participants to achieve sufficient statistical power for identifying genetic and non-genetic determinants of variability.

- Comprehensive Profiling: Collect individual data on all possible determinants, including age, sex, dietary habits, health status, medication use, and gut microbiota composition, in addition to genetic data.

- Individual ADME Data Presentation: Report individual pharmacokinetic and metabolomic data rather than only group means to allow for the identification of sub-populations or "metabotypes" [8].

- Integrate Omics Platforms: Incorporate genomics, microbiomics, and metabolomics into ADME studies. Metabolomics is particularly crucial for stratifying individuals based on their intrinsic capacity to absorb and metabolize compounds [8].

Gut Microbiota Composition and Its Metabolic Influence

FAQ: Addressing Inter-Individual Variability in Human Studies

1. Why do human studies on the gut microbiome's influence on energy metabolism show such inconsistent results, and how can I account for this in my experimental design?

Human studies often find no consistent gut microbiome patterns associated with energy metabolism because of significant inter-individual variability (IIV). This variability originates from differences between subjects in digestion and in absorption, distribution, metabolism, and excretion (ADME). To control for this, future studies should be longitudinal observational studies or randomized controlled trials that use robust methodologies and advanced statistical analysis. Furthermore, researchers designing interventions that modulate the gut microbiome to influence host energy expenditure should note that most such interventions have not been effective, and cause-and-effect relationships in humans have not been firmly established [9].

2. What are the primary factors driving inter-individual variability in the metabolism of bioactive compounds, and which is considered the most significant?

The factors underlying IIV are complex and often poorly characterized for many compounds. However, systematic reviews of human studies have identified the following key determinants, with their relative importance often depending on the specific compound sub-class [8] [10]:

Table: Determinants of Inter-Individual Variability in Bioactive Compound Metabolism

| Determinant | Influence on Inter-Individual Variability |
|---|---|
| Gut Microbiota | Major role for most (poly)phenols; composition and activity determine qualitative and quantitative differences in metabolites (e.g., equol, urolithin production). |
| Genetic Polymorphisms | Important for enzymes associated with the metabolism of specific compounds like flavanones and flavan-3-ols. |
| Age & Sex | Older individuals and females often show different metabolite plasma concentrations (e.g., enterolactone from lignans). |
| Ethnicity | Often linked to altered dietary habits, which in turn affect metabolism. |
| BMI & Health Status | Individuals with a high BMI or specific diseases may show altered metabolite profiles. |
| Lifestyle (Diet, Smoking) | Dietary fiber intake positively correlates with microbial diversity and metabolite levels; smoking shows a negative correlation. |

For many (poly)phenols and other plant bioactive compounds, the gut microbiota plays the most significant role in driving inter-individual differences in ADME. This can result in distinct metabotypes—clusters of individuals defined by their metabolic output, such as "producers" vs. "non-producers" of specific metabolites like equol (from isoflavones) or urolithins (from ellagitannins) [8] [10].

3. What methodologies can I use to stratify research subjects and better account for inter-individual variability?

To move beyond broad variability and identify meaningful patterns, researchers should stratify individuals according to their metabotype. This involves:

- Metabolomic Profiling: Using metabolomics techniques to decipher inter-individual variability and stratify individuals according to their intrinsic capacity to absorb and metabolize compounds [8].

- Comprehensive Data Collection: Future study designs should include a larger number of participants, individual profiling of all possible determinants (genetics, microbiome, diet, health status), and the presentation of individual ADME data [8].

- Microbiome Functional Analysis: Moving beyond compositional data to understand the functional capacity of an individual's microbiome, as this directly determines its metabolic output [9].
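As a concrete illustration of metabotype stratification, the sketch below assigns participants to "producer" or "non-producer" groups from a baseline urinary metabolite-to-precursor ratio, using equol and daidzein as the example pair. Everything specific here is an assumption for illustration: the function name, the participant IDs and concentrations, and the default log10 ratio cutoff of -1.75, which the source does not specify and which should be replaced by a cutoff validated for the assay in use.

```python
import math
from collections import defaultdict

def classify_equol_metabotype(equol, daidzein, log10_ratio_cutoff=-1.75):
    """Classify one participant from urinary equol and daidzein (same units).

    The default cutoff is an illustrative assumption, not a value from the source.
    """
    if daidzein <= 0:
        raise ValueError("daidzein must be detectable to compute the ratio")
    if equol <= 0:
        return "non-producer"  # equol not detected
    ratio = math.log10(equol / daidzein)
    return "producer" if ratio > log10_ratio_cutoff else "non-producer"

# Hypothetical baseline urine data: participant -> (equol, daidzein), e.g. in nmol/L.
baseline_urine = {
    "P01": (120.0, 900.0),
    "P02": (0.0, 1100.0),
    "P03": (4.0, 1000.0),
}

# Group participant IDs by metabotype for stratified analysis or recruitment.
strata = defaultdict(list)
for participant, (equol, daidzein) in baseline_urine.items():
    strata[classify_equol_metabotype(equol, daidzein)].append(participant)

print(dict(strata))
```

The same pattern extends to other binary metabotypes (for example urolithin producers) by swapping the metabolite pair and cutoff; quantitative "high" versus "low" excretor groups would need a different rule, such as quantile splits.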

Troubleshooting Guide: Common Experimental Challenges

Issue: High Inter-Individual Variability Obscuring Metabolic Findings

Problem: Measured outcomes, such as plasma metabolite concentrations or energy expenditure, show high variation between subjects, making it difficult to identify statistically significant effects of an intervention.

Solution:

- Pre-Stratify Subjects by Metabotype: Before intervention, classify participants into producer/non-producer groups (e.g., for equol or urolithins) or high/low excretors based on a baseline metabolomic analysis [10]. This allows for stratified statistical analysis or targeted recruitment.

- Characterize Key Covariates: Collect comprehensive baseline data on factors known to drive variability. The following table outlines essential data to collect and its purpose [8] [10]:

Table: Key Covariates to Control for Inter-Individual Variability

| Research Reagent / Data Type | Function / Purpose in the Experiment |
|---|---|
| Genomic DNA Extraction Kits | To obtain high-quality DNA for sequencing of the gut microbiome and host genetic variants. |
| 16S rRNA / Metagenomic Sequencing | To determine gut microbiome composition and functional potential. |
| SCFA Analysis Kits (GC/MS) | To quantify short-chain fatty acids (acetate, propionate, butyrate) as key microbial metabolites. |
| Targeted Metabolomics Panels | To quantify specific metabolites of interest (e.g., enterolactone, urolithins, equol) in plasma, urine, or stool to define metabotypes. |
| Dietary Intake Records | To account for the profound impact of background diet on microbiome composition and activity. |
| Demographic & Health Questionnaires | To record age, sex, BMI, medication use, and health status, all of which are potential determinants of ADME. |

- Utilize Advanced Statistical Models: Employ nonlinear mixed-effect (NLME) models that can quantify and separate different levels of variability, such as inter-individual variability (IIV) and inter-occasion variability (IOV), from the residual error. This provides a more precise estimate of the intervention's true effect [11].
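To make the idea of separating variability levels more tangible, here is a deliberately simplified stand-in for an NLME analysis: a method-of-moments variance-components estimate from a balanced repeated-measures design (one-way random-effects ANOVA). It splits total variance into a between-subject part and a within-subject part. It is not an NLME model, and with a single measurement per occasion it cannot distinguish inter-occasion variability from residual error. The subject labels and values are invented.

```python
from statistics import fmean

def variance_components(data):
    """Estimate between- and within-subject variance from balanced data.

    data: dict mapping subject -> list of k repeated measurements.
    Returns (var_between, var_within) using one-way random-effects ANOVA.
    """
    groups = list(data.values())
    n = len(groups)
    k = len(groups[0])
    if n < 2 or k < 2 or any(len(g) != k for g in groups):
        raise ValueError("need a balanced design with >= 2 subjects and >= 2 occasions")

    grand_mean = fmean([value for g in groups for value in g])
    subject_means = [fmean(g) for g in groups]

    # Mean squares between subjects and within subjects.
    ms_between = k * sum((m - grand_mean) ** 2 for m in subject_means) / (n - 1)
    ms_within = sum(
        (value - m) ** 2 for g, m in zip(groups, subject_means) for value in g
    ) / (n * (k - 1))

    var_between = max(0.0, (ms_between - ms_within) / k)
    return var_between, ms_within

# Hypothetical AUC values for three subjects measured on two occasions each.
auc_by_subject = {"S1": [10.0, 12.0], "S2": [20.0, 22.0], "S3": [30.0, 32.0]}

var_between, var_within = variance_components(auc_by_subject)
share_between = var_between / (var_between + var_within)
print(var_between, var_within, round(share_between, 3))
```

When most of the variance sits between subjects, as in this toy data, crossover designs and pre-stratification tend to pay off most, because each participant can serve as their own control.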

Issue: Inability to Establish Causal Links Between Microbiome and Host Metabolism

Problem: Studies identify correlations between microbial taxa and metabolic readouts but cannot demonstrate mechanistic causality.

Solution:

- Implement Mechanistic Animal Models: Use germ-free (GF) mice or mice treated with broad-spectrum antibiotics to deplete gut microbes. These models can demonstrate the necessity of the microbiome for observed metabolic phenotypes. For example, GF mice show altered intestinal development, reduced body mass, and require greater caloric intake to maintain body weight [12].

- Conduct Fecal Microbiota Transplantation (FMT): Transferring microbiota from human donors (e.g., with lean or obese phenotypes) into GF mice can test the sufficiency of a microbial community to convey a metabolic phenotype [13].

- Focus on Microbial Metabolites and Signaling Pathways: Move beyond taxonomy to measure the functional output of the microbiome. Key experimental protocols include:

  - Measuring SCFA Receptor Signaling: Investigate the role of microbial SCFAs by measuring their levels and the expression/activation of their receptors (FFAR2, FFAR3) on host enteroendocrine cells (EECs), which influences the release of hormones like GLP-1 and PYY that regulate metabolism [13].

  - Analyzing Bile Acid Metabolism: Profile primary and secondary bile acids. Microbial transformation of bile acids creates signaling molecules that activate host receptors like TGR5 and FXR, which are expressed in EECs and have major roles in peripheral metabolism [13].

  - Studying Gut Barrier and Inflammation: Assess the impact of microbial structural components like lipopolysaccharide (LPS) on intestinal barrier function and low-grade inflammation, which is associated with adiposity and insulin resistance [9].

The diagram below summarizes the key microbial signaling pathways that influence host metabolism, which should be a focus for establishing causality.

Experimental Protocols for Key Methodologies

Protocol: Analyzing Gut Microbial Energy Harvest in Humans

Objective: To quantify the contribution of the gut microbiome to host energy harvest by measuring stool energy density and short-chain fatty acid (SCFA) production.

Detailed Methodology:

- Subject Recruitment and Stool Collection: Recruit subjects with defined enterotypes (e.g., Bacteroides [B-type], Prevotella [P-type], or Ruminococcaceae [R-type]). Collect fresh stool samples and immediately freeze at -80°C or process for analysis.

- Stool Energy Density Measurement: Use a bomb calorimeter to determine the energy content (calories per gram) of lyophilized stool samples. Lower stool energy density indicates greater energy harvest by the host [9].

- SCFA Analysis via Gas Chromatography (GC):

  - Sample Preparation: Homogenize stool samples in a known volume of ultrapure water. Centrifuge at high speed to remove particulate matter. Derivatize the supernatant to convert SCFAs into volatile derivatives.

  - GC Analysis: Inject the derivatized sample into a GC system equipped with a flame ionization detector (FID) or mass spectrometer (MS). Use a fused-silica capillary column. Quantify acetate, propionate, butyrate, and branched-chain SCFAs (isobutyrate, isovalerate) by comparing peak areas to a standard curve of known SCFA concentrations.

- Correlation with Intestinal Transit Time: Measure intestinal transit time using a radio-opaque marker. Correlate transit time with both stool energy density and SCFA levels, as shorter transit times are associated with lower stool energy density [9].
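The standard-curve quantification step in this protocol amounts to fitting a line to peak areas of known standards and inverting it for each sample. The sketch below shows that calculation with ordinary least squares. All numbers (standard concentrations, peak areas, homogenate volume, stool mass) are hypothetical, and the back-calculation to µmol per gram ignores the water already present in the stool, which a real method would need to account for.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical butyrate standards (mmol/L) and their GC peak areas.
standard_conc_mM = [0.5, 1.0, 2.0, 5.0, 10.0]
standard_area = [1050.0, 2050.0, 4050.0, 10050.0, 20050.0]

slope, intercept = fit_line(standard_conc_mM, standard_area)

def area_to_conc_mM(peak_area):
    """Invert the calibration line to get concentration in the injected extract."""
    return (peak_area - intercept) / slope

# Hypothetical sample: 0.5 g stool homogenized in 5 mL water, peak area 6050.
sample_conc_mM = area_to_conc_mM(6050.0)       # mmol/L equals µmol/mL
homogenate_volume_mL = 5.0
stool_mass_g = 0.5
butyrate_umol_per_g = sample_conc_mM * homogenate_volume_mL / stool_mass_g

print(round(sample_conc_mM, 2), round(butyrate_umol_per_g, 1))
```

Samples whose peak areas fall outside the range spanned by the standards should be diluted and re-run rather than extrapolated from the fitted line.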

Protocol: Designing a Study to Investigate Inter-Individual Variability in (Poly)phenol Metabolism

Objective: To identify the factors driving inter-individual variability in the absorption and metabolism of dietary (poly)phenols and to define distinct metabotypes within a cohort.

Detailed Methodology:

- Controlled Intervention and Sampling: Conduct a controlled feeding study where all participants consume the same (poly)phenol-rich food or supplement for a set period. Collect time-series bio-samples (blood, urine, stool) at baseline and at predetermined time points post-consumption.

- Multi-Omics Data Collection:

  - Metabolomics: Perform targeted LC-MS/MS on urine and plasma samples to quantify the parent (poly)phenol and its metabolites. Identify patterns such as "high" vs. "low" excretors or "producers" vs. "non-producers" of specific metabolites like equol [10].

  - Microbiomics: Extract DNA from stool samples and perform 16S rRNA gene sequencing or shotgun metagenomics to characterize the gut microbiome composition and genetic potential at baseline.

  - Genomics: Genotype participants for known genetic polymorphisms in human enzymes involved in (poly)phenol metabolism (e.g., UGT, SULT, COMT enzymes) [8] [10].

- Statistical Integration and Metabotyping: Use multivariate statistical analysis (e.g., PCA, OPLS-DA) to integrate the metabolomic, microbiomic, and genomic data. Cluster participants based on their qualitative and quantitative metabolic profiles to define distinct metabotypes. Correlate these metabotypes with specific microbial taxa or genetic variants [8] [10].

The workflow for this integrated approach is outlined below.

FAQs: Understanding Core Concepts

Q1: How do age-related physiological changes impact drug absorption and distribution? Age-related physiological changes significantly alter pharmacokinetics, necessitating dosage adjustments for geriatric patients. Key changes include reduced renal and hepatic clearance, an increased volume of distribution for lipid-soluble drugs, and altered body composition with decreased lean body mass and total body water. These changes prolong the elimination half-life of many medications [14] [15]. For example, hydrophilic drugs like digoxin and lithium have a reduced volume of distribution in older adults, leading to higher plasma concentrations, while lipophilic drugs like diazepam exhibit larger distribution volumes and prolonged elimination half-lives [14] [15].
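The link between distribution volume, clearance, and half-life in Q1 follows from the standard one-compartment relationship t½ = ln(2) × Vd / CL: a larger Vd or a lower CL each lengthens the half-life, and in older adults both can shift in that direction for lipophilic drugs. The short sketch below evaluates this formula for two parameter sets. The Vd and CL values are purely hypothetical and do not describe any specific drug.

```python
import math

def elimination_half_life_h(vd_litres, clearance_l_per_h):
    """One-compartment elimination half-life: t1/2 = ln(2) * Vd / CL."""
    if vd_litres <= 0 or clearance_l_per_h <= 0:
        raise ValueError("Vd and CL must be positive")
    return math.log(2) * vd_litres / clearance_l_per_h

# Hypothetical lipophilic drug: larger Vd and lower clearance in the older adult.
young_adult = elimination_half_life_h(vd_litres=70.0, clearance_l_per_h=1.5)
older_adult = elimination_half_life_h(vd_litres=110.0, clearance_l_per_h=1.0)

print(f"young adult t1/2: {young_adult:.1f} h")
print(f"older adult t1/2: {older_adult:.1f} h")
print(f"fold change: {older_adult / young_adult:.2f}")
```

Because time to steady state scales with half-life, a longer half-life also means accumulation continues for longer after a dosing change, which is one reason cautious titration is advised in this population.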

Q2: What are the primary sex-based differences in drug metabolism? Sex-based differences in drug metabolism primarily arise from variations in the activity of cytochrome P450 (CYP450) enzymes. Key differences include higher CYP3A4 activity in women, leading to faster clearance of drugs like cyclosporine and erythromycin. Conversely, men show higher CYP1A2 activity, resulting in faster metabolism of antipsychotics like olanzapine and clozapine. Most phase II enzymes, including UGTs, also demonstrate higher activity in men [16] [17]. These metabolic differences mean that at a standard dose, women may experience higher drug concentrations and increased adverse effects [18].

Q3: Why does critical illness significantly alter drug pharmacokinetics? Critical illness induces pathophysiological changes that dramatically affect all pharmacokinetic phases. Key alterations include endothelial dysfunction causing capillary leak and increased volume of distribution for hydrophilic drugs, organ dysfunction reducing drug clearance, and fluid resuscitation affecting drug concentration. These changes create considerable pharmacokinetic heterogeneity with significant inter- and intra-individual variation [19]. For instance, the volume of distribution for vancomycin can double in critically ill patients with septic shock, potentially necessitating larger loading doses [19].
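The reasoning behind larger loading doses in Q3 can be shown with the basic relationship loading dose = target concentration × Vd: if Vd doubles, the dose needed to reach the same initial concentration doubles as well. The sketch below is arithmetic only, assuming complete bioavailability (intravenous dosing) and using invented values for body weight, weight-normalized Vd, and target concentration; it is not a dosing recommendation.

```python
def loading_dose_mg(target_conc_mg_per_l, vd_l_per_kg, weight_kg):
    """Loading dose (mg) = target concentration (mg/L) * Vd (L/kg) * weight (kg).

    Assumes intravenous administration (bioavailability of 1).
    """
    return target_conc_mg_per_l * vd_l_per_kg * weight_kg

# Hypothetical hydrophilic drug in a 70 kg patient, target concentration 25 mg/L.
baseline = loading_dose_mg(target_conc_mg_per_l=25.0, vd_l_per_kg=0.7, weight_kg=70.0)
septic_shock = loading_dose_mg(target_conc_mg_per_l=25.0, vd_l_per_kg=1.4, weight_kg=70.0)

print(f"baseline Vd:  {baseline:.0f} mg")
print(f"doubled Vd:   {septic_shock:.0f} mg")
```

Maintenance dosing depends on clearance rather than Vd, so an enlarged Vd by itself argues for a larger loading dose but not necessarily for larger subsequent doses.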

Q4: How does gut microbiota contribute to inter-individual variability in polyphenol metabolism? Gut microbiota is a major determinant of inter-individual variability in the absorption, distribution, metabolism, and excretion (ADME) of dietary polyphenols. Variations in microbial composition create distinct "metabotypes": subgroups with qualitative or quantitative differences in metabolite production. Well-established examples include urolithin production from ellagitannins (urolithin producers vs. non-producers) and equol production from daidzein (equol producers vs. non-producers) [20]. These microbiota-driven metabolic differences likely condition the health effects of dietary polyphenols and contribute to heterogeneous responses in clinical trials [20].

Q5: What genetic factors influence inter-individual variability in polyphenol bioavailability? Single nucleotide polymorphisms (SNPs) in genes involved in polyphenol ADME contribute significantly to inter-individual variability. Relevant genes include those coding for transporters, glycosidases, and phase II enzymes like sulfotransferases (SULTs), UDP-glucuronosyltransferases (UGTs), and catechol-O-methyltransferase (COMT). A systematic review identified 88 SNPs in 33 genes associated with variability in polyphenol bioavailability, with about half related to drug/xenobiotic metabolism [21]. However, establishing clear genotype-phenotype relationships requires further research with larger sample sizes [21].

Troubleshooting Common Experimental Challenges

Problem: High Inter-individual Variability in Polyphenol Metabolite Profiles

Issue: Significant differences in urinary or plasma metabolite profiles among study participants following standardized polyphenol intake.

Solution:

- Stratify participants by metabotype: For ellagitannin studies, pre-screen and stratify participants into urolithin producers versus non-producers. For isoflavone studies, identify equol producers versus non-producers [20].

- Control for genetic factors: Genotype participants for key SNPs in ADME-related genes (e.g., UGTs, SULTs, COMT) and include this as a covariate in analysis [21].

- Document gut microbiota composition: Collect fecal samples for 16S rRNA sequencing to characterize microbial communities and identify microbiota-driven metabotypes [20].

Preventive Measures:

- Pre-screen participants: Include metabotype status as an inclusion criterion for homogeneous study populations.

- Standardize diet: Control dietary intake for 48-72 hours prior to studies to minimize food matrix effects on polyphenol absorption [20].

- Consider demographic factors: Account for age, sex, and hormonal status (e.g., menstrual cycle phase, oral contraceptive use) in study design and statistical analysis [16] [17].

Problem: Unexpected Drug Response in Geriatric Population Studies

Issue: Older participants exhibit heightened drug sensitivity or prolonged elimination compared to younger adults.

Solution:

- Adjust for body composition: Calculate doses based on lean body mass rather than total weight, as geriatric patients typically have increased body fat (20-40%) and decreased lean mass (10-15%) [15].

- Monitor renal function: Use cystatin C or direct measurements rather than serum creatinine alone, as age-related muscle mass reduction makes creatinine an unreliable glomerular filtration rate indicator [14].

- Consider protein binding: Monitor free drug levels for highly protein-bound drugs in patients with hypoalbuminemia, which is common in critical illness and aging [19].

Preventive Measures:

- Implement therapeutic drug monitoring: Especially for drugs with narrow therapeutic indices.

- Use reduced initial doses: For lipophilic drugs in geriatric patients, then titrate based on response [15].

- Account for comorbidities: Document and adjust for conditions affecting drug disposition (renal impairment, heart failure, liver disease).

Problem: Sex-Specific Adverse Drug Reactions in Clinical Trials

Issue: Female participants experience higher incidence or severity of adverse drug reactions at standard doses.

Solution:

- Implement sex-stratified dosing: Consider weight-adjusted doses or specific reductions for women, particularly for drugs metabolized by enzymes with known sex differences (e.g., CYP3A4, CYP2D6) [18].

- Monitor drug concentrations: Measure plasma levels to identify sex-based pharmacokinetic differences.

- Analyze data by sex: Ensure statistical analysis includes sex as a biological variable rather than pooling data [18].

Preventive Measures:

- Include adequate female representation: Ensure sufficient power for sex-specific analysis in trial design.

- Account for hormonal status: Document menstrual cycle phase, menopausal status, and hormonal medication use.

- Consider weight differences: Use weight-based dosing rather than fixed doses for all adults [18].

Table 1: Age-Related Physiological Changes Affecting Pharmacokinetics

| Parameter | Young Adult Reference | Geriatric Change | Impact on Pharmacokinetics | Example Drugs Affected |
|---|---|---|---|---|
| Liver Volume | Normal | ↓ 20-30% [14] | ↓ First-pass metabolism, ↑ bioavailability | Propranolol, Labetalol [14] |
| Renal Plasma Flow | Normal | ↓ 10-15% per decade [14] | ↓ Renal clearance, ↑ half-life | Gabapentin, Methotrexate [17] |
| Body Fat Percentage | Male: ~20%, Female: ~30% | ↑ 20-40% [15] | ↑ Vd for lipophilic drugs, prolonged t½ | Diazepam, Amiodarone [15] |
| Lean Body Mass | Normal | ↓ 10-15% [15] | ↓ Vd for hydrophilic drugs, ↑ plasma concentration | Digoxin, Lithium [15] |
| Serum Albumin | Normal | ↓ 10-20% [19] | ↑ Free fraction of highly protein-bound drugs | Phenytoin, Warfarin [19] |

Table 2: Sex Differences in Drug Metabolizing Enzymes and Transporters

| Enzyme/Transporter | Sex Difference | Clinical Impact | Example Substrates |
|---|---|---|---|
| CYP3A4 | ↑ 20-30% activity in women [17] | Faster clearance in women | Cyclosporine, Erythromycin [17] |
| CYP1A2 | ↑ 20-40% activity in men [17] | Slower clearance in women, more adverse drug events | Olanzapine, Clozapine [17] |
| CYP2D6 | ↑ activity in women [17] | Higher metabolite formation | Codeine, SSRIs [17] |
| UGTs (Glucuronidation) | ↑ activity in men [17] | Longer half-life in women | Oxazepam, Acetaminophen [17] |
| P-glycoprotein | ↑ expression in men [17] | Shorter elimination half-life in men | Digoxin, Quinidine [17] |
| Alcohol Dehydrogenase | ↑ activity in men [17] | Less first-pass metabolism of alcohol in women, higher peak concentration | Ethanol [17] |

Table 3: Impact of Critical Illness on Pharmacokinetic Parameters

| Parameter | Change in Critical Illness | Clinical Consequence | Dosing Consideration |
|---|---|---|---|
| Volume of Distribution (Hydrophilic drugs) | ↑ Up to 100% [19] | Subtherapeutic plasma levels | Increased loading dose (e.g., Vancomycin) [19] |
| Hepatic Blood Flow | ↓ 30-50% [19] | Accumulation of high extraction ratio drugs | Reduce dose of Propofol, Opioids [19] |
| Protein Binding | ↓ Albumin, ↑ α1-acid glycoprotein [19] | Altered free drug concentration | Monitor free drug levels (e.g., Phenytoin) [19] |
| Renal Clearance | Variable (AKI common) [19] | Drug accumulation or enhanced clearance | Therapeutic drug monitoring, dose adjustment [19] |
| Gastric Motility | Often ↓ [19] | Unpredictable oral absorption | Prefer intravenous route when critical [19] |

Experimental Protocols

Protocol for Characterizing Inter-individual Variability in Polyphenol Metabolism

Purpose: To identify and quantify factors contributing to inter-individual variability in polyphenol absorption and metabolism.

Materials:

- Standardized polyphenol source (e.g., 500 mg green tea extract capsule)

- EDTA-containing blood collection tubes

- Urine collection containers

- DNA collection kit (saliva or blood)

- Fecal sample collection kit

- LC-MS/MS system for metabolite quantification

- PCR system for genotyping

- 16S rRNA sequencing reagents

Procedure:

- Participant Characterization: Document age, sex, BMI, medical history, medication use, and dietary habits. Collect DNA and fecal samples.

- Pre-study Standardization: Instruct participants to avoid polyphenol-rich foods and medications for 48 hours prior to study.

- Baseline Sampling: Collect pre-dose blood, urine, and fecal samples.

- Intervention: Administer standardized polyphenol dose with documentation of exact time.

- Serial Blood Sampling: Collect at 0.5, 1, 2, 4, 8, 12, and 24 hours post-dose. Process plasma immediately and store at -80°C.

- Urine Collection: Collect cumulative urine over 0-4, 4-8, 8-12, 12-24, and 24-48 hour intervals. Record volume and aliquot for storage.

- Metabolite Analysis: Quantify parent compounds and metabolites using validated LC-MS/MS methods.

- Genotyping: Analyze SNPs in ADME-related genes (UGTs, SULTs, COMT, transporters).

- Microbiota Analysis: Perform 16S rRNA sequencing on fecal samples.

- Data Integration: Correlate metabolite profiles with genotype, microbiota composition, and demographic factors.

Data Analysis:

- Calculate pharmacokinetic parameters (AUC, Cmax, Tmax, t½) for key metabolites.

- Stratify participants by metabotype (producer/non-producer, high/low excretor).

- Perform multivariate analysis to identify significant predictors of variability.

- Build regression models incorporating demographic, genetic, and microbiota factors.
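The first data-analysis step (AUC, Cmax, Tmax, t½) can be sketched with a simple non-compartmental calculation. The example below is illustrative only: the function name and the concentration values are hypothetical, the AUC uses the linear trapezoidal rule from the first to the last sample (no extrapolation), and the half-life is estimated from the last three points, which is an assumption that should be checked against each real profile.

```python
import math


def nca_summary(times_h, conc):
    """Non-compartmental summary of one concentration-time profile."""
    # Linear trapezoidal AUC between the first and last sampling times.
    auc = sum(
        (t2 - t1) * (c1 + c2) / 2.0
        for t1, t2, c1, c2 in zip(times_h, times_h[1:], conc, conc[1:])
    )
    cmax = max(conc)
    tmax = times_h[conc.index(cmax)]
    # Terminal slope: least-squares fit of ln(C) against t on the last 3 points.
    # Concentrations must be positive for the logarithm to be defined.
    xs = times_h[-3:]
    ys = [math.log(c) for c in conc[-3:]]
    mx, my = sum(xs) / 3, sum(ys) / 3
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    lambda_z = -slope
    return {"AUC": auc, "Cmax": cmax, "Tmax": tmax, "t_half": math.log(2) / lambda_z}


# Sampling times follow the protocol above; concentrations are hypothetical.
times_h = [0.5, 1, 2, 4, 8, 12, 24]
conc = [30.0, 60.0, 80.0, 50.0, 25.0, 12.5, 1.5625]
summary = nca_summary(times_h, conc)
print(summary)
```

Running the same function per participant and per metabolite gives the table of parameters that feeds the metabotype stratification and regression steps.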

Protocol for Assessing Age-Related Pharmacokinetic Changes

Purpose: To characterize the impact of aging on drug disposition and inform age-appropriate dosing.

Materials:

- Study drug with primarily renal or hepatic elimination

- Therapeutic drug monitoring equipment

- Body composition measurement device (e.g., DEXA or BIA)

- Serum and urine collection materials

- GFR measurement markers (e.g., iohexol)

Procedure:

- Participant Recruitment: Enroll young (20-30 years) and older (65-80 years) healthy volunteers, matched for sex and BMI.

- Baseline Assessment: Measure body composition (lean mass, fat mass), serum creatinine, cystatin C, and GFR.

- Drug Administration: Administer single oral dose of study drug under fasting conditions.

- Intensive Sampling: Collect serial blood samples at 0, 0.5, 1, 1.5, 2, 3, 4, 6, 8, 12, 24, 36, and 48 hours.

- Urine Collection: Collect cumulative urine over 0-6, 6-12, 12-24, and 24-48 hour intervals.

- Sample Analysis: Quantify drug and metabolites in plasma and urine using validated methods.

- Protein Binding: Determine free drug fraction using equilibrium dialysis or ultrafiltration.

Data Analysis:

- Calculate and compare PK parameters (CL/F, Vd/F, t½, AUC, Cmax) between age groups.

- Correlate PK parameters with measured physiological parameters (GFR, body composition).

- Develop age-adjusted dosing recommendations based on observed differences.
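The group comparison described above can be sketched as follows. The apparent clearance is taken as dose divided by AUC and the apparent volume as CL/F divided by the terminal rate constant, which are the usual non-compartmental definitions. All numbers, names, and group sizes are hypothetical, and the ratio of geometric means is only one of several reasonable ways to summarize the age effect.

```python
import math
from statistics import geometric_mean


def apparent_cl_and_vd(dose_mg, auc_inf_mg_h_per_l, t_half_h):
    """Return (CL/F in L/h, Vd/F in L) from dose, AUC(0-inf), and half-life."""
    lambda_z = math.log(2) / t_half_h
    cl_f = dose_mg / auc_inf_mg_h_per_l
    vd_f = cl_f / lambda_z  # terminal-phase estimate of the apparent volume
    return cl_f, vd_f


DOSE_MG = 100.0  # hypothetical single oral dose
# Hypothetical (AUC, t1/2) pairs for three volunteers per age group.
young = [(10.0, 4.0), (12.5, 5.0), (8.0, 3.5)]
older = [(20.0, 6.0), (25.0, 7.5), (16.0, 5.0)]

young_cl = [apparent_cl_and_vd(DOSE_MG, auc, th)[0] for auc, th in young]
older_cl = [apparent_cl_and_vd(DOSE_MG, auc, th)[0] for auc, th in older]

# Ratio of geometric means: values below 1 indicate lower clearance with age.
cl_ratio = geometric_mean(older_cl) / geometric_mean(young_cl)
print(f"CL/F ratio (older / young): {cl_ratio:.2f}")
```

The same per-subject CL/F values can then be correlated with measured GFR or lean mass, as the second analysis step suggests.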

Visualizations

Diagram: ADME Workflow and Variability Factors

Diagram: Demographic Impact on Drug Disposition

Research Reagent Solutions

Table 4: Essential Research Materials for Variability Studies

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Standardized Polyphenol Extracts | Provide consistent intervention for absorption studies | Characterize composition; verify stability; use certified reference materials [20] |

| LC-MS/MS Systems | Quantify drugs and metabolites in biological matrices | Validate methods for sensitivity and specificity; use stable isotope-labeled internal standards [20] [21] |

| Genotyping Arrays | Identify SNPs in ADME-related genes | Select arrays with comprehensive coverage of pharmacogenes; validate with Sanger sequencing [21] |

| 16S rRNA Sequencing Kits | Characterize gut microbiota composition | Standardize sampling and storage; include positive controls; use appropriate bioinformatics pipelines [20] |

| Therapeutic Drug Monitoring Assays | Measure drug concentrations in clinical samples | Implement quality control procedures; establish reference ranges for different populations [19] |

| Body Composition Analyzers | Quantify fat mass, lean mass, and body water | Standardize measurement conditions; use consistent methodology for longitudinal studies [15] |

| Protein Binding Assay Kits | Determine free vs. protein-bound drug fractions | Use physiological conditions; consider disease-related protein changes [19] |

FAQs and Troubleshooting Guides

FAQ 1: What is the fundamental difference between LogP and LogD, and why does it matter for predicting oral bioavailability?

Answer: LogP and LogD are both measures of lipophilicity, but they account for ionization differently, which is critical for accurate prediction of a compound's behavior in the body.

- LogP (Partition Coefficient): Measures the distribution of the uncharged, neutral form of a compound between octanol and water. It is a constant for a given compound [22].

- LogD (Distribution Coefficient): Measures the distribution of all forms of the compound (ionized, unionized, etc.) at a specific pH. It is pH-dependent and provides a more realistic picture of lipophilicity under physiological conditions [22].

For compounds with ionizable groups, LogP can be misleading. For example, a compound might have a high LogP, suggesting good membrane permeability. However, its LogD at intestinal pH (e.g., 6.5) might be low because the compound is ionized and less permeable. Therefore, LogD is the preferred metric for estimating membrane permeability and absorption in different segments of the gastrointestinal tract, which have varying pH levels [22].

Table 1: Key Differences Between LogP and LogD

| Property | LogP | LogD |

|---|---|---|

| Ionization State | Considers only the neutral molecule | Accounts for all species (ionized and unionized) |

| pH Dependence | Constant, pH-independent | Variable, pH-dependent |

| Physiological Relevance | Limited, as it ignores ionization in the body | High, as it reflects lipophilicity at specific biological pH values |

| Primary Use | Fundamental measure of intrinsic lipophilicity | Predicting solubility, permeability, and absorption in biological systems |
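The pH dependence summarized in the table can be made concrete with the common textbook approximation for a monoprotic compound, which assumes that only the neutral species partitions into octanol. The sketch below uses a hypothetical weak acid (LogP 3.5, pKa 4.5); the function name and values are illustrative assumptions, and compounds with several ionizable groups or significant ion-pair partitioning need a fuller model.

```python
import math


def log_d(log_p, pka, ph, kind):
    """Approximate LogD for a monoprotic acid or base.

    Assumes only the neutral form partitions into octanol.
    """
    if kind == "acid":
        return log_p - math.log10(1 + 10 ** (ph - pka))
    if kind == "base":
        return log_p - math.log10(1 + 10 ** (pka - ph))
    raise ValueError("kind must be 'acid' or 'base'")


# Hypothetical weak acid evaluated at gastric, intestinal, and plasma pH.
for ph in (2.0, 6.5, 7.4):
    print(f"pH {ph}: LogD = {log_d(3.5, 4.5, ph, 'acid'):.2f}")
```

For this hypothetical acid, LogD stays close to LogP in the stomach but drops by about two log units at intestinal pH, which is the situation the answer above warns about.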

FAQ 2: Our new chemical entity has poor aqueous solubility. What experimental strategies can we use to characterize its solubility profile for regulatory submissions?

Answer: A comprehensive solubility assessment should evaluate both kinetic (non-equilibrium) and thermodynamic (equilibrium) solubility in pharmaceutically relevant media. The following protocol is recommended:

Detailed Experimental Protocol: Solubility Profiling

- Objective: To determine the kinetic and thermodynamic solubility of a new chemical entity in buffers simulating gastrointestinal and plasma environments.

- Materials:

- Test compound

- Solvents: Buffer solutions (e.g., HCl buffer pH 2.0, Phosphate buffer pH 7.4), 1-octanol [23]

- Equipment: Shaking water bath, HPLC system with UV detector, centrifuge

- Procedure:

- Kinetic Solubility: Prepare a supersaturated solution of the compound in the relevant buffers (e.g., pH 2.0 and 7.4). Shake the mixture and monitor the concentration at regular time intervals (e.g., every hour for the first 8 hours, then daily) until a stable plateau concentration is reached. This determines the time required to reach equilibrium and provides early-stage solubility data [23].

- Thermodynamic Solubility: Place an excess of the solid compound in the solvent. Agitate the suspension in a water bath held at a constant temperature (repeating at each temperature of interest, e.g., 293.15 K to 313.15 K) for long enough to reach equilibrium (as determined from the kinetic studies). Centrifuge the samples and analyze the supernatant using a validated HPLC-UV method [23].

- Data Analysis: Correlate the experimental solubility data using established equations like the modified Apelblat or van't Hoff models to understand the thermodynamic aspects of the dissolution process [23].

- Troubleshooting:

- Problem: Failure to reach equilibrium, leading to over- or underestimation of solubility.

- Solution: Ensure the kinetic solubility study is conducted first to define the appropriate shaking time for the thermodynamic study. Use an excess of solid compound throughout the experiment [23].
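The data-analysis step of the protocol can be sketched with the simple van't Hoff form, ln x = a + b/T, fitted by ordinary least squares. This is an illustration under stated assumptions: the solubility values are hypothetical, the function name is invented for this example, and the modified Apelblat model mentioned above (which adds a ln T term) is not implemented here.

```python
import math

R_J_PER_MOL_K = 8.314  # gas constant


def fit_vant_hoff(temps_k, solubility):
    """Least-squares fit of ln(x) = a + b / T; returns (a, b)."""
    xs = [1.0 / t for t in temps_k]
    ys = [math.log(s) for s in solubility]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    a = my - b * mx
    return a, b


# Hypothetical solubility values at the temperatures named in the protocol.
temps_k = [293.15, 298.15, 303.15, 308.15, 313.15]
solubility = [1.2e-4, 1.5e-4, 1.9e-4, 2.4e-4, 3.0e-4]

a, b = fit_vant_hoff(temps_k, solubility)
# Apparent enthalpy of dissolution from the slope (kJ/mol).
delta_h_kj = -b * R_J_PER_MOL_K / 1000.0
print(f"a = {a:.2f}, b = {b:.0f} K, apparent dH = {delta_h_kj:.1f} kJ/mol")
```

A negative slope b, as in this hypothetical data set, corresponds to an endothermic dissolution process in which solubility rises with temperature.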

Table 2: Example Solubility Data for Novel Hybrid Compounds [23]

| Compound | Solubility in Buffer pH 7.4 (mol·L⁻¹) | Solubility in Buffer pH 2.0 (mol·L⁻¹) | Solubility in 1-Octanol (mol·L⁻¹) |

|---|---|---|---|

| I (-CH₃) | 1.98 × 10⁻³ | Higher by an order of magnitude | Significantly higher |

| II (-F) | Poor, specific value not listed | Higher by an order of magnitude | Significantly higher |

| III (-Cl) | 0.67 × 10⁻⁴ | Higher by an order of magnitude | Significantly higher |

FAQ 3: How does molecular size influence "druggability," and how strict are the rules like the Rule of 5 today?

Answer: Molecular size, often approximated by molecular weight (MW), is a key component of the Rule of 5 (Ro5), which suggests that for good oral absorption, a molecule should have MW ≤ 500 [24] [22]. The rule was instrumental in focusing drug discovery on compounds with favorable physicochemical properties.

However, the landscape is evolving. It is now recognized that some protein targets require larger molecules for effective binding. Consequently, the chemical space "Beyond the Rule of 5" (bRo5) is actively explored for new therapeutics [22]. Proposed revised parameters for bRo5 space include:

- Molecular weight < 1000 Da

- Calculated LogP between -2 and 10

- Fewer than 6 H-bond donors

- No more than 15 H-bond acceptors [22]

These larger compounds, such as macrocycles and PROTACs, can achieve oral bioavailability by folding in a way that masks their hydrogen bond donors and acceptors [22]. The Ro5 should be viewed as a guideline, not an absolute rule, and lipophilicity (LogD) remains a critically important parameter regardless of molecular size.
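As a rough screening aid, the two sets of limits can be encoded as simple checks. The Ro5 thresholds beyond molecular weight (cLogP ≤ 5, ≤ 5 H-bond donors, ≤ 10 H-bond acceptors) are the standard Lipinski criteria; the bRo5 limits are taken as listed above. The class, function names, and example descriptors are hypothetical, and a filter like this is no substitute for measured permeability or LogD.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mw: float     # molecular weight, Da
    clogp: float  # calculated LogP
    hbd: int      # hydrogen-bond donors
    hba: int      # hydrogen-bond acceptors


def ro5_violations(d):
    """Count how many Rule of 5 criteria are exceeded."""
    return sum([d.mw > 500, d.clogp > 5, d.hbd > 5, d.hba > 10])


def in_bro5_space(d):
    """Check the revised bRo5 limits as listed in the text above."""
    return d.mw < 1000 and -2 <= d.clogp <= 10 and d.hbd < 6 and d.hba <= 15


# Hypothetical descriptor sets for illustration only.
small_molecule = Descriptors(mw=350.0, clogp=2.1, hbd=2, hba=5)
macrocycle = Descriptors(mw=850.0, clogp=6.2, hbd=4, hba=12)

for name, d in (("small molecule", small_molecule), ("macrocycle", macrocycle)):
    print(name, ro5_violations(d), in_bro5_space(d))
```

The hypothetical macrocycle breaks three Ro5 criteria yet still falls inside the proposed bRo5 space, which is the kind of candidate the paragraph above describes.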

FAQ 4: Our drug candidate is a substrate for gut microbial metabolism. How can we account for inter-individual variability in absorption studies?

Answer: Inter-individual variability in absorption, distribution, metabolism, and excretion (ADME), often driven by differences in gut microbiota composition, genetics, and other factors, is a major challenge [8] [20]. You can address this using the following strategies:

Detailed Experimental Protocol: Addressing Inter-individual Variability

- Objective: To identify and stratify study participants based on their metabolic phenotypes (metabotypes) to reduce variability and identify responsive subgroups.

- Strategies:

- Metabotyping: Conduct a baseline assessment where participants consume a standardized dose of the compound of interest (e.g., a polyphenol-rich food or your drug candidate). Collect blood and urine samples over 24-48 hours. Use mass spectrometry-based metabolomics to profile the resulting metabolites. Stratify participants into metabotypes such as "high-producers" vs. "low-producers" or "producers" vs. "non-producers" of key microbial metabolites [25] [20].

- Stratified Randomization: In clinical trials, use the metabotype information to ensure an even distribution of different metabotypes across all study arms. This minimizes variability and allows for clearer identification of drug effects within specific subgroups [25].

- Genomic and Microbiome Analysis: Collect DNA samples for genotyping polymorphisms in genes encoding metabolizing enzymes (e.g., UGTs, SULTs) and transporters. Analyze gut microbiota composition through 16S rRNA sequencing or metagenomics to identify the bacterial taxa responsible for the observed metabolic profiles [8] [25].

- Troubleshooting:

- Problem: High variability in metabolite profiles obscures the drug's efficacy.

- Solution: Implement a crossover study design where participants serve as their own controls, or consider N-of-1 trials to capture individual response patterns [25].
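The stratified randomization strategy can be sketched in a few lines. The example below shuffles participants within each metabotype and deals them across arms in turn, so each arm receives a near-equal share of every metabotype. Participant IDs, arm names, and the seed are hypothetical; a real trial would use a validated randomization system with allocation concealment.

```python
import random
from collections import defaultdict


def stratified_randomize(metabotypes, arms, seed=0):
    """Allocate participants to arms, balanced within each metabotype.

    metabotypes: dict mapping participant ID -> metabotype label.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for pid, metabotype in metabotypes.items():
        strata[metabotype].append(pid)

    allocation = {}
    for metabotype in sorted(strata):
        ids = sorted(strata[metabotype])
        rng.shuffle(ids)
        # Shuffle the arm order too, so leftovers do not always favor one arm.
        arm_order = list(arms)
        rng.shuffle(arm_order)
        for i, pid in enumerate(ids):
            allocation[pid] = arm_order[i % len(arm_order)]
    return allocation


# Hypothetical cohort: 8 producers and 4 non-producers.
metabotypes = {
    f"P{i:02d}": ("producer" if i <= 8 else "non-producer") for i in range(1, 13)
}
allocation = stratified_randomize(metabotypes, arms=["treatment", "placebo"])
for pid in sorted(allocation):
    print(pid, metabotypes[pid], allocation[pid])
```

With this hypothetical cohort, each arm ends up with four producers and two non-producers, so a difference between arms cannot be explained by an uneven metabotype mix.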

Diagram 1: A workflow for managing inter-individual variability in absorption studies.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Physicochemical and Absorption Studies

| Tool / Reagent | Function | Application Context |

|---|---|---|

| 1-Octanol / Buffer Systems | Experimental measurement of partition (LogP) and distribution (LogD) coefficients. | Lipophilicity assessment [23] [22]. |

| Simulated Biological Buffers (pH 2.0, 7.4) | Mimic the environment of the gastric juice and blood plasma for solubility and dissolution testing. | Kinetic and thermodynamic solubility profiling [23]. |

| PBPK Modeling Software (e.g., GastroPlus) | Computational tool that simulates drug absorption, distribution, metabolism, and excretion (ADME) in humans. | Identifying absorption risks early, predicting food effects, and optimizing formulation [26] [27]. |

| High-Performance Liquid Chromatography (HPLC) | Analytical technique for separating, identifying, and quantifying compounds in a mixture. | Determining drug concentration in solubility, permeability, and pharmacokinetic samples [23] [24]. |

| Immobilized Artificial Membrane (IAM) HPLC Columns | HPLC columns that mimic cell membranes to assess a compound's potential to permeate lipids. | Predicting membrane permeability and volume of distribution [24]. |

| Mass Spectrometry-Based Metabolomics | Comprehensive analysis of the small-molecule metabolite profiles in a biological system. | Identifying metabotypes and characterizing inter-individual variability in drug metabolism [8] [25] [20]. |

Diagram 2: The integration of key physicochemical properties into a predictive framework for absorption.

Troubleshooting Guide: Managing Inter-Individual Variability in Absorption Studies

This technical support center provides FAQs and troubleshooting guides to help researchers address the critical challenge of inter-individual variability in gastrointestinal (GI) physiology, which significantly impacts the reproducibility and predictive power of oral drug absorption studies.

Frequently Asked Questions

Q1: Why do we observe high variability in drug plasma concentrations between subjects for the same oral formulation?

A: High inter-subject variability often stems from physiological differences in the GI tract that are not accounted for in the experimental design. Key factors include:

- Gastric Emptying Time: This is highly variable and depends on the fed/fasted state, the size and density of the dosage form, and the nature of the food itself [28]. This variability directly impacts when a drug arrives at the primary absorption site.

- Colonic Transit Time: This is slow and strongly influenced by diet (including fiber content), stress, and disease states [28]. In conditions like ulcerative colitis, colonic transit can be dramatically shorter (about 24 hours) compared to a healthy state (about 52 hours) [28].

- pH Profiles: The intraluminal pH changes along the GI tract and can be influenced by diet, health status, and age [28].

Q2: How can we design more robust experiments to account for variable GI transit times?

A: Match the release mechanism to the target region:

- For Small Intestine-Targeted Release: The small intestine transit time (SITT) is relatively constant at 3–4 hours and is generally unaffected by the nature of the product [28]. Design release profiles to occur within this window.

- For Colon-Targeted Release: A major challenge is the unpredictable gastric emptying time [28]. Do not rely solely on time-controlled delivery. Consider a combination of mechanisms, such as pH-dependent and time-controlled systems, to improve colon-specific delivery. Research indicates that such a system can prevent burst release in the stomach and provide sustained release in the colon [28].

Q3: Our in-vitro models poorly predict in-vivo absorption for low-solubility drugs. What physiological factors are we likely missing?

A: The primary factor is the drastic reduction in available water volume in the colon. While the small intestine has about 130 mL of water in fasting conditions, the colon has only about 10 mL, leading to more viscous contents and impaired dissolution [28]. Your in-vitro models may not adequately simulate this water-restricted, viscous colonic environment.
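One way to see the effect of the water volumes quoted in the answer to Q3 is the dose number, the ratio of the nominal dose concentration to the drug's solubility. The dose number is conventionally defined with a 250 mL reference volume; substituting the regional volumes (130 mL and 10 mL) is an illustrative assumption made here, and the dose and solubility values are hypothetical.

```python
def dose_number(dose_mg, volume_ml, solubility_mg_per_ml):
    """Dose number = (dose / available fluid volume) / solubility.

    Values above 1 mean the dose cannot fully dissolve in the available fluid.
    """
    return (dose_mg / volume_ml) / solubility_mg_per_ml


DOSE_MG = 50.0          # hypothetical dose
SOLUBILITY = 0.5        # hypothetical solubility, mg/mL

do_small_intestine = dose_number(DOSE_MG, 130.0, SOLUBILITY)
do_colon = dose_number(DOSE_MG, 10.0, SOLUBILITY)
print(f"small intestine: {do_small_intestine:.2f}, colon: {do_colon:.1f}")
```

For this hypothetical drug the dose dissolves fully in the fasted small-intestinal volume but exceeds its solubility limit roughly tenfold in the colon, which a well-stirred, large-volume dissolution test would not reveal.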

Quantitative Physiology Reference Tables

Table 1: Key Physiological Parameters of the Human Intestine [28]

| Parameter | Small Intestine | Colon |

|---|---|---|

| Length (m) | 7 | 1.5 |

| Absorption Surface Area (m²) | 120 | 0.3 |

| Transit Time (h) | 3–4 | ~24 (highly variable) |

| pH Range | 6.0–7.0 (duodenum) to 6.5–8.0 (ileum) | 5.5–7.5 (ascending) to 7.0–8.0 (descending) |

| Water Volume (mL), fasting | 130 | 10 |

| Microorganism Load (organisms/g) | 10² (duodenum) to 10⁷ (ileum) | 10¹¹–10¹² |

Table 2: Comparison of Absorption Pathways and Key Proteins [28]

| Feature | Small Intestine | Colon |

|---|---|---|

| Absorption Surface Provided by | Folds, villi, and microvilli | Folds and microvilli |

| Passive Absorption | Transcellular, Paracellular | Transcellular |

| Key Active Transporters | PEPT, MRP2, P-gp | MRP3, MRP2, OCTs |

| Key Enzymes | CYP3A family | Enzymes from colonic microflora |

Experimental Protocol: Accounting for Physiological Variability

Protocol: Designing a Robust Colonic Delivery Formulation

1. Objective: To develop an oral dosage form that reliably releases a drug in the colon, overcoming variability in gastric emptying and small intestine transit.

2. Methodology:

- Mechanism: Employ a dual-approach system combining pH-dependent release and time-controlled release [28].

- Formulation: Encapsulate the drug in a core surrounded by a pH-sensitive polymer coating (e.g., resistant to stomach pH but dissolving at higher pH). This coated core is then further coated with a layer designed to erode after a specific lag time.

3. Key Steps:

- Characterization: Determine the dissolution profile of the formulation in media simulating the stomach (pH ~1.5-3), small intestine (pH ~6-7.5), and colon (pH ~7-8) [28] [29].

- Lag Time Calibration: Set the lag time of the time-controlled component to approximately 5 hours to account for the combined transit of the stomach and small intestine [28].

- Validation: Test the formulation's performance in vivo, monitoring drug plasma concentrations to confirm release in the colon despite individual variations in gastric emptying.
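The lag-time calibration step can be explored with a small Monte Carlo sketch before any in vivo work. It estimates how often a purely time-controlled layer would finish eroding before the dosage form has reached the colon. The small intestine transit range (3 to 4 hours) comes from the text; the gastric emptying range, the uniform distributions, the function name, and the seed are assumptions made for illustration, and the pH-dependent layer of the dual system is deliberately ignored.

```python
import random


def premature_release_fraction(
    lag_h, gastric_range_h, sitt_range_h=(3.0, 4.0), n=10_000, seed=1
):
    """Fraction of simulated subjects whose lag time ends before colon arrival."""
    rng = random.Random(seed)
    early = 0
    for _ in range(n):
        # Colon arrival time = gastric emptying time + small intestine transit.
        arrival_h = rng.uniform(*gastric_range_h) + rng.uniform(*sitt_range_h)
        if lag_h < arrival_h:
            early += 1
    return early / n


# Hypothetical gastric emptying range of 0.5 to 4 hours.
frac_5h = premature_release_fraction(5.0, (0.5, 4.0))
frac_7h = premature_release_fraction(7.0, (0.5, 4.0))
print(f"lag 5 h: {frac_5h:.2f} early, lag 7 h: {frac_7h:.2f} early")
```

Under these assumed distributions a 5-hour lag alone releases early in a large share of simulated subjects, which illustrates why the protocol pairs the timed layer with a pH-dependent mechanism rather than relying on lag time alone.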

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for GI Absorption Studies

| Item | Function in Research |

|---|---|

| pH-Sensitive Polymers | To create coatings for dosage forms that target drug release to specific regions of the GI tract based on local pH. |

| Enzyme Inhibitors | To study the metabolic stability of drugs by inhibiting specific enzymes (e.g., CYP3A in the small intestine) [28]. |

| Transport Modulators | To investigate the role of specific transporters (e.g., P-gp, PEPTs) in drug absorption and efflux [28]. |

| Simulated GI Fluids | Biorelevant media for in-vitro dissolution testing that mimic the pH, buffer capacity, and composition of gastric, intestinal, and colonic fluids. |

| Gamma Scintigraphy Tracers | To non-invasively track the transit and disintegration of dosage forms in human volunteers in real-time. |

Visualizing the Experimental Workflow

The diagram below outlines a logical workflow for developing a colon-targeted drug delivery system, integrating key decision points to manage physiological variability.

Diagram 1: Workflow for developing a colon-targeted drug delivery system.

The relationship between GI physiology, its inherent variability, and the critical parameters for absorption studies can be summarized as follows:

Diagram 2: Key physiological factors influencing drug absorption.

Advanced Methodologies for Characterizing and Assessing Absorption Variability

Metabotyping, also known as metabolic phenotyping, is a strategy that involves grouping individuals into homogeneous subgroups—called metabotypes—based on their metabolic profiles [30]. This approach is increasingly recognized as a powerful tool for managing the substantial inter-individual variability observed in responses to dietary interventions, drugs, and environmental exposures [30]. In the context of absorption studies, this variability presents a significant challenge, as coefficients of variation between 59% and 103% have been reported for postprandial triacylglycerol, glucose, and insulin responses to identical meals [30]. Metabotyping helps deconvolute this heterogeneity by identifying subpopulations with similar metabolic characteristics, thereby enabling more precise research and tailored interventions.

Table 1: Key Terminology in Metabotyping Research

| Term | Definition |

|---|---|

| Metabotype | A subgroup of individuals with similar metabolic phenotypes or profiles [30]. |

| Inter-individual Variability | The differences in metabolic responses between individuals exposed to the same stimulus [30]. |

| Metabolic Profile | A set of biochemical measurements that can include metabolites, hormones, and clinical biomarkers [30] [31]. |

| Precision Nutrition | Dietary advice tailored to an individual's or group's specific characteristics [32]. |

Core Methodologies and Experimental Protocols

The process of defining metabotypes relies on measuring a suite of biological variables and using statistical methods to group individuals. The following workflow outlines a generalized protocol for conducting a metabotyping study.

Key Variables for Metabotype Classification

Researchers can use diverse sets of parameters to define metabotypes. The choice of variables depends on the research question and available resources.

Table 2: Common Variable Categories Used for Metabotyping

| Variable Category | Specific Examples | Utility and Rationale |

|---|---|---|

| Anthropometric & Clinical | BMI, Waist Circumference, Age, Blood Pressure [30] [32] | Provides a quick, low-cost assessment of overall metabolic health and disease risk. |

| Standard Biochemical | Fasting Glucose, Insulin, HbA1c, Blood Lipids (HDL-C, LDL-C, TG), Uric Acid [30] [32] [31] | Captures core aspects of glycemic control and cardiovascular health. |

| Metabolomics | Amino acids (leucine, isoleucine), Acylcarnitines, Sphingomyelins, Phosphatidylcholines [30] | Offers a deep, functional readout of metabolic pathways and physiological status. |

| Gut Microbiota | Microbiome composition (e.g., Prevotella, Lactobacillus), Metagenomic functional potential [32] [31] | Accounts for the significant role of gut bacteria in metabolizing dietary compounds and producing bioactive metabolites. |

Detailed Experimental Protocol: A Representative Example

The following protocol is synthesized from methodologies used in recent publications to classify individuals based on their metabolic responses to a dietary challenge [30].

Objective: To identify metabotypes with differential glycemic and lipidemic responses to a standardized meal.

Materials:

- Participants: Recruited based on inclusion criteria (e.g., age, health status).

- Standardized Test Meal: Composition precisely controlled for macronutrients (e.g., high-protein meal or high saturated fat meal).

- Blood Collection Tubes: Including tubes with appropriate anticoagulants and preservatives for plasma/serum separation.

- Clinical Analyzer: For measuring glucose, insulin, triacylglycerols, and other clinical biomarkers.

- Mass Spectrometer: (If applicable) For targeted or untargeted metabolomic profiling.

- Statistical Software: Such as R or Python, with packages for clustering (e.g., k-means, cluster) and dimensionality reduction (e.g., FactoMineR for PCA).

Procedure:

- Baseline Assessment: After an overnight fast, collect baseline blood samples and record anthropometric measurements (weight, height, waist circumference).

- Dietary Challenge: Administer the standardized test meal. Participants must consume the meal within a specified time (e.g., 15 minutes).

- Postprandial Blood Sampling: Collect blood at predetermined time points (e.g., 30, 60, 120, and 180 minutes post-meal).

- Sample Analysis: Process blood samples to obtain plasma/serum. Analyze using:

- Clinical analyzer for glucose, insulin, and triacylglycerol concentrations.

- Mass spectrometry for metabolomic profiles (e.g., acylcarnitines, bile acids).

- Data Integration: Calculate postprandial responses (e.g., area under the curve, peak concentration) for each analyte.

- Statistical Clustering:

- Integrate baseline and response data into a single data matrix.

- Normalize all variables to a common scale (e.g., Z-scores).

- Perform k-means clustering or use Self-Organizing Maps (SOMs) to group participants into distinct metabotypes [31].

- Validate the stability and robustness of the clusters.
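The normalization and clustering steps can be sketched without external libraries. The example below converts each variable to Z-scores and runs a basic k-means loop. It uses a deterministic farthest-first initialization for reproducibility, which is a simplification swapped in here rather than the random or k-means++ seeding used by packages such as scikit-learn, and the six participants and their response values are hypothetical.

```python
from statistics import mean, pstdev


def zscore_columns(rows):
    """Scale every column to mean 0 and population standard deviation 1."""
    cols = list(zip(*rows))
    mus = [mean(c) for c in cols]
    sds = [pstdev(c) or 1.0 for c in cols]  # guard against constant columns
    return [[(v - m) / s for v, m, s in zip(row, mus, sds)] for row in rows]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, max_iter=100):
    """Basic k-means; returns one cluster label per point."""
    # Farthest-first initialization: start at the first point, then repeatedly
    # add the point farthest from the centers chosen so far.
    centers = [points[0]]
    while len(centers) < k:
        centers.append(
            max(points, key=lambda p: min(sq_dist(p, c) for c in centers))
        )
    labels = None
    for _ in range(max_iter):
        new_labels = [
            min(range(k), key=lambda j: sq_dist(p, centers[j])) for p in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing
        labels = new_labels
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the previous center if a cluster empties
                centers[j] = [mean(col) for col in zip(*members)]
    return labels


# Hypothetical rows: [glucose iAUC, triacylglycerol iAUC] per participant.
rows = [[100, 50], [110, 55], [95, 45], [200, 150], [210, 160], [190, 140]]
labels = kmeans(zscore_columns(rows), k=2)
print(labels)
```

In practice the number of clusters is not known in advance, so the same procedure is usually repeated for several values of k and compared using a validation index.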

Troubleshooting Common Experimental Challenges

FAQ 1: How many variables are needed to define a robust metabotype?

There is no fixed number. Studies have successfully used as few as four clinical variables (e.g., age at diagnosis, BMI, waist circumference, HbA1c) and as many as 33 biochemical parameters [30] [31]. The key is to select variables that are biologically relevant to the research question. A larger number of variables, particularly from omics technologies, can capture greater detail but also increases complexity and the risk of overfitting. Start with a core set of well-established clinical biomarkers and expand as needed.

FAQ 2: Our clusters are unstable and change with different analysis parameters. What should we do?

This indicates low robustness. To address this:

- Pre-process data carefully: Ensure proper normalization and handling of outliers.

- Validate clusters: Use internal validation methods (e.g., silhouette width) and resampling techniques (e.g., bootstrapping) to assess cluster stability [31].

- Try multiple algorithms: Compare results from k-means, hierarchical clustering, and Self-Organizing Maps to see if consistent groups emerge [30] [31].

- Simplify the model: Reduce the number of highly correlated variables using Principal Component Analysis (PCA) before clustering.
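The silhouette width mentioned above can be computed directly from its definition. The sketch below is a plain-Python illustration with hypothetical points and an invented function name; it requires at least two clusters and scores singleton clusters as 0 by convention. Established implementations (for example in R's cluster package or scikit-learn) should be preferred for real analyses.

```python
def mean_silhouette(points, labels):
    """Average silhouette width; requires at least two distinct labels."""

    def dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

    clusters = sorted(set(labels))
    scores = []
    for i, p in enumerate(points):

        def mean_dist_to(cluster):
            d = [
                dist(p, q)
                for j, q in enumerate(points)
                if labels[j] == cluster and j != i
            ]
            return sum(d) / len(d) if d else None

        a = mean_dist_to(labels[i])  # cohesion: mean distance within own cluster
        if a is None:
            scores.append(0.0)  # singleton cluster
            continue
        # Separation: mean distance to the nearest other cluster.
        b = min(mean_dist_to(c) for c in clusters if c != labels[i])
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


# Hypothetical standardized data with two obvious groups.
points = [[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]]
good = mean_silhouette(points, [0, 0, 1, 1])
bad = mean_silhouette(points, [0, 1, 0, 1])
print(f"sensible grouping: {good:.2f}, poor grouping: {bad:.2f}")
```

Values near 1 indicate compact, well-separated clusters, while values near or below 0 suggest that participants are assigned to the wrong group or that no real cluster structure exists.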

FAQ 3: We identified metabotypes, but they do not predict response to our intervention. What could be the reason?

This suggests the chosen variables for clustering may not be the key drivers of response for your specific intervention. Re-evaluate the biological plausibility of your metabotypes in the context of the intervention's mechanism of action. It may be necessary to incorporate different types of data, such as gut microbiota profiles, which have been shown to be strong determinants of inter-individual variation in response to diet [32].

FAQ 4: How can we translate a research metabotyping protocol into a clinically usable tool?

The goal is to move from complex, high-dimensional models to simpler, actionable classifiers.

- Perform variable selection: Identify the minimal set of biomarkers that retain most of the predictive power of the full model. For example, one study found that a model using only HDL-C, non-HDL-C, uric acid, fasting glucose, and BMI was effective [30].

- Develop decision trees: Create simple algorithms that can be used by clinicians to assign patients to a metabotype and receive pre-defined, tailored advice [32].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Metabotyping Studies

| Item | Function/Application | Example Use in Protocol |

|---|---|---|

| Standardized Test Meals | Provides a uniform dietary challenge to assess postprandial metabolism. | High saturated fat meal or high-protein meal to trigger lipid or glucose/insulin responses [30]. |

| EDTA or Heparin Blood Tubes | Anticoagulant for plasma collection; preserves analytes for metabolomic analysis. | Collection of fasting and postprandial blood samples for clinical biochemistry and MS analysis. |

| Luminescent/Optical Immunoassay Kits | Quantification of specific protein hormones and cytokines. | Measurement of insulin, leptin, and adipokines as part of the metabolic profile [30]. |

| Mass Spectrometry (MS) Grade Solvents | High-purity solvents for sample preparation and liquid chromatography (LC). | Essential for reproducible and accurate metabolomic profiling by LC-MS. |

| Stable Isotope-Labeled Internal Standards | Allows for precise quantification of metabolites in complex biological samples. | Added to plasma/serum samples prior to metabolomic analysis to correct for technical variability. |

| DNA/RNA Extraction Kits | Isolation of high-quality nucleic acids from fecal samples. | Required for subsequent 16S rRNA sequencing or shotgun metagenomics of the gut microbiota [31]. |

| Clustering Software (e.g., R, Python with scikit-learn) | Statistical computing and machine learning for identifying metabotypes. | Performing k-means clustering, Self-Organizing Maps, and other multivariate analyses [31]. |

Advanced Data Integration and Interpretation

Modern metabotyping often involves the integration of multiple omics datasets. The following diagram illustrates a conceptual framework for how different data layers inform the final metabotype and its application.

Troubleshooting Guides

Data Quality and Preprocessing Issues

Problem: High technical variation and noise in multi-omics datasets.

| Symptom | Likely Cause | Solution | Validation Approach |

|---|---|---|---|

| Poor clustering of QC samples in PCA [33] | Signal drift across analytical run; Batch effects | Implement systematic QC protocol (e.g., QComics) with intermittent QC samples [33] | PCA scores show tight clustering of QC samples; RSD < 30% for most chemical descriptors [33] |

| Low mapping sensitivity/specificity [34] | Suboptimal read aligner for specific genome/read length | Use BWA for speed with long reads (>100bp); NovoAlign for complex genomes/short reads [34] | Benchmark aligners using simulated reads; >95% sensitivity for long-read mapping [34] |

| Non-random errors and artifacts in variants [35] | Pre-sequencing, sequencing, or data processing errors | Use Mapinsights toolkit for deep QC of alignment files to detect cycle-specific biases and outliers [35] | Logistic regression model on Mapinsights features identifies low-confidence variant sites [35] |

| Gene expression correlated with sequencing depth [36] | Technical confounding in scRNA-seq data | Apply regularized negative binomial regression (sctransform) instead of single scaling factors [36] | Normalized gene expression should not correlate with cellular sequencing depth [36] |

Experimental Protocol for Metabolomics QC [33]:

- Sample Preparation: Prepare procedural blanks by replacing biological sample with water. Create QC samples by pooling equal aliquots of all study samples.

- Injection Sequence:

- Inject 5 consecutive blank samples for system stabilization.

- Inject 5-10 consecutive QC samples for system conditioning.

- Analyze real samples in random order, intercalating one QC sample after every 10 study samples.

- Inject 5 procedural blanks at the end to assess carryover.

- Data Processing: Select a set of "chemical descriptors" (metabolites from various chemical classes) to monitor method reproducibility. Calculate RSD values across QC injections.
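The injection sequence and the RSD check from this protocol can be sketched as follows. The counts (5 blanks, 5 conditioning QC injections, one QC after every 10 study samples, 5 closing procedural blanks) and the 30% limit follow the text above; the function names, sample IDs, seed, and intensity values are hypothetical.

```python
import random
from statistics import mean, stdev


def build_injection_sequence(
    sample_ids, qc_every=10, n_blanks=5, n_conditioning_qc=5, seed=0
):
    """Blanks, conditioning QCs, randomized samples with interleaved QCs, blanks."""
    order = list(sample_ids)
    random.Random(seed).shuffle(order)  # randomize study sample order
    sequence = ["blank"] * n_blanks + ["QC"] * n_conditioning_qc
    for i, sample_id in enumerate(order, start=1):
        sequence.append(sample_id)
        if i % qc_every == 0:
            sequence.append("QC")
    return sequence + ["procedural_blank"] * n_blanks


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)


def flag_unstable(qc_intensities, limit=30.0):
    """Names of chemical descriptors whose QC RSD reaches or exceeds the limit."""
    return sorted(
        name for name, vals in qc_intensities.items() if rsd_percent(vals) >= limit
    )


sequence = build_injection_sequence([f"S{i:02d}" for i in range(1, 26)])
# Hypothetical QC peak intensities for two chemical descriptors.
qc_intensities = {"leucine": [100, 110, 90], "carnitine": [100, 180, 40]}
print(len(sequence), sequence.count("QC"), flag_unstable(qc_intensities))
```

Only the interleaved QC injections, not the conditioning ones, are normally used for the RSD calculation; separating the two is left out of this sketch for brevity.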

Data Integration and Biological Interpretation

Problem: Difficulty reconciling data across different omics layers.

| Symptom | Likely Cause | Solution | Validation Approach |

|---|---|---|---|

| Cannot discern meaningful biological patterns from integrated data | Improper normalization between omics layers; Technical variability obscuring biological signals | Establish realistic omics hierarchy considering different temporal dynamics [37] | Use of negative control datasets; Silhouette width and batch-effect tests to assess normalization performance [38] |

| High inter-individual variability obscures group effects | True biological differences in ADME processes; Undetected subpopulations | Identify and stratify individuals by metabotypes (e.g., equol producers vs. non-producers) [8] [10] | Stratification should yield distinct metabolic clusters with different phenotypic outcomes [10] |

| Discrepancy between genomic potential and metabolic output | Functional redundancy in microbiome; Regulatory mechanisms not captured | Integrate metagenomics with metabolomics using knowledge-based strategies and multivariate models [39] | Key microbial pathways (e.g., L-arginine biosynthesis) should correlate with corresponding metabolic profiles [39] |

Experimental Protocol for Stratifying Individuals by Metabotype [8] [10]:

- Study Design: Recruit sufficient participants (larger N improves detection of subpopulations). Collect comprehensive metadata (age, sex, diet, health status, medication).

- Sample Collection: Collect appropriate biospecimens (feces for microbiome; plasma/urine for metabolites) at relevant time points for the bioactive compounds being studied.

- Multi-omics Profiling: Conduct genomic analysis (host and/or microbial); perform metabolomic profiling (untargeted or targeted).

- Data Analysis: Present individual ADME data; use clustering algorithms to identify qualitative or quali-quantitative patterns in metabolite production (e.g., urolithin metabotypes for ellagitannins).

- Validation: Correlate metabotypes with specific microbial features (e.g., gut microbiota composition for equol production) or genetic polymorphisms.
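
As a rough illustration of the clustering step, the sketch below splits participants into two groups with a one-dimensional two-cluster k-means on log-transformed metabolite excretion. The excretion values are invented for the example, and the choice of k-means with two clusters is an assumption; real metabotyping studies may use other algorithms, more metabolites, and formal checks on the number of clusters.

```python
import math

def two_cluster_kmeans_1d(values, max_iter=100):
    """Split 1-D values into a low (0) and a high (1) cluster.
    Returns (labels, centers)."""
    centers = [min(values), max(values)]   # start from the two extremes
    labels = [0] * len(values)
    for _ in range(max_iter):
        new_labels = [0 if abs(v - centers[0]) <= abs(v - centers[1]) else 1
                      for v in values]
        new_centers = []
        for k in (0, 1):
            members = [v for v, lab in zip(values, new_labels) if lab == k]
            new_centers.append(sum(members) / len(members) if members else centers[k])
        if new_labels == labels and new_centers == centers:
            break                          # assignments and centers are stable
        labels, centers = new_labels, new_centers
    return labels, centers

# Hypothetical 24 h urinary equol excretion (µmol) for ten participants
equol_umol = [0.02, 0.05, 0.03, 4.1, 0.04, 6.8, 0.01, 3.2, 0.06, 5.5]
log_values = [math.log10(v) for v in equol_umol]   # log scale separates the groups

labels, centers = two_cluster_kmeans_1d(log_values)
metabotype = ["producer" if lab == 1 else "non-producer" for lab in labels]
print(list(zip(equol_umol, metabotype)))
```

The resulting labels would then go into the validation step, for example by testing whether they track specific microbial features.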

Frequently Asked Questions (FAQs)

Q1: How often should we sample for different omics layers in a longitudinal study?

Sampling frequency should follow a realistic omics hierarchy, as not all layers change at the same rate. The genome is largely static and may only need baseline assessment. The epigenome is more dynamic but still relatively stable. The transcriptome is highly responsive to environment, treatment, and behaviors, often requiring more frequent assessments (e.g., across circadian cycles). The proteome is generally stable due to longer half-lives, needing less frequent testing. The metabolome offers a real-time snapshot of metabolic activity and may require high-frequency sampling, depending on the intervention [37].

Q2: What are the primary factors causing inter-individual variability in the metabolism of bioactive compounds?

The main drivers of inter-individual variability include:

- Gut Microbiota: A major determinant for most (poly)phenols, isoflavones, and ellagitannins, resulting in producer vs. non-producer metabotypes (e.g., equol, urolithins) [10].

- Genetic Polymorphisms: Variations in genes encoding enzymes involved in absorption and metabolism (e.g., BCO1 for carotenoids) [8].

- Demographic and Physiological Factors: Age, sex, BMI, ethnicity, and health status can influence ADME processes [8] [40].

- External Factors: Diet, drug exposure, lifestyle (e.g., smoking), and physical activity [8] [40].

Q3: Our single-cell RNA-seq analysis is confounded by sequencing depth. Which normalization method should we use?

Avoid methods that apply a single scaling factor (e.g., log-normalization), as they fail to effectively normalize both lowly and highly expressed genes. Instead, use a generalized linear model-based approach like regularized negative binomial regression (implemented in the sctransform R package). This method uses sequencing depth as a covariate and pools information across genes to prevent overfitting, effectively removing the influence of technical variation while preserving biological heterogeneity [36].
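
Whichever normalization is used, the validation criterion (normalized expression should not correlate with sequencing depth) can be checked directly. The sketch below is only that diagnostic, written in plain Python; it does not implement sctransform. The gene names, the per-cell values, and the |r| > 0.3 flagging threshold are hypothetical.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0                       # constant input: no correlation defined
    return cov / math.sqrt(var_x * var_y)

def depth_confounded_genes(normalized, depth, threshold=0.3):
    """Return genes whose normalized expression still tracks sequencing depth.
    `normalized` maps gene name -> per-cell normalized values."""
    return sorted(gene for gene, values in normalized.items()
                  if abs(pearson_r(values, depth)) > threshold)

# Hypothetical per-cell sequencing depth (total UMIs) and normalized expression
depth = [1200, 2500, 4000, 5200, 8000, 9500]
normalized = {
    "GENE_A": [1.0, 0.8, 1.2, 1.2, 0.8, 1.0],   # no clear trend with depth
    "GENE_B": [0.2, 0.5, 0.9, 1.1, 1.8, 2.1],   # rises with depth
}
flagged = depth_confounded_genes(normalized, depth)
print(flagged)
```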

Q4: How can we monitor and control quality in untargeted metabolomics?

Implement a robust protocol like QComics [33]:

- Use procedural blanks to correct for background noise and carryover.

- Analyze QC samples (pooled from all study samples) throughout the sequence to monitor signal drift.

- Employ a set of representative chemical descriptors (metabolites covering various classes) to assess reproducibility.

- Establish criteria for removing out-of-control observations and for overall data quality (e.g., precision and accuracy).
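
A minimal sketch of the reproducibility check: compute the relative standard deviation (RSD) of each chemical descriptor across the QC injections and flag descriptors above an acceptance limit. The peak areas are invented, and the 30% limit is a placeholder assumption, not a value taken from the QComics protocol.

```python
import statistics

def rsd_percent(values):
    """Relative standard deviation in percent: 100 * sample SD / mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

# Hypothetical peak areas of two chemical descriptors across five QC injections
qc_peak_areas = {
    "descriptor_1": [1000, 1020, 980, 1010, 990],
    "descriptor_2": [500, 800, 300, 900, 250],
}

RSD_LIMIT = 30.0  # hypothetical acceptance limit (%); set according to your own criteria

rsd = {name: rsd_percent(areas) for name, areas in qc_peak_areas.items()}
out_of_control = [name for name, value in rsd.items() if value > RSD_LIMIT]

for name, value in rsd.items():
    print(f"{name}: RSD = {value:.1f}%")
print("Out of control:", out_of_control)
```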

Q5: How do we choose a sequencing read aligner for our genomics/metagenomics study?

Select an aligner based on your genome characteristics and read length [34]:

- For general use and speed with long reads (>100bp): BWA.

- For sensitivity with short reads (36-72bp) or complex genomes: NovoAlign.

- For efficient mapping of a variety of read lengths: Bowtie2 or Smalt.

Benchmark several aligners on a subset of your data, or on simulated reads specific to your study genome, whenever possible.

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function & Application | Key Considerations |

|---|---|---|

| Procedural Blanks | Distinguish true biological signals from background noise and carryover in metabolomics [33]. | Prepare using the same solvents and procedures as real samples but without the biological matrix. |

| Pooled QC Samples | Monitor analytical stability, correct for signal drift, and assess overall data quality in metabolomics [33] [40]. | Prepare by combining equal aliquots of all study samples; analyze throughout the run sequence. |

| Chemical Descriptors | A defined set of metabolites used as quality markers to evaluate method reproducibility in metabolomics [33]. | Should represent different chemical classes, molecular weights, and chromatographic regions. |

| External RNA Controls (ERCC Spike-ins) | Create a standard baseline for transcript counting and normalization in scRNA-seq [38]. | Not feasible for all platforms (e.g., droplet-based). Use to differentiate technical from biological variation. |

| Unique Molecular Identifiers (UMIs) | Correct for PCR amplification biases and enable accurate digital counting of mRNA molecules in scRNA-seq [38]. | Incorporated during library preparation; essential for quantifying transcript abundance in droplet-based methods. |

Workflow and Conceptual Diagrams

Multi-Omics Integration and Variability Analysis Workflow

[Diagram: Multi-Omics Variability Workflow]

Determinants of Inter-Individual Variability

[Diagram: Determinants of Variability]

Population Pharmacokinetic (PPK) Modeling Approaches

Troubleshooting Common PPK Modeling Issues

Q1: My model fails to converge or produces unreliable parameter estimates. What are the potential causes and solutions?

A: Non-convergence often stems from model overparameterization, poor initial estimates, or insufficient data quality/quantity.

- Cause 1: High Interoccasion Variability (IOV) in Sparse Designs. In sparse sampling designs (e.g., phase II trials), high IOV can mask interindividual variability (IIV) and lead to estimation problems. A 2025 simulation study showed that neglecting a true IOV of 75% can severely bias IIV and residual error estimates [41].

- Solution: Incorporate IOV explicitly in the model when possible. The power to detect IOV increases with the number of occasions (e.g., from one to three). Including a trough sample in your design significantly improves model performance [41].

- Cause 2: Inadequate Handling of Body Size and Maturation in Paediatric Populations. Using allometric exponents estimated from adult data for paediatric models is not advised, as adult exponents are affected by factors like obesity [42].

- Solution: For paediatric PK models, use fixed allometric exponents (0.75 for clearance, 1.0 for volume of distribution) based on physiological principles. For the youngest patients, also integrate maturation functions (e.g., sigmoid Emax model for renal or metabolic maturation) to account for organ development [42].

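- Cause 3: Improper Covariate Model Building. Including too many covariates or using correlated covariates can destabilize the model.

- Solution: Pre-select covariates for physiological plausibility, avoid testing strongly correlated covariates on the same parameter, and build the covariate model stepwise (see Q2).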
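
The paediatric scaling described under Cause 2 can be written out as a short sketch: fixed allometric exponents (0.75 for clearance, 1.0 for volume) combined with a sigmoid Emax maturation function. Expressing maturation in terms of postmenstrual age, the 70 kg reference weight, and all parameter values (`cl_std`, `tm50_weeks`, `hill`) are assumptions for illustration, not estimates from the cited work.

```python
def maturation_fraction(pma_weeks, tm50_weeks, hill):
    """Sigmoid Emax maturation: fraction of mature function reached
    at a given postmenstrual age (PMA)."""
    return pma_weeks ** hill / (tm50_weeks ** hill + pma_weeks ** hill)

def paediatric_clearance(cl_std, weight_kg, pma_weeks, tm50_weeks, hill,
                         std_weight_kg=70.0):
    """Clearance scaled by body size (fixed exponent 0.75) and maturation."""
    size_factor = (weight_kg / std_weight_kg) ** 0.75
    return cl_std * size_factor * maturation_fraction(pma_weeks, tm50_weeks, hill)

def paediatric_volume(v_std, weight_kg, std_weight_kg=70.0):
    """Volume of distribution scaled by body size (fixed exponent 1.0)."""
    return v_std * (weight_kg / std_weight_kg) ** 1.0

# Hypothetical values: adult CL 10 L/h, V 40 L, TM50 48 weeks PMA, Hill 3
for weight_kg, pma_weeks in [(3.5, 40), (10.0, 92), (30.0, 500)]:
    cl = paediatric_clearance(10.0, weight_kg, pma_weeks, tm50_weeks=48.0, hill=3.0)
    v = paediatric_volume(40.0, weight_kg)
    print(f"{weight_kg:5.1f} kg, PMA {pma_weeks:3d} wk: CL = {cl:.2f} L/h, V = {v:.1f} L")
```

In this form the maturation term matters mainly for the youngest patients and approaches 1 as age increases, so older children are scaled by size alone.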

Q2: How do I determine which covariates significantly influence drug pharmacokinetics?

A: Covariate analysis identifies patient factors (e.g., weight, age, organ function) that explain Between-Subject Variability (BSV).

- Protocol: The standard method is Stepwise Covariate Modeling [43] [44].

  - Base Model Development: First, develop a structural model (e.g., one- or two-compartment) and statistical model without any covariates.

  - Forward Inclusion: Systematically test each pre-selected covariate's relationship with PK parameters (e.g., clearance, volume). A covariate is included if it produces a statistically significant reduction in the model's objective function value (e.g., a drop of more than 3.84 points for one added parameter, which corresponds to p<0.05 under a chi-square distribution with 1 degree of freedom).

  - Backward Elimination: All covariates added in the forward step are then tested for retention. Each is removed one at a time, and only those whose removal causes a statistically significant increase in the objective function are kept in the final model. A stricter criterion than in the forward step is commonly applied here (e.g., >6.63 for p<0.01 with 1 degree of freedom).

- Justification: Covariate selection should be based not only on statistical significance but also on physiological and clinical plausibility [45].
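
The decision rule behind stepwise covariate modeling can be sketched as a likelihood ratio test on the change in objective function value (OFV). The example assumes each covariate adds exactly one parameter, so the chi-square distribution with 1 degree of freedom applies. The OFV numbers and covariate names are invented, and the backward threshold of p<0.01 is an assumed (though common) choice. In practice every OFV comes from refitting the model in the estimation software.

```python
import math

def lrt_p_value_1df(delta_ofv):
    """p-value for an OFV change with one extra parameter
    (chi-square survival function with 1 degree of freedom)."""
    return math.erfc(math.sqrt(max(delta_ofv, 0.0) / 2.0))

def forward_step(base_ofv, candidate_ofv, alpha=0.05):
    """Return (name, drop) for the candidate with the largest significant
    OFV drop, or None if no candidate meets the criterion."""
    best = None
    for name, ofv in candidate_ofv.items():
        drop = base_ofv - ofv
        if lrt_p_value_1df(drop) < alpha and (best is None or drop > best[1]):
            best = (name, drop)
    return best

def backward_step(full_ofv, ofv_without, alpha=0.01):
    """Return covariates whose removal does NOT significantly worsen the fit
    (candidates for elimination)."""
    return [name for name, ofv in ofv_without.items()
            if lrt_p_value_1df(ofv - full_ofv) >= alpha]

# Hypothetical OFVs from refitting the model with each candidate added
selected = forward_step(2500.0, {"WT on CL": 2470.2,
                                 "SEX on CL": 2497.9,
                                 "AGE on V": 2494.1})
# Hypothetical OFVs from refitting the full model with each covariate removed
to_drop = backward_step(2462.0, {"WT on CL": 2493.5, "AGE on V": 2466.3})

print("Add first:", selected)
print("Eliminate:", to_drop)
```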

Q3: What are the best practices for model evaluation and validation?

A: A robust PPK model must undergo rigorous evaluation to be credible for regulatory submission or clinical application.

- Essential Techniques:

- Visual Predictive Check (VPC): A graphical method where the model is used to simulate multiple datasets. The percentiles of the observed data are overlaid on the percentiles of the simulated data to see if they match, indicating the model accurately predicts the central trend and variability [43].

- Bootstrap: A resampling technique where the model is repeatedly fitted to hundreds of datasets randomly sampled (with replacement) from the original data. The distribution of the resulting parameter estimates validates the stability and precision of your final model [44].

- Goodness-of-Fit Plots: Standard plots like observed vs. population-predicted concentrations and conditional weighted residuals vs. time help identify model misspecification [43].
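
The resampling logic of the bootstrap can be illustrated with a deliberately simplified stand-in. Instead of refitting a full PPK model to each resampled dataset, the sketch below re-estimates a geometric mean clearance from resampled individual values and reports a percentile confidence interval. The clearance values, the number of replicates, and the seed are hypothetical. A real analysis resamples subjects together with all their records and refits the final model each time.

```python
import math
import random

def geometric_mean(values):
    """Geometric mean of positive values."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

def bootstrap_ci(values, estimator, n_boot=1000, level=0.95, seed=1):
    """Percentile bootstrap confidence interval for `estimator`."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_boot):
        # Resample subjects with replacement, keeping the original sample size
        resample = [rng.choice(values) for _ in values]
        estimates.append(estimator(resample))
    estimates.sort()
    lower_index = int((1 - level) / 2 * n_boot)
    upper_index = int((1 + level) / 2 * n_boot) - 1
    return estimates[lower_index], estimates[upper_index]

# Hypothetical individual clearance values (L/h), one per subject
individual_cl = [3.1, 4.5, 2.8, 5.2, 3.9, 4.1, 6.0, 3.3, 4.8, 3.6, 5.5, 4.0]

point_estimate = geometric_mean(individual_cl)
ci_low, ci_high = bootstrap_ci(individual_cl, geometric_mean)
print(f"Typical CL = {point_estimate:.2f} L/h (95% CI {ci_low:.2f} to {ci_high:.2f})")
```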

The diagram below illustrates the core workflow and key components of a PPK analysis.

Figure 1: PPK Model Development and Evaluation Workflow.

Quantitative Data and Variability in PPK

The table below summarizes the key types of variability accounted for in PPK models.

Table 1: Types of Variability in Population Pharmacokinetic Models

| Variability Type | Abbreviation | Description | Source/Example |

|---|---|---|---|

| Between-Subject Variability | BSV or IIV | The variability in PK parameters between different individuals in the population. | Differences in drug clearance due to genetics, body weight, or disease status [45]. |

| Within-Subject/Residual Unexplained Variability | RUV | The remaining variability not explained by the model, including measurement error and model misspecification. | Typically modeled with combined proportional and additive error models [44] [41]. |

| Interoccasion Variability | IOV | The variability within a single subject between different dosing occasions. | Changes in a patient's absorption rate between cycle 1 and cycle 3 of chemotherapy [41]. |
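
One common way to write the three variability levels from Table 1 is shown below for clearance, with a combined proportional and additive residual error. The notation is an assumption for illustration (i = subject, k = occasion, j = observation, log-normal parameter distributions) and is not taken from the cited studies.

```latex
% Assumed notation: i = subject, k = occasion, j = observation
% IIV (eta) and IOV (kappa) on clearance
CL_{ik} = \theta_{CL} \cdot \exp(\eta_{i} + \kappa_{ik}), \qquad
\eta_{i} \sim \mathcal{N}(0, \omega^{2}), \quad
\kappa_{ik} \sim \mathcal{N}(0, \pi^{2})

% RUV: combined proportional and additive error on the model prediction
y_{ijk} = \hat{y}_{ijk} \cdot (1 + \varepsilon_{\mathrm{prop},ijk}) + \varepsilon_{\mathrm{add},ijk}, \qquad
\varepsilon_{\mathrm{prop}} \sim \mathcal{N}(0, \sigma_{\mathrm{prop}}^{2}), \quad
\varepsilon_{\mathrm{add}} \sim \mathcal{N}(0, \sigma_{\mathrm{add}}^{2})
```

Here $\theta_{CL}$ is the typical clearance, $\omega^{2}$ the IIV variance, $\pi^{2}$ the IOV variance, and $\hat{y}_{ijk}$ the model-predicted concentration.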

Experimental Protocols for Key Scenarios

Protocol 1: Building a PPK Model for a Monoclonal Antibody

This protocol is adapted from a population PK analysis of olaratumab [43].

- 1. Study Design & Data Collection:

- Population: Data pooled from four Phase II studies in cancer patients (non-small cell lung cancer, glioblastoma, soft tissue sarcoma, gastrointestinal stromal tumors).

- Dosing: Olaratumab tested at 15-20 mg/kg, both as monotherapy and combined with chemotherapy.

- Sampling: A mix of rich (in cycles 1 and 3) and sparse (peak and trough) PK sampling.

- 2. Bioanalysis:

- Concentration Assay: Olaratumab serum concentrations were measured using a validated enzyme-linked immunosorbent assay (ELISA) with a lower limit of quantitation of 1 μg/mL [43].

- Immunogenicity Assessment: Treatment-emergent anti-drug antibodies (TE-ADAs) were assessed using a validated, multi-tiered ELISA.

- 3. Model Development (using NONMEM):

- Structural Model: Test one-, two-, and three-compartment models with linear and Michaelis-Menten clearance. Olaratumab was best described by a two-compartment model with linear clearance.

- Statistical Model: Estimate IIV on key parameters (e.g., CL, V) and test proportional vs. combined error models for RUV.

- Covariate Model: Test continuous (body weight, tumor size) and categorical (sex, race) covariates on CL and V. Body weight significantly influenced both CL and V, and tumor size affected CL.

- 4. Model Evaluation: Perform Visual Predictive Check (VPC) to evaluate the final model's predictive performance [43].
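
To make the selected structural model concrete, the sketch below simulates a two-compartment model with linear clearance after a single intravenous infusion, using simple Euler integration. All parameter values (CL, V1, Q, V2), the 70 kg body weight, and the 1 h infusion duration are hypothetical placeholders, not the olaratumab estimates reported in [43]. The actual analysis was performed in NONMEM.

```python
def simulate_two_compartment(dose_mg, infusion_h, cl, v1, q, v2,
                             t_end_h, dt_h=0.01):
    """Euler integration of a two-compartment model with linear clearance.
    Returns (times in h, central concentrations in mg/L)."""
    a1 = a2 = 0.0                          # drug amounts (mg): central, peripheral
    rate = dose_mg / infusion_h            # zero-order infusion rate (mg/h)
    times, conc = [0.0], [0.0]
    n_steps = int(round(t_end_h / dt_h))
    for step in range(n_steps):
        t = step * dt_h
        input_rate = rate if t < infusion_h else 0.0
        c1, c2 = a1 / v1, a2 / v2
        da1 = input_rate - cl * c1 - q * (c1 - c2)   # elimination + distribution
        da2 = q * (c1 - c2)
        a1 += da1 * dt_h
        a2 += da2 * dt_h
        times.append((step + 1) * dt_h)
        conc.append(a1 / v1)
    return times, conc

# Hypothetical monoclonal-antibody-like parameters; 15 mg/kg in a 70 kg patient
times, conc = simulate_two_compartment(
    dose_mg=15.0 * 70.0, infusion_h=1.0,
    cl=0.02, v1=3.5, q=0.03, v2=4.0,       # L/h, L, L/h, L
    t_end_h=504.0,                          # 21 days
)
peak = max(conc)
peak_time = times[conc.index(peak)]
print(f"Cmax = {peak:.1f} mg/L at {peak_time:.2f} h; day-21 trough = {conc[-1]:.1f} mg/L")
```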

Protocol 2: Handling IOV in a Sparse Sampling Design

This protocol is based on a 2025 simulation study investigating IOV [41].

- 1. Simulation Setup:

- Base Model: Use a known one-compartment model (e.g., from linezolid PK) with established IIV on parameters like clearance (CL) and volume of distribution (V).

- IOV Implementation: Introduce IOV of different magnitudes (e.g., 25%CV and 75%CV) on parameters like CL, V, or the absorption rate constant (ka).

- 2. Study Design Simulation:

- Sampling Schemes: Simulate datasets with sampling in one, two, or three dosing occasions. Compare schemes with and without a trough (pre-dose) sample.

- Patients: Simulate data for a plausible number of patients for a phase II study (e.g., 150).

- 3. Model Estimation & Evaluation:

- Stochastic Simulation and Estimation (SSE): Use tools like PsN to simulate 500 datasets and then attempt to re-estimate the model parameters.

- Key Metrics: Calculate the power to correctly detect IOV, type I error, and the bias/imprecision in parameter estimates when IOV is neglected.
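
The reason neglected IOV distorts IIV estimates can be shown with a much simpler simulation than a full SSE. The sketch below draws log-normal clearance values with IIV and IOV, then compares the between-subject variance seen with one occasion against the variance components recovered from three occasions. The typical clearance, the 30% IIV, and the seed are assumptions. The 75% IOV and 150 patients mirror the example magnitudes above. This is a variance decomposition only, not a re-estimation of a PK model with PsN.

```python
import math
import random
import statistics

def cv_to_log_variance(cv):
    """Variance on the log scale for a log-normal parameter with a given CV."""
    return math.log(1.0 + cv ** 2)

def simulate_log_clearance(n_subjects, n_occasions, iiv_cv, iov_cv,
                           cl_typical=5.0, seed=42):
    """Return per-subject lists of log(CL), one value per occasion."""
    rng = random.Random(seed)
    sd_iiv = math.sqrt(cv_to_log_variance(iiv_cv))
    sd_iov = math.sqrt(cv_to_log_variance(iov_cv))
    log_cl = []
    for _ in range(n_subjects):
        eta = rng.gauss(0.0, sd_iiv)                     # subject-level deviation
        log_cl.append([math.log(cl_typical) + eta + rng.gauss(0.0, sd_iov)
                       for _ in range(n_occasions)])     # occasion-level deviation
    return log_cl

n_occasions = 3
log_cl = simulate_log_clearance(150, n_occasions, iiv_cv=0.30, iov_cv=0.75)

# One occasion only: IIV and IOV cannot be separated, so "IIV" is inflated
apparent_iiv_var = statistics.variance([subject[0] for subject in log_cl])

# Three occasions: within-subject variance estimates IOV,
# and the variance of subject means (corrected for IOV) estimates IIV
iov_var_hat = statistics.mean([statistics.variance(subject) for subject in log_cl])
subject_means = [statistics.mean(subject) for subject in log_cl]
iiv_var_hat = statistics.variance(subject_means) - iov_var_hat / n_occasions

print(f"True IIV variance      : {cv_to_log_variance(0.30):.3f}")
print(f"Apparent (one occasion): {apparent_iiv_var:.3f}")
print(f"Estimated IIV / IOV    : {iiv_var_hat:.3f} / {iov_var_hat:.3f}")
```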