Navigating Food Method Validation: A Comparative Guide to Acceptance Criteria Across Global Standards

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive analysis of method validation acceptance criteria, specifically contextualized for the food industry. It explores the foundational principles of validation, details the application of key parameters like accuracy and precision, offers strategies for troubleshooting common pitfalls, and delivers a critical comparison of major regulatory guidelines from ICH, FDA, EMA, and WHO. The content is designed to equip professionals with the knowledge to develop robust, compliant, and fit-for-purpose analytical methods that ensure food safety and quality.

The Pillars of Reliability: Understanding Method Validation Fundamentals in Food Analysis

Method validation is a formal, documented process that provides objective evidence that an analytical method is consistently fit for its intended purpose [1] [2]. In food testing, this confirms that a method reliably measures what it claims to measure—whether detecting pathogens, identifying adulterated products, or quantifying nutritional components—ensuring the safety, authenticity, and quality of the food supply [1] [3].

Validation establishes the performance characteristics and limitations of a method before it is implemented in a laboratory, and it is what ensures that the method produces reliable, accurate, and reproducible results [1]. Assurance is a two-stage process: first, the method must be proven fit for purpose through validation; then each laboratory must demonstrate that it can perform the method properly through verification [2].

Core Principles and Regulatory Landscape of Method Validation

Method validation confirms that a method's performance characteristics meet the requirements for its application. For food testing, these requirements are often defined by international standards and regional regulations. A key standard is the ISO 16140 series, which provides protocols for the validation of alternative microbiological methods against reference methods and for their subsequent verification in individual laboratories [3] [2].

Globally, various regulatory bodies have established guidelines, leading to a complex landscape that companies must navigate for compliance [4]. The table below provides a comparative overview of major regulatory frameworks for analytical method validation.

Table: Comparative Analysis of International Method Validation Guidelines

| Regulatory Body | Key Guidance Documents | Primary Focus & Scope | Common Validation Parameters |
|---|---|---|---|
| International Council for Harmonisation (ICH) | ICH Q2(R2), ICH Q14 [5] | Harmonized technical requirements for pharmaceuticals for human use; widely referenced. | Accuracy, Precision, Specificity, LOD, LOQ, Linearity, Robustness [4] |
| European Medicines Agency (EMA) | Adopts ICH guidelines [4] | Regulatory oversight for medicines in the European Union. | Aligned with ICH parameters [4] |
| World Health Organization (WHO) | WHO Draft on Analytical Method Validation [4] | Global public health, including essential medicines and prequalification programs. | Quality, safety, and efficacy; requirements may vary [4] |
| ASEAN | ASEAN Analytical Validation Guidance [4] | Regional requirements for member states of the Association of Southeast Asian Nations. | Quality, safety, and efficacy; requirements may vary [4] |
| ISO (for Food Chain Microbiology) | ISO 16140 series (Parts 1-7) [2] | Protocol for validation and verification of alternative microbiological methods in the food chain. | Includes method comparison and interlaboratory study for certification [3] [2] |

A foundational concept in modern method validation is the Analytical Target Profile (ATP), which defines the intended purpose of the method by specifying the required quality of the measurement before method development begins [5]. The ATP provides the basis for the required method development and subsequent method validation parameters [5].

Essential Validation Parameters and Acceptance Criteria

The fitness of an analytical method is demonstrated by evaluating a set of key performance parameters. The specific criteria for each parameter depend on the method's intended use, whether for quantitative analysis, qualitative detection, or identification [1].

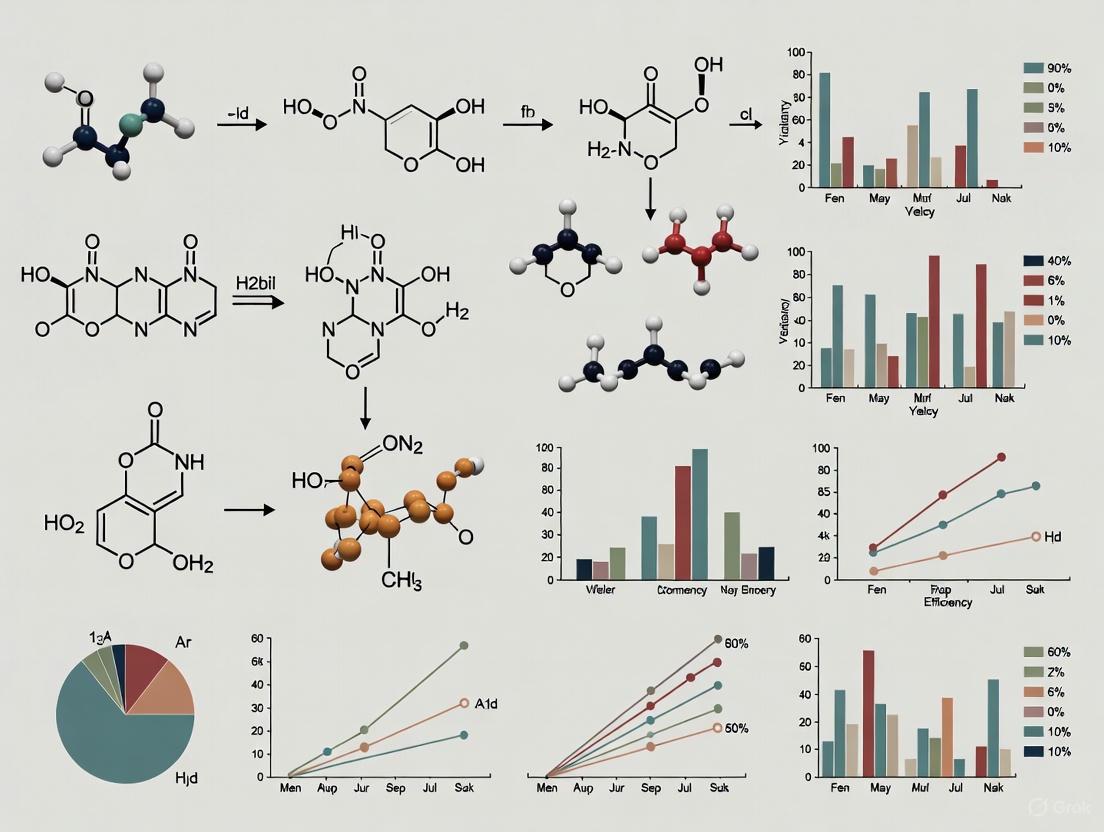

The following diagram illustrates the logical workflow and relationships between the core components of the method validation lifecycle.

The table below details the experimental protocols and acceptance criteria for these core validation parameters.

Table: Experimental Protocols for Core Validation Parameters

| Parameter | Experimental Protocol & Methodology | Typical Acceptance Criteria |
|---|---|---|
| Accuracy [1] | Analysis of samples spiked with known concentrations of the analyte (for quantitative assays). Comparison of results with a certified reference material or a validated reference method. For botanical identification, a sample is compared with an authenticated reference standard [1]. | For quantitative analysis: Recovery of the known amount should be within a specified range (e.g., 90-110%). For identification: Correct and consistent identification against the reference standard [1]. |
| Precision [1] | Repeatability (Intra-day): Multiple analyses of the same homogeneous sample under the same operating conditions over a short time. Intermediate Precision (Inter-day): Analysis of the same sample on different days, by different analysts, or with different equipment [1]. | Expressed as Relative Standard Deviation (RSD). Acceptance depends on the analyte and concentration but is typically ≤ 5% RSD for intra-day precision and slightly higher for inter-day precision [1]. |
| Specificity [1] | Analysis of pure analyte in the presence of other likely components (e.g., impurities, matrix components) to prove that the response is due solely to the target. For botanicals, testing against closely related species [1]. | The method should be able to distinguish the analyte from all other components. No interference from the sample matrix or other analytes should be observed [1]. |
| Limit of Detection (LOD) [1] | Analysis of samples with known low concentrations of the analyte. The LOD is determined as the lowest concentration at which the analyte can be reliably detected. Statistical methods based on the signal-to-noise ratio (e.g., 3:1) are common [1]. | The lowest concentration that gives a detectable signal, distinguished from background noise, with acceptable precision [1]. |
| Robustness [1] | Deliberate, small variations in method parameters (e.g., mobile phase composition, temperature, pH, incubation time) are introduced to evaluate the method's resilience. | The method should continue to perform acceptably (meet all validation criteria) despite minor, intentional changes in operational parameters [1]. |

Experimental Design for Method Comparison and Equivalence

A common scenario in method validation is demonstrating the equivalence of a new or modified method to an existing one. A streamlined, efficient approach often starts with a paper-based assessment of the methods and progresses to a data assessment [5].

The core principle of an equivalence study is to demonstrate that results generated using either the original or the proposed method yield insignificant differences in accuracy and precision, leading to the same accept or reject decision for a sample [5].

Table: Essential Research Reagents and Materials for Method Equivalence Studies

| Item Category | Specific Examples | Critical Function in the Experiment |
|---|---|---|
| Reference Standards | Certified Reference Materials (CRMs), Authenticated Botanical Reference Material [1] | Provides a material with a known, traceable quantity of analyte. Serves as the benchmark for determining accuracy and calibrating instruments. |
| Control Samples | Negative Controls, Positive Controls (e.g., samples spiked with known analyte), Incurred Samples [5] | Verifies the method is performing correctly during the study. Negative controls check for interference; positive controls confirm the method can detect the analyte. |
| Sample Matrices | Blank matrix (e.g., food material without the analyte), representative food categories (dairy, meat, grains) [2] | Used to prepare calibration standards and fortified samples. Essential for testing specificity and demonstrating the method's performance in the real sample context. |
| Culture Media & Reagents | Selective agars, enrichment broths, biochemical confirmation reagents [2] | For microbiological methods, these are critical for the growth and identification of target microorganisms and are specified in the validation scope. |

The following workflow diagram outlines the key stages in a method equivalence study, from planning to regulatory submission.

Statistical tools are essential for evaluating equivalency. While basic statistical tools (e.g., mean, standard deviation, pooled standard deviation) may be sufficient for simple methods, United States Pharmacopeia (USP) <1010> presents numerous methods and statistical tools for designing, executing, and evaluating equivalency protocols [5]. A deep knowledge of the methods being evaluated and the material or product being tested is crucial for selecting the appropriate statistical approach [5].
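As a rough illustration of the basic statistics mentioned above, the following Python sketch computes the difference in means and the pooled standard deviation for two sets of results obtained on the same homogeneous sample. The function name `compare_methods` and the numerical values are hypothetical and are not taken from USP <1010> or any cited study; a formal equivalence decision would still require predefined acceptance limits.

```python
from math import sqrt
from statistics import mean, stdev


def compare_methods(original, proposed):
    """Summarize two result sets from the same sample (illustrative only)."""
    n1, n2 = len(original), len(proposed)
    s1, s2 = stdev(original), stdev(proposed)
    # Pooled standard deviation: variances weighted by their degrees of freedom.
    pooled_sd = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    return {
        "mean_difference": mean(proposed) - mean(original),
        "pooled_sd": pooled_sd,
    }


# Hypothetical replicate results in mg/kg.
original = [10.1, 9.9, 10.0, 10.2, 9.8]
proposed = [10.0, 10.3, 10.1, 9.9, 10.2]

summary = compare_methods(original, proposed)
print(summary)
```

Comparing the mean difference against the pooled standard deviation gives a first impression of whether the two methods disagree by more than their combined random scatter.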

Method validation is the scientific and regulatory bedrock of reliable food testing. It is the definitive process that transforms an analytical procedure from a theoretical technique into a trusted tool for decision-making. As global supply chains become more complex and regulatory scrutiny intensifies, the principles of robust method validation—accuracy, precision, specificity, and reliability—become even more critical. For researchers and scientists, a deep understanding of validation parameters, regulatory expectations, and experimental design for demonstrating equivalence is not merely a compliance exercise but a fundamental aspect of ensuring food safety, protecting public health, and maintaining brand integrity.

In the realm of pharmaceutical and food safety testing, the reliability of analytical data forms the bedrock of quality control, regulatory submissions, and ultimately, consumer safety [6]. Analytical method validation provides documented evidence that a laboratory test method is fit for its intended purpose, ensuring that the results it produces are accurate, reliable, and reproducible [7]. For researchers and drug development professionals, understanding the core validation parameters is not merely a regulatory formality but a fundamental scientific responsibility. The International Council for Harmonisation (ICH) guidelines, particularly Q2(R2) on "Validation of Analytical Procedures," provide the globally recognized framework for defining these parameters [6]. This guide offers a detailed, comparative examination of the seven core validation parameters—Specificity, Accuracy, Precision, Limit of Detection (LOD), Limit of Quantitation (LOQ), Linearity, and Robustness—within the context of food method validation, complete with experimental protocols and data presentation.

The Seven Core Validation Parameters: Definitions and Regulatory Significance

The ICH Q2(R2) guideline outlines the fundamental performance characteristics that must be evaluated to prove an analytical method is valid [6]. While their application can vary based on the method's type (e.g., identification vs. quantitative assay), these seven parameters collectively ensure a method is reliable.

Specificity/Selectivity

Specificity is the ability of a method to assess the analyte unequivocally in the presence of other components that may be expected to be present in the sample matrix [6] [8]. This ensures that a peak's response is due to a single component, with no interferences from impurities, degradation products, or matrix components [8]. In chromatography, specificity is demonstrated by the resolution of the two most closely eluted compounds, typically the active ingredient and a closely eluting impurity [8]. The use of peak purity tests based on photodiode-array (PDA) detection or mass spectrometry (MS) is highly recommended for unequivocal demonstration of specificity [8].

Accuracy

Accuracy expresses the closeness of agreement between the test result and an accepted reference value (e.g., a true value or a conventional value) [6] [8]. It is typically measured as the percent recovery of a known, added amount of analyte and is established across the specified range of the method [8]. For drug products, accuracy is evaluated by analyzing synthetic mixtures spiked with known quantities of components [8]. The guidelines recommend that data be collected from a minimum of nine determinations over a minimum of three concentration levels covering the specified range [8].

Precision

Precision signifies the closeness of agreement among individual test results from repeated analyses of a homogeneous sample [8]. It is commonly evaluated at three levels:

- Repeatability (Intra-assay Precision): The precision under the same operating conditions over a short time interval [6] [8]. It is assessed with a minimum of nine determinations covering the specified range or six determinations at 100% of the test concentration [8].

- Intermediate Precision: The agreement between results from within-laboratory variations due to random events, such as different days, analysts, or equipment [6] [8]. An experimental design is used to monitor the effects of these individual variables.

- Reproducibility: The precision between different laboratories, typically assessed during collaborative studies [8].

Limit of Detection (LOD) and Limit of Quantitation (LOQ)

- LOD is the lowest concentration of an analyte in a sample that can be detected, but not necessarily quantitated, under the stated experimental conditions [7] [8]. It is a limit test that specifies whether an analyte is above or below a certain value [8].

- LOQ is the lowest concentration of an analyte that can be determined with acceptable precision and accuracy [7] [8].

The most common way of determining LOD and LOQ is by using signal-to-noise ratios (S/N), typically 3:1 for LOD and 10:1 for LOQ [8]. An alternative method involves calculation based on the standard deviation of the response and the slope of the calibration curve: LOD = 3.3(SD/S) and LOQ = 10(SD/S), where SD is the standard deviation of the response and S is the slope of the calibration curve [7].

Linearity and Range

- Linearity is the ability of the method to elicit test results that are directly proportional to the concentration of the analyte in the sample within a given range [6] [8].

- Range is the interval between the upper and lower concentrations of an analyte that have been demonstrated to be determined with suitable levels of precision, accuracy, and linearity [6] [8]. Guidelines specify that a minimum of five concentration levels be used to determine the range and linearity [8].

Robustness

Robustness is a measure of a method's capacity to remain unaffected by small, deliberate variations in method parameters, such as pH, flow rate, mobile phase composition, or temperature [7] [6] [8]. A robust method reduces out-of-specification results and increases long-term reliability [9]. It provides an indication of the method's reliability during normal use and is a key part of the lifecycle management concept introduced in the modernized ICH guidelines [6].

The following workflow illustrates the logical relationship and the process of evaluating these core validation parameters.

Comparative Analysis of Calibration Methods in Food Analysis: A Case Study on Ochratoxin A

The choice of calibration strategy is critical for achieving accurate results, especially when analyzing complex food matrices where matrix effects—ionization suppression or enhancement of trace analytes by co-eluting matrix components—can significantly bias results [10] [11]. A 2023 study on the quantification of Ochratoxin A (OTA) in wheat flour provides an excellent experimental model for comparing the performance of different calibration methods [11].

Experimental Protocol for Ochratoxin A Analysis

- Materials: Canada Western Red Spring (CWRS) and Canada Western Amber Durum (CWAD) wheat samples; certified reference materials (CRMs) for native OTA (OTAN-1) and stable isotope-labelled [¹³C₆]-OTA (OTAL-1); rye flour CRM (MYCO-1) as a quality control sample [11].

- Sample Preparation: Test portions of flour (5 g) were spiked with the internal standard solution and extracted with 11.1 g of 85% acetonitrile/water (by volume) via vortexing, orbital shaking (1 h, 450-475 RPM), and centrifugation [11].

- LC-HRMS Analysis: Analysis was performed using UHPLC coupled to a high-resolution Orbitrap mass spectrometer in positive ion mode. Separation was achieved on a C18 column with a water-acetonitrile gradient and acetic acid as a mobile phase modifier [11].

- Calibration Methods Compared:

  - External Calibration: Relies on a calibration curve prepared with pure standard solutions without the sample matrix.

  - Single Isotope Dilution Mass Spectrometry (ID1MS): A known amount of isotopically labelled internal standard is spiked into the sample. The analyte concentration is determined from the ratio of the analytical signals of the analyte and internal standard [11].

  - Double Isotope Dilution Mass Spectrometry (ID2MS): The internal standard is spiked into both the sample and a calibration standard solution of unlabelled analyte, negating the need to know the exact concentration of the internal standard [11].

  - Quintuple Isotope Dilution Mass Spectrometry (ID5MS): A "multi-spike" approach where multiple calibration standard solutions are prepared to bracket the samples, overcoming deviations from perfect exact-matching [11].

Performance Data and Comparison

The following table summarizes the quantitative results and performance characteristics of the different calibration methods used in the OTA case study, demonstrating the profound impact of calibration choice on accuracy.

Table 1: Comparison of Calibration Methods for Ochratoxin A (OTA) in Flour CRM MYCO-1 (Certified Value: 3.17–4.93 µg/kg) [11]

| Calibration Method | Principle | Result for MYCO-1 | Accuracy Assessment | Key Finding |
|---|---|---|---|---|
| External Calibration | Curve from pure standard solutions | 18-38% lower than the certified value | Not accurate (outside certified range) | Significant bias due to matrix suppression effects. |
| ID1MS | Single point isotopic dilution | Within certified range | Accurate | Simpler but potentially biased by isotopic enrichment of internal standard. |
| ID2MS / ID5MS | Exact-matching / multi-spike isotopic dilution | Within certified range | Highly accurate | Most reliable, compensating for all method variabilities and biases. |

The data clearly show that external calibration failed to provide accurate results, yielding values 18-38% lower than the certified value because matrix suppression effects were not compensated [11]. All isotope dilution methods produced accurate results within the certified range, validating their effectiveness in compensating for matrix effects and analyte losses [11]. However, a consistent 6% decrease in OTA mass fraction was observed with ID1MS compared to ID2MS and ID5MS, attributed to a slight isotopic enrichment bias in the internal standard CRM [11]. This highlights that while ID1MS is simpler, ID2MS and ID5MS offer superior accuracy by negating such biases.

Experimental Protocols for Determining Core Validation Parameters

This section outlines general methodologies for experimentally establishing key validation parameters, as derived from ICH guidelines and industry best practices [7] [8].

Protocol for Assessing Accuracy and Precision

Accuracy and precision are often evaluated concurrently in a single experimental design [8].

- Sample Preparation: Prepare a minimum of nine samples at three concentration levels (e.g., 80%, 100%, 120% of the target concentration) covering the specified range. Each concentration level should have three replicates.

- Analysis: Analyze all samples using the method under validation.

- Calculation:

  - Accuracy: For each spiked sample, calculate the percent recovery of the known, added amount. Report the mean recovery and confidence intervals for each concentration level.

  - Precision (Repeatability): Calculate the relative standard deviation (%RSD) of the results for the replicate measurements at each concentration level.

- Intermediate Precision: Repeat the above experiment on a different day with a different analyst and/or a different instrument. Compare the mean results and %RSD from both sets to demonstrate consistency.
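The recovery and %RSD calculations in this protocol can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the helper `recovery_and_rsd` is a hypothetical name, and the spiked levels and measured values are invented to mimic an 80/100/120% design with three replicates each.

```python
from statistics import mean, stdev


def recovery_and_rsd(spiked, measured):
    """Return (mean percent recovery, %RSD) for one concentration level."""
    recoveries = [100.0 * m / spiked for m in measured]
    rsd = 100.0 * stdev(measured) / mean(measured)
    return mean(recoveries), rsd


# Hypothetical data: spiked amount -> three replicate measurements (same units).
levels = {
    80.0: [79.2, 80.4, 80.4],
    100.0: [99.0, 101.0, 100.0],
    120.0: [118.8, 121.2, 120.0],
}

results = {level: recovery_and_rsd(level, values) for level, values in levels.items()}
for level, (rec, rsd) in results.items():
    print(f"level {level}: mean recovery {rec:.1f}%, RSD {rsd:.2f}%")
```

Each level's mean recovery and %RSD would then be compared with the acceptance criteria set for the analyte and matrix in question.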

Protocol for Determining LOD and LOQ

The signal-to-noise ratio method is widely used in chromatographic analyses [8].

- Preparation: Prepare and analyze a series of samples with known, low concentrations of the analyte.

- Measurement: For each chromatogram, measure the signal (S) of the analyte peak and the noise (N) from the baseline in a blank sample or a region close to the analyte's retention time.

- Calculation:

  - LOD: The concentration at which the average S/N ratio is approximately 3:1.

  - LOQ: The concentration at which the average S/N ratio is approximately 10:1.

- Verification: Once the limits have been calculated, the method's performance at the LOD and LOQ must be confirmed by analyzing an appropriate number of samples at those limits to demonstrate detection (for LOD) and quantitation with acceptable precision and accuracy (for LOQ) [8].
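The signal-to-noise screening described in this protocol might be sketched as follows. Note the assumptions: noise is taken here as the standard deviation of baseline readings and signal as peak height above the mean baseline, which is only one of several conventions in use (peak-to-peak noise is another); the function names and all numbers are hypothetical.

```python
from statistics import mean, stdev


def signal_to_noise(peak_height, baseline):
    """S/N as (peak height above mean baseline) / (SD of baseline). Assumed convention."""
    return (peak_height - mean(baseline)) / stdev(baseline)


def lowest_level_meeting(sn_by_conc, threshold):
    """Lowest tested concentration whose S/N reaches the threshold, or None."""
    passing = [conc for conc, sn in sn_by_conc.items() if sn >= threshold]
    return min(passing) if passing else None


# Hypothetical baseline readings from a blank injection (detector units).
baseline = [1.0, 3.0, 2.0, 1.0, 3.0]
# Hypothetical apex readings for low-level spikes: concentration (ug/kg) -> height.
peak_heights = {0.05: 4.0, 0.1: 5.5, 0.2: 9.0, 0.5: 13.0, 1.0: 24.0}

sn = {conc: signal_to_noise(h, baseline) for conc, h in peak_heights.items()}
lod_estimate = lowest_level_meeting(sn, 3.0)
loq_estimate = lowest_level_meeting(sn, 10.0)
print(lod_estimate, loq_estimate)
```

The estimates are limited to the concentrations actually tested, so they are starting points for the verification step rather than final limits.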

Protocol for Testing Robustness

Robustness testing involves deliberately introducing small, plausible variations to method parameters [7] [8].

- Identify Parameters: Select critical method parameters that could vary, such as mobile phase pH (±0.2 units), flow rate (±5-10%), column temperature (±2-3°C), or detection wavelength (±2-3 nm).

- Experimental Design: Use an experimental design (e.g., a Plackett-Burman design) to efficiently evaluate the effect of multiple parameters simultaneously.

- Analysis: Analyze a system suitability sample or a reference standard under each varied condition.

- Evaluation: Monitor critical performance attributes such as retention time, resolution, tailing factor, and peak area. The method is considered robust if these attributes remain within predefined acceptance criteria despite the variations.
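To make the Plackett-Burman idea concrete, the sketch below builds the standard 8-run, two-level design by cyclically shifting a generator row and then estimates main effects from a response. The factor names, the simulated resolution values, and the helper names are assumptions made for illustration; a real study would use measured responses and would normally rely on a dedicated design-of-experiments tool.

```python
def plackett_burman_8():
    """8-run Plackett-Burman design for up to 7 two-level factors (+1 / -1)."""
    gen = [1, 1, 1, -1, 1, -1, -1]
    # Seven cyclic shifts of the generator row, then one row of all low levels.
    rows = [gen[-i:] + gen[:-i] for i in range(7)]
    rows.append([-1] * 7)
    return rows


def main_effects(design, responses, factor_names):
    """Main effect = mean response at the high level minus mean at the low level."""
    n = len(design)
    return {
        name: 2.0 * sum(row[j] * y for row, y in zip(design, responses)) / n
        for j, name in enumerate(factor_names)
    }


design = plackett_burman_8()

# Hypothetical factors; the remaining three columns act as unused dummy factors.
factors = ["mobile_phase_pH", "flow_rate", "column_temp", "wavelength"]

# Simulated resolution values in which only pH has an influence (assumption).
responses = [2.0 + 0.1 * row[0] for row in design]

effects = main_effects(design, responses, factors)
print(effects)
```

Effects that are small relative to the predefined acceptance criteria suggest the method tolerates that variation; a large effect flags a parameter that needs tighter control.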

The following diagram maps this systematic process for evaluating method robustness.

Essential Research Reagent Solutions for Method Validation

The following table details key reagents and materials essential for successfully developing and validating robust analytical methods, particularly for complex food matrices.

Table 2: Key Research Reagent Solutions for Analytical Method Validation

| Reagent / Material | Function & Importance in Validation | Example from Case Study |
|---|---|---|
| Certified Reference Materials (CRMs) | Provides a traceable standard with a certified value, crucial for determining method Accuracy and trueness [11]. | MYCO-1 (OTA in rye flour CRM) used to evaluate calibration method accuracy [11]. |
| Stable Isotope-Labelled Internal Standards (IS) | Compensates for analyte loss during sample preparation and for matrix effects during MS analysis, improving both accuracy and precision [10] [11]. | [¹³C₆]-Ochratoxin A (OTAL-1) used in ID1MS, ID2MS, and ID5MS calibration [11]. |
| High-Purity Mobile Phase Modifiers | Critical for achieving consistent chromatographic separation (peak shape, retention) and stable ionization in MS, directly impacting Specificity and Robustness [11] [12]. | Formic acid and acetic acid of LC-MS grade used in the OTA study and honey fingerprinting study [11] [12]. |
| Characterized Real-World Samples | Authentic, well-characterized samples are vital for testing Specificity against a representative matrix and for building models in untargeted analysis [12]. | Honey samples of verified geographical origin used to develop the untargeted LC-HRMS classification model [12]. |

The rigorous assessment of the seven core validation parameters is indispensable for generating reliable data that supports product quality, safety, and regulatory compliance. As demonstrated by the OTA case study, the choice of calibration methodology can have a profound impact on accuracy, especially when dealing with complex matrices and sophisticated detection techniques like LC-HRMS. The trend in modern analytical science, reflected in the latest ICH Q2(R2) and Q14 guidelines, is moving towards a more holistic, lifecycle management approach [6]. This emphasizes a deeper scientific understanding of the method, facilitated by risk assessment and proactive planning via an Analytical Target Profile (ATP). For researchers and scientists, mastering these core parameters and their experimental determination is not the end goal, but the foundation for developing robust, fit-for-purpose methods that ensure public safety and uphold the integrity of the scientific process.

In the landscape of modern food safety, validation has transitioned from a recommended practice to an absolute regulatory requirement. For researchers and scientists developing analytical methods, understanding this imperative is crucial. Validation provides the scientific evidence that a control measure, process, or analytical method is capable of effectively controlling an identified hazard or producing reliable results [13] [14]. Within regulatory frameworks, it answers the fundamental question: "Is our plan or method effective based on objective evidence?" [14].

The U.S. Food and Drug Administration (FDA) mandates under the Food Safety Modernization Act (FSMA) that facilities must validate their process preventive controls to demonstrate that such controls are adequate for the identified hazard [15] [14]. This principle is echoed across international standards, including ISO 22000, which requires that control measures be validated prior to implementation [13]. For the scientific community, this translates to a non-negotiable need to establish rigorous, defensible, and scientifically sound validation protocols that meet defined acceptance criteria, ensuring that methods are not just theoretically sound but empirically proven under specified conditions.

The Regulatory Landscape Governing Validation

Key Regulations and Standards Mandating Validation

Validation's role is codified in major food safety regulations and standards globally. These frameworks share a common requirement for scientific proof of efficacy but differ in their specific foci and applications.

- FDA Food Safety Modernization Act (FSMA): The Preventive Controls for Human Food rule explicitly requires that "process controls include procedures to ensure the control parameters are met" and must be validated [15]. This is not a suggestion but a binding legal requirement for registered facilities.

- ISO 22000:2018: This international standard stipulates in Clause 8.5.3 that validation must be completed prior to the implementation of control measures to ensure their adequacy and effectiveness [13].

- GFSI-Benchmarked Standards (BRCGS, SQF, FSSC 22000, IFS): Standards recognized by the Global Food Safety Initiative (GFSI) integrate the requirement for validation within their food safety management systems. Their specific requirements, particularly regarding food fraud vulnerability assessments, are compared in the table below [16].

Table: Comparison of Food Fraud Validation and Verification Requirements in Major GFSI Standards

| Standard | Validation/Assessment Explicitly Required? | Food Types for Assessment | Documented Procedure Required? | Training Explicitly Mentioned? |
|---|---|---|---|---|
| BRCGS (Issue 9) | Yes | Raw materials | - | "Knowledge" is required [16] |
| SQF (Edition 9) | Implied | Raw materials, Ingredients, Finished products | Implied ("methods shall be documented") | Yes [16] |
| FSSC 22000 (V6) | Yes | Products and processes | Yes | Yes [16] |
| IFS (Version 8) | Yes | Raw materials, Ingredients, Packaging | Implied | Yes [16] |
| GlobalG.A.P. (V6) | Risk assessment | Not specifically described | - | - [16] |

Distinguishing Validation from Verification

A critical conceptual foundation for scientists is the clear distinction between validation and verification. While complementary, they address different stages of control assurance and are often conflated.

- Validation occurs before implementation and focuses on the capability and design of the control or method. It asks, "Is this capable of working as designed?" and is based on scientific studies, experimental trials, and expert opinions [13] [14].

- Verification occurs after implementation and focuses on ongoing performance and compliance. It asks, "Are we doing it correctly and consistently in practice?" and involves activities like record reviews, calibration, and product testing [17] [13].

Table: Core Differences Between Validation and Verification

| Aspect | Validation | Verification |
|---|---|---|
| Primary Question | Is the control measure or method effective and capable? | Is the system being followed as designed? |
| Timing | Before implementation | After implementation, and continuously |
| Evidence Base | Scientific data, experimental trials, peer-reviewed literature | Monitoring records, audits, calibration certificates, test results |
| Regulatory Reference | ISO 22000:2018 Clause 8.5.3; FSMA Preventive Controls | ISO 22000:2018 Clause 8.8; FSMA monitoring requirements [13] [15] |

The following workflow diagram illustrates the distinct yet interconnected roles of validation and verification within a food safety management system.

Experimental Protocols for Method Validation

For scientists, the core of validation lies in executing structured experimental protocols to define and confirm a method's performance characteristics. The following section outlines standard methodologies for establishing acceptance criteria.

Protocol 1: Establishing Accuracy and Precision

Objective: To quantitatively determine the systematic error (accuracy) and random error (precision) of an analytical method for a specified analyte.

Methodology:

- Sample Preparation: Prepare a minimum of five replicates of the test material at three different concentration levels (low, medium, high) spanning the method's range. Use certified reference materials (CRMs) where available for accuracy determination.

- Analysis: Analyze all replicates using the method under validation, following its standard operating procedure.

- Data Analysis:

  - Accuracy: Calculate the percent recovery for each sample. Compare the mean measured value to the true value of the CRM or known spike. Acceptance criteria are typically set at 80-110% recovery, depending on the analyte and matrix [14].

  - Precision:

    - Repeatability (Intra-assay): Calculate the relative standard deviation (RSD%) of the replicates within a single run. Acceptance is often <10-15% RSD.

    - Intermediate Precision (Inter-assay): Calculate the RSD% of results generated across different days, by different analysts, or on different equipment. Criteria are typically wider than for repeatability.
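One common way to separate repeatability from intermediate precision is a one-way analysis of variance across runs (days, analysts, or instruments). The sketch below assumes a balanced design with the same number of replicates in every run; the function name and the data are hypothetical, and this is one possible statistical treatment rather than a procedure prescribed by the protocol above.

```python
from math import sqrt
from statistics import mean


def precision_components(runs):
    """Repeatability and intermediate-precision RSD% from balanced runs."""
    k = len(runs)      # number of runs (e.g. days)
    n = len(runs[0])   # replicates per run
    if any(len(r) != n for r in runs):
        raise ValueError("balanced design required: equal replicates per run")
    grand_mean = mean([x for r in runs for x in r])
    run_means = [mean(r) for r in runs]
    # Within-run mean square estimates the repeatability variance.
    ms_within = sum((x - m) ** 2 for r, m in zip(runs, run_means) for x in r) / (k * (n - 1))
    # Between-run mean square also contains the run-to-run variance component.
    ms_between = n * sum((m - grand_mean) ** 2 for m in run_means) / (k - 1)
    var_between = max(0.0, (ms_between - ms_within) / n)
    return {
        "rsd_repeatability": 100.0 * sqrt(ms_within) / grand_mean,
        "rsd_intermediate": 100.0 * sqrt(ms_within + var_between) / grand_mean,
    }


# Hypothetical results: three days, three replicates per day.
runs = [[10.0, 10.2, 9.8], [10.4, 10.6, 10.2], [9.9, 10.1, 9.7]]
precision = precision_components(runs)
print(precision)
```

Because the intermediate-precision variance adds the between-run component to the repeatability variance, its RSD can never be smaller than the repeatability RSD, which matches the expectation of wider criteria.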

Protocol 2: Determining Limit of Detection (LOD) and Limit of Quantitation (LOQ)

Objective: To define the lowest concentration of an analyte that can be reliably detected and quantified by the method.

Methodology (Based on Signal-to-Noise):

- Blank Analysis: Analyze a minimum of 10 independent blank samples (matrix without the analyte).

- Low-Level Spike: Analyze multiple replicates (e.g., n=5) of a sample spiked at a concentration near the expected detection limit.

- Data Analysis:

- LOD: Typically determined as the concentration yielding a signal-to-noise ratio of 3:1. Alternatively, calculate from the standard deviation of the blank response (LOD = 3.3 * σ/S, where σ is the standard deviation of the blank and S is the slope of the calibration curve).

- LOQ: Typically determined as the concentration yielding a signal-to-noise ratio of 10:1, or calculated as LOQ = 10 * σ/S. The LOQ must also meet predefined accuracy and precision criteria.
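The standard-deviation approach to LOD and LOQ reduces to two formulas, sketched below. The blank responses and the calibration slope are hypothetical values chosen for illustration, and the function name is an assumption.

```python
from statistics import stdev

def lod_loq(blank_responses, slope):
    """Return (LOD, LOQ) from blank response SD and calibration slope.

    LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S, as stated in the protocol.
    """
    sigma = stdev(blank_responses)
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical instrument responses for 10 independent blank samples
blanks = [1.0, 1.2, 0.8, 1.1, 0.9, 1.0, 1.3, 0.7, 1.0, 1.0]
slope = 50.0  # assumed calibration slope (response units per mg/kg)

lod, loq = lod_loq(blanks, slope)
print(f"LOD = {lod:.4f} mg/kg, LOQ = {loq:.4f} mg/kg")
```

A calculated LOQ is only a starting point: as noted above, it still has to be confirmed against the predefined accuracy and precision criteria using spiked samples.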

Protocol 3: Assessing Linearity and Range

Objective: To demonstrate that the analytical method produces results that are directly proportional to the concentration of the analyte in the sample within a specified range.

Methodology:

- Calibration Standards: Prepare a series of at least five standard solutions across the intended working range (e.g., 50-150% of the target concentration).

- Analysis: Analyze each standard in triplicate.

- Data Analysis: Plot the mean response against the concentration. Perform linear regression analysis to obtain the correlation coefficient (r), slope, and y-intercept. The range is considered valid where the method demonstrates linearity (typically r ≥ 0.995) and meets accuracy and precision criteria.
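As a rough sketch of the regression step, the following computes slope, intercept, and correlation coefficient by ordinary least squares using only the standard library. The concentrations (as % of target) and mean responses are hypothetical, and the r ≥ 0.995 check mirrors the typical criterion quoted above.

```python
from math import sqrt
from statistics import mean

def linear_fit(x, y):
    """Ordinary least-squares fit; returns (slope, intercept, r)."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / sqrt(sxx * syy)
    return slope, intercept, r

# Hypothetical five-point calibration (mean of triplicate responses per level)
concentration = [50, 75, 100, 125, 150]
response = [251, 374, 502, 626, 749]

slope, intercept, r = linear_fit(concentration, response)
linearity_ok = r >= 0.995

print(f"slope = {slope:.3f}, intercept = {intercept:.2f}, r = {r:.5f}")
print(f"linearity ok: {linearity_ok}")
```

A high r alone does not prove linearity; inspecting the residuals for curvature is a common complementary check.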

The Scientist's Toolkit: Essential Reagents and Materials for Validation Studies

Table: Key Research Reagent Solutions for Food Safety Validation Experiments

| Reagent/Material | Function in Validation | Example Application |

|---|---|---|

| Certified Reference Materials (CRMs) | Provides a traceable, known quantity of analyte for establishing method accuracy and calibrating instruments. | Determining recovery rates for a heavy metal analysis method [14]. |

| Selective Culture Media & Agar | Used in microbiological validation to enumerate specific pathogens or indicators, confirming the efficacy of a kill-step. | Validating a thermal process for Salmonella inactivation in a cooked product [14]. |

| Enzymes & Substrates | Key components in developing and validating enzymatic assays or immunoassays for specific chemical contaminants or allergens. | Detecting and quantifying peanut allergen residues in a product claiming to be allergen-free. |

| Stable Isotope-Labeled Internal Standards | Used in chromatographic-mass spectrometric methods to correct for matrix effects and analyte loss, improving accuracy and precision. | Validating an LC-MS/MS method for pesticide residue analysis in a complex food matrix. |

| Inactivation Chemicals (e.g., Dey-Engley Neutralizing Broth) | Critical for validating sanitation processes; neutralizes residual sanitizers on contact surfaces to allow for accurate microbiological testing. | Conducting surface swabs to verify the effectiveness of a cleaning and sanitizing protocol. |

Validation is the cornerstone of a modern, preventive food safety system. For the scientific community, it is not merely a regulatory hurdle but a fundamental principle of sound research and development. The process of gathering objective evidence to prove a method or control works as intended transforms food safety from an aspiration into a demonstrable, defensible reality. As global supply chains become more complex and regulatory scrutiny intensifies, the ability to design, execute, and document robust validation protocols becomes an indispensable skill. It is this scientific rigor that ultimately builds the foundation for consumer trust and public health protection.

Distinguishing Between Validation, Verification, and Transfer in a Quality Control Environment

In the rigorously regulated landscapes of pharmaceutical development and food safety testing, the reliability of analytical data is paramount. This reliability is anchored in three critical, yet distinct, processes: method validation, method verification, and method transfer. A clear understanding of these processes is not merely a regulatory formality but a fundamental component of data integrity and product quality assurance [9]. Confusion between these terms can lead to significant compliance issues, with method validation being a frequent subject of FDA inspectional observations and Warning Letters [9].

This guide provides a structured comparison of these core processes. It is designed to equip researchers, scientists, and drug development professionals with the knowledge to implement these procedures correctly, ensuring that analytical methods are not only scientifically sound but also fully compliant with evolving regulatory standards from bodies like the FDA, USP, and ICH [9] [18]. The content is framed within the context of food method validation to highlight the practical application of these concepts.

Core Definitions and Concepts

Method Validation

Method validation is the comprehensive, documented process of proving that an analytical procedure is suitable for its intended purpose [9]. It is performed when a new method is developed, when an existing method is significantly changed, or when a method is applied to a new matrix [19] [9]. The process involves rigorous testing of multiple performance characteristics to build a complete picture of the method's capabilities and limitations. As underscored by regulatory guidelines like USP <1225> and ICH Q2(R1), validation is essential for new drug applications and novel assay development, providing high confidence in data quality and universal applicability [19] [9].

Method Verification

Method verification, in contrast, is the process of confirming that a previously validated method performs as expected in a specific laboratory [19] [20]. It is not as exhaustive as validation but is a critical step for quality assurance when a laboratory adopts a standard or compendial method (e.g., from USP, EP, or AOAC) for the first time [19] [9] [18]. The goal is to provide documented evidence that the laboratory can successfully execute the method with its specific personnel, equipment, and reagents [20]. This process is ideal for compendial methods and supports lab accreditation under standards like ISO/IEC 17025 [19].

Method Transfer

Method transfer is the documented process that qualifies a receiving laboratory (such as a quality control lab or a contract research organization) to use an analytical method that originated in a transferring laboratory (such as an R&D site) [9] [21]. This is common when methods move from development to commercial manufacturing sites, between global testing facilities, or when outsourcing GMP activities [18]. The transfer ensures the method performs consistently and reproducibly across different locations, maintaining data integrity throughout a product's lifecycle [21].

Comparative Analysis: Validation, Verification, and Transfer

The table below summarizes the key differences between method validation, verification, and transfer, providing a clear, at-a-glance comparison.

Table 1: Comprehensive Comparison of Method Validation, Verification, and Transfer

| Comparison Factor | Method Validation | Method Verification | Method Transfer |

|---|---|---|---|

| Primary Objective | To prove a method is suitable for its intended use [9] | To confirm a lab can perform a validated method correctly [20] | To qualify a receiving lab to use an existing method [9] |

| Typical Initiating Event | New method development; regulatory submission (e.g., NDA, ANDA) [19] [9] | First-time use of a compendial or standard method in a lab [19] [9] | Moving a method between labs/sites (e.g., R&D to QC) [18] [21] |

| Scope & Complexity | Comprehensive and rigorous [19] | Limited and confirmatory [19] | Comparative testing to demonstrate equivalence [21] |

| Key Parameters Assessed | Accuracy, Precision, Specificity, Linearity, Range, LOD, LOQ, Robustness [9] | Accuracy, Precision, LOD/LOQ (as applicable) [9] | Precision, Intermediate Precision, Accuracy, Specificity [21] |

| Regulatory Foundation | ICH Q2(R1), USP <1225>, FDA Guidance [19] [9] | ISO/IEC 17025, GLP [19] [20] | USP, FDA/EMA Guidance on method transfer [9] [21] |

| Resource Intensity | High (time, cost, expertise) [19] | Moderate to Low [19] | Moderate (requires coordination between sites) [21] |

| Output | Full validation report proving method fitness [9] | Verification report demonstrating lab competency [20] | Transfer report confirming success at receiving site [21] |

Experimental Protocols and Methodologies

Protocol for Method Validation

A full method validation follows a strict protocol to assess defined performance characteristics. The following workflow outlines the key stages and parameters evaluated in a typical validation process, illustrating its comprehensive nature.

Key Experimental Details:

- Accuracy: Determined by spiking a known amount of the target analyte into a sample matrix and measuring the recovery percentage. For example, in size-exclusion chromatography (SEC) validation for biologics, aggregates and low-molecular-weight species are generated via controlled chemical reactions and spiked to achieve 80-120% recovery [18].

- Precision: Evaluated through repeatability (multiple analyses of the same homogeneous sample by the same analyst under identical conditions) and intermediate precision (testing variations like different days, analysts, or equipment) [9].

- Robustness: Assessed by introducing small, deliberate changes to method parameters (e.g., temperature, pH, flow rate) to ensure the method's reliability remains unaffected under normal operational variability [9].

Protocol for Method Verification

For verifying a compendial method, the laboratory performs a subset of validation testing. The process is less exhaustive but must be meticulously documented to demonstrate procedural competence.

Table 2: Typical Method Verification Experiments and Acceptance Criteria

| Parameter Verified | Experimental Approach | Typical Acceptance Criteria |

|---|---|---|

| Accuracy/Bias Recovery | Analyze a certified reference material (CRM) or perform a spike/recovery study [9]. | Recovery within 80-120% for a spiked sample, or agreement with CRM reference value [18]. |

| Precision | Perform replicate analyses (e.g., n=6) of a homogeneous sample [9]. | Relative Standard Deviation (RSD) meets pre-defined limits based on method type and analyte level. |

| Limit of Detection (LOD) / Limit of Quantitation (LOQ) | Verify the published LOD/LOQ using signal-to-noise ratio or based on standard deviation of the response [19] [9]. | Consistent detection/quantitation at or below the claimed limit. |

| Measurement Uncertainty & Calibration Model | Assess calibration curve performance and estimate uncertainty budget [9]. | Correlation coefficient (r) >0.998, residuals within expected range. |

Protocol for Method Transfer

The most common approach for method transfer is comparative testing, where a predetermined number of samples are analyzed in both the sending and receiving laboratories [21]. Successful transfer relies on robust communication and clear acceptance criteria defined in a pre-approved protocol.

Key Experimental Details:

- Experimental Design: The sending and receiving laboratories analyze the same set of samples, which can be routine production batches, stability samples, or spiked samples [21]. The number of batches and replicates should be statistically sound.

- Statistical Assessment: For quantitative methods, results are compared using statistical tools. A 2025 study highlights the use of Bland-Altman plots with equivalence testing and Deming regression as robust statistical methods for cross-validation in bioanalysis, ensuring the 95% confidence interval of the mean difference between labs falls within pre-specified boundaries [22].

- Acceptance Criteria: Criteria are based on the method's validation data and its intended purpose. Typical examples include [21]:

- Assay: Absolute difference between the mean results from the two sites should be ≤ 2-3%.

- Related Substances: Recovery of spiked impurities should be 80-120%.

- Dissolution: Absolute difference in mean results is ≤ 10% at time points with <85% dissolved and ≤ 5% at points with >85% dissolved.
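The assay criterion above (absolute difference between site means) is simple to evaluate programmatically. The sketch below assumes a 2% limit and uses hypothetical assay results expressed as % of label claim; the function name and default limit are illustrative choices, not values taken from a specific protocol.

```python
from statistics import mean

def assay_transfer_check(sending, receiving, max_abs_diff=2.0):
    """Return (absolute difference of site means, pass/fail against the limit)."""
    diff = abs(mean(sending) - mean(receiving))
    return diff, diff <= max_abs_diff

# Hypothetical assay results (% of label claim) from each laboratory
sending_lab = [99.8, 100.4, 100.1]
receiving_lab = [98.9, 99.5, 99.2]

difference, transfer_ok = assay_transfer_check(sending_lab, receiving_lab)
print(f"mean difference = {difference:.2f}%, transfer ok: {transfer_ok}")
```

A check on the means alone says nothing about variability, which is why transfer protocols usually pair it with a precision criterion at the receiving site.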

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials critical for conducting validation, verification, and transfer studies, particularly in a food or pharmaceutical context.

Table 3: Essential Reagents and Materials for Analytical Method Studies

| Reagent/Material | Function and Criticality |

|---|---|

| Certified Reference Materials (CRMs) | Provides a traceable and definitive value for the analyte, essential for establishing method accuracy during validation and verification [9]. |

| High-Purity Reference Standards | Used to prepare calibration curves and spiking solutions. Purity and stability are critical for obtaining reliable linearity, accuracy, and LOD/LOQ data [9]. |

| Characterized Spiking Materials | For impurity or pathogen tests (e.g., SEC aggregates, Listeria monocytogenes), well-characterized materials are needed for accuracy/spike recovery studies [20] [18]. |

| Selective Culture Media & Reagents | In microbiology, validated and verified culture media are fundamental for specificity testing, ensuring accurate detection and identification of target organisms [23] [20]. |

| Standardized Method Protocols (e.g., AOAC, USP, ISO) | Provide the official, validated procedure that serves as the baseline for verification or transfer activities [20] [24]. |

Method validation, verification, and transfer are interconnected yet distinct pillars of a robust quality control system. Validation creates the foundational proof of a method's performance, verification confirms a laboratory's operational competence with a standardized method, and transfer ensures methodological consistency across different locations.

The modern validation landscape is evolving, with a 2025 industry report highlighting a shift towards digital validation tools (DVTs) to enhance efficiency, data integrity, and continuous audit readiness [25]. Furthermore, statistical methodologies for cross-validation are being refined, incorporating equivalence testing for more robust assessments [22].

Selecting the correct process is a strategic decision. Use validation for novel methods or regulatory submissions, verification for implementing compendial methods, and transfer when moving validated methods between sites. Adhering to this framework ensures scientific rigor, regulatory compliance, and the generation of reliable, high-quality data that supports drug development and food safety.

From Theory to Practice: Implementing and Applying Validation Parameters for Food Methods

Analytical method validation provides documented evidence that a testing procedure consistently performs as intended for its specific application. For researchers and scientists in drug development and food safety, executing a robust validation is critical for regulatory compliance and data integrity. This guide compares the performance and acceptance criteria of various international validation guidelines, providing a detailed, step-by-step experimental protocol framed within broader research on food method validation acceptance criteria.

Understanding Method Validation, Verification, and Fitness for Purpose

Before initiating validation, understanding key terminology ensures appropriate experimental design:

- Method Validation confirms a method's performance characteristics through testing its ability to detect target analytes under specified conditions, typically performed for particular matrix categories or subcategories [20].

- Method Verification demonstrates that a specific laboratory can successfully execute a previously validated method and correctly detect target organisms [20].

- Fitness for Purpose establishes that a method produces accurate data for making correct decisions in a specific, previously unvalidated application or matrix [20].

The ISO 16140 series outlines that two stages are required before method implementation: initial validation to prove the method is fit-for-purpose, followed by verification to demonstrate laboratory proficiency [2].

Comparative Analysis of International Validation Guidelines

Various international bodies publish validation guidelines with differing emphases. The table below compares key guidelines relevant to food and pharmaceutical analysis:

| Guideline Issuer | Primary Focus | Key Validation Parameters | Typical Application Scope |

|---|---|---|---|

| FDA Foods Program (MDVIP) | Regulatory methods for food safety; multi-laboratory validation (MLV) preferred [26]. | Parameters per MDVIP Standard Operating Procedures; managed via Research Coordination Groups (RCGs) [26]. | Foods Program regulatory mission; chemical, microbiological, and DNA-based methods [26]. |

| ICH Q2(R1) | Harmonized pharmaceutical analysis; scientific rigor in analytical performance [27] [28]. | Specificity, Accuracy, Precision, LOD, LOQ, Linearity, Range, Robustness [27]. | Active pharmaceuticals, excipients, drug products [27] [28]. |

| ISO 16140 (Microbial) | Microbiological methods for food/feed chain; standardized protocols for alternative methods [2]. | Method comparison study, interlaboratory study, RLOD, specificity, sensitivity [29] [2]. | Broad range of foods (15 categories); qualitative and quantitative microbial detection [2]. |

| AOAC International | Official chemical and microbiological methods; proprietary kit validation [20]. | Collaborative study data for specificity, sensitivity, accuracy, precision per Appendix J [29] [20]. | Defined food categories and subcategories (e.g., 8 main, 92 sub) [20]. |

A Step-by-Step Validation Protocol

This protocol synthesizes requirements from major guidelines, with an emphasis on experimental design for microbiological methods as per ISO 16140 and FDA standards.

Step 1: Define the Method's Purpose and Scope

Clearly articulate the method's intended use, target analyte, and applicable matrices. For microbial methods, define the target microorganisms and the food categories according to established groupings (e.g., the 15 categories in ISO 16140-2) [2]. The scope directly dictates the breadth of the validation study.

Step 2: Develop a Validation Plan

Create a detailed protocol specifying the reference method (if applicable), experimental design, sample preparation, number of replicates, performance characteristics to be assessed, and pre-defined acceptance criteria based on the chosen guideline [27] [2]. This plan must be approved before experimentation begins.

Step 3: Execute Experimental Validation - Performance Parameters

The core experimentation involves testing the following performance characteristics, with methodologies detailed below.

Specificity/Selectivity

- Objective: Ensure the method accurately detects the target analyte without interference from other components [27].

- Experimental Protocol:

- For Chemical Methods: Inject individual solutions of the target analyte and potential interferents (e.g., impurities, degradation products, matrix components). Demonstrate resolution of the target peak and use peak purity tests (e.g., photodiode-array or mass spectrometry) to confirm a single component [27].

- For Microbiological Methods: Inoculate the target organism and a panel of related, non-target strains into the test system. The method should yield positive results only for the target strains, demonstrating inclusivity and exclusivity [29] [2].

Accuracy

- Objective: Measure the closeness of test results to the true value [27].

- Experimental Protocol:

- For Drug Substances: Compare results with the analysis of a standard reference material or a second, well-characterized method [27].

- For Drug Products & Foods: Analyze a blank matrix spiked with known quantities of the analyte. Guidelines recommend a minimum of nine determinations over at least three concentration levels covering the specified range [27]. Report percent recovery of the known, added amount.

Precision

- Objective: Measure the degree of agreement among test results when the method is applied repeatedly to multiple samplings of a homogeneous sample [27].

- Experimental Protocol:

- Repeatability: Have the same analyst using the same equipment analyze a minimum of nine determinations at the 100% test concentration or over the specified range. Report as percent relative standard deviation (% RSD). An RSD of <2% is often recommended, but <5% can be acceptable for minor components [27].

- Intermediate Precision: Incorporate variations within a single laboratory (e.g., different analysts, equipment, days) into the study design [27].

- Reproducibility (Multi-Laboratory Validation, MLV): Compare results across different laboratories, as required by the FDA MDVIP for certain methods [26] [29]. The ISO 16140-2 standard provides a definitive protocol for this [2].

Limit of Detection (LOD) and Limit of Quantitation (LOQ)

- Objective: Determine the lowest concentration that can be detected (LOD) and quantified with acceptable precision and accuracy (LOQ) [27].

- Experimental Protocol:

Linearity and Range

- Objective: Demonstrate that the method provides results proportional to analyte concentration within a given interval [27].

- Experimental Protocol:

Robustness

- Objective: Measure the method's capacity to remain unaffected by small, deliberate variations in procedural parameters [27].

- Experimental Protocol:

Step 4: Data Analysis and Comparison to Acceptance Criteria

Analyze all collected data against the pre-defined acceptance criteria from the validation plan and relevant guideline (e.g., ICH, ISO). For microbial methods, this includes calculating metrics like the Relative Level of Detection (RLOD) and assessing negative/positive deviations against acceptability limits, as per ISO 16140-2 [29].

Step 5: Compile the Final Validation Report

The report must comprehensively document the entire process, including:

- Method purpose and scope

- Detailed experimental protocol

- Raw data and statistical analysis for all performance characteristics

- Assessment against acceptance criteria

- Statement of validity and the final, approved method procedure

Case Study: Multi-Laboratory Validation of a Salmonella qPCR Method

A recent study validates an FDA-developed quantitative PCR (qPCR) method for detecting Salmonella in frozen fish, providing a practical application of this protocol [29].

- Guideline Used: FDA Microbiological Method Validation Guidelines and ISO 16140-2:2016 [29].

- Experimental Design: Fourteen laboratories each analyzed twenty-four blind-coded frozen fish test portions using both the qPCR and the BAM culture (reference) methods [29].

- Performance Data and Acceptance Criteria:

- Positive Rate: The qPCR method yielded a ~39% positive rate, within the FDA's required 25%-75% fractional range and comparable to the culture method's ~40% [29].

- Method Agreement: The measures of disagreement (ND-PD and ND+PD) did not exceed the ISO 16140-2 Acceptability Limit [29].

- Sensitivity Comparison: The Relative Level of Detection (RLOD) was approximately 1, demonstrating equivalent performance between the qPCR and reference culture methods [29].

- Conclusion: The study demonstrated the qPCR method was reproducible, specific, and sensitive, validating it as a reliable 24-hour screening tool for Salmonella in frozen fish [29].
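The fractional positive rate check mentioned in the case study can be illustrated as follows. The counts used here are hypothetical and are not the study's data; only the 25%-75% range comes from the text above.

```python
def positive_rate(n_positive, n_total):
    """Fraction of inoculated test portions that returned a positive result."""
    return n_positive / n_total

def in_fractional_range(rate, low=0.25, high=0.75):
    """True when the positive rate falls inside the required fractional range."""
    return low <= rate <= high

# Hypothetical counts for illustration only (not taken from the cited study)
rate = positive_rate(n_positive=47, n_total=120)
fractional_ok = in_fractional_range(rate)

print(f"positive rate = {rate:.1%}, within 25%-75%: {fractional_ok}")
```

Fractional results matter because an inoculation level that yields all-positive or all-negative portions gives no information for comparing the sensitivity of two methods.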

The Scientist's Toolkit: Key Reagents and Materials

The table below lists essential solutions and materials for method validation, drawing from the cited experimental examples.

| Reagent/Material | Function in Validation | Example from Literature |

|---|---|---|

| Standard Reference Material | To establish accuracy by providing a known-concentration sample with certified purity [27]. | Used in drug substance accuracy testing [27]. |

| Blank Matrix Samples | To prepare spiked samples for determining accuracy, precision, LOD, and LOQ in a relevant sample background [27]. | Blank food matrices (e.g., frozen fish, baby spinach) used in microbial method validation [29]. |

| Target Analyte Standard | To prepare calibration standards for linearity, range, and for spiking recovery studies [27]. | Sodium sulfite standard used in food preservative analysis [30]. |

| Primers and Probes | For molecular methods (e.g., qPCR), these are essential for specific amplification and detection of the target sequence [29]. | Custom-designed primers and TaqMan probe targeting the Salmonella invA gene [29]. |

| Selective Enrichment Media | In microbial methods, these promote the growth of the target organism while inhibiting competitors, testing method specificity [29]. | Media used in the FDA BAM culture method for Salmonella [29]. |

| Automated Nucleic Acid Extraction Systems | To ensure high-quality, inhibitor-free DNA extraction for molecular methods, improving sensitivity and enabling high-throughput analysis [29]. | Automatic DNA extraction methods compared to manual boiling in the Salmonella qPCR MLV study [29]. |

Measurement Uncertainty in Validation

A crucial outcome of validation is understanding the method's measurement uncertainty. In quantitative chemical analysis, Type A (experimental) uncertainties, derived from statistical analysis of repeated measurements (e.g., standard deviation), are often the most significant contributors to overall uncertainty. Type B (inherited) uncertainties (e.g., from reference standards) are frequently negligible in comparison [30]. For qualitative methods, uncertainty is expressed as the probability of making a correct or incorrect decision [30].
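To make the Type A versus Type B comparison concrete, the sketch below combines the two contributions by root-sum-of-squares and applies a coverage factor of k = 2. This follows the general GUM convention rather than any procedure described in the cited source, and the replicate values and Type B figure are hypothetical.

```python
from math import sqrt
from statistics import stdev

def type_a_uncertainty(replicates):
    """Standard uncertainty of the mean from repeated measurements: s / sqrt(n)."""
    return stdev(replicates) / sqrt(len(replicates))

def combined_uncertainty(u_a, u_b):
    """Root-sum-of-squares combination of independent standard uncertainties."""
    return sqrt(u_a ** 2 + u_b ** 2)

# Hypothetical replicate results (mg/kg) and an assumed reference-standard uncertainty
replicates = [10.1, 9.7, 10.4, 9.9, 9.9]
u_a = type_a_uncertainty(replicates)
u_b = 0.02  # assumed Type B contribution, e.g. from a CRM certificate

u_c = combined_uncertainty(u_a, u_b)
expanded = 2.0 * u_c  # coverage factor k = 2 (roughly 95% coverage)

print(f"u_A = {u_a:.4f}, u_B = {u_b:.4f}, u_c = {u_c:.4f}, U (k=2) = {expanded:.4f}")
```

With these numbers the combined uncertainty is barely larger than the Type A term alone, which illustrates why a small Type B contribution is often treated as negligible.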

Validation experiments are fundamental to establishing the reliability, accuracy, and reproducibility of analytical methods in food science. For researchers and drug development professionals, designing robust validation protocols requires careful consideration of sample design, replication strategies, and statistical analysis approaches tailored to complex food matrices. Food matrices present unique challenges due to their heterogeneous composition, varying nutrient distributions, and potential interactions between analytes and matrix components [31]. The validation process must demonstrate that a method is fit for its intended purpose, providing scientific evidence that it consistently meets predefined acceptance criteria. This guide compares key approaches for designing validation experiments, providing structured protocols and analytical frameworks to support method comparison and establishment of acceptance criteria in food method validation research.

Statistical Foundations for Validation Experiments

Sample Size Determination and Power Analysis

Determining an appropriate sample size is a critical first step in validation study design. An inadequate sample size reduces statistical power and increases the risk of Type II errors (failing to detect an effect when one exists), while excessively large samples waste resources and may raise ethical concerns [32]. Statistical power, defined as the probability of correctly rejecting a false null hypothesis (1-β), is ideally set at 0.8 or higher [32]. The relationship between sample size, effect size (ES), alpha (α) level, and power must be balanced throughout the planning stage.

Table 1: Sample Size Calculation Formulas for Common Validation Study Designs

| Study Type | Formula | Parameters and Considerations |

|---|---|---|

| Proportion in Survey Studies | N = ((Zα/2)² × P(1-P) × D) / E² | P: expected prevalence or proportion; E: margin of error; D: design effect (1 for simple random sampling, 1-2 for complex designs) |

| Group Mean Studies | N = ((Zα/2)² × s²) / d² | s: standard deviation from previous studies; d: accuracy of estimate or closeness to true mean |

| Comparison of Two Means | n1 = n2 = (Zα/2 + Z1-β)² × 2σ² / d² | σ: pooled standard deviation; d: difference between group means; r: ratio of sample sizes (n1/n2) |

| Comparison of Two Proportions | n1 = n2 = (Zα/2 + Z1-β)² × [p1(1-p1) + p2(1-p2)] / (p1-p2)² | p1, p2: event proportions for groups I and II; p: (p1+p2)/2 |
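Two of the formulas in the table can be evaluated directly with Python's standard library, as sketched below. The function names and the example inputs (α = 0.05, power = 0.80, and the effect sizes) are illustrative assumptions; results are rounded up to the next whole sample.

```python
from math import ceil
from statistics import NormalDist

def z(p):
    """Standard normal quantile for cumulative probability p."""
    return NormalDist().inv_cdf(p)

def n_proportion(p, e, design_effect=1.0, alpha=0.05):
    """N = (Z_{alpha/2}^2 * P(1-P) * D) / E^2 for estimating a proportion."""
    za = z(1 - alpha / 2)
    return ceil(za ** 2 * p * (1 - p) * design_effect / e ** 2)

def n_two_means(sigma, d, alpha=0.05, power=0.80):
    """Per-group n = (Z_{alpha/2} + Z_{1-beta})^2 * 2*sigma^2 / d^2 (equal groups)."""
    za = z(1 - alpha / 2)
    zb = z(power)
    return ceil((za + zb) ** 2 * 2 * sigma ** 2 / d ** 2)

# Hypothetical planning inputs
n_survey = n_proportion(p=0.5, e=0.05)       # worst-case proportion, 5% margin
n_per_group = n_two_means(sigma=1.0, d=1.0)  # difference of one standard deviation

print(f"survey sample size: {n_survey}")
print(f"samples per group for two-mean comparison: {n_per_group}")
```

Halving the detectable difference d roughly quadruples the required sample size, which is why the target effect size should be fixed before any budget discussion.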

In practice, sample size selection must consider analytical constraints. For expensive or destructive testing, researchers may implement acceptance sampling plans such as the C=0 plan (a lot is accepted only when the sample contains zero defects), though the initial sample size must provide sufficient statistical confidence [33]. One discussed approach for process validation in food safety uses an initial sample size of 10, with the control measure being redesigned if inconsistent results are obtained [33].

Data Types and Distribution Considerations

Food validation data can be quantitative (continuous or discrete) or qualitative (categorical). Microbial enumeration data, common in food safety, often follows a lognormal distribution rather than a normal distribution [34]. Data transformation (e.g., log transformation) is typically applied before analysis to meet the assumptions of parametric statistical tests.

Statistical methods must account for the compositional nature of food data, natural groupings, and correlated components [31]. Normality can be assessed using statistical tests such as the Shapiro-Wilk test (recommended for small sample sizes) or the Kolmogorov-Smirnov test (preferred for larger datasets) [34].
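The effect of a log transformation on microbial count data can be seen with a small standard-library sketch. The plate counts are hypothetical, and sample skewness is used here only as a quick indicator of asymmetry; a formal normality test such as Shapiro-Wilk would need a statistics package (for example SciPy) and is not shown.

```python
from math import log10
from statistics import mean

def skewness(values):
    """Population skewness: third central moment over variance^1.5."""
    m = mean(values)
    m2 = mean([(v - m) ** 2 for v in values])
    m3 = mean([(v - m) ** 3 for v in values])
    return m3 / m2 ** 1.5

# Hypothetical plate counts in CFU/g
counts = [1200, 3500, 800, 21000, 5600, 1500]
log_counts = [log10(c) for c in counts]

raw_skew = skewness(counts)
log_skew = skewness(log_counts)

print(f"skewness of raw counts:   {raw_skew:.2f}")
print(f"skewness of log10 counts: {log_skew:.2f}")
print(f"mean log10 CFU/g: {mean(log_counts):.2f}")
```

In this example the single high count dominates the raw data but has far less influence after transformation, so summary statistics are reported in log10 CFU/g.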

Experimental Design Approaches for Food Matrices

Sample Design and Replication Strategies

Food matrices are inherently variable, requiring replication at multiple levels to account for different sources of variation. The experimental design should separate technical variation (measurement error) from biological variation (inherent matrix heterogeneity).

Table 2: Replication Strategies for Food Matrix Validation Studies

| Replication Type | Purpose | Examples in Food Validation |

|---|---|---|

| Technical Replicates | Measure analytical method precision | Multiple injections of the same sample extract in HPLC analysis |

| Processing Replicates | Account for sample preparation variability | Preparing multiple aliquots of homogenate from the same food sample |

| Biological Replicates | Capture natural matrix variability | Analyzing multiple individual units (e.g., different apples from same batch) |

| Independent Experiments | Establish method robustness | Repeating entire analysis on different days with fresh reagents |

For process validation in food manufacturing, one documented approach collects 30 data points for one control measure, though this has significant cost implications [33]. Alternative approaches using 3-5 production runs with intensive parameter monitoring may provide sufficient validation for certain control measures when combined with statistical process control [33].

Handling Food Matrix Effects

Food matrices can interfere with analytical methods through binding effects, chemical interactions, or physical obstruction. Validation experiments should characterize these effects by:

- Comparing calibration in solvent vs. matrix: Assessing signal suppression or enhancement.

- Testing at multiple fortification levels: Evaluating accuracy across the analytical range.

- Using a structurally similar analog: When analyzing for compounds not naturally present in the matrix.
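The solvent-versus-matrix comparison in the first bullet is often summarized as a single matrix effect percentage derived from the two calibration slopes. The sketch below uses one common convention, (matrix slope / solvent slope - 1) × 100, which is an assumption here rather than a formula given in the source; the slope values are hypothetical.

```python
def matrix_effect_percent(slope_matrix, slope_solvent):
    """Matrix effect in percent: negative = suppression, positive = enhancement."""
    return (slope_matrix / slope_solvent - 1.0) * 100.0

# Hypothetical calibration slopes (response per unit concentration)
slope_in_solvent = 5.0
slope_in_matrix = 4.0

effect = matrix_effect_percent(slope_in_matrix, slope_in_solvent)
label = "suppression" if effect < 0 else "enhancement"
print(f"matrix effect = {effect:.1f}% ({label})")
```

When the effect is large, matrix-matched calibration or an isotope-labeled internal standard is typically used to compensate.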

For example, in a study investigating phenolic compound bioaccessibility, researchers incorporated microparticles into different food matrices (carbohydrate-, protein-, and lipid-based) to evaluate how matrix composition affects release profiles during digestion [35]. This approach provides crucial data on how a method performs across different matrix types.

Experimental Protocols for Method Validation

Protocol for Food Retail Environment Assessment Tool Validation

A validated protocol for assessing food retail environments demonstrates a comprehensive validation approach [36]:

- Tool Development: Integrate existing instruments (NEMS-S and INFORMAS retail module) and adapt to local context through translation and back-translation.

- Expert Review: Convene a panel of domain experts (e.g., nutrition specialists, public health researchers) to evaluate content relevance using standardized validity indices (Content Validity Ratio and Content Validity Index).

- Field Testing: Select multiple store types across different socioeconomic areas using clustered random sampling.

- Reliability Assessment: Deploy independent raters to evaluate identical environments, calculating inter-rater agreement and intraclass correlation coefficients.

- Refinement: Finalize the protocol based on statistical measures (e.g., 93.77% inter-rater agreement and ICC of 0.89-1.00 reported in the validation study) [36].
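Two of the quantities named in these steps are simple to compute by hand. The sketch below assumes Lawshe's formulation of the Content Validity Ratio and a plain item-by-item percent agreement between two raters. Whether the cited study used exactly these formulations is an assumption, and the example ratings are hypothetical. The intraclass correlation coefficient is not shown because it depends on the chosen ICC model.

```python
def content_validity_ratio(n_essential, n_experts):
    """Lawshe's CVR: (n_e - N/2) / (N/2), ranging from -1 to +1."""
    half = n_experts / 2
    return (n_essential - half) / half


def percent_agreement(rater_a, rater_b):
    """Share of items (in %) on which two raters gave the same rating."""
    if len(rater_a) != len(rater_b):
        raise ValueError("Both raters must score the same items")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100.0 * matches / len(rater_a)


# Hypothetical example: 9 of 10 experts rate an item as essential
cvr = content_validity_ratio(n_essential=9, n_experts=10)

# Hypothetical store audit: 1 = item available, 0 = not available
rater_a = [1, 0, 1, 1, 0, 1, 1, 1]
rater_b = [1, 0, 1, 0, 0, 1, 1, 1]
agreement = percent_agreement(rater_a, rater_b)

print(f"CVR = {cvr:.2f}, agreement = {agreement:.1f}%")
```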

Protocol for In Vitro Bioaccessibility Studies

The INFOGEST static in vitro simulation protocol provides a standardized approach for assessing compound bioaccessibility in food matrices [35]:

- Sample Preparation: Incorporate the test material into relevant food matrices (carbohydrate, protein, lipid, or mixed).

- Oral Phase: Mix sample with simulated salivary fluid (SSF) and incubate for 2 minutes.

- Gastric Phase: Add simulated gastric fluid (SGF) and gastric enzymes, incubate for 2 hours at 37°C with continuous agitation.

- Intestinal Phase: Add simulated intestinal fluid (SIF) and pancreatic enzymes with bile extract, incubate for 2 hours.

- Analysis: Centrifuge to obtain the bioaccessible fraction and analyze for target compounds.

- Antioxidant Activity Assessment: Apply additional assays (e.g., DPPH, ABTS) to the bioaccessible fraction.

This protocol was used to demonstrate how microparticle physical state and food matrix affect the release profile of gallic acid and ellagic acid during digestion [35].
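As a minimal sketch, the digestion phases above can be recorded as data so that incubation times are explicit, and the bioaccessible fraction can be expressed as a percentage of the amount initially present. The percentage formula is a commonly used definition and is an assumption here, since the text above does not give one. The variable names and example amounts are hypothetical.

```python
# Incubation times as listed in the protocol steps above
DIGESTION_PHASES = [
    {"phase": "oral", "fluid": "SSF", "minutes": 2},
    {"phase": "gastric", "fluid": "SGF", "minutes": 120},     # 37 °C, agitation
    {"phase": "intestinal", "fluid": "SIF", "minutes": 120},
]


def total_incubation_minutes(phases):
    """Sum of incubation times across all digestion phases."""
    return sum(p["minutes"] for p in phases)


def bioaccessibility_percent(amount_in_fraction, amount_in_sample):
    """Analyte in the bioaccessible fraction relative to the undigested sample."""
    if amount_in_sample <= 0:
        raise ValueError("Initial amount must be positive")
    return 100.0 * amount_in_fraction / amount_in_sample


total_minutes = total_incubation_minutes(DIGESTION_PHASES)

# Hypothetical amounts (mg) of a phenolic compound
released = bioaccessibility_percent(amount_in_fraction=3.2, amount_in_sample=8.0)

print(f"Total incubation: {total_minutes} min, bioaccessibility: {released:.1f}%")
```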

Statistical Analysis Methods for Validation Data

Selection of Appropriate Statistical Methods

The choice of statistical method depends on the study objectives, data type, and distribution properties. Research analyzing food composition databases shows that statistical methods are most frequently applied to group similar food items (37.5% of studies), determine nutrient co-occurrence (20.8%), or evaluate changes over time (16.7%) [31].

Table 3: Statistical Methods for Food Validation Experiments

| Analysis Goal | Recommended Methods | Application Examples |
|---|---|---|
| Group Similar Items | Cluster analysis (k-means, hierarchical), Principal Component Analysis | Categorizing foods by nutritional similarity; Identifying patterns in composition data [31] |
| Compare Groups | t-tests, ANOVA, Wilcoxon signed-rank, Friedman test | Evaluating processing effects on nutrient content; Comparing sugar content in "low-fat" vs regular foods [31] |
| Assess Relationships | Correlation analysis (Spearman's), Regression methods (logistic, linear) | Determining association between nutrient content and food characteristics [31] |
| Handle Missing Data | Multiple imputation, Random forest imputation, k-nearest neighbor | Addressing incomplete food composition data [31] |
| Detect Errors/Outliers | Coefficient of variation ranking, outlier detection | Identifying unlikely values in food composition databases [31] |

Data Visualization for Validation Studies

Effective data visualization facilitates outlier detection, trend identification, and results communication. Modern approaches extend beyond basic graphs to include:

- Heatmaps with clustering: Visualizing food group similarities based on multiple nutrients.

- Network graphs: Showing complex relationships between foods and nutrients.

- Multi-panel figures: Presenting results across different matrix types or conditions.

- Color-coded matrices: Simultaneously communicating multiple dimensions (e.g., health and environmental impacts) [37].

For example, a three-by-three color-coded matrix effectively visualized the relationship between carbon footprint and health impacts of 30 food groups, enabling intuitive comparison across categories [37].

Research Reagent Solutions for Food Validation

Table 4: Essential Research Reagents and Materials for Food Validation Studies

| Reagent/Material | Function in Validation | Application Examples |
|---|---|---|
| Inulin | Encapsulating agent for creating microparticles with controlled crystallinity | Protecting phenolic compounds (gallic acid, ellagic acid) during digestion studies [35] |
| Digestive Enzymes | Simulating human gastrointestinal conditions in bioaccessibility studies | Pepsin (gastric phase), pancreatin and lipase (intestinal phase) in INFOGEST protocol [35] |
| Model Food Matrices | Providing standardized background for evaluating matrix effects | Carbohydrate- (sucrose, maltodextrin), protein- (whey), and lipid-based (sunflower oil) systems [35] |
| Reference Materials | Establishing method accuracy through analysis of materials with known properties | Certified reference materials for nutrient composition, contaminant levels |
| Viability Markers | Differentiating between viable and non-viable microorganisms in method comparison | Fluorescent stains, culture media with selective agents |
| Antioxidant Assay Kits | Quantifying functional properties of bioactive compounds in food | DPPH, ABTS, ORAC assays for antioxidant capacity measurement |

Establishing Fit-for-Purpose Acceptance Criteria for Accuracy (Recovery %) and Precision (%RSD)

In the fields of pharmaceutical development and food safety, the reliability of analytical data is paramount. Establishing that an analytical method is "fit-for-purpose"—meaning it consistently produces results that are reliable and meaningful for a specific intended use—is a foundational requirement. The process of demonstrating this reliability is known as analytical method validation [8]. At the core of this process is the definition of clear, scientifically sound acceptance criteria for key performance parameters, primarily Accuracy (often expressed as Recovery %) and Precision (expressed as % Relative Standard Deviation, or %RSD) [38].

The International Council for Harmonisation (ICH) provides a globally recognized framework for method validation through its ICH Q2(R2) guideline, which defines the core parameters and principles for demonstrating a method is suitable for its intended purpose [38]. The concept of "fitness-for-purpose" acknowledges that the stringency of acceptance criteria can and should be adapted based on the method's role, whether it is for quantifying a major active ingredient, detecting trace impurities, or ensuring food is free from harmful contaminants like mycotoxins [39]. This guide provides a comparative analysis of established acceptance criteria for accuracy and precision, detailing the experimental protocols used to establish them and presenting key reagent solutions essential for researchers.

Core Principles and Regulatory Frameworks

The ICH Q2(R2) Foundation

The ICH Q2(R2) guideline, along with the complementary ICH Q14 on analytical procedure development, forms the bedrock of modern analytical method validation in the pharmaceutical industry [38]. These guidelines advocate for a science- and risk-based approach, encouraging the definition of an Analytical Target Profile (ATP) early in the method development process. The ATP outlines the desired performance criteria for the method, ensuring the subsequent validation is targeted and relevant [38]. The core performance characteristics defined by ICH include specificity, linearity, accuracy, precision, detection limit (LOD), quantitation limit (LOQ), and robustness [8] [38].

Defining Accuracy and Precision

- Accuracy is defined as the closeness of agreement between a test result and an accepted reference value (the true value) [8]. It is a measure of exactness and is typically reported as % Recovery. A recovery of 100% indicates perfect agreement with the true value.

- Precision refers to the closeness of agreement among a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions [8]. It is a measure of random error and reproducibility. Precision is further broken down into three tiers:

  - Repeatability (intra-assay precision): Precision under the same operating conditions over a short interval of time [8].

  - Intermediate Precision: Precision within a single laboratory, incorporating variations such as different days, different analysts, or different equipment [8].

  - Reproducibility: Precision between different laboratories, typically assessed through collaborative studies [8].

Precision is most commonly expressed as the % Relative Standard Deviation (%RSD), which is calculated as (Standard Deviation / Mean) × 100% [40]. This metric allows for the comparison of variability across data sets with different units or averages, making it a versatile tool in quality control [40].
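The %RSD formula translates directly into a few lines of code. The sketch below uses the sample standard deviation (n - 1 denominator), which is the usual choice for replicate measurements but is an assumption here. The replicate values are hypothetical.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """%RSD = (sample standard deviation / mean) x 100."""
    if len(values) < 2:
        raise ValueError("At least two replicates are required")
    return stdev(values) / mean(values) * 100.0


# Hypothetical replicate results (% of label claim)
replicates = [99.8, 100.2, 100.1, 99.9, 100.0, 100.3]
rsd = percent_rsd(replicates)

print(f"Mean = {mean(replicates):.2f}, %RSD = {rsd:.2f}")
```

Because %RSD divides by the mean, it becomes unstable when the mean is close to zero. For near-blank measurements it is safer to report the absolute standard deviation.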

Comparative Analysis of Acceptance Criteria

Acceptance criteria for accuracy and precision are not universal; they are tailored to the analytical task. The following tables summarize typical criteria for different applications, from pharmaceutical assays to food contaminant testing.

Table 1: Acceptance Criteria for Pharmaceutical Assay and Impurity Methods (e.g., HPLC)

| Analytical Task | Typical Accuracy (Recovery %) | Typical Precision (%RSD) | Basis of Criteria |
|---|---|---|---|
| Assay of Drug Substance/Product | 98.0% - 102.0% [38] | ≤ 2.0% (Repeatability) [38] | ICH Q2(R2); for quantifying major component |
| Quantification of Impurities | Specific to range; e.g., 90-107% at LOQ [38] | ≤ 5.0% to 10.0% (depending on level) [38] | ICH Q2(R2); more lenient for trace analysis |
| Identification Tests | Not a primary parameter [38] | Not a primary parameter [38] | ICH Q2(R2); focuses on specificity |

Table 2: Acceptance Criteria for Food Contaminant and Mycotoxin Methods

| Analytical Task | Typical Accuracy (Recovery %) | Typical Precision (%RSD) | Basis of Criteria |
|---|---|---|---|
| Fumonisin in Maize (Field Kit vs. LC-MS/MS) | Evaluated via correlation and statistical comparison (e.g., paired t-test) [39] | Assessed via correlation and categorical analysis against reference method [39] | USDA-FGIS performance criteria; focus on correct classification (violative vs. non-violative) [39] |
| Mycotoxin Test Kits (General) | Recovery limits often reference Horwitz criteria [39] | Precision targets from AOAC (Horwitz) [39] | AOAC International standards; fitness-for-purpose for regulatory compliance [39] |

These criteria reveal a clear distinction in strategy. Pharmaceutical assays for active ingredients demand very tight criteria (e.g., 98-102% recovery, ≤2% RSD) to ensure product potency and consistency [38]. In contrast, methods for contaminants like mycotoxins may prioritize correct categorical outcomes (e.g., is a sample above or below a legal limit?) and use statistical comparisons to a reference method, with accuracy and precision expectations derived from established standards like the Horwitz criteria [39].
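To illustrate how a Horwitz-based precision target scales with concentration, the sketch below uses the widely published form of the Horwitz equation, in which the predicted reproducibility RSD (%) equals 2^(1 - 0.5 log10 C) and C is the analyte concentration as a dimensionless mass fraction. The HorRat ratio and the 0.5 to 2.0 range mentioned in the comments follow common AOAC usage. Both are stated here as assumptions and are not taken from the cited references.

```python
import math


def horwitz_prsd(mass_fraction):
    """Predicted reproducibility RSD (%) from the Horwitz equation.

    mass_fraction is dimensionless, e.g. 1e-6 for 1 mg/kg (1 ppm).
    """
    if not 0 < mass_fraction <= 1:
        raise ValueError("mass_fraction must be in (0, 1]")
    return 2 ** (1 - 0.5 * math.log10(mass_fraction))


def horrat(observed_rsd, mass_fraction):
    """Ratio of the observed RSD to the Horwitz-predicted RSD."""
    return observed_rsd / horwitz_prsd(mass_fraction)


# Predicted RSD grows as the concentration falls
for label, c in [("100%", 1.0), ("1 mg/kg", 1e-6), ("1 ug/kg", 1e-9)]:
    print(f"{label:>8}: predicted RSD = {horwitz_prsd(c):.1f}%")

# Hypothetical collaborative-study result: 12% RSD observed at 1 mg/kg
ratio = horrat(observed_rsd=12.0, mass_fraction=1e-6)
print(f"HorRat = {ratio:.2f}")  # values of roughly 0.5 to 2.0 are often accepted
```

The example shows why a 2% RSD limit suited to a major-component assay would be unrealistic for a trace contaminant. At 1 mg/kg the predicted between-laboratory RSD is already well into double digits.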

Experimental Protocols for Establishing Criteria

Protocol for Determining Accuracy (Recovery %)

The following workflow details the standard experiment for establishing the accuracy of an analytical method, as per ICH guidelines [8] [38].

Figure: Accuracy (Recovery %) Determination Workflow

Detailed Methodology:

- Sample Preparation: A blank sample matrix (e.g., drug product excipients without the active ingredient, or ground maize free of the target mycotoxin) is spiked with known quantities of the pure analyte. The ICH guideline recommends a minimum of nine determinations over a minimum of three concentration levels (e.g., 80%, 100%, 120% of the target concentration), with three replicates at each level [8] [38].

- Analysis: The spiked samples are analyzed using the method being validated.

- Calculation: The percentage recovery is calculated for each sample using the formula: Recovery % = (Measured Concentration / Spiked Concentration) × 100%.

- Evaluation: The mean recovery and confidence intervals are calculated for each concentration level. The method is considered accurate if the recovery results at all levels fall within the pre-defined, fit-for-purpose acceptance criteria [8].
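The calculation and evaluation steps can be sketched as follows for a three-level, three-replicate design. The 98.0% to 102.0% window is used only as an example default, the check is applied to the mean recovery at each level, and all measured values are hypothetical. Confidence intervals are omitted to keep the sketch short.

```python
from statistics import mean


def recovery_percent(measured, spiked):
    """Recovery % = (measured concentration / spiked concentration) x 100."""
    return measured / spiked * 100.0


def evaluate_accuracy(results, low=98.0, high=102.0):
    """Return {spiked_level: (mean_recovery, passes)} for each spike level.

    results maps each spiked concentration to its list of measured values.
    """
    summary = {}
    for spiked, measured_values in results.items():
        recoveries = [recovery_percent(m, spiked) for m in measured_values]
        mean_recovery = mean(recoveries)
        summary[spiked] = (mean_recovery, low <= mean_recovery <= high)
    return summary


# Hypothetical data: 3 levels (80%, 100%, 120% of target) x 3 replicates
spike_results = {
    80.0: [79.2, 80.4, 79.8],
    100.0: [99.5, 100.6, 100.1],
    120.0: [119.0, 121.1, 120.5],
}

accuracy_summary = evaluate_accuracy(spike_results)
for level, (mean_rec, ok) in accuracy_summary.items():
    print(f"Spike {level:>5.1f}: mean recovery {mean_rec:.2f}% -> {'pass' if ok else 'fail'}")

method_is_accurate = all(ok for _, ok in accuracy_summary.values())
```

For a trace-level food contaminant method, the `low` and `high` arguments would be widened to whatever fit-for-purpose limits were defined before the study.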

Protocol for Determining Precision (%RSD)

Precision is evaluated at multiple levels. The following protocol outlines the experiments for determining repeatability and intermediate precision.

Figure: Precision (%RSD) Evaluation Workflow

Detailed Methodology:

- Repeatability:

  - Experiment: A minimum of six determinations at 100% of the test concentration are analyzed, or nine determinations covering the specified range (e.g., three concentrations/three replicates each) [8].

  - Calculation: The %RSD is calculated for the resulting data set. The formula is %RSD = (Standard Deviation / Mean) × 100% [40].

  - Evaluation: The calculated %RSD is compared against pre-defined acceptance criteria (e.g., ≤ 2.0% for an assay).

- Intermediate Precision:

  - Experiment: The same set of samples is analyzed by a different analyst, on a different HPLC system, and on a different day. Each analyst prepares their own standards and solutions [8].

  - Evaluation: The %RSD is calculated for the combined data set. Furthermore, the results from the two analysts are often compared using a statistical test, such as a Student's t-test, to determine if there is a significant difference between the mean values obtained by each analyst [8].
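The intermediate precision evaluation can be sketched with the standard library alone. The example assumes a pooled-variance (equal variance) two-sample Student's t-test and compares the statistic with a tabulated two-sided critical value, which the user must supply for the relevant degrees of freedom (2.228 for 10 degrees of freedom at α = 0.05). All results are hypothetical.

```python
import math
from statistics import mean, stdev, variance


def percent_rsd(values):
    """%RSD = (sample standard deviation / mean) x 100."""
    return stdev(values) / mean(values) * 100.0


def pooled_t_statistic(a, b):
    """Two-sample Student's t statistic (equal variances) and its degrees of freedom."""
    n_a, n_b = len(a), len(b)
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * variance(a) + (n_b - 1) * variance(b)) / df
    t = (mean(a) - mean(b)) / math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return t, df


# Hypothetical assay results (% of label claim), six determinations per analyst
analyst_1 = [99.8, 100.2, 100.1, 99.9, 100.0, 100.3]
analyst_2 = [100.4, 99.7, 100.1, 100.5, 99.9, 100.2]

combined_rsd = percent_rsd(analyst_1 + analyst_2)
t_stat, df = pooled_t_statistic(analyst_1, analyst_2)

T_CRITICAL = 2.228  # two-sided, alpha = 0.05, df = 10 (from a t-table)
means_differ = abs(t_stat) > T_CRITICAL

print(f"Combined %RSD = {combined_rsd:.2f}")
print(f"t = {t_stat:.3f} (df = {df}), significant difference: {means_differ}")
```

A non-significant t-test does not by itself prove equivalence between analysts. It is normally read alongside the combined %RSD and the pre-defined acceptance criterion.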

The Scientist's Toolkit: Key Research Reagent Solutions