Navigating Food Analyte Validation: Requirements, Methods, and Best Practices for Scientific Research

Abstract

This article provides a comprehensive guide to validation requirements for diverse food analyte types, tailored for researchers, scientists, and drug development professionals. It covers foundational regulatory frameworks from authorities like the FDA and ISO, explores advanced methodological applications for chemical and microbiological contaminants, addresses common troubleshooting and optimization challenges, and details the rigorous process of method validation and comparative analysis. The content synthesizes current standards and emerging trends to support the development of robust, reliable analytical methods in food safety and quality control.

Understanding Food Analyte Validation: Regulatory Frameworks and Core Principles

Validation is a cornerstone of analytical science, providing the documented evidence that a method is fit for its intended purpose. For food analysts, defining the validation scope for different analyte types—chemical, microbiological, and toxic elements—is fundamental to ensuring accurate results that protect public health and meet regulatory standards. The validation requirements for these analyte categories differ significantly based on their inherent properties, associated risks, and detection challenges. With the recent modernization of international guidelines, including the simultaneous release of ICH Q2(R2) on analytical procedure validation and ICH Q14 on analytical procedure development, the approach to validation has evolved toward a more scientific, risk-based lifecycle model [1]. This guide compares the specific validation requirements across these analyte categories, providing researchers and drug development professionals with experimental protocols and data to support method selection and implementation.

Comparative Analysis of Validation Parameters

The validation scope for each analyte category is determined by its unique characteristics and potential public health impact. Chemical analytes, including nutrients, additives, and contaminants, require comprehensive validation of parameters like accuracy, precision, and specificity. Microbiological methods demand validation of detection capabilities for living organisms, while toxic element analyses focus on ultra-trace level detection with extreme accuracy.

Table 1: Core Validation Parameters by Analyte Category

| Validation Parameter | Chemical Analytes | Microbiological Analytes | Toxic Elements |
|---|---|---|---|
| Accuracy | Measured via spike recovery (target: 70-120%) [2] | Correlation to culture-based "gold standard" [3] | Certified Reference Material (CRM) analysis [4] |
| Precision | Repeatability & intermediate precision (RSD <5-10%) [1] | Statistical analysis of binary outcomes [3] | Repeatability at trace levels (RSD <10-15%) [4] |
| Specificity/Selectivity | Ability to distinguish from impurities, matrix [1] | Ability to detect target organism in mixed flora [3] | Ability to resolve spectral interferences [4] |
| Linearity & Range | Demonstrated across specified concentration range [1] | Demonstrated for quantitative methods only [3] | Demonstrated in appropriate matrix [4] |
| Limit of Detection (LOD) | Signal-to-noise ratio (3:1) [1] | Lowest number of organisms detectable [3] | Instrumental detection limit (3 × SD of blank) [4] |
| Limit of Quantitation (LOQ) | Signal-to-noise ratio (10:1) with precision/accuracy [1] | Lowest number of organisms quantifiable [3] | Lowest level meeting precision/accuracy criteria [4] |
| Robustness | Resistance to deliberate method parameter variations [1] | Resistance to variations in media, incubation [3] | Resistance to matrix & instrument variations [4] |

Table 2: Method Performance Comparison Across Analyte Types

| Performance Aspect | Chemical Analytes | Microbiological Analytes | Toxic Elements |
|---|---|---|---|
| Typical Turnaround Time | Minutes to hours [4] | Hours to days [3] [4] | Minutes to hours [4] |
| Key Technologies | HPLC, LC-MS/MS, GC-MS [4] [1] | PCR, Biosensors, Culture [3] [4] | ICP-MS, AAS [4] |
| Primary Challenge | Matrix effects & interferences [1] | Viability, non-culturable entities [3] | Contamination & ultra-trace detection [4] |
| Regulatory Focus | ICH Q2(R2), FDA Guidance [1] | AOAC Appendix J, FSMA [5] [3] | FDA Closer to Zero Initiative [5] |
| Trends for 2025 | Lifecycle management (ICH Q14) [1] | Rapid detection, genomics [5] [4] | Lower action levels, advanced ICP-MS [5] |

Experimental Protocols for Validation Studies

Chemical Analyte Validation Protocol

For chemical analytes such as pesticide residues or food additives, a systematic approach to validation is required. The protocol should begin with defining an Analytical Target Profile (ATP) as outlined in ICH Q14, which prospectively defines the method's required performance characteristics [1].

Accuracy Assessment: Prepare a minimum of nine determinations over at least three concentration levels covering the specified range (e.g., 50%, 100%, 150% of target). Spike the analyte into the representative food matrix and calculate percent recovery. Acceptance criteria typically range from 70% to 120% recovery, depending on the analyte and concentration [2] [1].
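
The recovery calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the function names, the 10 ug/kg target, and the measured values are hypothetical, and the 70-120% window is simply the typical range quoted in the text.

```python
from statistics import mean

def percent_recovery(measured, spiked, background=0.0):
    """Recovery (%) of a spiked analyte after subtracting the unspiked matrix background."""
    return 100.0 * (measured - background) / spiked

def recovery_by_level(results, low=70.0, high=120.0):
    """results maps nominal spike level -> list of measured values (same units).

    Returns {level: (mean recovery in %, True if inside the acceptance window)}.
    """
    summary = {}
    for level, measurements in results.items():
        recoveries = [percent_recovery(m, level) for m in measurements]
        avg = mean(recoveries)
        summary[level] = (avg, low <= avg <= high)
    return summary

# Hypothetical data: 50 %, 100 %, and 150 % of a 10 ug/kg target,
# three replicates each (nine determinations in total).
example = {
    5.0: [4.6, 4.9, 4.4],
    10.0: [9.1, 9.8, 10.3],
    15.0: [13.2, 14.1, 15.0],
}

for level, (avg, ok) in recovery_by_level(example).items():
    print(f"{level:5.1f} ug/kg: mean recovery {avg:5.1f} % -> {'pass' if ok else 'fail'}")
```

In practice the acceptance window would be set per analyte and concentration level rather than fixed at one default.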

Precision Evaluation: Conduct repeatability tests using six replicate determinations at 100% of the test concentration or across three concentrations with six replicates each. For intermediate precision, vary days, analysts, or equipment following a pre-defined experimental design. Report results as relative standard deviation (RSD), with acceptable limits generally below 5-10% depending on analyte and concentration [1].
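
As a rough illustration of the RSD reporting step, the sketch below computes the relative standard deviation for six hypothetical replicates and for two days combined. Combining days this way is only a simplified stand-in for intermediate precision; a formal study would typically separate variance components (for example, by ANOVA) according to its experimental design.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation (%), using the sample standard deviation (n - 1)."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical repeatability data: six replicates at 100 % of the test concentration.
day1 = [10.1, 9.8, 10.3, 9.9, 10.0, 10.2]
# Hypothetical results from a second day or analyst.
day2 = [10.4, 10.6, 10.1, 10.5, 10.2, 10.7]

print(f"Repeatability RSD (day 1):   {rsd_percent(day1):.2f} %")
print(f"Combined RSD (days 1 and 2): {rsd_percent(day1 + day2):.2f} %")
```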

Specificity Verification: Demonstrate that the method can unequivocally quantify the analyte in the presence of potential interferents, including impurities, degradation products, and matrix components. For chromatographic methods, assess resolution from closely eluting compounds and verify peak purity using diode array or mass spectrometric detection [1].

Microbiological Analyte Validation Protocol

Microbiological method validation requires specialized approaches to address the challenges of working with living organisms. The ongoing revision of AOAC Appendix J guidelines reflects evolving needs, including handling non-culturable entities and determining whether culture should remain the "gold standard" for confirmation [3].

Detection Limit Study: For qualitative methods, prepare panels of artificial contamination at various levels near the claimed detection limit. Use at least 20 replicates per contamination level and analyze using the test method and a reference method. Statistical models such as probability of detection (POD) or beta-binomial distribution are applied to determine the limit of detection [3].
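
For a single contamination level, the probability of detection is the number of positive replicates divided by the number tested. The sketch below adds a Wilson score interval as one common way to express the uncertainty of that proportion. The choice of interval is an assumption made for illustration; the text names POD and beta-binomial models without prescribing an interval method, and all counts are hypothetical.

```python
from math import sqrt

def pod(positives, n):
    """Probability of detection: fraction of replicates that tested positive."""
    return positives / n

def wilson_interval(positives, n, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 gives about 95 %)."""
    p = positives / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical panel: 20 replicates per contamination level,
# listed as (candidate positives, reference positives).
panel = {"uninoculated": (0, 0), "low": (11, 9), "high": (20, 20)}

for level, (cand, ref) in panel.items():
    lo, hi = wilson_interval(cand, 20)
    d_pod = pod(cand, 20) - pod(ref, 20)  # difference between candidate and reference
    print(f"{level:12s} POD = {pod(cand, 20):.2f} (95 % CI {lo:.2f} to {hi:.2f}), dPOD = {d_pod:+.2f}")
```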

Precision Assessment for Quantitative Methods: Conduct reproducibility studies using a standardized inoculum across multiple laboratories, days, or analysts. For binary methods (positive/negative results), precision is assessed through agreement statistics rather than traditional standard deviation calculations [3].

Robustness Testing: Deliberately introduce small variations in critical method parameters such as incubation temperature (±2°C), incubation time (±10%), media pH (±0.2 units), and sample volume. Evaluate the impact on method performance to establish operational tolerances [3].

Toxic Element Validation Protocol

Validating methods for toxic elements like lead, arsenic, cadmium, and mercury requires exceptional sensitivity and contamination control. The FDA's Closer to Zero initiative emphasizes the need for robust methods to support increasingly stringent action levels, particularly for foods intended for infants and young children [5].

Accuracy via Certified Reference Materials: Analyze a minimum of six replicates of appropriate matrix-matched Certified Reference Materials (CRMs) with known concentrations of the target elements. Calculate percent recovery against certified values, with acceptance criteria typically 80-115% depending on the element and concentration level [4].

Limit of Quantitation Determination: Prepare and analyze at least ten independent blank matrix samples to establish the baseline signal. Fortify blanks at the presumed LOQ level (typically about 10 times the standard deviation of the blank response, compared with about 3 times for the LOD) and analyze multiple replicates. The LOQ is confirmed when both precision (RSD ≤20%) and accuracy (70-120% recovery) are met at that level [4] [1].
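
A minimal sketch of this two-step check follows. It assumes a linear calibration whose slope converts blank signal into concentration, and it uses the conventional multipliers of 3 (LOD) and 10 (LOQ) times the blank standard deviation. The function names, blank signals, slope, and fortified results are all hypothetical.

```python
from statistics import mean, stdev

def blank_based_limits(blank_signals, slope):
    """Estimate LOD and LOQ in concentration units from replicate blank signals.

    slope is the calibration sensitivity (signal per concentration unit).
    """
    sd = stdev(blank_signals)
    return 3 * sd / slope, 10 * sd / slope

def loq_confirmed(fortified_results, nominal, max_rsd=20.0, recovery_window=(70.0, 120.0)):
    """True if replicates fortified at the presumed LOQ meet both precision and accuracy criteria."""
    avg = mean(fortified_results)
    rsd = 100.0 * stdev(fortified_results) / avg
    recovery = 100.0 * avg / nominal
    return rsd <= max_rsd and recovery_window[0] <= recovery <= recovery_window[1]

# Hypothetical ICP-MS blank signals (counts per second) from ten independent blank digests.
blanks = [102, 98, 105, 97, 101, 99, 103, 100, 96, 104]
lod, loq = blank_based_limits(blanks, slope=50.0)  # assumed slope: 50 cps per ug/kg
print(f"Estimated LOD = {lod:.3f} ug/kg, presumed LOQ = {loq:.3f} ug/kg")

# Hypothetical replicates fortified at a nominal 0.6 ug/kg.
print("LOQ confirmed:", loq_confirmed([0.55, 0.61, 0.58, 0.66, 0.52, 0.60], nominal=0.6))
```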

Matrix Effect Evaluation: For methods that include a chromatographic separation, analyze post-column infused samples to identify signal suppression or enhancement across the chromatographic run. Compare calibration slopes in solvent versus matrix-matched standards; a slope difference greater than 15% indicates substantial matrix effects that must be addressed through method modification [4].
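
The slope comparison can be illustrated with an ordinary least-squares fit of each calibration series. The sketch below is one simple way to express the matrix effect as a signed percentage; the function names and calibration data are hypothetical, and the 15% threshold is the one quoted above.

```python
def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx

def matrix_effect_percent(conc, solvent_response, matrix_response):
    """Signed matrix effect (%): negative means suppression, positive means enhancement."""
    return 100.0 * (ols_slope(conc, matrix_response) / ols_slope(conc, solvent_response) - 1.0)

# Hypothetical calibration data (concentration in ug/L, response in arbitrary units).
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
solvent = [10.2, 20.1, 50.5, 99.8, 201.0]
matrix = [8.1, 16.3, 40.2, 81.0, 160.5]

effect = matrix_effect_percent(conc, solvent, matrix)
print(f"Matrix effect: {effect:+.1f} %")
print("Substantial matrix effect" if abs(effect) > 15.0 else "Within tolerance")
```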

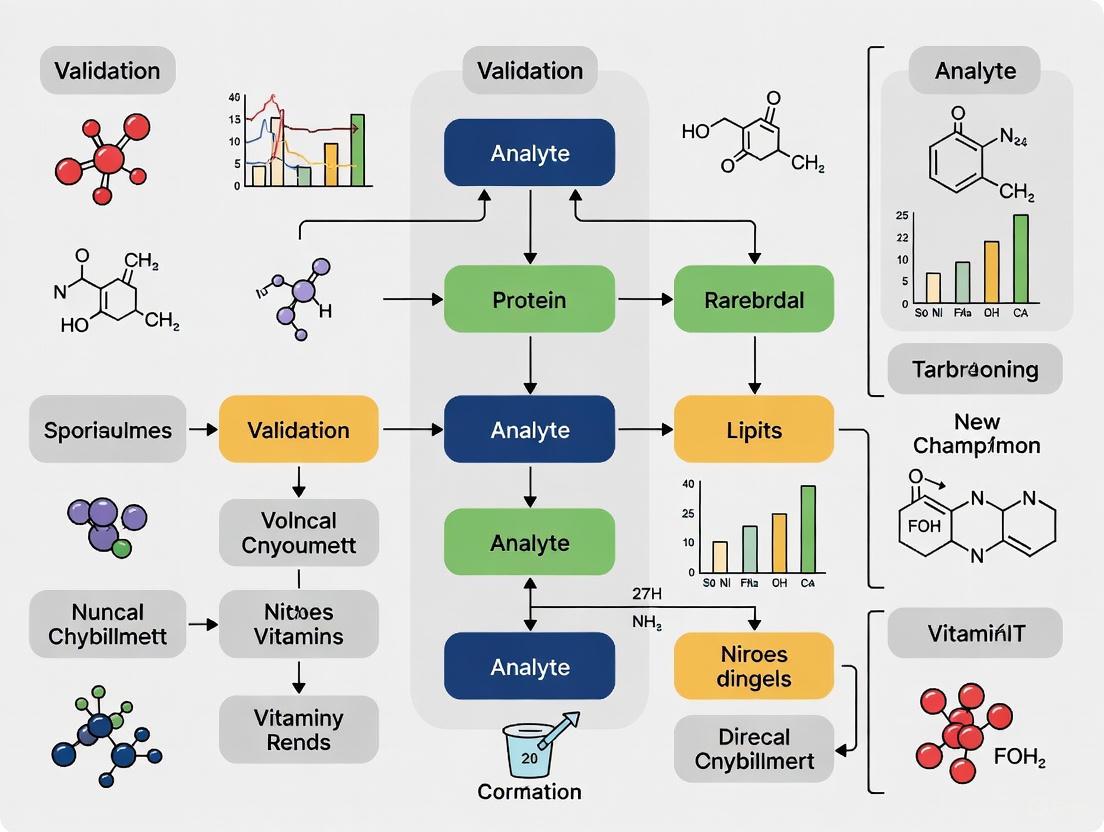

Workflow Visualization

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Analytical Validation

| Reagent/Material | Primary Function | Application Across Analyte Types |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide traceable accuracy verification | Essential for toxic element analysis; used for chemical method validation [4] |
| Matrix-Matched Calibrators | Compensate for matrix effects in quantification | Critical for chemical contaminant and toxic element analysis to ensure accurate quantification [4] |
| Selective Enrichment Media | Promote growth of target microorganisms while inhibiting competitors | Fundamental for traditional microbiological methods and reference culture techniques [3] |
| Molecular Grade Water | Serve as ultra-pure blank and diluent | Critical for trace element analysis to prevent contamination; used in all method types [4] |
| Stable Isotope-Labeled Internal Standards | Compensate for sample preparation losses and matrix effects | Essential for accurate quantitation in LC-MS/MS analysis of chemical contaminants [1] |
| Sample Collection Swabs | Recover residues from surfaces for analysis | Polyester swabs used in cleaning validation; applicable to surface sampling for all analyte types [6] |
| Quality Control Materials | Monitor method performance during validation and routine use | Used across all analyte categories to ensure ongoing method reliability [3] [4] [1] |

Validation scope for chemical, microbiological, and toxic element analytes differs in important ways, yet it rests on shared scientific principles. Chemical methods demand rigorous characterization of precision and accuracy across defined ranges, microbiological methods require specialized approaches for living organisms, and toxic element analyses need extreme sensitivity with robust contamination control. The modern framework established by ICH Q2(R2) and Q14 emphasizes a lifecycle approach, beginning with a well-defined Analytical Target Profile and continuing through post-implementation monitoring [1]. This comparative analysis provides researchers with the experimental protocols and performance criteria needed to develop, validate, and maintain robust analytical methods that ensure food safety and regulatory compliance across all analyte categories.

Method validation is a foundational process in food safety testing, ensuring that analytical procedures produce reliable, accurate, and reproducible results. For researchers and scientists developing detection methods for food analytes, understanding the landscape of regulatory standards is crucial for both compliance and scientific rigor. The validation requirements differ significantly based on the type of food analyte—whether microbiological, chemical, or from specific product categories like tobacco. This guide objectively compares the frameworks established by major regulatory bodies: the U.S. Food and Drug Administration (FDA), the International Organization for Standardization (ISO) through its 16140 series on food chain microbiology, and other international guidelines. Recent updates through 2025 have refined these protocols, emphasizing the need for researchers to stay current with validation requirements across different regulatory jurisdictions and analyte categories.

Comparative Analysis of Regulatory Frameworks

FDA Validation Guidelines

The FDA provides distinct validation frameworks for different product categories, with a recently increased focus on demonstration of method validity:

Microbiological Pathogens: The FDA Guidelines for the Validation of Analytical Methods for the Detection of Microbial Pathogens in Foods and Feeds, Edition 3.0 (2019) outlines requirements for multi-laboratory validation (MLV) studies. These guidelines mandate that MLV studies be performed for each sample preparation procedure and require positive rates to fall within a 25%-75% fractional range to be considered acceptable [7]. A notable shift in FDA inspection focus throughout 2024 and into 2025 has placed greater emphasis on product-specific validation and verification reports, even for compendial methods such as USP monographs [8].

Tobacco Products: In January 2025, FDA finalized guidance titled "Validation and Verification of Analytical Testing Methods Used for Tobacco Products," which provides recommendations for producing validated and verified data for analytical procedures used in tobacco product applications. This document outlines procedures for validating precision, accuracy, selectivity, and sensitivity, and acknowledges that alternative approaches may differ from its recommendations [9] [10].

Human Foods Program: The newly launched Human Foods Program (HFP) has identified the use and development of new methods as an FY 2025 priority, focusing on completing external review and validation of scientific tools like the Expanded Decision Tree for chemical assessments [5].

ISO 16140 Series for Food Microbiology

The ISO 16140 series is specifically dedicated to the validation and verification of microbiological methods in the food and feed chain. The series consists of multiple parts, each addressing different validation scenarios [11]:

ISO 16140-2: Serves as the base standard for alternative methods validation, involving both method comparison and interlaboratory studies. The September 2024 Amendment 1 introduced new calculations for qualitative method evaluation and specific protocols for commercial sterility testing [11].

ISO 16140-3: Describes the protocol for verification of reference and validated alternative methods in a single laboratory, comprising both implementation verification and item verification stages. The August 2025 Amendment 1 specifies protocols for verification of identification methods [11].

ISO 16140-4 & -5: Address validation in single laboratories (Part 4) and factorial interlaboratory validation for non-proprietary methods (Part 5) for situations where full validation according to Part 2 is not feasible [11].

ISO 16140-6 & -7: Cover validation of confirmation and typing procedures (Part 6) and identification methods (Part 7), with the latter being particularly significant as it addresses validation where no reference method exists [11].

International Certification Schemes

MicroVal is an international certification program for alternative microbiological methods that operates based on the ISO 16140 series. In December 2024, MicroVal published updated rules (Version 9.4) that now include validation against EN-ISO 16140-7 for identification methods and establish an emergency validation protocol for crisis situations [12]. The program brings together industry stakeholders including 3M Food Safety, bioMérieux, Eurofins, Nestle, and Thermo Fisher Scientific to validate methods through an independent certification process [12].

Table 1: Key Regulatory Bodies and Their Primary Validation Standards

| Regulatory Body | Primary Standards/Guidelines | Scope/Focus | Recent Updates (2024-2025) |
|---|---|---|---|
| FDA | Microbiological Method Validation Guidelines (3rd Ed.); Tobacco Product Analytical Testing Guidance | Microbial pathogens in foods/feeds; Tobacco product constituents | Finalized tobacco guidance (Jan 2025); Increased inspection focus on validations |
| ISO | ISO 16140 series (7 parts) | Microbiology of the food chain | Amendments to Parts 2, 3, & 4 (2024-2025); Part 7 for identification methods |
| MicroVal | MicroVal Rules (based on ISO 16140) | Certification of alternative microbiological methods | Version 9.4 (Dec 2024); Support for ISO 16140-7; Emergency validation protocol |

Experimental Validation Protocols and Performance Data

Multi-Laboratory Validation (MLV) Protocol for Salmonella Detection

A recent MLV study validating a real-time PCR method for Salmonella detection in frozen fish demonstrates the practical application of these standards. The study followed both FDA and ISO 16140-2:2016 guidelines and involved 14 laboratories [7].

Methodology: Each laboratory analyzed 24 blind-coded frozen fish test portions using both the qPCR method and the FDA/BAM culture reference method. Test portions included uninoculated controls and samples inoculated with low (0.58 MPN/25g) and high (4.27 MPN/25g) levels of Salmonella after a 2-week aging period at frozen temperature. DNA extraction was performed using both manual and automated methods, with the qPCR method targeting the Salmonella invA gene [7].

Performance Data: The study reported a 39% positive rate for qPCR versus 40% for the culture method, both falling within FDA's required 25%-75% fractional range. The Relative Level of Detection (RLOD) was approximately 1, indicating equivalent performance between methods. The study also found that automated DNA extraction improved qPCR sensitivity by providing higher quality DNA extracts [7].

Independent Laboratory Validation for Multi-Matrix Testing

A December 2025 study demonstrated the validation of a Salmonella loop-mediated isothermal amplification (LAMP) assay across 27 human and animal food matrices representing 9 ISO food categories [13].

Methodology: For each matrix, laboratories received 30 blinded test portions spiked with low (fractional) or high levels of Salmonella and uninoculated controls. All test portions were processed for Salmonella isolation according to FDA/BAM Chapter 5, with overnight enrichments screened by LAMP on one of two platforms [13].

Performance Data: All 27 matrices were successfully validated with clean uninoculated controls (all negative), fractional recoveries (25-75%), and acceptable RLOD values below 1.5. This demonstrated the LAMP assay's reliability across diverse food categories including chocolate, dairy, fish, fresh produce, and infant formula [13].

Table 2: Performance Comparison of Validated Rapid Methods for Salmonella Detection

| Validation Parameter | FDA qPCR Method (Frozen Fish) | Salmonella LAMP Assay (27 Food Matrices) | Acceptance Criteria |
|---|---|---|---|
| Number of Laboratories | 14 | Multiple independent laboratories | Varies by standard |
| Positive Rate (Rapid Method) | ~39% | 25-75% (fractional) | 25-75% (FDA) |
| Positive Rate (Reference Method) | ~40% | Not specified | 25-75% (FDA) |
| Relative Level of Detection (RLOD) | ~1.0 | <1.5 | <1.5 (ISO) |
| Specificity | High, with automated extraction improving sensitivity | All controls negative | No false positives |
| Applicable Matrices | Frozen fish (blended preparation) | 27 matrices across 9 ISO categories | Category-dependent |

Research Reagent Solutions for Method Validation

The following table details essential reagents and materials used in the validation experiments cited, with their specific functions in microbiological method validation:

Table 3: Key Research Reagent Solutions for Microbiological Method Validation

| Reagent/Material | Function in Validation | Example from Cited Studies |
|---|---|---|
| Selective Enrichment Broths | Supports target pathogen growth while inhibiting competitors | Used in BAM Salmonella culture method for pre-enrichment and sequential enrichment [7] |
| DNA Extraction Kits | Isolation of high-quality DNA for molecular detection | Manual and automated extraction methods compared in Salmonella qPCR validation [7] |
| qPCR/LAMP Reagents | Amplification and detection of target sequences | Salmonella invA gene primers/probes (qPCR); LAMP reagents for isothermal amplification [7] [13] |
| Reference Strains | Provides positive controls for method performance assessment | Used in spiking studies at predetermined concentrations (e.g., 0.58 and 4.27 MPN/25g) [7] |
| Selective Plating Media | Isolation and presumptive identification of target organisms | Used in FDA/BAM culture method for selective/differential isolation [7] |
| Sample Matrices | Represents food categories for determining method applicability | 27 food matrices across 9 ISO categories; frozen fish; baby spinach [7] [13] |

Workflow Visualization of Method Validation Pathways

The following diagram illustrates the decision pathway for selecting appropriate validation procedures based on method type and intended use, synthesizing requirements from FDA, ISO 16140, and international certification schemes:

The validation workflow demonstrates the structured approach required for different methodological categories. For microbiological methods, the critical distinction lies between alternative method validation (requiring either full ISO 16140-2 validation or single-laboratory validation per ISO 16140-4) and reference method verification (following ISO 16140-3 protocols). Chemical methods, particularly for tobacco products, follow the specialized FDA guidance issued in 2025, while the optional MicroVal certification pathway provides international recognition for microbiological methods [11] [10] [12].

The regulatory landscape for method validation in food safety is multifaceted, with distinct but complementary frameworks established by FDA, ISO, and international certification bodies. For researchers and scientists, selecting the appropriate validation pathway depends on multiple factors: the analyte type (microbiological vs. chemical), intended method use (screening vs. confirmation), regulatory jurisdiction, and desired scope of application. The experimental data presented demonstrates that properly validated rapid methods can achieve performance equivalent to traditional culture methods while significantly reducing detection time. As regulatory focus intensifies on validation and verification—evidenced by recent FDA inspection trends and continuous updates to ISO standards—researchers must prioritize robust validation protocols tailored to their specific methodological applications and regulatory requirements.

In the scientific and regulatory landscape, the validation of analytical methods exists on a broad spectrum. On one end, Emergency Use Authorizations (EUAs) provide a pathway for rapid deployment during crises, while on the other, multi-laboratory validation studies represent the gold standard for establishing robust, reproducible method performance. For researchers and drug development professionals, understanding this hierarchy is critical for selecting appropriate methods, interpreting data, and navigating regulatory requirements for different food analyte types and medical products.

This guide objectively compares the performance, requirements, and applications of various validation tiers, providing a structured framework for evidence-based decision-making.

Validation Tiers: A Comparative Framework

The rigor of analytical validation is directly proportional to the intended use of the method and the associated risk. The table below summarizes the key characteristics across the validation hierarchy.

Table 1: Comparative Framework of Validation Tiers

| Validation Tier | Primary Trigger | Regulatory/Guiding Body | Typical Timeline | Key Performance Indicators | Data Requirements |
|---|---|---|---|---|---|
| Emergency Use Authorization (EUA) | Public Health Emergency [14] | FDA (U.S.) [14] | Accelerated (Weeks/Months) | Sensitivity, Specificity [15] | Evidence of safety & potential effectiveness [14] |
| Laboratory Developed Test (LDT) | Unmet Clinical Need [16] | CLIA (U.S.) [16] | Variable (Months) | Sensitivity, Specificity, Precision, Reportable Range [16] | Full, single-laboratory validation data [16] |
| Multi-Laboratory Study | Standardization & Regulatory Approval | ISO, AOAC, FDA | Extended (Months/Years) | Reproducibility, Repeatability, Robustness [17] | Inter-laboratory statistical agreement data [17] |

Tier 1: Emergency Use Authorization (EUA)

Definition and Regulatory Context

An Emergency Use Authorization (EUA) is a mechanism that allows the U.S. Food and Drug Administration (FDA) to facilitate the availability of unapproved medical products during a public health emergency. This is invoked when the Secretary of Health and Human Services declares that circumstances justify emergency use, provided there are no adequate, approved, available alternatives [14]. This pathway was used extensively for COVID-19 diagnostics, therapeutics, and vaccines [14].

Performance and Experimental Data

Validation under an EUA aims to establish a reasonable belief that the product may be effective, concentrating on key performance metrics. A systematic review of FDA-authorized rapid antigen SARS-CoV-2 tests provides comparative data.

Table 2: Pre- vs. Post-Approval Performance of Rapid Antigen Tests (SARS-CoV-2)

| Test Phase | Number of Studies | Pooled Sensitivity (95% CI) | Pooled Specificity (95% CI) | Key Finding |
|---|---|---|---|---|
| Preapproval | 13 [15] | 86.5% (83.3–89.1%) [15] | Not significantly different from postapproval [15] | Manufacturer claims are largely supported. |
| Postapproval | 26 [15] | 84.5% (81.2–87.3%) [15] | Not significantly different from preapproval [15] | For 2 of 9 tests, sensitivity was lower in post-market use [15]. |

Experimental Protocol for EUA Validation

A typical validation protocol for an in vitro diagnostic under EUA involves a cross-sectional study comparing the new test against an accepted reference standard.

- Reference Standard: Reverse transcription polymerase chain reaction (RT-PCR) for SARS-CoV-2 detection [15].

- Population: Patients with symptoms of the target disease (e.g., COVID-19) [15].

- Procedure: Run the candidate test and reference standard on samples from the same participants, blinded to the results of the other test.

- Statistical Analysis: Calculate sensitivity, specificity, and positive/negative predictive values with confidence intervals against the reference standard.
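
The statistical analysis step can be sketched from a 2×2 table of candidate-test results against the reference standard. The example below is illustrative only: the counts are hypothetical, and the Wilson score interval is an assumed choice, since the protocol above does not specify how confidence intervals are computed.

```python
from math import sqrt

def wilson_ci(successes, n, z=1.96):
    """Wilson score interval for a proportion (z = 1.96 gives about 95 %)."""
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

def diagnostic_metrics(tp, fp, fn, tn):
    """Return {metric: (point estimate, (lower, upper))} from 2x2 counts.

    tp/fn: reference-positive samples the candidate test called positive/negative.
    tn/fp: reference-negative samples the candidate test called negative/positive.
    """
    counts = {
        "sensitivity": (tp, tp + fn),
        "specificity": (tn, tn + fp),
        "ppv": (tp, tp + fp),
        "npv": (tn, tn + fn),
    }
    return {name: (k / n, wilson_ci(k, n)) for name, (k, n) in counts.items()}

# Hypothetical study: 100 reference-positive and 100 reference-negative participants.
for name, (estimate, (lo, hi)) in diagnostic_metrics(tp=86, fp=2, fn=14, tn=98).items():
    print(f"{name:12s} {100 * estimate:5.1f} % (95 % CI {100 * lo:.1f} to {100 * hi:.1f} %)")
```

Predictive values depend on prevalence in the study population, so PPV and NPV from a balanced validation panel do not transfer directly to field use.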

Tier 2: Laboratory Developed Tests (LDTs)

Definition and Regulatory Context

Laboratory Developed Tests (LDTs) are in vitro diagnostic tests that are developed, validated, and used within a single clinical laboratory [16]. They are often created out of necessity when no commercially available test exists or when available tests do not meet the needs of a specific patient population [16]. In the U.S., LDTs are currently overseen under the Clinical Laboratory Improvement Amendments (CLIA) rather than through FDA pre-market review, requiring laboratories to establish extensive performance specifications before clinical implementation [16].

Validation Requirements and Workflow

CLIA defines LDTs as high-complexity tests, mandating a rigorous and comprehensive single-laboratory validation. The workflow and requirements for this process are outlined below.

Diagram 1: LDT Validation Workflow

The Scientist's Toolkit: LDT Validation Essentials

Table 3: Essential Reagents and Materials for LDT Validation

| Category | Item/Reagent | Function in Validation |
|---|---|---|
| Reference Materials | Certified Reference Materials (CRMs), Biobanked Samples | Serves as the "gold standard" for method comparison and accuracy estimation [16]. |
| Quality Control (QC) Materials | Commercial QC pools, In-house prepared controls | Monitors daily precision and assay performance; used in reproducibility studies [16]. |
| Clinical Samples | Well-characterized patient specimens | Used to establish clinical reportable range, reference intervals, and for carryover/stability studies [16]. |
| Software & Statistical Tools | R, Python, SPSS, SAS, GraphPad Prism | Performs statistical analysis (e.g., linear regression, ANOVA for precision), data visualization, and determines metrics like LOD [17]. |
| Interference Substances | Lipids, Bilirubin, Common Medications | Assesses analytical specificity by testing for cross-reactivity and matrix effects [16]. |

Tier 3: Multi-Laboratory Validation Studies

The Gold Standard for Method Establishment

Multi-laboratory validation studies represent the most rigorous tier, designed to demonstrate that a method is rugged and reproducible across different instruments, operators, environments, and time. This is a foundational requirement for standard methods published by organizations like AOAC INTERNATIONAL and ISO, and is typically required for full FDA pre-market approval of commercial assays.

Statistical Analysis and Data Handling

A successful multi-laboratory study depends on a robust statistical framework to analyze collaborative data. Microbial data, common in food safety, often requires specific transformation before analysis.

- Data Transformation: Microbial concentration data are typically log-transformed (e.g., log10 CFU/g) to better approximate a normal distribution, which is a prerequisite for many statistical tests [17].

- Handling Non-Detects: Samples with non-detectable levels of the analyte require specialized statistical techniques, such as censored data analysis, to avoid bias [17].

- Key Outputs: The primary outputs include estimates of repeatability standard deviation (Sr) within a lab and reproducibility standard deviation (SR) between labs, which are used to calculate Horwitz Ratios and other measures of acceptability [17].
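
The transformation and variance estimates listed above can be sketched for a balanced design using a one-way analysis of variance across laboratories. This is a simplified illustration: the counts are hypothetical, the design assumes the same number of replicates per laboratory, and outlier screening, non-detect handling, and the Horwitz ratio are left out.

```python
from math import log10, sqrt
from statistics import mean, variance

def repeatability_reproducibility(lab_results):
    """Estimate s_r (within-lab) and s_R (within plus between lab) by one-way ANOVA.

    lab_results is a list of per-laboratory replicate lists with equal length.
    """
    n = len(lab_results[0])
    if any(len(lab) != n for lab in lab_results):
        raise ValueError("balanced design required: equal replicates per laboratory")
    ms_within = mean(variance(lab) for lab in lab_results)  # pooled within-lab variance
    ms_between = n * variance([mean(lab) for lab in lab_results])
    var_between_labs = max(0.0, (ms_between - ms_within) / n)  # truncate negative estimates at zero
    s_r = sqrt(ms_within)
    s_R = sqrt(ms_within + var_between_labs)
    return s_r, s_R

# Hypothetical plate counts (CFU/g) from three laboratories, two replicates each.
counts = [[1.2e4, 1.5e4], [2.1e4, 1.8e4], [0.9e4, 1.1e4]]
log_counts = [[log10(c) for c in lab] for lab in counts]  # log10 CFU/g

s_r, s_R = repeatability_reproducibility(log_counts)
print(f"s_r = {s_r:.3f} log10 CFU/g, s_R = {s_R:.3f} log10 CFU/g")
```

By construction s_R is never smaller than s_r, because the between-laboratory variance component is added to the within-laboratory variance.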

Experimental Protocol for a Multi-Laboratory Study

The following workflow visualizes the standardized process for executing a multi-laboratory validation study.

Diagram 2: Multi-Lab Study Workflow

The hierarchy of validation—from rapid EUAs to single-lab LDTs and comprehensive multi-laboratory studies—provides a structured, risk-based approach to establishing method reliability. Emergency pathways prioritize speed and availability during crises, accepting higher uncertainty. LDTs offer tailored solutions with robust single-site validation, while multi-laboratory studies provide the highest level of confidence for standardized methods.

For researchers and developers, the choice of validation tier is not about superiority but about contextual appropriateness. The optimal path is determined by the intended use, regulatory landscape, required speed-to-market, and the necessity for universal reproducibility. A clear understanding of this framework ensures that scientific evidence meets the requisite standard for its specific application, ultimately safeguarding public health and fostering innovation.

The FDA Foods Program Compendium of Analytical Laboratory Methods serves as a centralized repository of validated methods currently employed by FDA regulatory laboratories to ensure food and feed safety. This Compendium is structured into distinct yet complementary components, with the Chemical Analytical Manual (CAM) and the Bacteriological Analytical Manual (BAM) serving as its foundational pillars [18]. These resources provide the scientific and regulatory community with rigorously tested procedures for determining the identity, strength, quality, purity, and potency of food substances [19].

The Compendium operates under the Method Development, Validation, and Implementation Program (MDVIP), which establishes stringent validation guidelines. The validation status of a method determines its placement and tenure within the Compendium. Methods with multi-laboratory validation status are retained indefinitely, whereas those with single-laboratory validation or developed for emergency use are posted for limited durations, typically one to two years, subject to renewal [18]. This structured approach ensures that the methods referenced are both current and scientifically robust, providing a reliable framework for food safety testing.

Comparative Analysis: CAM vs. BAM

The CAM and BAM, while both essential to the FDA's analytical framework, are designed for distinct categories of analytes and employ different technological and validation approaches. The table below summarizes their core characteristics:

| Feature | Chemical Analytical Manual (CAM) | Bacteriological Analytical Manual (BAM) |
|---|---|---|
| Primary Focus | Chemical contaminants, toxins, additives, and nutrients [18] | Biological pathogens: bacteria, viruses, parasites, and microbial toxins [18] [20] |
| Representative Analytes | Mycotoxins, pesticides, PFAS, drug residues, sulfites, toxic elements [18] | Salmonella, Listeria, E. coli, Campylobacter, norovirus, Cyclospora [20] |
| Core Technologies | LC-MS/MS, GC-MS, ICP-MS, HPLC [18] | Culture methods, PCR (Polymerase Chain Reaction), qPCR, serology [18] [20] |
| Validation Workflow | Multi-laboratory, single-laboratory, or emergency-use validation [18] | Primarily multi-laboratory validation (Level 4 MLV) [18] |
| Data Output | Quantitative concentration (e.g., ppb, ppm) [18] | Qualitative detection and/or enumeration (e.g., CFU/g) [20] |
| Method Longevity | Indefinite for multi-laboratory validated; 1-3 years for others [18] | Methods are updated as chapters; considered stable reference [18] [20] |

Method Characteristics and Validation Status

The fundamental distinction between the CAM and BAM is their analytical target: chemistry versus microbiology. The CAM is designed for the extraction and quantification of specific molecules, employing advanced instrumental techniques like Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS) for detecting mycotoxins or Inductively Coupled Plasma Mass Spectrometry (ICP-MS) for elemental analysis [18]. Its methods provide quantitative data, such as the concentration of aflatoxin in peanut butter or perchlorate in infant food [18].

In contrast, the BAM focuses on the detection and identification of living organisms, utilizing a combination of traditional culture-based methods to grow microorganisms and modern molecular techniques like real-time PCR (qPCR) for genetic confirmation [18] [20]. Its outputs are often qualitative (presence/absence of a pathogen) or semi-quantitative (most probable number) [20].

Their validation pathways also differ. The CAM incorporates methods at various validation tiers, acknowledging the need for both well-established and rapidly developed emergency-response methods [18]. The BAM, however, primarily consists of methods that have achieved the highest multi-laboratory validation (MLV) status (Level 4), ensuring high reproducibility for complex biological analyses across different laboratory environments [18].

Figure 1: Distinct Validation Pathways for CAM and BAM. CAM incorporates methods at multiple validation tiers, while BAM prioritizes multi-laboratory validated methods.

Analytical Methodologies and Experimental Protocols

Representative CAM Protocol: Multi-Mycotoxin Analysis

Method C-003.03, "Determination of Mycotoxins in Corn, Peanut Butter, and Wheat Flour Using Stable Isotope Dilution Assay (SIDA) and LC-MS/MS," is a representative CAM protocol for analyzing chemical contaminants [18].

- Sample Preparation: Samples are homogenized, and an extraction solvent is added. The Stable Isotope Dilution Assay (SIDA) involves adding known quantities of isotopically-labeled internal standards (e.g., ¹³C-labeled aflatoxins) at the beginning of extraction. This step corrects for analyte losses during sample preparation and matrix effects during ionization, significantly improving accuracy [18].

- Extraction & Cleanup: Extraction is typically performed using a buffered aqueous organic solvent mixture. A cleanup step may involve passing the extract through a solid-phase extraction (SPE) column to remove interfering matrix components.

- Analysis - LC-MS/MS: The purified extract is separated using High-Performance Liquid Chromatography (HPLC), which resolves the different mycotoxins based on their chemical properties. The separated analytes are then introduced into a tandem mass spectrometer (MS/MS). The MS/MS detector first ionizes the molecules and then selects specific precursor ions for each mycotoxin. These precursor ions are fragmented, and unique product ions are monitored. This "multiple reaction monitoring" (MRM) provides high specificity and sensitivity, allowing for the detection and quantitation of numerous mycotoxins, such as Aflatoxin B1 and Ochratoxin A, at very low concentrations (parts-per-billion level) in a single run [18].
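The way an isotope-labeled internal standard corrects for losses can be illustrated with a short sketch. It assumes a single-point isotope dilution calculation in which the analyte and its labeled analogue respond identically unless a response factor is supplied; the function name, the peak areas, and the spike level are hypothetical and are not taken from Method C-003.03.

```python
def sida_concentration(
    area_analyte: float,
    area_labeled_standard: float,
    standard_spike_ng_per_g: float,
    response_factor: float = 1.0,
) -> float:
    """Estimate analyte concentration (ng/g) from the analyte/labeled-standard area ratio.

    response_factor is the assumed ratio of analyte response to labeled-standard
    response at equal concentration (1.0 means identical response).
    """
    if area_labeled_standard <= 0 or response_factor <= 0:
        raise ValueError("standard area and response factor must be positive")
    ratio = area_analyte / area_labeled_standard
    return ratio * standard_spike_ng_per_g / response_factor


# Hypothetical MRM peak areas for an analyte and its 13C-labeled analogue
full_recovery = sida_concentration(50_000, 100_000, standard_spike_ng_per_g=10.0)

# If half of both compounds is lost during cleanup, the ratio is unchanged
half_recovery = sida_concentration(25_000, 50_000, standard_spike_ng_per_g=10.0)

print(full_recovery, half_recovery)
```

Because the labeled standard is added before extraction, any proportional loss or ion suppression affects both peak areas equally, which is why the two calls return the same value.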

Representative BAM Protocol: Salmonella Detection

Chapter 5 of the BAM, detailing the analysis for Salmonella in foods, exemplifies a comprehensive microbiological method [20].

- Pre-Enrichment: A sample is incubated in a non-selective broth. This critical step revives stressed or injured cells and allows for the growth of a small number of Salmonella to detectable levels.

- Selective Enrichment: A portion of the pre-enriched culture is transferred to selective broths that inhibit the growth of competing background flora while favoring Salmonella.

- Selective Plating: The enriched culture is streaked onto selective and differential agar plates. After incubation, typical Salmonella colonies are selected based on their appearance.

- Biochemical Screening: Presumptive positive colonies are tested using a series of biochemical reactions to confirm their identity as Salmonella.

- Serological and Molecular Confirmation: The isolate is confirmed using polyvalent antisera for somatic (O) and flagellar (H) antigens. Modern protocols also include a real-time PCR (qPCR) confirmation step, which detects specific Salmonella DNA sequences, providing a highly specific and rapid result [18] [20].

Figure 2: Contrasting Workflows for Chemical (CAM) and Microbiological (BAM) Analysis. CAM workflows are linear and instrumental, while BAM relies on cultural enrichment and biological confirmation.

Validation Frameworks and Regulatory Compliance

Validation Requirements by Analyte Type

The FDA's validation requirements are tailored to the nature of the analyte and the analytical technique, as detailed in the MDVIP guidelines [18] [21]. The table below compares the key validation parameters for chemical and microbiological methods, informed by ICH Q2(R2) and FDA-specific guidelines [19] [22].

| Validation Parameter | Chemical Methods (CAM) | Microbiological Methods (BAM) |
|---|---|---|
| Accuracy | Measured as recovery % of known spikes; SIDA is preferred [18] | Comparison to a reference culture method; confirmed positives/negatives |
| Precision | Repeatability and intermediate precision (RSD) [22] | Reproducibility across laboratories (MLV is key) [18] |
| Specificity/Selectivity | Ability to distinguish analyte from matrix interferences [22] | Ability to detect target microbe in competitive flora |
| Limit of Detection (LOD) | Signal-to-noise ratio (e.g., 3:1) [22] | Lowest level that can be detected but not quantified |
| Limit of Quantitation (LOQ) | Signal-to-noise ratio (e.g., 10:1) with precision/accuracy [22] | Not typically applicable for presence/absence tests |
| Linearity & Range | Demonstrated across the calibrated range [22] | Not applicable for qualitative methods |
| Ruggedness/Robustness | Deliberate variations in method parameters [22] | Performance across different labs, analysts, and equipment |

For chemical methods, the emphasis is on quantitative precision and the use of techniques like SIDA to ensure accuracy in complex food matrices [18]. For microbiological methods, the primary goal is reliable detection of viable organisms, making specificity and reproducibility across laboratories the most critical validation parameters [18].
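For the microbiological column, comparison against a reference culture method is usually summarized with simple rates derived from paired results. The sketch below is one way to tabulate them; the function name and the example counts are hypothetical, and the specific statistics required by a given guideline may differ.

```python
def qualitative_performance(
    true_pos: int, false_neg: int, true_neg: int, false_pos: int
) -> dict[str, float]:
    """Summarize a candidate qualitative method against reference-method results.

    true_pos / false_neg: reference-positive samples the candidate found / missed.
    true_neg / false_pos: reference-negative samples the candidate cleared / flagged.
    """
    positives = true_pos + false_neg
    negatives = true_neg + false_pos
    if positives == 0 or negatives == 0:
        raise ValueError("need at least one reference-positive and one reference-negative")
    return {
        "sensitivity": true_pos / positives,
        "specificity": true_neg / negatives,
        "false_negative_rate": false_neg / positives,
        "false_positive_rate": false_pos / negatives,
    }


# Hypothetical study: 50 inoculated and 50 uninoculated test portions
summary = qualitative_performance(true_pos=48, false_neg=2, true_neg=49, false_pos=1)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```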

The Researcher's Toolkit: Essential Reagents and Materials

Successful implementation of CAM and BAM methods requires specific, high-quality materials. The following table details key research reagent solutions and their functions.

| Reagent/Material | Primary Function | Application Context |
|---|---|---|
| Stable Isotope-Labeled Internal Standards (e.g., ¹³C-labeled toxins) | Correct for analyte loss and matrix effects during analysis; essential for accurate quantitation. | Chemical Analysis (CAM), e.g., Mycotoxin testing via SIDA-LC-MS/MS [18] |
| Selective Culture Media (e.g., Tetrathionate Broth, XLD Agar) | Enrich and differentiate target pathogens from background microflora. | Microbiological Analysis (BAM), e.g., Salmonella detection [20] |
| PCR Primers & Probes | Target and amplify unique DNA sequences of pathogens for specific identification. | Microbiological Analysis (BAM), e.g., qPCR confirmation of Listeria [18] |
| Certified Reference Materials | Calibrate instruments and verify method accuracy against a known standard. | Both Chemical (CAM) and Microbiological (BAM) analysis |
| Solid-Phase Extraction (SPE) Cartridges | Clean up sample extracts by retaining interfering compounds or the analyte of interest. | Chemical Analysis (CAM), e.g., PFAS or drug residue analysis [18] |

Implementation in Regulatory and Research Contexts

Navigating Method Selection and Laboratory Accreditation

For laboratories supporting regulatory submissions or compliance testing, selecting the appropriate method is critical. The FDA's Laboratory Accreditation for Analyses of Foods (LAAF) program mandates that certain food testing be conducted by accredited laboratories [23]. These laboratories must operate under a quality system that meets ISO/IEC 17025:2017 standards and must use methods that are fit-for-purpose, which typically includes methods from the CAM and BAM [23].

When a fully validated method from the Compendium does not exist for an emerging contaminant, the FDA provides guidance on method development and validation. The Q2(R2) guideline offers a framework for validating analytical procedures, emphasizing a science- and risk-based approach [22]. Researchers developing new methods must document the validation parameters thoroughly to demonstrate that the method is reliable for its intended use [19] [22]. The FDA's own "Other Analytical Methods of Interest" page lists methods that, while not yet in the Compendium, are used for specific surveys or emergencies, providing insight into the Agency's current focus areas, such as testing for acrylamide in foods or Cyclospora in agricultural water [24].

Future Directions and Emerging Contaminants

The FDA's analytical methods are dynamic resources that evolve to address new food safety challenges. The 2025 Guidance Agenda for the Human Foods Program highlights upcoming priorities, including action on heavy metals like cadmium in baby food, improved allergen labeling, and scrutiny of natural food colorants and opiate alkaloids in poppy-derived ingredients [25]. These regulatory focus areas signal the future direction of method development and validation within the CAM and BAM frameworks. Furthermore, recent updates, such as the March 2025 revision of test methods for specific food additive specifications (e.g., caffeine, colorants), demonstrate the ongoing refinement of existing methods to ensure continued accuracy and relevance [26]. Staying abreast of these updates is essential for researchers and regulatory professionals aiming to maintain the highest standards of food safety and compliance.

The European Union's regulatory framework for Food Contact Materials (FCMs) is founded on the principle that materials must be safe and inert. The cornerstone of this framework is Regulation (EC) No 1935/2004, which sets out the overarching requirements that FCMs must not release their constituents into food at levels harmful to human health or change the food's composition, taste, or odour in an unacceptable way [27]. This framework regulation provides the legal basis for specific measures for different material types, including the most comprehensively regulated group: plastic materials and articles [27].

For plastic FCMs, the primary specific legislation is Commission Regulation (EU) No 10/2011, which details the rules on composition and establishes the Union List of authorised substances [27]. This regulation is dynamic and has been regularly amended, with a significant recent update being the "Quality Amendment" (Commission Regulation (EU) 2025/351), which entered into force on March 16, 2025 [28] [29]. This amendment introduces more detailed rules on aspects such as the purity of substances and labelling requirements for repeated-use articles [28] [29]. The system is designed to ensure safety through two primary control mechanisms: the Positive List (Union List) and specific migration limits, underpinned by strict Good Manufacturing Practices (GMP) as outlined in Regulation (EC) No 2023/2006 [27].

The Positive List (Union List) and Its Operational Mechanisms

Definition and Legal Basis

The Union List, established under Annex I of Regulation (EU) No 10/2011, is a positive list of substances permitted for use in the manufacture of plastic food contact materials [27]. A positive list system is a preventative regulatory tool that inherently prohibits the use of any substance not explicitly authorised. This means that only monomers, additives, and polymer production aids listed in the Union List can be legally used. The list is not static; it is subject to ongoing amendments to incorporate new scientific evidence and address emerging safety concerns. For instance, recent amendments have delisted substance FCM No 96 (untreated wood flour and fibres) and FCM No 121 (salicylic acid), with transitional periods allowing their use under specific conditions until January 31, 2026, pending a valid application for authorisation [30].

Key Amendments and the "Quality Amendment"

The Union List and its governing regulation are continually refined. The recent Quality Amendment (EU 2025/351) introduces critical clarifications and new requirements that researchers must note [29]:

- Refined Definitions: The amendment updates the definition of an "additive" and introduces new definitions for "re-processing of plastic" and "UVCB substances" (substances of Unknown or Variable composition, Complex reaction products, or Biological materials) [29].

- High Degree of Purity: A new Article 3a mandates that substances used in manufacturing must be of a "high degree of purity." This specifically regulates Non-Intentionally Added Substances (NIAS), setting strict migration thresholds for impurities unless they have been risk-assessed. For non-assessed NIAS, the migration must not exceed 0.00015 mg/kg [29].

- Rules for Reprocessed Plastics: The amendment sets specific conditions for using off-cuts and scraps ("reprocessed plastics") within the manufacturing process, requiring that they originate from compliant FCMs and do not lead to the final product exceeding migration limits [29].

Table 1: Key Authorisation and Restriction Mechanisms for Substances on the Union List

| Mechanism | Description | Purpose |
|---|---|---|
| Authorisation | Inclusion of a substance on the Union List (Annex I of Regulation (EU) 10/2011) following a safety assessment by EFSA. | To ensure only safe substances are used in plastic FCMs. |
| Specific Migration Limit (SML) | The maximum allowed amount of a specific substance that can migrate from the material into food (expressed in mg/kg of food). | To limit consumer exposure to individual substances based on their toxicity [27]. |
| Overall Migration Limit (OML) | A maximum limit of 60 mg/kg of food (or 10 mg/dm²) for the total amount of all substances that migrate from the material [27]. | To ensure the overall inertness of the material and prevent unacceptable changes in the food composition. |
| Restrictions on Use | May include limitations on the type of plastic, food contact conditions (e.g., temperature), or types of food the substance can be used with. | To ensure safe use under specific, foreseeable conditions. |

Defining Migration Limits

Migration limits are the operational expression of safety under foreseeable conditions of use. The EU system employs two parallel concepts:

- Specific Migration Limit (SML): This is a toxicologically derived limit for individual authorised substances on the Union List. The SML is set by the European Food Safety Authority (EFSA) based on available toxicity data to ensure a sufficient margin of safety for consumers [27]. Exceeding the SML for a substance renders the material non-compliant.

- Overall Migration Limit (OML): This is a general requirement that the total sum of all substances migrating from the material into food must not exceed 60 milligrams per kilogram of food (or 10 milligrams per square decimeter of the contact surface) [27]. The OML ensures the global inertness of the material and prevents an unacceptable change in the food's composition.
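The two limits act as independent checks: a material can fail on a single substance even when its overall migration is low, and the reverse. The following sketch shows that logic under stated assumptions. The substance names and SML values are placeholders invented for illustration (real limits come from the Union List), and only the 60 mg/kg OML figure is taken from the text above.

```python
OML_MG_PER_KG = 60.0  # overall migration limit quoted in the text


def non_compliances(
    specific_mg_per_kg: dict[str, float],
    sml_mg_per_kg: dict[str, float],
    overall_mg_per_kg: float,
) -> list[str]:
    """Return a description of every exceeded limit (empty list means compliant)."""
    issues = []
    for substance, measured in specific_mg_per_kg.items():
        limit = sml_mg_per_kg[substance]
        if measured > limit:
            issues.append(f"{substance}: {measured} mg/kg exceeds SML of {limit} mg/kg")
    if overall_mg_per_kg > OML_MG_PER_KG:
        issues.append(f"overall migration: {overall_mg_per_kg} mg/kg exceeds OML")
    return issues


# Placeholder substances and limits, for illustration only
measured = {"substance_a": 0.04, "substance_b": 0.9}
limits = {"substance_a": 0.05, "substance_b": 0.6}

print(non_compliances(measured, limits, overall_mg_per_kg=12.0))
```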

Experimental Protocol for Migration Testing

Compliance testing for migration is a standardized process designed to simulate actual conditions of use.

1. Principle: Migration testing is performed to quantify the level of specific substances (for SML compliance) or the total mass transfer (for OML compliance) from the food contact material into a food simulant under controlled time-temperature conditions [27].

2. Key Materials and Reagents:

   - Food Simulants: These are chemical substitutes representing different food types [27]:

     - Simulant A: Ethanol 10% (v/v) - for aqueous foods.

     - Simulant B: Acetic acid 3% (w/v) - for acidic foods.

     - Simulant C: Ethanol 20% (v/v) - for alcoholic foods.

     - Simulant D1: Ethanol 50% (v/v) - for dairy and other fatty foods (alternative for Simulant D2).

     - Simulant D2: Vegetable oil (e.g., olive oil) - for fatty foods (subject to substitution rules).

   - Testing Cells/Conditioners: Equipment to ensure standardized contact between the sample and the simulant.

   - Analytical Instruments:

     - For OML: Analytical balance with a precision of at least 0.1 mg.

     - For SML: Typically, High-Performance Liquid Chromatography (HPLC) coupled with Mass Spectrometry (MS) or UV detection for specific substance quantification.

3. Procedure:

   - Sample Preparation: The plastic material is cut to a specific size, ensuring the contact surface is clean and representative. The standard surface area-to-volume ratio used is 6 dm² per kg of food simulant, with derogations for certain containers and articles [28].

   - Exposure Conditions: The sample is immersed in the selected food simulant and exposed to precise time-temperature conditions that reflect the material's intended use (e.g., 10 days at 40°C for long-term storage at room temperature, 2 hours at 70°C for hot fill, or 30 minutes at 100°C for boiling conditions) [27].

   - Overall Migration Analysis:

     - Evaporation: After exposure, the simulant is evaporated, and the non-volatile residue is weighed.

     - Calculation: The overall migration is calculated in mg/kg of simulant or mg/dm² of the sample surface.

   - Specific Migration Analysis:

     - Extraction and Analysis: An aliquot of the simulant is analysed using a validated analytical method (e.g., HPLC-MS) to quantify the target substance(s).

     - Comparison: The measured concentration is compared to the SML listed for that substance in the Union List.
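The gravimetric calculation for overall migration can be sketched as follows. The snippet assumes that a simulant blank is evaporated alongside the sample and subtracted (a common practice, but an assumption here), and it uses the 6 dm² per kg convention from the procedure to convert between the two reporting units. All names and example masses are illustrative.

```python
STANDARD_RATIO_DM2_PER_KG = 6.0  # surface-to-food ratio stated in the procedure


def overall_migration(
    residue_mg: float, blank_residue_mg: float, contact_area_dm2: float
) -> tuple[float, float]:
    """Return overall migration as (mg/dm² of contact surface, mg/kg of food).

    residue_mg: non-volatile residue after evaporating the exposed simulant.
    blank_residue_mg: residue from an unexposed simulant blank (assumed correction).
    """
    if contact_area_dm2 <= 0:
        raise ValueError("contact area must be positive")
    net_residue_mg = residue_mg - blank_residue_mg
    per_dm2 = net_residue_mg / contact_area_dm2
    per_kg = per_dm2 * STANDARD_RATIO_DM2_PER_KG
    return per_dm2, per_kg


# Hypothetical test piece: 2 dm² of contact surface, 12.5 mg residue, 0.5 mg blank
per_dm2, per_kg = overall_migration(12.5, 0.5, contact_area_dm2=2.0)
print(per_dm2, per_kg)
```

The same conversion explains why the two expressions of the OML agree: 10 mg/dm² multiplied by 6 dm²/kg gives 60 mg/kg.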

Comparative Analysis with Alternative Regulatory Approaches

EU vs. US Regulatory Philosophies

A comparison with the United States' system highlights fundamental differences in regulatory philosophy and operational methodology, which are critical for global market access.

Table 2: Comparative Analysis of EU and US Food Contact Material Regulations

| Aspect | European Union (EU) | United States (US FDA) |
|---|---|---|
| Regulatory Basis | Regulation (EC) No 1935/2004 (Framework) and specific measures (e.g., (EU) 10/2011 for plastics). | Federal Food, Drug, and Cosmetic Act (FFDCA). |
| Core Mechanism | Positive List (Union List) for plastics, with pre-market authorisation for all substances via EFSA assessment [27]. | Food Contact Notification (FCN) for new substances; prior-sanctioned substances and Generally Recognized as Safe (GRAS) exemptions [31]. |
| Migration Control | Dual System: Specific Migration Limits (SML) for individual substances and an Overall Migration Limit (OML) [27]. | Primarily focuses on cumulative exposure and safety of the individual substance; no universal OML. |
| Key Recent Developments | "Quality Amendment" (EU 2025/351) reinforcing purity and NIAS rules [29]. Ban on BPA and other bisphenols in FCMs (EU 2024/3190) [32]. | Phasing out of PFAS in grease-proofing agents [31]. Potential GRAS reform to eliminate self-affirmation and mandate notification [31]. |
| Post-Market Review | Implicit through EFSA's continuous re-evaluation of substances (e.g., BPA, phthalates) [33]. | Ad hoc, often petition-driven. Increased focus on systematic reassessment of chemicals like phthalates and bisphenols [31]. |

Focus on Emerging Challenges: NIAS and Recycled Materials

Modern FCM regulations are evolving to address complex scientific challenges:

- Non-Intentionally Added Substances (NIAS): NIAS are impurities or reaction by-products not intentionally added during manufacturing. The EU's Quality Amendment directly tackles NIAS by setting strict, tiered migration thresholds (e.g., 0.00015 mg/kg for non-assessed substances), compelling manufacturers to deeply understand their material's chemistry [29]. This contrasts with the US, where the focus remains primarily on intended substances.

- Recycled Plastic Materials: The EU has a dedicated regulation for recycled plastics, (EU) 2022/1616, which mandates a "challenge test" to demonstrate the efficiency of the decontamination process [27] [32]. This creates a specific, science-based pathway for authorising recycled content. The US FDA also evaluates recycling processes, but does so through its own pre-market notification system.

The Scientist's Toolkit: Essential Reagents and Methods for FCM Analysis

Table 3: Key Research Reagent Solutions for FCM Migration Testing

| Reagent / Material | Function in Experimental Protocol |
|---|---|
| Food Simulants (A, B, C, D1, D2) | To simulate the extraction and migration behaviour of different food types (aqueous, acidic, alcoholic, fatty) under controlled lab conditions [27]. |
| Certified Reference Materials (CRMs) | To calibrate analytical instruments and validate the accuracy and precision of both SML and OML methods. Essential for method validation. |
| HPLC-MS/MS Systems | The primary analytical technique for the sensitive and selective identification and quantification of specific substances (SML compliance) and NIAS. |
| Gas Chromatography (GC) Systems | Used for the analysis of volatile and semi-volatile organic compounds that may migrate from FCMs. |
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Employed for the determination of heavy metal migration (e.g., lead, cadmium) from certain FCMs like ceramics or inks. |

The EU's regulatory framework for food contact materials, centered on the positive Union List and the dual system of Specific and Overall Migration Limits, represents a rigorous, pre-market authorisation model. Recent amendments, particularly the 2025 Quality Amendment, have further strengthened this system by explicitly addressing the purity of substances and the management of NIAS. For researchers and industry professionals, understanding the detailed experimental protocols for migration testing—including the correct selection of food simulants and application of time-temperature conditions—is paramount for demonstrating compliance.

The comparative analysis with the US system reveals a global regulatory environment that is becoming increasingly stringent, with a clear trend towards greater scrutiny of chemicals of concern like PFAS, bisphenols, and phthalates. The scientific community is thus challenged to develop ever more sensitive analytical methods to meet lower detection limits and to tackle complex issues such as NIAS and the safety of novel, sustainable materials, ensuring that public health protection keeps pace with innovation and environmental goals.

Advanced Analytical Techniques: Method Development and Real-World Application

The demand for efficient, comprehensive, and reliable analytical methods in food safety and quality control has driven the development of multi-analyte methods capable of simultaneously determining dozens to hundreds of compounds in a single analytical run. These methods represent a significant advancement over traditional single-analyte approaches, offering improved laboratory efficiency, reduced analysis time, and lower operational costs. Within this landscape, gas chromatography-mass spectrometry (GC-MS) and liquid chromatography-tandem mass spectrometry (LC-MS/MS) have emerged as two cornerstone techniques, each with distinct strengths and applications. The implementation of these methods is particularly crucial for monitoring diverse chemical groups—from pesticides and mycotoxins to food packaging migrants and biogenic amines—in complex food matrices. This guide objectively compares GC-MS and LC-MS/MS multi-analyte strategies, providing researchers and scientists with experimental data and validation frameworks to inform analytical development within food safety research.

Both GC-MS and LC-MS/MS combine chromatographic separation with mass spectrometric detection but differ fundamentally in their operational principles and optimal application domains. GC-MS utilizes a gas mobile phase to transport vaporized samples through a heated column, making it particularly suitable for volatile and semi-volatile compounds. In contrast, LC-MS/MS employs a liquid mobile phase, enabling the analysis of non-volatile, thermally labile, and polar compounds without derivatization [34].

Table 1: Core Technical Differences Between GC-MS and LC-MS/MS

| Feature | GC-MS | LC-MS/MS |
|---|---|---|
| Mobile Phase | Inert gas (e.g., Helium) | Liquid solvents and buffers |
| Sample State | Must be vaporized | Dissolved in liquid |
| Separation Mechanism | Volatility and polarity | Polarity, hydrophobicity, ionic interaction |
| Analyte Suitability | Volatile, thermally stable compounds | Non-volatile, polar, thermally labile compounds |
| Typical Sample Preparation | Often requires derivatization for polar compounds | "Dilute and shoot" possible for many matrices |
| Operational Costs | Generally lower | Generally higher due to solvents and maintenance |

The selection between these techniques often hinges on the physicochemical properties of the target analytes. GC-MS excels in separating compounds that can be vaporized without decomposition, while LC-MS/MS provides a gentler analysis pathway for molecules that would degrade under high temperatures [34]. For comprehensive food contaminant screening, many laboratories employ both techniques in a complementary manner to achieve the broadest possible analytical coverage.
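The selection logic described above can be written down as a very coarse first-pass rule. This is a deliberately simplified sketch and not a substitute for method development: it assumes only three yes/no properties are known for each analyte, and the function name and example analyte descriptions are hypothetical.

```python
def suggest_technique(
    volatile: bool, thermally_stable: bool, can_derivatize: bool = False
) -> str:
    """Suggest a starting technique from coarse physicochemical properties.

    GC-MS is suggested only when the analyte survives heating and is either
    volatile already or can be made volatile by derivatization.
    """
    if thermally_stable and (volatile or can_derivatize):
        return "GC-MS"
    return "LC-MS/MS"


# Illustrative property assignments (assumptions, not measured data)
examples = {
    "volatile, heat-stable migrant": suggest_technique(True, True),
    "polar, thermally labile toxin": suggest_technique(False, False),
    "polar but derivatizable acid": suggest_technique(False, True, can_derivatize=True),
}
for description, technique in examples.items():
    print(f"{description}: {technique}")
```

In practice, laboratories that run both platforms would treat the output as a default to be confirmed experimentally, in line with the complementary use noted above.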

GC-MS Multi-Analyte Methodologies: Applications and Protocols

GC-MS multi-analyte methods are particularly well-established for analyzing volatile organic compounds, migrants from food packaging, and certain pesticide residues. Their high resolution and compatibility with extensive spectral libraries make them powerful tools for both targeted and non-targeted analysis.

Experimental Protocol: Determination of Food Packaging Migrants

A validated method for the simultaneous determination of 75 substances that can migrate from plastic food contact materials into liquid food simulants demonstrates the capabilities of modern GC-MS/MS. The protocol employs salt-assisted liquid-liquid extraction (SALLE) for efficient sample preparation [35].

- Sample Preparation: An optimized SALLE is performed for all EU-regulated ethanol/H2O food simulants. Extraction occurs in the presence of 10% NaCl (for simulants A and C) or 5% NaCl (for simulant D1), using dichloromethane as the extracting solvent [35].

- Internal Standards: The method applies isotope dilution with selected deuterated compounds to correct for potential matrix effects and variability in extraction efficiency [35].

- GC-MS/MS Analysis: Separation and detection are achieved using gas chromatography with a triple-quadrupole mass spectrometer operating in multiple reaction monitoring (MRM) mode, which enhances selectivity and sensitivity by monitoring specific precursor-to-product ion transitions [35].

- Validation Performance: The method demonstrated sufficient accuracy for the majority of substances, with recoveries of 70–120% and repeatability (expressed as relative standard deviations, RSDs) below 15%. Adequate sensitivity was confirmed for all 75 analytes at levels of a few ng g⁻¹ [35].
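Acceptance criteria of this kind are straightforward to evaluate from replicate spiked samples. The sketch below computes mean recovery and repeatability RSD and compares them with the 70–120% and 15% thresholds quoted above; the function name and the replicate values are hypothetical.

```python
import statistics


def evaluate_spike_recovery(
    measured: list[float],
    spiked_level: float,
    recovery_range: tuple[float, float] = (70.0, 120.0),
    max_rsd_percent: float = 15.0,
) -> tuple[float, float, bool]:
    """Return (mean recovery %, RSD %, pass flag) for replicate spiked samples."""
    if len(measured) < 2:
        raise ValueError("at least two replicates are needed to estimate RSD")
    mean_measured = statistics.mean(measured)
    mean_recovery = 100.0 * mean_measured / spiked_level
    rsd = 100.0 * statistics.stdev(measured) / mean_measured
    low, high = recovery_range
    passed = low <= mean_recovery <= high and rsd < max_rsd_percent
    return mean_recovery, rsd, passed


# Hypothetical replicates (ng/g) for a substance spiked at 10 ng/g
recovery, rsd, passed = evaluate_spike_recovery([9.0, 10.0, 11.0], spiked_level=10.0)
print(f"recovery={recovery:.1f}%  RSD={rsd:.1f}%  pass={passed}")
```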

Experimental Protocol: Broader Scopes with High-Resolution Detection

To further extend the scope of target analytes, a GC-APCI-QTOF-MS (Gas Chromatography-Atmospheric Pressure Chemical Ionization-Quadrupole-Time-of-Flight Mass Spectrometry) method was developed for the simultaneous determination of 126 food packaging substances. This approach leverages high-resolution accurate mass (HRAM) measurement [36].

- Sample Preparation: The method enables the direct analysis of 95% v/v aqueous ethanol food simulant extracts, eliminating the need for laborious and time-consuming sample preparation that could restrict the applicability to certain analyte groups or add uncertainty to the results [36].

- Instrumentation: The GC-APCI-QTOF-MS platform provides high sensitivity and the inherent capability for retrospective data analysis, allowing scientists to re-interrogate acquired data for compounds not included in the original screening method [36].
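High-resolution screening, including retrospective re-interrogation of stored data, generally rests on comparing a measured m/z with a theoretical value and expressing the difference in parts per million. The sketch below shows that calculation; the 5 ppm tolerance and the example m/z values are assumptions for illustration and are not taken from the cited method.

```python
def mass_error_ppm(measured_mz: float, theoretical_mz: float) -> float:
    """Relative mass error in parts per million."""
    if theoretical_mz <= 0:
        raise ValueError("theoretical m/z must be positive")
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def within_tolerance(
    measured_mz: float, theoretical_mz: float, tolerance_ppm: float = 5.0
) -> bool:
    """True if the absolute mass error is inside the (assumed) tolerance window."""
    return abs(mass_error_ppm(measured_mz, theoretical_mz)) <= tolerance_ppm


# Hypothetical ion with a theoretical m/z of 200.0000
print(round(mass_error_ppm(200.0005, 200.0), 2), within_tolerance(200.0005, 200.0))
print(round(mass_error_ppm(200.0040, 200.0), 2), within_tolerance(200.0040, 200.0))
```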

The following workflow diagram generalizes the sample preparation and analysis process for a GC-MS-based multi-analyte method:

(Graphic Source: Generalized from [35] [36])

LC-MS/MS Multi-Analyte Methodologies: Applications and Protocols

LC-MS/MS has become the technique of choice for multi-analyte determination of non-volatile, polar, and thermally labile compounds in food. Its versatility allows for the development of methods covering hundreds of analytes, from pesticides and mycotoxins to biogenic amines and food additives.

Experimental Protocol: Quantification of Biogenic Amines in Meat

A rapid LC-MS/MS method for the simultaneous quantification of six biogenic amines—putrescine (PUT), cadaverine (CAD), histamine (HIS), tyramine (TYR), spermidine (SPD), and spermine (SPM)—in meat products was developed without requiring derivatization, simplifying the workflow [37].

- Sample Preparation: One gram of minced muscle tissue is homogenized with 4 mL of 0.5 M HCl. The homogenate is centrifuged at 9000 rpm for 10 min at 4°C. The supernatant is filtered and subjected to a second centrifugation for further clarification. An aliquot of the final supernatant is diluted before LC-MS/MS analysis [37].

- Chromatography: Separation is achieved using a Waters Acquity UPLC BEH C18 column with acidified ammonium formate and acetonitrile mobile phases [37].

- Mass Spectrometry: Detection employs electrospray ionization (ESI) in positive ion mode with multiple reaction monitoring (MRM) [37].

- Validation Performance: The method showed excellent linearity (R² > 0.99), trueness between -20% and +20%, and acceptable precision (RSDr and RSDR ≤ 25%). The limits of quantification were established at 10 µg/g for all analytes [37].
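Converting the concentration measured in the diluted extract back to a tissue concentration is simple arithmetic. The sketch below assumes the extract volume equals the 4 mL of HCl added to 1 g of tissue (ignoring the water contributed by the tissue itself) and uses a hypothetical tenfold dilution, since the source does not state the dilution factor.

```python
def tissue_concentration_ug_per_g(
    measured_ug_per_ml: float,
    dilution_factor: float,
    extract_volume_ml: float = 4.0,
    sample_mass_g: float = 1.0,
) -> float:
    """Back-calculate the amine concentration in tissue from the diluted extract.

    extract_volume_ml and sample_mass_g default to the values in the protocol;
    dilution_factor must be supplied by the analyst.
    """
    if sample_mass_g <= 0:
        raise ValueError("sample mass must be positive")
    return measured_ug_per_ml * dilution_factor * extract_volume_ml / sample_mass_g


# Hypothetical reading: 0.25 µg/mL histamine in a 1:10 diluted extract
print(tissue_concentration_ug_per_g(0.25, dilution_factor=10.0))
```

Under these assumptions a reading of 0.25 µg/mL corresponds to 10 µg/g in the tissue, which is the reported limit of quantification.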

Experimental Protocol: Large-Scale Pesticide and Mycotoxin Screening

The power of LC-MS/MS is fully realized in large-scale multi-residue methods. One study validated a method for 349 pesticides in tomato samples in a single chromatographic run [38].

- Sample Preparation: The method comprises QuEChERS-mediated extraction, followed by LC-MS/MS analysis, eliminating the need for additional clean-up steps [38].

- Validation Performance: All 349 analytes demonstrated recoveries of 70–120%, precision (RSD) < 20%, and an LOQ of 0.01 mg/kg, meeting the lowest acceptable concentration value for pesticides [38].

Similarly, a "dilute and shoot" LC-MS/MS approach was successfully validated for 295 analytes, including over 200 mycotoxins, across various food matrices. This approach minimizes sample preparation, and proficiency testing demonstrated satisfactory z-scores (-2 to 2) in 368 out of 408 cases, even for complex matrices like pepper and coffee [39].
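
The proficiency-test outcome quoted above rests on the standard z-score, z = (reported value − assigned value) / σp. The sketch below shows that calculation and the conventional interpretation bands (|z| ≤ 2 satisfactory, 2 < |z| < 3 questionable, |z| ≥ 3 unsatisfactory). The function names and the example numbers are hypothetical. Only the 368-of-408 count comes from [39].

```python
def z_score(reported, assigned, sigma_p):
    """z = (reported - assigned) / sigma_p, where sigma_p is the standard
    deviation for proficiency assessment set by the scheme provider."""
    return (reported - assigned) / sigma_p

def classify(z):
    """Conventional proficiency-testing interpretation of a z-score."""
    magnitude = abs(z)
    if magnitude <= 2.0:
        return "satisfactory"
    if magnitude < 3.0:
        return "questionable"
    return "unsatisfactory"

# Hypothetical single result: 11.0 ug/kg reported against an assigned value of 10.0 ug/kg
example = classify(z_score(reported=11.0, assigned=10.0, sigma_p=2.2))

# Share of satisfactory results from the counts reported in [39]
satisfactory_share = round(100.0 * 368 / 408, 1)
print(example, f"{satisfactory_share}% satisfactory")
```

On these counts, roughly nine out of ten submitted results fell inside the satisfactory band.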

The generalized workflow for an LC-MS/MS multi-analyte method, often simpler than GC-MS, is shown below:

(Graphic Source: Generalized from [37] [38] [39])

Comparative Experimental Data and Validation Outcomes

Direct comparison of validation data from implemented methods highlights the performance and reliability achievable with both techniques for multi-analyte determination.

Table 2: Summary of Validation Data from Representative Multi-Analyte Methods

| Method Description | Number of Analytes | Linear Range & R² | Accuracy (Recovery or Bias) | Precision (RSD) | Sensitivity (LOD/LOQ) |
|---|---|---|---|---|---|
| GC-MS/MS (Food Packaging) [35] | 75 | Not specified | 70-120% | < 15% | Few ng g⁻¹ |
| LC-MS/MS (Biogenic Amines) [37] | 6 | R² > 0.99 | Bias: -20% to +20% | ≤ 25% | LOQ: 10 µg/g |
| LC-MS/MS (Pesticides) [38] | 349 | According to SANTE guide | 70-120% | < 20% | LOQ: 0.01 mg/kg |
| LC-MS/MS (Mycotoxins, "Dilute and Shoot") [39] | 295 | According to SANCO guide | Meets validation criteria | Meets validation criteria | Suitable for regulated limits |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of multi-analyte methods relies on a foundation of high-quality reagents and materials. The following table details key solutions used in the protocols cited herein.

Table 3: Key Research Reagent Solutions and Their Functions

| Reagent / Material | Function / Application | Representative Use Case |
|---|---|---|
| Deuterated Internal Standards (e.g., HIS-d4, PUT-d4) | Correct for matrix effects and loss during sample preparation; enable precise quantification. | Isotope dilution in GC-MS/MS for food packaging migrants [35] and LC-MS/MS for biogenic amines [37]. |
| QuEChERS Kits | Quick, Easy, Cheap, Effective, Rugged, and Safe extraction for multi-pesticide residue analysis. | Extraction of 349 pesticides from tomato samples prior to LC-MS/MS [38]. |
| C18 Chromatography Columns | Reversed-phase separation medium for LC-MS; provides robust separation of diverse analytes. | Separation of biogenic amines [37], food additives [40], and pesticides [38]. |
| Acidified Solvents (e.g., 0.5 M HCl) | Extraction solvent for isolating polar, ionic compounds from complex food matrices. | Extraction of biogenic amines from meat products [37]. |
| SALLE Reagents (NaCl, DCM) | Salting-out assisted liquid-liquid extraction; the added salt improves partitioning of analytes into the organic phase. | Extraction of 75 migrants from food simulants for GC-MS/MS analysis [35]. |

Both GC-MS and LC-MS/MS provide powerful, complementary platforms for the simultaneous determination of multiple analytes in food. The choice of technique is primarily dictated by the physicochemical properties of the target analytes: GC-MS is ideal for volatile and thermally stable compounds, while LC-MS/MS is unmatched for non-volatile, polar, and thermally labile substances. As demonstrated by the experimental data, both can be validated to meet stringent regulatory requirements for accuracy, precision, and sensitivity. The ongoing trends toward automation, simplified "dilute and shoot" protocols, and the integration of high-resolution mass spectrometry are further enhancing the throughput, scope, and reliability of multi-analyte methods. This evolution empowers researchers and food safety professionals to more effectively monitor the chemical safety and quality of the global food supply.

Monitoring chemical residues and contaminants in food is essential for public health, as exposure to these substances can cause acute or chronic adverse health effects ranging from immediate distress to long-term issues like cancer, reproductive disorders, and antimicrobial resistance [41]. Modern analytical science has evolved from methods targeting single compounds to comprehensive multi-analyte procedures capable of simultaneously detecting hundreds of chemicals across different classes [41]. This guide objectively compares analyte-specific methodologies for four critical contaminant groups—per- and polyfluoroalkyl substances (PFAS), mycotoxins, pesticides, and drug residues—by examining their experimental protocols, performance characteristics, and regulatory validation requirements. The comparison focuses primarily on liquid chromatography-tandem mass spectrometry (LC-MS/MS) approaches, which have become the cornerstone of modern food safety monitoring due to their sensitivity, selectivity, and ability to cover diverse chemical compounds [42] [43] [44].

Comparative Analytical Performance Data

The table below summarizes key performance characteristics for each contaminant class, highlighting differences in methodological approaches and regulatory requirements.

Table 1: Analytical Method Performance Comparison Across Contaminant Classes

| Contaminant Category | Example Scope (# of Analytes) | Quantitative Sensitivity (LOQ) | Key Matrices | Extraction & Cleanup Approach | Regulatory Guidance Documents |
|---|---|---|---|---|---|
| PFAS | 19 linear PFAS (C4-C14) [42] | 0.010 μg/kg for most; 0.20 μg/kg for PFBA [42] | Eggs, fish, meat, milk, dairy products [42] | Alkaline digestion, WAX SPE cleanup [42] | EU Regulation 2022/1428; EURL Guidance Document [42] |
| Mycotoxins | 12 mycotoxins simultaneously (FDA method C-003) [43] | Varies by toxin; action levels established for aflatoxins, patulin [43] | Grains, dried fruits, coffee, apple products, milk [43] | Multi-mycotoxin LC-MS/MS with stable isotope dilution [43] | FDA Compliance Program Guidance Manual; Codex Codes of Practice [43] |
| Pesticides | >136 pesticides in mixed methods [41] | Varies by compound; set to enforce MRLs [45] | Fruits, vegetables, grains, animal feeds [41] | QuEChERS; acidified acetonitrile extraction [41] | SANTE/11312/2021 (EU quality control) [45] |
| Veterinary Drugs | >40 drugs/antibiotics in expanded methods [44] | Median LOQ: 0.31 ng/mL in urine [44] | Meat, milk, eggs, honey, seafood [44] [41] | Acidified acetonitrile; various SPE options [41] | Maximum Residue Limits (MRLs) by jurisdiction [44] |
| Mixed Residues & Contaminants | >350 analytes (veterinary drugs, pesticides, mycotoxins) [41] | Compound-specific; validated for each analyte [41] | Various (meat, milk, eggs, honey, feed) [41] | Generic extraction with acidified acetonitrile [41] | Method validation per SANTE guidance [41] |

Detailed Experimental Protocols

PFAS Analysis in Food Matrices

The determination of 19 PFAS in food matrices requires meticulous sample preparation and chromatographic optimization to achieve the low quantification limits mandated by EU regulations [42].

Sample Preparation: The procedure begins with alkaline digestion of homogenized food samples (1.0 g) using 1 mL of 1% ammonium hydroxide in methanol. After centrifugation, the supernatant is diluted with acetate buffer (pH 4.5) and loaded onto a weak anion exchange (WAX) solid-phase extraction (SPE) cartridge. The cartridge is washed with acetate buffer and methanol, then eluted with 1% ammonia in methanol. The eluate is concentrated under a nitrogen stream and reconstituted in methanol [42].

LC-MS/MS Analysis: Separation is achieved using a C18 column (2.1 × 100 mm, 1.7 μm) with a mobile phase consisting of (A) 2 mM ammonium acetate in water and (B) methanol. The gradient elution program runs from 30% B to 95% B over 8 minutes, followed by a 4-minute hold at 95% B. The flow rate is 0.3 mL/min with a column temperature of 40°C. MS detection employs electrospray ionization in negative mode with multiple reaction monitoring (MRM). The chromatography is specifically optimized to resolve PFOS from taurodeoxycholic acid, a bile acid that commonly interferes with PFOS determination in food analysis [42].
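
The gradient described above can be written down as a simple function of time, which is convenient when transferring the method between instruments or estimating solvent use. This is a minimal sketch that assumes a linear ramp (the text does not state the gradient shape) and ignores any re-equilibration step, which is not described. The function and variable names are hypothetical.

```python
def percent_b(t_min, start_b=30.0, end_b=95.0, ramp_min=8.0, hold_min=4.0):
    """Mobile phase B (methanol) percentage at time t_min, assuming a linear
    ramp from start_b to end_b followed by an isocratic hold at end_b."""
    total = ramp_min + hold_min
    if t_min < 0 or t_min > total:
        raise ValueError(f"time must be within 0..{total} min")
    if t_min <= ramp_min:
        return start_b + (end_b - start_b) * t_min / ramp_min
    return end_b

FLOW_ML_PER_MIN = 0.3                             # flow rate stated in the text
run_volume_ml = FLOW_ML_PER_MIN * (8.0 + 4.0)     # solvent per injection, ramp + hold only

for t in (0, 4, 8, 12):
    print(f"{t:>2} min: {percent_b(t):.1f}% B")
print(f"Mobile phase per run: {run_volume_ml:.1f} mL")
```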

Quantification Approach: For PFAS compounds lacking their own isotopically labeled internal standards, the method uses surrogate internal standards with similar structural and chemical properties. This approach maintains quantification accuracy while expanding the analytical scope. The method is accredited under ISO/IEC 17025:2018 for PFOA, PFNA, PFHxS, PFOS and 15 other PFAS [42].
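
Internal-standard quantification of this kind is usually based on a relative response factor (RRF) determined from calibration standards. The sketch below illustrates the arithmetic for an analyte paired with a surrogate internal standard. All peak areas, concentrations, and function names are hypothetical placeholders, and the actual analyte-to-surrogate assignments used in [42] are not reproduced here.

```python
def relative_response_factor(area_analyte, area_is, conc_analyte, conc_is):
    """RRF from a calibration standard:
    (area_analyte / area_is) / (conc_analyte / conc_is)."""
    return (area_analyte / area_is) / (conc_analyte / conc_is)

def quantify(area_analyte, area_is, conc_is, rrf):
    """Analyte concentration in a sample extract, in the same units as conc_is."""
    return (area_analyte / area_is) * conc_is / rrf

# Hypothetical calibration standard: analyte and surrogate IS both at 1.0 ng/mL
rrf = relative_response_factor(area_analyte=20000.0, area_is=10000.0,
                               conc_analyte=1.0, conc_is=1.0)

# Hypothetical sample extract spiked with the surrogate IS at 1.0 ng/mL
sample_conc = quantify(area_analyte=5000.0, area_is=10000.0, conc_is=1.0, rrf=rrf)
print(f"RRF = {rrf:.2f}, sample extract = {sample_conc:.2f} ng/mL")
```

Because a surrogate does not co-elute exactly with the analyte, it may not experience identical matrix effects, so the RRF should be verified in each matrix type during validation.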

Multi-Mycotoxin Analysis

The U.S. Food and Drug Administration employs a multi-mycotoxin LC-MS/MS method for simultaneous quantification of twelve mycotoxins in human food, representing a significant advancement over traditional single-toxin methods [43].

Sample Extraction: Samples are homogenized and extracted with acidified acetonitrile/water (typically 50:50 or 84:16 v/v with 1% formic acid). The extraction solvent composition may be adjusted based on food matrix characteristics. After vigorous shaking and centrifugation, an aliquot of the supernatant is collected for further processing [43].

Cleanup Procedures: Depending on the matrix and target mycotoxins, various cleanup approaches may be employed. These include immunoaffinity columns for aflatoxins, multifunctional cartridges for multiple toxins, or a simple dilute-and-shoot approach for less complex matrices. The FDA method incorporates stable isotope dilution assays (SIDA) using deuterated or ¹³C-labeled internal standards to improve quantification accuracy and compensate for matrix effects [43].

LC-MS/MS Analysis: Chromatographic separation utilizes a C18 column with a water/acetonitrile or water/methanol gradient containing acidic modifiers (formic acid or acetic acid) or ammonium acetate buffers. MS detection employs electrospray ionization in positive or negative mode with rapid polarity switching. MRM transitions are optimized for each mycotoxin, with confirmation based on ion ratios and retention time matching against certified reference materials [43].
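
The confirmation step described above, matching ion ratio and retention time against a reference, reduces to two tolerance checks. The following sketch uses tolerances of ±0.1 min for retention time and ±30% (relative) for the ion ratio as illustrative defaults in the style of SANTE-type criteria. They are assumptions for this example, not values taken from the FDA method [43], and all names and peak data are hypothetical.

```python
def ion_ratio(qualifier_area, quantifier_area):
    """Ratio of the qualifier MRM transition area to the quantifier transition area."""
    return qualifier_area / quantifier_area

def is_confirmed(sample_rt, ref_rt, sample_ratio, ref_ratio,
                 rt_tol_min=0.1, ratio_tol_rel=0.30):
    """True when both retention time and ion ratio fall within tolerance of
    the reference standard. Tolerances are illustrative defaults."""
    rt_ok = abs(sample_rt - ref_rt) <= rt_tol_min
    ratio_ok = abs(sample_ratio - ref_ratio) <= ratio_tol_rel * ref_ratio
    return rt_ok and ratio_ok

# Hypothetical peak data for one mycotoxin in a sample and in a reference standard
ref = ion_ratio(qualifier_area=4000.0, quantifier_area=10000.0)      # 0.40
sample = ion_ratio(qualifier_area=4200.0, quantifier_area=10000.0)   # 0.42

confirmed = is_confirmed(sample_rt=5.03, ref_rt=5.00,
                         sample_ratio=sample, ref_ratio=ref)
print("identity confirmed:", confirmed)
```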

Mixed Organic Chemical Residues and Contaminants (MOCRC)

The emerging trend in food safety monitoring involves developing single methods that can analyze multiple categories of chemical residues, focusing on chemical properties rather than usage classifications [41].

Generic Extraction Protocol: The foundational approach for MOCRC methods uses acidified acetonitrile (water/acetonitrile with 1% formic acid) for sample extraction. This composition effectively extracts a wide range of analytes with varying polarities while precipitating proteins. Studies demonstrate this extraction achieves satisfactory recovery (81-120%) for most veterinary drugs, pesticides, and mycotoxins with acceptable precision (RSD <20%) across three spiking levels [44] [41].
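
Recovery and precision at each spiking level are straightforward to tabulate from replicate results. The sketch below applies the ranges quoted above (recovery 81-120%, RSD < 20%) to three spiking levels. The replicate values, spiking levels, and function names are hypothetical and serve only to show the calculation.

```python
from statistics import mean, stdev

def summarize_level(measured, spiked):
    """Mean recovery (%) and RSD (%) for replicate results at one spiking level."""
    recoveries = [100.0 * m / spiked for m in measured]
    mean_recovery = mean(recoveries)
    rsd = 100.0 * stdev(recoveries) / mean_recovery
    return mean_recovery, rsd

def passes(mean_recovery, rsd, recovery_range=(81.0, 120.0), max_rsd=20.0):
    """Check one level against the recovery range and RSD limit quoted in the text."""
    low, high = recovery_range
    return low <= mean_recovery <= high and rsd < max_rsd

# Hypothetical replicate results (ug/kg) at three spiking levels for one analyte
replicates = {
    10.0: [9.0, 9.5, 10.0],
    50.0: [46.0, 49.0, 52.0],
    100.0: [98.0, 104.0, 110.0],
}

results = {level: summarize_level(values, level) for level, values in replicates.items()}
for level, (rec, rsd) in results.items():
    print(f"{level:>6.1f} ug/kg: recovery {rec:.1f}%, RSD {rsd:.1f}%, pass={passes(rec, rsd)}")
```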

Cleanup Optimization: While some methods employ no cleanup beyond protein precipitation, others use dispersive SPE with primary secondary amine (PSA) to remove organic acids, sugars, and fatty acids, C18 to remove lipids, or graphitized carbon black to remove pigments. The selection depends on the specific food matrix and analyte scope [41].

Instrumental Analysis: Both triple quadrupole and high-resolution mass spectrometers (Orbitrap, Q-TOF) are used as LC detectors in these methods. Triple quadrupole instruments provide superior sensitivity for targeted quantification, while high-resolution systems enable simultaneous targeted and non-targeted screening. The expanded method reported in [44] can detect and quantify more than 120 highly diverse analytes in a single analytical run.

Figure 1: Experimental Workflow Comparison for Different Contaminant Classes. PFAS analysis requires specialized sample preparation including alkaline digestion and weak anion exchange solid-phase extraction (WAX SPE), while multi-residue methods typically employ acidified acetonitrile extraction with simplified clean-up procedures.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Food Contaminant Analysis

| Reagent/Material | Primary Function | Application Examples | Critical Considerations |
|---|---|---|---|
| Weak Anion Exchange (WAX) SPE | Selective retention of acidic compounds | PFAS extraction and cleanup from food matrices [42] | Effectively captures various PFAS chain lengths; ammonia required for elution |
| C18 LC Columns | Reversed-phase chromatographic separation | PFAS, mycotoxins, pesticides, drug residues [42] [41] | Column dimensions and particle size (e.g., 2.1 × 100 mm, 1.7 μm) affect resolution |
| Isotopically Labeled Internal Standards | Internal reference for accurate quantification | PFAS, mycotoxins, veterinary drugs analysis [42] [43] [44] | Corrects for matrix effects and recovery losses; essential for precise quantification |
| Acidified Acetonitrile | Generic extraction solvent | Multi-residue methods for pesticides, drugs, mycotoxins [41] | 1% formic acid improves extraction efficiency for basic and neutral compounds |
| QuEChERS Kits | Rapid sample preparation | Pesticide residues in fruits, vegetables [41] | Available in various formulations optimized for specific matrix types |