Navigating Collinearity in Nutritional Research: Statistical Methods and Best Practices for Dietary Component Analysis

Abstract

Collinearity among dietary components presents significant challenges in nutritional epidemiology and clinical research, obscuring true diet-disease relationships and complicating statistical inference. This article provides a comprehensive framework for addressing collinearity through four key approaches: understanding its sources and impacts in dietary data, applying appropriate statistical methodologies including traditional and emerging techniques, implementing optimization strategies to enhance model performance, and validating findings through comparative analysis. Targeted at researchers, scientists, and drug development professionals, the content synthesizes current methodological advances including principal component analysis, reduced rank regression, compositional data analysis, and machine learning applications, while offering practical guidance for robust dietary pattern analysis in biomedical research.

Understanding Dietary Collinearity: Sources, Challenges, and Impacts on Research Validity

Frequently Asked Questions (FAQs)

What is collinearity and why is it particularly problematic in nutritional research?

Collinearity, sometimes called multicollinearity, occurs when two or more predictor variables in a regression model are highly correlated, so that one predictor can be closely approximated as a linear combination of the others [1]. In nutritional research, this is exceptionally common because nutrients are not consumed in isolation; they come packaged together in foods and dietary patterns [2] [3].

For example, individuals with a high intake of dietary fiber often also have high intakes of certain vitamins and minerals. When these correlated nutrients are included in the same regression model to predict a health outcome, they cannot independently predict the value of the dependent variable because they explain some of the same variance [1]. This correlation leads to unstable and less interpretable regression estimates, making it difficult to isolate the specific effect of a single nutrient or food component on health [4].

What are the practical consequences of ignoring collinearity in my analysis?

Ignoring collinearity can severely impact the interpretation and validity of your research findings. Key consequences include:

- Unstable and Inflated Estimates: Regression coefficients can become highly sensitive to small changes in the model or data, leading to large standard errors and variance inflation [2] [4]. This instability means that coefficient estimates may vary wildly between studies or even between different samples from the same population.

- Reduced Statistical Power: The increased variance of coefficient estimates makes it harder to detect statistically significant relationships, even when true effects exist [5]. This can lead to Type II errors (false negatives).

- Counterintuitive Coefficient Interpretation: In the presence of strong collinearity, a coefficient might have a sign (positive or negative) that is the opposite of what is biologically plausible, making the results difficult to interpret meaningfully [4].

- Attenuated or Reversed Observed Relative Risks: As demonstrated in diet-assessment studies, a moderate to high correlation between risk factors can substantially influence the observed relative risk (RRo). Dietary assessment methods with low validity might even produce an inverse RRo, completely misrepresenting the true relationship [5].

How can I diagnose and measure collinearity in my dataset?

Diagnosing collinearity involves a combination of examining correlation matrices and calculating specific diagnostic statistics. The most common metric is the Variance Inflation Factor (VIF).

The VIF measures how much the variance of a regression coefficient is inflated due to collinearity. The table below outlines the interpretation of VIF values [1]:

| VIF Value | Interpretation |
|---|---|
| 1 - 2 | Essentially no collinearity. |
| 5 - 10 | Moderate to high degree of collinearity. |
| > 10 | Extreme collinearity; parameter estimates are highly unstable. |

Additional diagnostic tools include:

- Correlation Matrices: Simple Pearson correlations between pairs of independent variables. Values exceeding 0.8 or 0.9 signal potential problems.

- Condition Indices and Variance Decomposition Proportions: These more advanced diagnostics, extendable to relative risk regression models, can help identify the number and source of collinear relationships among multiple variables [4].
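The condition-index diagnostics listed above can be sketched with NumPy as follows. This is a minimal illustration of the Belsley-style approach for an ordinary linear design matrix: columns are scaled to unit length, and condition indices come from the singular values. It does not implement the extension to relative risk regression models cited above. The fiber, magnesium and age data are simulated and purely hypothetical. A condition index above about 30 is a commonly cited signal of a strong dependency. The variance-decomposition proportions on that dimension then indicate which coefficients are involved.

```python
import numpy as np

def belsley_diagnostics(X):
    """Condition indices and variance-decomposition proportions (Belsley-style).

    X is the (n, p) design matrix, including the intercept column if the model
    has one. Columns are scaled to unit length but not centered.
    """
    X = np.asarray(X, dtype=float)
    Xs = X / np.linalg.norm(X, axis=0)            # unit-length columns
    _, s, Vt = np.linalg.svd(Xs, full_matrices=False)
    cond_idx = s.max() / s                        # one condition index per dimension
    phi = (Vt.T ** 2) / s ** 2                    # phi[k, j] = v_kj^2 / s_j^2
    props = phi / phi.sum(axis=1, keepdims=True)  # row k: coefficient k; column j: dimension j
    return cond_idx, props

# Hypothetical data: magnesium intake closely tracks fiber intake
rng = np.random.default_rng(0)
n = 500
fiber = rng.normal(20, 5, n)
magnesium = 15 * fiber + rng.normal(0, 5, n)
age = rng.normal(60, 8, n)
X = np.column_stack([np.ones(n), fiber, magnesium, age])

ci, props = belsley_diagnostics(X)
worst = int(np.argmax(ci))
print("largest condition index:", round(float(ci[worst]), 1))
print("variance proportions on that dimension:", props[:, worst].round(2))
```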

My model has high collinearity. What are my options to address it?

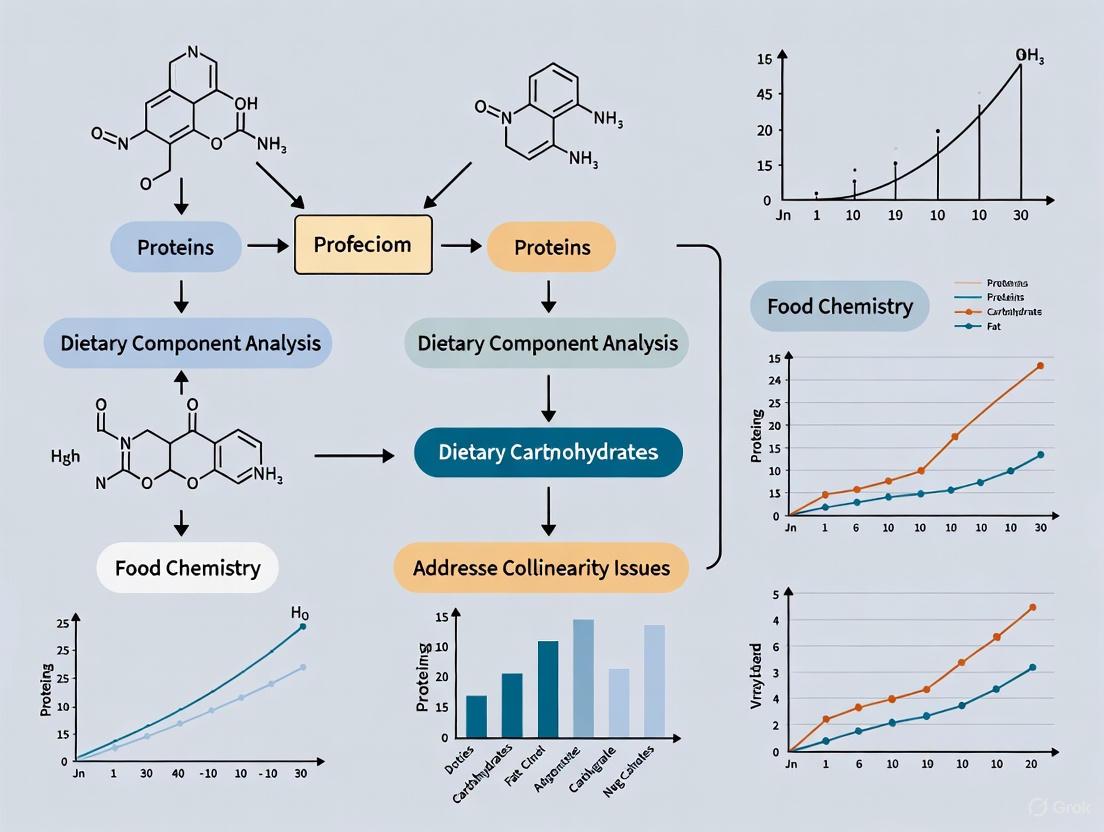

Several strategies are available, but the choice depends on your research question and the causal framework. The diagram below outlines a decision workflow for addressing collinearity.

1. Causal-Data Approaches: Your first consideration should always be causal theory, as represented by a Directed Acyclic Graph (DAG) [2].

- Adjust for Confounders: If the correlated variable is a confounder, you must adjust for it in the model to obtain an unbiased total effect estimate, even if it introduces high collinearity. The bias from omitting a confounder is typically a greater concern than the loss of precision from collinearity [2].

- Avoid Adjusting for Intermediates or Colliders: If the correlated variable is on the causal pathway (an intermediate) or is a common effect (a collider), adjusting for it will introduce bias (overadjustment or collider stratification bias) [2]. In these scenarios, the unadjusted model is correctly specified.

2. Statistical-Data Approaches: If the goal is to understand the overall diet rather than a specific nutrient, or if causal adjustment is necessary, these methods can help.

- Dietary Pattern Analysis: Instead of analyzing single nutrients, create composite dietary patterns.

- Variable Selection or Combination: Use expert knowledge to select a single, representative nutrient from a correlated group or to create a scientifically meaningful composite score.

- Advanced Regression Techniques: Methods like Ridge Regression can handle collinearity by adding a penalty to the model, which stabilizes coefficient estimates at the cost of introducing some bias [2].
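To illustrate how the penalty stabilizes estimates, the sketch below fits closed-form ridge regression to two simulated, highly correlated nutrient intakes. It then compares bootstrap coefficient variability with that of ordinary least squares. The data, the function names and the penalty value of 50 are hypothetical choices for illustration. In practice the penalty is usually tuned, for example by cross-validation.

```python
import numpy as np

def ridge(X, y, lam):
    """Closed-form ridge estimate (X'X + lam*I)^-1 X'y on standardized predictors.

    Predictors are standardized and y is centered, so the intercept is not
    penalized. lam = 0 gives ordinary least squares on the same scale.
    """
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)
    yc = y - y.mean()
    p = Xs.shape[1]
    return np.linalg.solve(Xs.T @ Xs + lam * np.eye(p), Xs.T @ yc)

# Hypothetical data: two nutrient intakes with r of about 0.95
rng = np.random.default_rng(1)
n = 200
fiber = rng.normal(0, 1, n)
vit_e = 0.95 * fiber + rng.normal(0, 0.3, n)
X = np.column_stack([fiber, vit_e])
y = 0.5 * fiber + 0.5 * vit_e + rng.normal(0, 1, n)

def bootstrap_sd(lam, reps=300):
    """Bootstrap standard deviation of each coefficient for a given penalty."""
    estimates = []
    for _ in range(reps):
        idx = rng.integers(0, n, n)
        estimates.append(ridge(X[idx], y[idx], lam))
    return np.std(estimates, axis=0)

sd_ols = bootstrap_sd(0.0)
sd_ridge = bootstrap_sd(50.0)
print("OLS coefficient SDs:  ", sd_ols.round(3))
print("Ridge coefficient SDs:", sd_ridge.round(3))
```

The smaller bootstrap spread under ridge comes at the cost of coefficients shrunk toward zero, which is the bias mentioned above.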

Should I dichotomize continuous nutrition variables like BMI to avoid collinearity issues?

No. Dichotomizing or discretizing continuous variables (e.g., creating "high" and "low" BMI groups) is strongly discouraged [6]. This practice:

- Reduces Statistical Power: It throws away information and adds measurement error, attenuating the true effect size and making it harder to detect real relationships [6].

- Increases Risk of Spurious Findings: It can inflate Type I error rates and lead to the detection of spurious main effects or interactions, especially when multiple correlated variables are dichotomized [6].

- Prevents Detection of Non-Linear Relationships: Dichotomization makes it impossible to examine potentially important quadratic or other non-linear effects [6].

- Leads to Biased Parameter Estimates: These biased results may not be replicated, leading to incorrect scientific conclusions [6].

You should always analyze continuous variables as continuous, using multiple regression to examine their relationships with outcomes [6].
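A quick simulation shows the information lost by dichotomizing. For a normally distributed predictor, a median split shrinks its correlation with the outcome to about √(2/π) ≈ 0.80 of the value obtained when the predictor is kept continuous. The BMI and outcome values below are simulated and hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 100_000
bmi = rng.normal(27, 4, n)                          # hypothetical continuous BMI
outcome = 0.3 * (bmi - 27) / 4 + rng.normal(0, 1, n)

high_bmi = (bmi > np.median(bmi)).astype(float)     # "high" vs "low" median split

r_continuous = np.corrcoef(bmi, outcome)[0, 1]
r_split = np.corrcoef(high_bmi, outcome)[0, 1]
print("r (continuous):  ", round(r_continuous, 3))
print("r (median split):", round(r_split, 3))
print("ratio:", round(r_split / r_continuous, 3), "theory:", round(np.sqrt(2 / np.pi), 3))
```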

The Researcher's Toolkit: Key Methods for Dietary Pattern Analysis

The following table summarizes common statistical methods used to overcome collinearity by analyzing dietary patterns as a whole [3].

| Method | Category | Brief Description | Key Function |
|---|---|---|---|
| Principal Component Analysis (PCA) | Data-Driven | Creates new, uncorrelated variables (components) that explain maximum variance in food intake data. | Reduces dimensionality; handles multicollinearity by creating orthogonal patterns. |
| Factor Analysis | Data-Driven | Similar to PCA, but aims to identify underlying latent factors that explain correlations between foods. | Identifies unobserved constructs (e.g., "Western diet") driving food consumption. |
| Cluster Analysis | Data-Driven | Groups individuals into mutually exclusive categories based on similar dietary habits. | Classifies subjects into dietary types (e.g., "healthy eaters," "convenience food consumers"). |
| Reduced Rank Regression (RRR) | Hybrid | Derives dietary patterns that maximally explain the variation in intermediate response variables (e.g., biomarkers). | Creates patterns predictive of specific disease pathways. |
| Healthy Eating Index (HEI) | Investigator-Driven (A Priori) | Scores diet quality based on adherence to pre-defined dietary guidelines. | Assesses how well a population's diet aligns with national recommendations. |
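Reduced rank regression can be sketched in a few lines. First, regress the standardized response variables on the standardized food-group intakes. Then take the leading eigenvectors of the fitted responses to obtain pattern weights. This follows one common formulation of RRR and is for illustration only. The food groups, biomarkers and effect sizes are simulated and hypothetical, and real analyses typically rely on established software implementations.

```python
import numpy as np

def reduced_rank_regression(X, Y, n_patterns=1):
    """Dietary patterns by reduced rank regression (one common formulation).

    X: (n, p) food-group intakes; Y: (n, q) response variables such as biomarkers.
    Patterns are linear combinations of the standardized X whose fitted values
    explain as much of the total variation in the standardized Y as possible.
    """
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)
    Ys = (Y - Y.mean(axis=0)) / Y.std(axis=0)
    B, *_ = np.linalg.lstsq(Xs, Ys, rcond=None)      # (p, q) OLS coefficients
    Y_hat = Xs @ B
    eigval, eigvec = np.linalg.eigh(Y_hat.T @ Y_hat)
    order = np.argsort(eigval)[::-1][:n_patterns]
    W = eigvec[:, order]                             # (q, k) response weights
    weights = B @ W                                  # (p, k) food-group weights
    scores = Xs @ weights                            # (n, k) pattern scores
    explained = eigval[order] / (Ys ** 2).sum()      # share of total response variation
    return weights, scores, explained

# Hypothetical data: 5 food groups, 2 biomarkers driven mainly by groups 0 and 1
rng = np.random.default_rng(3)
n = 1000
foods = rng.normal(size=(n, 5))
biomarkers = np.column_stack([
    foods[:, 0] + 0.5 * foods[:, 1] + rng.normal(0, 1, n),
    0.8 * foods[:, 0] + 0.6 * foods[:, 1] + rng.normal(0, 1, n),
])
weights, scores, explained = reduced_rank_regression(foods, biomarkers)
print("food-group weights, pattern 1:", weights[:, 0].round(2))
print("share of biomarker variation explained:", explained.round(2))
```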

Experimental Protocol: Diagnosing Collinearity in a Nutritional Epidemiological Analysis

This protocol provides a step-by-step guide for assessing collinearity in a standard nutritional cohort study analyzing the relationship between nutrient intakes and a health outcome.

1. Hypothesis: Investigate the association between intakes of Nutrient A, Nutrient B, and Nutrient C with the risk of Disease X, while controlling for key confounders like age, sex, and energy intake.

2. Software and Data Preparation:

- Software: R, SAS, SPSS, or Stata.

- Data: A dataset with continuous variables for nutrient intakes (e.g., from Food Frequency Questionnaires), the health outcome, and confounders. Ensure nutrients are adjusted for total energy intake using a preferred method (e.g., nutrient density or residual method).

3. Step-by-Step Procedure:

Step 1: Run Initial Multivariable Model.

- Fit a regression model (logistic for binary outcomes, Cox for time-to-event) including all nutrients of interest (A, B, C) and confounders.

- Observation: Note if any coefficient estimates are non-significant or have counterintuitive signs despite a strong known biological relationship.

Step 2: Generate Correlation Matrix.

- Calculate pairwise Pearson correlations between Nutrient A, B, and C.

- Diagnostic Threshold: Correlations with an absolute value > 0.7 indicate a potential collinearity problem that requires further investigation.

Step 3: Calculate Variance Inflation Factors (VIFs).

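- Because the VIF depends only on the predictors, compute it from a linear regression that includes the same independent variables as your main model (Nutrients A, B and C plus the confounders). The health outcome does not enter the standard VIF calculation, so it does not matter whether the main model is logistic or Cox. Many statistical packages can also report VIFs directly from the original model.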

- The VIF for the i-th predictor is calculated as

$$\mathrm{VIF}_i = \frac{1}{1 - R_i^2}$$

where $R_i^2$ is the coefficient of determination from a regression of the i-th predictor on all the other predictors.

- Diagnostic Threshold: As per the table in the FAQs, a VIF > 10 indicates severe multicollinearity [1]. A VIF > 5 is often used as a more conservative rule of thumb for concern.

Step 4: Interpret and Act.

- Follow the decision workflow provided in the diagram above. Based on the VIF results and your causal hypothesis (DAG), decide whether to:

- Proceed with the model as is (if VIFs are low, or if adjusting for a confounder is essential).

- Use a dietary pattern approach (PCA, RRR) instead of single nutrients.

- Employ a penalized regression method like Ridge Regression.
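Steps 2 and 3 of the procedure can be sketched with NumPy as follows. The helper names `vif` and `flag_correlated_pairs` are hypothetical, and Nutrients A, B and C are simulated. For brevity the sketch omits the confounders that a real analysis would include alongside the nutrients.

```python
import numpy as np

def vif(X):
    """Variance inflation factor for each column of X (predictors only, no intercept).

    VIF_i = 1 / (1 - R²_i), where R²_i comes from regressing column i on all
    other columns plus an intercept.
    """
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    out = np.empty(p)
    for i in range(p):
        y = X[:, i]
        others = np.column_stack([np.ones(n), np.delete(X, i, axis=1)])
        beta, *_ = np.linalg.lstsq(others, y, rcond=None)
        resid = y - others @ beta
        r2 = 1 - (resid @ resid) / ((y - y.mean()) @ (y - y.mean()))
        out[i] = 1 / (1 - r2)
    return out

def flag_correlated_pairs(X, names, threshold=0.7):
    """List predictor pairs whose absolute Pearson r exceeds the threshold (Step 2)."""
    r = np.corrcoef(X, rowvar=False)
    return [(names[i], names[j], round(float(r[i, j]), 2))
            for i in range(len(names)) for j in range(i + 1, len(names))
            if abs(r[i, j]) > threshold]

# Hypothetical nutrients: B closely tracks A, C is unrelated
rng = np.random.default_rng(4)
n = 1000
a = rng.normal(size=n)
b = 0.95 * a + rng.normal(0, 0.3, n)
c = rng.normal(size=n)
X = np.column_stack([a, b, c])

pairs = flag_correlated_pairs(X, ["A", "B", "C"])
vifs = vif(X)
print("highly correlated pairs:", pairs)
print("VIFs:", vifs.round(1))
```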

FAQs: Addressing Core Conceptual Challenges

Q1: What is collinearity in dietary research and why is it a problem? Collinearity occurs when two or more dietary components in a regression model are highly correlated, making it difficult to isolate their individual effects on a health outcome. For example, people who eat more dietary fiber often also have a higher vitamin E intake. In one 2025 study, vitamin E was found to mediate over 85% of fiber's association with cognitive function [7]. This interdependence distorts statistical results, leading to unreliable estimates of effect sizes and significance, and can obscure true biological relationships.

Q2: How can I experimentally disentangle synergistic effects from simple additive effects? True synergy is defined as a combined effect that exceeds the expected additive effect of individual components [8]. To test for this, researchers use specific pharmacological models and statistical approaches. You must first define the expected additive effect using a reference model (e.g., Bliss Independence or Loewe Additivity). Subsequently, experimentally measured effects of the combination are compared against this predicted additive value. A statistically significant excess indicates synergy [9] [8].

Q3: What are the practical implications of nutrient collinearity for designing interventions? Collinearity implies that population-level "one-size-fits-all" dietary guidelines may be suboptimal. For instance, a 2025 study on older adults revealed a J-shaped relationship between dietary fiber and cognitive function, with benefits plateauing after 22-30 grams per day [7]. This suggests that recommendations must account for such non-linear thresholds and interacting factors, moving towards precision nutrition that considers an individual's unique biochemical, genetic, and microbiome profile [10].

Q4: Which statistical methods are most robust for analyzing correlated dietary patterns? Clustering algorithms are a powerful tool. A 2022 study used k-means clustering to group individual foods and Partitioning Around Medoids (PAM) to categorize entire meals based on their nutritional content and food group composition [11]. This "generic meal" approach reduces data complexity by analyzing meals as cohesive units, which can more accurately reflect real-world eating patterns and help mitigate collinearity issues between single nutrients.

Troubleshooting Guides: Common Experimental Pitfalls

Issue: Inconsistent Results in Replicating Nutrient Synergy

Potential Causes and Solutions:

- Cause 1: Inaccurate control for the expected additive effect.

- Solution: Consistently apply and report a validated synergy model (e.g., Bliss Independence for agents with independent mechanisms, Loewe Additivity for agents with similar mechanisms) [8]. Justify your model choice.

- Cause 2: Uncontrolled ratios and dosing of interacting components.

- Solution: Synergy is highly dependent on the concentration/dose ratio of the components [8]. Conduct preliminary dose-response curves for each agent alone to identify appropriate ratio ranges for combination studies.

- Cause 3: Patient variability in genetics, microbiome, or metabolism.

Issue: High Collinearity Between Key Nutrients of Interest Inflates Statistical Variance

Potential Causes and Solutions:

- Cause 1: Studying nutrients that are naturally co-located in common foods.

- Solution: Instead of analyzing isolated nutrients, adopt a meal-based or dietary pattern approach. The "generic meal" method can characterize commonly consumed meal types, incorporating portions and nutritional content to create a more holistic exposure variable [11].

- Cause 2: Insufficient variation in the population's diet.

- Solution: Ensure your cohort includes individuals with diverse dietary habits. In analysis, consider using Principal Component Analysis (PCA) to create composite scores of dietary patterns, or employ ridge regression, which is designed to handle correlated variables.

Quantitative Data Tables

Table 1: Non-Linear Thresholds in Dietary Fiber Intake and Cognitive Outcomes

Data sourced from a cross-sectional study of 2,713 older adults (NHANES 2011-2014) [7]

| Cognitive Test | Inflection Point (g/day) | Association Below Threshold (β, 95% CI) | Association Above Threshold (β, 95% CI) |
|---|---|---|---|
| DSST (Processing Speed) | 29.65 | 0.18 (0.01, 0.26), P<0.0001 | -0.15 (-0.29, -0.02), P=0.0265 |
| Global Composite Z-Score | 22.65 | 0.01 (0.00, 0.01), P=0.0004 | -0.00 (-0.01, 0.00), P=0.9043 |

Table 2: Hazard Ratios for Cognitive Impairment from Combined Lifestyle Factors

Data from a cohort of 18,909 older Chinese adults followed for 5.27 years [12]

| Lifestyle Combination | APOE ε4 Carriers HR (95% CI) | APOE ε4 Non-Carriers HR (95% CI) |
|---|---|---|
| High Total Activity + Healthy Diet | 0.65 (0.60, 0.71) | 0.65 (0.60, 0.71) |
| High Physical Activity + Healthy Diet | 0.72 (0.66, 0.78) | 0.72 (0.66, 0.78) |
| High Cognitive Activity + Healthy Diet | 0.73 (0.67, 0.79) | 0.73 (0.67, 0.79) |
| High PA + High CA + Healthy Diet | 0.46 (0.28, 0.76) | 0.47 (0.37, 0.58) |

Experimental Protocols

Protocol 1: Clustering Meal Patterns to Reduce Collinearity

Objective: To identify commonly consumed meal patterns for use as exposure variables, minimizing collinearity from analyzing nutrients in isolation [11].

Methodology:

- Data Collection: Collect detailed dietary data using a 4-day weighed food diary.

- Food Grouping: Classify all food items into standardized food groups.

- Meal Characterization: For each meal, calculate its Nutrient Rich Foods (NRF9.3) index score and record the food groups it contains.

- Clustering: Apply the Partitioning Around Medoids (PAM) clustering algorithm to group similar meals based on their NRF score and food group composition.

- Portion Sizes: Define a set of standard portion sizes for each resulting "generic meal" cluster.

- Validation: Estimate mean daily nutrient intakes using the generic meal data and compare them to intakes calculated from the original data to assess accuracy.
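The clustering step can be sketched under several stated assumptions. Each meal is described by a hypothetical NRF9.3 score and by 0/1 indicators for a few food groups. Dissimilarity is a Gower-style average, which is an assumed choice and not necessarily the metric used in [11]. PAM is implemented directly, with its BUILD and SWAP phases, on the precomputed distance matrix. The meal data and the choice of k = 2 are hypothetical. In practice, the number of clusters is chosen with criteria such as silhouette widths.

```python
import numpy as np

def gower_distance(nrf, groups):
    """Gower-style dissimilarity between meals (hypothetical choice of metric).

    nrf: (n,) NRF9.3 scores, compared as range-scaled absolute differences.
    groups: (n, g) 0/1 food-group indicators, compared by simple mismatch.
    The n x n result averages the 1 + g per-feature dissimilarities.
    """
    nrf = np.asarray(nrf, dtype=float)
    groups = np.asarray(groups, dtype=float)
    d_nrf = np.abs(nrf[:, None] - nrf[None, :]) / (nrf.max() - nrf.min())
    d_grp = np.abs(groups[:, None, :] - groups[None, :, :]).sum(axis=2)
    return (d_nrf + d_grp) / (1 + groups.shape[1])

def pam(D, k, max_iter=100):
    """Partitioning Around Medoids on a precomputed distance matrix (BUILD + SWAP)."""
    n = D.shape[0]
    # BUILD: most central object first, then greedily add the object that
    # most reduces the total distance to the nearest medoid
    medoids = [int(np.argmin(D.sum(axis=1)))]
    while len(medoids) < k:
        nearest = D[:, medoids].min(axis=1)
        gains = [-1.0 if c in medoids else np.maximum(nearest - D[:, c], 0).sum()
                 for c in range(n)]
        medoids.append(int(np.argmax(gains)))
    cost = D[:, medoids].min(axis=1).sum()
    # SWAP: make the single best medoid/non-medoid exchange until none helps
    for _ in range(max_iter):
        best_cost, best_swap = cost, None
        for i in range(k):
            for cand in range(n):
                if cand in medoids:
                    continue
                trial = medoids.copy()
                trial[i] = cand
                trial_cost = D[:, trial].min(axis=1).sum()
                if trial_cost < best_cost - 1e-12:
                    best_cost, best_swap = trial_cost, (i, cand)
        if best_swap is None:
            break
        cost = best_cost
        medoids[best_swap[0]] = best_swap[1]
    labels = np.argmin(D[:, medoids], axis=1)
    return np.array(medoids), labels, cost

# Hypothetical meals; food-group columns: cereal, dairy, fruit, meat, refined grain
rng = np.random.default_rng(5)
breakfast_like = np.array([1, 1, 1, 0, 0])
fast_food_like = np.array([0, 0, 0, 1, 1])
groups = np.vstack([np.tile(breakfast_like, (20, 1)), np.tile(fast_food_like, (20, 1))])
flip = rng.random(groups.shape) < 0.05                 # a little noise in composition
groups = np.where(flip, 1 - groups, groups)
nrf = np.concatenate([rng.normal(60, 8, 20), rng.normal(15, 8, 20)])
D = gower_distance(nrf, groups)
medoids, labels, cost = pam(D, k=2)
print("generic-meal medoids:", medoids)
print("cluster labels:", labels)
```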

Protocol 2: Testing for Synergy Using Bliss Independence Model

Objective: To determine whether the combined effect of two nutrients (A and B) is greater than the effect expected if each acted independently, which the Bliss Independence model classifies as synergistic [8].

Methodology:

- Dose-Response Curves: Conduct experiments to establish full dose-response curves for Nutrient A and Nutrient B individually.

- Define Expected Additive Effect (E_AB): Using the Bliss Independence model, calculate the expected effect of the combination as:

$$E_{AB} = E_A + E_B - E_A E_B$$

where $E_A$ and $E_B$ are the fractional effects (0 to 1) of each nutrient alone.

- Combination Experiment: Measure the actual effect of the Nutrient A+B combination at a specific ratio.

- Statistical Comparison: Compare the experimentally observed effect to the predicted E_AB using an appropriate statistical test (e.g., t-test).

- Interpretation: If the observed effect is significantly greater than E_AB, the interaction is classified as synergistic.
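The Bliss calculation and a simple comparison can be sketched as below. The single-agent effects and the replicate measurements are hypothetical. The one-sample t statistic treats the predicted E_AB as a fixed value, which ignores the uncertainty in E_A and E_B. With 5 degrees of freedom, the one-sided 5% critical value of t is about 2.015.

```python
import math
import statistics

def bliss_expected(e_a, e_b):
    """Expected fractional effect (0 to 1) of A+B under Bliss independence."""
    return e_a + e_b - e_a * e_b

def bliss_excess_t(observed, e_a, e_b):
    """One-sample t statistic for observed A+B effects against the Bliss prediction.

    Simplification: e_a and e_b are treated as known constants; in practice their
    own uncertainty (from the single-agent dose-response data) should be propagated.
    """
    expected = bliss_expected(e_a, e_b)
    n = len(observed)
    se = statistics.stdev(observed) / math.sqrt(n)
    t = (statistics.mean(observed) - expected) / se
    return expected, t, n - 1

# Hypothetical single-agent effects and replicate combination measurements
e_a, e_b = 0.30, 0.40
observed = [0.70, 0.72, 0.68, 0.75, 0.71, 0.69]
expected, t, df = bliss_excess_t(observed, e_a, e_b)
print(f"Bliss expected effect: {expected:.2f}")   # 0.30 + 0.40 - 0.12 = 0.58
print(f"t = {t:.2f} on {df} df")
```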

Signaling Pathways and Workflows

Diagram Title: Mediated Pathway of Dietary Fiber and Cognition

Diagram Title: Meal Clustering Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Dietary Interaction Research

| Item | Function / Application |
|---|---|
| 24-Hour Dietary Recall | A structured interview method to quantify all foods and beverages consumed by a participant in the previous 24 hours. It is a standard tool for dietary assessment in studies like NHANES [7]. |
| Automated Multiple-Pass Method (AMPM) | A validated, five-step computerized methodology used by USDA to conduct 24-hour recalls, designed to enhance completeness and accuracy of dietary data [7]. |
| Digit Symbol Substitution Test (DSST) | A neuropsychological test from the NHANES battery that assesses processing speed, executive function, and sustained attention. It is a common outcome measure in nutrition-cognition studies [7]. |
| Partitioning Around Medoids (PAM) Algorithm | A robust clustering algorithm used to categorize complex meal data into distinct "generic meal" groups based on their nutritional content and food group composition, mitigating collinearity [11]. |
| Simplified Healthy Eating Index (SHE-index) | A scoring system based on the frequency of consumption of key food groups (e.g., fruits, vegetables, fish) and avoidance of others (e.g., sugar), used to define overall diet quality in cohort studies [12]. |
| Bliss Independence Model | A reference model used in pharmacology and nutrition to calculate the expected additive effect of two or more compounds, serving as the benchmark for identifying synergistic interactions [8]. |

Troubleshooting Guides

Guide 1: Diagnosing Multicollinearity in Dietary Data

Problem: Unstable coefficient estimates and inflated standard errors are observed in a regression model linking nutrient intake from an FFQ to a health outcome.

Explanation: Multicollinearity occurs when two or more predictor variables (e.g., food items or nutrient intakes) in a model are highly correlated. In dietary data, this is common because people consume foods in combinations (e.g., people who eat bread often also eat butter). This high intercorrelation makes it difficult for the model to estimate the independent effect of each food or nutrient [13].

Solution Steps:

- Calculate Variance Inflation Factors (VIFs): For each predictor variable in your regression model, compute the VIF. A common threshold for concern is a VIF > 2.5, which indicates the variance of a coefficient is inflated by 150% due to correlations with other predictors [14].

- Examine Correlation Matrices: Create a matrix of correlation coefficients between all food items or nutrient intakes. Look for pairs or groups with very high correlations (e.g., |r| > 0.8) [15].

- Investigate the Source: Determine if high VIFs are caused by:

- Structurally correlated food items or nutrients (e.g., whole milk and saturated fat).

- Inclusion of interaction terms or polynomial terms (e.g., sodium and sodium^2).

- A categorical variable represented by multiple dummy indicators (e.g., season_of_recall) [14].
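The structural collinearity created by polynomial terms is easy to demonstrate. For a positive-valued intake, a variable and its square are almost perfectly correlated. The sketch below uses simulated, hypothetical sodium intakes. It shows that centering the variable before squaring, a common check, removes most of this correlation. With only two predictors, each VIF equals 1 / (1 - r²).

```python
import numpy as np

rng = np.random.default_rng(6)
sodium = rng.normal(3.4, 0.8, 5000)          # hypothetical sodium intake, g/day

raw_r = np.corrcoef(sodium, sodium ** 2)[0, 1]
centered = sodium - sodium.mean()
centered_r = np.corrcoef(centered, centered ** 2)[0, 1]

# With only two predictors, each VIF is 1 / (1 - r**2)
raw_vif = 1 / (1 - raw_r ** 2)
centered_vif = 1 / (1 - centered_r ** 2)
print(f"raw:      r = {raw_r:.3f}, VIF = {raw_vif:.1f}")
print(f"centered: r = {centered_r:.3f}, VIF = {centered_vif:.2f}")
```

Centering leaves the fitted values and the coefficient on the squared term unchanged. The high raw VIF therefore reflects how the terms are coded, not a problem with the data.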

Guide 2: Addressing Multicollinearity in Dietary Pattern Analysis

Problem: A researcher wants to identify distinct dietary patterns from an FFQ without the patterns being obscured by the inherent correlations between food items.

Explanation: Traditional regression struggles with highly correlated food data. Data reduction techniques are better suited for this task, as they are designed to handle intercorrelated variables and transform them into a new, smaller set of uncorrelated variables (dietary patterns) [13] [3].

Solution Steps:

- Apply Principal Component Analysis (PCA) or Factor Analysis: These are the most common methods. They derive dietary patterns (components) that are linear combinations of the original food items, with each successive component capturing the maximum remaining variance and being uncorrelated with the others [13] [16].

- Use Treelet Transform (TT): This emerging method combines PCA with cluster analysis, which can yield more interpretable patterns, especially when food groups are highly correlated in a hierarchical manner [13] [3].

- Consider Compositional Data Analysis (CODA): If your dietary data are "closed" (e.g., percentages of total energy intake), CODA is appropriate. It transforms intake data into log-ratios, effectively handling the multicollinearity inherent in compositional data [13] [3].
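The log-ratio idea can be sketched with isometric log-ratio (pivot) coordinates, one common CODA transformation. The sources above do not specify which log-ratio transformation to use. Because energy shares sum to 1, the raw shares plus an intercept are perfectly collinear. Mapping D parts to D - 1 unconstrained coordinates avoids this. The Dirichlet-simulated shares are hypothetical.

```python
import numpy as np

def pivot_ilr(composition):
    """Isometric log-ratio (pivot) coordinates: D positive parts -> D-1 columns.

    Column i contrasts part i with the geometric mean of parts i+1..D-1 (0-based).
    """
    comp = np.asarray(composition, dtype=float)
    comp = comp / comp.sum(axis=1, keepdims=True)      # close each row to 1
    D = comp.shape[1]
    coords = []
    for i in range(D - 1):
        log_gmean_rest = np.log(comp[:, i + 1:]).mean(axis=1)
        scale = np.sqrt((D - i - 1) / (D - i))
        coords.append(scale * (np.log(comp[:, i]) - log_gmean_rest))
    return np.column_stack(coords)

# Hypothetical energy shares from carbohydrate, protein and fat (rows sum to 1)
rng = np.random.default_rng(7)
shares = rng.dirichlet([10, 4, 7], size=300)
raw_design = np.column_stack([np.ones(300), shares])
ilr_design = np.column_stack([np.ones(300), pivot_ilr(shares)])
print("raw shares: rank", np.linalg.matrix_rank(raw_design), "of", raw_design.shape[1], "columns")
print("ilr coords: rank", np.linalg.matrix_rank(ilr_design), "of", ilr_design.shape[1], "columns")
```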

Guide 3: Resolving Multicollinearity in Food Origin Traceability Models

Problem: In spectroscopic analysis of foods (e.g., for origin traceability), multicollinearity between thousands of adjacent spectral wavelengths degrades model accuracy and stability.

Explanation: Spectral data contains massive redundancy, as measurements at nearby wavelengths are often nearly identical. This severe multicollinearity can overwhelm classification models like PLS-DA [15].

Solution Steps:

- Implement a Variable Selection Method: Use a strategy like Multicollinearity Reduction-based Variable Selection (MR-based VS). This method selects a subset of spectral variables based on two criteria [15]:

- Inter-class significant difference: The variables must differ significantly across the categories of interest (e.g., different geographic origins).

- Intra-class correlation evaluation: Among the discriminatory variables, it selects those with the lowest mutual correlation, thereby reducing multicollinearity.

- Validate the Reduced Model: Combine the selected, low-collinearity variables with your classification model (e.g., PLS-DA or ULDA) and confirm that classification accuracy improves compared to the full-spectrum model [15].
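The two selection criteria can be approximated as in the sketch below. This is a hypothetical, simplified illustration in the spirit of MR-based VS, not the published algorithm from [15]. Stage 1 keeps variables whose one-way ANOVA F statistic across origins exceeds an assumed cutoff. Stage 2 greedily keeps the most discriminating variables whose absolute correlation with those already selected stays below an assumed threshold. The "spectra" are simulated blocks of near-identical adjacent wavelengths.

```python
import numpy as np

def anova_f(x, y):
    """One-way ANOVA F statistic of a single variable x across class labels y."""
    classes = np.unique(y)
    grand = x.mean()
    ss_between = sum((y == c).sum() * (x[y == c].mean() - grand) ** 2 for c in classes)
    ss_within = sum(((x[y == c] - x[y == c].mean()) ** 2).sum() for c in classes)
    df_b, df_w = len(classes) - 1, len(x) - len(classes)
    return (ss_between / df_b) / (ss_within / df_w)

def select_low_collinearity(X, y, f_min=10.0, r_max=0.8):
    """Hypothetical two-stage selection: discriminating first, then non-redundant."""
    f = np.array([anova_f(X[:, j], y) for j in range(X.shape[1])])
    candidates = [j for j in np.argsort(f)[::-1] if f[j] >= f_min]   # stage 1
    corr = np.abs(np.corrcoef(X, rowvar=False))
    selected = []
    for j in candidates:                                             # stage 2
        if all(corr[j, s] < r_max for s in selected):
            selected.append(int(j))
    return selected

# Hypothetical spectra: 3 origins, 5 near-identical wavelengths per underlying signal
rng = np.random.default_rng(8)
y = np.repeat([0, 1, 2], 40)
peak1 = y * 1.0 + rng.normal(0, 0.5, 120)           # origin-dependent signal
peak2 = (y == 1) * 1.5 + rng.normal(0, 0.5, 120)    # a different origin-dependent signal
noise = rng.normal(0, 1, 120)                       # non-discriminating signal
X = np.column_stack([s + rng.normal(0, 0.05, 120)
                     for s in (peak1, peak2, noise) for _ in range(5)])
selected = select_low_collinearity(X, y)
print("selected wavelength indices:", selected)
```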

Frequently Asked Questions (FAQs)

FAQ 1: My VIFs are high for several control variables (e.g., total energy and physical activity level), but the VIF for my main variable of interest (e.g., vitamin D intake) is low. Is this a problem?

Answer: No, this scenario can often be safely ignored. Multicollinearity is primarily a problem for the variables that are themselves highly correlated. If your main variable of interest has a low VIF, it indicates that its effect can be reliably estimated despite the correlations among your control variables. The control variables can still effectively perform their function of accounting for confounding [14].

FAQ 2: The VIFs for my interaction term (e.g., 'sodium intake * age group') and its main effects are very high. Should I remove the interaction?

Answer: Not necessarily. High VIFs are an inherent property of models with interaction or polynomial terms, and the statistical significance (p-value) of the highest-order term (the interaction itself) is not affected by this multicollinearity. You can proceed with the model. Because an interaction with a multi-level age group consists of several product terms, use an overall test (e.g., a likelihood ratio test) to assess their joint significance [14].
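A likelihood ratio test for a multi-level interaction can be sketched for a Gaussian linear model. In that case the statistic reduces to n · ln(RSS_reduced / RSS_full). Everything here is hypothetical: the outcome (systolic blood pressure), the three age groups and the effect sizes are simulated. With three age groups the interaction adds two product terms, so the statistic is compared with a chi-square distribution on 2 degrees of freedom, whose survival function is exp(-x/2). The sketch also shows that a product term is highly correlated with its own main effect, which is where the high VIFs come from. For logistic or Cox models, use the likelihood ratio test provided by your statistical software.

```python
import numpy as np

def lr_statistic(X_reduced, X_full, y):
    """Likelihood ratio statistic for nested Gaussian linear models: n * ln(RSS0 / RSS1)."""
    def rss(X):
        beta, *_ = np.linalg.lstsq(X, y, rcond=None)
        resid = y - X @ beta
        return resid @ resid
    return len(y) * np.log(rss(X_reduced) / rss(X_full))

# Hypothetical data: systolic blood pressure, sodium intake (g/day), 3 age groups
rng = np.random.default_rng(9)
n = 600
sodium = rng.normal(3.4, 0.8, n)
age_group = rng.integers(0, 3, n)
d1 = (age_group == 1).astype(float)
d2 = (age_group == 2).astype(float)
sbp = 120 + 2 * sodium + 3 * d1 + 6 * d2 + 4 * sodium * d2 + rng.normal(0, 5, n)

main_effects = np.column_stack([np.ones(n), sodium, d1, d2])
with_interaction = np.column_stack([main_effects, sodium * d1, sodium * d2])

lr = lr_statistic(main_effects, with_interaction, sbp)
p_value = np.exp(-lr / 2)   # chi-square survival function; this closed form holds only for df = 2
r_product = np.corrcoef(sodium * d2, d2)[0, 1]
print(f"corr(sodium*d2, d2) = {r_product:.2f}")
print(f"LR = {lr:.1f} on 2 df, p = {p_value:.2g}")
```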

FAQ 3: Can I use a Food Frequency Questionnaire (FFQ) even though dietary components are known to be correlated?

Answer: Yes, FFQs are a standard and valid tool in nutritional epidemiology, precisely because data reduction techniques like Principal Component Analysis (PCA) and Factor Analysis are designed to handle these correlations. These methods have demonstrated reasonable reproducibility and validity in deriving meaningful dietary patterns from FFQ data, despite multicollinearity [13] [16] [17].

FAQ 4: How can I validate that my method for handling multicollinearity (e.g., deriving dietary patterns) is effective?

Answer: Use a multi-method validation approach. For dietary patterns, this involves:

- Reproducibility: Administer the same FFQ to the same subjects at two time points and check the consistency of the derived patterns [16] [17].

- Validity: Compare the patterns and their correlations with health outcomes against those derived from more detailed dietary records [16] [18] or against biomarkers of nutrient intake (e.g., serum 25(OH)D for vitamin D), which are not subject to the same measurement errors as self-reported data [19] [20].

Experimental Protocols

Protocol 1: Validating an FFQ Using the Method of Triads

Purpose: To assess the validity of a nutrient intake estimate from an FFQ while accounting for measurement error. This is done using two additional methods whose errors are assumed to be independent of each other and of the FFQ's errors [19] [20].

Materials:

- Research Reagent Solutions:

- Self-Administered FFQ: A questionnaire listing vitamin D-rich food sources and fortified products specific to the study population [19] [20].

- 7-Day Weighed Food Record (7d-FR): A detailed dietary record used as one reference method [19] [18].

- Biomarker Assay Kit: A validated kit for measuring serum 25-hydroxyvitamin D (25(OH)D) concentration, an objective biomarker of vitamin D status [19] [20].

- Sun Exposure Questionnaire (SEQ): A tool to quantify participants' sun exposure, a major confounding factor for vitamin D status [19] [20].

Procedure:

- Recruitment: Enroll a sample of at least 50-100 participants representative of the target population [19].

- Data Collection:

- Administer the newly developed FFQ to assess habitual vitamin D intake.

- Simultaneously, collect a 7-day weighed food record (7d-FR) as a detailed dietary reference.

- Collect a blood sample from each participant to measure serum 25(OH)D concentration.

- Administer a Sun Exposure Questionnaire (SEQ) to control for endogenous vitamin D synthesis.

- Statistical Analysis - Method of Triads:

- Calculate the correlation coefficients between each pair of the three methods (FFQ & 7d-FR, FFQ & Biomarker, 7d-FR & Biomarker).

- The validity coefficient (ρ) for the FFQ is estimated using the formula:

$$\rho_{\mathrm{FFQ}} = \sqrt{\frac{r_{\mathrm{FFQ,7dFR}} \, r_{\mathrm{FFQ,Biomarker}}}{r_{\mathrm{7dFR,Biomarker}}}}$$

where $r$ is the correlation coefficient between two methods [19].

- A validity coefficient close to 1.0 indicates high validity for the FFQ.
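The triads formula can be computed as below, together with the analogous validity coefficients for the reference record and the biomarker. The correlations in the example are hypothetical. Because of sampling error the estimates can exceed 1 (a so-called Heywood case). They are undefined when any of the three correlations is zero or negative, which the sketch guards against.

```python
import math

def triads_validity(r_q_r, r_q_m, r_r_m):
    """Method-of-triads validity coefficients against unobserved true intake.

    r_q_r: FFQ vs reference record; r_q_m: FFQ vs biomarker;
    r_r_m: reference record vs biomarker. All must be positive.
    Returns (rho_FFQ, rho_record, rho_biomarker).
    """
    if min(r_q_r, r_q_m, r_r_m) <= 0:
        raise ValueError("method of triads needs three positive correlations")
    rho_q = math.sqrt(r_q_r * r_q_m / r_r_m)
    rho_r = math.sqrt(r_q_r * r_r_m / r_q_m)
    rho_m = math.sqrt(r_q_m * r_r_m / r_q_r)
    return rho_q, rho_r, rho_m

# Hypothetical pairwise correlations for vitamin D intake
rho_ffq, rho_record, rho_biomarker = triads_validity(r_q_r=0.40, r_q_m=0.30, r_r_m=0.35)
print(f"FFQ validity coefficient: {rho_ffq:.2f}")   # sqrt(0.40 * 0.30 / 0.35) = 0.59
```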

Protocol 2: Applying PCA to Derive Dietary Patterns from an FFQ

Purpose: To reduce a large set of correlated food items from an FFQ into a smaller number of uncorrelated dietary patterns for analysis against health outcomes [13] [16].

Materials:

- Research Reagent Solutions:

- Cleaned FFQ Dataset: Data from a validated FFQ, with implausible intakes removed.

- Food Grouping Scheme: A pre-defined nutritional or culinary scheme for aggregating individual food items into logical groups (e.g., "red meat," "whole grains," "leafy green vegetables") [13] [16].

- Statistical Software: Software capable of PCA (e.g., R, SAS, Stata, SPSS).

Procedure:

- Data Preprocessing: Group individual food items from the FFQ into meaningful food groups to reduce the number of variables and mitigate noise.

- Perform PCA: Apply PCA to the correlation matrix of the food group intakes. This generates a set of principal components (linear combinations of the food groups) that are orthogonal (uncorrelated).

- Determine Components: Decide how many components to retain based on:

- Eigenvalue > 1 rule: Retain components with an eigenvalue greater than one.

- Scree plot: Retain components before the plot levels off.

- Interpretability: Retain a number of components that are nutritionally meaningful and explain a sufficient amount of variance (e.g., 70-80%) [13].

- Interpret Patterns: Rotate the retained components (often using Varimax rotation) to simplify interpretation. Name each dietary pattern based on the food groups with the highest absolute factor loadings (e.g., "Prudent Pattern" for high loadings on vegetables, fruits, whole grains; "Western Pattern" for high loadings on red meat, processed foods, refined grains) [13] [16].
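The procedure above can be sketched in NumPy. It runs PCA on the correlation matrix, applies the eigenvalue > 1 rule for retention, and then uses a standard SVD-based varimax rotation. The food-group intakes are simulated from two hypothetical latent eating styles with equal strength. The two leading eigenvalues are therefore nearly tied and the unrotated components can mix them, which is where rotation helps. Published analyses usually rely on established routines (for example, PROC FACTOR in SAS or equivalent functions in R), and this sketch does not reproduce them exactly.

```python
import numpy as np

def varimax(loadings, max_iter=100, tol=1e-6):
    """Kaiser varimax rotation of a (p, k) loading matrix (SVD-based algorithm)."""
    p, k = loadings.shape
    R = np.eye(k)
    crit = 0.0
    for _ in range(max_iter):
        L = loadings @ R
        target = L ** 3 - L @ np.diag((L ** 2).sum(axis=0)) / p
        u, s, vt = np.linalg.svd(loadings.T @ target)
        R = u @ vt
        if crit > 0 and s.sum() < crit * (1 + tol):
            break
        crit = s.sum()
    return loadings @ R

def pca_patterns(intakes, n_components=None):
    """PCA on the correlation matrix of food-group intakes, then varimax rotation.

    If n_components is None, the eigenvalue > 1 rule is applied.
    """
    Z = (intakes - intakes.mean(axis=0)) / intakes.std(axis=0)
    eigval, eigvec = np.linalg.eigh(np.corrcoef(Z, rowvar=False))
    order = np.argsort(eigval)[::-1]
    eigval, eigvec = eigval[order], eigvec[:, order]
    if n_components is None:
        n_components = int((eigval > 1).sum())
    loadings = eigvec[:, :n_components] * np.sqrt(eigval[:n_components])
    rotated = varimax(loadings)
    # pattern scores: least-squares solution of Z ~ scores @ rotated.T
    scores = Z @ rotated @ np.linalg.inv(rotated.T @ rotated)
    return eigval, rotated, scores

# Hypothetical intakes driven by two latent eating styles
names = ["vegetables", "fruit", "whole_grains", "red_meat", "processed_meat", "refined_grains"]
rng = np.random.default_rng(10)
n = 1000
latent = rng.normal(size=(n, 2))
mixing = np.array([[1, 0], [1, 0], [1, 0], [0, 1], [0, 1], [0, 1]], dtype=float)
intakes = latent @ mixing.T + rng.normal(0, 0.6, (n, 6))

eigval, rotated, scores = pca_patterns(intakes)
print("eigenvalues:", eigval.round(2))
for j in range(rotated.shape[1]):
    top = np.argsort(np.abs(rotated[:, j]))[::-1][:3]
    print(f"pattern {j + 1}:", [names[i] for i in top])
```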

Data Presentation

Table 1: Correlation Coefficients from FFQ Validation Studies

This table summarizes typical correlation coefficients observed when validating FFQs against other dietary assessment methods, providing a benchmark for researchers.

| Nutrient / Food Group | vs. Dietary Records (Crude) | vs. Dietary Records (Energy-Adjusted) | vs. Biomarkers | Notes | Source |
|---|---|---|---|---|---|
| Protein | 0.55 | - | - | Moderate validity | [18] |
| Carbohydrates | 0.27 | - | - | Low validity | [18] |
| Fruits | - | - | - | Overestimated by 56.3% | [18] |
| Vegetables | - | - | - | Overestimated by 82.8% | [18] |
| Vitamin D (FFQ vs 7d-FR) | - | 0.36 (R) | - | Compared to 7-day record | [19] |
| Vitamin D (FFQ vs Biomarker) | - | 0.56 (R) | - | Superior prediction ability | [19] |
| Prudent Dietary Pattern | 0.70 (ICC) | 0.45 - 0.74 (vs records) | - | Reasonable reproducibility & validity | [16] |
| Western Dietary Pattern | 0.67 (ICC) | 0.45 - 0.74 (vs records) | - | Reasonable reproducibility & validity | [16] |

Table 2: Statistical Methods for Dietary Pattern Analysis to Address Multicollinearity

This table compares different statistical approaches used to handle multicollinearity in dietary data analysis.

| Method | Category | Key Principle | Advantages | Disadvantages / Considerations | Source |
|---|---|---|---|---|---|
| Principal Component Analysis (PCA) | Data-driven | Extracts uncorrelated components that explain maximum variance. | Most common method; creates orthogonal patterns. | Patterns can be difficult to interpret; results are study-specific. | [13] [3] |
| Factor Analysis | Data-driven | Similar to PCA, models common factors shared across food groups. | Similar to PCA. | Similar to PCA. | [13] |
| Reduced Rank Regression (RRR) | Hybrid | Extracts patterns that explain maximum variation in intermediate response variables (e.g., nutrients). | Incorporates biological pathways; good for hypothesis testing. | Requires pre-specified response variables. | [13] [3] |
| Clustering Analysis | Data-driven | Groups individuals into mutually exclusive clusters with similar diets. | Easy to interpret (person-centered approach). | Does not reduce dimensionality of food variables. | [13] |
| Treelet Transform (TT) | Data-driven | Combines PCA and clustering in a one-step process. | Can yield more interpretable patterns with hierarchical data. | Emerging method; less established. | [13] [3] |
| Compositional Data Analysis (CODA) | Compositional | Transforms intake data into log-ratios to account for closed data. | Correctly handles relative nature of dietary data. | Complex interpretation; requires specific expertise. | [13] [3] |

Troubleshooting Guide: Is Multicollinearity Affecting My Model?

Multicollinearity can silently undermine your regression analysis. Use this guide to diagnose and correct it.

| Symptom | Possible Cause | Diagnostic Check | Immediate Action |

|---|---|---|---|

| A statistically significant overall model (e.g., F-test) has no statistically significant predictors [21] | Inflated standard errors due to shared variance among predictors making it hard to detect individual effects [22] [21] | Calculate Variance Inflation Factors (VIFs) for each predictor [22] | Check for and consider removing highly correlated variables (e.g., both BMI and body fat percentage) |

| Coefficient estimates are counter-intuitive or have signs opposite to those expected [22] | Unstable coefficient estimates caused by overlapping predictor information, making estimates highly sensitive to minor data changes [22] [21] | Examine the stability of coefficient estimates when adding/removing other predictors or a few data points | Avoid interpreting regression coefficients in isolation; use the model for prediction only with caution |

| Coefficient estimates change dramatically with the addition or removal of a variable [21] | Unstable estimates where the effect of one predictor is confused with the effect of another, correlated predictor [21] | Check pairwise correlations between the unstable predictor and others in the model [22] | If the collinearity comes from interaction or polynomial terms, center the predictors (subtract their means); otherwise consider regularization techniques such as ridge regression [21] |
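Centering only helps with structural collinearity, such as that between a predictor and its own square or an interaction term. It does not remove correlation between two distinct nutrients. The following is a minimal NumPy sketch using a hypothetical fibre intake variable (all values positive) to show how centering before squaring removes most of the correlation between x and x².

```python
import numpy as np

rng = np.random.default_rng(42)
# Hypothetical fibre intakes (g/day); all values are positive.
fibre = rng.uniform(10, 40, size=5000)

# Raw predictor and its square, as in a quadratic model term.
raw_corr = np.corrcoef(fibre, fibre ** 2)[0, 1]

# Center first, then square.
centred = fibre - fibre.mean()
centred_corr = np.corrcoef(centred, centred ** 2)[0, 1]

print(f"corr(x, x^2): raw = {raw_corr:.3f}, centred = {centred_corr:.3f}")
```

For a symmetric distribution like this one, the centered correlation is close to zero. For skewed intakes, some correlation remains after centering.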

FAQ: Navigating Multicollinearity in Dietary Research

What is the Variance Inflation Factor (VIF), and how is it calculated?

The Variance Inflation Factor (VIF) quantifies how much the variance of a regression coefficient is inflated due to multicollinearity [21]. It is calculated for each predictor variable (i) using the formula:

VIF = 1 / (1 - R²ᵢ) [22] [21]

Here, R²ᵢ is the R-squared value obtained by regressing the i-th predictor variable on all the other predictor variables in the model. This R² measures how much of the variance in one predictor is explained by the others [22].

- VIF = 1: No multicollinearity [22] [21].

- VIF > 5: Represents a potentially problematic amount of multicollinearity [22].

- VIF > 10: Signals serious multicollinearity, indicating that over 90% of the variance in that predictor is shared with others [21].
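The formula and thresholds above can be expressed directly in code. This small sketch converts between an auxiliary-regression R²ᵢ and VIFᵢ, then labels a VIF using the rule-of-thumb cut-offs of 5 and 10. The function names are illustrative, and the cut-offs are conventions rather than hard limits.

```python
def vif_from_r2(r2):
    """VIF_i = 1 / (1 - R^2_i) for an auxiliary-regression R^2 in [0, 1)."""
    if not 0.0 <= r2 < 1.0:
        raise ValueError("R^2 must lie in [0, 1)")
    return 1.0 / (1.0 - r2)


def shared_variance(vif):
    """Invert the formula: R^2_i = 1 - 1 / VIF_i, the share of variance explained by other predictors."""
    if vif < 1.0:
        raise ValueError("VIF cannot be below 1")
    return 1.0 - 1.0 / vif


def vif_severity(vif):
    """Label a VIF with the rule-of-thumb thresholds (5 and 10)."""
    if vif <= 1.0:
        return "none"
    if vif <= 5.0:
        return "moderate"
    if vif <= 10.0:
        return "problematic"
    return "severe"


for r2 in (0.0, 0.5, 0.85, 0.95):
    v = vif_from_r2(r2)
    print(f"R^2 = {r2:.2f} -> VIF = {v:.1f} ({vif_severity(v)})")
```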

Why can't I just look at correlation coefficients between my predictors?

Pairwise correlations are a good first check but are insufficient because they only assess the relationship between two variables [22]. Multicollinearity can be a multivariate phenomenon where one predictor is explained by a combination of several other predictors, even if no single pairwise correlation is high [22]. VIFs are better because they use multiple regression to detect this more complex correlation structure [22].
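A small simulation makes the multivariate case concrete. In this sketch, a hypothetical fifth "total" variable is almost the sum of four independent nutrients. No pairwise correlation exceeds about 0.5, yet every VIF is above 10. The sketch uses the standard identity that VIFs are the diagonal elements of the inverse correlation matrix. All variables are simulated, not real intake data.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 2000
# Hypothetical standardized intakes: four independent nutrients plus a
# fifth variable that is (almost) their sum, e.g. a total built from parts.
parts = rng.normal(size=(n, 4))
total = parts.sum(axis=1) + rng.normal(scale=0.3, size=n)
X = np.column_stack([parts, total])

corr = np.corrcoef(X, rowvar=False)
max_pairwise = np.max(np.abs(corr[np.triu_indices(5, k=1)]))

# VIF_i equals the i-th diagonal element of the inverse correlation matrix.
vifs = np.diag(np.linalg.inv(corr))

print(f"largest pairwise |r|: {max_pairwise:.2f}")
print("VIFs:", np.round(vifs, 1))
```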

My goal is prediction, not interpreting coefficients. Do I need to worry about high VIF?

If your only goal is prediction and the correlation structure among your predictors is stable in new data, high VIF may not ruin your predictive accuracy [21]. However, if you care about understanding which specific dietary components drive the outcome, or if the correlations in your training data are not representative, multicollinearity remains a serious problem that compromises the interpretation of your model [21].
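The distinction can be checked by bootstrap. In this sketch, a hypothetical energy variable is nearly a copy of fat. The fat coefficient varies widely across resamples. Predictions for a new person who follows the usual fat–energy relationship remain stable, while predictions for someone who breaks that relationship (high fat, low energy) are as unstable as the coefficients. All data are simulated.

```python
import numpy as np

rng = np.random.default_rng(7)
n = 300
# Hypothetical standardized exposures: energy is almost a copy of fat.
fat = rng.normal(size=n)
energy = fat + rng.normal(scale=0.1, size=n)
y = 0.5 * fat + 0.5 * energy + rng.normal(size=n)
X = np.column_stack([np.ones(n), fat, energy])

on_pattern = np.array([1.0, 1.0, 1.0])    # follows the training correlation
off_pattern = np.array([1.0, 1.0, -1.0])  # breaks it: high fat, low energy

fat_coefs, pred_on, pred_off = [], [], []
for _ in range(500):
    idx = rng.integers(0, n, size=n)  # bootstrap resample of participants
    beta, *_ = np.linalg.lstsq(X[idx], y[idx], rcond=None)
    fat_coefs.append(beta[1])
    pred_on.append(on_pattern @ beta)
    pred_off.append(off_pattern @ beta)

coef_sd = np.std(fat_coefs)
on_sd = np.std(pred_on)
off_sd = np.std(pred_off)
print(f"SD of fat coefficient:        {coef_sd:.2f}")
print(f"SD of on-pattern prediction:  {on_sd:.2f}")
print(f"SD of off-pattern prediction: {off_sd:.2f}")
```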

What are the practical consequences for my dietary component analysis?

In dietary research, where nutrients are often highly correlated, multicollinearity can lead to:

- Unreliable Insights: Making it difficult to determine if calories, fat, or sugar intake is the true driver of a health outcome [23].

- Wasted Resources: Inflated standard errors reduce statistical power, so a study may fail to detect a real and important effect of a specific nutrient, leading to a Type II error (false negative) [24].

- Reduced Generalizability: Models with unstable coefficients may not perform well when applied to new populations with slightly different dietary patterns [24].

Experimental Protocol: Detecting and Addressing Multicollinearity

Follow this step-by-step protocol to diagnose and mitigate multicollinearity in your datasets.

Step 1: Calculate Variance Inflation Factors (VIFs)

- Fit your multiple regression model with all predictors.

- For each predictor i in the model, run an auxiliary regression where predictor i is the dependent variable and all other predictors are the independent variables.

- Obtain the R-squared (R²ᵢ) value from each of these auxiliary regressions.

- Calculate VIF for each predictor using: VIFᵢ = 1 / (1 - R²ᵢ) [22] [21].
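Step 1 can be sketched in NumPy as follows. The function below is a hypothetical helper, not a library API. For each predictor it runs an auxiliary ordinary least squares (OLS) regression with an intercept on all other predictors, takes R²ᵢ, and returns 1 / (1 − R²ᵢ). Established packages, such as statsmodels' `variance_inflation_factor`, perform the same calculation. The example intakes are simulated.

```python
import numpy as np


def compute_vifs(X):
    """VIF for each column of X (n observations x p predictors).

    Regresses predictor i on all other predictors (with an intercept),
    takes R^2_i, and returns 1 / (1 - R^2_i).
    """
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    vifs = np.empty(p)
    for i in range(p):
        target = X[:, i]
        others = np.delete(X, i, axis=1)
        design = np.column_stack([np.ones(n), others])  # auxiliary regression
        coef, *_ = np.linalg.lstsq(design, target, rcond=None)
        resid = target - design @ coef
        r2 = 1.0 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        vifs[i] = 1.0 / (1.0 - r2)
    return vifs


# Hypothetical intakes: vitamin C tracks fibre closely; sodium is unrelated.
rng = np.random.default_rng(0)
fibre = rng.normal(25, 6, size=500)
vit_c = 3 * fibre + rng.normal(0, 10, size=500)
sodium = rng.normal(3000, 500, size=500)
print(np.round(compute_vifs(np.column_stack([fibre, vit_c, sodium])), 2))
```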

Step 2: Interpret VIF Values

Use the thresholds in the table below to assess the severity of multicollinearity for each variable [22] [21].

| VIF Value | Degree of Multicollinearity | Implied Shared Variance | Recommended Action |

|---|---|---|---|

| VIF = 1 | None | 0% | No action needed. |

| 1 < VIF ≤ 5 | Moderate | 0-80% | Monitor; may be acceptable depending on context. |

| 5 < VIF ≤ 10 | Problematic | 80-90% | Strongly consider mitigation strategies [22]. |

| VIF > 10 | Severe | > 90% | Requires correction [21]. |

Step 3: Apply Mitigation Strategies

- Remove Variables: If two variables measure the same underlying construct (e.g., different metrics for "fruit intake"), remove one based on theoretical justification [21].

- Create Composite Variables: Combine highly correlated predictors into a single index or score (e.g., a "Western Dietary Pattern" score) [21].

- Use Regularization: Apply techniques like Ridge Regression, which introduces a penalty term to shrink coefficients and reduce variance [21].

- Center Predictors: For models with interaction terms, centering variables (subtracting the mean) can help reduce multicollinearity [21].
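The regularization strategy can be illustrated with the closed-form ridge estimator. This minimal sketch assumes standardized predictors and a centered outcome, so the intercept is not penalized. It solves (Z'Z + αI)b = Z'y. Setting α = 0 reproduces OLS; larger α shrinks the coefficients of the near-duplicate fat and energy variables. The data and the penalty value are hypothetical; in practice, α is usually chosen by cross-validation.

```python
import numpy as np


def standardize(X):
    """Scale each column to mean 0 and SD 1."""
    return (X - X.mean(axis=0)) / X.std(axis=0)


def ridge_coefficients(X, y, alpha):
    """Closed-form ridge estimate on standardized predictors and centred outcome."""
    Z = standardize(X)
    yc = y - y.mean()
    p = Z.shape[1]
    return np.linalg.solve(Z.T @ Z + alpha * np.eye(p), Z.T @ yc)


rng = np.random.default_rng(3)
n = 200
fat = rng.normal(70, 15, size=n)               # hypothetical g/day
energy = 25 * fat + rng.normal(0, 40, size=n)  # hypothetical kcal/day, tied to fat
y = 0.02 * fat + 0.001 * energy + rng.normal(0, 1, size=n)
X = np.column_stack([fat, energy])

ols_coef = ridge_coefficients(X, y, alpha=0.0)
ridge_coef = ridge_coefficients(X, y, alpha=50.0)  # hypothetical penalty
print("OLS:  ", np.round(ols_coef, 3))
print("Ridge:", np.round(ridge_coef, 3))
```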

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Analysis |

|---|---|

| VIF Calculation | Diagnoses the severity of multicollinearity by quantifying the inflation of a coefficient's variance [22] [21]. |

| Pairwise Correlation Matrix | An initial diagnostic tool to identify highly correlated pairs of predictor variables [22]. |

| Ridge Regression | A regularization technique that stabilizes coefficient estimates and reduces variance by introducing a penalty term, useful when prediction is the goal [21]. |

| Principal Component Analysis (PCA) | A dimensionality reduction technique that creates a new set of uncorrelated variables (principal components) from the original predictors, effectively eliminating multicollinearity [21]. |

| Dietary Species Richness (DSR) | A metric used in nutritional epidemiology to quantify food biodiversity, which can help avoid using multiple highly correlated food item variables [23]. |

Figure: Experimental workflow for diagnosing and addressing multicollinearity in research.

Figure: How multicollinearity inflates the variance of regression coefficients.

Frequently Asked Questions (FAQs)

FAQ 1: What is collinearity and why is it a specific problem in nutritional research? Collinearity, or multicollinearity, occurs when two or more predictor variables in a statistical model are highly correlated. In nutritional research, this is a fundamental challenge because people consume foods, not isolated nutrients. These foods contain multiple nutrients that are often consumed together (e.g., fat and calories in a rich diet, or fiber and certain vitamins in plant-based foods) [25]. This high correlation makes it statistically difficult to isolate the independent effect of a single nutrient on a health outcome, potentially obscuring the true diet-disease relationship [25].

FAQ 2: What are the practical consequences if I ignore collinearity in my analysis? Ignoring collinearity can lead to unstable and unreliable statistical models. The risks include:

- Inflated Variances: The standard errors for the coefficients of collinear variables become very large, reducing the statistical power of your test [25].

- Unreliable Estimates: The calculated effect (e.g., Hazard Ratio, Odds Ratio) of a specific nutrient can become extremely sensitive to minor changes in the model or dataset, making your results difficult to interpret and replicate [25].

- Misleading Conclusions: You may incorrectly conclude that a nutrient has no significant effect on disease risk when it actually does, or vice-versa.

FAQ 3: What are the established methods to detect and manage collinearity? Researchers have developed several strategies to address this issue. The following table summarizes the key approaches, their applications, and important limitations based on case studies.

Table 1: Methodologies for Addressing Collinearity in Nutritional Studies

| Method | Application Example | Key Findings/Limitations |

|---|---|---|

| Excluding Collinear Variables | A case-control study on colon cancer risk explored the relationship between fat and caloric intake [25]. | The perceived risk associated with fat was highly sensitive to whether calories were included or excluded from the model, demonstrating how this method can force a choice between related but distinct factors [25]. |

| Energy-Adjustment (Residual Method) | A prospective cohort study on carbohydrates and cancer adjusted for total energy intake using the residual method to isolate the effect of carbohydrate composition independent of total calories consumed [26]. | This method helps to "purge" the effect of total energy, allowing the examination of nutrient composition. It is a standard technique for handling the correlation between a nutrient and total energy intake [26]. |

| Advanced Regression Techniques (Ridge Regression) | The same colon cancer case-control study evaluated ridge regression as a solution [25]. | While specialized methods like ridge regression can stabilize coefficient estimates, the authors noted that the results remained sensitive to the underlying statistical assumptions [25]. |

| Machine Learning (XGBoost) | A study on Type 2 Diabetes (T2D) predictors used the XGBoost algorithm, which incorporates regularization (L1/L2) to handle multicollinearity among predictors like diet and lifestyle factors [27]. | This technique can manage high-dimensional, correlated data by penalizing complex models, reducing overfitting, and identifying the most robust predictors, such as age and BMI, even when other factors are correlated [27]. |

FAQ 4: Can you provide a real-world example where collinearity caused confusion? A classic example comes from a case-control study of colon cancer conducted in Utah. Researchers found that the apparent risk associated with dietary fat was entirely dependent on how they handled its collinearity with total caloric intake in their statistical models. Depending on the analytical method chosen, fat could appear to be a significant risk factor or have no association at all, highlighting how collinearity can lead to conflicting findings in the literature [25].

FAQ 5: How does study design contribute to collinearity problems? The design of nutritional studies can introduce collinearity. For example, in a large cross-sectional cohort study (n=25,970) examining climate-friendly diets and micronutrient intake, researchers noted that many key micronutrients (like iron, zinc, and vitamin B-12) are often found together in animal-source foods [28]. When participants reduce their intake of this food group, the intake of all these nutrients decreases simultaneously, creating a collinear block of variables that is hard to disentangle in observational analyses [28].

Experimental Protocols for Managing Collinearity

Protocol 1: Energy-Adjustment of Nutrients Using the Residual Method

This protocol is used to examine the effect of a specific nutrient independent of an individual's total caloric intake.

- Data Collection: Collect dietary intake data using validated methods (e.g., 24-hour recalls, food diaries, or FFQs) to estimate daily intake of the target nutrient and total energy [26].

- Model Fitting: Fit a linear regression model with the target nutrient intake (e.g., grams of carbohydrate) as the dependent variable and total energy intake (kcal) as the independent variable.

- Calculate Residuals: Obtain the residuals from the model fitted in the previous step. These residuals represent the variation in nutrient intake that is not explained by total energy intake.

- Use in Analysis: Use these energy-adjusted residuals as the exposure variable in your main disease risk model (e.g., a Cox proportional hazards model) [26].
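The residual method above can be sketched in a few lines of NumPy. Adding back a constant is a common convention, assumed here rather than taken from the protocol. The constant is usually the predicted intake at mean energy, which for OLS with an intercept equals the mean nutrient intake. This keeps adjusted values on a g/day scale without changing their correlation with anything else. The function name and simulated data are hypothetical.

```python
import numpy as np


def energy_adjusted(nutrient, energy, add_constant=True):
    """Residual-method energy adjustment.

    Regress nutrient intake on total energy and keep the residuals;
    optionally add back the mean nutrient intake (the predicted intake
    at mean energy for OLS with an intercept).
    """
    nutrient = np.asarray(nutrient, dtype=float)
    energy = np.asarray(energy, dtype=float)
    design = np.column_stack([np.ones_like(energy), energy])
    coef, *_ = np.linalg.lstsq(design, nutrient, rcond=None)
    residuals = nutrient - design @ coef
    if add_constant:
        residuals = residuals + nutrient.mean()
    return residuals


rng = np.random.default_rng(0)
energy_kcal = rng.normal(2100, 450, size=1000)              # hypothetical
carb_g = 0.12 * energy_kcal + rng.normal(0, 25, size=1000)  # hypothetical
carb_adj = energy_adjusted(carb_g, energy_kcal)
print(f"corr with energy: crude = {np.corrcoef(carb_g, energy_kcal)[0, 1]:.2f}, "
      f"adjusted = {np.corrcoef(carb_adj, energy_kcal)[0, 1]:.2e}")
```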

Protocol 2: Applying Machine Learning (XGBoost) to Identify Robust Predictors

This protocol uses the XGBoost algorithm to handle collinear predictors and identify those with the strongest relationship to the outcome.

- Data Preparation: Compile a dataset including the outcome (e.g., T2D history) and a wide range of potential predictors (dietary nutrients, lifestyle factors, anthropometrics, socioeconomics) [27].

- Propensity Score Matching (Optional): To reduce confounding by lifestyle, groups with different dietary patterns (e.g., high vs. low animal-sourced food intake) can be matched on key confounders like age, gender, BMI, and physical activity [27].

- Model Training & Tuning: Train the XGBoost classifier on the data. Optimize hyperparameters, including the L1 (lasso) and L2 (ridge) regularization parameters, which help mitigate the effect of multicollinearity by penalizing model complexity [27].

- Feature Importance Analysis: Derive and interpret feature importance scores from the trained model using Shapley (SHAP) plots to identify the top predictors of the disease outcome, such as age, BMI, and specific nutrient ratios [27].

Figure: Statistical decision pathway for collinearity.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Analytical Tools for Nutritional Cohort Studies

| Item | Function in Research |

|---|---|

| Validated Food Frequency Questionnaire (FFQ) / 24-Hour Recall | A core tool for assessing habitual dietary intake over a defined period. It translates food consumption into nutrient intake data using a food composition database [28] [26]. |

| Food Composition Database (e.g., PC-KOST2-93, UK Nutritional Database) | A database containing the nutritional profiles of thousands of food items. It is used to calculate the intake of specific nutrients, energy, and other dietary components from the reported food consumption [28] [26]. |

| Biomarker Assay Kits (e.g., for Vitamin D, Selenium, Folate) | Provides an objective measure of nutrient status, complementing self-reported intake data and helping to account for issues of bioavailability and absorption [28]. |

| Statistical Software with Advanced Regression Modules (e.g., R, Python, Stata) | Essential for performing complex statistical analyses, including energy-adjustment, calculating variance inflation factors (VIF), running ridge regression, and implementing machine learning algorithms like XGBoost [27] [25]. |

| Machine Learning Libraries (e.g., XGBoost in Python/R) | Software libraries that provide implementations of advanced algorithms capable of handling high-dimensional, collinear data and providing robust feature importance rankings [27]. |

Statistical Approaches for Collinear Dietary Data: From Traditional to Emerging Methods

Frequently Asked Questions (FAQs)

FAQ 1: Why are dimension reduction techniques like PCA necessary in dietary pattern analysis? Traditional methods that analyze individual foods or nutrients in isolation often fail to capture the complex interactions and synergies within a whole diet. Dietary components are frequently consumed in combination and can be highly correlated, a problem known as multicollinearity. PCA helps overcome this by creating new, uncorrelated variables (principal components) that represent overarching dietary patterns, providing a more holistic view of diet and its relationship with health outcomes [29] [30].

FAQ 2: My PCA results are difficult to interpret. What is the biological meaning of a principal component? Principal components are mathematical constructs designed to capture maximum variance in the data; they are not inherently biologically meaningful [31]. Interpretation relies on the researcher examining the factor loadings—the correlations between the original food items and the component. For example, a component with high positive loadings for fruits, vegetables, and whole grains might be labeled a "Prudent" pattern, while one with high loadings for processed meats and refined grains might be labeled a "Western" pattern [32] [33]. The context of your study and existing nutritional knowledge are essential for meaningful interpretation.

FAQ 3: How do I decide the number of components to retain in my analysis? There is no single definitive rule, and the decision should be guided by a combination of statistical and interpretability criteria [32]. Common approaches include:

- Kaiser's Criterion: Retaining components with eigenvalues greater than 1 [32].

- Scree Plot: Looking for a "break" or "elbow" in the plot of eigenvalues, retaining components before the plot flattens out.

- Variance Explained: Retaining enough components to capture a satisfactory amount of the total variance in the data (e.g., 70-80%). In practice, many nutritional studies retain components that explain at least 5% of the variance each [33].

- Interpretability: The final component structure should be logically and nutritionally interpretable.
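The three numeric rules above can be applied to the eigenvalues of the food-group correlation matrix with a short helper. In this sketch, the 75% cumulative target is a hypothetical choice within the 70-80% range, and the eigenvalues are invented for illustration. Interpretability still has to be judged by the researcher.

```python
import numpy as np


def retention_summary(eigenvalues, cum_target=0.75, min_each=0.05):
    """Count components retained under three common rules of thumb."""
    ev = np.sort(np.asarray(eigenvalues, dtype=float))[::-1]  # largest first
    share = ev / ev.sum()                # proportion of variance per component
    cumulative = np.cumsum(share)
    n_cum = int(np.searchsorted(cumulative, cum_target) + 1)
    return {
        "kaiser": int(np.sum(ev > 1.0)),        # eigenvalue > 1
        "cumulative": min(n_cum, len(ev)),      # reach the cumulative target
        "min_each": int(np.sum(share >= min_each)),  # each explains >= 5%
    }


# Hypothetical eigenvalues for 7 standardized food groups (they sum to 7).
summary = retention_summary([2.6, 1.6, 1.1, 0.7, 0.5, 0.3, 0.2])
print(summary)
```

The rules can disagree, as they do here. This is one reason the decision is usually combined with scree-plot inspection and interpretability.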

FAQ 4: I've detected severe multicollinearity in my dietary data. Should I proceed with PCA? Yes, PCA is one of the recommended methods to mitigate multicollinearity [30]. Since PCA transforms your original correlated variables into a set of uncorrelated principal components, the multicollinearity problem is eliminated in the new component space. This makes PCA an excellent pre-processing step for regression analyses, as the resulting component scores can be used as independent, non-collinear predictors [30].
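A short simulation shows why component scores can be used as non-collinear predictors. In this sketch, four hypothetical food groups share a common latent "prudent" factor and are therefore strongly intercorrelated. After PCA on their correlation matrix, the component scores have an identity correlation matrix (up to rounding), and the outcome can be regressed on the retained score. The data and the choice to retain one component are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(11)
n = 1000
prudent = rng.normal(size=(n, 1))  # hypothetical latent pattern
X = prudent @ np.array([[1.0, 0.9, 0.8, 0.2]]) + rng.normal(scale=0.4, size=(n, 4))
y = 0.5 * prudent[:, 0] + rng.normal(size=n)  # hypothetical outcome

Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)  # standardize food groups
R = np.corrcoef(Z, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(R)
order = np.argsort(eigvals)[::-1]                 # largest eigenvalue first
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

scores = Z @ eigvecs                              # component scores
score_corr = np.corrcoef(scores, rowvar=False)    # identity up to rounding

k = 1  # hypothetical: retain the first component
design = np.column_stack([np.ones(n), scores[:, :k]])
beta, *_ = np.linalg.lstsq(design, y, rcond=None)
print(np.round(score_corr, 6))
print("coefficient for component 1:", round(float(beta[1]), 3))
```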

FAQ 5: What is the key practical difference between using PCA and Factor Analysis (FA) for dietary pattern derivation? While both are data-driven techniques, their primary goals differ slightly, leading to different outcomes.

- PCA is a dimensionality reduction technique. Its goal is to explain the maximum possible variance in the original variables by creating new composite variables. It does not distinguish between common variance and unique (error) variance [29] [31].

- FA is a latent variable modeling technique. Its goal is to explain the covariances or correlations among the original variables by identifying underlying, unobservable "factors." It explicitly models the common variance shared among variables, separating it from unique variance [29]. In practice, the dietary patterns derived from both methods are often similar, but FA may provide a more refined model of the underlying dietary constructs.

Troubleshooting Common Experimental Issues

Problem: Unstable or Non-Reproducible Component Loadings

- Symptoms: Factor loadings change drastically with small changes in the dataset or when using different sub-samples.

- Potential Causes & Solutions:

- Cause 1: Inadequate sample size. A large number of observations relative to the number of food variables is needed for stable results.

- Solution: Ensure your sample size is sufficient. Rules of thumb vary, but a minimum of 10 observations per variable is often suggested.

- Cause 2: Incorrect handling of outliers. Outliers can disproportionately influence the variance and distort the component structure.

- Cause 3: Not accounting for energy intake. Absolute food intakes are often highly correlated with total energy intake.

- Solution: Adjust food intake for total energy using a preferred method (e.g., density method or residual method) before conducting PCA [32].


Problem: Low Total Variance Explained by Retained Components

- Symptoms: The first several components explain only a small fraction (e.g., <30%) of the total variance in the dietary data.

- Potential Causes & Solutions:

- Cause 1: Highly diverse and uncorrelated dietary behaviors in the population. If foods are not consumed in consistent patterns, it is difficult for PCA to find strong common components.

- Solution: Consider if your population is too heterogeneous. Stratified analysis by relevant subgroups (e.g., sex, ethnicity) may yield more coherent patterns [32].

- Cause 2: Poor grouping of individual food items. Using too many fine-grained food groups can introduce noise.

- Solution: Revisit your food grouping scheme. Combine similar foods into logically meaningful groups to strengthen correlations and improve pattern detection [32].


Problem: Component Scores are Weakly Associated with Health Outcomes

- Symptoms: In subsequent regression analysis, the dietary pattern scores show no statistically significant association with the health outcome of interest (e.g., BMI, disease incidence).

- Potential Causes & Solutions:

- Cause 1: The derived patterns are not causally related to the outcome.

- Solution: This may be a true finding. Re-evaluate your hypothesis and consider other dietary pattern techniques.

- Cause 2: Inadequate control for confounding variables.

- Cause 3: Loss of information due to over-aggressive dimension reduction.

- Solution: Retain a larger number of components in the initial PCA to ensure you are not discarding a pattern that is weakly represented in variance but biologically relevant.


Standard Experimental Protocol for PCA in Dietary Studies

The following table summarizes a standard protocol for deriving dietary patterns using PCA, based on common practices in the nutritional epidemiology literature [32] [33].

Table 1: Standard Protocol for Dietary Pattern Derivation using PCA

| Step | Action | Rationale & Technical Notes |

|---|---|---|

| 1. Data Preprocessing | Convert individual food intake data into pre-defined food groups. | Reduces computational complexity and noise. Groups should be based on nutritional similarity and culinary use. A typical study might use 30-50 food groups [32]. |

| 2. Energy Adjustment | Adjust intake of each food group for total energy intake. | Removes the effect of overall calorie consumption, allowing patterns to reflect food choice independent of quantity. The nutrient density method (g/1000 kcal) is commonly used. |

| 3. Standardization | Standardize the energy-adjusted food group intakes (mean=0, SD=1). | Prevents variables with larger natural ranges (e.g., beverages) from dominating the analysis simply due to their scale [34] [31]. |

| 4. Run PCA | Perform PCA on the correlation matrix of standardized food groups. | The correlation matrix is used because the data are standardized. The analysis extracts components (eigenvectors) and their associated variances (eigenvalues). |

| 5. Determine Retention | Decide the number of components to retain. | Use a combination of the eigenvalue >1 criterion, scree plot inspection, and interpretability [32]. |

| 6. Rotation | Apply Varimax rotation to the retained components. | Rotation simplifies the component structure, maximizing high loadings and minimizing low ones, which aids interpretation. Varimax is an orthogonal rotation that keeps components uncorrelated [32]. |

| 7. Interpretation | Interpret patterns based on factor loadings. | Food groups with absolute loadings above a threshold (e.g., 0.2 or 0.3) are considered important contributors. Name the pattern based on the high-loading foods (e.g., "Western," "Prudent") [32] [33]. |

| 8. Score Calculation | Calculate dietary pattern scores for each participant. | Scores represent each individual's adherence to each pattern. Regression-based methods are often used to calculate standardized scores. |
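Steps 2-4, 7, and 8 of the protocol table can be sketched as one NumPy function. It uses the density method (g/1000 kcal), standardization, and PCA on the correlation matrix. Loadings are computed as eigenvector × √eigenvalue, which equals the correlation between each food group and the component. Scores are computed as Z·v / √λ; for unrotated PCA, this equals the regression-method score. Rotation (step 6) is omitted for brevity. The function name, the |0.3| threshold, and the simulated data are assumptions.

```python
import numpy as np


def derive_patterns(food_grams, energy_kcal, n_keep, threshold=0.3):
    """Sketch of PCA-based dietary pattern derivation (no rotation)."""
    # Step 2: nutrient density method, grams per 1000 kcal.
    density = food_grams / (energy_kcal[:, None] / 1000.0)
    # Step 3: standardize each food group (mean 0, SD 1).
    Z = (density - density.mean(axis=0)) / density.std(axis=0, ddof=1)
    # Step 4: PCA on the correlation matrix; keep the largest components.
    R = np.corrcoef(Z, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(R)
    order = np.argsort(eigvals)[::-1][:n_keep]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # Loadings: correlation between each food group and each component.
    loadings = eigvecs * np.sqrt(eigvals)
    # Step 7: flag food groups whose absolute loading reaches the threshold.
    salient = np.abs(loadings) >= threshold
    # Step 8: standardized pattern scores, one column per retained pattern.
    scores = Z @ eigvecs / np.sqrt(eigvals)
    return loadings, salient, scores


# Hypothetical data: 400 participants, 5 food groups sharing a common factor.
rng = np.random.default_rng(5)
energy = np.clip(rng.normal(2000, 300, size=400), 800, None)
base = rng.normal(size=(400, 1))
foods = np.abs(100 + 30 * base @ np.ones((1, 5)) + 20 * rng.normal(size=(400, 5)))
foods = foods * energy[:, None] / 2000
L, S, F = derive_patterns(foods, energy, n_keep=2)
print(np.round(L, 2))
```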

Figure: Workflow for the PCA dietary pattern derivation protocol.

The Scientist's Toolkit: Key Reagents & Materials

Table 2: Essential "Research Reagents" for Dietary Pattern Analysis

| Item / Concept | Function / Definition in the Analysis |

|---|---|

| Food Frequency Questionnaire (FFQ) | The primary data collection tool that captures habitual intake of foods and beverages over a specified period. Its design and validity are foundational. |

| Food Grouping System | A predefined schema for aggregating individual food items into nutritionally and culturally meaningful groups (e.g., "whole grains," "processed meats," "leafy green vegetables") [32]. |

| Correlation Matrix | The square matrix showing pairwise correlations between all standardized food group variables. It is the input for the PCA [31]. |

| Eigenvalue | A scalar value that indicates the amount of variance captured by each principal component. Components with larger eigenvalues explain more of the total variance [31]. |

| Eigenvector | A vector that defines the direction of the principal component. The loadings of the original variables on the component are derived from the eigenvector [31]. |

| Factor Loadings | The correlations between the original food group variables and the principal component. They are the primary basis for interpreting the dietary pattern [32]. |

| Varimax Rotation | An orthogonal rotation method that simplifies the component structure, aiding interpretation by making high loadings higher and low loadings lower [32]. |

| Dietary Pattern Score | A numerical value for each individual, quantifying their adherence to the identified dietary pattern. Used as an exposure variable in subsequent health outcome analyses [33]. |

Decision Pathway for Addressing Collinearity in Dietary Analysis

When facing correlated dietary data, a decision pathway can guide methodological choices, positioning PCA as a key solution within a broader set of options [35] [30].

Advanced Technical Notes

Handling Non-Normal Data: While PCA is based on linear algebra and does not require strict normality, extreme deviations from normality can distort results. If your dietary data are highly non-normal, consider log-transformation before standardization, or explore the Semi-parametric Gaussian Copula Graphical Model (SGCGM), a semi-parametric approach mentioned in recent methodological reviews [29].

Beyond PCA - Network Analysis: An emerging alternative to PCA is dietary network analysis (e.g., Gaussian Graphical Models). Instead of reducing dimensions, this approach maps the web of conditional dependencies between individual foods, potentially revealing more complex interaction structures [29]. This represents a shift from a "data-driven" to a "relationship-driven" paradigm for understanding dietary patterns.

In nutritional epidemiology, the analysis of diet-disease relationships presents a significant challenge due to the high correlation (collinearity) between different dietary components. Traditional methods that focus on single nutrients can be obscured by these complex interrelationships. Reduced Rank Regression (RRR) is a powerful hybrid method that addresses this issue by identifying dietary patterns that maximally explain the variation in pre-specified intermediate response variables, such as nutrient intakes or disease-related biomarkers. This approach is particularly valuable for deriving disease-specific dietary patterns, making it an essential tool for researchers and drug development professionals investigating the metabolic pathways linking diet to chronic diseases [36] [3] [37].

Core Concepts and Troubleshooting FAQ

What is Reduced Rank Regression (RRR) and how does it address collinearity?

RRR is a hybrid method that combines a priori knowledge with a posteriori data exploration. It identifies linear combinations of predictor variables (food groups) that maximally explain the variation in a set of response variables. These response variables are chosen based on prior knowledge of their role in the disease pathway, effectively breaking the collinearity problem by focusing the pattern extraction on biologically relevant intermediates [36] [3].

- Mechanism: Unlike purely data-driven methods like Principal Component Analysis (PCA), RRR uses response variables to guide the discovery of dietary patterns. This ensures the derived patterns are not only descriptive of consumption habits but are also directly relevant to the disease or health outcome of interest [3] [37].

- Advantage for Collinearity: By projecting both predictors and responses into a common subspace that maximizes their association, RRR handles multicollinear food items effectively, providing more stable and interpretable patterns in the context of the specific disease [36] [37].
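The mechanism described above can be sketched in NumPy. This is an illustrative implementation of RRR with standardized variables and equal weighting of responses, not a specific package's routine. It regresses the responses on the food groups by OLS, takes eigenvectors of the fitted-response cross-product matrix, and maps them back to food-group weights. The resulting pattern scores are mutually uncorrelated. No more patterns can be extracted than there are responses. The function name and the simulated data are hypothetical.

```python
import numpy as np


def reduced_rank_regression(X, Y, n_patterns):
    """RRR pattern extraction sketch: X = food groups, Y = response variables."""
    if n_patterns > Y.shape[1]:
        raise ValueError("cannot extract more patterns than response variables")
    Xs = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    Ys = (Y - Y.mean(axis=0)) / Y.std(axis=0, ddof=1)
    B, *_ = np.linalg.lstsq(Xs, Ys, rcond=None)  # p x q OLS coefficients
    Y_hat = Xs @ B                                # fitted responses
    eigvals, eigvecs = np.linalg.eigh(Y_hat.T @ Y_hat)
    order = np.argsort(eigvals)[::-1][:n_patterns]
    V = eigvecs[:, order]                         # q x k response weights
    weights = B @ V                               # p x k food-group weights
    scores = Xs @ weights                         # dietary pattern scores
    return weights, scores, eigvals[order]


# Hypothetical data: both responses depend on the same two food groups.
rng = np.random.default_rng(2)
Xf = rng.normal(size=(2000, 6))
common = Xf[:, 0] + Xf[:, 1]
Yr = np.column_stack([common + 0.5 * rng.normal(size=2000),
                      common + 0.5 * rng.normal(size=2000)])
W, S, ev = reduced_rank_regression(Xf, Yr, n_patterns=2)
print(np.round(W[:, 0], 2))  # weights concentrate on food groups 0 and 1
```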

How do I select appropriate response variables for an RRR analysis?

Selecting response variables is a critical step, as they determine the disease-specificity of the derived dietary pattern.

- Guideline: Response variables should be meaningful intermediates on the causal pathway between diet and the disease outcome.

- Common Choices:

- Macronutrients: Percentages of energy from protein, carbohydrates, saturated fats, and unsaturated fats are frequently used to explore patterns related to energy metabolism and obesity [36].

- Biomarkers: Physiological markers such as C-reactive protein (CRP) for inflammation or blood lipids for cardiovascular disease can serve as powerful responses [36] [37].

- Nutrient Intakes: Specific micronutrients or other nutrients known to be associated with the disease.

- Troubleshooting Tip: If the resulting patterns are poorly associated with the final disease outcome, re-evaluate the choice of response variables. They may not be sufficiently on the causal pathway.

What are common pitfalls in interpreting RRR results and their relationship to disease?

- Pitfall 1: Confusing explanation with prediction. RRR-derived patterns are optimized to explain variation in the response variables, not to maximize prediction of the final disease outcome.

- Pitfall 2: Misinterpreting directionality. The association between a dietary pattern score and a disease does not necessarily imply causality.

- Troubleshooting Tip: Always validate the association of the derived dietary pattern score with the hard disease endpoint (e.g., incidence of hypertension or diabetes) in a separate analysis or model. Associations with intermediate markers are a useful first check; in one NHANES-based analysis, a pattern high in saturated fat was positively associated with waist circumference and CRP [36].

What is the difference between RRR, PCA, and PLS?

Researchers often need to choose between different pattern derivation methods. The table below compares their key features.

Table: Comparison of Dietary Pattern Derivation Methods

| Feature | Principal Component Analysis (PCA) | Reduced Rank Regression (RRR) | Partial Least Squares (PLS) |
|---|---|---|---|
| Primary Goal | Explains maximum variance in food intake variables [37]. | Explains maximum variance in disease-related response variables [37]. | Explains variance in both food intake and response variables [37]. |
| Pattern Basis | Inter-correlations between foods (data-driven) [37]. | Pre-specified intermediate pathways (hybrid) [36] [3]. | A combination of dietary variance and response variable correlation (hybrid) [37]. |
| Relationship to Disease | May be poorly related to disease risk [37]. | Designed to be more associated with disease risk via responses [37]. | Aims to balance dietary description and disease prediction [37]. |
| Interpretation | Describes actual dietary habits in a population. | Provides biologically plausible, disease-specific patterns. | Similar to RRR but with a slightly different optimization goal. |

Key Experimental Protocols

Protocol 1: Deriving a Macronutrient-Based Dietary Pattern using RRR

This protocol is based on a study that identified dietary patterns associated with markers of metabolic health using NHANES data [36].

- Data Preparation: Collect detailed dietary intake data, preferably through 24-hour dietary recalls or validated Food Frequency Questionnaires (FFQs). Code the data into meaningful food groups.

- Define Response Variables: Calculate the percentages of total energy intake derived from protein, carbohydrates, saturated fats, and unsaturated fats.

- Model Fitting: Apply the RRR model with the food groups as predictors and the four macronutrient percentages as response variables. RRR can extract at most as many patterns as there are response variables (here, four), ordered by how much response variation each explains.

- Pattern Interpretation: Examine the factor loadings for each food group to interpret the patterns. For example, a pattern with high positive loadings on solid fats and processed meats and a high negative loading on carbohydrates might be labeled a "High Saturated Fat" pattern.

- Validation: Investigate the association between the pattern scores and health outcomes (e.g., waist circumference, CRP levels) using multivariate generalized linear models, adjusting for confounders like age, sex, and economic status.
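The fitting and interpretation steps above can be sketched in Python with NumPy. This is a minimal sketch, assuming food-group intakes in an n × p array `X` and the response variables in an n × q array `Y`. It follows a common RRR formulation: standardize both blocks, regress the responses on the food groups by least squares, then take the leading eigenvectors of the fitted responses' cross-product. The helper names (`percent_energy`, `reduced_rank_regression`) are hypothetical, and macronutrient percentages use the Atwater general factors (4 kcal/g for protein and carbohydrate, 9 kcal/g for fat). For published analyses, a validated implementation (for example, SAS PROC PLS with METHOD=RRR) is preferable.

```python
import numpy as np

# Atwater general factors in kcal per gram (assumption: general, not food-specific, factors)
KCAL_PER_G = {"protein": 4.0, "carbohydrate": 4.0, "fat": 9.0}


def percent_energy(grams, total_kcal, nutrient):
    """Percentage of total energy intake supplied by a macronutrient."""
    return 100.0 * KCAL_PER_G[nutrient] * np.asarray(grams, float) / np.asarray(total_kcal, float)


def zscore(a):
    a = np.asarray(a, float)
    return (a - a.mean(axis=0)) / a.std(axis=0, ddof=1)


def reduced_rank_regression(X, Y, n_patterns):
    """Return pattern scores, food-group weights, factor loadings, and the
    proportion of response variation explained by each pattern."""
    Xs, Ys = zscore(X), zscore(Y)
    n = Xs.shape[0]
    # RRR can extract at most as many patterns as there are responses
    n_patterns = min(n_patterns, Ys.shape[1])
    # Least-squares coefficients of each response on all food groups
    B, *_ = np.linalg.lstsq(Xs, Ys, rcond=None)
    Y_hat = Xs @ B
    # Leading eigenvectors of the fitted responses' cross-product
    eigvals, eigvecs = np.linalg.eigh(Y_hat.T @ Y_hat)
    order = np.argsort(eigvals)[::-1][:n_patterns]
    W = B @ eigvecs[:, order]             # food-group weights
    scores = Xs @ W                       # dietary pattern scores (mutually uncorrelated)
    sd = scores.std(axis=0, ddof=1)
    scores, W = scores / sd, W / sd       # rescale to unit-variance scores
    # Factor loadings: correlation of each food group with each pattern score
    loadings = Xs.T @ scores / (n - 1)
    # Correlations of responses with scores give the explained response variation
    r = Ys.T @ scores / (n - 1)
    explained_y = (r ** 2).mean(axis=0)
    return scores, W, loadings, explained_y
```

The returned `loadings` are the factor loadings examined in the interpretation step, and the pattern `scores` are the exposures carried into the validation models.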

Protocol 2: Comparing RRR with PCA and PLS in a Hypertension Study

This protocol is adapted from a study that identified dietary patterns associated with elevated blood pressure in Lebanese men [37].

- Data Collection: Gather dietary data via a culturally appropriate FFQ and measure blood pressure and other covariates.

- Parallel Analysis: Derive dietary patterns using three methods:

- PCA: Apply to the food group intake variables without using blood pressure information.

- RRR: Use nutrients or biomarkers known to be related to hypertension (e.g., sodium, potassium, magnesium) as response variables.

- PLS: Use the same response variables as in RRR.

- Performance Comparison: Compare the performance of the three methods by examining the odds ratios (OR) for elevated blood pressure across tertiles or quartiles of adherence to each dietary pattern. Assess which method yields patterns most strongly associated with the disease outcome.

- Interpretation: Discuss the behavioral relevance (PCA) versus the disease-specificity (RRR) of the identified patterns.
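The performance comparison can be prototyped with a crude odds ratio before fitting full models. The sketch below assumes one pattern score per participant (from PCA, RRR, or PLS) and a binary elevated-blood-pressure indicator. It compares the highest with the lowest quartile using a 2×2 table and a Woolf (log-scale) confidence interval. It is unadjusted, so in a real analysis it would be replaced by logistic regression adjusting for the measured covariates. The function name is hypothetical.

```python
import numpy as np


def crude_or_top_vs_bottom_quartile(score, outcome, z=1.96):
    """Crude odds ratio (highest vs. lowest quartile) with a Woolf 95% CI."""
    score = np.asarray(score, float)
    outcome = np.asarray(outcome, int)
    q1, q3 = np.quantile(score, [0.25, 0.75])
    low, high = score <= q1, score > q3
    a = outcome[high].sum()          # cases, highest quartile
    b = high.sum() - a               # non-cases, highest quartile
    c = outcome[low].sum()           # cases, lowest quartile
    d = low.sum() - c                # non-cases, lowest quartile
    if min(a, b, c, d) == 0:
        raise ValueError("empty cell in the 2x2 table; the crude OR is undefined")
    odds_ratio = (a * d) / (b * c)
    se_log = np.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower, upper = np.exp(np.log(odds_ratio) + np.array([-z, z]) * se_log)
    return odds_ratio, lower, upper
```

Calling the function once per method, with the same outcome vector, gives a first indication of which pattern score separates participants with and without elevated blood pressure most strongly.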

Visualizing the RRR Workflow

The diagram below illustrates the logical flow and key components of a Reduced Rank Regression analysis.

Figure 1: RRR Analysis Workflow

Table: Key Research Reagents and Resources for RRR Analysis

| Item/Resource | Function/Description | Example |
|---|---|---|
| Dietary Assessment Tool | To quantify food and nutrient intake in the study population. | Food Frequency Questionnaire (FFQ), 24-hour dietary recall [36] [37]. |
| Food Composition Database | To convert consumed foods into nutrient intakes. | USDA Food and Nutrient Database for Dietary Studies (FNDDS) [36]. |
| Biomarker Assay Kits | To measure physiological response variables (e.g., inflammation). | High-sensitivity C-reactive protein (hs-CRP) immunoassay [36]. |
| Statistical Software | To perform the complex RRR calculation and subsequent modeling. | R, SAS, or SPSS with appropriate procedures or custom scripts. |
| Theoretical Framework | The established knowledge used to select meaningful response variables. | Scientific literature on diet-disease pathways (e.g., saturated fat → inflammation → CVD). |

The following table summarizes key quantitative results from recent studies employing RRR for dietary pattern analysis.

Table: Summary of Selected RRR Study Findings

| Study & Population | Key Response Variables | Identified Dietary Pattern | Reported Association (β or OR [95% CI]) |
|---|---|---|---|
| NHANES (US Adults) [36] | % energy from protein, carbohydrates, saturated fat, unsaturated fat | High Saturated Fat Pattern | Waist circumference: β (Q5 vs. Q1) = 1.71 [0.97, 2.44]; CRP: β (Q5 vs. Q1) = 0.37 [0.26, 0.47] |
| Lebanese Males [37] | Nutrients related to hypertension | Pattern derived by RRR | Elevated BP: OR (highest vs. lowest quartile) = 2.21 [1.21, 4.03] |
| NHANES (US Adults) [36] | % energy from macronutrients | High Fat, Low Carbohydrate Pattern | Positive association with higher economic status: β (high vs. low) = 0.22 [0.16, 0.28] |

FAQs: Method Selection and Conceptual Foundations

Q1: What is the primary difference between Cluster Analysis (CA) and Finite Mixture Models (FMM) for identifying dietary subgroups?

While both are data-driven methods to uncover latent subgroups, their core approaches differ. Cluster Analysis (CA), including methods like k-means, is an algorithmic, distance-based approach that partitions individuals into mutually exclusive groups based on the similarity of their dietary intake [38]. In contrast, a Finite Mixture Model (FMM) is a model-based, probabilistic approach that assumes the population is a mixture of distinct subpopulations, each with its own probability distribution [3] [39]. FMM does not assign an individual to a single group definitively but calculates a probability of belonging to each subgroup, naturally handling uncertainty in classification [40] [39].
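To make the contrast concrete, the sketch below applies Bayes' rule to a univariate two-component Gaussian mixture whose mixing proportions, means, and standard deviations are assumed known (illustrative values, for example on a standardized intake score). In a real FMM these parameters are estimated, typically by the EM algorithm. The point is only that an individual midway between two subgroups receives a probability of 0.5 for each, whereas a hard, k-means-style assignment forces a single label. The function names are hypothetical.

```python
import numpy as np


def gaussian_pdf(x, mean, sd):
    return np.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * np.sqrt(2 * np.pi))


def posterior_membership(x, weights, means, sds):
    """P(subgroup k | x) for a univariate Gaussian mixture with known parameters."""
    x = np.asarray(x, float)[:, None]                        # shape (n, 1)
    joint = np.asarray(weights) * gaussian_pdf(x, np.asarray(means), np.asarray(sds))
    return joint / joint.sum(axis=1, keepdims=True)          # Bayes' rule; rows sum to 1


post = posterior_membership([0.0, 2.0, 4.0], weights=[0.5, 0.5], means=[0.0, 4.0], sds=[1.0, 1.0])
hard_labels = post.argmax(axis=1)   # the single label a hard assignment would keep
```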

Q2: When should I choose a Finite Mixture Model over traditional Cluster Analysis?

FMM is particularly advantageous in several scenarios:

- When classification uncertainty is high: FMM provides posterior probabilities of group membership, which is valuable when subgroups are not well-separated [40].

- To handle overlapping subgroups: Probabilistic classification in FMM accommodates food items or individuals with characteristics of multiple groups, reducing allocation bias [40].

- For statistical inference: As a model-based approach, FMM allows for the use of information criteria (e.g., AIC, BIC) for objective model selection and can more easily incorporate covariates to understand what drives subgroup membership [39].

Q3: How does the problem of collinearity among dietary components affect these analyses, and how can it be managed?

Collinearity, where dietary components (e.g., nutrients or food groups) are highly correlated, is a common issue in dietary data. It can lead to unstable results and make it difficult to discern the independent role of each dietary component in defining the subgroups [41]. Management strategies include:

- Principal Component Analysis (PCA): As a preprocessing step, PCA can reduce a set of intercorrelated variables into a few uncorrelated principal components, which can then be used as input for CA or FMM [42].

- Variable Selection or Combination: Prior to analysis, carefully combine correlated food items into logical food groups based on nutritional knowledge [3].

- Acknowledgment: At a minimum, investigators should assess and report on the potential for multicollinearity and acknowledge its possible impact on the interpretation of which foods/nutrients define the clusters [41].
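Both the assessment and the PCA preprocessing step can be sketched with NumPy. The functions below are hypothetical helpers and assume a participants × food-groups intake matrix. The first computes variance inflation factors (VIFs), a standard collinearity diagnostic. The second returns uncorrelated principal component scores that could be passed to CA or FMM instead of the raw, intercorrelated variables. Cutoffs for a "high" VIF (values such as 5 or 10 are often quoted) are conventions rather than rules.

```python
import numpy as np


def variance_inflation_factors(X):
    """VIF_j = 1 / (1 - R_j^2), computed as the diagonal of the inverse correlation matrix."""
    R = np.corrcoef(np.asarray(X, float), rowvar=False)
    return np.diag(np.linalg.inv(R))


def pca_scores(X, n_components):
    """Uncorrelated principal component scores of standardized intakes (via SVD)."""
    X = np.asarray(X, float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    _, s, vt = np.linalg.svd(Z, full_matrices=False)
    scores = Z @ vt[:n_components].T
    explained = s ** 2 / np.sum(s ** 2)          # proportion of total variance per component
    return scores, explained[:n_components]
```

Reporting the VIFs alongside the cluster or mixture solution is one concrete way to meet the "acknowledgment" recommendation.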

Troubleshooting Guides: Addressing Common Experimental Challenges

Problem: Determining the Optimal Number of Clusters or Components

Issue: The researcher is unsure how many subgroups (k) best represent the underlying population.

Solutions:

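- Use Multiple Information Criteria: For FMM, rely on statistical indices such as the Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC). Under the conventional definitions, the model with the lowest AIC/BIC is typically preferred [39]; note that some software, such as the R package mclust, reports BIC with the opposite sign, so the largest value is preferred there.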

- Employ Algorithmic Indices: For k-means clustering, use a combination of indices (e.g., Gap statistic, Silhouette width). One approach is to calculate numerous indices and choose the value of k that is most frequently suggested [43].

- Consider Interpretability and External Knowledge: The chosen number of clusters must be biologically or nutritionally plausible. A 3-cluster solution that is easily interpretable and actionable is often better than a 5-cluster solution that is not.
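For FMM, the information criteria are simple functions of each fitted model's maximized log-likelihood. The sketch below assumes Gaussian components with unrestricted (full) covariance matrices, which fixes the parameter count; constrained covariance structures, such as those offered by mclust, have fewer parameters. It uses the conventional definitions, under which lower is better. The function names are hypothetical, and the log-likelihoods would come from the fitted models.

```python
import math


def n_params_gaussian_mixture(k, d):
    """Free parameters of a k-component, d-dimensional Gaussian mixture with
    unrestricted covariances: mixing proportions, means, covariance matrices."""
    return (k - 1) + k * d + k * d * (d + 1) // 2


def aic(loglik, n_params):
    return 2 * n_params - 2 * loglik


def bic(loglik, n_params, n_obs):
    return n_params * math.log(n_obs) - 2 * loglik


def select_k_by_bic(logliks, d, n_obs):
    """logliks maps k -> maximized log-likelihood of the fitted k-component model."""
    scores = {k: bic(ll, n_params_gaussian_mixture(k, d), n_obs) for k, ll in logliks.items()}
    return min(scores, key=scores.get), scores
```

For example, with made-up log-likelihoods `{1: -1000.0, 2: -900.0, 3: -895.0}`, `d=2`, and `n_obs=500`, the selected k is 2: the small gain from a third component does not offset its six extra parameters.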

Problem: Results Are Not Reproducible or Are Unstable

Issue: Running the analysis multiple times on the same data yields different subgroup solutions.

Solutions:

- Set a Random Seed: Always set a random number generator seed before performing analyses with a stochastic element (e.g., k-means clustering, the EM algorithm in FMM) to ensure results can be replicated [42].

- Standardize Input Variables: If variables are on different scales (e.g., grams of carbohydrates vs. micrograms of vitamin A), those with the largest numeric ranges will dominate the distance calculations. Standardize all dietary variables (e.g., z-scores) before analysis [43].

- Validate Stability: Use internal validation techniques such as splitting the data and comparing results across halves, or using bootstrapping to assess the stability of the cluster solutions.
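Stability can be quantified by comparing two partitions of the same participants. These might come from runs with different seeds, or, in split-half validation, from the clusters found in one half versus the assignment of those participants to the nearest centroids from the other half. The adjusted Rand index (ARI) is one common agreement measure: it equals 1 for identical partitions, regardless of how the clusters are numbered, and is close to 0 for chance-level agreement. A minimal sketch in Python, with a hypothetical function name:

```python
from math import comb

import numpy as np


def adjusted_rand_index(labels_a, labels_b):
    """Agreement between two partitions of the same participants, corrected for chance."""
    _, a_idx = np.unique(np.asarray(labels_a), return_inverse=True)
    _, b_idx = np.unique(np.asarray(labels_b), return_inverse=True)
    # Contingency table of cluster memberships
    table = np.zeros((a_idx.max() + 1, b_idx.max() + 1), dtype=int)
    np.add.at(table, (a_idx, b_idx), 1)
    sum_cells = sum(comb(int(n), 2) for n in table.ravel())
    sum_rows = sum(comb(int(n), 2) for n in table.sum(axis=1))
    sum_cols = sum(comb(int(n), 2) for n in table.sum(axis=0))
    expected = sum_rows * sum_cols / comb(len(a_idx), 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:          # degenerate case, e.g., both partitions have one cluster
        return 1.0
    return (sum_cells - expected) / (max_index - expected)
```

Values well below 1 across resamples suggest that the subgroup solution is unstable.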

Problem: Interpreting the Meaning of the Derived Subgroups

Issue: The statistical analysis produces subgroups, but their dietary patterns are unclear or difficult to describe.

Solutions:

- Examine Component Loadings or Cluster Centroids: For FMM, review the parameters (e.g., means) of the dietary variables for each component. For CA, examine the mean intake (centroids) of each food group for every cluster. A useful table structure is shown below.

- Compare to the Overall Mean: Calculate the mean intake of each food group for the entire sample and then for each subgroup. Describe the subgroup by highlighting the food groups for which its mean intake is significantly higher or lower than the overall average.

- Profile and Label the Subgroups: Create a table to summarize the key characteristics. For example:

Table: Characteristics of Dietary Subgroups Identified via Finite Mixture Model

| Subgroup (Label) | Estimated Proportion | Key Defining Dietary Features | Share of Members with Posterior Probability > 0.8 |
|---|---|---|---|
| "Healthy" Pattern | 32% | High intake of fruits, vegetables, whole grains. Low intake of processed meats and sugary beverages. | 85% |
| "Western" Pattern | 41% | High intake of red meat, refined grains, and high-fat dairy. Low intake of legumes and fish. | 78% |
| "Moderate" Pattern | 27% | Average intake across most food groups. Slightly higher intake of poultry and eggs. | 82% |
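For an FMM, the proportion and posterior-probability columns of such a table can be derived from the matrix of posterior membership probabilities (participants × subgroups). The sketch below is hypothetical and assumes that matrix is already available from the fitted model. It takes the average posterior as the estimated proportion (at convergence of the EM algorithm this equals the estimated mixing proportion). Among participants whose most probable subgroup is k, it then reports the share whose posterior for k exceeds 0.8.

```python
import numpy as np


def summarize_posteriors(posterior, threshold=0.8):
    """Estimated subgroup proportions and, per subgroup, the share of its modal
    members whose posterior probability exceeds `threshold`."""
    posterior = np.asarray(posterior, float)           # shape (n, K); rows sum to 1
    modal = posterior.argmax(axis=1)                   # most probable subgroup per participant
    proportions = posterior.mean(axis=0)
    confident = np.full(posterior.shape[1], np.nan)    # NaN if a subgroup has no modal members
    for k in range(posterior.shape[1]):
        members = modal == k
        if members.any():
            confident[k] = np.mean(posterior[members, k] > threshold)
    return proportions, confident
```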

Experimental Protocols

Protocol 1: Conducting k-Means Cluster Analysis on Dietary Data

Objective: To partition participants into a predefined number (k) of mutually exclusive subgroups based on dietary intake similarity.

Materials: Dietary intake data (e.g., from FFQs or 24-hour recalls), statistical software (R, Python, SAS, STATA).

Methodology:

- Data Preprocessing: Aggregate individual foods into meaningful food groups (e.g., "red meat," "leafy green vegetables"). Standardize all food group intake variables (e.g., convert to z-scores).

- Determine the Number of Clusters (k): Use the NbClust package in R, or similar, to run multiple indices on a range of k values (e.g., 2-10). Select the optimal k [43].

- Execute k-means Algorithm: Using the standardized data and chosen k, run the k-means algorithm. Set a random seed for reproducibility. The algorithm will iteratively assign participants to clusters to minimize within-cluster variation.

- Extract Results: Obtain the cluster assignment for each participant and the cluster centroids (the mean values of each food group for the participants in that cluster).

- Interpret and Validate: Analyze the centroid values to label and describe each cluster. Validate the stability of the solution.
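The execution and extraction steps can be sketched in NumPy. This is a minimal Lloyd's-algorithm implementation with k-means++ seeding and several random starts. It assumes the standardized participants × food-groups matrix from the preprocessing step. It is not the Hartigan-Wong algorithm that R's `kmeans()` uses by default, and the function names are hypothetical. The fixed seed makes repeated runs return identical clusters.

```python
import numpy as np


def kmeans_pp_init(X, k, rng):
    """k-means++ seeding: pick new centroids with probability proportional to squared distance."""
    centroids = [X[rng.integers(len(X))]]
    for _ in range(1, k):
        d2 = ((X[:, None, :] - np.array(centroids)[None, :, :]) ** 2).sum(axis=2).min(axis=1)
        centroids.append(X[rng.choice(len(X), p=d2 / d2.sum())])
    return np.array(centroids)


def kmeans(X, k, seed=2024, n_init=10, max_iter=300):
    """Return cluster labels, centroids (mean value per cluster), and within-cluster SS."""
    X = np.asarray(X, float)
    rng = np.random.default_rng(seed)               # fixed seed -> reproducible solution
    best = (None, None, np.inf)
    for _ in range(n_init):
        centroids = kmeans_pp_init(X, k, rng)
        for _ in range(max_iter):
            dist2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
            labels = dist2.argmin(axis=1)           # assign each participant to nearest centroid
            new = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                            for j in range(k)])     # recompute cluster means
            converged = np.allclose(new, centroids)
            centroids = new
            if converged:
                break
        labels = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2).argmin(axis=1)
        wss = ((X - centroids[labels]) ** 2).sum()  # within-cluster sum of squares
        if wss < best[2]:                           # keep the best of the random starts
            best = (labels, centroids, wss)
    return best
```

Because the input is standardized, the returned centroids are in z-score units; averaging the unstandardized intakes within each label (for example, `raw_intake[labels == j].mean(axis=0)`) gives the mean intake per food group used to label the clusters.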

Protocol 2: Fitting a Gaussian Finite Mixture Model

Objective: To identify latent dietary subgroups by modeling the population as a mixture of Gaussian distributions.

Materials: Dietary intake data, statistical software with FMM capability (e.g., R packages mclust, flexmix).

Methodology:

- Data Preprocessing: Prepare and standardize food group intake variables as in Protocol 1.

- Model Specification: Assume the data arises from a mixture of