Navigating Analytical Method Transfer Challenges in Food Laboratories: Strategies for Ensuring Data Integrity and Compliance

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on overcoming the multifaceted challenges of analytical method transfer in food laboratory settings. It explores the foundational principles and regulatory landscape governing method transfers, outlines proven methodological approaches and protocols, details strategies for troubleshooting common technical and operational hurdles, and establishes frameworks for robust validation and comparative analysis. By synthesizing current best practices and real-world case studies, this resource aims to equip professionals with the knowledge to ensure data equivalence, maintain product quality, and achieve regulatory compliance during method transitions across laboratories.

Understanding the Core Principles and Regulatory Landscape of Method Transfer

Core Concepts and Regulatory Foundation

What is Analytical Method Transfer?

Analytical method transfer (AMT) is a formally documented process that qualifies a receiving laboratory (RL) to use a validated analytical testing procedure that originated in another laboratory (the transferring laboratory, or TL) [1]. The fundamental goal is to demonstrate that the RL can execute the method and generate results equivalent in accuracy, precision, and reliability to those produced by the TL, ensuring the method remains in a validated state despite the change in location [2] [1]. In essence, it confirms that an analytical procedure will perform as intended in a new environment with different analysts, equipment, and reagents [3].

Regulatory Context and Importance

While definitive regulatory guidelines specifically for AMT are limited, the process is a regulatory imperative governed by overarching guidelines from bodies like the FDA, EMA, and ICH, and is detailed in compendia such as USP General Chapter <1224> [1] [3]. Regulatory agencies require evidence that analytical methods are reliable across different laboratories to ensure the continued quality, safety, and efficacy of products [3]. A failed or poorly executed transfer can lead to delayed product releases, costly retesting, and regulatory non-compliance [2] [4]. Within the context of food laboratory settings, successful method transfer is crucial for ensuring consistent monitoring of contaminants, nutrients, and quality attributes, thereby safeguarding public health and ensuring fair trade practices.

Approaches and Methodologies for Method Transfer

The choice of transfer strategy depends on factors such as the method's complexity, its regulatory status, the experience of the receiving lab, and the level of risk involved [2]. The most common protocols are summarized in the table below.

Table 1: Primary Approaches to Analytical Method Transfer

| Transfer Approach | Description | Best Suited For | Key Considerations |
|---|---|---|---|
| Comparative Testing [2] [4] | Both laboratories analyze the same set of samples (e.g., reference standards, production batches). Results are statistically compared for equivalence. | Established, validated methods; laboratories with similar capabilities. | Requires careful sample preparation, homogeneity, and a robust statistical analysis plan. |
| Co-validation [2] [5] | The analytical method is validated simultaneously by both the TL and RL from the outset. | New methods or methods being developed specifically for multi-site use. | Requires high collaboration, harmonized protocols, and shared responsibilities. |
| Revalidation [2] [4] | The RL performs a full or partial revalidation of the method. | Significant differences in lab conditions/equipment; substantial method changes; when the TL cannot provide data. | Most rigorous and resource-intensive approach; requires a full validation protocol. |
| Transfer Waiver [2] [4] | The formal transfer process is waived based on strong scientific justification. | Highly experienced RL with identical conditions; simple, robust methods (e.g., some compendial methods). | Rare and subject to high regulatory scrutiny; requires robust documentation and risk assessment. |

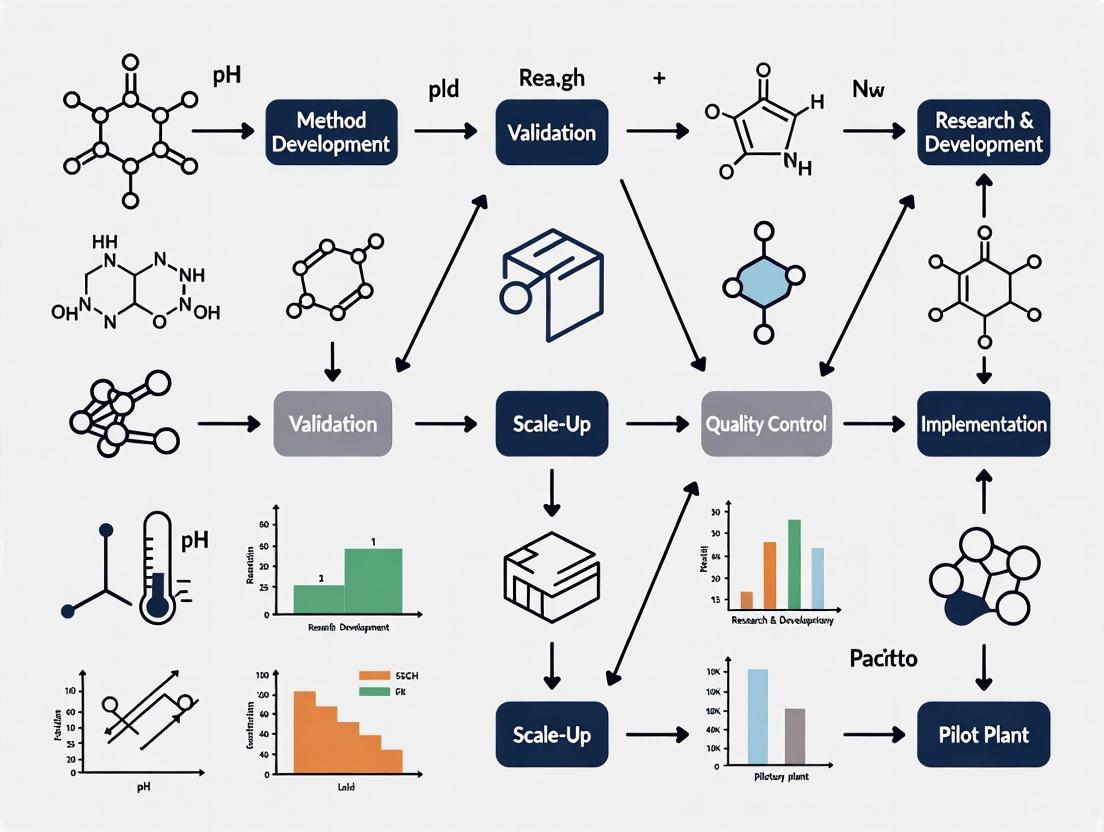

The following workflow outlines the typical lifecycle of an analytical method transfer, from initiation through to closure and ongoing monitoring.

The Scientist's Toolkit: Essential Materials for Method Transfer

Successful execution of an analytical method transfer relies on the careful management of specific materials and reagents. The following table details key items and their critical functions.

Table 2: Key Research Reagent Solutions and Materials for Method Transfer

| Item | Function & Importance in Transfer | Best Practices |
|---|---|---|
| Reference Standards [2] [4] | Qualified standards used to calibrate the method and ensure accuracy. Variability can directly cause transfer failure. | Use traceable, qualified standards. Ideally, both labs should use the same lot number during comparative testing. |
| Chromatography Columns [3] | The stationary phase for separation (e.g., in HPLC, GC). Different lots or brands can alter retention times and resolution. | Specify the exact column dimensions, packing material, and lot number in the transfer protocol. |
| Critical Reagents [4] [1] | Buffers, enzymes, antibodies, or mobile phase components. Their quality and composition are often critical to method performance. | Document supplier, grade, and catalog number. Use the same lot or perform equivalency testing if lots differ. |
| Test Samples [2] [1] | Homogeneous and representative samples (e.g., drug substance/product, spiked samples) used for comparative testing. | Ensure sample homogeneity and stability during shipment. Use stressed/aged samples for stability-indicating methods. |
| System Suitability Solutions [6] | A prepared mixture used to verify that the entire analytical system is performing adequately before the analysis. | The preparation procedure must be precisely defined and replicated identically in both laboratories. |

Troubleshooting Common Method Transfer Challenges

Despite meticulous planning, challenges during method transfer are common. The following section addresses specific issues and provides guidance for investigation and resolution.

Frequently Asked Questions (FAQs)

Q1: Our receiving laboratory is failing the precision (high %RSD) acceptance criteria for an HPLC assay. What are the primary areas we should investigate? [7]

A: A failure in precision typically indicates issues with the reproducibility of the analytical procedure itself. Focus your investigation on:

- Instrument Performance: Check system suitability parameters such as pressure stability and baseline noise. Ensure the HPLC system is properly calibrated and maintained at both sites. Minor differences in pump performance or detector lamps can cause variability [4].

- Sample Preparation Technique: This is a very common source of error. Verify that techniques like pipetting, weighing, dilution, mixing, and sonication are performed identically by all analysts. Inadequately calibrated pipettes or volumetric glassware are frequent culprits [1].

- Reagent and Mobile Phase Preparation: Ensure that all solutions are prepared with the same rigor regarding sourcing of chemicals, water quality, pH adjustment, and filtration. Small variations in pH or buffer concentration can significantly impact chromatography [4] [3].

- Environmental Conditions: For some methods, factors like ambient temperature or humidity can affect the analysis. Ensure the method's robustness to such variables was adequately characterized [3].
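The precision check discussed in this answer comes down to the relative standard deviation of replicate results. A minimal sketch follows, assuming six hypothetical replicate results per laboratory and an illustrative 2.0% limit; the actual limit must come from the transfer protocol.

```python
import statistics

def percent_rsd(values):
    """Relative standard deviation: 100 * sample SD / mean."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("mean is zero; %RSD is undefined")
    return 100.0 * statistics.stdev(values) / abs(mean)

# Hypothetical replicate assay results (% label claim), six preparations per lab.
tl_results = [99.8, 100.1, 100.3, 99.9, 100.0, 100.2]
rl_results = [97.5, 102.4, 99.0, 103.1, 97.2, 101.3]

RSD_LIMIT = 2.0  # illustrative limit only, not taken from any guideline

for lab, results in (("TL", tl_results), ("RL", rl_results)):
    rsd = percent_rsd(results)
    status = "pass" if rsd <= RSD_LIMIT else "investigate"
    print(f"{lab}: %RSD = {rsd:.2f} ({status})")
```

Running the same calculation per analyst or per day, rather than per laboratory, can help narrow down which of the areas listed above is contributing most of the variability.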

Q2: We observe a consistent bias (a significant difference in mean values) between the transferring and receiving laboratories. How should we approach this problem? [1]

A: A consistent bias suggests a systematic error rather than random variability. Your investigation should center on differences in materials or fundamental instrument settings:

- Reference Standards and Reagents: Confirm that both labs are using the same qualified reference standard and that its potency or purity value has been correctly applied in calculations. Different lots of critical reagents can also introduce bias [4].

- Instrument Calibration and Settings: While the model may be the same, verify that critical instrument parameters (e.g., detector wavelength accuracy, column oven temperature) are calibrated and set identically. A slight miscalibration in wavelength can cause a substantial bias in calculated concentrations [4].

- Calculation Methods and Software: Ensure that both labs are using identical data processing parameters (e.g., integration algorithm, baseline placement) and that any spreadsheets or custom calculations have been properly validated [4] [1].

Q3: A compendial method (e.g., from USP) is being implemented in our laboratory for the first time. Is a formal method transfer required, and if not, what is expected? [5] [6]

A: A full, formal comparative transfer is often not required for a compendial method. However, you cannot simply implement it without verification. The receiving laboratory must perform method verification to demonstrate that the method is suitable for use under actual conditions of use. This typically involves a limited set of experiments to confirm key performance characteristics such as accuracy, precision, and specificity for the specific product matrix being tested in your laboratory [6].

Q4: What are the critical elements that must be included in a Method Transfer Protocol? [2] [4] [5]

A: A robust transfer protocol is the cornerstone of a successful AMT. It must include:

- Clear Objective and Scope.

- Defined Responsibilities for both TL and RL.

- Detailed description of Materials, Equipment, and Analytical Procedure.

- Experimental Design (number of samples, analysts, days).

- Predefined, statistically justified Acceptance Criteria for each test parameter.

- A detailed plan for Data Analysis and Statistical Evaluation.

- A process for handling Deviations and Out-of-Specification results.
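One way to keep a draft protocol honest against this list is a simple completeness check. The sketch below is purely illustrative: the `TransferProtocol` class and its field names are hypothetical and simply mirror the seven elements above.

```python
from dataclasses import dataclass, fields

@dataclass
class TransferProtocol:
    """One text field per required protocol element (hypothetical names)."""
    objective_and_scope: str = ""
    responsibilities: str = ""
    materials_equipment_procedure: str = ""
    experimental_design: str = ""
    acceptance_criteria: str = ""
    data_analysis_plan: str = ""
    deviation_handling: str = ""

def missing_sections(protocol):
    """Return the names of protocol elements that are still empty."""
    return [f.name for f in fields(protocol)
            if not getattr(protocol, f.name).strip()]

# Hypothetical draft with only three elements written so far.
draft = TransferProtocol(
    objective_and_scope="Qualify RL for HPLC assay of analyte X",
    responsibilities="TL: samples and training. RL: execution and reporting.",
    experimental_design="6 samples, 2 analysts, 2 days",
)
print("Still to write:", missing_sections(draft))
```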

Q5: How are acceptance criteria for a comparative method transfer established? [5]

A: Acceptance criteria should be based on the original method validation data, particularly the reproducibility and intermediate precision. They should be statistically sound and justified, taking into account the method's purpose and product specifications. While criteria are method-specific, some typical examples are:

- Assay: Absolute difference between the site means should be ≤ 2.0-3.0%.

- Related Substances (Impurities): Criteria may vary with impurity level. For impurities spiked at low levels, recovery of 80-120% might be used.

- Dissolution: Absolute difference in mean results is typically ≤ 10% at early time points (<85% dissolved) and ≤ 5% at later time points (>85% dissolved).
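The assay and impurity-recovery examples above can be expressed as simple checks. This is a sketch under stated assumptions: the function names and sample values are hypothetical, and the default limits are only the example ranges quoted above, to be replaced by the criteria in the approved protocol.

```python
import statistics

def assay_transfer_passes(tl_results, rl_results, max_abs_diff=2.0):
    """Compare site means; returns (passed, absolute difference in % units)."""
    diff = abs(statistics.mean(tl_results) - statistics.mean(rl_results))
    return diff <= max_abs_diff, diff

def recovery_percent(measured, spiked):
    """Recovery of a spiked impurity as a percentage of the spiked amount."""
    return 100.0 * measured / spiked

def recovery_passes(measured, spiked, low=80.0, high=120.0):
    """True when recovery falls inside the acceptance window."""
    return low <= recovery_percent(measured, spiked) <= high

# Hypothetical assay results (% label claim) from each site.
passed, diff = assay_transfer_passes([100.0, 100.4], [98.9, 99.1])
print(f"Assay: difference between site means = {diff:.2f}% -> "
      f"{'pass' if passed else 'fail'}")

# Hypothetical impurity spiked at 0.10% and measured at 0.092%.
print("Impurity recovery within 80-120%:", recovery_passes(0.092, 0.10))
```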

Advanced Troubleshooting Guide

Table 3: Advanced Troubleshooting for Method Transfer Failures

| Observed Problem | Potential Root Cause | Corrective and Preventive Actions (CAPA) |
|---|---|---|
| Unexpected Chromatographic Peaks or Peak Shape Changes [1] | Degraded samples due to unstable conditions or shipping delays; different column chemistry (lot-to-lot variability); contaminated mobile phase or solvent. | Verify sample stability under shipping and storage conditions. Use columns from the same manufacturer and lot, or perform column equivalency testing. Strictly control mobile phase preparation and shelf-life. |
| Loss of Signal in a Cell-Based Bioassay [1] | Incorrect cell culture practices at RL (e.g., over-passaging, contamination); improper handling of critical reagents (e.g., subjecting cells to trypsin for too long); malfunction or miscalibration of equipment (e.g., automated cell counter). | Transfer and qualify a common cell bank, and provide intensive, hands-on training for cell culture techniques. Define handling procedures for reagents with extreme precision in the SOP. Require full equipment qualification (IQ/OQ/PQ) at the RL before transfer execution. |
| Failure of System Suitability Test [4] | Differences in water purity or chemical grade of reagents; minor but impactful variations in HPLC system dwell volume or detector characteristics; preparation error of the system suitability solution. | Specify water quality (e.g., 18.2 MΩ·cm) and reagent grades in the protocol. Compare detailed system suitability data (e.g., tailing factor, plate count) between labs early in the process to identify hardware-related issues. Standardize the preparation procedure for the system suitability test solution. |
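The tailing factor and plate count mentioned in the table can be compared numerically between sites. The sketch below uses the commonly cited pharmacopoeial formulas (tailing factor T = W0.05 / 2f, plate count N = 5.54 (tR / W½)²). The peak measurements and the 10% comparison threshold are hypothetical values chosen only for illustration.

```python
def tailing_factor(width_at_5pct, front_width_at_5pct):
    """T = W0.05 / (2 * f), both widths measured at 5% of peak height."""
    return width_at_5pct / (2.0 * front_width_at_5pct)

def plate_count(retention_time, width_at_half_height):
    """N = 5.54 * (tR / W_half)^2, using the half-height peak width."""
    return 5.54 * (retention_time / width_at_half_height) ** 2

def relative_difference_pct(tl_value, rl_value):
    """Difference of the RL value from the TL value, as % of the TL value."""
    return 100.0 * (rl_value - tl_value) / tl_value

# Hypothetical peak measurements (minutes) for the same analyte at each lab.
tl_peak = {"tR": 6.20, "w_half": 0.110, "w_5pct": 0.260, "f_5pct": 0.120}
rl_peak = {"tR": 6.35, "w_half": 0.135, "w_5pct": 0.330, "f_5pct": 0.130}

for name, func, keys in (
    ("tailing factor", tailing_factor, ("w_5pct", "f_5pct")),
    ("plate count", plate_count, ("tR", "w_half")),
):
    tl_val = func(*(tl_peak[k] for k in keys))
    rl_val = func(*(rl_peak[k] for k in keys))
    diff = relative_difference_pct(tl_val, rl_val)
    # 10% is an arbitrary screening threshold for this sketch.
    flag = "review hardware/column" if abs(diff) > 10.0 else "comparable"
    print(f"{name}: TL={tl_val:.2f}, RL={rl_val:.2f}, diff={diff:+.1f}% ({flag})")
```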

The Critical Importance of Transfer in Global Food Supply Chains and Quality Control

Technical Support Center

Troubleshooting Guides

Issue 1: Inconsistent Results Between Laboratories During Method Transfer

- Problem: An analytical method yields acceptable results at the transferring lab but shows high variability or bias at the receiving lab.

- Investigation & Resolution:

  - Verify Method Parameters: Confirm that all method parameters (e.g., column type, temperature, mobile phase composition, pH) match exactly between laboratories. Even minor, undocumented changes can cause significant discrepancies [8].

  - Check Equipment Qualification: Ensure all instruments at the receiving lab (e.g., HPLC, GC, spectrophotometers) are properly qualified, calibrated, and maintained. Compare make and model with the transferring lab to identify potential instrument-specific effects [2].

  - Audit Reagents and Standards: Confirm that both sites are using reagents from the same grade and supplier. Crucially, verify the purity, concentration, and handling of reference standards [2].

  - Assess Analyst Training: Ensure that analysts at the receiving lab have received adequate hands-on training and have demonstrated proficiency with the method. A knowledge gap in subtle, "tacit" techniques can be a root cause [5].

  - Review Environmental Conditions: Consider differences in laboratory environments, such as ambient temperature and humidity, which can affect certain analyses [2].

Issue 2: Recurring Non-Conformities in Raw Material Quality

- Problem: Incoming raw materials from suppliers consistently fail quality inspections, leading to production delays.

- Investigation & Resolution:

  - Centralized Tracking: Log all non-conformities in a centralized system to identify patterns and specific defect types [9].

  - Supplier Self-Assessment: Implement automated supplier self-assessment programs to gather performance data directly [9].

  - Conduct Joint Audits: Perform audits with suppliers to review their quality control processes and identify the root cause of defects, whether it's in their production, handling, or transportation [9] [10].

  - Enhance Inspection Protocols: Use customized inspection templates for incoming materials based on historical supplier performance to catch variability early [11].

  - Initiate CAPA: Implement a Corrective and Preventive Action (CAPA) plan with the supplier to address the root cause and prevent recurrence [9].

Issue 3: Failure to Meet Regulatory Compliance During an Audit

- Problem: Inability to produce required documentation or evidence of compliance during a regulatory audit.

- Investigation & Resolution:

  - Automate Document Management: Implement a centralized document management system with version control to ensure only the latest, approved versions of SOPs, methods, and reports are in use [9].

  - Digitize Records: Replace paper-based records with digital systems that automatically capture and store quality control data, including test results, images, and audit trails [9] [11].

  - Use Pre-Built Templates: Utilize pre-built, customizable templates for audits and inspections aligned with standards like ISO 22000 and HACCP to ensure all necessary compliance boxes are checked [9].

  - Generate Custom Reports: Use software to quickly generate audit-ready PDF reports that demonstrate compliance with relevant food safety regulations [9].

Frequently Asked Questions (FAQs)

Q1: When can an analytical method transfer be waived? A: A formal method transfer can be waived in specific, justified cases, such as when using a verified pharmacopoeial method (e.g., USP, EP), when the method is applied to a new product strength with only minor changes, or when the receiving laboratory's personnel are already highly experienced with the method through prior work or training [5].

Q2: What are the typical acceptance criteria for a comparative method transfer for an assay? A: While criteria should be based on the method's validation data and purpose, a typical acceptance criterion for an assay is an absolute difference of 2.0-3.0% between the mean results obtained at the transferring and receiving sites [5]. Common criteria for other test types are listed in the table of typical acceptance criteria under Experimental Protocols & Data.

Q3: How can we improve sustainability in our multi-tier food supply chain? A: Key strategies include:

- Multi-tier Collaboration: Partner with suppliers at all levels to share ideas and set mutual sustainability goals [10].

- Supply Chain Mapping: Gain comprehensive visibility into your upstream suppliers to identify and address key sustainability risks [10].

- Capacity Building: Develop training programs for all supply chain partners on environmental, economic, and social sustainability practices [10].

- Diffusion of Innovation: Promote and share sustainable innovations, such as soil management techniques or emission-reducing technologies, across the chain [10].

Q4: What is the core difference between Quality Assurance (QA) and Quality Control (QC)? A: QA is process-oriented and proactive, focusing on preventing defects through defined methodologies and procedures. QC is product-oriented and reactive, focusing on identifying and correcting defects in the final output [12]. In food production, QA involves activities like setting SOPs and GMPs, while QC involves tasks like testing finished products and monitoring critical control points [12].

Experimental Protocols & Data

Method Transfer Protocols

Protocol 1: Comparative Testing for Analytical Method Transfer

- Objective: To demonstrate that the receiving laboratory can perform the analytical procedure and obtain results equivalent to those of the transferring laboratory.

- Materials:

  - Homogeneous and representative samples from at least one production batch.

  - Spiked samples (if necessary, for impurity methods).

  - Identical or equivalent instrumentation, qualified reference standards, and reagents at both sites.

- Experimental Design:

  - A predefined number of samples (e.g., 6) are analyzed in duplicate by a minimum of two analysts at both the sending and receiving units.

  - The analysis should be performed on different days to account for variability.

  - Both laboratories follow the identical, approved analytical procedure.

- Data Analysis: Results from both laboratories are statistically compared using pre-defined tests (e.g., t-test for means, F-test for variances) or by calculating the absolute difference between mean values.
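The t-test and F-test named in the data analysis step can be sketched with the standard library alone. Everything numeric here is an assumption for illustration: the six results per laboratory are hypothetical, and the critical values (two-sided 5% level, 10 and 5/5 degrees of freedom) are standard table values that would need to be replaced for any other design.

```python
import math
import statistics

def pooled_t_statistic(a, b):
    """Two-sample t statistic with pooled variance (difference of means / SE)."""
    na, nb = len(a), len(b)
    va, vb = statistics.variance(a), statistics.variance(b)
    pooled_var = ((na - 1) * va + (nb - 1) * vb) / (na + nb - 2)
    se = math.sqrt(pooled_var * (1 / na + 1 / nb))
    return (statistics.mean(a) - statistics.mean(b)) / se

def f_ratio(a, b):
    """Ratio of the larger sample variance to the smaller one."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return max(va, vb) / min(va, vb)

# Hypothetical assay results (% label claim), six per laboratory.
sending = [99.8, 100.1, 100.3, 99.9, 100.0, 100.2]
receiving = [99.4, 100.3, 99.8, 100.5, 99.6, 100.1]

T_CRIT = 2.228  # table value: two-sided 5% level, 10 degrees of freedom
F_CRIT = 7.15   # table value: upper 2.5% point, 5 and 5 degrees of freedom

t_stat = pooled_t_statistic(sending, receiving)
f_stat = f_ratio(sending, receiving)
print(f"t = {t_stat:.3f} (means differ: {abs(t_stat) > T_CRIT})")
print(f"F = {f_stat:.3f} (variances differ: {f_stat > F_CRIT})")
```

A non-significant t-test only shows that no difference was detected. It does not by itself demonstrate equivalence, which is one reason many protocols prefer a predefined limit on the difference between means or a confidence-interval approach.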

Protocol 2: Co-validation as a Transfer Strategy

- Objective: To qualify the receiving laboratory by having both sites participate simultaneously in the method validation.

- Materials: As described in the method validation protocol.

- Experimental Design:

  - The analytical method is validated jointly by the transferring and receiving laboratories.

  - The receiving site participates in testing the key validation parameters, typically reproducibility.

  - Responsibilities for testing different parameters are shared and defined in a joint protocol.

- Data Analysis: The validation data from both sites is combined and evaluated against pre-defined validation criteria as outlined in guidelines like ICH Q2(R1).

Table 4: Typical Acceptance Criteria for Analytical Method Transfer [5]

| Test | Typical Transfer Acceptance Criteria |
|---|---|
| Identification | Positive (or negative) identification obtained at the receiving site. |
| Assay | Absolute difference between the mean results of the two sites: 2.0 - 3.0%. |
| Related Substances | Absolute difference criteria vary by impurity level. For low levels, recovery of 80-120% for spiked impurities is common. |
| Dissolution | Absolute difference in mean results: Not More Than (NMT) 10% at time points <85% dissolved; NMT 5% at time points >85% dissolved. |
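The two-tier dissolution criterion in the table depends on how far dissolution has progressed at each time point. A minimal sketch follows, with two assumptions the table does not settle: the sending site's mean decides which tier applies, and a value of exactly 85% is treated under the tighter 5% limit. The profile values are hypothetical.

```python
def dissolution_limit(mean_percent_dissolved):
    """Allowed absolute difference (% units) at a given dissolution level."""
    # Assumption: exactly 85% dissolved falls under the tighter limit.
    return 10.0 if mean_percent_dissolved < 85.0 else 5.0

def dissolution_transfer_passes(sending_means, receiving_means):
    """Check every time point of two mean dissolution profiles."""
    if len(sending_means) != len(receiving_means):
        raise ValueError("profiles must have the same number of time points")
    for sending, receiving in zip(sending_means, receiving_means):
        # Assumption: the sending site's mean selects the applicable limit.
        if abs(sending - receiving) > dissolution_limit(sending):
            return False
    return True

# Hypothetical mean % dissolved at three time points.
print(dissolution_transfer_passes([40, 70, 95], [46, 75, 92]))
print(dissolution_transfer_passes([40, 70, 95], [46, 75, 89]))
```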

Table 5: Key Research Reagent Solutions for Quality Control Labs

| Reagent / Material | Critical Function |
|---|---|
| Certified Reference Standards | Serves as the benchmark for quantifying analytes, ensuring accuracy and traceability of results [5] [2]. |
| Chromatography-Grade Solvents | Essential for producing reliable and reproducible chromatographic data (HPLC/GC) by minimizing background interference [2]. |
| Selective Culture Media | Used for the detection and enumeration of specific microbial pathogens or indicators in food samples [13]. |
| DNA Primers and Probes | For molecular identification and speciation of ingredients (e.g., fish species) and detection of genetically modified organisms (GMOs) [13]. |

Visualizations

Analytical Method Transfer Workflow

Troubleshooting Funnel for Laboratory Instruments

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is the main objective of an analytical method transfer? The primary objective is to formally demonstrate and document that a receiving laboratory can successfully perform a validated analytical method and generate results that are equivalent to those produced by the originating laboratory. This ensures data integrity and product quality regardless of where the testing is performed [4] [1].

Q2: When can a formal method transfer be waived? A transfer waiver may be justified in specific, well-documented circumstances. These include the transfer of a compendial method (e.g., from the USP), when the product and method are comparable to one already familiar to the receiving lab, or when the personnel responsible for the method move with the assay to the new laboratory [4] [14] [5].

Q3: What are the typical acceptance criteria for an assay method transfer? While criteria are method-specific, some common examples based on historical data and validation studies include [5]:

| Test | Typical Acceptance Criteria |
|---|---|
| Assay | Absolute difference between site results: 2-3% |
| Related Substances | Recovery of spiked impurities: 80-120% (varies with impurity level) |
| Dissolution | Absolute difference in mean results: NMT 10% at <85% dissolved; NMT 5% at >85% dissolved |
| Identification | Positive (or negative) identification must be obtained |

Q4: What is the most common cause of method transfer failure? Regulatory case studies highlight that one of the most frequent causes of failure is a lack of sufficient comparative testing, often due to not including appropriately aged or spiked samples that can challenge the method. Other common issues include systematic differences between sites and inadequately defined acceptance criteria [1].

Q5: How do regulatory expectations for method transfer differ between FDA and EMA? Both agencies expect a formal, documented process, but definitive, dedicated guidelines for method transfer are uncommon. Expectations are instead often set out in broader guidance documents on quality and manufacturing changes, and they can vary by jurisdiction and product type: Health Canada's guidance, for instance, may require protocol preapproval for non-compendial methods used for biologics. The FDA emphasizes a risk-based approach, and USP General Chapter <1224>, Transfer of Analytical Procedures (TAP), provides a foundational framework [1] [14].

Troubleshooting Common Method Transfer Challenges

Problem: Inconsistent results between the sending and receiving units.

- Potential Causes & Solutions:

  - Instrumentation Variability: Even the same instrument model can yield different results. Ensure both laboratories have performed formal Instrument Qualification (IQ/OQ/PQ) and compare system suitability data early to identify discrepancies [4].

  - Reagent & Standard Variability: Differences in reagent lots can introduce variation. Use the same lot of critical reagents and standards for comparative testing where possible [4].

  - Personnel & Technique: Unwritten techniques from experienced analysts can affect outcomes. Facilitate hands-on training and shadowing between sites to transfer tacit knowledge [4] [5].

Problem: An assay meets all validation parameters but fails during transfer.

- Potential Causes & Solutions:

  - Inadequate Risk Assessment: The transfer protocol did not account for all potential variables. Perform a failure mode and effects analysis (FMEA) during the planning stage to identify and mitigate risks related to equipment, environment, and personnel [1].

  - "Silent" Knowledge Gaps: Critical procedural details may be missing from the written method. Organize pre-transfer kick-off meetings and on-site training to discuss practical tips and nuances not captured in the standard operating procedure [5].

Problem: High variability in results at the receiving unit.

- Potential Causes & Solutions:

  - Environmental Factors: Factors like local temperature or humidity can impact method performance, especially for cell-based or sensitive biochemical assays. Conduct a thorough assessment of the receiving lab's environment [1].

  - Incorrect Equipment Calibration: Miscalibrated equipment, such as pipettes, is a common source of error. Verify the calibration status and maintenance records of all critical equipment at the receiving site [1].
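The failure mode and effects analysis (FMEA) recommended above for the planning stage is commonly scored with a risk priority number (RPN), the product of severity, occurrence, and detection ratings. The sketch below assumes the conventional 1-10 scales. The failure modes and their scores are hypothetical examples, not findings from any real transfer.

```python
from dataclasses import dataclass

@dataclass
class FailureMode:
    description: str
    severity: int    # 1 (negligible) to 10 (critical)
    occurrence: int  # 1 (rare) to 10 (frequent)
    detection: int   # 1 (easily detected) to 10 (hard to detect)

    @property
    def rpn(self) -> int:
        """Risk priority number = severity * occurrence * detection."""
        return self.severity * self.occurrence * self.detection

# Hypothetical risks identified while planning a transfer.
risks = [
    FailureMode("Pipette out of calibration at receiving unit", 7, 4, 3),
    FailureMode("Different column lot changes resolution", 8, 5, 4),
    FailureMode("Ambient humidity affects hygroscopic standard", 6, 3, 6),
]

# Highest RPN first, so mitigation effort goes to the largest risks.
ranked = sorted(risks, key=lambda r: r.rpn, reverse=True)
for risk in ranked:
    print(f"RPN {risk.rpn:>3}: {risk.description}")
```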

Experimental Protocol for a Comparative Method Transfer

This protocol outlines the methodology for transferring a validated analytical procedure via comparative testing, the most common transfer approach [4].

Objective

To qualify the Receiving Unit (RU) to perform the [Specify Method Name, e.g., HPLC Assay for Purity] by demonstrating that its results are comparable to those generated by the Sending Unit (SU).

Materials and Equipment

- Samples: A minimum of three lots of [Drug Substance/Drug Product] with a range of potencies. One lot should be stressed to generate degradants, if stability-indicating claims are to be verified [1].

- Reference Standards: USP Reference Standard [Number] or an appropriately qualified in-house standard [15].

- Reagents: HPLC-grade [List key reagents, e.g., Acetonitrile, Water, Trifluoroacetic Acid]. The same lot numbers should be used by both labs for the transfer study [4].

- Equipment: [Specify instrument models and configurations, e.g., Agilent 1260 Infinity II HPLC with DAD detector]. Equipment at the RU must be qualified (IQ/OQ/PQ).

Experimental Workflow

Step-by-Step Procedure

- Protocol Development: Create a detailed transfer protocol defining objective, scope, responsibilities, experimental design, and pre-defined acceptance criteria [4] [5].

- Knowledge Transfer & Training: Hold a kick-off meeting between SU and RU. The SU shares the method SOP, validation report, and historical data. On-site or virtual training is conducted for RU analysts [5].

- Execution of Testing: Both the SU and RU analyze the same set of predefined samples. The design should include a statistically justified number of runs (e.g., 6-12 independent setups) per lab to adequately assess precision [1] [14].

- Data Analysis: Results are statistically compared. For assay/potency, this typically involves calculating the geometric mean of relative potencies at each site and determining if the 90% confidence interval of their ratio falls within the acceptance range (e.g., 80-125%) [14]. The intermediate precision (a measure of total variability) of the RU must also be shown to be within a pre-specified limit [14].

- Report and Conclusion: A final report summarizes all data, compares it against acceptance criteria, documents any deviations, and provides a conclusion on the success of the transfer [4].
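The data analysis step above, a 90% confidence interval on the ratio of site geometric means, can be sketched by working on the log scale. Several things here are assumptions: the potency values are hypothetical, a pooled-variance two-sample interval is used, and the critical value 1.812 is the table value of the t distribution for a 90% two-sided interval with 10 degrees of freedom, so it only fits a design with six runs per site.

```python
import math
import statistics

def geometric_mean_ratio_ci(ru_potencies, su_potencies, t_crit):
    """Return (ratio, lower, upper) for the RU/SU geometric mean ratio."""
    log_ru = [math.log(x) for x in ru_potencies]
    log_su = [math.log(x) for x in su_potencies]
    n_ru, n_su = len(log_ru), len(log_su)
    # Difference of log means equals the log of the geometric mean ratio.
    diff = statistics.mean(log_ru) - statistics.mean(log_su)
    pooled_var = ((n_ru - 1) * statistics.variance(log_ru)
                  + (n_su - 1) * statistics.variance(log_su)) / (n_ru + n_su - 2)
    se = math.sqrt(pooled_var * (1 / n_ru + 1 / n_su))
    return (math.exp(diff),
            math.exp(diff - t_crit * se),
            math.exp(diff + t_crit * se))

# Hypothetical relative potencies from six independent runs per site.
ru = [0.98, 1.05, 1.01, 0.95, 1.03, 0.99]
su = [1.00, 1.02, 0.97, 1.04, 0.99, 1.01]

T_CRIT_90 = 1.812  # table value for 10 degrees of freedom; design-specific
ratio, lower, upper = geometric_mean_ratio_ci(ru, su, T_CRIT_90)
equivalent = 0.80 <= lower and upper <= 1.25
print(f"ratio = {ratio:.3f}, 90% CI = ({lower:.3f}, {upper:.3f}), "
      f"within 80-125%: {equivalent}")
```

Requiring the whole interval, not just the point estimate, to sit inside the acceptance range means that a noisy study with too few runs tends to fail rather than pass by chance.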

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Method Transfer |
|---|---|
| USP Reference Standards | Certified reference materials used to qualify reagents, calibrate instruments, and validate methods; essential for ensuring accuracy and regulatory compliance [15]. |
| Qualified Critical Reagents | Antibodies, enzymes, or cell lines used in bioassays. Their qualification (specificity, potency) is crucial, as lot-to-lot variability is a major risk factor [1]. |
| System Suitability Standards | A standardized preparation used to verify that the analytical system is functioning correctly and provides adequate sensitivity, resolution, and reproducibility before a run is started. |
| Stressed/Stability Samples | Samples intentionally degraded (e.g., by heat, light, pH) used during transfer to demonstrate that the method remains stability-indicating and can separate degradants from the active ingredient [1]. |
| Pharmaceutical Grade Solvents | High-purity solvents (e.g., HPLC/MS grade) that prevent interference, baseline noise, and column degradation, which could lead to inconsistent results between labs. |

Troubleshooting Guides & FAQs for Food Laboratory Method Transfer

This technical support center provides targeted guidance for researchers and scientists facing challenges during the transfer of analytical methods in food laboratory settings. The following FAQs and troubleshooting guides address specific, high-impact issues related to common transfer triggers.

Frequently Asked Questions

FAQ 1: What is the primary objective of a formal method transfer protocol? The main objective is to formally demonstrate and document that a receiving laboratory can successfully perform an analytical method and generate results that are equivalent to those produced by the originating laboratory [4].

FAQ 2: What is the most common protocol used in analytical method transfer? The most common protocol is comparative testing, where both the originating and receiving laboratories analyze identical samples and compare their results against pre-defined, statistically justified acceptance criteria [4] [16].

FAQ 3: Why can a method that worked perfectly in the originating lab fail in a new facility? Failure is often due to undocumented or subtle differences between the two sites. Common root causes include [4] [17] [18]:

- Instrumentation Variability: Differences in calibration, maintenance, or minor components between the same instrument models.

- Reagent and Standard Variability: Different lot numbers of critical reagents introducing slight variations in purity.

- Personnel Technique: Unwritten techniques or sample preparation nuances used by experienced analysts in the originating lab that are not captured in the formal procedure.

- Documentation Gaps: An incomplete Standard Operating Procedure (SOP) or missing details in the original validation report.

FAQ 4: What are the key benefits of outsourcing comparative testing to a specialized lab? Outsourcing offers four key advantages [16]:

- Specialized Expertise: Access to experienced personnel and state-of-the-art equipment.

- Unbiased Results: An independent, third-party lab provides objective data.

- Cost Savings: Avoids the high expense of establishing specialized in-house capabilities.

- Convenience: Allows your team to focus on core research while experts handle the transfer.

Troubleshooting Common Method Transfer Challenges

The table below outlines common discrepancies, their potential root causes, and recommended resolutions.

| Discrepancy Observed | Potential Root Cause | Resolution & Preventive Action |
|---|---|---|
| Inconsistent results between labs during comparative testing [4] [17] | • Improperly defined acceptance criteria<br>• Instrument calibration or performance differences<br>• Sample degradation or non-homogeneity | • Establish statistically sound acceptance criteria based on original validation data in the transfer plan [4].<br>• Ensure formal Instrument Qualification (IQ/OQ/PQ) is performed at the receiving site prior to transfer [4]. |
| Failed system suitability tests in the receiving lab [4] | • Differences in critical reagents or reference standards<br>• Variation in mobile phase preparation or water quality<br>• Minor hardware differences in HPLC systems or detectors | • Use the same lot numbers for critical reagents and standards during transfer [4].<br>• Conduct a feasibility study in the receiving lab to practice the method and identify these issues early [16]. |
| High analyst-to-analyst variability in the receiving lab [4] [18] | • Insufficient training on nuanced techniques<br>• Ambiguous or poorly detailed steps in the SOP (e.g., "sonicate until dissolved")<br>• Lack of hands-on training with the originating analyst | • Implement cross-training and hands-on shadowing where the receiving analyst performs the method under the supervision of the originating expert [4].<br>• Revise the SOP to be explicit and detailed, capturing all critical steps [4]. |
| Data integrity and traceability issues [18] | • Reliance on manual, paper-based systems for sample tracking and data recording<br>• Inaccurate sample labeling or data entry errors | • Integrate automated systems like a Laboratory Information Management System (LIMS) and barcoding to reduce manual errors and ensure a clear chain of custody [18].<br>• Use Electronic Laboratory Notebooks (ELNs) for secure, structured data recording [4]. |

Experimental Protocol: Comparative Testing for Method Transfer

Objective: To verify that a receiving laboratory can execute a specific analytical method and generate results statistically equivalent to those from the originating laboratory.

1. Pre-Transfer Planning:

- Develop a Formal Transfer Protocol: This document is mandatory and must include [4] [19]:

- Objective & Scope: Clear statement of the method and its purpose.

- Responsibilities: Defined roles for personnel in both originating and receiving labs, plus Quality Assurance (QA).

- Acceptance Criteria: Pre-established, statistically justified limits for success (e.g., results must fall within a specific range, coefficient of variation must not exceed a set percentage).

- Detailed Procedures: Step-by-step instructions for sample preparation, instrumentation, and run sequences.

- Data Analysis Plan: Instructions for how data will be compiled and statistically compared.

2. Execution:

- Parallel Testing: The same set of homogeneous samples (e.g., a stable batch of a food product) is tested by both laboratories using the identical analytical method and procedure [16].

- Blinded Analysis: Where possible, samples should be blinded to prevent analyst bias.

3. Data Analysis and Reporting:

- Statistical Comparison: Results from both labs are compared against the pre-defined acceptance criteria outlined in the protocol [4] [16].

- Investigate Deviations: Any out-of-specification (OOS) results or deviations must be documented and investigated per a pre-defined deviation management process [4].

- Formal Report: A comprehensive transfer report is generated. This report summarizes the results, confirms they meet acceptance criteria, documents any deviations, and provides a formal conclusion on the success of the transfer [4].
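The statistical comparison step above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the function names, the example results, and the 2.0% limits are all assumptions made for the sketch, and real limits must come from the approved transfer protocol.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation (sample SD / mean), expressed in percent."""
    return 100.0 * stdev(values) / mean(values)


def evaluate_transfer(originating, receiving, max_mean_diff_pct, max_cv_pct):
    """Compare replicate results from two labs against pre-defined limits."""
    mean_orig = mean(originating)
    mean_recv = mean(receiving)
    # Difference between lab means, relative to the originating lab's mean.
    mean_diff_pct = 100.0 * abs(mean_recv - mean_orig) / mean_orig
    cv_orig = percent_cv(originating)
    cv_recv = percent_cv(receiving)
    passed = (
        mean_diff_pct <= max_mean_diff_pct
        and cv_orig <= max_cv_pct
        and cv_recv <= max_cv_pct
    )
    return {
        "mean_diff_pct": mean_diff_pct,
        "cv_originating_pct": cv_orig,
        "cv_receiving_pct": cv_recv,
        "passed": passed,
    }


# Illustrative (made-up) assay results, six replicates per laboratory.
originating_lab = [99.8, 100.4, 100.1, 99.6, 100.2, 100.0]
receiving_lab = [100.9, 101.3, 100.6, 101.1, 100.8, 101.0]

# Hypothetical limits; the real ones are fixed in the protocol before testing.
result = evaluate_transfer(originating_lab, receiving_lab,
                           max_mean_diff_pct=2.0, max_cv_pct=2.0)
print(result)
```

Keeping the limits as explicit arguments mirrors the requirement that acceptance criteria are fixed before any data are generated, rather than chosen after the results are seen.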

The Scientist's Toolkit: Essential Research Reagent Solutions

The table below details key materials and their functions critical for ensuring a robust and successful method transfer.

| Item | Function & Importance in Method Transfer |

|---|---|

| Certified Reference Standards | Provides the benchmark for quantifying the analyte of interest. Using the same lot between labs is critical for ensuring data comparability and accuracy [4]. |

| Chromatography-Grade Solvents & Reagents | Ensures purity and consistency in mobile phase and sample preparation. Variability in reagent quality is a common source of transfer failure [4]. |

| Qualified & Calibrated Equipment | Instruments (HPLC, GC, MS) must undergo Installation, Operational, and Performance Qualification (IQ/OQ/PQ) to confirm they operate within specified parameters, directly addressing instrumentation variability [4] [19]. |

| Stable & Homogeneous Sample Lots | Provides a consistent test material for both laboratories. Inconsistent or degraded samples can invalidate comparative testing results [16]. |

| Detailed Standard Operating Procedure (SOP) | The definitive, step-by-step guide for the method. An ambiguous or incomplete SOP is a primary root cause of personnel-related transfer failures [4] [17]. |

Method Transfer Workflow

[Figure: the formal, multi-stage process for transferring an analytical method, from initial planning to final closure.]

Comparative Testing Process

[Figure: the workflow for conducting a comparative testing study, the most common method transfer protocol.]

Troubleshooting Guides

Guide 1: Resolving Failures in Comparative Testing

Problem: The receiving laboratory's results are not equivalent to the originating lab's results during comparative testing.

Investigation & Solutions:

| Investigation Area | Common Causes | Corrective & Preventive Actions |
|---|---|---|
| Instrumentation | - Minor variations in the same instrument model [4]<br>- Differences in calibration or maintenance history [4] | - Perform formal Instrument Qualification (IQ/OQ/PQ) at the receiving site [4]<br>- Compare system suitability data between labs early on [4] |
| Reagents & Standards | - Different lot numbers of the same reagent grade causing purity variations [4] | - Use the same lot number of critical reagents and standards for transfer [4]<br>- Verify new standards against a known reference before use [4] |
| Analyst Technique | - Subtle, undocumented sample preparation techniques [4] | - Implement hands-on, shadow training between originating and receiving analysts [4]<br>- Ensure the SOP is exceptionally detailed and unambiguous [4] |

Guide 2: Addressing Incomplete or Inadequate Documentation

Problem: The method transfer is delayed or fails due to documentation gaps.

Investigation & Solutions:

| Symptom | Root Cause | Solution |

|---|---|---|

| Missing original validation report | Documentation not collated for transfer [4] | Create a checklist of required documents in the transfer plan [4] |

| Ambiguous SOP steps | Unwritten "tribal knowledge" not captured [4] | Analyst shadowing during procedure drafting to capture all nuances [4] |

| Unclear acceptance criteria | Criteria not pre-defined or statistically justified [4] | Define acceptance criteria (e.g., statistical limits for equivalence) in the formal transfer protocol [4] |

Guide 3: Managing Method Transfer Risks

Problem: Unforeseen issues cause delays and increase costs.

Investigation & Solutions:

| Risk | Impact | Mitigation Strategy |
|---|---|---|
| Transcription errors from manual data entry [20] | - Cost of deviation investigations: ~$10k-$14k per incident [20]<br>- Potential for costly re-testing and product release delays [4] | - Adopt machine-readable, vendor-neutral method exchange formats where possible [20]<br>- Implement a Laboratory Information Management System (LIMS) [4] |
| Delay in project timeline | - Average cost of a one-day delay for a commercial therapy: ~$500k [20] | - Include method transfer tasks on the project's critical path [21] |
| Complexity of transferred method | - Higher risk of failure during transfer | - Adopt a risk-based approach: the extent of the transfer protocol should be commensurate with the method's complexity [4] |

Frequently Asked Questions (FAQs)

Q1: What is the primary objective of an analytical method transfer? The main objective is to formally demonstrate and document that a receiving laboratory can successfully execute a validated analytical procedure and generate results that are statistically equivalent to those produced by the originating laboratory [4].

Q2: What are the different types of analytical method transfer protocols? There are four primary types:

- Comparative Testing: Both labs test the same samples and compare results against pre-defined criteria (most common) [4].

- Co-validation: Both labs collaborate from the start of the validation process [4].

- Partial or Full Revalidation: The receiving lab re-validates some or all method parameters [4].

- Waiver of Transfer: Granted under specific, justified circumstances (e.g., transfer of a compendial method) [4].

Q3: What are the essential components of a method transfer plan? A robust transfer plan should include [4]:

- Clear objective and scope

- Defined responsibilities for all parties

- A summary of the method

- Pre-established, statistically justified acceptance criteria

- Detailed list of materials and equipment

- Step-by-step experimental procedures

- Data analysis and reporting instructions

Q4: How can technology improve the method transfer process?

- LIMS (Laboratory Information Management System): Manages samples, tracks instruments, and centralizes method data to enforce consistency [4].

- ELN (Electronic Laboratory Notebook): Provides a secure, structured platform for sharing detailed experimental records, reducing undocumented techniques [4].

- Standardized Data Formats: Machine-readable, vendor-neutral formats reduce manual transcription errors and improve interoperability [20].

The following table summarizes key quantitative data related to the impact of efficient and failed method transfers.

Financial and Operational Impact of Method Transfer Efficiency

| Metric | Quantitative Impact | Source |

|---|---|---|

| Cost of Deviation Investigations | Average: $10,000 - $14,000 per incident | [20] |

| Cost of Project Delay | Average: ~$500,000 per day for a commercial therapy | [20] |

| HPLC Market Size (Global) | ~$5 Billion | [20] |

| Pharma Analytical Testing Outsourcing Market (2024) | ~$9.0 Billion | [20] |

Experimental Protocols

Protocol 1: Conducting a Comparative Testing Transfer

This is the most common method transfer protocol [4].

1. Objective: To demonstrate that the receiving laboratory can perform the analytical method and generate results equivalent to the originating laboratory's results by testing the same homogeneous samples.

2. Materials:

- Homogeneous samples from a single batch (e.g., a drug product or food substrate).

- Identical analytical method documentation.

- Qualified instrumentation in both labs.

3. Procedure:

- Training: The receiving analyst undergoes training and may shadow the originating analyst [4].

- Execution: Both the originating and receiving laboratories analyze the same set of samples using the same validated method.

- Replication: The testing is typically repeated over multiple days or by multiple analysts to demonstrate robustness.

4. Data Analysis: The results from both laboratories are statistically compared against pre-defined acceptance criteria. The criteria are often based on the original method validation data and may include limits for accuracy, precision, or a statistical equivalence test.
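When testing is replicated over several days, the day-to-day contribution to variability can be separated from within-day repeatability with a one-way analysis of variance. The sketch below assumes a balanced design (the same number of replicates on each day) and uses made-up results; the function name and data are illustrative only.

```python
from math import sqrt
from statistics import mean


def intermediate_precision(runs):
    """Variance components from a balanced one-way ANOVA.

    runs: list of lists, one inner list of replicate results per day.
    """
    k = len(runs)            # number of days (or analysts)
    n = len(runs[0])         # replicates per day
    if any(len(run) != n for run in runs):
        raise ValueError("balanced design expected: equal replicates per day")

    all_values = [x for run in runs for x in run]
    grand_mean = mean(all_values)
    run_means = [mean(run) for run in runs]

    # Mean squares between days and within days.
    ms_between = n * sum((m - grand_mean) ** 2 for m in run_means) / (k - 1)
    ms_within = sum(
        (x - m) ** 2 for run, m in zip(runs, run_means) for x in run
    ) / (k * (n - 1))

    var_repeatability = ms_within
    # A negative estimate is truncated to zero, a common convention.
    var_between_days = max(0.0, (ms_between - ms_within) / n)
    sd_ip = sqrt(var_repeatability + var_between_days)

    return {
        "grand_mean": grand_mean,
        "repeatability_sd": sqrt(var_repeatability),
        "between_day_sd": sqrt(var_between_days),
        "intermediate_precision_sd": sd_ip,
        "intermediate_precision_rsd_pct": 100.0 * sd_ip / grand_mean,
    }


# Illustrative (made-up) results: three days, three replicates per day.
receiving_lab_runs = [
    [10.0, 10.2, 10.1],
    [10.4, 10.5, 10.3],
    [9.9, 10.0, 10.1],
]
precision = intermediate_precision(receiving_lab_runs)
print(precision)
```

The resulting intermediate precision can then be compared with the value reported in the original validation, which is one common basis for acceptance limits.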

Protocol 2: Implementing a Risk-Based Approach for Transfer

This protocol outlines the decision-making process for selecting the appropriate transfer strategy.

1. Objective: To tailor the method transfer activities based on the complexity and criticality of the analytical method, focusing resources where the risk of failure is highest.

2. Methodology:

- Risk Identification: Form a team to identify potential failure modes (e.g., instrument differences, reagent variability, analyst skill) [4].

- Risk Assessment: Score each risk based on its probability and impact.

- Protocol Selection: Use the risk assessment to select the transfer type. A high-risk, complex method may require full comparative testing, while a low-risk, simple method may qualify for a partial revalidation or even a transfer waiver [4].
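The scoring and selection logic can be sketched as follows. The 1 to 5 scales, the score thresholds, and the mapping of the middle band to partial revalidation are hypothetical choices made for this illustration; an actual scheme would be defined and justified in the laboratory's own quality system.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    probability: int  # hypothetical scale: 1 (unlikely) to 5 (almost certain)
    impact: int       # hypothetical scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Classic probability x impact risk score.
        return self.probability * self.impact


def select_transfer_type(risks, high_threshold=15, low_threshold=5):
    """Map the worst-case risk score to a transfer strategy.

    The thresholds are illustrative assumptions, not regulatory values.
    """
    worst = max(risk.score for risk in risks)
    if worst >= high_threshold:
        return "full comparative testing"
    if worst >= low_threshold:
        return "partial revalidation"
    return "transfer waiver (with documented justification)"


# Failure modes named in the text, with made-up scores.
identified_risks = [
    Risk("instrument differences", probability=3, impact=4),
    Risk("reagent variability", probability=2, impact=3),
    Risk("analyst skill", probability=2, impact=2),
]

for risk in sorted(identified_risks, key=lambda r: r.score, reverse=True):
    print(f"{risk.name}: {risk.score}")
print(select_transfer_type(identified_risks))
```

Driving the decision from the single worst score is a deliberately conservative choice; a team could equally weight or sum the scores, provided the rule is fixed before the assessment.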

Workflow and Relationship Diagrams

[Figure: Method Transfer Workflow]

[Figure: Risk Assessment Logic]

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key items and their functions in ensuring a successful analytical method transfer.

| Item Category | Function & Importance in Method Transfer |

|---|---|

| Reference Standards | A well-characterized substance used to ensure the identity, strength, quality, and purity of the analyte. Using the same lot during transfer is critical for equivalence [4]. |

| Chromatography Columns | The specific column (make, model, and lot) is often a critical method parameter. Variations can significantly alter results, so consistency is key [20]. |

| Critical Reagents | Reagents whose quality can directly impact the analytical result (e.g., specific enzymes, buffers). Sourcing from the same supplier and lot is a best practice [4]. |

| System Suitability Test (SST) Solutions | A representative mixture of analytes used to verify that the chromatographic system is adequate for the intended analysis. It is a gateway test before transfer experiments [4]. |

| Homogeneous Sample Batch | A single, uniform batch of the material (e.g., food product) from which all samples for comparative testing are drawn. This ensures variability is due to the lab/analyst, not the sample [4]. |

Implementing Robust Transfer Protocols and Food-Specific Analytical Approaches

Analytical method transfer is a documented process that qualifies a receiving laboratory to use a validated analytical test procedure that originated in another laboratory (the transferring laboratory) [1]. The primary goal is to demonstrate that the receiving laboratory can perform the method with equivalent accuracy, precision, and reliability, producing comparable results and ensuring data integrity across different sites [2] [6]. In the context of food laboratories, this process is crucial when scaling up production, outsourcing testing, or consolidating operations, ensuring that quality and safety results are consistent whether testing is performed in-house or at an external partner facility.

The need for a formal transfer can arise in several scenarios, including moving a method between multi-site operations, transferring methods to or from Contract Research/Manufacturing Organizations (CROs/CMOs), implementing a method on new equipment, or rolling out a method improvement across multiple labs [2]. Selecting the correct transfer strategy is not only a scientific imperative but also a regulatory requirement to maintain compliance with quality standards.

The appropriate strategy depends on factors such as the method's complexity, its regulatory status, the experience of the receiving lab, and the level of risk involved [2]. Compendial guidance, such as USP General Chapter <1224>, describes these approaches [2].

The table below summarizes the four primary transfer strategies:

| Transfer Approach | Description | Best Suited For | Key Considerations |

|---|---|---|---|

| Comparative Testing [2] [6] | Both laboratories analyze the same set of samples. Results are statistically compared to demonstrate equivalence. | Well-established, validated methods; laboratories with similar capabilities and equipment [2]. | Requires careful sample preparation, homogeneity, and a robust statistical analysis plan (e.g., t-tests, equivalence testing) [2]. |

| Co-validation [2] [22] [23] | The analytical method is validated simultaneously by both the transferring and receiving laboratories. | New methods being developed for multi-site use from the outset [2]. | Requires high collaboration, harmonized protocols, and shared responsibilities. Data is presented in a single validation package [2] [22]. |

| Revalidation [2] [6] | The receiving laboratory performs a full or partial revalidation of the method. | Significant differences in lab conditions/equipment; substantial method changes; when the transferring lab cannot provide sufficient data [2]. | Most rigorous and resource-intensive approach; requires a full validation protocol and report [2]. |

| Transfer Waiver [2] [6] | The formal transfer process is waived based on strong scientific justification. | Highly experienced receiving lab with identical conditions; very simple and robust methods [2]. | Rarely used and subject to high regulatory scrutiny; requires robust documentation and risk assessment [2]. |

[Figure: Decision Workflow for Selecting a Method Transfer Strategy]

Troubleshooting Common Method Transfer Challenges

Even with a well-chosen strategy, method transfers can encounter obstacles. Below are common issues and their evidence-based solutions.

Failure to Meet Predefined Acceptance Criteria

Problem: During comparative testing, results from the receiving laboratory consistently fall outside the pre-defined acceptance criteria for parameters like precision or accuracy [1].

Solution:

- Investigate Root Causes Systematically: Check for differences in reagent vendors, equipment calibration (e.g., electronic pipettes), environmental conditions (e.g., temperature, humidity), and analyst training [1]. For instance, one investigation revealed a time-dependent increase in measured protein concentration due to a leachate from specific tubes used only at the receiving lab [1].

- Strengthen Pre-Transfer Feasibility: Conduct practice runs or a gap analysis before the formal transfer. This ensures the receiving lab is fully ready and can identify potential mismatches in equipment or operator skill early on [23].

Inconsistent Results in Bioassays or Complex Methods

Problem: Cell-based bioassays or other complex methods show high variability or unexpected results at the receiving site, such as unexpected cell growth or no signal [1].

Solution:

- Enhance Knowledge Transfer: Arrange for in-person, hands-on training from the transferring lab's experts [2] [1]. This is critical for conveying tacit knowledge about critical method parameters and troubleshooting tips.

- Qualify All Critical Reagents and Equipment: Ensure key reagents (e.g., cell lines, enzymes) are sourced from the same qualified vendors and that equipment like cell counters and automated pipettes are properly qualified and calibrated at the receiving site [1]. A case study highlighted that unexpected high results were traced back to an incorrectly calibrated electronic pipette [1].

Regulatory Scrutiny and Protocol Deficiencies

Problem: A regulatory agency questions the transfer, citing issues like insufficient sample size, inappropriate acceptance criteria, or a lack of direct comparison between laboratories [1].

Solution:

- Develop a Robust, Pre-Approved Protocol: The transfer protocol must be detailed and pre-approved. It should clearly define the scope, responsibilities, experimental design, and statistically justified acceptance criteria [2] [1]. Avoid using product specifications as acceptance criteria, as they are often too broad; criteria should be based on the method's historical performance and validation data [1] [23].

- Ensure Comprehensive Documentation: A final transfer report must summarize all activities, results, statistical analysis, deviations, and the conclusion of success [2]. All raw data must be meticulously maintained to support the report [2].

Frequently Asked Questions (FAQs)

1. What is the core difference between method validation, verification, and transfer?

- Method Validation is the process of proving that a new method is reliable, accurate, and suitable for its intended purpose [6].

- Method Verification is a check to confirm a laboratory can successfully perform a compendial method (e.g., from USP) under its own conditions [6].

- Method Transfer is the documented process of qualifying a receiving laboratory to use a method that was already validated in another laboratory [1] [6].

2. When can a transfer waiver be justified? A waiver is only justified in specific, well-documented cases. Examples include transferring a method to a satellite lab using identical equipment and highly trained personnel, or for a very simple and robust method. This approach is rare and requires strong scientific justification and a risk assessment approved by Quality Assurance [2].

3. What statistical methods are commonly used to demonstrate equivalence in comparative testing? Common methods include:

- t-test: To compare the means (accuracy) between the two laboratories [23].

- F-test: To compare the precision (variance) between the two laboratories [23].

- Equivalence Testing (TOST): A more rigorous approach using two one-sided t-tests to prove that the difference between labs is within a pre-defined, acceptable margin [23].
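The three approaches can be sketched with the Python standard library alone. This is an illustration under stated assumptions: the data are made up, equal variances are assumed for the pooled t statistic, the ±2.0 equivalence margin is hypothetical, and the critical value 1.812 is the one-sided 95% t quantile for 10 degrees of freedom taken from a standard t table. TOST at the 5% level is evaluated here through its equivalent form, checking that the 90% confidence interval of the mean difference lies inside the margin.

```python
from math import sqrt
from statistics import mean, variance


def pooled_standard_error(a, b):
    """Standard error of the difference in means, assuming equal variances."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return sqrt(pooled_var * (1.0 / n1 + 1.0 / n2))


def t_statistic(a, b):
    """Two-sample t statistic for the difference in means."""
    return (mean(a) - mean(b)) / pooled_standard_error(a, b)


def f_ratio(a, b):
    """Ratio of the larger to the smaller sample variance."""
    va, vb = variance(a), variance(b)
    return max(va, vb) / min(va, vb)


def tost_equivalent(a, b, margin, t_crit):
    """TOST via the confidence-interval form.

    t_crit: one-sided 95% t quantile for len(a) + len(b) - 2 degrees of freedom.
    Returns (is_equivalent, (ci_lower, ci_upper)).
    """
    diff = mean(a) - mean(b)
    half_width = t_crit * pooled_standard_error(a, b)
    lower, upper = diff - half_width, diff + half_width
    return (-margin < lower and upper < margin), (lower, upper)


# Illustrative (made-up) results, six replicates per laboratory.
transferring_lab = [99.8, 100.4, 100.1, 99.6, 100.2, 100.0]
receiving_lab = [100.9, 101.3, 100.6, 101.1, 100.8, 101.0]

t_stat = t_statistic(transferring_lab, receiving_lab)
f_stat = f_ratio(transferring_lab, receiving_lab)
equivalent, ci = tost_equivalent(transferring_lab, receiving_lab,
                                 margin=2.0, t_crit=1.812)
print(t_stat, f_stat, equivalent, ci)
```

With these numbers the t statistic is large in magnitude, so a plain t-test would flag a difference, yet the confidence interval still sits well inside the margin. That contrast is why equivalence testing is often preferred: it asks whether the difference is small enough to be acceptable, not whether it is exactly zero.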

4. Our method transfer failed. What are the next steps? A failure requires a thorough investigation to determine the root cause. Depending on the findings, the solution may involve additional training, modifying the method procedure, requalifying equipment, or even performing a full revalidation at the receiving site. All investigations and corrective actions must be documented [1].

The Scientist's Toolkit: Essential Materials for a Successful Transfer

A successful method transfer relies on more than just a protocol. The following materials and documents are critical for ensuring a smooth process.

| Item Category | Specific Examples | Function & Importance |

|---|---|---|

| Documentation [2] [23] | Method Validation Report, Development Report, Draft SOP | Provides the foundational knowledge and approved procedure. A comprehensive document package is key to effective knowledge transfer. |

| Samples & References [2] | Homogeneous representative samples (e.g., drug substance/product), Stressed/aged samples, Qualified reference standards | Used in comparative testing to demonstrate equivalency. Stressed samples are critical for proving the specificity of stability-indicating methods [1]. |

| Qualified Reagents & Columns [2] [23] | Critical reagents, Qualified HPLC columns, Solvents | Ensures consistency in method performance. Differences in reagent vendors or column batches are a common source of transfer failure. |

| Qualified Equipment [2] [1] | Calibrated instruments (HPLC, pipettes), Qualified automated cell counters | Verifies that equipment at the receiving lab is comparable to that at the transferring lab and is in a state of control. |

[Figure: Method Transfer Process Workflow]

Frequently Asked Questions (FAQs)

Q1: What is the primary objective of an analytical method transfer protocol? The main objective is to provide formal, documented evidence that a receiving laboratory is qualified to execute a validated analytical procedure and can generate results equivalent to those produced by the original (sending) laboratory. This ensures the method remains in a validated state and data integrity is maintained after the move [4] [1].

Q2: When can a formal method transfer be waived? A transfer waiver may be justified in specific, low-risk scenarios. These include the transfer of a recognized compendial method (e.g., from the USP or Ph. Eur.) that only requires verification, when the method is applied to a new product strength with minimal changes, or when the personnel responsible for the method are physically relocated to the new laboratory. The rationale for any waiver must be thoroughly documented and approved by the Quality Assurance unit [4] [5] [24].

Q3: What are the most common causes of method transfer failure? Common failures often stem from unaccounted-for differences in laboratory environments, including:

- Instrumentation: Same model but different calibration, maintenance, or components [4] [1].

- Reagents and Standards: Different lots or suppliers with slight variations in purity [4] [1].

- Personnel Technique: Subjective interpretation of instructions or unwritten "tacit knowledge" not captured in the written procedure [4] [5].

- Documentation Gaps: Incomplete or ambiguous method descriptions that lead to multiple interpretations [4] [25].

Q4: How are acceptance criteria for a transfer defined? Acceptance criteria are pre-defined, statistically justified limits for success. They are typically based on the method's original validation data, particularly its intermediate precision or reproducibility. Criteria must be established for each performance parameter (e.g., assay, impurities) before the transfer is executed [4] [5] [24].

Troubleshooting Guides

Problem 1: Inconsistent Results for Impurity Profiles

- Potential Cause: Differences in chromatographic systems (e.g., HPLC), such as variations in delay volume, detector cell characteristics, or column temperature control [20] [24].

- Investigation & Resolution:

- System Suitability Check: Ensure the receiving lab's system meets all critical parameters defined in the method.

- Instrument Comparison: Compare key instrument parameters and qualification status between the sending and receiving labs.

- Standard & Reagent Traceability: Confirm both labs are using the same lot of critical reagents and reference standards.

- Hands-on Training: Arrange for the receiving analyst to be trained by an expert from the sending lab to capture unwritten technique nuances [4].

Problem 2: Systematic Bias or Shift in Assay Results

- Potential Cause: Calibration differences between instruments, use of different equipment models, or slight variations in sample preparation techniques (e.g., pipetting, sonication, filtration) [4] [1].

- Investigation & Resolution:

- Calibration Verification: Review calibration records for all balances, pipettes, and instruments used.

- Sample Homogeneity: Confirm that the identical, homogeneous sample set was used in both laboratories.

- Statistical Analysis: Perform a statistical comparison (e.g., t-test) of the results to confirm the bias is significant and not due to random chance.

- Method Robustness Review: Re-visit the method's robustness data to identify critical parameters that may need tighter control.

Problem 3: Failure to Meet Predefined Acceptance Criteria

- Potential Cause: The acceptance criteria were not statistically appropriate for the method's performance, or an insufficient number of replicates were analyzed to reliably estimate variability [1].

- Investigation & Resolution:

- Root Cause Analysis: Initiate a formal investigation to document the failure and identify its root cause.

- Protocol Re-assessment: Review the transfer protocol's experimental design to ensure it was adequate. It may be necessary to perform additional testing with a revised protocol.

- Data Review: Scrutinize all raw data and instrument outputs for any anomalies or deviations.

Core Components of a Transfer Protocol

A robust analytical method transfer protocol serves as the blueprint for the entire process. It must be a pre-approved document that meticulously outlines the following elements [4] [5] [2]:

- Objective and Scope: A clear statement of the transfer's purpose and the specific analytical methods covered.

- Responsibilities: Defined roles for personnel at both the sending and receiving laboratories, including Quality Assurance (QA) oversight.

- Method Summary: A concise description of the analytical procedure, its purpose, and key performance parameters.

- Materials and Equipment: A detailed list of required instruments, reagents, reference standards, and consumables, including specific models and grades.

- Experimental Design: The number of batches, replicates, and injections to be performed by each laboratory.

- Acceptance Criteria: The pre-established, statistically justified limits for success for each method parameter.

- Data Analysis and Reporting: Instructions on how data will be compiled, statistically compared, and reported.

- Deviation Management: A process for handling and documenting any deviations from the protocol.
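One way to make this checklist enforceable is to treat the protocol as structured data and check it for completeness before it goes for approval. The sketch below is a hypothetical illustration: the field names simply mirror the eight elements listed above, and the draft protocol content is invented.

```python
# Field names mirror the protocol elements listed above (names are assumptions).
REQUIRED_ELEMENTS = (
    "objective_and_scope",
    "responsibilities",
    "method_summary",
    "materials_and_equipment",
    "experimental_design",
    "acceptance_criteria",
    "data_analysis_and_reporting",
    "deviation_management",
)


def missing_elements(protocol):
    """Return the required elements that are absent or empty in a draft."""
    missing = []
    for element in REQUIRED_ELEMENTS:
        value = protocol.get(element)
        # Treat None, empty containers, and blank strings as missing.
        if not value or (isinstance(value, str) and not value.strip()):
            missing.append(element)
    return missing


# Invented draft protocol with two elements still to be written.
draft_protocol = {
    "objective_and_scope": "Transfer of an HPLC assay method to the receiving site",
    "responsibilities": {"sending_lab": "method owner", "qa": "approval"},
    "method_summary": "Reversed-phase HPLC with UV detection",
    "materials_and_equipment": ["HPLC system", "reference standard"],
    "experimental_design": {"batches": 3, "replicates": 6},
    "acceptance_criteria": "",
    "data_analysis_and_reporting": "Equivalence test on lab means",
}

gaps = missing_elements(draft_protocol)
print(gaps)
```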

Typical Acceptance Criteria for Common Tests

The table below summarizes typical acceptance criteria used in comparative testing for different types of analytical tests. These should be tailored to each specific method based on its validation data [5] [24].

| Test | Typical Acceptance Criteria |

|---|---|

| Identification | Positive (or negative) identification must be obtained at the receiving site. |

| Assay | The absolute difference between the mean results from the two sites should typically not exceed 2-3%. |

| Related Substances | Criteria may vary by impurity level. For low-level impurities, recovery of 80-120% for spiked samples is common. For higher-level impurities, tighter absolute difference criteria are used. |

| Dissolution | The absolute difference in the mean results should be not more than (NMT) 10% at time points when <85% is dissolved, and NMT 5% when >85% is dissolved. |
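The criteria in the table translate directly into simple checks. The sketch below is illustrative only: the function names are invented, the 2.0% assay default is one point in the typical 2-3% range, and two details the table leaves open are filled with labeled assumptions (the lower of the two site means decides which dissolution limit applies, and a value of exactly 85% is treated under the tighter limit).

```python
def assay_ok(mean_site_a, mean_site_b, limit=2.0):
    """Absolute difference between site means must not exceed the limit."""
    return abs(mean_site_a - mean_site_b) <= limit


def spiked_recovery_ok(recovery_pct, low=80.0, high=120.0):
    """Recovery window commonly used for low-level impurities."""
    return low <= recovery_pct <= high


def dissolution_ok(mean_site_a, mean_site_b):
    """NMT 10% difference below 85% dissolved, NMT 5% above it.

    Assumptions (not stated in the table): the lower site mean selects the
    regime, and exactly 85% falls under the tighter 5% limit.
    """
    limit = 10.0 if min(mean_site_a, mean_site_b) < 85.0 else 5.0
    return abs(mean_site_a - mean_site_b) <= limit


# Made-up site means (% of label claim, or % dissolved).
print(assay_ok(99.5, 101.0))
print(spiked_recovery_ok(79.0))
print(dissolution_ok(60.0, 68.0))
print(dissolution_ok(92.0, 98.5))
```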

Experimental Protocol: Calibration Transfer for Spectral Analysis

In food quality control, Visible/Near-Infrared (Vis/NIR) spectroscopy is widely used, but models are sensitive to external factors like temperature and instrument differences. The following protocol outlines a calibration transfer strategy to maintain model prediction accuracy across different conditions [26].

1. Objective: To enable a calibration model developed on a "master" instrument or under specific conditions to be reliably applied to spectral data collected on a "slave" instrument or under different conditions, minimizing the need for full re-calibration.

2. Experimental Workflow: [Figure: logical workflow for a standard-free calibration transfer strategy, such as the Modified Semi-Supervised Parameter-Free Calibration Enhancement (MSS-PFCE) method [26].]

3. Materials and Reagents

- Master Spectrometer: The instrument on which the original calibration model was developed.

- Slave Spectrometer(s): The target instrument(s) for the transfer.

- Representative Samples: A small set (e.g., 5-10% of the original calibration set) of homogeneous samples scanned on both the master and slave instruments. These should cover the expected concentration ranges of the analytes [26].

- Software: Chemometric software capable of performing the selected transfer algorithm (e.g., MSS-PFCE, PDS, SBC).

4. Procedure

- Data Collection: Collect spectral data for the representative transfer set on both the master and slave instruments.

- Algorithm Execution: Apply the chosen calibration transfer algorithm (e.g., MSS-PFCE). This involves using constrained optimization to adjust the coefficients of the master model so that it performs accurately on the slave instrument's data, utilizing only the spectral data and reference values from the slave instrument [26].

- Model Transfer: Generate the new, transferred calibration model for the slave instrument.

- Validation: Validate the performance of the transferred model by predicting the properties of an independent test set of samples on the slave instrument. Compare the predictions to the known reference values.
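To make the idea of a transfer step concrete, the sketch below uses slope and bias correction (SBC), the simplest of the algorithms named in the materials list. It is not an implementation of MSS-PFCE, which relies on constrained optimization of the model coefficients. Here the master model is left unchanged, and its predictions on slave spectra are corrected with a straight line fitted on the transfer samples. All values are made up and the function names are assumptions.

```python
def fit_slope_bias(slave_predictions, master_predictions):
    """Least-squares line: master_prediction = slope * slave_prediction + bias.

    Both inputs are predictions of the same master model for the same
    transfer samples, scanned on the slave and master instruments.
    """
    n = len(slave_predictions)
    mean_x = sum(slave_predictions) / n
    mean_y = sum(master_predictions) / n
    sxx = sum((x - mean_x) ** 2 for x in slave_predictions)
    sxy = sum(
        (x - mean_x) * (y - mean_y)
        for x, y in zip(slave_predictions, master_predictions)
    )
    slope = sxy / sxx
    bias = mean_y - slope * mean_x
    return slope, bias


def correct_prediction(slave_prediction, slope, bias):
    """Apply the fitted correction to a new prediction from slave spectra."""
    return slope * slave_prediction + bias


# Made-up predictions for four transfer samples (e.g., % moisture).
master_preds = [10.0, 11.0, 12.0, 13.0]
slave_preds = [10.9, 12.0, 13.1, 14.2]

slope, bias = fit_slope_bias(slave_preds, master_preds)
corrected = correct_prediction(12.55, slope, bias)
print(slope, bias, corrected)
```

SBC only removes a linear shift in the predicted values, so it suits cases where the instruments differ by a simple systematic offset; more complex spectral differences are what methods such as PDS or MSS-PFCE are intended to handle.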

5. Acceptance Criteria: The transferred model's performance should be comparable to the original master model. Common metrics include:

- Root Mean Square Error of Prediction (RMSEP): The RMSEP of the transferred model on the slave instrument should be close to that of the master model.

- Coefficient of Determination (R²): The R² for the predictions should demonstrate a strong correlation with reference values.

- A successful transfer using the MSS-PFCE method has been shown to reduce the average RMSEP for slave spectrum predictions by over 75% in some applications [26].
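Both metrics, and the relative RMSEP reduction quoted above, are straightforward to compute. The following sketch uses made-up reference and predicted values; the function names are illustrative.

```python
from math import sqrt


def rmsep(reference, predicted):
    """Root mean square error of prediction."""
    squared_errors = [(r - p) ** 2 for r, p in zip(reference, predicted)]
    return sqrt(sum(squared_errors) / len(squared_errors))


def r_squared(reference, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot


def rmsep_reduction_pct(rmsep_before, rmsep_after):
    """Relative improvement in RMSEP achieved by the transfer, in percent."""
    return 100.0 * (rmsep_before - rmsep_after) / rmsep_before


# Made-up independent test set measured on the slave instrument.
reference_values = [1.0, 2.0, 3.0, 4.0]
transferred_predictions = [1.1, 1.9, 3.2, 3.8]

test_rmsep = rmsep(reference_values, transferred_predictions)
test_r2 = r_squared(reference_values, transferred_predictions)
print(test_rmsep, test_r2)
print(rmsep_reduction_pct(0.8, 0.2))
```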

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Method Transfer |

|---|---|

| Reference Standards | Qualified standards with known identity and purity used to calibrate instruments and validate method performance. Using the same lot at both sites is a best practice [4]. |

| System Suitability Test Mixtures | A preparation used to verify that the chromatographic system (or other instrument) is adequate for the intended analysis. It is a critical check before transfer experiments begin [24]. |

| Stable, Homogeneous Sample Batches | Identical and representative samples (e.g., drug product, food homogenate) are essential for comparative testing to ensure any differences are due to the laboratory and not the sample [4] [24]. |

| Critical Method Reagents | Specific reagents whose properties can significantly impact results (e.g., enzyme purity in an enzymatic assay, mobile phase pH). Sourcing from the same supplier and lot is recommended [4] [1]. |

| Chemometric Software | Software for multivariate data analysis is essential for implementing advanced calibration transfer strategies in spectroscopic applications, such as MSS-PFCE or Piecewise Direct Standardization (PDS) [26]. |

The transfer of analytical methods between laboratories is a critical, yet challenging, cornerstone of modern food science research and quality control. In an era of distributed manufacturing and globalized supply chains, ensuring that an analytical method—whether based on spectroscopy, chromatography, or non-targeted approaches—produces equivalent results when moved from a development lab to a quality control lab or between manufacturing sites is paramount for data integrity and regulatory compliance [4]. The process is fraught with obstacles, from subtle instrumental variations and reagent differences to personnel techniques and sample heterogeneity, all of which can compromise the reliability of food safety and authenticity assessments [27] [28] [29]. This technical support center addresses the specific, practical issues researchers and scientists encounter during method transfer and implementation, providing troubleshooting guidance and FAQs to enhance experimental success and methodological robustness within the unique context of food analysis.

Core Challenges in Analytical Method Transfer

Successful method transfer hinges on understanding and controlling key variables. The following table summarizes the primary sources of error and their impacts.

Table 1: Key Challenges in Analytical Method Transfer for Food Laboratories

| Challenge Category | Specific Source of Error | Impact on Analytical Results |

|---|---|---|

| Instrumental Variations | Differences in gradient delay volume, detector flow cells, or baseline noise between HPLC/UHPLC systems [28] [30] | Altered retention times, peak shape, sensitivity, and quantification accuracy [30] |

| Instrumental Variations | Physical differences between spectrometers (e.g., NIR), including wavelength drift and absorbance fluctuations [29] | Baseline shifts and erroneous predictions in multivariate calibration models [29] |

| Sample & Reagent Issues | Variability in reagent purity, grade, or vendor between laboratories [28] | Introduction of contaminant peaks or compromised analyte recovery |

| Sample & Reagent Issues | Different protocols for mobile phase or standard preparation (e.g., volumetric vs. gravimetric) [28] | Measurable changes in chromatographic selectivity and retention [28] |

| Sample Properties & Handling | Poor powder flow properties and heterogeneity in solid food samples [29] | Significant spectral baseline variations and inconsistent predictions in spectroscopic methods [29] |

| Sample Properties & Handling | Inadequate sampling procedures (e.g., grab vs. composite sampling) for heterogeneous materials [29] | High sampling error, which can be the largest component of total measurement uncertainty [29] |

| Personnel & Documentation | Unwritten or subtle techniques in sample preparation not captured in the written method [4] | Poor reproducibility and method failure upon transfer |

| Personnel & Documentation | Insufficient detail in the standard operating procedure (SOP) [28] [4] | Ambiguity in execution, leading to inconsistent results between analysts and labs |

Troubleshooting Guides

Spectroscopy (FT-IR, NIR) Troubleshooting

Table 2: Common Issues and Solutions in Spectroscopic Analysis

| Problem | Potential Cause | Solution | Preventive Measure |

|---|---|---|---|

| Noisy Spectra or Baseline Shifts | Instrument vibration from nearby equipment or lab activity [31] | Relocate the spectrometer to a vibration-free bench or use vibration-dampening pads | Ensure the instrument is on a stable, dedicated surface away from heavy foot traffic or machinery |

| Noisy Spectra or Baseline Shifts | Poor flow of powdered samples causing air gaps and inconsistent packing (NIR) [29] | Adjust process parameters like feed rate to ensure consistent powder flow and packing [29] | Optimize material handling and process conditions during method development |

| Negative Absorbance Peaks (ATR-FTIR) | Dirty or contaminated ATR crystal [31] | Clean the crystal with a suitable solvent and acquire a fresh background spectrum | Clean the crystal before and after each use and ensure proper sample handling |

| Inconsistent Model Predictions (NIR) | High baseline variations due to physical sample properties [29] | Identify and eliminate spectra with abnormally high baselines from the model; recalibrate if necessary [29] | During development, build models with samples covering the expected range of physical variability |

| Inconsistent Model Predictions (NIR) | Model transfer between spectrometers without proper calibration transfer algorithms [29] | Use techniques like spectral regression or orthogonal signal correction to standardize responses between instruments [32] | Develop the initial model on a master instrument and validate the transfer protocol to slave instruments |
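One way to screen for the abnormally high baselines mentioned in Table 2 is sketched below. It is an assumption-laden illustration rather than the procedure used in [29]: the baseline is approximated by the mean absorbance of each spectrum, and a spectrum is flagged when that value exceeds the median by more than `k` median absolute deviations. The cut-off `k` and the example spectra are hypothetical.

```python
from statistics import mean, median

def baseline_offsets(spectra):
    """Approximate each spectrum's baseline by its mean absorbance (assumption)."""
    return [mean(s) for s in spectra]

def flag_high_baselines(spectra, k=3.0):
    """Return indices of spectra whose offset exceeds median + k * MAD."""
    offsets = baseline_offsets(spectra)
    med = median(offsets)
    mad = median([abs(o - med) for o in offsets])
    limit = med + k * mad
    return [i for i, o in enumerate(offsets) if o > limit]

# Hypothetical absorbance values at three wavelengths for five scans;
# the last scan has a visibly raised baseline.
spectra = [
    [0.10, 0.12, 0.11],
    [0.11, 0.13, 0.12],
    [0.09, 0.11, 0.10],
    [0.10, 0.13, 0.11],
    [0.45, 0.48, 0.47],
]
flagged = flag_high_baselines(spectra)
```

Flagged spectra should be reviewed against process records (for example, feed rate or powder bed height) before being excluded, since a raised baseline may point to a handling problem rather than a bad scan.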

Chromatography (HPLC, UHPLC) Troubleshooting

Table 3: Common Issues and Solutions in Chromatographic Analysis

| Problem | Potential Cause | Solution | Preventive Measure |

|---|---|---|---|

| Inconsistent Retention Times | Differences in gradient delay volume between the original and receiving lab's HPLC system [30] | Use a system with a tunable gradient delay volume to physically match the original system's volume [30] | Document the gradient delay volume of the originating system in the method SOP |

| Inconsistent Retention Times | Differences in mobile phase preparation (e.g., volumetric vs. gravimetric) [28] | Adhere strictly to a single, detailed preparation protocol documented in the method | Specify mobile phase preparation with explicit, step-by-step instructions in the SOP |

| Peak Tailing or Splitting | Differences in pre-column volume and dispersion [30] | Use a custom injection program to match the dispersion profile of the original system [30] | Document all instrument module specifications in the method |

| Peak Tailing or Splitting | Degraded or contaminated chromatographic column | Use the exact same column brand, model, and lot if possible [28] | Specify the column in detail (manufacturer, dimensions, particle size, pore size, etc.) in the method |

| Blank Measurement Errors | Contaminated cuvette or mobile phase | Inspect and clean the sample cuvette; prepare fresh, high-quality mobile phase [33] | Use high-purity solvents and clean, dedicated labware |
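The effect of a gradient delay volume mismatch (first row of Table 3) can be estimated before any injections are made, because the delay time is the delay volume divided by the flow rate. The sketch below uses hypothetical volumes and flow rate; the function names are illustrative.

```python
def gradient_delay_time(delay_volume_ml, flow_rate_ml_min):
    """Time (min) for a programmed gradient change to reach the column inlet."""
    if flow_rate_ml_min <= 0:
        raise ValueError("flow rate must be positive")
    return delay_volume_ml / flow_rate_ml_min

def gradient_arrival_shift(tl_delay_ml, rl_delay_ml, flow_rate_ml_min):
    """Receiving-lab minus transferring-lab delay time (min).

    A negative value means the gradient reaches the column earlier
    on the receiving system.
    """
    return (gradient_delay_time(rl_delay_ml, flow_rate_ml_min)
            - gradient_delay_time(tl_delay_ml, flow_rate_ml_min))

# Hypothetical systems: 1.0 mL delay volume at the transferring lab,
# 0.4 mL at the receiving lab, method flow rate 0.5 mL/min.
shift_min = gradient_arrival_shift(1.0, 0.4, 0.5)
```

With these example values the gradient arrives about 1.2 minutes earlier on the receiving system, and gradient-eluted peaks can be expected to move by roughly that amount unless the delay volume is matched.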

Non-Targeted Analysis Troubleshooting

Table 4: Common Issues and Solutions in Non-Targeted Analysis

| Problem | Potential Cause | Solution | Preventive Measure |

|---|---|---|---|

| Ion Suppression/Enhancement in HRMS | Co-elution of matrix components with analytes, causing signal interference [34] | Improve sample cleanup (e.g., with optimized SPE or QuEChERS sorbents) or use matrix-matched calibration | Employ efficient sample preparation protocols like QuEChERSER for broad analyte coverage and matrix cleanup [34] |

| Inability to Cover Broad Polarity Range | Single extraction protocol is not suitable for all chemical classes [34] | Implement a "mega-method" like QuEChERSER, which extends coverage for both LC- and GC-amenable compounds [34] | Adopt a multi-protocol strategy or use a versatile, validated mega-method from the start |

| Lack of Reproducibility Between Labs | Absence of standardized workflows and guidelines for method validation [32] | Follow emerging guidelines from bodies like Eurachem and AOAC for validating non-targeted methods [32] | Implement and document a rigorous, standardized validation protocol internally before transfer |

Experimental Protocols for Robust Method Transfer

Protocol for Transfer of an NIR Method for Powder Blends

This protocol is based on a study transferring a near-infrared method for monitoring a disintegrant in a binary powder blend [29].

1. Objective: To successfully transfer a calibrated NIR model from a development laboratory to a commercial manufacturing site for at-line determination of blend uniformity.

2. Materials:

- Blend components: Croscarmellose sodium (the disintegrant being monitored) and microcrystalline cellulose.

- Equipment: Master NIR spectrometer (development lab), Slave NIR spectrometers (commercial plant), and equipment for reference analysis (if applicable).

3. Procedure:

- Calibration Model Development (Master Lab):

- Prepare laboratory-scale calibration blends with the target component (croscarmellose) spanning the expected concentration range (e.g., 4.32–64.77 %w/w) [29].

- Collect NIR spectra for all blends.

- Develop a multivariate calibration model (e.g., using PLS regression) and validate it using an independent set of test blends.

- Initial Transfer & Process Understanding (Development Plant):

- Install the slave NIR spectrometer in-line or at-line at the development plant.

- Use the model to predict concentrations in real-time during blending runs.

- Critical Observation: Monitor for significant baseline shifts in the spectra, which may indicate poor powder flow, air gaps, or inconsistent powder bed height [29].

- Troubleshooting: If high bias and inconsistent predictions are observed, correlate them with process parameters. In the case study, reducing the feed rate significantly improved flow and reduced prediction bias by 42-51% [29].

- Final Implementation (Commercial Site):

- Transfer the model to the spectrometers at the commercial site.

- Collect powder samples (using composite sampling to ensure representativeness [29]) at the beginning, middle, and end of manufacturing runs.

- Acquire NIR spectra and use the model to predict concentrations.

- Compare the predictions to reference values or use for quality control monitoring.

4. Acceptance Criteria: The model is considered successfully transferred if the bias values for the slave instruments fall within a pre-defined, justified range (e.g., < 3.5 %w/w, as demonstrated in the case study [29]).
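The bias check in the acceptance criterion can be expressed as a short calculation. This is a minimal sketch: bias is taken here as the mean of predicted minus reference values, the 3.5 %w/w limit is the example figure quoted above, and the sample values are hypothetical.

```python
def mean_bias(predicted, reference):
    """Mean signed difference (predicted - reference), in the same units (%w/w)."""
    return sum(p - r for p, r in zip(predicted, reference)) / len(reference)

def bias_within_limit(predicted, reference, limit=3.5):
    """True if the absolute mean bias is below the pre-defined limit."""
    return abs(mean_bias(predicted, reference)) < limit

# Hypothetical NIR predictions and reference values (%w/w) from one slave instrument
predicted = [21.0, 32.5, 44.0]
reference = [20.0, 30.0, 42.0]

bias = mean_bias(predicted, reference)
passed = bias_within_limit(predicted, reference)
```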

Diagram 1: NIR Method Transfer Workflow

Protocol for HPLC/UHPLC Method Transfer

This protocol outlines a systematic approach for transferring a chromatographic method.

1. Objective: To demonstrate that a receiving laboratory can execute a validated HPLC method and generate results equivalent to those from the originating laboratory.

2. Materials:

- Samples: Identical, homogeneous batches of the test sample (e.g., a food extract) for both labs.

- Chemicals: The same lot numbers of solvents, buffers, and reference standards for both labs.

- Columns: The exact same column (brand, model, dimensions, particle size, and lot number).

- Equipment: HPLC/UHPLC systems in both the originating and receiving labs.

3. Procedure:

- Planning:

- Develop a formal, documented transfer protocol defining responsibilities, acceptance criteria (e.g., % difference in assay, resolution of critical pairs), and procedures [4].

- Ensure the receiving lab's instrument is properly qualified (IQ/OQ/PQ).

- Alignment:

- Characterize and align critical instrument parameters. For the receiving lab's system, this may involve tuning the gradient delay volume to match the original system and selecting the appropriate detector flow cell [30].

- Comparative Testing:

- Both laboratories analyze the same set of samples using the identical, detailed method.

- A minimum of six replicate injections per lab is typical for statistical comparison [4].

- Data Analysis:

- Statistically compare the results (e.g., peak area, retention time, assay value, precision) from both labs against the pre-defined acceptance criteria.

4. Acceptance Criteria: The method transfer is successful if the results from the receiving laboratory fall within the agreed-upon limits (e.g., a statistical F-test and t-test show no significant difference at a 95% confidence level) [4].
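The F-test and t-test named in the acceptance criterion can be sketched with the Python standard library alone. The assumptions are stated in the code: six replicates per lab, a pooled-variance two-sample t-test, and two-sided critical values at the 95% confidence level taken from standard tables for those degrees of freedom (F with 5 and 5 degrees of freedom, t with 10). Other replicate counts need different critical values. The assay results are hypothetical.

```python
from math import sqrt
from statistics import mean, variance

def f_statistic(a, b):
    """Ratio of the larger to the smaller sample variance (always >= 1)."""
    va, vb = variance(a), variance(b)
    return max(va, vb) / min(va, vb)

def pooled_t_statistic(a, b):
    """Two-sample t statistic with pooled variance (assumes similar variances)."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(sp2 * (1 / na + 1 / nb))

def labs_equivalent(a, b, f_crit=7.15, t_crit=2.228):
    """Defaults are tabulated two-sided 95% values for n = 6 per lab (assumption)."""
    return f_statistic(a, b) < f_crit and abs(pooled_t_statistic(a, b)) < t_crit

# Hypothetical assay results (% of label claim), six replicates per lab
tl_results = [99.8, 100.2, 100.1, 99.9, 100.0, 100.3]
rl_results = [100.1, 100.4, 99.9, 100.2, 100.0, 100.3]

f_value = f_statistic(tl_results, rl_results)
t_value = pooled_t_statistic(tl_results, rl_results)
equivalent = labs_equivalent(tl_results, rl_results)
```

A non-significant result only shows that no difference was detected with the replicates available, so the protocol should still state the maximum acceptable difference between the two laboratories.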

Frequently Asked Questions (FAQs)

Q1: What is the most common protocol for formal analytical method transfer? A1: Comparative testing is the most common protocol. It involves both the originating and receiving laboratories testing the same set of samples using the same method. The results are then statistically compared against pre-defined acceptance criteria to demonstrate equivalence [4].

Q2: How can we mitigate the impact of different analysts' techniques during transfer? A2: Proactive measures are key. These include:

- Detailed SOPs: Write procedures with exhaustive detail, leaving no room for interpretation [28].

- Hands-on Training: The receiving analyst should be trained by, or shadow, an experienced analyst from the originating lab to learn unwritten nuances [4].