Method Validation in Food Analysis: Bridging the Divide Between Authenticity and Safety Testing

Abstract

This article provides a comprehensive guide for researchers and scientists on the distinct validation paradigms for food authenticity versus food safety testing. It explores the foundational principles, from targeted safety checks to probabilistic authenticity models, and details advanced methodological applications including non-targeted screening, DNA-based techniques, and isotope ratio mass spectrometry. The content addresses critical troubleshooting aspects such as database robustness and regulatory alignment, and offers a comparative analysis of validation frameworks from organizations like AOAC and FDA. By synthesizing these elements, the article aims to equip professionals with the knowledge to develop robust, fit-for-purpose analytical methods that ensure both food safety and integrity in a complex global supply chain.

Divergent Aims, Divergent Methods: Core Principles of Food Safety and Authenticity Testing

Safeguarding the food supply involves two critical but fundamentally distinct disciplines: food safety and food authenticity. While both are essential for consumer protection, they differ in their core objectives, scope, and methodological approaches. Food safety focuses on preventing unintentional contamination from biological, chemical, physical, or radiological hazards that could cause consumer illness or injury [1]. Food authenticity, under the umbrella of food fraud prevention, is concerned with the deliberate misrepresentation of food for economic gain, protecting the supply chain from deceptive practices like adulteration, substitution, or mislabeling [2] [3]. For researchers and method developers, recognizing that food safety deals with unintentional hazards while authenticity tackles economically motivated adulteration is the foundational step in designing appropriate testing protocols and validation frameworks. This distinction has profound implications for the selection of analytical techniques, the design of quality control systems, and the development of regulatory strategies, forming the core of a modern food integrity strategy.

Conceptual Frameworks and Regulatory Foundations

The operational frameworks for food safety and authenticity are governed by different principles and regulatory requirements. Food safety management is historically built upon the Hazard Analysis and Critical Control Points (HACCP) system, a structured preventive approach that identifies specific points in the production process where control can be applied to prevent or eliminate a safety hazard or reduce it to an acceptable level [1]. This system focuses on Critical Control Points (CCPs) with established critical limits, monitoring procedures, and corrective actions.

In contrast, food fraud prevention employs a vulnerability-based model. Vulnerability Assessment and Critical Control Points (VACCP) is a systematic process used to identify and mitigate vulnerabilities in the supply chain that could be exploited for economic gain [3]. Where HACCP asks "What could go wrong to make the product unsafe?", VACCP asks "Where is the product vulnerable to fraudulent activity?".

Regulatorily, the U.S. Food Safety Modernization Act (FSMA) encapsulates this duality. FSMA's Preventive Controls for Human Food (PCHF) rule requires a comprehensive Food Safety Plan that addresses process, allergen, and sanitation controls, effectively broadening the traditional HACCP approach [4] [1]. Simultaneously, the Intentional Adulteration (IA) rule addresses food defense, focusing on preventing acts intended to cause widespread harm, distinct from the economically motivated fraud covered by VACCP [2].

Table 1: Fundamental Differences Between Food Safety and Food Authenticity

| Aspect | Food Safety (Hazard Control) | Food Authenticity (Fraud Prevention) |
|---|---|---|
| Primary Intent | Prevent unintentional consumer harm | Prevent economic deception and protect supply chain integrity |
| Core Driver | Public health protection | Financial gain [2] |
| Nature of Threat | Accidental contamination | Intentional adulteration or misrepresentation [2] [3] |
| Primary Framework | HACCP Plan | Food Fraud Vulnerability Assessment (VACCP) [2] [3] |
| Regulatory Focus | FSMA Preventive Controls Rule | FSMA IA Rule (for food defense); GFSI standards for fraud [2] |
| Assessment Tool | Hazard Analysis | Vulnerability Assessment [2] |

Analytical Methodologies: From Targeted Hazards to Non-Targeted Authenticity Profiling

The fundamental differences between food safety and authenticity directly shape their respective analytical approaches. Food safety testing typically employs targeted methods that detect, identify, and quantify specific known hazards. These methods are designed for precise measurement of contaminants like pathogens, mycotoxins, pesticide residues, or allergens, with established thresholds and regulatory limits.

Conversely, food authenticity testing often requires non-targeted methods and chemical fingerprinting techniques to detect discrepancies between a product's claimed and actual composition. This approach is necessary because the possible adulterants are often unknown, and fraudsters continuously develop new methods to evade detection.

Table 2: Core Analytical Approaches in Food Safety vs. Food Authenticity

| Methodology | Application in Food Safety | Application in Food Authenticity |
|---|---|---|
| DNA Barcoding | Species-specific pathogen identification | Species authentication in meat, fish, and herbs [5] [6] |
| Chromatography/Mass Spectrometry | Quantification of pesticide residues, mycotoxins, and allergens | Detection of undeclared additives, profiling of authentic compositions [7] |
| Spectroscopy (NIR, MIR, Raman) | Rapid screening for known contaminants | Geographic origin verification, variety discrimination [7] |
| Stable Isotope Analysis | Limited use in safety | Geographic origin authentication [7] |
| Protein-Based Methods (ELISA) | Allergen detection, pathogen identification | Limited use due to protein denaturation in processing |
| Multi-omics (Foodomics) | Tracking pathogen outbreaks | Comprehensive authentication of origin, processing, and composition [8] |

Experimental Protocol: DNA Barcoding for Species Authentication

DNA barcoding has emerged as a gold-standard method for species identification in food authenticity research, particularly for detecting species substitution in meat and seafood products [5] [6]. Below is a detailed experimental protocol:

1. DNA Extraction:

- Begin with 100 mg of tissue sample or 200 mg of processed food product.

- Use a commercial DNA extraction kit suitable for the food matrix (e.g., DNeasy Blood & Tissue Kit for raw meat, DNeasy Mericon Food Kit for processed products).

- Include a digestion step with proteinase K (20 mg/mL) at 56°C for 3 hours to ensure complete cell lysis.

- Perform final elution in 100 µL of AE buffer (10 mM Tris-Cl, 0.5 mM EDTA; pH 9.0).

- Quantify DNA yield and purity using spectrophotometry (NanoDrop), requiring A260/A280 ratio of 1.7-2.0 and minimum concentration of 10 ng/µL for reliable amplification.

2. PCR Amplification:

- Prepare 25 µL reaction mixtures containing: 1X PCR buffer, 2.5 mM MgCl₂, 0.2 mM dNTPs, 0.4 µM of each primer, 1.25 U of Taq DNA polymerase, and 2 µL (20-50 ng) of template DNA.

- For fish and seafood samples, use the COI (cytochrome c oxidase subunit I) primer pair FishF1 (5'-TCAACCAACCACAAAGACATTGGCAC-3') and FishR1 (5'-TAGACTTCTGGGTGGCCAAAGAATCA-3') [5]. Other animal taxa may require a different COI primer set.

- For plant species, use the rbcL (ribulose-1,5-bisphosphate carboxylase/oxygenase) primer pair: rbcLaF (5'-ATGTCACCACAAACAGAGACTAAAGC-3') and rbcLaR (5'-GTAAAATCAAGTCCACCRCG-3') [5].

- Use the following thermal cycling conditions: initial denaturation at 95°C for 2 min; 35 cycles of 95°C for 30 s, 52°C (COI) or 50°C (rbcL) for 40 s, 72°C for 1 min; final extension at 72°C for 10 min.

3. Sequencing and Data Analysis:

- Purify PCR products using magnetic bead-based clean-up systems.

- Perform bidirectional Sanger sequencing with the same primers used for amplification.

- Assemble forward and reverse sequences, then compare to reference databases (BOLD Systems or GenBank) using alignment algorithms (BLAST).

- Species identification is confirmed with ≥98.5% sequence similarity to reference barcodes and placement in a monophyletic cluster in phylogenetic analysis [5].
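The numeric acceptance criteria in the protocol above can be expressed as a few small helper functions. The sketch below is illustrative only: the function names and the stock concentrations in the example are assumptions (the protocol states final concentrations, not stock strengths), and the identity calculation expects sequences that have already been aligned to equal length. It does not cover the phylogenetic (monophyly) criterion, which needs a tree-building tool.

```python
# Acceptance criteria quoted in the protocol above.
PURITY_RANGE = (1.7, 2.0)     # acceptable A260/A280 window
MIN_CONC_NG_UL = 10.0         # minimum DNA concentration, ng/uL
IDENTITY_THRESHOLD = 98.5     # percent similarity required for a species call


def extract_passes_qc(a260_a280: float, conc_ng_ul: float) -> bool:
    """Return True if a DNA extract meets the purity and yield criteria."""
    low, high = PURITY_RANGE
    return low <= a260_a280 <= high and conc_ng_ul >= MIN_CONC_NG_UL


def stock_volume_ul(final_conc: float, stock_conc: float,
                    reaction_ul: float = 25.0) -> float:
    """Volume of stock to pipette, from C1*V1 = C2*V2 (same units for both)."""
    if stock_conc <= 0:
        raise ValueError("stock concentration must be positive")
    return final_conc * reaction_ul / stock_conc


def percent_identity(query: str, reference: str) -> float:
    """Percent identity over aligned columns, skipping gaps and ambiguous N."""
    if len(query) != len(reference):
        raise ValueError("sequences must be pre-aligned to equal length")
    compared = matches = 0
    for q, r in zip(query.upper(), reference.upper()):
        if q in "-N" or r in "-N":
            continue
        compared += 1
        matches += q == r
    if compared == 0:
        raise ValueError("no comparable positions")
    return 100.0 * matches / compared


def supports_species_call(query: str, reference: str) -> bool:
    """Apply the similarity threshold only; monophyly is checked separately."""
    return percent_identity(query, reference) >= IDENTITY_THRESHOLD


if __name__ == "__main__":
    # Hypothetical stock strengths, chosen only to illustrate the arithmetic.
    print("MgCl2 from 25 mM stock (uL):", stock_volume_ul(2.5, 25.0))
    print("Each primer from 10 uM stock (uL):", stock_volume_ul(0.4, 10.0))
    print("Extract usable:", extract_passes_qc(1.85, 35.0))
```

In practice the similarity value would come from a BLAST or BOLD report rather than a hand-rolled comparison; the function here only makes the threshold logic explicit.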

The Researcher's Toolkit: Essential Reagents and Platforms

Table 3: Essential Research Reagents and Platforms for Food Testing

| Reagent/Solution | Function | Application Context |
|---|---|---|
| Proteinase K | Enzymatic digestion of proteins for DNA release | DNA extraction for authenticity testing [5] |
| PCR Master Mix | Amplification of target DNA sequences | Species authentication via DNA barcoding [5] [8] |
| DNA Barcode Primers (COI, rbcL, matK) | Amplification of standardized barcode regions across taxa | Targeting standardized genomic regions for identification [5] |
| LC-MS/MS Mobile Phases | Compound separation and ionization | Targeted contaminant analysis and non-targeted metabolomics [7] |
| Stable Isotope Reference Materials | Calibration of isotope ratio instruments | Geographic origin verification [7] |
| Selective Media & Enrichment Broths | Pathogen isolation and growth | Microbiological safety testing |
| Immunoaffinity Columns | Specific capture of target analytes | Mycotoxin and allergen detection |

Data Interpretation and Pattern Recognition in Authenticity Research

Food authenticity research increasingly relies on advanced chemometric tools and pattern recognition techniques to interpret complex analytical data [7]. Unlike food safety testing with its established thresholds and limits of detection, authenticity assessment often requires multivariate analysis to distinguish authentic from fraudulent products.

Principal Component Analysis (PCA) is routinely employed as an unsupervised method to reduce data dimensionality and visualize natural clustering of samples based on their chemical profiles. Linear Discriminant Analysis (LDA) serves as a supervised classification technique to maximize separation between pre-defined groups (e.g., geographic origins). For complex authentication problems, machine learning algorithms such as Support Vector Machines (SVM) and Artificial Neural Networks (ANN) are increasingly applied to build predictive models from spectroscopic or chromatographic data [9] [7].

The workflow typically involves: (1) data acquisition from analytical instruments, (2) data pre-processing (normalization, scaling, alignment), (3) exploratory analysis with unsupervised methods, (4) model building with supervised techniques, and (5) model validation using test sets and cross-validation. This approach transforms raw analytical data into actionable intelligence for food authentication.
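Step (2) of this workflow is often the least visible, so a minimal sketch of two common pre-processing operations may help: total-signal normalization of each sample followed by autoscaling of each feature. The function names and the choice of these two operations are assumptions for illustration; the source does not specify which normalization or scaling is used.

```python
from statistics import mean, stdev


def normalize_rows(matrix):
    """Scale each sample (row) so that its intensities sum to 1."""
    normalized = []
    for row in matrix:
        total = sum(row)
        if total == 0:
            raise ValueError("cannot normalize a sample with zero total signal")
        normalized.append([value / total for value in row])
    return normalized


def autoscale_columns(matrix):
    """Mean-center each feature (column) and divide by its sample std. dev."""
    columns = list(zip(*matrix))
    means = [mean(col) for col in columns]
    sds = [stdev(col) for col in columns]
    return [
        # A constant feature carries no information, so it is set to zero.
        [(v - m) / s if s > 0 else 0.0 for v, m, s in zip(row, means, sds)]
        for row in matrix
    ]


if __name__ == "__main__":
    # Hypothetical intensities: 3 samples x 3 features.
    raw = [[10.0, 30.0, 60.0], [5.0, 5.0, 40.0], [20.0, 10.0, 10.0]]
    print(autoscale_columns(normalize_rows(raw)))
```

When a model is later validated on held-out samples, the scaling means and standard deviations should be computed from the training samples only and then applied to the test samples, otherwise information leaks from the test set into the model.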

Food safety's hazard control and authenticity's fraud prevention represent two complementary but fundamentally different disciplines within modern food science. While food safety focuses on preventing unintentional harm through targeted hazard analysis and control systems like HACCP, food authenticity addresses economically motivated adulteration through vulnerability assessments and sophisticated analytical profiling. For researchers and method development scientists, recognizing these distinctions is crucial for designing appropriate testing strategies, selecting relevant analytical platforms, and developing validated methods that effectively address the unique challenges presented by each domain. The future of food integrity lies in integrating both approaches, leveraging advances in DNA technologies, foodomics, and data science to create a comprehensive shield that protects consumers from both harm and deception.

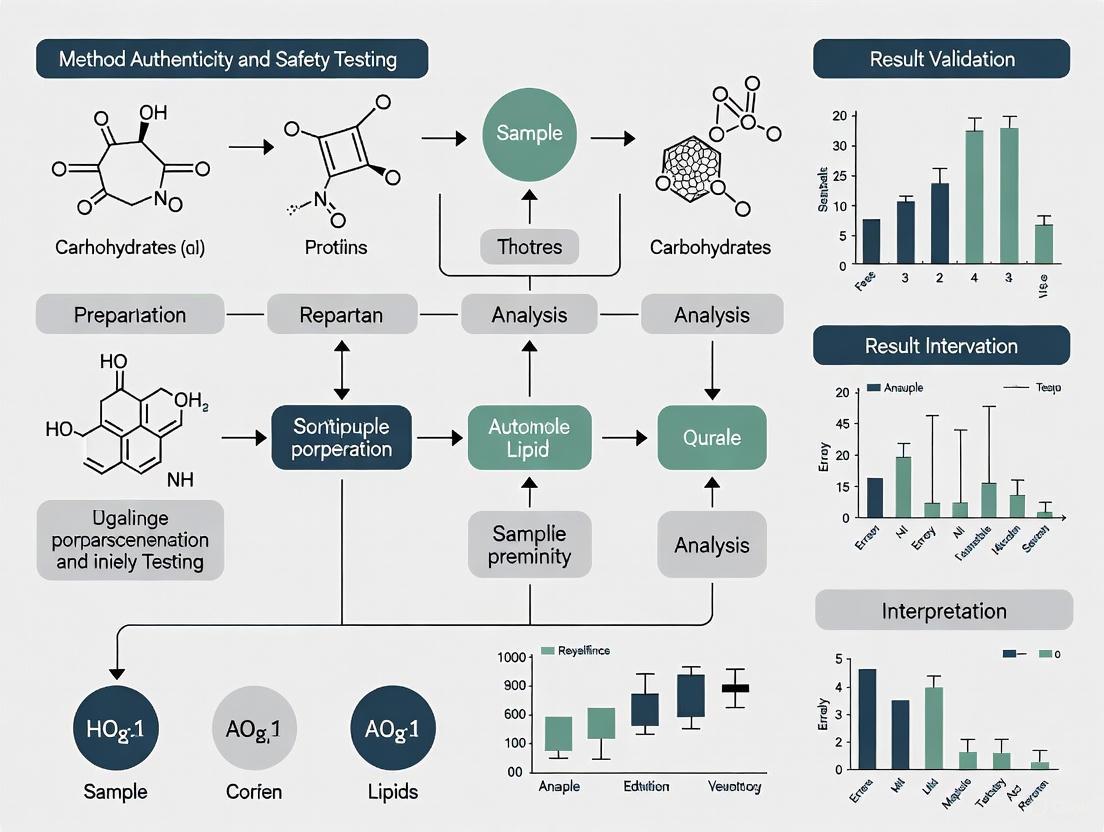

Food Protection Framework Diagram

In food testing, two analytical philosophies have emerged, each tailored to address fundamentally different challenges. Food safety testing is governed by a philosophy of definitive safety limits, seeking clear, binary answers against established regulatory thresholds. In contrast, food authenticity testing operates on probabilistic authenticity patterns, dealing with complex, multivariate data to verify claims about origin, composition, and production methods [10] [11]. This distinction arises from their core objectives: safety testing protects consumers from immediate health risks, while authenticity testing safeguards against economic fraud and ensures supply chain transparency [12].

The global food authenticity testing market, projected to grow from USD 8.49 billion in 2024 to USD 13.45 billion by 2032, reflects increasing recognition of both paradigms [12]. This growth is driven by rising consumer demand for transparency, technological advancements in analytical instrumentation, and increasingly sophisticated food fraud incidents that challenge traditional testing approaches [13] [11]. The philosophical divergence between these approaches influences every aspect of method development, validation, and application in modern food laboratories.

Methodological Foundations: Contrasting Analytical Approaches

Definitive Safety Limits in Food Safety Testing

Food safety testing employs methodologies designed to yield unambiguous results against predetermined regulatory thresholds. These methods focus on detecting and quantifying specific hazardous substances, including pathogens, chemical contaminants, allergens, and unauthorized additives [14]. The analytical philosophy is fundamentally binary – results either comply with safety limits or they do not, leaving little room for interpretation.

Regulatory frameworks worldwide enforce this definitive approach. Recent updates for 2025 include the USDA's proposed crackdown on Salmonella with new lower thresholds and the FDA's potential ban on Red Dye No. 3 following California's lead [14]. These regulations mandate specific analytical approaches that provide quantitative data with high precision and accuracy at established detection limits. The methodological emphasis is on specificity and sensitivity for targeted analytes, with validation parameters including linearity, accuracy, precision, and robustness determined for each defined substance [11].

Probabilistic Patterns in Food Authenticity Testing

Food authenticity verification relies on recognizing complex patterns rather than quantifying individual compounds. This approach utilizes multivariate data from advanced analytical techniques to build classification models that can distinguish authentic products from fraudulent ones based on subtle compositional differences [10] [15].

Table 1: Core Analytical Techniques in Food Authenticity Testing

| Technique Category | Specific Technologies | Application Examples | Pattern Type |
|---|---|---|---|
| Mass Spectrometry | LC-MS, DART-MS, ICP-MS, REIMS | Geographical origin verification, adulteration detection [16] [15] | Spectral fingerprints and elemental profiles |
| Molecular Spectroscopy | NMR, FTIR, NIR | Metabolic profiling, variety discrimination [10] [11] | Spectral patterns and metabolic fingerprints |
| Genomics | DNA barcoding, PCR, next-generation sequencing | Species identification, mislabeling detection [12] | Genetic sequence patterns |
| Isotope Analysis | IRMS, SNIF-NMR | Geographic origin, agricultural practices [12] | Isotopic ratio patterns |

The probabilistic nature of these techniques emerges from their reliance on chemometric models and machine learning algorithms to interpret complex datasets. Unlike safety testing with its definitive limits, authenticity assessment employs pattern recognition algorithms including Principal Component Analysis (PCA), Partial Least Squares Discriminant Analysis (PLS-DA), and Random Forests to classify products based on multivariate signatures [17] [15]. These models provide probability estimates rather than binary answers, reflecting the inherent complexity of natural products and the subtle nature of food fraud.

Experimental Paradigms: Case Studies in Method Application

Definitive Safety Limit Application: Allergen Testing

The detection of undeclared allergens exemplifies the definitive safety limit approach. Methods like ELISA (Enzyme-Linked Immunosorbent Assay) and PCR target specific allergenic proteins or DNA sequences with well-defined detection limits and binary outcomes – allergens are either present above regulatory thresholds or not [12]. With sesame recently added to the list of major allergens, manufacturers must implement testing protocols that provide definitive answers about its presence or absence, driving method development toward greater specificity and lower detection limits [14].

Experimental protocols for allergen detection emphasize quantification precision at critical threshold levels. For example, immunoassay-based methods undergo rigorous validation to establish Limit of Detection (LOD) and Limit of Quantification (LOQ) parameters, with results directly comparable to regulatory limits such as the 0.1-1.0 mg/kg range for many allergenic proteins [11]. This approach leaves no room for probabilistic interpretation when consumer health is at stake.
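One widely used convention for estimating these parameters takes the standard deviation of blank responses and the slope of the calibration curve, with LOD = 3.3σ/S and LOQ = 10σ/S. The sketch below applies that convention; it is an assumption that this is the approach intended here, since the source does not say how LOD and LOQ are derived, and all numbers in the example are hypothetical.

```python
from statistics import mean, stdev


def calibration_slope(concentrations, responses):
    """Ordinary least-squares slope of response versus concentration."""
    mx, my = mean(concentrations), mean(responses)
    sxx = sum((x - mx) ** 2 for x in concentrations)
    sxy = sum((x - mx) * (y - my) for x, y in zip(concentrations, responses))
    return sxy / sxx


def lod_loq(blank_responses, concentrations, responses):
    """Return (LOD, LOQ) in concentration units using 3.3*sigma/S and 10*sigma/S."""
    sigma = stdev(blank_responses)          # noise estimated from blanks
    slope = calibration_slope(concentrations, responses)
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    return 3.3 * sigma / slope, 10.0 * sigma / slope


if __name__ == "__main__":
    # Hypothetical ELISA-style calibration (mg/kg versus absorbance).
    blanks = [0.010, 0.012, 0.008, 0.011, 0.009]
    conc = [0.5, 1.0, 2.0, 4.0]
    resp = [0.05, 0.10, 0.20, 0.40]
    lod, loq = lod_loq(blanks, conc, resp)
    print(f"LOD = {lod:.3f} mg/kg, LOQ = {loq:.3f} mg/kg")
```

Other conventions exist (for example, signal-to-noise ratios or the residual standard deviation of the regression), and the applicable validation guideline determines which one is acceptable.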

Probabilistic Pattern Application: Geographical Origin Verification

A comprehensive study on salmon origin authentication demonstrates the probabilistic pattern approach. Researchers analyzed 522 salmon samples spanning several geographic origins (Alaska, Norway, Iceland, Scotland) and two production methods (farmed, wild-caught) using two mass spectrometry platforms: Rapid Evaporative Ionisation Mass Spectrometry (REIMS) and Inductively Coupled Plasma Mass Spectrometry (ICP-MS) [15].

Table 2: Experimental Results from Salmon Origin Authentication Study

| Analytical Platform | Data Type | Classification Accuracy | Key Discriminatory Features |
|---|---|---|---|
| REIMS | Lipidomic profiles | 100% cross-validation accuracy [15] | 18 lipid markers including unsaturated fatty acids and glycerophospholipids |
| ICP-MS | Elemental profiles | Significant regional differentiation [15] | 9 elemental markers reflecting water and feed composition |
| Mid-level data fusion | Combined lipid and element patterns | 100% test set accuracy (17/17 samples) [15] | Comprehensive biochemical signature |

The experimental workflow involved sample analysis using both platforms, followed by data preprocessing and fusion at the feature level. The fused dataset was then subjected to multivariate analysis including PCA and OPLS-DA to identify discriminant patterns. This approach successfully identified 18 robust lipid markers and 9 elemental markers that collectively provided a probabilistic signature of provenance [15]. The method's strength lies not in quantifying specific compounds but in recognizing the complex pattern that authenticates origin.
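Fusion "at the feature level" can be sketched as follows: selected marker features from each platform are scaled within their own block and then concatenated sample by sample. This is a simplified illustration, not the study's actual pipeline; the function names, the autoscaling choice, and the feature names in the example are all hypothetical.

```python
from statistics import mean, stdev


def autoscale(block):
    """Mean-center and unit-variance scale each column of one data block."""
    columns = list(zip(*block))
    means = [mean(col) for col in columns]
    sds = [stdev(col) for col in columns]
    return [
        [(v - m) / s if s > 0 else 0.0 for v, m, s in zip(row, means, sds)]
        for row in block
    ]


def select_features(block, names, keep):
    """Keep only the named marker columns, in the order given by `keep`."""
    indices = [names.index(name) for name in keep]
    return [[row[i] for i in indices] for row in block]


def mid_level_fusion(blocks):
    """Fuse blocks given as (matrix, feature_names, markers_to_keep).

    All matrices must list the same samples in the same row order.
    """
    n_samples = len(blocks[0][0])
    fused = [[] for _ in range(n_samples)]
    fused_names = []
    for matrix, names, keep in blocks:
        if len(matrix) != n_samples:
            raise ValueError("all blocks must contain the same samples")
        scaled = autoscale(select_features(matrix, names, keep))
        for fused_row, row in zip(fused, scaled):
            fused_row.extend(row)
        fused_names.extend(keep)
    return fused, fused_names


if __name__ == "__main__":
    # Hypothetical data: 3 samples measured on two platforms.
    lipids = [[1.0, 2.0, 3.0], [2.0, 1.0, 5.0], [3.0, 4.0, 4.0]]
    elements = [[0.1, 9.0], [0.3, 7.0], [0.2, 8.0]]
    fused, names = mid_level_fusion([
        (lipids, ["lipid_a", "lipid_b", "lipid_c"], ["lipid_a", "lipid_c"]),
        (elements, ["element_x", "element_y"], ["element_y"]),
    ])
    print(names, fused)
```

Scaling each block before concatenation keeps a platform with large raw intensities from dominating the fused model; the fused matrix would then go to PCA or OPLS-DA in dedicated chemometrics software.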

Figure 1: Experimental Workflow for Probabilistic Food Authentication

Validation Frameworks: Contrasting Method Verification Approaches

Validation of Definitive Safety Methods

Method validation for safety testing follows established protocols with quantitatively defined performance parameters. These include accuracy (closeness of agreement with the true value), precision (repeatability and reproducibility), specificity (ability to distinguish the target analyte from other matrix components), linearity (proportionality between concentration and response), range (interval between the lowest and highest concentrations over which the method performs acceptably), LOD (lowest detectable amount), and LOQ (lowest quantifiable amount) [11].

Regulatory bodies provide explicit guidance on validation criteria, with acceptance thresholds defined for each parameter. For instance, precision is often required to demonstrate ≤15% relative standard deviation, while accuracy must fall within ±15% of the true value [14]. This quantitative framework ensures methods consistently produce reliable results against established safety limits, with validation data providing definitive evidence of methodological competence.
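The two acceptance criteria mentioned above reduce to simple arithmetic on replicate measurements of a sample with a known concentration. The following sketch assumes replicate results from a spiked or reference sample; the function names and example values are hypothetical, and the 15% defaults simply mirror the figures quoted in the text.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (precision) in percent."""
    return 100.0 * stdev(values) / mean(values)


def percent_bias(values, true_value):
    """Signed deviation of the mean from the true value, in percent."""
    return 100.0 * (mean(values) - true_value) / true_value


def meets_criteria(values, true_value, max_rsd=15.0, max_bias=15.0):
    """Check precision and accuracy against the stated acceptance limits."""
    return (percent_rsd(values) <= max_rsd
            and abs(percent_bias(values, true_value)) <= max_bias)


if __name__ == "__main__":
    # Hypothetical replicates (ug/kg) of a sample spiked at 10.0 ug/kg.
    replicates = [9.6, 10.2, 10.5, 9.9, 10.1]
    print(f"RSD  = {percent_rsd(replicates):.2f}%")
    print(f"Bias = {percent_bias(replicates, 10.0):+.2f}%")
    print("Acceptable:", meets_criteria(replicates, 10.0))
```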

Validation of Probabilistic Authenticity Methods

Authenticity method validation employs fundamentally different parameters focused on classification performance rather than quantitative accuracy. Key validation metrics include classification accuracy (percentage of correctly classified samples), sensitivity (true positive rate), specificity (true negative rate), cross-validation error (performance on unseen data), and model robustness (consistency across different sample batches) [17] [15].
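For a two-class problem, the first three metrics follow directly from the counts of true and false positives and negatives. The sketch below treats "authentic" as the positive class; that labeling, the function name, and the example labels are assumptions made for illustration.

```python
def classification_metrics(y_true, y_pred, positive="authentic"):
    """Accuracy, sensitivity, and specificity for a two-class problem."""
    if len(y_true) != len(y_pred):
        raise ValueError("label lists must have equal length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("both classes must be present in y_true")
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),   # true positive rate
        "specificity": tn / (tn + fp),   # true negative rate
    }


if __name__ == "__main__":
    # Hypothetical reference labels and model predictions.
    truth = ["authentic"] * 4 + ["adulterated"] * 4
    predicted = ["authentic", "authentic", "authentic", "adulterated",
                 "adulterated", "adulterated", "adulterated", "authentic"]
    print(classification_metrics(truth, predicted))
```

Reporting sensitivity and specificity separately matters when classes are imbalanced, because overall accuracy can look high even if the rarer class (often the fraudulent one) is poorly detected.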

The validation process for probabilistic methods emphasizes model performance stability through techniques such as k-fold cross-validation and external validation with independent sample sets. For example, the salmon authentication study achieved 100% classification accuracy in both cross-validation and external testing with 17 supermarket samples, demonstrating robust model performance [15]. This approach validates the pattern recognition capability rather than quantitative precision.
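The mechanics of k-fold cross-validation can be shown with a deliberately simple classifier. The sketch below uses a nearest-centroid rule as a stand-in for the PLS-DA or OPLS-DA models used in practice, so it illustrates the validation loop rather than the cited study's model; the function names and toy data are hypothetical.

```python
import random
from collections import defaultdict
from math import dist


def fit_centroids(X, y):
    """Compute the mean feature vector of each class."""
    groups = defaultdict(list)
    for row, label in zip(X, y):
        groups[label].append(row)
    return {
        label: [sum(col) / len(rows) for col in zip(*rows)]
        for label, rows in groups.items()
    }


def predict(centroids, row):
    """Assign the class whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: dist(centroids[label], row))


def k_fold_accuracy(X, y, k=5, seed=0):
    """Fraction of samples classified correctly when each is held out once."""
    indices = list(range(len(X)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    correct = 0
    for fold in folds:
        held_out = set(fold)
        train = [i for i in indices if i not in held_out]
        # The model is refitted without the held-out samples in every fold.
        centroids = fit_centroids([X[i] for i in train], [y[i] for i in train])
        correct += sum(predict(centroids, X[i]) == y[i] for i in fold)
    return correct / len(X)


if __name__ == "__main__":
    # Hypothetical two-feature profiles for two origins.
    origin_a = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0], [0.5, 0.5]]
    origin_b = [[a + 10.0, b + 10.0] for a, b in origin_a]
    X = origin_a + origin_b
    y = ["A"] * 5 + ["B"] * 5
    print("5-fold accuracy:", k_fold_accuracy(X, y))
```

Cross-validation reuses the same sample collection, so it tends to be more optimistic than external validation with independently sourced samples, which is why the two are usually reported together.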

Technological Requirements: Instrumentation and Data Analysis

The Scientist's Toolkit: Essential Research Solutions

Table 3: Essential Research Solutions for Food Authenticity Testing

| Tool Category | Specific Solutions | Function in Authenticity Testing |
|---|---|---|
| Mass Spectrometry Platforms | DART-MS, LC-MS, ICP-MS, REIMS [16] [15] | Provides lipidomic, elementomic, and metabolic profiling for pattern generation |
| Molecular Spectrometers | NMR, FTIR, NIR Spectrometers [10] [11] | Enables non-destructive spectral analysis and metabolic fingerprinting |
| Data Analysis Software | MetaboScape, SIMCA, Python/R with ML libraries [17] [16] | Facilitates chemometric analysis, machine learning, and pattern recognition |
| DNA Analysis Tools | PCR Systems, DNA Sequencers, Barcoding Databases [12] | Supports species identification and genetic authentication |
| Reference Databases | LipidMaps, Chemical Metabolite Libraries, Spectral Databases [15] | Enables compound identification and biomarker validation |

Emerging Technological Integration

The integration of artificial intelligence with advanced analytical technologies represents the future of food authenticity testing. AI algorithms, particularly machine learning and deep learning, can analyze complex magnetic resonance and mass spectrometry data to extract subtle patterns indicative of authenticity [10] [17]. Explainable AI (XAI) approaches are gaining prominence to address the "black box" nature of complex models, making probabilistic conclusions more transparent and actionable for researchers and regulators [17].

Data fusion strategies represent another technological advancement, where multiple analytical techniques are combined to enhance classification accuracy. The salmon study reported that mid-level data fusion of REIMS and ICP-MS data classified all 17 external test samples correctly, outperforming single-platform methods [15]. This approach leverages complementary data sources to create more robust probabilistic models capable of detecting sophisticated fraud.

Figure 2: AI-Enhanced Data Fusion for Food Authentication

The philosophical divide between definitive safety limits and probabilistic authenticity patterns represents complementary rather than contradictory approaches to food analysis. Safety testing provides the essential foundation for consumer protection through binary, regulation-driven methods that yield unambiguous results. Authenticity testing adds a crucial layer of supply chain integrity through multivariate, pattern-based approaches that detect subtle fraud patterns.

The future of food testing lies in recognizing the strengths and limitations of each paradigm while leveraging technological advancements to enhance both approaches. The integration of AI with advanced analytical platforms, the development of explainable machine learning models, and the implementation of data fusion strategies will continue to blur the lines between these philosophies while enhancing the accuracy, efficiency, and scope of food testing [10] [17]. This evolution will support the development of a more transparent, safe, and authentic global food system that addresses both health protection and economic integrity concerns.

For researchers and method development professionals, understanding these philosophical differences is crucial for selecting appropriate analytical strategies, validation approaches, and technological solutions based on the specific testing objective. Rather than favoring one approach over the other, the most effective food testing programs intelligently integrate both paradigms to address the complex challenges of modern food supply chains.

In the contemporary global food industry, two powerful and often interconnected forces are shaping analytical science and regulatory agendas: the unequivocal mandate for food safety and the growing demand for food authenticity. While food safety testing focuses on protecting consumers from harmful biological, chemical, or physical agents, food authenticity verification ensures that food is genuine, accurately labeled, and free from economically motivated adulteration (EMA) [18] [19]. The distinction is critical; safety failures pose direct public health risks, while authenticity breaches erode consumer trust, violate labeling laws, and can indirectly lead to health hazards, as exemplified by the 2008 melamine-in-milk incident which caused infant deaths and illnesses [19]. For researchers and scientists, the methodological approaches, regulatory frameworks, and analytical technologies for these two domains are converging, yet retain distinct characteristics. This guide provides a comparative analysis of the key drivers, focusing on the pivotal role of method validation in building a food system that is both safe and trustworthy.

Comparative Analysis of Key Drivers

The operational and strategic priorities for food testing are dictated by a combination of regulatory mandates and market-driven demands. The table below summarizes the core drivers for safety and authenticity testing, highlighting their distinct focuses and overlaps.

Table 1: Key Drivers for Food Safety and Authenticity Testing

| Driver | Food Safety Testing | Food Authenticity Testing |
|---|---|---|
| Primary Objective | To protect public health from immediate harm [19]. | To ensure food is genuine, accurately labeled, and to prevent fraud [20]. |
| Core Regulatory Focus | Compliance with preventive controls and defined safety limits (e.g., FSMA, EU 2023/915 on contaminants) [21]. | Prevention of mislabeling and economic fraud; verification of label claims (e.g., organic, geographic origin) [19] [22]. |
| Consumer Concern | Avoidance of illness, injury, or long-term health effects [18]. | Trust, transparency, receiving value for money, and ethical considerations [18] [23]. |
| Typical Analytes | Pathogens, mycotoxins, pesticide residues, heavy metals, PFAS [21]. | Species adulteration, geographic origin, production method (e.g., organic), undeclared substitutes [20] [19]. |
| Common Methodologies | Targeted methods for known contaminants (e.g., LC-MS/MS for mycotoxins, PCR for pathogens) [21]. | Combination of targeted (for known fraud) and non-targeted methods (for unknown fraud), e.g., DNA barcoding, NMR, Isotope Ratio MS [24] [20] [19]. |

Analytical Methodologies: A Comparative Workflow

The fundamental difference in analytical strategy between safety and authenticity testing often lies in the choice between targeted and non-targeted approaches. Safety protocols predominantly rely on targeted analysis, which quantifies specific, known hazardous compounds. In contrast, authenticity investigations increasingly employ non-targeted analysis to screen for unexpected adulterants or verify complex product profiles [19].

Experimental Protocol: Non-Targeted Analysis for Honey Authenticity

Honey is one of the most adulterated foods globally, making it a prime subject for authenticity research [21]. The following protocol, based on current methodologies, outlines a non-targeted approach using liquid chromatography–high-resolution mass spectrometry (LC-HRMS) to create a chemical fingerprint.

1. Sample Preparation:

- Weigh 1.0 g of honey into a 50 mL centrifuge tube.

- Add 10 mL of a water:acetonitrile (1:1 v/v) solution containing 0.1% formic acid.

- Vortex vigorously for 2 minutes until fully dissolved.

- Subject the mixture to ultrasonic extraction for 15 minutes at room temperature.

- Centrifuge at 10,000 × g for 10 minutes.

- Filter the supernatant through a 0.22 μm nylon membrane into an LC vial for analysis [21].

2. LC-HRMS Analysis:

- Column: Reversed-phase C18 column (e.g., 2.1 × 100 mm, 1.8 μm).

- Mobile Phase: A) Water with 0.1% formic acid; B) Acetonitrile with 0.1% formic acid.

- Gradient: 5% B to 95% B over 25 minutes, hold for 5 minutes.

- Flow Rate: 0.3 mL/min.

- Mass Spectrometer: High-resolution mass spectrometer (e.g., Q-TOF) in positive and negative electrospray ionization (ESI) modes.

- Data Acquisition: Full-scan mode from m/z 50 to 1200 with data-dependent MS/MS acquisition for fragmentation of top ions [21].

3. Data Processing and Model Building:

- Convert raw data files to an open format (e.g., mzML).

- Perform peak picking, alignment, and normalization using informatics software (e.g., XCMS, MS-DIAL).

- Statistically analyze the resulting data matrix (thousands of molecular features) using principal component analysis (PCA) and orthogonal projections to latent structures-discriminant analysis (OPLS-DA).

- Construct a statistical model that differentiates authentic honey from adulterated samples based on their unique chemical fingerprints [21].

Figure 1: Non-Targeted Workflow for Honey Authentication
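The LC gradient in the protocol above (5% B to 95% B over 25 minutes, then a 5-minute hold at 0.3 mL/min) can be written as a small function, which is handy for checking a method file or estimating solvent use. This is a sketch under the assumption of a single linear ramp; re-equilibration time is not stated in the protocol and is therefore not modeled, and the function names are hypothetical.

```python
def percent_b(t_min, start=5.0, end=95.0, ramp_min=25.0, hold_min=5.0):
    """Mobile phase B (%) at time t for a linear ramp followed by a hold."""
    if t_min < 0 or t_min > ramp_min + hold_min:
        raise ValueError("time outside the programmed run")
    if t_min <= ramp_min:
        return start + (end - start) * t_min / ramp_min
    return end


def solvent_b_volume_ml(flow_ml_min=0.3, start=5.0, end=95.0,
                        ramp_min=25.0, hold_min=5.0):
    """Volume of solvent B consumed per injection over ramp plus hold."""
    mean_ramp_fraction = (start + end) / 2.0 / 100.0  # average of a linear ramp
    hold_fraction = end / 100.0
    return flow_ml_min * (mean_ramp_fraction * ramp_min + hold_fraction * hold_min)


if __name__ == "__main__":
    for t in (0, 12.5, 25, 30):
        print(f"t = {t:>4} min -> {percent_b(t):.1f}% B")
    print(f"Solvent B per run: {solvent_b_volume_ml():.3f} mL")
```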

Experimental Protocol: Targeted PFAS Analysis in Seafood

Per- and polyfluoroalkyl substances (PFAS) are persistent contaminants, and their analysis in seafood is a critical safety application. This protocol leverages targeted LC-MS/MS with automated sample preparation.

1. Automated Sample Preparation (QuEChERS with EMR):

- Homogenize 2 g of seafood tissue (e.g., fish fillet) with 10 mL of 1% acetic acid in acetonitrile.

- Add enhanced matrix removal (EMR) sorbent kits and internal standard mix.

- Shake vigorously for 1 minute.

- On an automated robotic system, the mixture is centrifuged, and an aliquot of the supernatant is transferred to a vial for analysis. This automation achieves approximately 80% time savings and 50% cost savings compared to conventional methods while maintaining accuracy [21].

2. LC-MS/MS Analysis:

- Chromatography: Use a reversed-phase column with a gradient elution optimized for PFAS separation.

- Mass Spectrometry: Operate a triple quadrupole (QQQ) mass spectrometer in multiple reaction monitoring (MRM) mode.

- Quantification: Measure specific precursor ion > product ion transitions for each target PFAS compound (e.g., PFOA, PFOS). The use of an internal standard corrects for matrix effects and recovery variations [21].

3. Compliance Assessment:

- Compare the quantified levels of each PFAS compound against the established regulatory limits or guidelines, such as those being intensively developed by the FDA and EFSA [21].
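The quantification and compliance steps above can be sketched as a single-point internal-standard calculation followed by a comparison against limits. The relative response factor default, the function names, the peak areas, and especially the limit values below are placeholders for illustration; they are not regulatory limits, and a real method would normally use a multi-level calibration curve.

```python
def quantify_ng_per_g(area_analyte, area_internal_std, internal_std_ng,
                      sample_mass_g, relative_response=1.0):
    """Analyte concentration from the analyte/internal-standard area ratio."""
    if area_internal_std <= 0 or sample_mass_g <= 0 or relative_response <= 0:
        raise ValueError("areas, mass, and response factor must be positive")
    ratio = area_analyte / area_internal_std
    return ratio * internal_std_ng / (relative_response * sample_mass_g)


def assess_compliance(results_ng_g, limits_ng_g):
    """Label each analyte as 'complies' or 'exceeds' relative to its limit."""
    return {
        analyte: "exceeds" if value > limits_ng_g[analyte] else "complies"
        for analyte, value in results_ng_g.items()
    }


if __name__ == "__main__":
    # Hypothetical peak areas for a 2 g sample spiked with 4 ng of each
    # labeled internal standard.
    results = {
        "PFOA": quantify_ng_per_g(50_000, 100_000, 4.0, 2.0),
        "PFOS": quantify_ng_per_g(300_000, 100_000, 4.0, 2.0),
    }
    # Placeholder limits, NOT actual regulatory values.
    placeholder_limits = {"PFOA": 2.0, "PFOS": 2.0}
    print(results)
    print(assess_compliance(results, placeholder_limits))
```

Because the labeled internal standard is added before extraction, the area ratio compensates for both recovery losses and ionization suppression, which is why the same ratio can be carried through to the final concentration.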

The Research Toolkit: Essential Reagents and Materials

Successful method development and validation in both safety and authenticity research rely on a suite of specialized reagents and materials.

Table 2: Essential Research Reagent Solutions for Food Testing

| Item | Function | Application Example |
|---|---|---|
| Enhanced Matrix Removal (EMR) Sorbents | Selective removal of matrix interferences like lipids and pigments for cleaner extracts. | PFAS analysis in seafood, pesticide residue analysis in produce [21]. |
| Stable Isotope-Labeled Internal Standards | Correct for matrix effects and analyte loss during sample preparation; enable precise quantification. | Targeted quantification of contaminants (e.g., mycotoxins, PFAS) and authenticity markers [21]. |
| DNA Extraction Kits | Isolate high-quality, inhibitor-free DNA from complex and processed food matrices. | Species identification in meat and seafood via PCR and DNA barcoding [20] [19]. |
| Certified Reference Materials (CRMs) | Calibrate instruments and validate method accuracy for specific analytes in defined matrices. | Method development and validation for both contaminants and authentic food profiles [24]. |
| Q-TOF Mass Spectrometer Calibration Solution | Ensure mass accuracy and reproducibility during high-resolution mass spectrometry runs. | Non-targeted fingerprinting for authenticity (honey, olive oil) and suspect screening for contaminants [21]. |

The Validation Framework and Regulatory Landscape

Method validation is the cornerstone of defensible data, whether for regulatory compliance or dispute resolution in international trade. Key organizations are actively developing standards to harmonize approaches.

AOAC INTERNATIONAL: Its Food Authenticity Methods (FAM) program focuses on identifying and validating analytical tools to combat economically motivated adulteration. The program develops Standard Method Performance Requirements (SMPRs) for high-risk commodities like olive oil, milk, and honey, with work expanding to botanicals, spices, meat, and seafood [24] [22]. AOAC Official Methods undergo rigorous multi-laboratory validation, making them highly defensible [25].

International Organization for Standardization (ISO): ISO committee ISO/TC 34 develops standardized methods for food products. Key sub-committees include ISO/TC34/SC16 for horizontal methods for molecular biomarker analysis (e.g., DNA-based meat speciation) and ISO/TC34/SC19 for bee products, including honey standards [22].

Codex Alimentarius: This international food standards body publishes recommended methods of analysis. Its work is increasingly relevant to authenticity, with an electronic working group (EWG) formed to create definitions and scope for Food Fraud, signaling its formal incorporation into the global food code [22].

The interplay of these forces and methodologies can be visualized as a strategic framework for researchers.

Figure 2: Strategic Framework for Food Testing R&D

The landscape of food testing is being reshaped by the powerful, parallel demands for safety and authenticity. For researchers and scientists, the path forward involves recognizing both the distinct and synergistic nature of these fields. Targeted methods, honed for regulatory compliance, remain the bedrock of food safety. In authenticity, non-targeted fingerprinting approaches represent the innovative frontier for detecting novel fraud. The unifying element is a steadfast commitment to rigorous method validation under internationally recognized standards from bodies like AOAC, ISO, and Codex. By leveraging advanced technologies, from LC-HRMS and DNA sequencing to AI-driven data analysis, the scientific community can provide the reliable data needed to protect public health, uphold consumer trust, and ensure the integrity of the global food supply chain.

The integrity of the global food supply is challenged by a diverse and evolving array of threats, ranging from microbiological pathogens that directly impact public health to economically motivated adulteration (EMA) that undermines product authenticity and consumer trust. While food safety testing targets known hazardous contaminants, food authenticity testing confronts a more insidious challenge: deliberate, sophisticated fraud designed to evade detection. The global food authenticity testing market, valued at USD 8.39 billion in 2024 and projected to reach USD 15.4 billion by 2035, reflects the growing emphasis on combating these threats [26]. Economic adulteration and food counterfeiting are estimated to cost the industry US$30–40 billion annually, highlighting the scale of the problem [27]. This guide compares the methodologies, validation frameworks, and technological solutions deployed against these dual fronts, providing researchers and scientists with a critical analysis of their relative capabilities and applications.

Comparative Analysis of Testing Objectives and Methodologies

Food safety and authenticity testing, while complementary, are driven by fundamentally different objectives, which in turn dictate their methodological approaches. The table below summarizes the core distinctions.

Table 1: Core Distinctions Between Food Safety and Food Authenticity Testing

| Aspect | Food Safety Testing | Food Authenticity Testing |

|---|---|---|

| Primary Objective | Protect public health by detecting hazardous contaminants [28] | Verify product claims and detect economically motivated adulteration [26] |

| Regulatory Driver | Public health legislation (e.g., FSMA, MAHA Strategy) [21] | Labeling laws, consumer protection, and brand integrity [26] |

| Analytical Question | "Is a specific, known hazardous substance present above a safe limit?" [29] | "Does this sample conform to its label claims and is it what it claims to be?" [29] |

| Result Interpretation | Often binary (compliant/non-compliant against a regulatory limit) [29] | Often probabilistic and comparative (likelihood of being authentic) [29] |

| Key Challenge | Keeping pace with emerging contaminants (e.g., PFAS, new pathogens) [21] | The "unknown unknown" nature of fraud; requires untargeted approaches [29] |

The Paradigm of Targeted vs. Non-Targeted Analysis

This fundamental divergence in objective leads to a critical difference in analytical strategy:

- Targeted Analysis (Safety & Simple Authenticity): This approach is the mainstay of food safety testing and is also used for some authenticity applications, such as testing for an illegal dye like Sudan Red in spices or performing meat speciation [29] [28]. It is a hypothesis-driven method that answers a narrowly defined question, for example: "Is this specific compound present, and if so, at what concentration?" The result is measured against a defined limit [29].

- Non-Targeted Analysis (Complex Authenticity): For more sophisticated authenticity questions like geographic origin, organic status, or subtle adulteration, a non-targeted approach is required. This technique does not look for a specific compound. Instead, it uses advanced instrumentation like Mass Spectrometry (MS), Nuclear Magnetic Resonance (NMR), or spectroscopy to generate a chemical "fingerprint" of a sample [29] [21]. Machine learning models are then trained on these fingerprints from known authentic samples to create a statistical model of "normal." Unknown samples are compared against this model to determine their likelihood of being authentic [29]. As John Points of the Food Authenticity Network notes, the results are often "fuzzy" and probabilistic, not cut-and-dried [29].
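
To make the idea of a statistical model of "normal" concrete, the sketch below builds a very simple reference model from authentic fingerprints and scores unknown samples by their distance from it. It is an illustrative simplification, assuming a per-feature z-score distance in place of the machine learning models used in practice; the intensities, the `1.5` safety margin, and all function names are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev

def fit_reference(fingerprints):
    """Per-feature mean and standard deviation of the authentic samples."""
    columns = list(zip(*fingerprints))
    return [mean(c) for c in columns], [stdev(c) for c in columns]

def distance(sample, means, stdevs):
    """Root-mean-square z-score of a sample relative to the reference set."""
    z2 = [((x - m) / s) ** 2 for x, m, s in zip(sample, means, stdevs)]
    return sqrt(sum(z2) / len(z2))

# Hypothetical normalized intensities for three spectral features,
# measured on samples of verified authenticity.
authentic = [
    [0.52, 0.31, 0.17],
    [0.50, 0.33, 0.17],
    [0.55, 0.29, 0.16],
    [0.51, 0.32, 0.17],
    [0.53, 0.30, 0.17],
    [0.49, 0.33, 0.18],
]
means, stdevs = fit_reference(authentic)

# Threshold: the largest distance seen in the training set times an
# arbitrary illustrative safety margin.
threshold = 1.5 * max(distance(s, means, stdevs) for s in authentic)

def score(sample):
    """Return (distance, verdict) for an unknown sample."""
    d = distance(sample, means, stdevs)
    verdict = "consistent with reference" if d <= threshold else "atypical"
    return d, verdict

print(score([0.51, 0.32, 0.17]))
print(score([0.30, 0.30, 0.40]))
```

The output is a distance from the reference population rather than a concentration, which is why results of this kind are interpreted as degrees of likelihood rather than pass/fail values.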

Method Validation Frameworks: Ensuring Reliability and Relevance

The validation of analytical methods ensures that they are fit for their intended purpose, a process that differs significantly between the well-established pathways for safety methods and the emerging frameworks for non-targeted authenticity techniques.

Established Pathways for Safety and Targeted Methods

For food safety and targeted methods, validation is a mature process. Organizations like AOAC INTERNATIONAL provide standardized guidelines and performance testing programs (e.g., the Performance Tested Methods (PTM) program) to establish method performance characteristics like selectivity, accuracy, precision, and robustness [30]. A key requirement is the use of appropriate Reference Materials (RMs) to ensure metrological traceability and comparability of results across laboratories and time [27]. Laboratories operating under standards like ISO/IEC 17025 must demonstrate compliance with these validation requirements, creating a consistent and reliable foundation for safety and regulatory testing [28].

Emerging Frameworks for Non-Targeted Authenticity Methods

The validation of non-targeted methods presents unique challenges that are still being addressed by the scientific and standards communities. Key considerations include:

- Database Quality and Robustness: The model is only as good as the data it's built on. The training set must be large enough, cover multiple seasons, and have guaranteed authenticity to avoid "baking fraud into the model" from the start [29]. Factors like different fertilizers, weather patterns, and soil types must be accounted for.

- Model Applicability: A model designed to differentiate apples from two regions of New Zealand may fail entirely if presented with a French apple, highlighting the need for careful definition of the method's scope [29].

- Harmonization of Statistics: There is a growing need to harmonize the statistical models and performance characteristics (e.g., probability of detection) used in validation to ensure comparability across different non-targeted platforms [31].

- Research Grade Materials: The development of "research grade test materials" or "representative test materials" is recommended to harmonize untargeted testing methods and improve inter-laboratory comparability, as the availability of traditional RMs for these applications is limited [27].
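
As an example of the kind of performance characteristic mentioned above, probability of detection (POD) can be estimated as the fraction of replicates that give a positive result, together with a confidence interval. The sketch below uses the Wilson score interval as one common choice; the replicate counts are hypothetical, and the choice of interval is an assumption rather than a requirement stated in the cited sources.

```python
from math import sqrt

def pod_with_interval(detected, tested, z=1.96):
    """Return (POD, lower, upper) using the Wilson score interval.

    detected: number of replicates flagged positive
    tested:   total number of replicates analysed
    z:        normal quantile (1.96 corresponds to roughly 95% confidence)
    """
    if tested <= 0 or not 0 <= detected <= tested:
        raise ValueError("need 0 <= detected <= tested and tested > 0")
    p = detected / tested
    denom = 1 + z ** 2 / tested
    centre = (p + z ** 2 / (2 * tested)) / denom
    half = z * sqrt(p * (1 - p) / tested + z ** 2 / (4 * tested ** 2)) / denom
    return p, max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical study: 18 of 20 adulterated test portions were flagged.
pod, lower, upper = pod_with_interval(18, 20)
print(f"POD = {pod:.2f} (approx. 95% CI {lower:.2f} to {upper:.2f})")
```

Reporting the interval alongside the point estimate makes results from different non-targeted platforms easier to compare, since small studies produce visibly wider intervals.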

Comparative Data: Market Trends and Technological Adoption

The technological and market landscape for food testing is dynamic, shaped by both persistent challenges and new threats. The following tables synthesize key quantitative data and trends.

Table 2: Market Dynamics and Key Growth Areas

| Segment | Market Data & Trends | Key Drivers |

|---|---|---|

| Overall Authenticity Market | Valued at USD 8.39B (2024); Projected USD 15.4B (2035); CAGR 5.69% (2025-2035) [26] | Consumer awareness, stringent regulations, complex global supply chains [26] |

| Leading Technology | PCR-Based methods held 41.5% revenue share (2024) [26] | High precision for meat speciation and GMO detection [26] |

| Leading Food Category | Processed Foods held 40.3% market share (2024) [26] | Complex ingredient lists offer more opportunities for adulteration [26] |

| Top Testing Target | Meat Speciation was the largest segment (27.2% share in 2024) [26] | High economic incentive for substitution and mislabeling [26] |

Table 3: Analytical Techniques and Their Applications in Food Testing

| Technique | Primary Application | Principle & Strengths |

|---|---|---|

| DNA Sequencing (NGS) | Authenticity: Meat speciation, botanical identification [26] | Provides high-level precision for species verification [26] |

| Mass Spectrometry (LC-MS/MS) | Safety: Multi-residue pesticides, veterinary drugs. Authenticity: Non-targeted profiling [21] [26] | Highly sensitive; can be used for both targeted quantification and untargeted fingerprinting [29] [21] |

| Isotope Ratio MS (IRMS) | Authenticity: Geographic origin verification [29] | Measures natural isotopic ratios influenced by local geology and climate [29] |

| Nuclear Magnetic Resonance (NMR) | Authenticity: Profiling of honey, wine, fruit juices [29] | Excellent for analyzing sugars, alcohols; good for non-targeted screening [29] |

| Immunoassays (ELISA) | Safety: Allergen testing. Authenticity: Dairy adulteration [26] [32] | Rapid, cost-effective, and suitable for high-throughput screening [26] |

Emerging Threats and Analytical Responses

The threat landscape is not static. Testing protocols must continuously evolve to address new risks:

- PFAS ("Forever Chemicals"): There is intensifying regulatory focus on per- and polyfluoroalkyl substances (PFAS) in food and food contact materials. This drives innovations in sample preparation and detection to meet increasingly stringent guidelines, with methods now being developed for products like vegetables, seafood, and even wine and spirits [21].

- Functional Foods and Nutraceuticals: The booming market for foods fortified with adaptogens, omega-3s, and other nutraceuticals is raising concerns about over-fortification and ingredient authenticity, necessitating new testing protocols for these complex matrices [21].

- Automation and AI: Workforce shortages and the complexity of data analysis are being addressed through lab automation and Artificial Intelligence (AI). AI is being integrated into data processing tools to save time and reduce manual intervention, making sophisticated analysis more accessible [33].

The Scientist's Toolkit: Essential Research Reagents and Materials

The reliability of any food testing method, whether for safety or authenticity, hinges on the quality of the materials used in the analytical process. The following table details key solutions and resources.

Table 4: Essential Research Reagents and Materials for Food Testing

| Item | Function & Importance | Specific Examples & Notes |

|---|---|---|

| Certified Reference Materials (CRMs) | Calibration, method validation, quality control. Essential for ensuring metrological traceability [27]. | Used for targeted analytes (e.g., pesticide standards) and matrix-matched materials. |

| Research Grade Test Materials | Harmonization of non-targeted methods. Used as a common baseline for building and comparing statistical models [27]. | Critical for inter-laboratory studies and validating untargeted authenticity methods. |

| Enhanced Matrix Removal (EMR) Sorbents | Advanced sample cleanup. Removes co-extractives for cleaner analysis and reduced instrument maintenance [21]. | Part of QuEChERS workflows; shown to save ~80% time and ~50% cost in PFAS analysis of fish [21]. |

| DNA Barcoding Libraries | Reference databases for genetic identification. Allows for comparison of unknown sample DNA to known species [26]. | Vital for the accuracy of DNA-based authenticity testing for meat, fish, and botanicals. |

| Stable Isotope Standards | Calibration of IRMS instruments for geographic origin determination. Ensures accuracy of isotopic ratio measurements [29]. | Necessary for validating methods that trace products like wine or honey to their origin. |

The frontiers of food safety and authenticity testing, while historically distinct, are increasingly converging. The fight against sophisticated adulteration requires the probabilistic, data-driven approach of non-targeted authenticity methods, while the detection of emerging chemical contaminants like PFAS demands the sensitivity and precision of advanced safety testing technologies. The future of food integrity lies in the strategic integration of these disciplines. This will be powered by cross-sector collaboration between industry, academia, and regulators [33], investment in automation and AI to overcome workforce and data challenges [33], and a continued focus on harmonizing validation frameworks to ensure that new methods are not just technologically advanced, but also reliable, comparable, and fit for purpose in protecting global food supply chains [31].

In the realm of food integrity, safety and authenticity testing have historically operated as distinct disciplines with fundamentally different analytical paradigms. Food safety testing traditionally focuses on hazard identification and compliance monitoring for known contaminants, employing targeted methods with established thresholds. In contrast, food fraud detection operates in a probabilistic space of authenticity verification, increasingly relying on non-targeted techniques and pattern recognition to identify deviations from a genuine product profile [29]. This guide systematically compares the methodologies, validation frameworks, and technological implementations for researchers developing integrated risk assessment protocols that bridge these domains.

The fundamental distinction lies in their analytical questions: safety testing asks "Is this contaminant present above a dangerous level?" while authenticity testing asks "Does this sample match the expected profile of a genuine product?" [29]. This divergence necessitates different methodological approaches, validation criteria, and data interpretation frameworks, yet modern food protection demands their integration within cohesive risk assessment plans.

Methodological Comparison: Targeted versus Non-Targeted Approaches

Core Analytical Paradigms

The experimental protocols for food safety and authenticity testing differ significantly in their underlying principles and implementation:

Food Safety Testing Protocols typically employ targeted methods with definitive thresholds. For pathogen detection, a standard protocol involves:

- Sample Preparation: Aseptically weigh 25 g of food sample into 225 mL of buffered peptone water and homogenize

- Enrichment: Incubate at 37°C for 18-24 hours to amplify target microorganisms

- Plating: Streak onto selective agar media (e.g., XLD for Salmonella, CHROMagar for Listeria)

- Confirmation: Perform biochemical and serological tests on suspect colonies

- Molecular Verification: Conduct PCR assays targeting species-specific genes (e.g., invA for Salmonella)

For chemical contaminants such as pesticide residues, liquid chromatography-tandem mass spectrometry (LC-MS/MS) protocols typically proceed as follows:

- Extraction: Homogenize sample with acetonitrile containing 1% acetic acid

- Cleanup: Use dispersive Solid Phase Extraction (dSPE) with primary secondary amine (PSA) and magnesium sulfate

- Instrumental Analysis: Separate compounds on a C18 column with gradient elution and detect them in multiple reaction monitoring (MRM) mode

- Quantification: Compare analyte peak areas to matrix-matched calibration standards

Food Authenticity Testing Protocols increasingly utilize non-targeted approaches with statistical classification. For geographic origin verification using Stable Isotope Ratio Mass Spectrometry (IRMS):

- Sample Preparation: Freeze-dry and pulverize samples to homogeneous powder

- Combustion: For δ13C and δ15N analysis, combust 0.5-1.0 mg of sample in an elemental analyzer at 1020°C

- Interface: Separate gases via gas chromatography before introduction to IRMS

- Calibration: Normalize data against international reference standards (VPDB for carbon, AIR for nitrogen)

- Statistical Modeling: Apply multivariate analysis (PCA, LDA) to establish origin classification models
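
The calibration step above rests on two small calculations: expressing an isotope ratio in delta notation relative to a standard, and rescaling measured delta values so that reference materials reproduce their accepted values. The sketch below assumes a two-point linear normalization; the isotope ratios and the measured and accepted values of the two reference materials are hypothetical.

```python
def delta_permil(r_sample, r_standard):
    """Delta value in per mil: (R_sample / R_standard - 1) * 1000."""
    return (r_sample / r_standard - 1.0) * 1000.0

def two_point_normalize(measured, ref_low, ref_high):
    """Rescale a measured delta value onto the reporting scale.

    ref_low and ref_high are (measured_delta, accepted_delta) pairs for two
    reference materials that bracket the expected sample range.
    """
    (m1, a1), (m2, a2) = ref_low, ref_high
    slope = (a2 - a1) / (m2 - m1)
    return a1 + slope * (measured - m1)

# Hypothetical raw ratio and standard ratio, for illustration only.
raw_delta = delta_permil(r_sample=0.0110, r_standard=0.0112)

# Hypothetical reference materials: (measured, accepted) delta values.
normalized = two_point_normalize(
    measured=-25.0,
    ref_low=(-10.2, -10.0),
    ref_high=(-30.4, -30.0),
)
print(f"raw delta = {raw_delta:.2f} per mil, normalized = {normalized:.2f} per mil")
```

The normalized delta values for several elements are then the input variables for the multivariate origin models (PCA, LDA) named in the last step.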

Non-targeted fingerprinting using NMR spectroscopy follows this workflow:

- Extraction: Prepare liquid samples in D2O containing 0.05% TSP as internal standard

- Data Acquisition: Collect 1H-NMR spectra at 25°C using a NOESY-presat pulse sequence for water suppression

- Spectral Processing: Apply exponential line broadening (0.3 Hz), Fourier transformation, phase and baseline correction

- Data Reduction: Segment spectra into bins (e.g., 0.04 ppm) and normalize to total area

- Chemometric Analysis: Build classification models using Partial Least Squares-Discriminant Analysis (PLS-DA) or machine learning algorithms
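
The data reduction step in this workflow can be illustrated as follows. This is a minimal sketch, assuming evenly spaced data points (so that summed intensity per bin stands in for area) and a 0 to 10 ppm spectral window; the function name, window limits, and example spectrum are hypothetical.

```python
def bin_and_normalize(ppm, intensity, bin_width=0.04, ppm_min=0.0, ppm_max=10.0):
    """Sum intensities into fixed-width bins and scale to unit total area."""
    n_bins = round((ppm_max - ppm_min) / bin_width)
    bins = [0.0] * n_bins
    for shift, value in zip(ppm, intensity):
        if ppm_min <= shift < ppm_max:
            # Guard against floating-point edge cases at the upper boundary.
            index = min(int((shift - ppm_min) / bin_width), n_bins - 1)
            bins[index] += value
    total = sum(bins)
    if total == 0:
        raise ValueError("spectrum has no signal inside the selected window")
    return [b / total for b in bins]

# Hypothetical four-point spectrum, for illustration only.
ppm_axis = [0.01, 0.02, 0.05, 1.01]
signal = [1.0, 1.0, 2.0, 4.0]
binned = bin_and_normalize(ppm_axis, signal)
print(len(binned), binned[0], binned[1], binned[25])
```

Each binned, normalized spectrum becomes one row of the data matrix passed to PLS-DA or another classifier; normalizing to total area reduces the influence of overall concentration differences between samples.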

Comparative Methodological Frameworks

Table 1: Fundamental Differences Between Safety and Authenticity Testing Paradigms

| Parameter | Food Safety Testing | Food Authenticity Testing |

|---|---|---|

| Analytical Question | "Is this contaminant present above a dangerous level?" [29] | "Does this sample match the expected profile of a genuine product?" [29] |

| Method Type | Primarily targeted | Increasingly non-targeted and fingerprinting |

| Result Interpretation | Binary (compliant/non-compliant) against regulatory limits | Probabilistic (likelihood of authenticity) [29] |

| Key Technologies | PCR, ELISA, LC-MS/MS [26] [34] | NGS, NMR, IRMS, AI-powered analytics [29] [35] |

| Data Output | Quantitative concentration values | Multivariate patterns and statistical similarity scores |

| Reference Materials | Certified reference standards with known analyte concentrations | Authenticated sample libraries with verified provenance [29] |

| Validation Approach | Accuracy, precision, limit of detection | Model sensitivity, specificity, predictive accuracy [29] |
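
The model-based validation metrics in the last row of the table can be computed from a set of blind test samples with known status. The sketch below is illustrative only; the labels and predictions are hypothetical, and it assumes the test set contains at least one sample of each class.

```python
def classification_metrics(truth, predicted, positive="adulterated"):
    """Sensitivity, specificity, and accuracy for a two-class model."""
    pairs = list(zip(truth, predicted))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    return {
        "sensitivity": tp / (tp + fn),   # adulterated samples correctly flagged
        "specificity": tn / (tn + fp),   # authentic samples correctly passed
        "accuracy": (tp + tn) / len(pairs),
    }

# Hypothetical blind test set: 4 adulterated and 6 authentic samples.
truth = ["adulterated"] * 4 + ["authentic"] * 6
predicted = [
    "adulterated", "adulterated", "adulterated", "authentic",
    "authentic", "authentic", "authentic", "authentic", "authentic", "adulterated",
]
metrics = classification_metrics(truth, predicted)
print(metrics)
```

Sensitivity and specificity are reported separately because the cost of a missed adulteration and the cost of wrongly rejecting an authentic product are rarely the same.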

Quantitative Market and Technology Adoption Trends

The growing emphasis on food authenticity is reflected in market projections, with the global food authenticity market valued at $10.2 billion in 2025 and expected to reach $17.9 billion by 2034, representing a compound annual growth rate (CAGR) of 6.4% [35]. This growth is fueled by increasing regulatory scrutiny and consumer demand for transparent supply chains.
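
As a quick consistency check on these figures, the compound annual growth rate can be recomputed from the start and end values. The sketch assumes nine compounding periods between 2025 and 2034; under that assumption the result is about 6.4%, in line with the quoted rate.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.05 means 5% per year)."""
    return (end_value / start_value) ** (1 / years) - 1

# Values quoted in the text (USD billion); nine periods is an assumption.
rate = cagr(start_value=10.2, end_value=17.9, years=9)
print(f"Implied CAGR: {rate:.1%}")
```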

Technology Adoption Patterns

Table 2: Market Share and Growth Projections for Testing Technologies

| Technology | Market Share (2025) | Primary Applications | Growth Drivers |

|---|---|---|---|

| PCR-Based Methods | 35-41.5% [26] [34] | Meat speciation, GMO detection, allergen testing | Precision, speed, adaptability across food matrices [34] |

| Liquid Chromatography-Mass Spectrometry | 28% (chromatography-based techniques) [34] | Adulteration analysis, pesticide residues, mycotoxins | High sensitivity and capability to detect multiple analytes |

| Isotope Methods | Not specified | Geographic origin verification, organic authentication | Ability to trace products to specific regions based on isotopic signatures [29] |

| Next-Generation Sequencing | Emerging | Species identification in complex products, microbiome analysis | High-resolution profiling of blended meats and complex matrices [34] |

| Immunoassay/ELISA | Established but declining | Allergen testing, specific protein markers | Rapid results and ease of use for specific applications |

Regional Implementation Variations

Table 3: Regional Adoption Rates and Focus Areas

| Region | Projected CAGR (2025-2035) | Regulatory Framework | Testing Emphasis |

|---|---|---|---|

| North America | 5.7% (USA) [34] | FDA, USDA guidelines [34] | Meat speciation, allergen testing [26] |

| European Union | 5.5% [34] | EU Food Fraud Network, harmonized directives [36] [34] | Geographic origin, olive oil, honey authentication [36] |

| Asia-Pacific | 6.0% [34] | Evolving standards, increasing government scrutiny [34] | Export verification, adulteration detection |

| United Kingdom | 5.2% [34] | FSA, BRC standards post-Brexit [34] | Organic verification, meat products |

Integrated Risk Assessment Workflow

The following workflow diagram visualizes the integration of safety and fraud considerations into a cohesive risk assessment plan.

Integrated Risk Assessment Workflow

This integrated workflow highlights the parallel consideration of safety and fraud vulnerabilities, which must be addressed through complementary but distinct testing methodologies.

Vulnerability Assessment Framework

Food fraud vulnerability assessment follows a structured process to identify and mitigate risks associated with fraudulent activities in the supply chain [39]. This framework is essential for developing effective mitigation strategies.

Food Fraud Vulnerability Assessment

Analytical Instrumentation and Reagent Solutions

The experimental protocols for integrated safety and authenticity testing require specific research-grade reagents and instrumentation. The following toolkit represents essential materials for implementing the methodologies discussed in this guide.

Table 4: Essential Research Reagent Solutions for Integrated Testing

| Reagent/Instrument | Function | Application Examples |

|---|---|---|

| DNA Extraction Kits | Isolation of high-quality DNA from complex matrices | Species identification in meat products, GMO detection [34] |

| PCR Master Mixes | Amplification of target DNA sequences with high fidelity | Real-time PCR for pathogen detection, DNA barcoding [26] |

| Stable Isotope Standards | Calibration of mass spectrometry instruments | Geographic origin verification of honey, wine, dairy products [29] |

| LC-MS/MS Reference Materials | Quantification of contaminant residues | Pesticide analysis, mycotoxin detection, adulterant screening [34] |

| NMR Solvents & Standards | Sample preparation and instrument calibration | Metabolic fingerprinting for authenticity verification [29] |

| Immunoassay Kits | Rapid detection of specific protein markers | Allergen testing, species-specific protein detection [26] |

| Sample Preparation Kits | Cleanup and concentration of analytes | Solid-phase extraction for contaminant analysis |

Emerging Technologies and Future Directions

The integration of artificial intelligence and machine learning is transforming both safety and authenticity testing landscapes. AI-powered authentication tools use big data and machine learning to detect anomalies and predict fraud risks, improving testing efficiency and accuracy [35]. These technologies enable the analysis of complex multivariate data from non-targeted techniques, identifying subtle patterns indicative of fraud that might escape conventional analysis.

Blockchain technology is increasingly deployed for enhancing traceability across complex supply chains, providing transparent and immutable records of food provenance [35] [39]. When integrated with analytical testing results, blockchain creates a powerful system for verifying authenticity claims and preventing fraud. Portable testing platforms including handheld PCR devices and miniature spectrometers are enabling decentralized testing models, allowing for on-site authentication checks and real-time fraud detection at various points in the supply chain [35] [26].

The convergence of these technologies (AI-powered analytics, blockchain traceability, and portable testing) represents the future of integrated food safety and authenticity protection, creating a more transparent, secure, and resilient global food system.

Advanced Analytical Techniques: From Targeted Quantitation to Untargeted Profiling

In the face of increasing global food fraud incidents and stringent safety regulations, the demand for robust analytical techniques to verify food authenticity and detect adulterants has never been greater. Targeted analysis methods form the cornerstone of modern food safety and authenticity testing, enabling precise detection and quantification of specific adulterants, allergens, and species substitution. Among these, Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS), Polymerase Chain Reaction (PCR), and Enzyme-Linked Immunosorbent Assay (ELISA) have emerged as the three principal techniques, each with distinct operational principles and applications [40].

The global food authenticity testing market, valued at USD 1.10 billion in 2025, reflects the critical importance of these technologies, with meat speciation and allergen testing representing the largest and fastest-growing segments, respectively [41]. This guide provides a comprehensive, data-driven comparison of LC-MS/MS, PCR, and ELISA to assist researchers and drug development professionals in selecting the optimal methodology for their specific food safety and authenticity challenges.

The selection of an appropriate analytical technique depends on a clear understanding of each method's fundamental principles, strengths, and limitations. Below, we compare the core operational focuses of these three key technologies.

Table 1: Core Analytical Capabilities and Performance Metrics

| Parameter | LC-MS/MS | PCR | ELISA |

|---|---|---|---|

| Analytical Principle | Separation and detection based on mass-to-charge ratio | Amplification of specific DNA sequences | Antibody-antigen binding with enzymatic detection |

| Primary Analyte | Proteins, peptides, small molecules | DNA | Proteins |

| Typical Sensitivity (LOD) | 0.01% (gelatin) [42] | 0.01% - 0.1% (meat species) [43] | Low ppm (allergens) [44] |

| Quantification Capability | Excellent (isotope dilution possible) | Semi-quantitative to quantitative | Excellent |

| Sample Throughput | Moderate to High | High | Very High |

| Multiplexing Potential | High (multiple reaction monitoring) | Moderate (multiple primers) | Low (typically single-analyte) |

| Susceptibility to Processing Effects | Moderate (protein denaturation) | High (DNA fragmentation) | High (protein denaturation) [44] |

Table 2: Applicability in Food Testing Scenarios

| Application | LC-MS/MS | PCR | ELISA | Key Evidence from Literature |

|---|---|---|---|---|

| Meat Speciation | Excellent (via peptide markers) [45] | Excellent (via species-specific DNA) | Not Applicable | Five species-specific peptides validated for pork quantification with 78-128% recovery [45]. |

| Allergen Detection | Excellent (e.g., pistachio/cashew) [46] | Good | Excellent (Gold Standard) [44] | LC-MS/MS developed for simultaneous pistachio/cashew detection (SDL=1 mg/kg) [46]. ELISA is the preferred, cost-effective choice for routine screening [44]. |

| Gelatin Source Identification | Excellent (0.01% LOD) [42] | Good | Not Commonly Used | LC-MS/MS identified bovine/porcine gelatin in pharmaceuticals and jellies; outperformed PCR in processed samples [42]. |

| Processed Food Analysis | Good (resistant to thermal processing) | Limited (DNA degradation) [43] | Limited (protein denaturation) [44] | NGS (DNA-based) struggles in thermally processed pet food due to DNA damage; LC-MS/MS is more robust [43]. |

| Routine Screening | Moderate (requires expertise) | Good | Excellent (high throughput, cost-effective) [44] | Global food allergen testing industry relies heavily on ELISA, projected to reach USD 1.9 billion by 2034 [44]. |

Detailed Experimental Protocols

LC-MS/MS for Meat Speciation and Gelatin Authentication

Workflow for Meat Speciation Using Species-Specific Peptides [45]

The key steps for identifying and quantifying meat species using LC-MS/MS are outlined below.

- Sample Preparation: A 2 g meat or meat product sample is homogenized in a pre-cooled extraction solution (Tris-HCl, urea, thiourea). The extract is then centrifuged, and the supernatant is collected [45].

- Protein Digestion: An aliquot of the supernatant is reduced with dithiothreitol (DTT) and alkylated with iodoacetamide (IAA). The proteins are digested overnight at 37°C using trypsin, and the reaction is stopped with formic acid [45].

- Peptide Purification: The digest is purified using a C18 solid-phase extraction (SPE) column. The column is activated, equilibrated, loaded, washed, and the peptides are eluted with an acetonitrile/acetic acid solution. The eluate is filtered before analysis [45].

- LC-MS/MS Analysis:

  - Chromatography: Peptides are separated on a C18 column using a gradient of water and acetonitrile, both containing 0.1% formic acid [45].

  - Mass Spectrometry: Analysis is performed on a high-resolution mass spectrometer (e.g., Q Exactive HF-X). For discovery, a data-dependent acquisition (Full Scan-ddMS2) is used. For quantification, parallel reaction monitoring (PRM) is employed to target specific peptide ions [45].

- Data Analysis: High-resolution MS data is processed with multivariate statistical analysis, such as hierarchical clustering analysis (HCA), to rapidly screen for candidate species-specific peptides. These are then validated using targeted PRM methods [45].
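
The digestion step in this workflow determines which peptides can serve as markers. The sketch below performs a simple in-silico tryptic digest and compares the resulting peptide sets between two species. It assumes the commonly used rule that trypsin cleaves after lysine (K) or arginine (R) except when the next residue is proline (P); the protein sequences are invented for illustration and are not real markers from the cited study.

```python
def tryptic_digest(sequence, min_length=6):
    """Split a protein sequence after K or R, unless followed by P."""
    peptides, start = [], 0
    last = len(sequence) - 1
    for i, residue in enumerate(sequence):
        cleave = residue in "KR" and i != last and sequence[i + 1] != "P"
        if cleave or i == last:
            peptide = sequence[start:i + 1]
            if len(peptide) >= min_length:
                peptides.append(peptide)
            start = i + 1
    return peptides

def candidate_markers(target_peptides, other_peptides):
    """Peptides found in the target species but not in the comparison species."""
    return set(target_peptides) - set(other_peptides)

# Hypothetical sequences differing by a single residue.
species_a = "GASKLLERPVVTKDEAGHLSWYR"
species_b = "GASKLLERPVVTKDEAGHLTWYR"

peptides_a = tryptic_digest(species_a, min_length=4)
peptides_b = tryptic_digest(species_b, min_length=4)
print(candidate_markers(peptides_a, peptides_b))
```

In practice, candidates found this way would still need experimental confirmation, since detectability, modifications, and stability under processing all affect whether a peptide is usable for PRM quantification.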

Key Research Reagent Solutions for LC-MS/MS [45] [42]

| Reagent/Consumable | Function | Example Specification |
|---|---|---|
| Trypsin | Proteolytic enzyme that cleaves proteins on the C-terminal side of lysine and arginine residues, generating peptides for analysis. | BioReagent Grade [45] |
| Dithiothreitol (DTT) | Reducing agent that breaks disulfide bonds in proteins. | 0.1 M Solution [45] |
| Iodoacetamide (IAA) | Alkylating agent that modifies cysteine residues to prevent reformation of disulfide bonds. | 0.1 M Solution [45] |
| C18 SPE Column | Solid-phase extraction cartridge for purifying and concentrating peptide mixtures. | 60 mg, 3 mL bed volume [45] |
| UPLC Column | Chromatographic column for separating peptides prior to mass spectrometry. | Hypersil GOLD C18, 2.1 mm x 150 mm, 1.9 µm [45] |
| Formic Acid (FA) | Mobile phase additive that improves chromatographic separation and ionization. | LC-MS Grade, 0.1% in water and ACN [45] |
| Specific Peptide Markers | Unique amino acid sequences used to identify and quantify target species. | E.g., >5 precursor ions per species for gelatin [42] |

Workflow for Allergen Detection Using ELISA

The standard protocol for detecting food allergens using the ELISA method is summarized below.

- Sample Extraction: A representative portion of the food matrix is homogenized and extracted using an appropriate buffer to solubilize the target allergen protein.

- Assay Procedure: The extracted sample is added to a microplate well pre-coated with an allergen-specific capture antibody. After incubation and washing, an enzyme-linked detection antibody is added, forming an antibody-allergen-antibody "sandwich." Following another wash, a substrate solution is added, which produces a colored product in the presence of the enzyme.

- Detection and Quantification: The color intensity, measured spectrophotometrically as absorbance, increases with the concentration of the allergen in the sample. The concentration is determined by comparing the signal to a standard curve run concurrently on the same plate [44].
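The standard-curve step can be sketched as follows. This is a simplified illustration, not a kit protocol: many laboratories fit a four-parameter logistic curve, whereas this sketch swaps in piecewise log-log interpolation between bracketing standards to stay dependency-free. The standard concentrations, absorbance values, and units (mg/kg) are hypothetical.

```python
import math


def interpolate_concentration(standards, absorbance):
    """Estimate concentration from absorbance by log-log interpolation.

    standards: list of (concentration, absorbance) pairs, all values > 0.
    Returns None when the reading falls outside the calibrated range,
    in which case the sample should be diluted or reported as out of range.
    """
    points = sorted(standards, key=lambda pair: pair[1])
    if not points[0][1] <= absorbance <= points[-1][1]:
        return None
    for (c_low, a_low), (c_high, a_high) in zip(points, points[1:]):
        if a_low <= absorbance <= a_high:
            if a_high == a_low:
                return c_low
            fraction = (math.log10(absorbance) - math.log10(a_low)) / (
                math.log10(a_high) - math.log10(a_low)
            )
            log_conc = math.log10(c_low) + fraction * (
                math.log10(c_high) - math.log10(c_low)
            )
            return 10 ** log_conc
    return None


# Hypothetical standards: (allergen concentration in mg/kg, absorbance).
STANDARDS = [(1.0, 0.10), (2.5, 0.25), (5.0, 0.50), (10.0, 1.00), (20.0, 2.00)]

sample_absorbance = 0.35
estimate = interpolate_concentration(STANDARDS, sample_absorbance)
print(f"Estimated concentration: {estimate:.2f} mg/kg")
```

Refusing to extrapolate beyond the lowest and highest standards is a deliberate choice here: readings outside the calibrated range are better handled by re-running a diluted sample than by guessing.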

Workflow for Species Identification Using PCR

- DNA Extraction: DNA is isolated from the food sample using commercial kits, often involving steps to remove PCR inhibitors common in food matrices.

- Amplification: Specific primers designed to hybridize with unique DNA sequences of the target species are used in a PCR reaction. The reaction cycles (denaturation, annealing, extension) amplify the target DNA fragment.

- Detection/Quantification:

  - End-point PCR: The amplified product is visualized on an agarose gel.

  - Real-time PCR (qPCR): The accumulation of amplified DNA is monitored in real-time using fluorescent dyes or probes, allowing for quantification of the target DNA in the original sample [43].
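Quantification by qPCR typically relies on a standard curve of quantification cycle (Cq) against the logarithm of template amount. The sketch below shows the arithmetic under stated assumptions: the dilution series and Cq values are hypothetical, and amplification efficiency is computed with the widely used relation E = 10^(-1/slope) - 1, where a slope near -3.32 corresponds to roughly 100% efficiency.

```python
import math


def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def qpcr_standard_curve(copies, cq_values):
    """Fit Cq against log10(copies) and derive amplification efficiency."""
    slope, intercept = fit_line([math.log10(c) for c in copies], cq_values)
    efficiency = 10 ** (-1.0 / slope) - 1.0
    return slope, intercept, efficiency


def quantify(cq, slope, intercept):
    """Back-calculate template copies for an unknown sample from its Cq."""
    return 10 ** ((cq - intercept) / slope)


# Hypothetical ten-fold dilution series of a target-species DNA standard.
COPIES = [1e5, 1e4, 1e3, 1e2, 1e1]
CQ = [18.00, 21.32, 24.64, 27.96, 31.28]

slope, intercept, efficiency = qpcr_standard_curve(COPIES, CQ)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, efficiency={efficiency:.1%}")
print(f"Unknown at Cq 26.0: {quantify(26.0, slope, intercept):.0f} copies")
```

Reporting slope and efficiency alongside the result makes it easier to judge whether a run is fit for quantitative use or only for presence/absence decisions.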

The comparative analysis of LC-MS/MS, PCR, and ELISA reveals a complementary technological landscape where method selection is dictated by the specific analytical question. LC-MS/MS excels in scenarios requiring high specificity and multiplexing for protein-based authentication, such as meat and gelatin speciation, demonstrating superior sensitivity (0.01% LOD) and reliability in processed foods where DNA degradation limits PCR efficacy [42]. PCR remains the gold standard for DNA-based species identification in raw and mildly processed foods, while ELISA maintains its position as the most cost-effective and high-throughput solution for routine allergen monitoring, despite limitations in processed matrices where protein denaturation occurs [44].

The future of food authenticity and safety testing lies in the strategic integration of these techniques. ELISA is ideal for initial, high-volume screening, with positive results confirmed by the definitive specificity of LC-MS/MS or PCR [44]. This multi-technique approach, supported by advancing methodologies like next-generation sequencing (NGS) and portable biosensors, provides a robust defense against economically motivated adulteration, ensuring food safety, regulatory compliance, and consumer trust in a complex global supply chain [41] [47].

In the face of increasing global concerns over food authenticity and safety, analytical science has responded with a shift from traditional targeted analyses toward more comprehensive non-targeted (also called untargeted) strategies. Non-targeted methods aim to provide a global fingerprint of a sample's composition without prior selection of analytes, enabling the detection of both known and unexpected adulterants or quality markers [48] [49]. These approaches are particularly vital for combating economically motivated adulteration (EMA), where fraudsters continually devise new ways to evade detection by conventional targeted methods that focus on a pre-defined set of compounds [48] [50]. The core strength of non-targeted workflows lies in their ability to capture subtle changes in complex food metabolomes, offering a powerful tool for verifying claims of geographical origin, production methods, and species identity, which are critical for protecting both consumers and producers of high-value food products [49] [50].

This guide compares three principal analytical platforms at the forefront of this paradigm shift: Nuclear Magnetic Resonance (NMR) spectroscopy, High-Resolution Accurate-Mass Mass Spectrometry (HRAM-MS), and vibrational spectroscopic fingerprinting (including NIR, FT-IR, and Raman techniques). We frame this comparison within the broader thesis of method validation for food authenticity, contrasting it with the more established validation pathways for targeted food safety testing. While safety testing often targets specific hazards with known thresholds (e.g., mycotoxins, pesticides), authenticity testing must often discriminate based on multivariate patterns and previously unknown frauds, placing a premium on method robustness, transferability, and the construction of reliable, shared databases [49] [51].

Comparative Performance Analysis of Analytical Platforms

The following tables summarize the key operational characteristics and performance metrics of NMR, HRAM-MS, and spectroscopic techniques in the context of food authenticity testing.

Table 1: Key Technical and Operational Characteristics

| Feature | NMR | HRAM-MS | Spectroscopic Fingerprinting (FT-NIR/FT-IR) |
|---|---|---|---|
| Analytical Principle | Measurement of nuclear spin transitions in a magnetic field [52] | Separation and detection of ions based on mass-to-charge ratio with high resolution and mass accuracy [50] | Measurement of molecular vibration after light irradiation (absorption or scattering) [53] |
| Typical Sample Preparation | Often minimal; may involve simple extraction or dilution; high reproducibility [49] [51] | Can be complex; often requires extraction, purification, and sometimes derivatization [48] [50] | Minimal to none; direct analysis of solids, liquids, or powders; fastest preparation [54] [53] |
| Analysis Speed | Minutes to tens of minutes per sample [52] | Several minutes to an hour per sample (depending on chromatography) [50] | Seconds to a few minutes per sample [54] [53] |
| Destructive to Sample? | Non-destructive [52] [53] | Destructive | Non-destructive [53] |
| Ease of Method Transfer | High; protocols can be standardized across laboratories and instruments to generate statistically equivalent data [49] [51] [55] | Moderate to Low; can be instrument-dependent and require careful tuning and calibration [50] | High; methods can be transferred with calibration transfer protocols [54] |

Table 2: Performance Metrics in Food Authenticity Applications

| Performance Metric | NMR | HRAM-MS | Spectroscopic Fingerprinting (FT-NIR/FT-IR) |
|---|---|---|---|
| Metabolite Coverage | Broad coverage of major and minor metabolites, but generally less sensitive than MS [49] [52] | Very broad and deep coverage, including trace-level metabolites; can be tailored with different ionization modes [50] | Provides a global fingerprint but limited to functional groups and bonds; less specific for compound identity [53] |
| Quantitative Capability | Excellent; inherently quantitative without need for compound-specific calibration [52] [51] | Semi-quantitative; requires internal standards and compound-specific calibration for accurate quantification [50] | Indirect quantitative analysis reliant on chemometric models and reference methods [53] |
| Sensitivity | Moderate (µM-mM range) [52] | Very High (pM-nM range) [50] | Low to Moderate; suitable for major components [53] |
| Discriminatory Power | High for origin, variety, and process authentication [49] [51] | Very High; can differentiate based on subtle metabolite differences [50] | High for gross classification and screening; can distinguish species and origins [48] [53] |
| Reproducibility & Robustness | Exceptional; spectra are highly reproducible across instruments and laboratories, facilitating shared databases [49] [51] [55] | Good within a lab; can vary between instruments and labs without stringent standardization [50] | Good; instrument performance is stable, but physical sample presentation can affect results [48] [53] |
| Representative Applications | Geographic origin of tomatoes, wine, and olive oil; authentication of coffee, honey, and spices [49] [52] [51] | Detection of unknown adulterants; geographic origin via subtle markers; biomarker discovery (e.g., 16-O-Methylcafestol in coffee) [50] | Differentiation of truffle species; screening for adulterated raw materials; discrimination of Ganoderma lucidum [48] [50] |

Detailed Methodologies and Experimental Protocols

Non-Targeted NMR Workflow

The application of NMR-based non-targeted protocols (NTPs) follows a rigorous workflow designed to maximize reproducibility and data quality, which is critical for building reliable classification models.

A typical experimental protocol for a food matrix (e.g., tomato or fruit juice) involves the following steps [49] [51]:

- Sample Selection and Preparation: A representative set of authentic reference samples is crucial. For tomato authentication, one optimized protocol (P3) involves homogenizing the entire fruit, centrifuging the homogenate, and mixing the supernatant with a phosphate buffer made in D₂O. The buffer maintains a consistent pH (e.g., 4.2), and a defined compound such as DSS (4,4-dimethyl-4-silapentane-1-sulfonic acid) is often added as an internal chemical shift and quantitation reference [51].

- NMR Acquisition: The prepared sample is transferred to a standard NMR tube. Data is acquired using a standardized one-dimensional (1D) pulse sequence, most commonly the 1D Nuclear Overhauser Effect Spectroscopy (NOESY) presat sequence, which effectively suppresses the water signal. Key acquisition parameters are harmonized: spectral width (e.g., 20 ppm), relaxation delay (e.g., 4 s), number of scans (e.g., 64-128), and temperature (e.g., 300 K). The free induction decay (FID) is recorded [49] [51].

- Data Processing and Analysis: The raw FID is processed by applying an exponential window function (line broadening), Fourier transformation, phase and baseline correction, and calibration of the chemical shift scale (e.g., to DSS at 0 ppm). The spectrum is then reduced to a numerical data matrix by dividing it into small regions (bucketing or binning) and integrating the signal within each region. This reduces the impact of small chemical shift variations and makes the data manageable for statistical analysis [49] [51].

- Chemometric Analysis and Validation: The data matrix is imported into chemometric software. Unsupervised methods like Principal Component Analysis (PCA) are first used to explore natural clustering and identify outliers. Subsequently, supervised methods like Orthogonal Projections to Latent Structures-Discriminant Analysis (OPLS-DA) are used to build classification models that differentiate sample classes (e.g., by geographical origin). The model's performance is rigorously validated using cross-validation and by predicting the class of a separate set of samples not used to build the model [49] [51].
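The bucketing step described above, which turns a processed spectrum into one row of the data matrix, can be sketched in a few lines. This is an illustrative outline only: the bucket width, spectral window, and excluded residual-water region are assumed values, the intensities are synthetic, and summing points per bucket stands in for integration on a uniformly sampled axis.

```python
def bucket_spectrum(ppm, intensity, start, stop, width, exclude=()):
    """Sum intensities into equal-width chemical shift buckets.

    Points outside [start, stop) or inside an excluded region (for example
    the residual water signal) are ignored. The result is normalized to a
    total of 1 so that spectra of different overall intensity are comparable.
    """
    n_buckets = int(round((stop - start) / width))
    buckets = [0.0] * n_buckets
    for shift, value in zip(ppm, intensity):
        if not start <= shift < stop:
            continue
        if any(low <= shift <= high for low, high in exclude):
            continue
        index = min(int((shift - start) / width), n_buckets - 1)
        buckets[index] += value
    total = sum(buckets)
    return [b / total for b in buckets] if total else buckets


# Synthetic example: five data points, 1 ppm buckets, water region removed.
ppm_axis = [1.00, 1.01, 1.02, 3.00, 4.80]
intensities = [1.0, 2.0, 1.0, 4.0, 100.0]

row = bucket_spectrum(
    ppm_axis, intensities, start=0.0, stop=10.0, width=1.0,
    exclude=((4.7, 5.0),),
)
print(row)
```

Each sample contributes one such row, and the stacked rows form the matrix passed to PCA or OPLS-DA. Real protocols use much narrower buckets than in this toy example, and the exact width should follow the harmonized protocol in use.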

HRAM-MS-Based Untargeted Metabolomics

HRAM-MS workflows offer deep molecular coverage and are highly effective for biomarker discovery.

A generalized protocol for food analysis (e.g., olive oil, honey) is as follows [48] [50]:

- Sample Preparation and Metabolite Extraction: The sample is subjected to an extraction process to capture a wide range of metabolites. This often involves using a solvent mixture like methanol-water-chloroform to separate polar and non-polar compounds. The extract is centrifuged, and the supernatant is collected for analysis. The choice of extraction solvent and method is critical and depends on the target food matrix and the compounds of interest.

- Chromatographic Separation and MS Analysis: The extract is typically introduced into the mass spectrometer via a chromatographic system to reduce sample complexity and ion suppression. Common methods include:

  - UHPLC-HRMS: Reversed-phase UHPLC is used. The effluent is ionized, most commonly by Electrospray Ionization (ESI) in both positive and negative modes, and then analyzed by a Quadrupole Time-of-Flight (QTOF) or Orbitrap mass spectrometer. Data-Independent Acquisition (DIA) modes are often used for untargeted analysis.

  - GC-MS: For volatile compounds or after derivatization, GC-MS is used. Electron Ionization (EI, also called electron impact) is standard, generating reproducible fragmentation patterns.

  - Ambient Ionization (DART-MS): For rapid screening, techniques like Direct Analysis in Real Time (DART) can be used, which ionize samples directly from the solid or liquid state with little to no preparation and without chromatographic separation [50] [16].

- Data Processing and Metabolite Identification: The raw HRAM-MS data is processed using specialized software. This involves peak picking, alignment across samples, and deconvolution to create a data matrix of molecular features (defined by m/z and retention time) and their intensities. Statistical analysis (e.g., OPLS-DA) is performed to identify the features with the highest discriminatory power, commonly ranked by Variable Importance in Projection (VIP) scores. These potential biomarkers are then tentatively identified by searching their accurate mass and isotopic pattern against databases (and against MS/MS fragmentation libraries if available) [50].
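The accurate-mass search at the end of this workflow reduces to a parts-per-million comparison between a measured m/z and theoretical values. The sketch below illustrates that arithmetic for protonated ions, [M+H]+. It is a simplified illustration, not a replacement for identification software: the two-entry candidate list, the 5 ppm tolerance, and the measured value are assumptions chosen for the example.

```python
# Monoisotopic masses of the most abundant isotopes (u).
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.0030740, "O": 15.9949146}
PROTON = 1.00727646  # mass of a proton (u), used for [M+H]+


def monoisotopic_mass(formula):
    """formula: dict of element symbol -> atom count."""
    return sum(MONOISOTOPIC[element] * count for element, count in formula.items())


def mz_protonated(formula):
    """Theoretical m/z of the singly charged [M+H]+ ion."""
    return monoisotopic_mass(formula) + PROTON


def ppm_error(measured, theoretical):
    return (measured - theoretical) / theoretical * 1e6


def match_feature(measured_mz, candidates, tol_ppm=5.0):
    """Return (name, ppm error) for candidates within the mass tolerance."""
    hits = []
    for name, formula in candidates.items():
        error = ppm_error(measured_mz, mz_protonated(formula))
        if abs(error) <= tol_ppm:
            hits.append((name, error))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# Illustrative candidate list (a real search would query a full database).
CANDIDATES = {
    "caffeine": {"C": 8, "H": 10, "N": 4, "O": 2},
    "theobromine": {"C": 7, "H": 8, "N": 4, "O": 2},
}

for name, error in match_feature(195.0880, CANDIDATES):
    print(f"{name}: {error:+.2f} ppm")
```

A mass match alone yields only a tentative annotation, since isomers share the same formula; this is why the workflow above also calls on isotopic patterns and MS/MS fragmentation libraries.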

Spectroscopic Fingerprinting with FT-NIR and Raman

Vibrational spectroscopy provides rapid fingerprinting ideal for high-throughput screening.