Heme vs. Non-Heme Iron Bioavailability: Mechanisms, Clinical Implications, and Future Directions for Biomedical Research

Abstract

This article provides a comprehensive analysis of the relative bioavailability of heme and non-heme iron, a critical consideration for researchers and drug development professionals addressing global iron deficiency. We explore the distinct absorption mechanisms, with heme iron demonstrating 25-30% bioavailability via specific transport pathways, compared to the highly variable 1-10% absorption of non-heme iron, which is strongly influenced by dietary factors. The scope includes an examination of the 'meat factor' phenomenon, methodological approaches for assessing iron status and absorption, strategies to overcome the inhibitory effects of phytates and polyphenols, and a comparative validation of supplementation strategies. Recent clinical evidence and emerging trends in supplement formulation, including plant-based nutraceuticals and novel delivery systems, are evaluated for their potential to enhance treatment efficacy and patient adherence in iron deficiency management.

Fundamental Mechanisms of Iron Absorption: From Chemistry to Physiology

Chemical Definitions and Key Characteristics

Iron in the human diet is categorized into two distinct chemical forms, which dictate its absorption and metabolism.

Heme iron is an integral component of hemoglobin and myoglobin, the oxygen-carrying proteins found in animal tissues. This form consists of an iron ion (Fe²⁺) situated at the center of a heterocyclic organic compound known as a porphyrin ring, specifically protoporphyrin IX [1] [2]. This complex is referred to as a heme group. Its presence in animal-based foods and its unique absorption pathway contribute to its high bioavailability, with estimated absorption rates ranging from 15% to 35% [3] [4].

Non-heme iron, in contrast, is the inorganic form of iron found predominantly in plant-based foods, such as grains, legumes, nuts, and vegetables [2] [5]. It is also present in animal products (e.g., eggs and dairy) and is the form used in most iron-fortified foods and supplements [2] [3]. Common chemical forms include iron salts like ferrous sulfate and ferrous fumarate [2]. Its absorption is generally lower than that of heme iron, typically ranging from 2% to 20%, and is highly susceptible to influence by other dietary components [3].

Table 1: Fundamental Characteristics of Heme and Non-Heme Iron

| Characteristic | Heme Iron | Non-Heme Iron |

|---|---|---|

| Primary Chemical Form | Iron (Fe²⁺) within protoporphyrin IX ring [1] | Inorganic iron salts (e.g., FeSO₄, Fe fumarate) [2] |

| Bioavailability | High (15% - 35% absorption) [3] [4] | Variable, generally lower (2% - 20% absorption) [3] |

| Absorption Regulation | Less tightly regulated by body iron stores [6] | Tightly regulated via the hepcidin-ferroportin axis [7] [6] |

| Primary Dietary Sources | Hemoglobin/myoglobin in meat, poultry, seafood [1] [8] | Plants, fortified foods, supplements, eggs, dairy [2] [8] |

The following tables catalog prominent dietary sources of heme and non-heme iron, with data compiled from the USDA FoodData Central database to provide standardized portion comparisons [8].

Table 2: Dietary Sources of Heme Iron (from Animal Proteins)

| Food Source | Standard Portion | Iron (mg) |

|---|---|---|

| Oysters | 3 oysters | 6.9 |

| Mussels | 3 ounces | 5.7 |

| Duck, breast | 3 ounces | 3.8 |

| Turkey Egg | 1 egg | 3.2 |

| Bison | 3 ounces | 2.9 |

| Beef | 3 ounces | 2.5 |

| Sardines, canned | 3 ounces | 2.5 |

| Clams | 3 ounces | 2.4 |

| Lamb | 3 ounces | 2.0 |

| Shrimp | 3 ounces | 1.8 |

Table 3: Dietary Sources of Non-Heme Iron (from Plants and Fortified Foods)

| Food Source | Standard Portion | Iron (mg) |

|---|---|---|

| Ready-to-eat cereal, fortified | 1/2 cup | 16.2 |

| Hot Wheat Cereal, fortified | 1 cup | 12.8 |

| Spinach, cooked | 1 cup | 6.4 |

| White Lima Beans, cooked | 1 cup | 4.9 |

| Soybeans, cooked | 1/2 cup | 4.4 |

| Lentils, cooked | 1/2 cup | 3.3 |

| Prune Juice, 100% | 1 cup | 3.0 |

| Sesame Seeds | 1/2 ounce | 2.1 |

| Cashews | 1 ounce | 1.9 |

Absorption Pathways and Regulatory Mechanisms

The absorption of heme and non-heme iron involves distinct transport systems and is subject to different levels of physiological regulation, primarily governed by the hormone hepcidin.

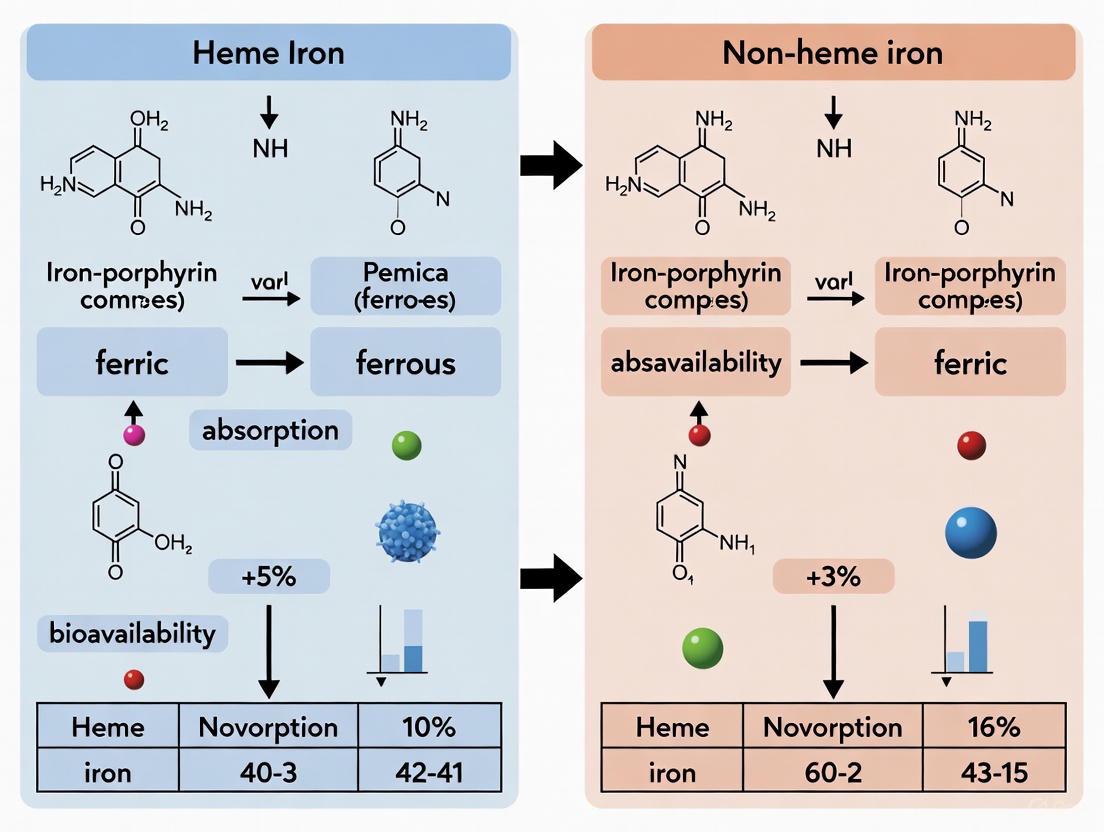

Diagram 1: Iron Absorption Pathways in the Duodenum. Heme iron is absorbed intact via HCP1, while non-heme iron requires reduction before DMT1 transport. Systemic iron levels regulate both pathways via hepcidin control of ferroportin.

The "meat factor" describes an enhancing effect of animal tissue on non-heme iron absorption. Meat not only supplies efficiently absorbed heme iron but also enhances the absorption of co-consumed non-heme iron. Studies have demonstrated that adding meat to a plant-based meal can increase non-heme iron absorption by 40% or more [2]. The exact mechanisms are still being elucidated but are thought to involve peptides released during the partial digestion of muscle tissue, which chelate non-heme iron and keep it soluble and available for absorption in the intestine [9].

Comparative Bioavailability: Experimental Data and Clinical Evidence

Meta-Analysis of Supplementation Studies

A 2024 systematic review and meta-analysis provides direct comparative evidence on the efficacy of heme versus non-heme iron administration. The analysis, which included 13 randomized controlled trials, concluded that in children with anemia or low iron stores, heme iron interventions led to a significantly greater increase in hemoglobin (Mean Difference: 1.06 g/dL; 95% CI: 0.34 to 1.78) compared to non-heme iron [1]. Furthermore, a key finding was that participants receiving heme iron had a 38% relative risk reduction for total side effects (RR 0.62; 95% CI 0.40 to 0.96), suggesting superior tolerability [1]. The authors noted, however, that the overall certainty of the evidence was "very low," highlighting a need for more high-quality trials [1].
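The relationship between the reported relative risk and the 38% figure can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function name is hypothetical, and the only inputs are the RR and confidence limits quoted above [1].

```python
def relative_risk_reduction(rr: float) -> float:
    """Convert a relative risk (RR) into a relative risk reduction, in percent."""
    return (1.0 - rr) * 100.0


# Values quoted in the text for total side effects [1].
rr, ci_low, ci_high = 0.62, 0.40, 0.96

point = relative_risk_reduction(rr)
# The interval flips on conversion: the upper RR bound gives the smallest reduction.
rrr_low = relative_risk_reduction(ci_high)
rrr_high = relative_risk_reduction(ci_low)

print(f"RRR = {point:.0f}% (95% CI {rrr_low:.0f}% to {rrr_high:.0f}%)")
```

Converting the confidence limits in the same way shows how wide the plausible range of benefit is, which fits the authors' caution about the certainty of the evidence.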

The "Meat Factor" in Long-Term Supplementation

A key randomized, double-blinded study investigated whether the "meat factor" provides a long-term benefit when an iron supplement is consumed. Women of reproductive age with iron deficiency were assigned to consume a 32 mg ferrous sulfate supplement with a lunch containing either beef (Animal group) or a plant-based meat alternative (Plant group) for 8 weeks [9].

Table 4: Changes in Iron Status from an 8-Week Supplementation Study [9]

| Iron Status Indicator | Animal Group (Beef Meal) | Plant Group (Plant-Based Meal) | Statistical Significance (Treatment-by-Time) |

|---|---|---|---|

| Serum Ferritin (μg/L) | +10.7 ± 9.6 (Main time effect) | +10.7 ± 9.6 (Main time effect) | Not Significant (P > 0.05) |

| Transferrin Saturation (%) | +5.1% ± 18.7% (Main time effect) | +5.1% ± 18.7% (Main time effect) | Not Significant (P > 0.05) |

| Hemoglobin (g/dL) | +0.5 ± 0.9 (Main time effect) | +0.5 ± 0.9 (Main time effect) | Not Significant (P > 0.05) |

| Body Iron Stores (mg/kg) | +2.8 ± 3.1 (Main time effect) | +2.8 ± 3.1 (Main time effect) | Not Significant (P > 0.05) |

The results demonstrated that all iron status indicators improved significantly from baseline in both groups. However, there were no statistically significant differences in the improvements between the group consuming the supplement with beef and the group consuming it with the plant-based meat [9]. This suggests that while the "meat factor" is potent in single-meal absorption studies, its contribution may not be substantial when combined with a high-dose iron supplement over a longer period, likely because the pharmacological dose of iron overwhelms the subtle enhancing effect [9].

Physiological Adaptation to Low-Bioavailability Diets

Research indicates that the body can adapt to lower dietary iron bioavailability. A 2025 study investigated non-heme iron absorption in vegans compared to omnivores. Following consumption of 150 g of pistachios (a non-heme source providing ~5.7 mg iron), the area under the curve (AUC) for serum iron was significantly higher in vegans (1002.8 ± 143.9 µmol/L/h) than in omnivores (853 ± 268.2 µmol/L/h) [7]. This enhanced absorption was associated with lower baseline hepcidin levels in the vegan group, indicating a physiological adaptation to a diet reliant on non-heme iron by upregulating the absorption machinery [7].
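A serum iron AUC of this kind is typically obtained by numerical integration of repeated post-meal measurements. The following is a minimal sketch using the trapezoidal rule. The sampling times and concentrations are hypothetical, and the study's actual sampling schedule and integration method are not described here.

```python
from typing import Sequence


def auc_trapezoid(times_h: Sequence[float], conc: Sequence[float]) -> float:
    """Area under a concentration-time curve by the trapezoidal rule.

    The result has units of concentration multiplied by time.
    """
    if len(times_h) != len(conc) or len(times_h) < 2:
        raise ValueError("need at least two paired time/concentration points")
    area = 0.0
    for i in range(1, len(times_h)):
        dt = times_h[i] - times_h[i - 1]
        if dt <= 0:
            raise ValueError("time points must be strictly increasing")
        # Each interval contributes the area of one trapezoid.
        area += dt * (conc[i] + conc[i - 1]) / 2.0
    return area


# Hypothetical serum iron readings over 4 hours (not data from the study).
times = [0, 1, 2, 3, 4]
serum_iron = [15.0, 18.0, 22.0, 20.0, 17.0]
auc = auc_trapezoid(times, serum_iron)
print(auc)
```

Some analyses subtract the baseline concentration before integrating (an incremental AUC). That choice changes the absolute values, so it should be stated alongside any comparison between groups.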

Experimental Protocols for Iron Absorption Studies

Stable Isotope Technique for Non-Heme Iron Absorption

The stable isotope method is a gold-standard technique for precisely measuring non-heme iron absorption in humans. A detailed protocol from a large cross-sectional study is outlined below [6].

Objective: To compare nonheme iron absorption and its regulation between healthy adults of East Asian (EA) and Northern European (NE) ancestry [6].

Population: The study enrolled 504 participants (253 EA, 251 NE), males and premenopausal females aged 18-50, without obesity or conditions affecting iron status [6].

Intervention and Dosing:

- Participants fasted overnight.

- They ingested a stable 57Fe isotope in the form of an aqueous FeSO₄ solution mixed with a syrup vehicle.

- The solution was consumed under supervision to ensure complete dosing.

Sample Collection and Analysis:

- Baseline Blood Draw: Fasting blood samples were collected before isotope administration to measure baseline iron status (serum ferritin, soluble transferrin receptor), hormones (hepcidin, erythropoietin), and inflammatory markers.

- Post-Dosing Blood Draw: A second blood sample was collected 14 days after isotope ingestion. This interval allows for the incorporation of the absorbed isotope into circulating erythrocytes.

- Erythrocyte 57Fe Enrichment Measurement: The enrichment of 57Fe in erythrocytes was quantified using magnetic sector thermal ionization mass spectrometry, a highly precise method for isotope ratio analysis.

- Calculation of Absorption: Percent iron absorption was calculated based on the measured erythrocyte 57Fe enrichment, the estimated blood volume, and the amount of 57Fe administered. The values were normalized to a standard serum ferritin level to facilitate comparison between individuals with different iron stores [6].
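The calculation step above can be sketched as follows. This is a simplified illustration, not the study's actual computation: it treats enrichment as the fraction of circulating iron derived from the tracer, and it assumes a hemoglobin iron content of 3.47 mg/g, 80% incorporation of absorbed iron into erythrocytes, and a ratio-style ferritin correction. All function names, the dose, and the reference ferritin are hypothetical.

```python
HB_IRON_MG_PER_G = 3.47   # assumption: iron content of hemoglobin (mg Fe per g Hb)
RBC_INCORPORATION = 0.80  # assumption: fraction of absorbed iron reaching erythrocytes


def circulating_iron_mg(blood_volume_l: float, hemoglobin_g_per_l: float) -> float:
    """Estimate total iron circulating in hemoglobin."""
    return blood_volume_l * hemoglobin_g_per_l * HB_IRON_MG_PER_G


def percent_absorption(tracer_fraction: float, blood_volume_l: float,
                       hemoglobin_g_per_l: float, dose_mg: float) -> float:
    """Percent of the oral tracer dose absorbed, from erythrocyte enrichment."""
    tracer_in_rbc_mg = circulating_iron_mg(blood_volume_l, hemoglobin_g_per_l) * tracer_fraction
    absorbed_mg = tracer_in_rbc_mg / RBC_INCORPORATION
    return 100.0 * absorbed_mg / dose_mg


def ferritin_corrected(absorption_pct: float, ferritin_ug_l: float,
                       reference_ferritin_ug_l: float) -> float:
    """Scale absorption to a reference ferritin (assumed ratio-style correction)."""
    return absorption_pct * ferritin_ug_l / reference_ferritin_ug_l


# Hypothetical participant: 4.5 L blood, Hb 135 g/L, 5 mg tracer dose.
raw = percent_absorption(tracer_fraction=0.0002, blood_volume_l=4.5,
                         hemoglobin_g_per_l=135.0, dose_mg=5.0)
corrected = ferritin_corrected(raw, ferritin_ug_l=30.0, reference_ferritin_ug_l=40.0)
print(round(raw, 2), round(corrected, 2))
```

In practice, enrichment is derived from measured isotope ratios and natural abundances, and blood volume is estimated from height, weight, and sex, so a real analysis would replace the simplified inputs used here.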

Diagram 2: Stable Isotope Absorption Study Workflow. This protocol measures non-heme iron absorption by tracking a safe, measurable 57Fe isotope through incorporation into red blood cells.

Key Findings: The study found that EA individuals had significantly higher serum ferritin-corrected iron absorption than NE individuals (e.g., 27.4% vs. 14.8% in females), suggesting a physiological predisposition that may increase the risk of iron overload-related diseases [6].

Randomized Controlled Trial (RCT) for Long-Term Efficacy

For comparing the long-term efficacy of different iron forms or dietary enhancers, a well-controlled RCT is the standard design.

Objective: To determine whether consuming an iron supplement with a meal containing animal meat leads to greater improvements in iron status than with a plant-based meat over 8 weeks [9].

Study Design:

- Design: Randomized, double-blinded, parallel-arm.

- Participants: Non-pregnant females of reproductive age (18-40 years) with low iron stores (serum ferritin <25 μg/L). Subjects with conditions affecting iron absorption were excluded.

- Intervention: Participants were randomly assigned to one of two groups:

  - Animal Group: Consumed a 32 mg elemental iron (as ferrous sulfate) supplement with a lunch meal containing 4 oz (113 g) of beef.

  - Plant Group: Consumed the same iron supplement with a lunch meal containing 4 oz (113 g) of a plant-based meat alternative (Beyond Meat).

- Blinding: The study meals were prepared and provided to be identical in appearance, ensuring participant and personnel blinding.

- Duration: 8 weeks of daily supplementation with the test meal.
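Random assignment to two parallel arms, as in the design above, is often implemented with permuted blocks so that group sizes stay balanced during enrollment. The sketch below shows one such scheme. The trial's actual randomization procedure is not described here, so the block size, seed, and participant count are assumptions chosen for illustration.

```python
import random
from typing import List, Sequence


def permuted_block_assignments(n_participants: int,
                               arms: Sequence[str] = ("Animal", "Plant"),
                               block_size: int = 4,
                               seed: int = 2024) -> List[str]:
    """Allocate participants to arms using shuffled blocks of fixed size."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)  # fixed seed makes the allocation list reproducible
    assignments: List[str] = []
    while len(assignments) < n_participants:
        # Each block contains every arm equally often, in random order.
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)
        assignments.extend(block)
    return assignments[:n_participants]


# Hypothetical enrollment of 40 participants.
allocation = permuted_block_assignments(40)
print(allocation.count("Animal"), allocation.count("Plant"))
```

In a double-blinded trial, a list like this would be generated and held by someone not involved in enrollment or outcome assessment.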

Data Collection:

- Primary Outcomes: Biochemical indicators of iron status and anemia.

- Measurements: Serum ferritin, transferrin saturation, soluble transferrin receptor, total body iron, and hemoglobin were measured at baseline and after the 8-week intervention.

Key Outcomes: As summarized in Table 4, both groups showed significant improvements in all iron status indicators, with no statistically significant difference between the Animal and Plant groups [9].

The Scientist's Toolkit: Key Research Reagents and Materials

Table 5: Essential Reagents and Materials for Iron Absorption Research

| Reagent / Material | Function / Application in Research |

|---|---|

| Stable Iron Isotopes (e.g., 57Fe, 58Fe) | Safe, traceable labels for precise measurement of iron absorption and kinetics in human studies [6]. |

| Ferrous Sulfate (FeSO₄) | The most common non-heme iron salt used as a reference compound in comparative bioavailability and supplementation trials [1] [9]. |

| Heme Iron Concentrate | A standardized preparation of heme iron from animal sources (e.g., hemoglobin) used in interventions comparing heme vs. non-heme iron efficacy [1]. |

| Enzyme-Linked Immunosorbent Assay (ELISA) Kits | For quantifying key regulatory hormones and biomarkers, such as hepcidin, serum ferritin, and soluble transferrin receptor (sTfR) [7] [6]. |

| Magnetic Sector Thermal Ionization Mass Spectrometry (TIMS) | High-precision analytical instrument for measuring minute enrichments of stable iron isotopes in biological samples like blood [6]. |

| Phytic Acid / Polyphenol Standards | Used to quantify levels of these dietary inhibitors in test meals or to create standardized meals for absorption studies [7]. |

| Ascorbic Acid (Vitamin C) | Used as a reference enhancer to study the maximum potential absorption of non-heme iron and to control for its effect in experimental diets [3]. |

Iron is a critical micronutrient for fundamental biological processes, including oxygen transport, DNA synthesis, and cellular energy metabolism [10] [11]. Its absorption in the duodenum is a highly regulated process, pivotal to systemic iron homeostasis due to the absence of active excretory pathways [12] [13]. Dietary iron is primarily presented to enterocytes in two distinct forms: heme iron, derived from hemoglobin and myoglobin in animal products, and non-heme iron, the inorganic form found in both plant and animal-based foods [13]. The difference in bioavailability between the two forms is evident in their differential absorption: heme iron constitutes only one-third of dietary iron intake in a typical Western diet yet contributes up to two-thirds of the total iron absorbed, which helps explain the higher prevalence of iron deficiency in vegetarian populations [10] [14]. This disparity in absorption efficiency is governed by two principal transmembrane transporters: Heme Carrier Protein 1 (HCP1) and Divalent Metal Transporter 1 (DMT1). This guide provides a structured, evidence-based comparison of HCP1 and DMT1 for researchers and drug development professionals, detailing their distinct mechanisms, regulation, and roles in iron homeostasis.

Transporter Profiles at a Glance

The following table summarizes the core characteristics of HCP1 and DMT1, highlighting their distinct roles in iron acquisition.

Table 1: Comparative Profile of HCP1 and DMT1

| Feature | Heme Carrier Protein 1 (HCP1) | Divalent Metal Transporter 1 (DMT1) |

|---|---|---|

| Primary Substrate | Heme (Intact Fe²⁺-protoporphyrin IX complex) [14] [13] | Divalent metal ions (Fe²⁺, Zn²⁺, Mn²⁺, Cu²⁺, Pb²⁺) [13] [15] |

| Dietary Iron Source | Heme Iron [14] | Non-Heme Iron [13] |

| Subcellular Localization | Apical membrane of duodenal enterocytes [16] | Apical membrane of enterocytes; endosomal membranes [16] [17] |

| Transport Mechanism | Proton-coupled import of intact heme [16] | Proton-coupled symport [13] [15] |

| Bioavailability Context | Accounts for high bioavailability of heme iron (~20-30%, up to 50%) [14] | Uptake efficiency influenced by dietary enhancers/inhibitors; generally lower bioavailability [14] [13] |

| Regulation by Iron Status | Post-translational shuttling (membrane to cytoplasm) [14] | Transcriptional and post-translational regulation [18] [17] |

Structural and Functional Mechanisms

Protein Structure and Transport Mechanism

HCP1 is a highly hydrophobic protein composed of 446 amino acids, belonging to the major facilitator superfamily (MFS) of proton-coupled transporters [14] [16]. Its proposed function is to facilitate the uptake of the intact heme molecule across the apical brush-border membrane of the duodenal enterocyte.

DMT1, also known as Nramp2 or SLC11A2, is a member of the natural resistance-associated macrophage protein (Nramp) family [17]. It functions as a proton symporter, co-transporting H⁺ and Fe²⁺ ions, a mechanism that is optimal in the acidic microclimate of the duodenum [17] [15]. DMT1 is not iron-specific and also transports other divalent cations, which can lead to competitive inhibition [13].

Intracellular Handling of Iron

The pathways diverge significantly after the initial uptake. Heme iron, transported intracellularly by HCP1, is catabolized in the cytoplasm or endosomes by the microsomal enzyme heme oxygenase (HO-1 or HO-2) [14] [16]. This reaction releases ferrous iron (Fe²⁺), carbon monoxide, and biliverdin. The liberated iron then joins the labile iron pool within the enterocyte, merging with the pathway of non-heme iron [14] [13].

In contrast, non-heme iron enters the cell directly as Fe²⁺ via DMT1. Prior to this uptake, dietary ferric iron (Fe³⁺) must be reduced to the ferrous form on the apical membrane surface. This critical reduction step is mediated by enzymes such as duodenal cytochrome B (Dcytb) [14] [13].

From the shared labile iron pool, iron's fate depends on the body's needs. It can be stored as ferritin or exported out of the enterocyte into the systemic circulation via ferroportin (FPN1), the sole known cellular iron exporter [13] [11]. The ferroxidase hephaestin, associated with ferroportin, oxidizes Fe²⁺ back to Fe³⁺ for loading onto transferrin in the plasma [13].

The diagram below illustrates these coordinated pathways.

Diagram Title: HCP1 and DMT1 Iron Absorption Pathways in Duodenal Enterocyte

Regulation of Absorption Pathways

Systemic and Cellular Regulation

Iron absorption is tightly regulated at both systemic and cellular levels to maintain homeostasis, with the liver-derived peptide hormone hepcidin being the central systemic regulator [14] [18]. Hepcidin binds to ferroportin, inducing its internalization and degradation, thereby reducing iron efflux from enterocytes (and macrophages) into the circulation [14] [11]. This provides a master switch for iron absorption.

Recent ex vivo studies on human duodenal biopsies have revealed that hepcidin's effect is more complex than just post-translational regulation of ferroportin. It also induces transcriptional downregulation of key genes involved in iron absorption. Treatment with hepcidin-25 significantly reduced mRNA levels of FPN1, DMT1, Dcytb, hephaestin, and HCP1 [18]. This demonstrates a coordinated suppression of both heme and non-heme iron uptake pathways in response to high iron status or inflammation.

At the cellular level, the iron-responsive element/iron-regulatory protein (IRE/IRP) system post-transcriptionally regulates the expression of proteins like DMT1 and ferritin in response to cytosolic iron levels [11] [17]. Furthermore, DMT1 activity is rapidly modulated by a "mucosal block" mechanism, where an initial dose of iron triggers the endocytosis of DMT1 from the apical membrane, reducing subsequent uptake [15].

Differential Response to Body Iron Status

A key functional difference between the two pathways is their regulatory response. Non-heme iron absorption via DMT1 is highly responsive to the body's iron status, significantly upregulating during deficiency and downregulating during overload [10] [13]. In contrast, heme iron absorption, while more efficient, demonstrates a more limited capacity for upregulation during iron deficiency [10]. This suggests potential rate-limiting steps at the level of heme catabolism or the handling of the liberated iron within the enterocyte.

Experimental Analysis and Methodologies

Key Experimental Models and Protocols

Research into HCP1 and DMT1 function employs a range of in vitro, ex vivo, and in vivo models.

In Vitro Transport Assays: A common methodology involves expressing HCP1 or DMT1 in heterologous systems like Xenopus laevis oocytes or mammalian cell lines (e.g., HeLa, CHO cells). Functional uptake is quantified using radioisotopic (⁵⁹Fe) or fluorescently labeled substrates (heme for HCP1; Fe²⁺ for DMT1). For instance, HCP1-transfected HeLa cells demonstrated a 2- to 3-fold increase in heme uptake compared to controls, which was temperature-sensitive and competitive with unlabelled heme [10]. Specificity for DMT1 is often confirmed using competitive inhibitors or non-transportable substrates.

Ex Vivo Human Organ Culture: This model uses endoscopically collected duodenal biopsies from human subjects, cultured for several hours with or without experimental treatments like hepcidin-25. Subsequent qPCR and immunoblotting analysis quantify changes in mRNA and protein levels of HCP1, DMT1, and other iron-related genes, providing direct insight into human regulatory physiology [18].
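Changes in mRNA levels from qPCR data of this kind are commonly expressed as a fold change relative to untreated tissue. The sketch below uses the widely used 2^-ΔΔCt calculation as an illustration. Whether the cited study used this exact method is an assumption, and the Ct values and the choice of reference gene are hypothetical.

```python
def relative_expression(ct_target_treated: float, ct_ref_treated: float,
                        ct_target_control: float, ct_ref_control: float) -> float:
    """Fold change of a target gene (treated vs. control) by the 2^-ddCt method.

    Assumes roughly 100% amplification efficiency for both genes.
    """
    # Normalize each target Ct to the reference (housekeeping) gene.
    delta_treated = ct_target_treated - ct_ref_treated
    delta_control = ct_target_control - ct_ref_control
    # Compare the normalized treated sample with the normalized control.
    ddct = delta_treated - delta_control
    return 2.0 ** (-ddct)


# Hypothetical Ct values for DMT1 in a hepcidin-treated vs. untreated biopsy.
fold = relative_expression(ct_target_treated=26.0, ct_ref_treated=18.0,
                           ct_target_control=25.0, ct_ref_control=18.0)
print(fold)
```

A fold change below 1 indicates lower transcript abundance in the treated sample, which is the direction reported for hepcidin-25 treatment [18].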

In Vivo Animal Studies: Closed duodenal loop experiments in rodents involve administering heme or iron doses into isolated intestinal segments. Tissue samples are collected over time to analyze iron uptake morphologically (e.g., using DAB staining for electron microscopy) or to measure the effect of antibodies (e.g., anti-HCP1) or genetic knockdown on tracer absorption [10].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Reagents for Studying HCP1 and DMT1 Function

| Research Reagent | Function & Application |

|---|---|

| ⁵⁹Fe-Labeled Heme | Radioisotopic tracer for quantifying heme iron uptake via HCP1 in transport assays [10]. |

| ⁵⁹FeCl₂ / ⁵⁹FeCl₃ | Radioisotopic tracer for quantifying non-heme iron uptake (requires reduction of Fe³⁺ to Fe²⁺ for DMT1) [13]. |

| Hepcidin-25 | The bioactive peptide hormone used to investigate transcriptional and post-translational regulation of HCP1, DMT1, and FPN1 in cell culture or ex vivo systems [18]. |

| siRNA/shRNA | For targeted knockdown of HCP1 or DMT1 gene expression in cell cultures to study loss-of-function phenotypes and validate transporter specificity [10] [18]. |

| Caco-2 Cell Line | A human intestinal epithelial cell model that undergoes enterocyte differentiation upon confluence; widely used for in vitro iron absorption and transport studies [18]. |

HCP1 and DMT1 are specialized transporters that mediate the absorption of heme and non-heme iron, respectively. HCP1 provides a highly efficient pathway for heme iron uptake, which explains its significant contribution to body iron stores. DMT1 handles the more abundant but less bioavailable non-heme iron, and its activity is more dynamically regulated to match bodily demands. The recent discovery that hepcidin transcriptionally represses both HCP1 and DMT1 underscores a sophisticated, coordinated regulatory mechanism that controls total dietary iron acquisition [18].

Future research should aim to further elucidate the precise protein structure and transport cycle of HCP1, particularly given that the same protein also functions as the proton-coupled folate transporter (PCFT, SLC46A1). The development of specific, high-affinity inhibitors for both transporters would be invaluable for basic research and potential therapeutic applications. Furthermore, understanding how these pathways adapt throughout the human life cycle, from infancy to old age, and in various disease states, remains a critical area for investigation [12]. A deeper molecular understanding of HCP1 and DMT1 will continue to inform strategies for correcting iron deficiency and treating iron overload disorders.

Iron is an essential micronutrient critical for fundamental physiological processes including oxygen transport, cellular energy metabolism, DNA synthesis, and immune function [19] [20]. In humans, iron balance is uniquely regulated at the point of intestinal absorption rather than through active excretion, making bioavailability a crucial determinant of iron status [20] [21]. Dietary iron exists in two distinct forms with markedly different absorption characteristics: heme iron, derived from hemoglobin and myoglobin in animal tissues, and non-heme iron, obtained from plant-based foods, iron-fortified products, and supplements [19] [20]. This review systematically compares the bioavailability of these iron forms, examining the molecular mechanisms underlying their absorption differences, methodological approaches for assessing iron bioavailability, and the implications for public health and therapeutic development.

The profound disparity in absorption efficiency between heme and non-heme iron represents a critical focus for nutritional science and clinical practice. While heme iron typically demonstrates absorption rates of 15-35%, non-heme iron absorption ranges from 2-20% depending on dietary composition and individual iron status [22] [2]. This differential absorption has significant consequences for global iron deficiency prevention strategies and understanding the pathophysiology of iron overload disorders. The following sections provide a comprehensive evidence-based analysis of the factors governing iron bioavailability, with particular emphasis on the experimental approaches used to quantify absorption differences and the molecular pathways that regulate iron uptake.

Quantitative Bioavailability Comparison

The absorption efficiency of heme and non-heme iron has been quantified through multiple methodological approaches including isotopic studies, metabolic balance trials, and algorithm-based predictive models. The consistent finding across these diverse methodologies is the superior bioavailability of heme iron compared to its non-heme counterpart.

Table 1: Comparative Absorption Rates of Heme and Non-Heme Iron

| Iron Form | Typical Absorption Range | Primary Dietary Sources | Key Influencing Factors |

|---|---|---|---|

| Heme Iron | 15-35% [22] [2] | Meat, poultry, fish, seafood [19] [20] | Relatively unaffected by dietary composition [23] |

| Non-Heme Iron | 2-20% [22] [23] | Legumes, grains, fortified foods, vegetables [19] [20] | Strongly influenced by enhancers/inhibitors and individual iron status [19] [21] |

In Western populations, heme iron typically constitutes only 10-15% of total dietary iron intake but may contribute up to 40% of the total iron absorbed due to its enhanced bioavailability [20] [2]. This disproportionate contribution highlights its significant role in maintaining iron homeostasis. The absorption of non-heme iron demonstrates considerably greater variability, with studies reporting bioavailability from mixed diets ranging from 14-18%, while vegetarian diets typically yield only 5-12% absorption due to higher concentrations of inhibitory compounds [21].
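The disproportionate contribution of heme iron follows directly from the intake share and the two absorption rates. The sketch below works through one example. The input values are illustrative choices from within the ranges quoted above rather than measured data, and the function name is hypothetical.

```python
def heme_share_of_absorbed(total_iron_mg: float, heme_fraction: float,
                           heme_absorption: float, nonheme_absorption: float) -> float:
    """Fraction of total absorbed iron that originates from heme iron."""
    heme_absorbed = total_iron_mg * heme_fraction * heme_absorption
    nonheme_absorbed = total_iron_mg * (1.0 - heme_fraction) * nonheme_absorption
    total_absorbed = heme_absorbed + nonheme_absorbed
    if total_absorbed == 0:
        raise ValueError("no iron absorbed; share is undefined")
    return heme_absorbed / total_absorbed


# Illustrative diet: 15 mg iron/day, 12% as heme, 25% heme absorption,
# 5% non-heme absorption (all assumed values within the quoted ranges).
share = heme_share_of_absorbed(15.0, 0.12, 0.25, 0.05)
print(f"{share:.1%} of absorbed iron comes from heme")
```

With these inputs, heme supplies about 40% of absorbed iron despite making up 12% of intake. The result is sensitive to the non-heme absorption rate, which varies widely with meal composition and iron status.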

Table 2: Population-Level Iron Bioavailability from Different Dietary Patterns

| Dietary Pattern | Mean Bioavailability | Key Determinants |

|---|---|---|

| Mixed Diet | 14-18% [21] | Balance of meat content and inhibitory compounds |

| Vegetarian Diet | 5-12% [21] | High phytate and polyphenol content reduces absorption |

| Heme Iron Supplementation | 25-30% [2] | Minimal interaction with dietary inhibitors |

A 2024 systematic review and meta-analysis of randomized controlled trials is consistent with this bioavailability disparity: heme iron interventions produced significantly greater hemoglobin increases in children with anemia or low iron stores than non-heme iron (mean difference 1.06 g/dL) [1]. This evidence supports the clinical relevance of the absorption differences between these iron forms, particularly in populations with elevated iron requirements, although the authors rated the overall certainty of the evidence as very low [1].

Experimental Methodologies for Assessing Iron Bioavailability

Research quantifying heme and non-heme iron absorption employs sophisticated methodological approaches ranging from tightly controlled isotopic studies to population-level observational designs. Each methodology offers distinct advantages and limitations for characterizing iron bioavailability.

Isotopic Tracer Studies

Isotopic techniques represent the gold standard for precise measurement of iron absorption. These studies utilize stable (e.g., ⁵⁷Fe, ⁵⁸Fe) or radioactive (⁵⁵Fe, ⁵⁹Fe) iron isotopes to label test meals, allowing direct quantification of iron incorporation into erythrocytes or measurement of whole-body retention [24]. Participants consume labeled test meals specifically designed to isolate the effects of particular dietary components on iron absorption. Blood samples are collected 10-16 days post-consumption to measure isotope incorporation into hemoglobin, enabling calculation of the percentage of ingested iron absorbed [24]. This approach provides highly accurate absorption data for specific meals under controlled conditions but has limitations in representing long-term dietary patterns and natural eating contexts.

Algorithm-Based Predictive Models

Multiple mathematical algorithms have been developed to predict iron bioavailability from dietary intake data, eliminating the need for complex isotopic methodologies:

- Monsen Model (1978): The pioneering algorithm that estimates iron bioavailability based on heme iron intake and the presence of absorption enhancers in meals [25].

- Hallberg and Hulthén Model (2000): Extends the Monsen model by incorporating adjustment terms for dietary inhibitors like phytates and polyphenols [25].

- Reddy Model (2000): Another meal-based algorithm that accounts for multiple enhancers and inhibitors of non-heme iron absorption [25].

- Probabilistic Approach: Estimates population-level iron absorption by matching the prevalence of inadequate iron intakes with the observed prevalence of low iron stores, using serum ferritin concentrations as the biomarker [24] [25].
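To make the meal-based approach concrete, the sketch below follows the general structure of the Monsen model: heme iron is estimated from the iron in meat, fish, and poultry (MFP), and the non-heme absorption rate depends on the enhancers in the meal. The numeric parameters are the values commonly quoted for the 1978 model at 500 mg iron stores. They are not taken from this article and should be verified against the original publication before use. Function and variable names are hypothetical.

```python
# Assumed parameters (commonly cited for the 1978 model; verify before use).
HEME_FRACTION_OF_MFP_IRON = 0.40  # share of MFP iron counted as heme
HEME_ABSORPTION = 0.23            # heme iron absorption
NONHEME_ABSORPTION = {"low": 0.03, "medium": 0.05, "high": 0.08}


def meal_availability_class(mfp_g: float, ascorbic_acid_mg: float) -> str:
    """Classify a meal by its enhancer content (MFP weight and ascorbic acid)."""
    both_moderate = 30 <= mfp_g <= 90 and 25 <= ascorbic_acid_mg <= 75
    if mfp_g > 90 or ascorbic_acid_mg > 75 or both_moderate:
        return "high"
    if mfp_g >= 30 or ascorbic_acid_mg >= 25:
        return "medium"
    return "low"


def absorbable_iron_mg(mfp_iron_mg: float, other_iron_mg: float,
                       mfp_g: float, ascorbic_acid_mg: float) -> float:
    """Estimate absorbable iron (mg) for a single meal."""
    heme = mfp_iron_mg * HEME_FRACTION_OF_MFP_IRON
    nonheme = mfp_iron_mg * (1.0 - HEME_FRACTION_OF_MFP_IRON) + other_iron_mg
    rate = NONHEME_ABSORPTION[meal_availability_class(mfp_g, ascorbic_acid_mg)]
    return heme * HEME_ABSORPTION + nonheme * rate


# Hypothetical meal: 85 g beef (2.5 mg iron), 3.0 mg iron from other foods,
# and 10 mg ascorbic acid.
estimate = absorbable_iron_mg(2.5, 3.0, mfp_g=85, ascorbic_acid_mg=10)
print(round(estimate, 3))
```

Later algorithms in the list refine this structure by adding continuous adjustment terms for inhibitors, which is one reason their estimates differ from those of this simpler category-based scheme.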

A cross-sectional study comparing these methodologies demonstrated strong correlations between algorithm estimates (r = 0.69-0.85) but significant quantitative differences, with diet-based models (8.5-8.9%) diverging from meal-based models (11.6-12.8%) and the probabilistic approach (17.2%) [25]. This highlights the methodological challenges in translating controlled experimental data to free-living populations with varied dietary patterns.

Serum Ferritin Response Studies

Intervention trials measuring changes in iron status biomarkers, such as serum ferritin and hemoglobin, following supplementation with different iron forms provide clinically relevant bioavailability assessments. A 2024 meta-analysis of randomized controlled trials used this approach and found that heme iron supplementation produced superior hemoglobin responses in iron-deficient children compared to non-heme iron formulations [1]. These studies measure the functional impact of bioavailability differences on established iron status biomarkers, providing evidence for clinical and public health applications.

Diagram 1: Iron Absorption Pathways. This diagram illustrates the distinct intestinal absorption mechanisms for heme and non-heme iron, highlighting the dietary factors that influence non-heme iron uptake and the systemic regulation via hepcidin.

Molecular Mechanisms of Iron Absorption

The disparate bioavailability of heme and non-heme iron originates from their fundamentally different absorption pathways at the molecular level. Understanding these mechanisms provides insight into the factors governing iron homeostasis and potential therapeutic targets for modulating iron absorption.

Heme Iron Absorption Pathway

Heme iron undergoes a specialized absorption process that confers its high bioavailability. The initial uptake occurs via heme carrier protein 1 (HCP1) located on the apical membrane of duodenal enterocytes [2]. Unlike non-heme iron, heme is absorbed intact within the porphyrin ring, which protects it from precipitation and interaction with dietary inhibitors in the intestinal lumen [2]. Once inside the enterocyte, heme oxygenase enzymatically cleaves the porphyrin ring, releasing ionic iron into the intracellular labile iron pool [2]. This pathway bypasses the competitive inhibition and luminal complexation that markedly limit non-heme iron absorption, explaining why heme iron bioavailability remains relatively constant at 15-35% across different dietary contexts [23] [2].

Non-Heme Iron Absorption Pathway

Non-heme iron absorption employs a distinct and more complex pathway that is highly responsive to dietary composition and physiological requirements. Non-heme iron primarily exists in the ferric (Fe³⁺) state in foods but must be reduced to the ferrous (Fe²⁺) form for transport across the intestinal epithelium [19] [20]. This reduction is facilitated by duodenal cytochrome b (Dcytb) and enhanced by ascorbic acid [19]. The divalent metal transporter 1 (DMT1) then mediates apical uptake of ferrous iron into enterocytes [19] [20]. Unlike heme iron absorption, this process is markedly influenced by luminal factors: phytates (in grains and legumes) and polyphenols (in tea and coffee) bind iron in poorly absorbable complexes, and calcium further inhibits uptake, while ascorbic acid and the "meat factor" (cysteine-containing peptides from animal tissue) enhance uptake [19] [20] [22].

Systemic Regulation via Hepcidin

Both absorption pathways converge at the basolateral export step, where ferroportin mediates iron transfer to circulating transferrin. This critical checkpoint is systemically regulated by hepcidin, a hepatic hormone that controls plasma iron concentrations [20]. During iron overload, hepcidin expression increases, promoting ferroportin degradation and reducing iron absorption [20]. Conversely, iron deficiency or elevated erythropoietic demand suppresses hepcidin, increasing ferroportin-mediated iron export [20]. Research indicates that heme and non-heme iron absorption respond differently to hepcidin regulation, with non-heme iron demonstrating greater suppression under high-hepcidin conditions [26]. This differential regulation further contributes to the bioavailability disparity between these iron forms, particularly in inflammatory states where hepcidin concentrations are elevated.

Research Reagents and Methodological Tools

Iron bioavailability research requires specialized reagents and methodological tools to accurately quantify absorption and investigate underlying mechanisms. The following table summarizes essential resources for conducting experimental studies in this field.

Table 3: Essential Research Reagents and Tools for Iron Bioavailability Studies

| Reagent/Tool | Experimental Function | Application Examples |
|---|---|---|
| Stable Iron Isotopes (⁵⁷Fe, ⁵⁸Fe) | Precise measurement of iron absorption using mass spectrometry | Metabolic studies quantifying iron incorporation into erythrocytes [24] |
| Radioiron Isotopes (⁵⁵Fe, ⁵⁹Fe) | Highly sensitive detection of iron absorption and distribution | Whole-body counting and erythrocyte incorporation assays [24] |
| Caco-2 Cell Model | In vitro simulation of intestinal iron absorption | Screening dietary factors affecting non-heme iron bioavailability [19] |
| Serum Ferritin Immunoassays | Quantification of iron storage status | Assessment of functional iron status in intervention trials [1] [24] |
| Hepcidin Assays | Measurement of regulatory hormone levels | Investigation of iron absorption regulation in different physiological states [20] |
| Dietary Assessment Algorithms | Prediction of bioavailable iron intake from food consumption data | Population-level estimation of iron bioavailability [24] [25] |

These research tools enable comprehensive investigation of iron absorption mechanisms from molecular through population levels. Isotopic methods provide the most direct and accurate absorption measurements but require specialized equipment and facilities [24]. Cell culture models offer high-throughput screening capabilities for identifying absorption modifiers but may not fully recapitulate in vivo physiology [19]. Algorithm-based approaches facilitate large epidemiological studies but depend on accurate dietary assessment and validation against biochemical measures [25]. The integration of these complementary methodologies has generated the robust evidence base characterizing the bioavailability disparity between heme and non-heme iron.
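For erythrocyte incorporation assays, the final arithmetic is simple once the amount of tracer recovered in circulating red cells is known. The sketch below assumes that a fixed fraction of absorbed iron is incorporated into erythrocytes; the 80% default is a commonly used convention for adults and is an assumption here, not a value taken from [24], and the function and parameter names are illustrative.

```python
def fractional_absorption(tracer_in_rbc_mg: float, dose_mg: float,
                          incorporation: float = 0.80) -> float:
    """Estimate the absorbed fraction of an oral isotope dose.

    tracer_in_rbc_mg: isotope recovered in circulating erythrocytes (mg)
    dose_mg:          isotope dose given by mouth (mg)
    incorporation:    assumed share of absorbed iron that reaches erythrocytes
    """
    if dose_mg <= 0 or not 0 < incorporation <= 1:
        raise ValueError("dose must be positive and incorporation in (0, 1]")
    return tracer_in_rbc_mg / (dose_mg * incorporation)


# Hypothetical example: 0.32 mg of a 4 mg 57Fe dose recovered in red cells.
absorbed = fractional_absorption(0.32, 4.0)
print(f"Estimated absorption: {absorbed:.1%}")
```

With these illustrative inputs the estimate is roughly 10%, which sits inside the non-heme range discussed in this article.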

Implications for Research and Clinical Practice

The substantial difference in bioavailability between heme and non-heme iron has profound implications for nutritional guidance, public health interventions, and therapeutic development. Understanding these implications is essential for researchers and clinicians working to address iron deficiency and iron overload disorders.

Public Health and Nutritional Guidance

The bioavailability disparity necessitates distinct dietary recommendations based on iron source. The Recommended Dietary Allowance (RDA) for iron for vegetarians is 1.8 times that for meat-eaters, to compensate for lower non-heme iron bioavailability [20]. Strategic dietary combinations can mitigate this difference: consuming vitamin C-rich foods with plant-based meals enhances non-heme iron absorption, while avoiding tea, coffee, and calcium-rich foods during iron-containing meals minimizes inhibition [19] [22]. These approaches are particularly important for population subgroups with elevated iron requirements, including pregnant women, infants, adolescents, and women of reproductive age [20]. Educational initiatives should emphasize both iron content and bioavailability when providing dietary guidance for preventing and treating iron deficiency.
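As a quick illustration of the 1.8 multiplier, the sketch below scales a base RDA to its vegetarian equivalent. The base values used in the example (8 mg/day and 18 mg/day) are illustrative inputs rather than figures stated in this article, and the function name is hypothetical.

```python
VEGETARIAN_FACTOR = 1.8  # multiplier cited in the text [20]


def vegetarian_rda(base_rda_mg: float) -> float:
    """Scale a base iron RDA (mg/day) by the vegetarian factor."""
    return base_rda_mg * VEGETARIAN_FACTOR


# Illustrative base RDAs in mg/day (assumed inputs, not from this article).
for base in (8.0, 18.0):
    print(f"base {base:.0f} mg/day -> vegetarian {vegetarian_rda(base):.1f} mg/day")
```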

Therapeutic Applications and Supplement Development

The tolerability and efficacy profiles of iron supplements vary substantially based on their iron form. Traditional non-heme iron supplements (e.g., ferrous sulfate, ferrous fumarate) frequently cause gastrointestinal adverse effects including dyspepsia and constipation, contributing to poor adherence [1] [2]. Heme iron supplements demonstrate superior tolerability while effectively improving iron status, offering a promising alternative for individuals unable to tolerate conventional supplements [1] [2]. Recent research also indicates that heme and non-heme iron have differential effects on the gut microbiome, with heme iron potentially promoting the growth of pathogenic bacteria more than non-heme iron [26]. This finding has important implications for supplement formulation, particularly for patients with inflammatory bowel diseases or other gastrointestinal conditions.

Safety Considerations and Health Risks

The high bioavailability and less regulated absorption of heme iron present potential health concerns in certain contexts. Epidemiological evidence associates high heme iron intake with increased cardiovascular disease risk, with a meta-analysis reporting a 7% risk increase per 1 mg/day increment in heme iron consumption [26]. In contrast, non-heme iron intake demonstrates no significant association with cardiovascular risk [26]. This differential risk profile may reflect the more tightly regulated absorption of non-heme iron, which reduces the likelihood of excessive iron accumulation. Additionally, the pro-oxidant properties of iron necessitate careful consideration of supplementation strategies, particularly in individuals with adequate iron status [2]. These findings highlight the need for balanced iron intake recommendations that consider both deficiency prevention and overload risk.
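To make the per-increment figure concrete, the sketch below extrapolates the reported 7% increase per 1 mg/day to other intake differences. It assumes a log-linear dose-response (risk multiplies by 1.07 for each additional mg/day), which is a modeling assumption made for illustration and not something established by [26]; the function name is hypothetical.

```python
def relative_risk(extra_heme_mg_per_day: float, rr_per_mg: float = 1.07) -> float:
    """Relative risk for a given difference in heme iron intake,
    assuming risk compounds multiplicatively per 1 mg/day (log-linear model)."""
    return rr_per_mg ** extra_heme_mg_per_day


for delta in (0.5, 1.0, 2.0):
    print(f"+{delta} mg/day -> RR {relative_risk(delta):.3f}")
```

Under this assumption a 2 mg/day difference corresponds to a relative risk of about 1.14 rather than exactly 1.14 from simple addition; the two readings diverge further at larger increments, so extrapolation beyond the studied intake range should be treated with caution.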

Diagram 2: Iron Bioavailability Research Workflow. This diagram outlines the methodological approaches for estimating iron bioavailability, highlighting the integration of isotopic studies, in vitro models, and observational designs with biochemical and dietary assessment methods.

The substantial bioavailability disparity between heme (15-35%) and non-heme (2-20%) iron stems from their distinct absorption pathways, differential regulation by systemic factors, and varying susceptibility to dietary modifiers. This evidence-based analysis demonstrates that the superior bioavailability of heme iron confers advantages for rapidly correcting iron deficiency but may present greater risk for iron overload and specific chronic diseases. Non-heme iron, despite its lower absorption efficiency, benefits from tighter physiological regulation that may offer protection against excess accumulation. Future research should focus on refining bioavailability estimation methods, elucidating the precise molecular mechanisms of the "meat factor," and developing targeted interventions that optimize iron absorption while minimizing potential adverse effects. Understanding these fundamental differences enables researchers and clinicians to make informed decisions regarding dietary recommendations, supplement formulation, and public health strategies aimed at addressing iron-related disorders across diverse populations.

The Role of Hepcidin in Systemic Iron Homeostasis and Regulation

Systemic iron homeostasis is a complex process that must balance the essential biological functions of iron against its potential toxicity. The discovery of hepcidin, a peptide hormone predominantly synthesized in the liver, has revolutionized our understanding of iron regulation. This hormone serves as the master regulator of body iron distribution and absorption, functioning as the principal effector of the hepcidin-ferroportin axis that controls iron flow into plasma [27] [28]. Unlike other minerals, iron lacks a regulated excretory mechanism, making controlled absorption at the duodenal level the critical process for maintaining iron balance [29] [30]. Hepcidin provides this control by regulating the sole known cellular iron exporter, ferroportin, thereby determining how much iron enters the bloodstream from dietary sources, recycled erythrocytes, and hepatic stores [27] [29]. This review examines hepcidin's pivotal role within the context of comparative heme versus non-heme iron bioavailability research, providing researchers and drug development professionals with experimental data and methodological approaches relevant to this field.

Molecular Mechanisms of the Hepcidin-Ferroportin Axis

Hepcidin Synthesis and Regulation

Hepcidin (encoded by the HAMP gene) production in hepatocytes is transcriptionally regulated by multiple pathways that respond to different physiological stimuli. The primary regulatory pathway involves bone morphogenetic protein (BMP) signaling, specifically through a molecular complex of BMP receptors and their iron-specific ligands, modulators, and iron sensors [27] [29]. Under iron-replete conditions, increased transferrin saturation leads to elevated diferric transferrin, which binds to transferrin receptor 2 (TFR2) and interacts with HFE protein, activating the BMP-SMAD signaling pathway that upregulates hepcidin transcription [29] [28]. This sophisticated sensing mechanism ensures hepcidin production increases when body iron stores are sufficient, preventing iron overload.

Additional regulatory pathways include:

- Inflammatory signaling: Interleukin-6 (IL-6) and other inflammatory cytokines activate hepcidin transcription through the JAK-STAT pathway, reducing serum iron availability during infection or inflammation [29] [28].

- Erythropoietic activity: Enhanced erythropoiesis suppresses hepcidin production through erythroblast-derived erythroferrone (ERFE), allowing increased iron availability for hemoglobin synthesis [29] [28].

- Hypoxia: Oxygen deficiency downregulates hepcidin to facilitate increased iron absorption and mobilization [29].

Ferroportin Degradation and Iron Flux Control

Hepcidin exerts its physiological effects by binding to its receptor, the cellular iron exporter ferroportin (FPN, SLC40A1) [27] [29] [30]. This binding induces phosphorylation, internalization, and degradation of ferroportin, thereby reducing iron efflux from target cells [27] [29]. Ferroportin is expressed on the basolateral surface of duodenal enterocytes (where dietary iron is absorbed), macrophages of the reticuloendothelial system (where iron is recycled from senescent erythrocytes), and hepatocytes (which store iron) [27] [30]. By modulating ferroportin levels, hepcidin directly controls: (1) intestinal iron absorption, (2) iron release from storage sites, and (3) plasma iron concentrations [27] [29]. This regulatory mechanism ensures that iron enters the circulation according to bodily needs, preventing both deficiency and excess.
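The regulatory logic described in this section can be summarized as a small lookup. The sketch below is a qualitative aid only: it records the direction of each signal as stated above and deliberately does not attempt to combine competing signals, since the text gives no rule for that. All identifiers are illustrative.

```python
# Qualitative summary of the regulators described above.
# +1 means the signal raises hepcidin, -1 means it suppresses hepcidin.
HEPCIDIN_SIGNALS = {
    "iron_replete": (+1, "BMP-SMAD (TFR2/HFE sensing of diferric transferrin)"),
    "inflammation": (+1, "IL-6 / JAK-STAT"),
    "erythropoietic_drive": (-1, "erythroferrone (ERFE)"),
    "hypoxia": (-1, "pathway not specified in the text"),
}


def describe(signal: str) -> str:
    """Return the downstream consequence of a single regulatory signal."""
    direction, pathway = HEPCIDIN_SIGNALS[signal]
    if direction > 0:
        outcome = "hepcidin up, ferroportin degraded, less iron exported to plasma"
    else:
        outcome = "hepcidin down, ferroportin preserved, more iron exported to plasma"
    return f"{signal}: {outcome} [{pathway}]"


for name in HEPCIDIN_SIGNALS:
    print(describe(name))
```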

The following diagram illustrates the core regulatory pathway of hepcidin synthesis and its interaction with ferroportin:

Comparative Bioavailability: Heme versus Non-Heme Iron

Absorption Mechanisms and Efficiency

Dietary iron absorption occurs primarily in the duodenum and proximal jejunum, with significant differences between heme and non-heme iron pathways [20] [31]. Heme iron, derived from hemoglobin and myoglobin in animal products, is absorbed through a dedicated pathway involving heme carrier protein 1 (HCP1) on enterocyte apical membranes [31]. Once inside the enterocyte, heme is catabolized by microsomal heme oxygenase to release Fe²⁺ [31]. In contrast, non-heme iron, found in both plant sources and animal products, must be reduced from the ferric (Fe³⁺) to the ferrous (Fe²⁺) form by duodenal cytochrome b (Dcytb) before transport via divalent metal transporter 1 (DMT1) across the apical membrane [31] [30]. Both pathways converge at the basolateral membrane, where ferroportin exports iron with assistance from the ferroxidases hephaestin or ceruloplasmin [31] [30].

The critical distinction lies in their absorption efficiency. Heme iron demonstrates significantly higher bioavailability, with absorption rates ranging from 15% to 35%, while non-heme iron absorption varies from 2% to 20%, heavily influenced by dietary factors and individual iron status [20] [22] [32]. This differential absorption contributes to the estimation that mixed diets (containing both iron forms) have iron bioavailability of 14-18%, while vegetarian diets range from 5-12% [20] [21]. Notably, heme iron constitutes only 10-15% of dietary iron intake in Western populations but contributes approximately 40% of total absorbed iron due to its superior bioavailability [20].
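The roughly 40% contribution can be reproduced with simple arithmetic. The sketch below picks one illustrative point inside the ranges quoted above (12% of intake as heme iron, 25% heme absorption, 5% non-heme absorption); these specific values are assumptions chosen for the example, not figures from [20].

```python
def heme_share_of_absorbed(heme_fraction: float, heme_abs: float,
                           nonheme_abs: float) -> float:
    """Share of total absorbed iron that comes from heme iron.

    heme_fraction: fraction of dietary iron intake present as heme iron
    heme_abs:      fractional absorption of heme iron
    nonheme_abs:   fractional absorption of non-heme iron
    """
    heme_absorbed = heme_fraction * heme_abs
    nonheme_absorbed = (1.0 - heme_fraction) * nonheme_abs
    return heme_absorbed / (heme_absorbed + nonheme_absorbed)


share = heme_share_of_absorbed(heme_fraction=0.12, heme_abs=0.25, nonheme_abs=0.05)
print(f"Heme share of absorbed iron: {share:.0%}")
```

The result is sensitive to the non-heme absorption value: raising it toward the upper end of its range lowers the heme share substantially, which is consistent with the strong dependence of non-heme absorption on diet and iron status.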

Table 1: Comparative Absorption Characteristics of Heme and Non-Heme Iron

| Parameter | Heme Iron | Non-Heme Iron |
|---|---|---|
| Chemical Form | Iron incorporated into protoporphyrin IX ring (Fe²⁺) | Ionic iron (Fe²⁺ or Fe³⁺) |
| Dietary Sources | Meat, poultry, fish, seafood | Plants, fortified foods, supplements |
| Absorption Pathway | HCP1 transporter | Dcytb reduction → DMT1 transport |
| Average Absorption Rate | 15-35% | 2-20% |
| Influence of Iron Status | Minimal regulation | Tightly regulated (increased absorption during deficiency) |
| Contribution to Absorbed Iron in Western Diets | ~40% | ~60% |

Dietary Factors Influencing Iron Bioavailability

Numerous dietary components significantly modulate non-heme iron absorption, while heme iron remains relatively unaffected by these factors [20] [22]. Understanding these modifiers is crucial for designing dietary interventions and interpreting research on iron bioavailability.

Enhancers of Non-Heme Iron Absorption:

- Vitamin C (ascorbic acid): Acts as both a reducing agent (converting Fe³⁺ to Fe²⁺) and chelator, counteracting inhibitors like phytates [20] [22].

- Muscle tissue (MFP factor): The mechanism remains unclear, but cysteine-containing peptides in meat, fish, and poultry can enhance non-heme iron absorption 2-3 fold when consumed together [20].

- Organic acids: Citric, malic, and lactic acids can form soluble complexes with iron, enhancing absorption [21].

Inhibitors of Non-Heme Iron Absorption:

- Phytates: Found in whole grains, legumes, and seeds, phytates strongly chelate iron and reduce absorption [20] [21].

- Polyphenols: Present in tea, coffee, red wine, and some cereals, polyphenols form insoluble complexes with iron [20] [21].

- Calcium: High concentrations competitively inhibit both heme and non-heme iron absorption [20] [21].

- Plant proteins: Certain components in soy and other plant proteins may inhibit iron absorption independently of phytate content [21].

Table 2: Dietary Factors Modifying Iron Bioavailability

| Factor | Effect on Heme Iron | Effect on Non-Heme Iron | Mechanism |
|---|---|---|---|
| Vitamin C | Minimal effect | Strong enhancement | Reduction and chelation of iron |
| Phytates | Minimal effect | Strong inhibition | Formation of insoluble complexes |
| Polyphenols | Minimal effect | Strong inhibition | Binding and precipitation |
| Calcium | Moderate inhibition | Moderate inhibition | Competitive inhibition of absorption |
| MFP Factor | N/A (already heme iron) | Enhancement | Luminal carrier formation |
| Gastric Acid | Enhances release from heme | Essential for solubility | Acidic environment maintains solubility |

Experimental Models and Methodologies in Iron Research

In Vivo and Clinical Assessment Protocols

Research investigating hepcidin function and iron bioavailability employs sophisticated experimental approaches. Human studies typically utilize isotopic methods with ⁵⁵Fe or ⁵⁹Fe tracers to precisely quantify iron absorption from single meals or multiple meals over extended periods [21]. These methodologies have revealed that while single-meal studies clearly demonstrate the effects of enhancers and inhibitors, the impact of single dietary components becomes more modest in multi-meal studies with varied diets containing multiple inhibitors and enhancers [21].

Key methodological considerations include:

- Study duration: Short-term (single meal) versus long-term (multi-meal) assessments provide complementary data [21].

- Subject characteristics: Iron status (ferritin levels, transferrin saturation), obesity, and inflammatory status significantly influence results and must be carefully documented [33] [21].

- Dietary control: Precise quantification of all dietary components, including fortification iron and food additives like erythorbic acid, is essential [21].

- Biomarker analysis: Comprehensive assessment includes serum iron, transferrin saturation, ferritin, soluble transferrin receptor, hepcidin, and inflammatory markers like C-reactive protein [22] [32].
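Of the biomarkers listed, transferrin saturation is a derived quantity: serum iron divided by total iron-binding capacity (TIBC), expressed as a percentage. A minimal sketch follows; the input values are hypothetical and the function name is illustrative.

```python
def transferrin_saturation(serum_iron: float, tibc: float) -> float:
    """Transferrin saturation in percent.

    serum_iron and tibc must be in the same units (for example, ug/dL).
    """
    if tibc <= 0:
        raise ValueError("TIBC must be positive")
    return serum_iron / tibc * 100.0


# Hypothetical subject: serum iron 60 ug/dL, TIBC 400 ug/dL.
tsat = transferrin_saturation(60.0, 400.0)
print(f"Transferrin saturation: {tsat:.0f}%")
```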

Molecular and Cellular Techniques

Laboratory-based research has been instrumental in elucidating the molecular mechanisms of hepcidin regulation and function. Key experimental approaches include:

- Gene expression analysis: Quantification of hepcidin (HAMP), ferroportin (SLC40A1), and related genes via RT-PCR and RNA sequencing [29].

- Protein detection: Western blotting, immunohistochemistry, and ELISA for hepcidin, ferroportin, and signaling molecules [29].

- Cell culture models: Primary hepatocytes, hepatoma cell lines (HepG2, HuH7), and intestinal cell models (Caco-2) for pathway analysis [29].

- Genetic manipulation: Knockout and transgenic mouse models, including Hfe⁻/⁻, Tfr2⁻/⁻, and Bmp6⁻/⁻ mice, to study iron regulatory pathways [27] [28] [30].

- Signaling pathway analysis: Investigation of BMP-SMAD, JAK-STAT, and ERK1/2 pathways through phosphoprotein analysis and reporter assays [29].

The following workflow diagram illustrates a comprehensive experimental approach for studying hepcidin regulation and iron bioavailability:

Research Reagents and Methodological Tools

Table 3: Essential Research Reagents for Iron Metabolism Studies

| Reagent/Category | Specific Examples | Research Application |
|---|---|---|
| Cell Culture Models | Primary hepatocytes, HepG2 cells, Caco-2 intestinal cells, J774 macrophages | In vitro investigation of iron uptake, regulation, and trafficking |
| Animal Models | Hfe⁻/⁻, Tfr2⁻/⁻, Bmp6⁻/⁻, Hamp⁻/⁻ mice; mk/mk rats with DMT1 mutations | In vivo study of systemic iron homeostasis and genetic disorders |
| Iron Isotopes | ⁵⁵Fe, ⁵⁹Fe for absorption studies; ⁵⁷Fe for stable isotope tracing | Quantitative measurement of iron absorption and distribution |
| Antibodies | Anti-hepcidin, anti-ferroportin, anti-HFE, anti-TfR1/TfR2, phospho-SMAD antibodies | Protein detection, quantification, and localization |
| Molecular Biology Tools | HAMP promoter constructs, siRNA against TMPRSS6, IRP1/2 expression plasmids | Mechanistic studies of gene regulation and signaling pathways |
| Iron Status Assays | Ferritin ELISA, transferrin saturation, soluble transferrin receptor, hepcidin-25 MS | Assessment of systemic and cellular iron status |
| Signaling Modulators | Recombinant BMP6, IL-6, ERFE; dorsomorphin (BMP inhibitor); STAT3 inhibitors | Pathway-specific manipulation of hepcidin regulation |

Pathophysiological Implications and Therapeutic Applications

Hepcidin Dysregulation in Disease States

Disturbances in the hepcidin-ferroportin axis underlie numerous iron-related disorders [27] [29] [28]. Hepcidin deficiency causes iron overload in hereditary hemochromatosis and in anemias with ineffective erythropoiesis, while hepcidin excess leads to the iron-restricted anemias seen in chronic kidney disease, inflammatory conditions, and some cancers [27] [29]. Understanding these pathological mechanisms has direct implications for diagnosing and treating iron disorders.

Key clinical associations include:

- Hereditary hemochromatosis: Mutations in HFE, TFR2, HJV, or HAMP itself result in inappropriately low hepcidin production, leading to uncontrolled iron absorption and tissue iron overload [29] [28] [30].

- Iron-loading anemias: In conditions like β-thalassemia, enhanced but ineffective erythropoiesis increases erythroferrone (ERFE) production, which sequesters BMP receptor ligands and suppresses hepcidin despite iron overload [29] [28].

- Anemia of inflammation: Chronic inflammatory conditions elevate IL-6, which stimulates hepcidin production, resulting in iron sequestration in macrophages and iron-restricted erythropoiesis despite adequate iron stores [29] [28].

- Iron-refractory iron deficiency anemia (IRIDA): Mutations in the hepcidin inhibitor TMPRSS6 lead to inappropriately high hepcidin levels, impairing iron absorption and causing iron deficiency anemia resistant to oral iron supplementation [29] [28].

Therapeutic Targeting of the Hepcidin-Ferroportin Axis

The elucidation of hepcidin's central role has spurred development of novel therapeutics targeting the hepcidin-ferroportin axis [29] [28]. These include:

- Hepcidin agonists: For treating iron overload disorders, including synthetic hepcidin analogs, hepcidin-inducing agents, and TMPRSS6 inhibitors (antisense oligonucleotides, siRNAs) [29] [28].

- Hepcidin antagonists: For treating anemia of inflammation, including hepcidin-neutralizing antibodies, anticalins, and ferroportin stabilizers [29] [28].

- Erythroferrone-targeting therapies: Potential approaches for managing iron-loading anemias by modulating the ERFE-hepcidin axis [29].

These targeted approaches represent a paradigm shift from conventional iron supplementation or phlebotomy toward mechanism-based treatments that directly address the underlying pathophysiology of iron disorders.

Hepcidin stands as the central regulator of systemic iron homeostasis, orchestrating iron absorption, recycling, and distribution through its targeted degradation of ferroportin. The distinction between heme and non-heme iron bioavailability reveals a complex absorption landscape where heme iron demonstrates superior bioavailability with minimal regulation, while non-heme iron absorption is tightly controlled but significantly influenced by dietary factors and subject iron status. Advanced research methodologies, including isotopic absorption measurements, molecular techniques for pathway analysis, and genetic animal models, have been instrumental in elucidating these mechanisms. The continuing refinement of our understanding of hepcidin biology promises further advances in diagnosing and treating iron disorders, with targeted therapies modulating the hepcidin-ferroportin axis representing a new frontier in clinical management. For researchers and drug development professionals, integrating bioavailability concepts with molecular regulatory mechanisms provides a comprehensive framework for advancing both basic science and clinical applications in iron metabolism.

Iron deficiency (ID) remains one of the most pervasive nutritional disorders worldwide, representing a significant global health challenge with profound implications for human health and economic development. As a condition characterized by inadequate iron stores to meet physiological demands, ID impairs physical activity, cognitive performance, and quality of life while increasing societal healthcare costs. The World Health Organization estimates that approximately 1.62 billion people worldwide are affected by anemia, with iron deficiency responsible for approximately 50% of these cases [32]. Despite being a preventable condition, ID continues to disproportionately affect vulnerable populations across different geographic regions, socioeconomic statuses, age groups, and sexes.

Understanding the relative bioavailability of heme versus non-heme iron is fundamental to addressing the global burden of iron deficiency. Heme iron, derived primarily from animal sources, demonstrates significantly higher bioavailability (15-35% absorption) compared to non-heme iron from plant sources (2-20% absorption) [22]. This differential absorption plays a crucial role in determining population-specific risk factors and designing effective intervention strategies. The complex interplay between dietary patterns, iron absorption mechanisms, and physiological requirements creates a multifaceted public health challenge that requires comprehensive epidemiological assessment and targeted solutions.

This review synthesizes current evidence on the global prevalence, geographical distribution, and population-specific risk factors for iron deficiency, with particular emphasis on the implications of heme versus non-heme iron bioavailability. We present systematically organized quantitative data, experimental methodologies, and analytical frameworks to inform researchers, scientists, and public health professionals working to mitigate this persistent nutritional deficiency.

Global Epidemiology of Iron Deficiency

Current Prevalence and Temporal Trends

The global burden of dietary iron deficiency remains substantial despite decades of intervention efforts. According to the Global Burden of Disease Study 2021, the age-standardized prevalence rate of dietary iron deficiency was 16,434.4 per 100,000 population (95% UI: 16,186.2–16,689.0), with age-standardized disability-adjusted life years (DALYs) of 423.7 per 100,000 (285.3–610.8) [34]. This represents a significant decrease from 1990, with prevalence declining by 9.8% (8.1–11.3) and DALYs decreasing by 18.2% (15.4–21.1) over the three-decade period.

Analysis of data from 1990 to 2019 reveals that the global age-standardized prevalence and DALY rates for childhood iron deficiency have shown consistent declines, with an average annual percentage change (AAPC) of -0.14% for prevalence and -0.25% for DALY rates [35]. The most significant decline in age-standardized prevalence rate occurred between 2015 and 2019, while DALY rates decreased most substantially between 2017 and 2019. Despite these improvements, iron deficiency remains the highest contributor to disability among all nutritional deficiencies globally [36].
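The reported AAPC values can be sanity-checked against the endpoint rates. Published AAPC figures are usually derived from regression on annual estimates, so the sketch below is only an approximation: it computes the constant annual rate of change implied by the 1990 and 2019 childhood rates reported in Table 1. The function name is illustrative.

```python
def annualized_change(start: float, end: float, years: int) -> float:
    """Constant annual rate of change that takes `start` to `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0


YEARS = 2019 - 1990

# Childhood age-standardized prevalence and DALY rates per 100,000 (Table 1).
prevalence_rate = annualized_change(20972.41, 20146.35, YEARS)
daly_rate = annualized_change(751.98, 698.90, YEARS)

print(f"Prevalence: {prevalence_rate * 100:.2f}% per year")
print(f"DALYs:      {daly_rate * 100:.2f}% per year")
```

Both endpoint-based estimates round to the AAPC values quoted above, which suggests the table and the text are internally consistent.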

Table 1: Global Burden of Dietary Iron Deficiency (1990-2021)

| Metric | 1990 Value | Latest Value (Year) | Percentage Change | Uncertainty Interval (Latest Value) |
|---|---|---|---|---|
| Age-Standardized Prevalence Rate (per 100,000) | 18,210 | 16,434.4 (2021) | -9.8% | 16,186.2–16,689.0 |
| Age-Standardized DALY Rate (per 100,000) | 518.1 | 423.7 (2021) | -18.2% | 285.3–610.8 |
| Childhood ASPR (per 100,000) | 20,972.41 | 20,146.35 (2019) | -3.9% | 19,407.85–20,888.54 |
| Childhood DALY Rate (per 100,000) | 751.98 | 698.90 (2019) | -7.1% | 466.54–1,015.31 |
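The percentage changes in Table 1 follow directly from the start and end values. A minimal sketch of the calculation, using the table's own figures (the dictionary keys are shorthand labels introduced here):

```python
def percent_change(start: float, end: float) -> float:
    """Relative change from start to end, in percent."""
    return (end - start) / start * 100.0


# (1990 value, latest value) pairs taken from Table 1.
table_1 = {
    "prevalence_all_ages": (18210.0, 16434.4),
    "daly_all_ages": (518.1, 423.7),
    "prevalence_childhood": (20972.41, 20146.35),
    "daly_childhood": (751.98, 698.90),
}

changes = {name: round(percent_change(a, b), 1) for name, (a, b) in table_1.items()}
for name, value in changes.items():
    print(f"{name}: {value}%")
```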

Geographical Disparities and Socioeconomic Determinants

Significant geographical disparities exist in the distribution of iron deficiency burden, strongly correlated with socioeconomic development levels. The Socio-demographic Index (SDI), a composite measure of income, educational attainment, and fertility rates, demonstrates an inverse relationship with ID burden [35]. Regions with low SDI consistently exhibit higher prevalence rates, with Sub-Saharan Africa and South Asia bearing the greatest burden.

Analysis of GBD 2019 data reveals that the highest national point prevalences of anemia (as a proxy for severe iron deficiency) were found in Zambia (49,327.1), Mali (46,890.1), and Burkina Faso (46,117.2) per 100,000 population [37]. In contrast, high-SDI countries have achieved substantially greater improvements in iron status over the past three decades, demonstrating a 25.7% reduction in ID burden compared to only 11.5% in low-SDI countries [34].

Between 1990 and 2017, inequalities in ID burden increased despite the overall decline in prevalence, with the Gini coefficient rising from 0.366 to 0.431, indicating widening disparities between regions [36]. The East Asia & Pacific and South Asia regions made substantial progress in ID control across both sexes, while Sub-Saharan Africa experienced a persistently high burden, particularly among men (age-adjusted DALYs per 100,000 population: 572.5 in 1990 and 562.6 in 2017) [36].
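For readers unfamiliar with the Gini coefficient, the sketch below shows one common way to compute it from a set of regional rates. It is an unweighted version applied to hypothetical values; the analysis in [36] may have used population weighting or a different formulation, so this should be read as an illustration of the concept only.

```python
def gini(values):
    """Unweighted Gini coefficient of non-negative values.

    0 means every unit has the same value; values near 1 mean the burden
    is concentrated in very few units.
    """
    xs = sorted(values)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        raise ValueError("need at least one positive value")
    # Rank-weighted sum; ranks start at 1 for the smallest value.
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2.0 * weighted / (n * total) - (n + 1) / n


# Hypothetical DALY rates per 100,000 for five regions (not taken from [36]).
rates = [200.0, 350.0, 500.0, 800.0, 900.0]
print(f"Gini = {gini(rates):.3f}")
```

A rise in this statistic over time, as reported above, means that the remaining burden has become more unevenly distributed even while the overall level has fallen.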

Table 2: Iron Deficiency Burden by Region and Development Indicator

| Region/SDI Category | Age-Standardized Prevalence Rate (per 100,000, 2019) | Age-Standardized DALY Rate (per 100,000, 2019) | Temporal Trend (1990-2019) |
|---|---|---|---|
| Low SDI Regions | 23,500-28,000 (estimated) | 900-1,200 (estimated) | Slow decline (11.5% reduction) |
| High SDI Regions | 8,000-12,000 (estimated) | 200-350 (estimated) | Substantial decline (25.7% reduction) |
| Sub-Saharan Africa | 25,176.2 | 872.1 | Persistent high burden |
| South Asia | 23,847.5 | 801.3 | Significant improvement |
| East Asia & Pacific | 15,234.1 | 512.6 | Major improvement |

Population-Specific Risk Factors

Sex and Age Stratification

Significant disparities in iron deficiency burden exist across sex and age groups. Females consistently bear a disproportionately higher burden of iron deficiency compared to males across almost all geographic regions and age groups. In 2021, the age-standardized prevalence of dietary iron deficiency was 21,334.8 per 100,000 (95% UI: 20,984.8–21,697.4) in females compared to 11,684.7 (11,374.6–12,008.8) in males, representing a nearly two-fold difference [34]. Similarly, DALY rates were 598.0 per 100,000 in females versus 253.0 in males [34].

This sex-based disparity is particularly pronounced during the reproductive years, when women experience regular iron losses through menstruation and have increased requirements during pregnancy and lactation. A study of Polish adolescents aged 15-20 years found that menstruating females had significantly lower iron intake compared to their male counterparts, with particular deficiencies in heme iron consumption [32]. The gap in ID burden between sexes narrows significantly in regions with higher Human Development Index (β = -364.11, p < 0.001), suggesting that socioeconomic development can mitigate some sex-based disparities [36].

Age represents another critical determinant of iron deficiency risk. Children under 5 years represent a particularly vulnerable group due to high iron requirements for growth and development. In 2019, the global number of prevalent cases of ID in children under 15 years was 391,491,699, with 13,620,231 DALYs [35]. The prevalence among children under 5 is notably higher than in older age groups, with the age-standardized prevalence rate for childhood ID at 20,146.35 per 100,000 in 2019 [35].

Table 3: Age and Sex-Specific Burden of Iron Deficiency

| Population Subgroup | Prevalence Rate (per 100,000) | DALY Rate (per 100,000) | Key Risk Factors |

|---|---|---|---|

| Female (all ages) | 21,334.8 | 598.0 | Menstrual blood loss, pregnancy, lactation |

| Male (all ages) | 11,684.7 | 253.0 | Low dietary intake, parasitic infections |

| Children <5 years | 22,500 (estimated) | 850-1,100 (estimated) | Rapid growth, inadequate complementary feeding |

| Female Adolescents (15-20 years) | 19,500 (estimated) | 550-750 (estimated) | Growth spurt, menstrual losses, dietary habits |

| Elderly (>65 years) | 12,000-15,000 (estimated) | 400-600 (estimated) | Chronic diseases, malabsorption, medication use |

Socioeconomic and Dietary Determinants

Socioeconomic status and dietary patterns significantly influence iron deficiency risk through multiple pathways. Lower socioeconomic status is associated with food insecurity, limited access to diverse diets, and higher rates of infections that impair iron absorption or increase losses. The inverse relationship between Socio-demographic Index (SDI) and ID burden highlights the importance of broader developmental factors [35].

Dietary patterns profoundly impact iron status through both the quantity and bioavailability of iron consumed. Heme iron from animal sources (meat, poultry, fish) has higher bioavailability (25-30% absorption) compared to non-heme iron from plant sources (1-10% absorption) [20] [32]. Population groups relying predominantly on plant-based diets without careful attention to enhancers of iron absorption are at increased risk of deficiency. Adolescent females following vegetarian diets have been shown to have significantly lower iron intake, particularly heme iron, compared to their non-vegetarian peers [32].

Several dietary factors can enhance or inhibit iron absorption:

- Enhancers: Vitamin C (found in citrus fruits, bell peppers, tomatoes) significantly improves non-heme iron absorption when consumed with meals [20] [22]. The "MFP factor" (meat, fish, poultry) enhances non-heme iron absorption when animal and plant sources are consumed together [20].

- Inhibitors: Phytates (in whole grains and legumes), tannins (in tea and coffee), and calcium (in dairy products) can reduce non-heme iron absorption when consumed simultaneously with iron-rich foods [20] [22].

Cultural practices and food preparation methods also influence iron status. For example, cooking in iron cookware can increase the iron content of foods by a factor of 1.5 to 3.3, making it a simple, low-cost intervention to improve iron intake [20].

Methodological Framework for Iron Status Assessment

Laboratory Assessment and Diagnostic Protocols

Accurate assessment of iron status requires a comprehensive approach utilizing multiple biochemical markers and clinical assessments. The standard diagnostic workflow begins with hemoglobin measurement to detect anemia, followed by specific iron status parameters to determine if anemia is due to iron deficiency.

The most common method for hemoglobin assessment in population-based surveys is the HemoCue system, which is well suited to field settings [37]. Clinical and research laboratories typically use automated hematology analyzers (e.g., Coulter counters), which react hemoglobin with Drabkin's solution and measure the absorbance of the resulting cyanmethemoglobin [37]. Hemoglobin values from high-altitude populations are adjusted with the WHO-recommended formula to account for increased hemoglobin concentrations at higher elevations.

Key iron status parameters and related tests include:

- Ferritin: The primary iron storage protein and the most specific indicator of iron stores; low levels indicate depleted stores

- Transferrin saturation: Measures the percentage of transferrin bound with iron; reflects iron availability for erythropoiesis

- Soluble transferrin receptor: Elevated in iron deficiency; useful for distinguishing iron deficiency anemia from anemia of chronic disease

- Hemoglobinopathy screening: Essential in regions with high prevalence of thalassemias and hemoglobin variants

The disability weights used in GBD calculations for anemia are: mild anemia 0.004 (0.001-0.008), moderate anemia 0.052 (0.034-0.076), and severe anemia 0.149 (0.101-0.209) [38]. These weights are applied to prevalence data to calculate Years Lived with Disability (YLDs).
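The weighting step described above can be illustrated with a minimal sketch. It assumes the simplest form of the calculation (prevalent cases multiplied by the point-estimate disability weight, summed over severity levels) and ignores the uncertainty intervals and any further adjustments made in the full GBD pipeline. The function name and the example case counts are hypothetical.

```python
# Point-estimate disability weights for anemia quoted in the text [38].
DISABILITY_WEIGHTS = {"mild": 0.004, "moderate": 0.052, "severe": 0.149}


def anemia_ylds(prevalent_cases: dict[str, float]) -> float:
    """Return YLDs as the sum of prevalent cases x disability weight.

    Simplified illustration only: uncertainty intervals and other
    adjustments used in GBD are not modeled here.
    """
    unknown = set(prevalent_cases) - set(DISABILITY_WEIGHTS)
    if unknown:
        raise ValueError(f"unknown severity levels: {sorted(unknown)}")
    return sum(DISABILITY_WEIGHTS[level] * cases
               for level, cases in prevalent_cases.items())


# Hypothetical case counts, not taken from any study.
example_cases = {"mild": 10_000, "moderate": 5_000, "severe": 500}
example_ylds = anemia_ylds(example_cases)
print(f"YLDs: {example_ylds:.1f}")
```

Because the mild weight is so small, the total in this sketch is driven mainly by moderate and severe cases even when mild cases are the most numerous.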

Dietary Assessment and Bioavailability Estimation

Comprehensive assessment of iron intake and bioavailability requires detailed dietary evaluation methods. A Food Frequency Questionnaire (FFQ) designed specifically for iron intake calculation, such as the IRONIC-FFQ validated for Polish adolescents, provides a practical tool for estimating habitual iron intake [32].

The critical distinction between heme and non-heme iron must be incorporated into dietary assessment methodologies. The standard calculation assumes that heme iron constitutes 40% of iron from animal products, while non-heme iron represents 60% of iron from animal products and 100% of iron from plant products [32]. This differentiation is essential for accurate bioavailability estimation.
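As a rough illustration of this split, the sketch below applies the 40%/60% rule to daily iron intake from animal and plant foods. It then brackets absorbed iron using the absorption ranges quoted elsewhere in this article (25-30% for heme, 1-10% for non-heme). The function names and example intakes are hypothetical, and the absorbed-iron range is a crude bound rather than a validated bioavailability algorithm.

```python
HEME_FRACTION_OF_ANIMAL_IRON = 0.40  # assumption stated in the text [32]

# Absorption ranges quoted in this article; used here only as rough bounds.
HEME_ABSORPTION = (0.25, 0.30)
NON_HEME_ABSORPTION = (0.01, 0.10)


def split_iron(animal_iron_mg: float, plant_iron_mg: float) -> tuple[float, float]:
    """Return (heme_mg, non_heme_mg) from animal- and plant-derived iron."""
    heme = HEME_FRACTION_OF_ANIMAL_IRON * animal_iron_mg
    non_heme = (1 - HEME_FRACTION_OF_ANIMAL_IRON) * animal_iron_mg + plant_iron_mg
    return heme, non_heme


def absorbed_iron_range(heme_mg: float, non_heme_mg: float) -> tuple[float, float]:
    """Return a (low, high) bracket of absorbed iron in mg."""
    low = HEME_ABSORPTION[0] * heme_mg + NON_HEME_ABSORPTION[0] * non_heme_mg
    high = HEME_ABSORPTION[1] * heme_mg + NON_HEME_ABSORPTION[1] * non_heme_mg
    return low, high


# Hypothetical daily intake: 5 mg iron from animal foods, 10 mg from plant foods.
heme_mg, non_heme_mg = split_iron(animal_iron_mg=5.0, plant_iron_mg=10.0)
low_mg, high_mg = absorbed_iron_range(heme_mg, non_heme_mg)
print(f"heme={heme_mg:.2f} mg, non-heme={non_heme_mg:.2f} mg, "
      f"absorbed ~{low_mg:.2f}-{high_mg:.2f} mg")
```

The width of the resulting range comes almost entirely from the non-heme term, which mirrors the point that non-heme absorption depends strongly on dietary enhancers and inhibitors.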

Advanced approaches to dietary iron assessment include:

- Chemical analysis of duplicate portions: Direct measurement of iron content in consumed foods

- Isotopic studies: Using stable iron isotopes to precisely measure absorption rates

- In vitro digestion models: Simulating gastrointestinal conditions to predict iron bioavailability

- Dietary pattern analysis: Examining how combinations of foods influence iron absorption

For population-level assessments, the GBD study employs sophisticated modeling techniques, including Spatiotemporal Gaussian Process Regression (ST-GPR) of mean hemoglobin concentration and standard deviation, ensemble model weighting, and calculation of area under the curve for anemia severity distributions [37].
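The area-under-the-curve step can be sketched in a heavily simplified form. The example below assumes hemoglobin is normally distributed with a given mean and standard deviation, whereas the GBD approach described above weights an ensemble of distributions. The default cut-offs (80, 110, and 120 g/L) are the WHO thresholds for non-pregnant women and are an assumption not stated in this article; other groups use different values. The function name is hypothetical.

```python
from statistics import NormalDist


def anemia_severity_shares(mean_hb: float, sd_hb: float,
                           severe_below: float = 80.0,
                           moderate_below: float = 110.0,
                           mild_below: float = 120.0) -> dict[str, float]:
    """Return the population share in each anemia severity band.

    Assumes a single normal distribution of hemoglobin (g/L); this is a
    simplification of the ensemble approach used in GBD.
    """
    dist = NormalDist(mu=mean_hb, sigma=sd_hb)
    p_severe = dist.cdf(severe_below)
    p_below_moderate = dist.cdf(moderate_below)
    p_below_mild = dist.cdf(mild_below)
    return {
        "severe": p_severe,
        "moderate": p_below_moderate - p_severe,
        "mild": p_below_mild - p_below_moderate,
        "none": 1.0 - p_below_mild,
    }


# Hypothetical population: mean 120 g/L, standard deviation 10 g/L.
shares = anemia_severity_shares(mean_hb=120.0, sd_hb=10.0)
for band, share in shares.items():
    print(f"{band}: {share:.1%}")
```

Each share is the area under the assumed density between two cut-offs, so the four values sum to one by construction.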

Research Reagents and Methodological Tools

Table 4: Essential Research Reagents and Methodological Tools for Iron Status Assessment

| Reagent/Tool | Application | Technical Specification | Research Context |

|---|---|---|---|

| HemoCue System | Point-of-care hemoglobin measurement | Portable photometer, disposable microcuvettes | Field surveys, rapid anemia screening [37] |

| Coulter Counter | Automated hematology analysis | Impedance-based cell counting, hemoglobinometry | Clinical laboratories, high-throughput settings [37] |

| Drabkin's Reagent | Hemoglobin quantification | Cyanmethemoglobin method, spectrophotometric detection | Standardized hemoglobin measurement [37] |

| Ferritin ELISA | Iron storage assessment | Immunoassay, chemiluminescent/colorimetric detection | Iron status evaluation, deficiency diagnosis [22] |

| IRONIC-FFQ | Dietary iron intake assessment | Validated food frequency questionnaire, heme/non-heme differentiation | Nutritional epidemiology, intake patterns [32] |

| Altitude Adjustment Formula | Hemoglobin correction | WHO-standardized algorithm | Cross-study comparability, high-altitude populations [37] |

The global burden of iron deficiency remains substantial despite modest improvements over the past three decades. Significant disparities persist across geographical regions, socioeconomic groups, sexes, and age cohorts, with the highest burden concentrated in low-SDI regions and among vulnerable populations including women of reproductive age and young children. The differential bioavailability of heme versus non-heme iron represents a crucial factor in determining population-specific risks and designing effective interventions.

Future strategies to reduce iron deficiency must address both dietary adequacy and bioavailability considerations, with particular attention to enhancing non-heme iron absorption through dietary modifications. Public health interventions should prioritize vulnerable populations while addressing socioeconomic determinants that perpetuate disparities. Continued monitoring using standardized methodologies and advanced analytical approaches will be essential for tracking progress and refining intervention strategies to eliminate this preventable nutritional deficiency.

Assessing Iron Status and Bioavailability: Analytical Methods and Clinical Applications

Iron deficiency remains one of the most prevalent nutritional disorders worldwide, affecting approximately 27% of the global population and representing a significant public health concern [39]. Accurate assessment of iron status is fundamental for both clinical diagnosis and nutritional research, particularly when comparing the relative bioavailability of heme and non-heme iron. The efficacy of iron absorption from dietary sources varies substantially, with heme iron (derived from animal products) demonstrating 25-30% bioavailability compared to just 3-5% for non-heme iron (primarily from plant sources) [39], a narrower estimate than the 1-10% range cited earlier in this article. This differential bioavailability underscores the need for precise and reliable biomarkers to evaluate iron status across diverse physiological and pathological conditions.

This section compares three principal iron status biomarkers: serum ferritin, transferrin saturation (TSAT), and soluble transferrin receptor (sTfR). Each biomarker reflects a distinct aspect of iron metabolism, from storage and transport to cellular demand. We evaluate their performance characteristics, diagnostic accuracy, limitations, and applications in both research and clinical settings, with particular emphasis on their utility in bioavailability studies of heme versus non-heme iron absorption.

Iron Biomarker Comparative Analysis

The following table summarizes the core characteristics, strengths, and limitations of the three primary iron status biomarkers.

Table 1: Comprehensive Comparison of Key Iron Status Biomarkers

| Biomarker | Physiological Role | Reference Ranges | Strengths | Limitations |

|---|---|---|---|---|

| Serum Ferritin | Storage iron compartment; reflects iron reserves [40] | Varies by age/sex/status; Common ID cutoff: <15-30 μg/L [40] [41] | Strong correlation with body iron stores; High specificity for iron deficiency in absence of inflammation [40] | Acute phase reactant - falsely elevated in inflammation, infection, liver disease [42] [40] |

| Transferrin Saturation (TSAT) | Circulating iron transport capacity; calculated as (Iron/TIBC)×100 [42] | <20% indicates iron-deficient erythropoiesis [43] | Assesses functional iron compartment; Useful indicator for iron availability for erythropoiesis [43] | Affected by inflammation, catabolism, malnutrition (reduces transferrin); Diurnal variation in serum iron levels [43] |

| Soluble Transferrin Receptor (sTfR) | Tissue iron demand; reflects erythropoietic activity and iron requirements at cellular level [43] [44] | Highly assay-dependent [44]; >1.63 mg/L associated with tissue ID in heart failure [43] | Unaffected by inflammation [44]; Marker of tissue iron deficiency [43] | Lack of assay standardization; Limited utility in early iron deficiency without anemia; No universally accepted reference ranges [44] |
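The TSAT calculation in the table, (Iron/TIBC)×100, can be written as a short sketch. It assumes serum iron and TIBC are reported in the same units and uses the <20% threshold from the table as a flag for iron-deficient erythropoiesis. The function names and example values are hypothetical, and the flag is not a substitute for clinical interpretation.

```python
def transferrin_saturation(serum_iron: float, tibc: float) -> float:
    """Return TSAT in percent; serum_iron and tibc must share the same units."""
    if tibc <= 0:
        raise ValueError("TIBC must be positive")
    if serum_iron < 0:
        raise ValueError("serum iron cannot be negative")
    return serum_iron / tibc * 100


def suggests_iron_deficient_erythropoiesis(tsat_percent: float,
                                           cutoff_percent: float = 20.0) -> bool:
    """Flag TSAT below the cutoff (<20% in the table above)."""
    return tsat_percent < cutoff_percent


# Hypothetical values in ug/dL.
tsat = transferrin_saturation(serum_iron=50.0, tibc=400.0)
print(f"TSAT = {tsat:.1f}%, flagged = {suggests_iron_deficient_erythropoiesis(tsat)}")
```

Because serum iron shows diurnal variation, as noted in the limitations column, a single TSAT value computed this way is best read alongside the other biomarkers.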

Diagnostic Performance and Clinical Utility

Context-Dependent Diagnostic Accuracy

The diagnostic performance of iron biomarkers varies significantly across different clinical populations and physiological conditions. In critically ill patients with sepsis, standard iron biomarkers demonstrate poor correlation with newer parameters, making accurate diagnosis of iron deficiency particularly challenging [42]. A 2023 study of 90 sepsis patients found no meaningful correlation between standard biomarkers (ferritin, transferrin, TSAT) and newer indicators like reticulocyte hemoglobin equivalent, suggesting limited utility of traditional markers in inflammatory states [42].

The sTfR biomarker shows particular value in detecting tissue-level iron deficiency before it manifests in systemic circulation. In heart failure patients with normal hemoglobin and systemic iron parameters, elevated sTfR levels (>1.63 mg/L) were strongly associated with impaired functional capacity and reduced quality of life [43]. This finding highlights sTfR's sensitivity to subclinical iron deficiency at the tissue level, which may not be detected by ferritin or TSAT measurements.

Comparative Limitations in Specific Populations

Table 2: Biomarker Limitations and Interfering Factors

| Biomarker | Major Interfering Conditions | Impact on Interpretation | Compensatory Assessment Methods |

|---|---|---|---|

| Serum Ferritin | Inflammation, infection, liver disease, metabolic syndrome [42] [40] | Falsely elevated values masking iron deficiency | Concurrent CRP measurement; Alternative biomarkers (sTfR, Ret-He) [42] |

| Transferrin Saturation | Inflammation, malnutrition, catabolic states, diurnal variation [43] | Reduced transferrin independent of iron status | Clinical correlation; Serial measurements; Combination with other biomarkers |

| Soluble Transferrin Receptor | Conditions with increased erythropoiesis (hemolysis, hemoglobinopathies) [44] | Elevated levels not specific to iron deficiency | sTfR-ferritin index; Clinical context; Bone marrow examination (gold standard) |
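The sTfR-ferritin index listed above is commonly defined as sTfR divided by the base-10 logarithm of ferritin. That formula is not given in this article and is stated here as an assumption. The sketch below computes the index without applying any cutoff, because sTfR values are assay-dependent and no universal reference range exists. The function name and example values are hypothetical.

```python
import math


def stfr_ferritin_index(stfr_mg_per_l: float, ferritin_ug_per_l: float) -> float:
    """Return sTfR / log10(ferritin), assuming the common definition of the index.

    Ferritin must exceed 1 ug/L so that the logarithm is positive.
    """
    if stfr_mg_per_l <= 0:
        raise ValueError("sTfR must be positive")
    if ferritin_ug_per_l <= 1:
        raise ValueError("ferritin must be greater than 1 ug/L")
    return stfr_mg_per_l / math.log10(ferritin_ug_per_l)


# Hypothetical values: sTfR 2.0 mg/L, ferritin 100 ug/L.
index = stfr_ferritin_index(stfr_mg_per_l=2.0, ferritin_ug_per_l=100.0)
print(f"sTfR-ferritin index = {index:.2f}")
```

Dividing by log ferritin raises the index when stores are low and sTfR is high, which is why the ratio can help where either marker alone is ambiguous. Any interpretive threshold would need to come from the specific assay's validation data.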

Novel Approaches and Functional Assessments