Global Harmonization of Food Method Validation Protocols: Current Initiatives, Challenges, and Future Pathways


Abstract

This article explores the critical drive towards international harmonization of food method validation protocols. Aimed at researchers, scientists, and drug development professionals, it examines the foundational principles and global regulatory landscape, including initiatives by organizations like ICH and IMDRF. It details the methodological aspects of validation and verification, supported by real-world case studies. The content also addresses common troubleshooting and optimization challenges, such as inter-laboratory variability, and provides a comparative analysis of validation approaches. The article concludes by synthesizing key takeaways and outlining future directions for creating a more unified, efficient, and reliable global framework for food safety and quality assurance.

The Imperative for Global Harmonization: Understanding the Landscape and Key Drivers

In the globalized food industry, the safety and quality of products depend on the reliability of analytical methods used for testing. Harmonization of food method validation refers to the process of aligning technical protocols, performance criteria, and acceptance standards for these analytical methods across international jurisdictions, organizations, and sectors. The primary goal is to ensure that a test method, whether used in a laboratory in the United States, the European Union, or Japan, produces consistent, reliable, and comparable results that are recognized by regulatory authorities and trading partners worldwide. This alignment is crucial for facilitating international trade, protecting public health, and fostering innovation in food safety testing. Without harmonization, manufacturers face redundant testing, regulatory delays, and potential trade barriers, while regulators struggle with recognizing data from different validation systems. This guide objectively compares the key international validation systems and the experimental protocols that underpin them, providing researchers and scientists with a clear framework for navigating the complex landscape of method validation.

Key International Validation Systems and Frameworks

Several international organizations and standards bodies have established frameworks for the validation of food testing methods. The most prominent of these are the International Organization for Standardization (ISO) and AOAC INTERNATIONAL, alongside regional systems like NF Validation. The table below summarizes the core frameworks and their applicable sectors.

Table 1: Key International Method Validation Frameworks

| Framework Name | Governing Body | Primary Focus & Scope | Key Documentary Standard |
|---|---|---|---|
| ISO 16140 Series | International Organization for Standardization (ISO) | Microbiological method validation for the food chain; a comprehensive multi-part protocol for alternative method validation [1]. | ISO 16140-2: Protocol for the validation of alternative (proprietary) methods against a reference method [1]. |
| AOAC Official Methods of Analysis (OMA) | AOAC INTERNATIONAL | Chemical and microbiological methods; validation of standard methods for foods, dietary supplements, and agricultural commodities [2] [3]. | AOAC Appendix J: Guidelines for microbiological method validation, currently under revision to reflect new technologies and user needs [2]. |
| NF Validation | AFNOR Certification (France) | Certification of commercial alternative methods for microbiological analysis and veterinary drug residue screening in Europe [4]. | ISO 16140-2 & proprietary protocols; recognized under EU Regulation 2073/2005 [4]. |
| ICH Q2(R1) / Q2(R2) | International Council for Harmonisation (ICH) | Analytical procedure validation for pharmaceuticals; a rigorous quality-based framework, sometimes referenced as a model for other sectors [5]. | ICH Q2(R2): "Validation of Analytical Procedures" (Implemented in 2025) [5]. |

The push for harmonization is driven by tangible challenges in global trade. A review of risk assessment protocols for Food Contact Materials (FCMs) across the FDA, EU, Mercosur, India, China, Japan, and Thailand highlighted that while the same substances are used globally, they must comply with different regulatory limits and testing requirements in each region, creating inefficiency and uncertainty [6]. The review concluded that there is significant room for harmonization in many areas of risk assessment, which would facilitate global trade and safety assurance [6].

Comparative Analysis of Validation Systems

A direct comparison of the technical requirements, acceptance criteria, and operational aspects of different validation systems reveals both convergence and divergence. This analysis is critical for laboratories and manufacturers operating in multiple markets.

Table 2: Comparative Analysis of Validation System Requirements

| Aspect of Validation | ISO 16140 Series | AOAC Official Methods | NF Validation (Europe) |
|---|---|---|---|
| Core Philosophy | Validation of alternative methods against a standardized reference method [1]. | Fit-for-purpose method validation for adoption as an official standard; includes both proprietary and non-proprietary methods [2]. | Third-party certification of commercial alternative methods for European market access [4]. |
| Key Performance Studies (Microbiology) | Method comparison study & interlaboratory study [1]. | Interlaboratory collaborative study, single-laboratory validation for Performance Tested Methods℠ [3]. | Follows ISO 16140-2 protocol for microbiology; independent certification by AFNOR [4]. |
| Scope & Categorization | 15 defined food categories; validation with 5 categories grants "broad range of foods" status [1]. | Defined food commodity triangles (e.g., dairy, meats, plant proteins) [2]. | Aligned with ISO 16140 food categories; recognized specifically under EU Regulation 2073/2005 [4]. |
| Post-Validation Requirement | Method verification by the end-user laboratory (ISO 16140-3) [1]. | Method verification in the user's laboratory under a quality system (e.g., ISO/IEC 17025) [3]. | Method verification by the user, facilitated by public validation reports and certificates [4]. |
| Regulatory Standing | Internationally recognized; referenced in EU food safety regulations [1] [4]. | Widely recognized by US FDA, USDA, and other international bodies [2]. | Formally recognized by European authorities, including the French DGAL [4]. |

The comparison shows that, while core principles are similar, the pathways to recognition and the specific technical requirements differ. For instance, the ISO 16140 series offers a highly structured, two-stage process (validation and verification) with a clear definition of food categories [1]. AOAC, while also relying on interlaboratory studies, is actively modernizing its statistical approaches: the revision of its Appendix J questions whether culture should remain the "gold standard" for confirmation and how to handle non-culturable targets such as viruses [2]. NF Validation builds on the ISO framework to provide a certification mark for the European market, demonstrating how regional systems can align with international standards [4].
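The food-category rule in Table 2 can be expressed as a short check. This is a minimal sketch assuming only what the table states (15 defined categories, at least 5 tested for a "broad range of foods" claim); the function name `validation_scope` and the category labels are illustrative, and the authoritative category list and claim rules are those in ISO 16140-2 itself.

```python
from __future__ import annotations

# Hypothetical helper illustrating the scope rule summarised in Table 2.
TOTAL_CATEGORIES = 15      # number of defined food categories
BROAD_RANGE_MINIMUM = 5    # categories needed for a "broad range of foods" claim


def validation_scope(tested_categories: set[str]) -> str:
    """Return the scope a validation study could claim for the categories it tested."""
    n = len(tested_categories)
    if n == 0 or n > TOTAL_CATEGORIES:
        raise ValueError(f"expected 1 to {TOTAL_CATEGORIES} categories, got {n}")
    if n >= BROAD_RANGE_MINIMUM:
        return "broad range of foods"
    # Fewer categories: the claim is restricted to what was actually tested.
    return "limited to: " + ", ".join(sorted(tested_categories))


print(validation_scope({"meat", "dairy", "vegetables"}))  # limited to: dairy, meat, vegetables
```

Sorting the category names simply makes the reported scope deterministic, since Python sets are unordered.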

Core Experimental Protocols for Method Validation

The validation of a food testing method, whether microbiological or chemical, follows a structured series of experimental studies designed to generate robust performance data.

The Validation and Verification Workflow

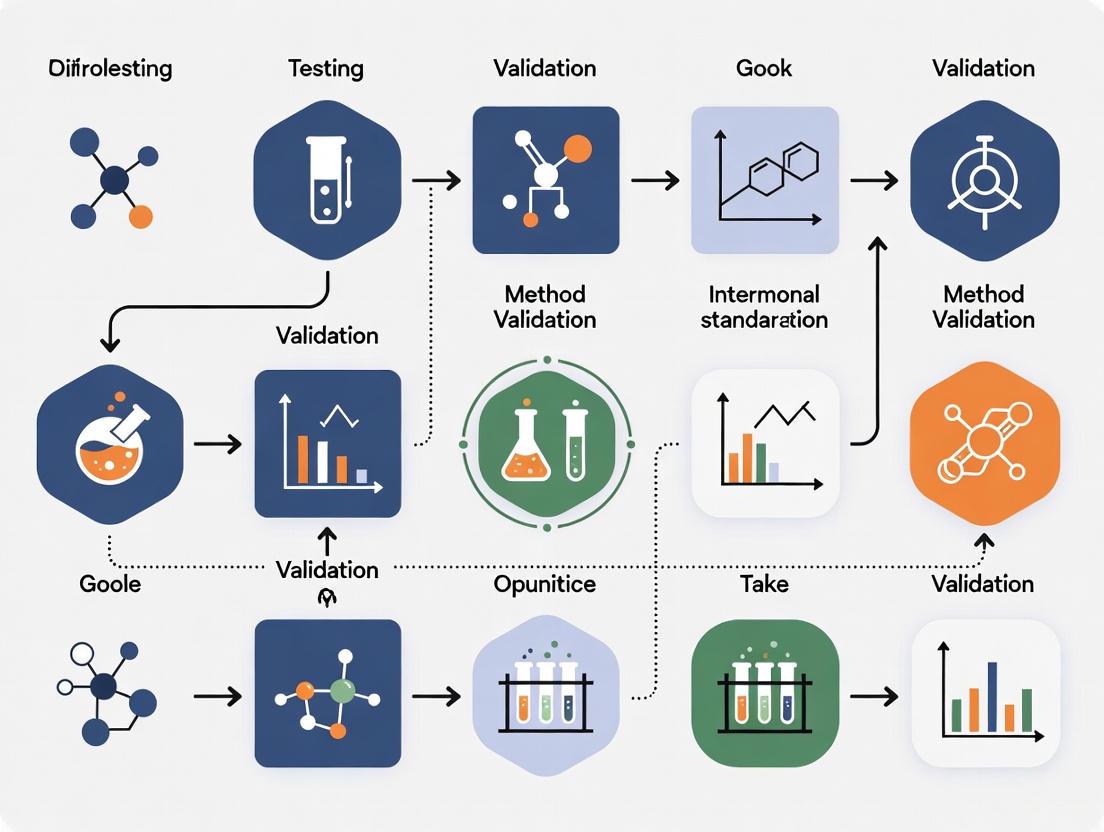

The following diagram illustrates the standard pathway from method development to routine use in a laboratory, integrating the requirements of frameworks like ISO 16140.

Detailed Protocol for a Microbiological Method (Based on ISO 16140-2)

For a qualitative microbiological method (e.g., detecting Salmonella), the validation protocol is meticulously designed to challenge the method with a variety of samples and compare its performance to a reference method.

- Objective: To validate a proprietary, rapid Salmonella detection kit against the ISO 6579-1 reference method.

- Experimental Design: A method comparison study is conducted in a single laboratory, followed by an interlaboratory study involving at least 10 laboratories [1].

- Sample Preparation: The study must include a minimum of five different food categories from a predefined list of 15 (e.g., meat, dairy, vegetables) [1]. For each food category, both artificially contaminated samples (at low and high levels) and uncontaminated samples are tested.

- Testing Procedure: Each laboratory tests the same set of samples using both the alternative method and the reference method. The study is typically conducted blind to avoid bias.

- Data Analysis: The results are compiled into a 2×2 contingency table comparing the alternative and reference methods. Key performance metrics are calculated, including:

  - Relative Accuracy: The degree of agreement between the alternative and reference methods.

  - Relative Sensitivity: The ability of the alternative method to detect samples that the reference method finds positive.

  - Relative Specificity: The ability of the alternative method to return negative results for samples that the reference method finds negative.

  - Relative Level of Detection (RLOD): The ratio of the alternative method's level of detection (LOD50) to that of the reference method; values near 1 indicate comparable detection capability, and values below 1 indicate that the alternative method detects lower contamination levels.
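The metrics listed above can be sketched from the four cells of the 2×2 table. This minimal sketch assumes the classic contingency-table definitions, treating the reference result as the benchmark, and labels the cells positive agreement (PA), negative agreement (NA), positive deviation (PD) and negative deviation (ND); ISO 16140-2:2016 defines its own named statistics and acceptability limits, which take precedence. The RLOD function is a deliberate simplification: it assumes a single-hit Poisson detection model and uses the fraction of positive replicates each method obtains at one shared contamination level, whereas the standard's procedure estimates RLOD from results at several levels. All names are hypothetical.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class PairedResults:
    """Cell counts of the 2x2 table (alternative method vs reference method)."""
    pa: int  # positive agreement: both methods positive
    na: int  # negative agreement: both methods negative
    pd: int  # positive deviation: alternative positive, reference negative
    nd: int  # negative deviation: alternative negative, reference positive

    @property
    def total(self) -> int:
        return self.pa + self.na + self.pd + self.nd


def relative_accuracy(r: PairedResults) -> float:
    """Share of all samples on which the two methods agree."""
    return (r.pa + r.na) / r.total


def relative_sensitivity(r: PairedResults) -> float:
    """Share of reference-positive samples that the alternative method also detects."""
    return r.pa / (r.pa + r.nd)


def relative_specificity(r: PairedResults) -> float:
    """Share of reference-negative samples that the alternative method also reports negative."""
    return r.na / (r.na + r.pd)


def rlod_single_level(p_alt: float, p_ref: float) -> float:
    """RLOD = LOD50_alt / LOD50_ref under a single-hit Poisson model (simplified).

    With POD(d) = 1 - exp(-lam * d): LOD50 = ln(2) / lam and lam = -ln(1 - p) / d,
    so at a shared level d both ln(2) and d cancel out of the ratio.
    """
    for p in (p_alt, p_ref):
        if not 0.0 < p < 1.0:
            raise ValueError("fraction positive must lie strictly between 0 and 1")
    return math.log(1.0 - p_ref) / math.log(1.0 - p_alt)


results = PairedResults(pa=95, na=100, pd=3, nd=2)
print(relative_accuracy(results))    # 0.975
print(rlod_single_level(0.75, 0.5))  # below 1: alternative detects at a lower level
```

Keeping the counts in one small dataclass makes it hard to swap PD and ND by accident, which is the most common error when these ratios are computed by hand.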

Following a successful validation according to ISO 16140-2, the method is considered "validated." However, before a laboratory can use it routinely, it must undergo a verification process as described in ISO 16140-3 [1]. This involves two steps: 1) Implementation verification, where the lab tests a sample from the validation study to prove it can operate the method correctly; and 2) Food item verification, where the lab tests challenging food items specific to its scope to confirm the method performs as expected for those matrices [1].
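The two-step verification sequence described above can be outlined as a gated check in which food item verification is only attempted once implementation verification has passed. This is a hypothetical sketch: the `VerificationStep` structure, the "lower is better" convention, and the numeric limits in the usage lines are placeholders, and the actual performance characteristics and acceptance criteria must be taken from ISO 16140-3.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class VerificationStep:
    name: str
    observed: float          # performance value obtained by the user laboratory
    acceptance_limit: float  # placeholder limit; real criteria come from ISO 16140-3

    def passed(self) -> bool:
        # Assumes a "lower is better" characteristic, e.g. an estimated detection level.
        return self.observed <= self.acceptance_limit


def verify_method(steps: list[VerificationStep]) -> tuple[bool, list[str]]:
    """Run the steps in order and stop at the first failure."""
    log: list[str] = []
    for step in steps:
        ok = step.passed()
        log.append(f"{step.name}: {'pass' if ok else 'fail'}")
        if not ok:
            return False, log
    return True, log


ready, log = verify_method([
    VerificationStep("implementation verification", observed=1.5, acceptance_limit=2.0),
    VerificationStep("food item verification", observed=1.8, acceptance_limit=2.0),
])
print(ready, log)
```

Stopping at the first failure mirrors the ordering in the text: there is little point in verifying difficult food items if the laboratory has not yet shown it can run the method correctly.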

The Scientist's Toolkit: Essential Reagents and Materials

The execution of validation studies requires specific, high-quality reagents and materials to ensure the integrity of the results. The following table details key solutions used in the validation of microbiological and chemical methods for food testing.

Table 3: Essential Research Reagent Solutions for Method Validation

| Reagent / Material | Function in Validation | Application Example |
|---|---|---|
| Selective & Non-Selective Growth Media | To support the growth and isolation of target microorganisms; selective media suppress competing flora. | Tryptic Soy Agar (non-selective) and Xylose Lysine Deoxycholate (XLD) Agar (selective for Salmonella) in a method comparison study [1]. |
| Certified Reference Materials (CRMs) | To provide a traceable and characterized quantity of an analyte for accuracy and calibration studies. | A CRM for aflatoxin M1 used to validate an analytical method in milk, ensuring accurate quantification and recovery calculations [2]. |
| Inactivated Culture Suspensions | To serve as a consistent and safe source of target microorganisms for artificial contamination of food samples. | A suspension of inactivated Listeria monocytogenes used to spike sterile food homogenate for sensitivity and detection limit studies [1] [4]. |
| Primary Secondary Amine (PSA) | A dispersive solid-phase extraction (d-SPE) sorbent used in sample cleanup to remove fatty acids and other organic acids from food extracts. | Used in the QuEChERS method for pesticide residue analysis; its performance must be validated as it can affect recovery of certain analytes like chlorothalonil [7]. |
| Buffers & Diluents | To maintain a stable pH and osmolarity during sample preparation, dilution, and microbial enrichment. | Phosphate Buffered Saline (PBS) used for serial dilution of food samples to ensure microbial viability and accurate enumeration. |
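Several entries in Table 3 (CRMs used for recovery calculations, PSA cleanup that can depress the recovery of analytes such as chlorothalonil) come down to the same arithmetic: recovery relative to a known amount, and the relative standard deviation of replicates. The sketch below is illustrative. Its default limits of 70 to 120% mean recovery and at most 20% RSD mirror criteria commonly applied in EU (SANTE) pesticide-residue method validation guidance, but they are assumptions here and should be replaced by whatever the applicable guideline specifies. The replicate values are invented for the example.

```python
from __future__ import annotations

import statistics


def recovery_percent(measured: float, added: float) -> float:
    """Recovery of a known (spiked or certified) amount, in percent."""
    return 100.0 * measured / added


def rsd_percent(values: list[float]) -> float:
    """Relative standard deviation (sample SD divided by mean), in percent."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def recovery_acceptable(measured: list[float], added: float,
                        low: float = 70.0, high: float = 120.0,
                        max_rsd: float = 20.0) -> bool:
    """Check mean recovery and repeatability against assumed default limits."""
    recoveries = [recovery_percent(m, added) for m in measured]
    mean_recovery = statistics.mean(recoveries)
    return low <= mean_recovery <= high and rsd_percent(recoveries) <= max_rsd


# Five replicate results (mg/kg) for a sample spiked at 0.10 mg/kg; values are illustrative.
replicates = [0.091, 0.098, 0.102, 0.095, 0.089]
print(recovery_acceptable(replicates, added=0.10))  # True
```

Exposing the limits as keyword arguments keeps the calculation separate from the acceptance policy, which is exactly the part that differs between guidelines.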

Harmonization in food method validation is not about creating a single, monolithic system, but about fostering alignment, mutual recognition, and transparency between different systems. The comparative analysis shows a strong foundation built on common principles of scientific rigor, statistical soundness, and demonstrable fitness-for-purpose. The ongoing collaboration between organizations like AOAC and AFNOR to establish mutual recognition agreements is a testament to this trend [4].

The future of harmonization will be shaped by several key developments. First, the integration of advanced statistical models and Bayesian methods is being explored to address inconsistencies in the interpretation of performance characteristics like the limit of detection [2]. Second, the rise of novel foods and ingredients demands the development of updated analytical methods fit for new matrices, pushing validation requirements into uncharted territories [2]. Finally, the expansion of continuous manufacturing in related sectors like pharmaceuticals, guided by new guidelines like ICH Q13, offers a model for how dynamic process control and real-time release testing could eventually influence food safety assurance, requiring a new generation of validated analytical methods [5]. For researchers and scientists, engaging with these evolving international protocols is essential for driving the next wave of innovation in global food safety.

The international harmonization of food method validation protocols represents a critical frontier in global public health and trade. For researchers, scientists, and drug development professionals, navigating the complex patchwork of international regulations is not merely an administrative challenge—it carries significant costs for trade efficiency, public safety, and technological innovation. Regulatory divergence occurs when different jurisdictions implement varying technical requirements, validation standards, or compliance procedures for ostensibly similar products or analytical methods. This fragmentation creates substantial barriers to efficient global commerce and safety assurance.

The pharmaceutical and food industries face particularly acute challenges, where method validation guidelines from agencies like the FDA, EMA, and ICH, while sharing common goals of ensuring data reliability and protecting public health, often emphasize different validation parameters or documentation requirements [8]. Meanwhile, broader regulatory shifts, such as those between the UK and EU following Brexit, illustrate how political developments can trigger significant regulatory misalignment that directly impacts scientific commerce and collaboration [9] [10]. As global supply chains become increasingly interconnected, these disparities in validation protocols and safety standards create inefficiencies that ultimately impair innovation and consumer safety.

The Real-World Costs of Regulatory Divergence

Economic Impacts on Trade and Commerce

Regulatory misalignment creates substantial economic burdens throughout the product development and distribution lifecycle. These costs manifest most directly through:

Duplicate Testing and Certification: Companies operating in multiple markets often must conduct redundant testing to meet differing national requirements. For instance, products certified for sale in the UK may require complete retesting for EU market entry, creating particular hardship for smaller firms that cannot afford duplicative processes [10].

Supply Chain Complexities: A table manufacturer exporting to both UK and EU markets must navigate two sets of regulations for timber sourcing (EUTR), product safety (General Product Safety Directive), and chemical usage (REACH) [10]. Even minor divergences in permitted chemical thresholds—such as cadmium levels in paint—force manufacturers to choose between maintaining separate production lines or designing to the strictest standard, increasing operational complexity [10].

Implementation Costs: The UK's initial introduction of the UKCA marking system, which mirrored EU CE marking requirements, created new compliance burdens before Parliament later allowed use of either mark. This adjustment was estimated to save businesses £640.5 million over 10 years compared with a full transition to UKCA [9].

Safety and Public Health Implications

Beyond economic impacts, regulatory divergence creates tangible risks to public health and safety through:

Inconsistent Safety Standards: Differing requirements for product safety testing, chemical restrictions, and hazard classifications can create protection gaps that vary by jurisdiction. For example, the EU and UK now have numerous examples of specific chemistries with different hazard classifications, meaning REACH restrictions triggered by classification apply differently in each market [9].

Validation Inconsistencies: In food safety, the absence of universally accepted standards for method validation complicates regulatory oversight and method development, potentially compromising the integrity of food safety systems [11].

Compliance Challenges: Knowledge gaps emerge when contract manufacturers outside Europe are unfamiliar with current European controls and restrictions, leading to non-compliance despite well-managed compliance systems [9].

Impediments to Scientific Innovation

Regulatory divergence creates significant headwinds for technological advancement and methodological improvements:

Delayed Adoption of Advanced Technologies: Rapidly advancing technologies in food safety, including pathogen detection and traceability systems, face delayed implementation because standardized validation protocols have not kept pace with innovation [11].

Research and Development Inefficiencies: The absence of harmonized validation requirements means developers must design studies to satisfy multiple regulatory frameworks, increasing costs and complicating study design.

Method Validation Gaps: As noted in AOAC discussions, "the gap between advancing technologies and the standards that are used to support them needs to be addressed and minimized" to ensure new tools are appropriately challenged and qualified for widespread adoption [11].

Table 1: Documented Impacts of Regulatory Divergence Across Sectors

| Sector | Economic Impact | Safety Consequence | Innovation Effect |
|---|---|---|---|
| Manufacturing | Duplicate testing for UK/EU markets; Estimated £640M+ savings from reduced duplication [9] [10] | Differing chemical classifications between UK/EU REACH create consumer protection gaps [9] | Slowed adoption of new production methods and materials |
| Food Safety | Increased compliance costs for multinational market access [8] | Variable validation requirements for pathogen detection methods [11] [12] | Delayed implementation of advanced detection technologies [11] |
| Botanical Products | Multiple validation pathways for botanical identification [11] | Inconsistent authentication requirements affect product quality [11] | Orthogonal method development required for different markets [11] |

Comparative Analysis of Method Validation Frameworks

Global Validation Guidelines and Requirements

International regulatory bodies have established distinct validation guidelines that create a complex landscape for researchers and developers seeking global market access. These frameworks, while sharing common scientific principles, differ in specific requirements and emphases:

ICH Guidelines: The International Council for Harmonisation provides globally influential standards through documents like ICH Q2(R1), emphasizing scientific rigor in analytical performance with focus on parameters like specificity, accuracy, precision, and robustness [8].

FDA Approach: The U.S. Food and Drug Administration emphasizes lifecycle validation and risk management, with requirements that often extend beyond basic analytical validation to include ongoing verification and monitoring [8].

EMA Standards: The European Medicines Agency aligns with ICH guidelines but incorporates region-specific requirements that may differ in emphasis or documentation standards [8].

Country-Specific Variations: Markets like China, Japan, and South Korea continue to develop their own validation requirements, with recent updates spanning food contaminants, additives, and health food standards [13].

The fundamental challenge lies in the fact that "choosing the wrong method validation guideline can cause serious problems," including regulatory submissions being "rejected by agencies, leading to delays, extra testing, and cost overruns" [8]. For example, "if a U.S.-based pharma company submits EMA-style data, the FDA may reject it" [8].

Comparative Experimental Data: A Case Study in Food Quality

Recent empirical research illustrates how strongly measured quality depends on the conditions under which products are held before testing, conditions that different protocols may specify differently. A 2023 study examining quality attributes of four apple cultivars during storage and transportation provides illustrative experimental data on how storage temperature affects these quality parameters [14].

The research evaluated weight loss and firmness changes in apple cultivars ('Granny Smith', 'Royal Gala', 'Pink Lady', and 'Red Delicious') at temperatures ranging from 2°C to 8°C, documenting significant quality differences based on storage conditions. These parameters are crucial as "firmness and loss of weight are the crucial attributes used to evaluate the quality of various fruits, as many other quality attributes are related to these two attributes" [14].

Table 2: Apple Firmness Degradation Across Cultivars and Temperatures [14]

| Apple Cultivar | Initial Firmness (kg/cm²) | Firmness at 48 h, 2 °C (kg/cm²) | Firmness at 48 h, 8 °C (kg/cm²) | Firmness-Loss Model Fit (R² range) |
|---|---|---|---|---|
| Pink Lady | 8.69 | 7.89 | 6.81 | 0.9972–0.9647 |
| Granny Smith | 8.45 | 7.92 | 6.95 | 0.9964–0.9484 |
| Royal Gala | 7.89 | 7.31 | 6.42 | 0.9871–0.9129 |
| Red Delicious | 7.52 | 6.98 | 6.07 | 0.9489–0.8691 |

The experimental results demonstrated that "the degradation of quality was evident in all four cultivars, with temperature having a significant impact on firmness" [14]. The decline was minimal at 2°C but increased progressively with higher storage temperatures. These findings bear directly on efforts to harmonize quality standards across jurisdictions: regulations that specify different storage conditions would produce significantly different quality outcomes even for identical products.
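As a rough illustration of how storage temperature enters such comparisons, the Table 2 values can be turned into average firmness-loss rates. This sketch assumes that firmness declines linearly over the 48 h interval and that the loss rate varies linearly with temperature between the two tested points (2 °C and 8 °C). Neither assumption comes from the study, which fitted its own multiple regression model of temperature and time [14], so interpolated values are indicative only; the function names are hypothetical.

```python
from __future__ import annotations

# Table 2 values in kg/cm²: (initial, 48 h at 2 °C, 48 h at 8 °C)
TABLE2 = {
    "Pink Lady": (8.69, 7.89, 6.81),
    "Granny Smith": (8.45, 7.92, 6.95),
    "Royal Gala": (7.89, 7.31, 6.42),
    "Red Delicious": (7.52, 6.98, 6.07),
}
HOURS = 48.0
T_LOW, T_HIGH = 2.0, 8.0


def loss_rates(cultivar: str) -> tuple[float, float]:
    """Average firmness loss per hour at 2 °C and 8 °C (linear-decline assumption)."""
    f0, f_low, f_high = TABLE2[cultivar]
    return (f0 - f_low) / HOURS, (f0 - f_high) / HOURS


def predicted_firmness(cultivar: str, temp_c: float, hours: float) -> float:
    """Interpolate the loss rate linearly in temperature; refuses to extrapolate."""
    if not T_LOW <= temp_c <= T_HIGH:
        raise ValueError("temperature outside the 2-8 °C range covered by Table 2")
    if not 0.0 <= hours <= HOURS:
        raise ValueError("time outside the 0-48 h range covered by Table 2")
    f0 = TABLE2[cultivar][0]
    k_low, k_high = loss_rates(cultivar)
    k = k_low + (k_high - k_low) * (temp_c - T_LOW) / (T_HIGH - T_LOW)
    return f0 - k * hours


for name in TABLE2:
    k_low, k_high = loss_rates(name)
    print(f"{name}: loss rate at 8 °C is {k_high / k_low:.2f}x the rate at 2 °C")
```

Even this crude calculation shows the 8 °C loss rate at more than twice the 2 °C rate for every cultivar, which is the practical point behind specifying storage temperature in a harmonized protocol.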

Validation in Practice: Microbiological Method Certification

The October 2025 NF VALIDATION certifications for food microbiology methods provide a concrete example of current validation practices. The certification of 142 food microbiology analysis methods validated according to the ISO 16140-2:2016 protocol demonstrates both the movement toward standardization and the specific technical requirements that must be met across international markets [12].

Recent certifications include:

- TEMPO TC (bioMérieux) renewal

- LUMIprobe 24 Listeria monocytogenes (EUROPROBE) validation

- Various Thermo Scientific SureTect PCR assays for pathogen detection

- Neogen Petrifilm Lactic Acid Bacteria Count Plate approval [12]

These certifications reflect the ongoing effort to maintain rigorous methodological standards while accommodating technological advancements in detection methods. The extension of validation for methods like RAPID'E. coli 2 to enable colony counting on a single plate illustrates how validation frameworks must evolve with technological improvements [12].

Methodological Approaches to Validation Assessment

Experimental Protocols for Validation Studies

Robust method validation requires carefully designed experimental protocols that address relevant regulatory requirements while generating scientifically defensible data. Based on current research and validation practices, key methodological considerations include:

Comprehensive Parameter Assessment: Evaluation of accuracy, precision, specificity, detection limit, quantification limit, linearity, and robustness as fundamental validation parameters [8].

Cultivar-Specific Validation: As demonstrated in the apple quality study, validation protocols must account for intrinsic material variations, with research showing significantly different degradation patterns across four apple cultivars under identical storage conditions [14].

Temperature-Integrated Modeling: Development of "multiple regression quality prediction model[s] as a function of temperature and time" that can accurately predict changes in critical quality attributes under variable supply chain conditions [14].

Orthogonal Method Verification: Particularly for botanical identification, employing "a multi-method approach to ensure high certainty" using complementary techniques including HPTLC, microscopy, macroscopic analysis, and emerging genetic testing [11].
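Detection limit, quantification limit and linearity from the parameter list above are often derived from a single calibration experiment. One approach described in ICH Q2 estimates them from the slope S of the calibration line and a standard deviation σ of the response (for example, the residual standard deviation of the regression): DL = 3.3σ/S and QL = 10σ/S. The sketch below implements that approach with ordinary least squares. The function names and the calibration data are illustrative, and the guideline also allows other approaches, such as signal-to-noise or visual evaluation.

```python
from __future__ import annotations

import statistics


def linear_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Ordinary least squares y = slope * x + intercept.

    Returns (slope, intercept, residual standard deviation with n - 2 degrees of freedom).
    """
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired calibration points")
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [yi - (slope * xi + intercept) for xi, yi in zip(x, y)]
    s_res = (sum(r * r for r in residuals) / (len(x) - 2)) ** 0.5
    return slope, intercept, s_res


def r_squared(x: list[float], y: list[float], slope: float, intercept: float) -> float:
    """Coefficient of determination of the fitted line (a common linearity summary)."""
    my = statistics.mean(y)
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


def detection_and_quantitation_limits(sigma: float, slope: float) -> tuple[float, float]:
    """Standard-deviation-and-slope approach: DL = 3.3*sigma/S, QL = 10*sigma/S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Illustrative calibration: concentration (µg/kg) vs instrument response.
conc = [0.0, 1.0, 2.0, 5.0, 10.0]
resp = [0.02, 1.05, 1.98, 5.10, 9.95]
slope, intercept, s_res = linear_fit(conc, resp)
dl, ql = detection_and_quantitation_limits(s_res, slope)
print(f"R² = {r_squared(conc, resp, slope, intercept):.4f}, DL = {dl:.3f}, QL = {ql:.3f}")
```

The fixed ratio between the two limits (QL is about three times DL) follows directly from the 3.3 and 10 multipliers; the residual standard deviation is the only quantity the experiment has to supply.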

The experimental approach used in the apple quality study exemplifies rigorous validation methodology: apples were "harvested at their optimum maturity" from a commercial farm, stored under controlled temperature conditions (2°C-8°C), and measured systematically over time to generate degradation kinetics [14]. The resulting model achieved "an R² value of 0.9544, indicating a high degree of accuracy" in predicting quality changes [14].

Assessment of Validation Tool Reliability

A 2024 methodological study developed a framework for assessing the reliability and validity of food safety culture assessment tools, identifying eleven key elements required for proper tool validation [15]. This research revealed significant variations in how thoroughly different tools implement validation protocols:

Validation Gaps: While "face validation, and pilot testing were evident and appeared to be done the most," many tools showed deficiencies in critical areas, with "content, ecological, and cultural validity" being the least validated for scientific tools [15].

Comprehensive Validation: Of eight tools assessed, "only one tool (CT2) was validated on each of the elements," demonstrating the current inconsistency in validation practices even for established assessment methods [15].

Statistical Limitations: The study found that "none of the tools were assessed for postdictive validity, concurrent validity and the correlation coefficient relating to construct validity," indicating significant methodological gaps [15].
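Concurrent validity, one of the gaps noted above, is commonly assessed by correlating a tool's scores with those of an established instrument completed by the same respondents at the same time. A minimal sketch of the Pearson correlation coefficient used in such checks follows; the function name and score lists are hypothetical, and no acceptance threshold for the coefficient is implied.

```python
from __future__ import annotations

import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation between two paired score lists."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired observations")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical culture scores from a new tool and an established reference tool.
new_tool = [3.2, 4.1, 2.8, 4.6, 3.9, 3.5]
reference_tool = [3.0, 4.3, 2.5, 4.4, 3.7, 3.6]
print(round(pearson_r(new_tool, reference_tool), 3))
```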

This research underscores that "having an established science-based approach is key as it provides a way to determine the trustworthiness of established assessment tools against accepted methods" [15]—a principle that applies equally to method validation in broader regulatory contexts.

Visualization of Method Validation Pathways

The complex process of analytical method validation and regulatory approval can be visualized through the following workflow, which integrates multiple validation components and decision points:

Diagram 1: Method Validation and Regulatory Assessment Pathway

This visualization illustrates the complex pathway from method development through regulatory assessment, highlighting critical decision points where divergent requirements can necessitate additional testing or protocol revisions. The pathway demonstrates how methods may achieve harmonized status across multiple jurisdictions or remain jurisdiction-specific, with the latter creating the inefficiencies and costs documented throughout this analysis.
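The decision logic described for Diagram 1 can be illustrated as a gap analysis: compare the validation evidence a method already has with what each jurisdiction requires, then classify the outcome. The function name, jurisdictions and requirement sets in this sketch are hypothetical placeholders, not actual regulatory requirements.

```python
from __future__ import annotations


def pathway_status(evidence: set[str],
                   requirements: dict[str, set[str]]) -> tuple[str, list[str], dict[str, set[str]]]:
    """Classify a method's regulatory status from its validation evidence.

    Returns (status, jurisdictions accepting the method, missing evidence per other jurisdiction).
    """
    gaps = {j: req - evidence for j, req in requirements.items() if req - evidence}
    accepted = sorted(j for j in requirements if j not in gaps)
    if not gaps:
        status = "harmonized"             # accepted everywhere without extra testing
    elif accepted:
        status = "jurisdiction-specific"  # additional studies needed elsewhere
    else:
        status = "not yet accepted"
    return status, accepted, gaps


# Hypothetical requirement sets for two unnamed jurisdictions.
requirements = {
    "Jurisdiction A": {"accuracy", "precision", "specificity"},
    "Jurisdiction B": {"accuracy", "precision", "specificity", "robustness"},
}
print(pathway_status({"accuracy", "precision", "specificity"}, requirements))
```

The `gaps` dictionary is the practical output: it lists exactly which additional studies each remaining jurisdiction would require, which is where the duplicated testing costs described earlier arise.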

The Researcher's Toolkit: Essential Materials for Validation Studies

Table 3: Essential Research Reagent Solutions for Validation Studies

| Reagent/Material | Function in Validation | Application Examples |
|---|---|---|
| Reference Standards | Provide benchmark for accuracy and calibration | Method qualification, instrument calibration [8] |
| Quality Control Materials | Monitor assay performance and variability | Precision studies, longitudinal monitoring [8] [14] |
| Certified Reference Materials | Establish metrological traceability | Method verification, proficiency testing [11] |
| Culture Collections | Support microbiological method validation | Pathogen detection assays, enrichment studies [12] |
| Chemical Standards | Enable quantification and identification | Contaminant testing, additive analysis [13] |
| Botanical Reference Materials | Support authentication and identification | Orthogonal botanical ID, purity assessment [11] |

The high costs of regulatory disparity—in economic, safety, and innovation dimensions—present a compelling case for intensified international cooperation on validation protocols. Current examples from pharmaceutical method validation, food safety certification, and product compliance demonstrate that divergence creates significant inefficiencies without necessarily enhancing safety outcomes.

The path forward requires concerted effort across multiple domains: regulatory agencies must prioritize mutual recognition agreements and harmonized standards; researchers should develop validation protocols that accommodate technological advancements while maintaining scientific rigor; and industry stakeholders must advocate for streamlined requirements that maintain safety while reducing redundant testing.

As global supply chains continue to integrate and scientific innovation accelerates, establishing internationally harmonized validation frameworks becomes increasingly essential. Such harmonization would reduce costs, enhance safety through consistent standards, and accelerate the adoption of innovative technologies—ultimately benefiting consumers, industry, and regulatory authorities alike. The scientific community has both the expertise and the imperative to lead this transition toward more collaborative, efficient, and effective global regulatory systems.

In the globalized landscape of food and pharmaceutical development, the harmonization of method validation protocols is not merely a regulatory convenience but a fundamental requirement for ensuring product safety, facilitating international trade, and accelerating the availability of innovative products to consumers worldwide. The existence of disparate regional regulations creates significant logistical and scientific challenges for multinational companies and laboratories, complicating the path from development to market [16]. This article objectively maps the roles of key international organizations—the International Council for Harmonisation (ICH), the International Medical Device Regulators Forum (IMDRF), the World Health Organization (WHO), and various regional bodies—in shaping this landscape in 2025. By comparing their scopes, outputs, and memberships, and by detailing the experimental protocols they endorse, this guide provides researchers, scientists, and drug development professionals with a clear framework for navigating global regulatory expectations. The central thesis is that while these organizations have distinct mandates, their collaborative and increasingly aligned efforts are crucial for building a robust, efficient, and cohesive global regulatory system [17].

Organizational Landscape and Comparative Analysis

A detailed analysis of the selected international organizations reveals distinct yet complementary roles in harmonizing technical requirements and regulatory practices. The following table provides a structured comparison of their primary activities, based on documented outputs from 2018 to 2024, highlighting their unique contributions to global harmonization [17].

Table 1: Comparative Analysis of International Regulatory Organizations

| Organization | Primary Focus & Scope | Key Output Types | Dominant Activity Domains | Relevance to Food Method Validation |
|---|---|---|---|---|
| ICH (International Council for Harmonisation) | Technical requirements for human pharmaceuticals; global harmonization of standards for quality, safety, and efficacy [16] [17]. | Guidance (e.g., ICH Q2(R2) on analytical procedure validation) [16]. | Quality, Non-clinical, Clinical [17]. | Indirect; principles of analytical method validation (Q2(R2)) are foundational and often inform best practices in other sectors, including food chemical safety [16]. |
| IMDRF (International Medical Device Regulators Forum) | Medical devices, including AI-enabled software (SaMD, SiMD) and associated software [18]. | Guidance, Collaborative work, Standards and norms (e.g., on AI change control) [18]. | Medical Devices, Digital Health, Innovative Therapies [17]. | Limited; focused on devices, but its work on AI (GMLP principles) is relevant for emerging digital food safety tools [18]. |
| WHO (World Health Organization) | Public health; ensuring the quality, safety, and efficacy of medicines globally, with a focus on essential medicines and capacity building [17]. | Standards and norms, Guidance, Information, Training [17]. | Public Health, Quality, Pharmacovigilance [17]. | High; establishes global norms and standards for food safety and quality, crucial for validating methods in public health contexts. |
| Regional Bodies (e.g., FDA, EMA, Health Canada) | Regional implementation and enforcement of harmonized standards; often adopt ICH guidelines into regional regulation [16] [19]. | Guidance, Regulatory decisions, Enforcement. | Varies by region; covers all domains within their jurisdiction. | Direct; regional authorities (e.g., FDA HFP) set enforceable validation requirements for methods used in regulatory submissions within their markets [19]. |

The interactions and collaborative efforts between these organizations are fundamental to a functional global system. The following diagram visualizes the logical relationships and primary collaborative pathways between these key players in the international regulatory ecosystem.

Diagram 1: Interaction of International Regulatory Organizations. This diagram shows how global standards set by ICH, IMDRF, and WHO are adopted and implemented by regional bodies, with ongoing collaboration between the international organizations.

A 2025 scientific mapping of regulatory activities confirms that quality is the most active domain for international organizations, followed by public health, convergence and reliance, and pharmacovigilance [17]. This analysis also demonstrates that membership in one international organization often correlates with participation in others. For instance, ICH member countries are significantly more active in other multinational regulatory organizations compared to non-member countries, suggesting that engagement in one forum facilitates broader international regulatory cooperation [17]. This synergy is critical for reducing duplication and promoting a more predictable regulatory environment for industry and researchers.

Detailed Methodologies and Validation Protocols

A core component of harmonization lies in the standardization of experimental protocols for method validation. The following section details key methodologies prescribed by leading international standards, providing a clear roadmap for laboratory implementation.

ICH Q2(R2) for Analytical Procedure Validation

The revised ICH Q2(R2) guideline provides the foundational framework for validating analytical procedures for pharmaceuticals, and its principles are often applied in food chemical safety [16]. Validation is designed to demonstrate that an analytical method is fit for its intended purpose. The core parameters and their experimental protocols are as follows:

Accuracy: This is assessed by determining the closeness of agreement between the measured value and a reference accepted as a true value. The protocol involves:

- Sample Preparation: Analyze a reference material of known purity or concentration. Alternatively, spike a placebo or blank matrix with a known amount of the analyte.

- Testing: Perform multiple analyses (minimum n=9) across at least three concentration levels covering the specified range.

- Data Analysis: Calculate the percentage recovery of the analyte or the difference between the mean and the accepted true value [16].
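As a minimal sketch of the data-analysis step, the function below computes the percentage recovery of each determination, the mean recovery, and the bias (mean minus accepted true value). The function name and the example values are hypothetical and only illustrate the arithmetic; acceptance limits still come from the applicable guideline.

```python
from statistics import mean

def accuracy_summary(measured, true_value):
    """Return (per-result % recovery, mean % recovery, bias) for replicate results.

    measured: results for a material whose accepted true value is true_value,
    e.g. a reference material or a spiked blank matrix (hypothetical helper).
    """
    if true_value <= 0:
        raise ValueError("true_value must be positive")
    recoveries = [100.0 * m / true_value for m in measured]
    bias = mean(measured) - true_value  # expressed in the measurement's own units
    return recoveries, mean(recoveries), bias

# Hypothetical data: three determinations of a material with an accepted value of 10.0 mg/kg
rec, mean_rec, bias = accuracy_summary([9.8, 10.1, 9.9], 10.0)
print([round(r, 1) for r in rec], round(mean_rec, 2), round(bias, 3))
```

Reporting both quantities is deliberate: recovery is convenient for spiked matrices, while bias in measurement units is easier to compare with a reference material's certified uncertainty.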

Precision: This evaluates the degree of agreement among individual test results from multiple samplings of a homogeneous sample. The protocol is stratified into three tiers:

- Repeatability (Intra-assay): Multiple analyses (minimum n=6) of the same homogeneous sample under identical operating conditions over a short interval.

- Intermediate Precision: Experiments performed on different days, with different analysts, or using different equipment within the same laboratory.

- Reproducibility (Inter-laboratory): Precision between different laboratories, typically assessed during collaborative studies [16].
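Precision at each tier is usually reported as a relative standard deviation. A minimal sketch for a repeatability set, assuming the sample standard deviation (n - 1 denominator) is intended; the six values are hypothetical.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """Relative standard deviation in %, using the sample standard deviation (n - 1)."""
    if len(values) < 2:
        raise ValueError("need at least two results")
    return 100.0 * stdev(values) / mean(values)

# Hypothetical repeatability set: six determinations at 100 % of the test concentration
repeatability = [100.2, 99.8, 100.5, 99.6, 100.1, 99.9]
print(f"Repeatability %RSD (n={len(repeatability)}): {percent_rsd(repeatability):.2f}")
```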

Specificity: The protocol must demonstrate the method's ability to unequivocally assess the analyte in the presence of other potentially interfering components.

- Interference Testing: Analyze samples containing impurities, degradation products, or matrix components that are expected to be present.

- Comparison: Compare the results with those obtained from a pure analyte standard to confirm that the response is due solely to the target analyte [16].

Linearity and Range: This establishes that the method produces results directly proportional to analyte concentration.

- Preparation: Prepare a series of standard solutions (minimum 5 concentrations) across the claimed range.

- Analysis: Analyze each concentration multiple times.

- Statistical Analysis: Plot the measured response against the concentration and perform linear regression analysis. The range is the interval between the upper and lower concentration levels for which linearity, accuracy, and precision have been demonstrated [16].
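The regression step can be sketched with an ordinary least-squares fit that also reports the coefficient of determination (R²). The fit is written out for transparency (Python 3.10+ also offers `statistics.linear_regression` for slope and intercept). Replicate injections at each level can be passed as repeated x values. The calibration data below are hypothetical, and a residual plot is usually inspected alongside R².

```python
from statistics import mean

def linear_fit(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept.

    Returns (slope, intercept, r_squared).
    """
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need matching x and y with at least three points")
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    if ss_tot == 0:
        raise ValueError("y values must not all be equal")
    return slope, intercept, 1.0 - ss_res / ss_tot

# Hypothetical five-level calibration: concentration (% of target) vs detector response
levels = [50, 75, 100, 125, 150]
responses = [0.251, 0.374, 0.502, 0.627, 0.749]
slope, intercept, r2 = linear_fit(levels, responses)
print(f"slope={slope:.5f}, intercept={intercept:.4f}, R²={r2:.5f}")
```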

ISO 16140 Series for Microbiological Method Validation

For food microbiology, the ISO 16140 series is the internationally recognized standard for the validation and verification of alternative (proprietary) methods against reference methods [12] [1]. The workflow for implementing a new microbiological method involves two critical stages: validation and verification, as outlined in the diagram below.

Diagram 2: Microbiological Method Workflow. This diagram outlines the two-stage process per the ISO 16140 series: initial method validation to prove it is fit-for-purpose, followed by laboratory verification to demonstrate proficiency.

Validation Protocol (ISO 16140-2): The validation of an alternative method is a structured, two-phase process conducted by the method developer or an independent body (e.g., AFNOR Certification) [12] [1].

- Method Comparison Study: A single laboratory performs a comparative study of the alternative method against a reference method. For qualitative methods, this involves testing a panel of contaminated and uncontaminated samples to determine relative accuracy, inclusivity, and exclusivity. For quantitative methods, the comparison focuses on parameters like linearity and precision.

- Interlaboratory Study: A ring trial is conducted with a minimum of 10 laboratories, each testing the same set of samples with both the alternative and reference methods. Whereas the method comparison study covers multiple food categories, the interlaboratory study typically uses a single food item at different contamination levels. The data generated are statistically analyzed to determine the alternative method's performance characteristics, such as relative accuracy, repeatability, and reproducibility [1].
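The arithmetic behind a qualitative method comparison can be sketched as follows. The sketch assumes a paired design: each sample is tested by both methods, and results are tallied as positive agreement (PA), negative agreement (NA), positive deviation (PD), and negative deviation (ND), with positive deviations treated as confirmed true positives. These are the usual paired-study categories of ISO 16140-2 as we understand them. The exact formulas, the handling of unconfirmed results, and the acceptability limits should be taken from the standard itself. The class, its method names, and the tallies are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class PairedComparison:
    """Tallies from a paired qualitative comparison (hypothetical helper)."""
    pa: int  # positive agreement: both methods positive
    na: int  # negative agreement: both methods negative
    pd: int  # positive deviation: alternative +, reference - (assumed confirmed positive)
    nd: int  # negative deviation: alternative -, reference +

    @property
    def n_total(self) -> int:
        return self.pa + self.na + self.pd + self.nd

    @property
    def n_positive(self) -> int:
        # Samples found positive by at least one method
        return self.pa + self.pd + self.nd

    def relative_accuracy(self) -> float:
        """Share of samples on which both methods agree, in %."""
        return 100.0 * (self.pa + self.na) / self.n_total

    def sensitivity_alternative(self) -> float:
        return 100.0 * (self.pa + self.pd) / self.n_positive

    def sensitivity_reference(self) -> float:
        return 100.0 * (self.pa + self.nd) / self.n_positive

# Hypothetical tallies for one food category
study = PairedComparison(pa=50, na=40, pd=3, nd=2)
print(f"Relative accuracy: {study.relative_accuracy():.1f} %")
print(f"Sensitivity, alternative: {study.sensitivity_alternative():.1f} %")
print(f"Sensitivity, reference: {study.sensitivity_reference():.1f} %")
```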

Verification Protocol (ISO 16140-3): Once a method is validated, an end-user laboratory must verify its ability to perform the method correctly. This is also a two-stage process [1].

- Implementation Verification: The laboratory demonstrates its technical competence by testing one of the exact food items used in the original validation study. The goal is to achieve results that align with the performance criteria established during validation.

- Food Item Verification: The laboratory then tests a selection of challenging food items that are specific to its own scope of testing but fall within the validated categories. This confirms that the method performs reliably for the laboratory's specific application needs [1].

The Scientist's Toolkit: Key Research Reagent Solutions

The successful implementation of the validation protocols described above relies on a suite of essential reagents and materials. The following table details key components of a researcher's toolkit for method validation and verification.

Table 2: Essential Research Reagents and Materials for Method Validation

| Item | Function / Purpose | Example in Context |
|---|---|---|
| Certified Reference Materials (CRMs) | To establish accuracy and calibrate equipment by providing a substance with one or more property values that are certified as traceable and defined [16]. | A certified analyte standard of known purity and concentration for a chemical hazard (e.g., lead) or a certified microbial strain for a pathogen (e.g., Listeria monocytogenes). |
| Matrix-Matched Calibrators | To account for matrix effects and ensure accurate quantification by preparing standards in a material that mimics the sample's composition. | Calibrators prepared in a blank food homogenate (e.g., ground meat for veterinary drug analysis or infant formula for nutrient testing). |
| Selective Culture Media & Agar | For the cultivation, isolation, and confirmation of specific microorganisms in microbiological methods, as defined in validation scopes [1]. | Chromogenic agars for E. coli (e.g., ChromID Coli [12]) or selective agars for Salmonella used in confirmation procedures per ISO 16140-6. |
| Proprietary Test Kits & Reagents | Validated alternative (proprietary) methods that offer faster, more specific, or automated detection of analytes [12] [1]. | Commercial kits like TEMPO (bioMérieux) for microbial enumeration or Thermo Scientific SureTect PCR assays for pathogen detection [12]. |
| Quality Control (QC) Samples | To monitor the ongoing performance and precision of a method during routine use and for intermediate precision studies. | Stable, homogeneous samples with known concentrations of the analyte, run with each batch of test samples to ensure the method remains in control. |

The harmonization of food and pharmaceutical method validation protocols is a dynamic and multi-faceted endeavor, driven by the collaborative efforts of ICH, IMDRF, WHO, and regional bodies. As of 2025, the trend is decisively shifting from a prescriptive, "check-the-box" approach to a more scientific, risk-based, and lifecycle-oriented model, as exemplified by the modernized ICH Q2(R2) and Q14 guidelines and the comprehensive ISO 16140 series [16] [1]. For researchers and scientists, success in this environment requires a dual focus: a deep understanding of the specific experimental protocols mandated by these organizations and a strategic awareness of how these frameworks interact and evolve. Emerging priorities, such as the application of artificial intelligence in regulatory science and the validation of methods for innovative therapies and digital health tools, will continue to test and shape this harmonized landscape [19] [17] [18]. By engaging with these international standards and actively participating in the scientific discourse, the global research community can help ensure that harmonization efforts continue to protect public health while fostering innovation and efficiency.

In the globalized food industry, the reliability of analytical methods is paramount for ensuring food safety, facilitating international trade, and protecting public health. Method validation provides the foundational evidence that an analytical method is fit for its intended purpose, ensuring data reliability, accuracy, and regulatory compliance [8]. However, the existence of multiple validation standards from different agencies and regions can create significant barriers. Harmonization of these protocols aims to establish a common framework, reducing redundant testing, simplifying regulatory submissions, and fostering mutual recognition of data across international borders. This guide compares key global validation standards, providing researchers and scientists with a clear understanding of their core principles, terminology, and experimental requirements to support the broader objective of international harmonization.

Core Principles and Terminology

At its core, method validation is a structured process for confirming that an analytical procedure performs as intended for a specific application. Adherence to recognized guidelines is not merely a regulatory formality; it is essential for producing trustworthy data that supports product safety and efficacy. The core objectives shared by all validation guidelines are to ensure method reliability, protect patient and public safety, and support regulatory approval [8].

A harmonized understanding of key validation parameters is the first step toward a common language. The following table defines the essential characteristics assessed during method validation.

Table 1: Core Terminology in Method Validation

| Term | Definition | Role in Establishing Quality |
|---|---|---|
| Accuracy | The closeness of agreement between a measured value and a true reference value. | Ensures that results are correct and unbiased, foundational for decision-making. |
| Precision | The closeness of agreement between a series of measurements obtained from multiple samplings of the same homogeneous sample. | Quantifies random error and ensures consistency and repeatability of results. |
| Specificity | The ability to assess the analyte unequivocally in the presence of other components. | Demonstrates that the method measures only the intended analyte, ensuring relevance. |
| Linearity | The ability of the method, within a given range, to obtain results directly proportional to analyte concentration. | Defines the quantitative range of the method and confirms its suitability for the scope. |
| Range | The interval between the upper and lower levels of analyte for which suitable precision, accuracy, and linearity have been demonstrated. | Establishes the boundaries within which the method is proven to be reliable. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters. | Indicates the reliability of a method during routine use in different environments. |

Comparison of International Validation Guidelines

Different regulatory bodies publish method validation guidelines tailored to their jurisdictional and industry needs. Selecting the appropriate guideline is critical, as a mismatch can lead to costly revalidation, regulatory rejections, or delayed product launches [8]. The following section compares the focus and testing requirements of major international bodies.

Table 2: Comparison of Key International Validation Guidelines

| Guideline / Agency | Regional/Global Focus | Key Characteristics & Emphasis | Ideal Application Context |
|---|---|---|---|
| ICH Q2(R2) | Global (Pharmaceutical) | Scientific rigor in analytical performance; lifecycle approach. | Global drug development and registration. |
| FDA | United States | Risk-based, lifecycle approach to validation; data integrity expectations (ALCOA+ principles). | Pharmaceutical and food products for the US market. |
| EMA | European Union | Adherence to ICH principles with specific EU adaptations. | Products marketed within the European Union. |
| ISO 16140 | Global (Food Microbiology) | Validation of alternative microbiological methods against a reference method. | Food safety testing for pathogens and hygiene indicators [12]. |
| EFSA EU Menu | European Union (Food Consumption) | Harmonized food consumption data collection for dietary exposure assessments. | Pan-European dietary surveys and exposure assessments [20]. |

The technical requirements for validation parameters can also differ between guidelines. For instance, the number of replicates required or the specific way a parameter like precision is defined and tested may vary. The FDA may emphasize a risk-management approach throughout the method's lifecycle, while ICH provides detailed specifications for analytical performance characteristics [8]. Understanding these nuances is essential for designing a validation study that will be accepted by the target regulatory authority.

Experimental Protocols and Data Presentation

A harmonized validation protocol follows a systematic sequence of activities, from planning to execution. The workflow below illustrates the typical stages of a method validation study, from initial planning to final reporting.

Detailed Experimental Methodology

The execution of validation experiments requires meticulous planning. The following provides a generalized protocol for key experiments, which must be adapted to the specific requirements of the chosen guideline (e.g., ICH, FDA, ISO).

Accuracy Assessment:

- Objective: To determine the closeness of test results to the true value.

- Protocol: Analyze a minimum of 9 determinations across a minimum of 3 concentration levels (e.g., 80%, 100%, 120% of the target concentration). The sample matrix should be spiked with a known quantity of the analyte. Accuracy is calculated as the percentage recovery of the known amount of analyte or as the difference between the mean and the accepted true value (bias).

- Data Presentation: Results are typically summarized in a table showing the mean recovery (%) and relative standard deviation (%RSD) at each concentration level.
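The recommended summary (mean recovery and %RSD at each concentration level) can be produced directly from the raw spiking data. A minimal sketch, assuming results arrive as (level, spiked amount, measured amount) tuples; the function name and the 3 x 3 data set are hypothetical.

```python
from collections import defaultdict
from statistics import mean, stdev

def recovery_by_level(results):
    """Summarise spiked-recovery data as {level: (mean % recovery, %RSD of recoveries)}.

    results: iterable of (level_label, spiked_amount, measured_amount) tuples.
    """
    grouped = defaultdict(list)
    for level, spiked, measured in results:
        grouped[level].append(100.0 * measured / spiked)
    return {
        level: (mean(recs), 100.0 * stdev(recs) / mean(recs))
        for level, recs in grouped.items()
    }

# Hypothetical 3 x 3 design: 80 %, 100 % and 120 % of a 50 mg/kg target
data = [
    ("80%", 40.0, 39.6), ("80%", 40.0, 40.2), ("80%", 40.0, 39.8),
    ("100%", 50.0, 49.7), ("100%", 50.0, 50.4), ("100%", 50.0, 50.1),
    ("120%", 60.0, 59.5), ("120%", 60.0, 60.3), ("120%", 60.0, 60.0),
]
for level, (rec, rsd) in recovery_by_level(data).items():
    print(f"{level}: mean recovery {rec:.1f} %, %RSD {rsd:.2f}")
```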

Precision Evaluation:

- Objective: To measure the degree of scatter between a series of measurements.

- Protocol: Precision is evaluated at multiple levels:

  - Repeatability: Requires a minimum of 6 determinations at 100% of the test concentration. This assesses precision under the same operating conditions over a short interval of time.

  - Intermediate Precision: Assesses the impact of random variations, such as different days, different analysts, or different equipment. The experimental design should include these variables, and the combined standard deviation is calculated.

- Data Presentation: Precision is expressed as the variance, standard deviation, or relative standard deviation (%RSD) of the data set.
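One common way to obtain the "combined standard deviation" for intermediate precision is a one-way random-effects ANOVA. In this approach, the within-group mean square estimates repeatability, and the between-group component (days, analysts, or instruments) is added to it. The sketch below assumes a balanced design with a single varied factor. It is an illustration of that ISO 5725-style decomposition, not the only acceptable model; nested or multi-factor designs need a different analysis. The function name and data are hypothetical.

```python
from math import sqrt
from statistics import mean

def precision_components(groups):
    """Repeatability SD (s_r) and intermediate-precision SD (s_ip) from a balanced design.

    groups: one list of replicate results per level of the varied factor
    (e.g. per day, analyst or instrument); all lists must have the same length.
    One-way random-effects ANOVA: s_ip**2 = s_r**2 + s_between**2.
    """
    if len(groups) < 2 or len(groups[0]) < 2 or any(len(g) != len(groups[0]) for g in groups):
        raise ValueError("need at least two groups of equal size, each with at least two results")
    k, n = len(groups), len(groups[0])
    group_means = [mean(g) for g in groups]
    grand_mean = mean(group_means)  # equals the overall mean for a balanced design
    ms_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g) / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in group_means) / (k - 1)
    s_between_sq = max(0.0, (ms_between - ms_within) / n)  # negative estimates set to zero
    return sqrt(ms_within), sqrt(ms_within + s_between_sq)

# Hypothetical assay results (% of label claim): three days, three replicates per day
days = [[99.8, 100.3, 100.1], [100.9, 101.2, 100.6], [99.5, 99.9, 100.2]]
s_r, s_ip = precision_components(days)
overall = mean([x for day in days for x in day])
print(f"s_r = {s_r:.3f} (%RSD {100 * s_r / overall:.2f}); s_ip = {s_ip:.3f} (%RSD {100 * s_ip / overall:.2f})")
```

Truncating a negative between-group estimate at zero is a common convention; it simply means the varied factor added no detectable variability beyond repeatability.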

Specificity/Selectivity Demonstration:

- Objective: To prove that the method can accurately measure the analyte in the presence of potential interferents.

- Protocol: Analyze samples containing the analyte along with other components that are expected to be present (e.g., impurities, degradants, matrix components). For chromatographic methods, this often involves demonstrating that the analyte peak is pure and baseline-separated from all other peaks.

- Data Presentation: Chromatograms or spectra are presented to show resolution between peaks. A table summarizing the resolution factors between the analyte peak and the closest eluting potential interferent is commonly used.
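The resolution factor reported in such a table is commonly calculated from retention times and baseline (tangent) peak widths as Rs = 2(t2 - t1)/(w1 + w2). Some pharmacopoeias use an equivalent half-height form, so the formula should be checked against the chosen guideline. A minimal sketch with hypothetical peak data:

```python
def resolution(t1: float, t2: float, w1: float, w2: float) -> float:
    """Resolution between two peaks from retention times and baseline (tangent) widths.

    Rs = 2 * |t2 - t1| / (w1 + w2); all four values must share one time unit.
    """
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t2 - t1) / (w1 + w2)

# Hypothetical analyte at 6.30 min and closest potential interferent at 6.80 min
rs = resolution(6.30, 6.80, 0.24, 0.26)
print(f"Rs = {rs:.2f}; meets NLT 1.5: {rs >= 1.5}")
```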

The quantitative data generated from these experiments should be compiled into structured tables for clear comparison and review. The following table provides a template for summarizing validation results for a hypothetical analytical method (NMT = not more than; NLT = not less than).

Table 3: Example Summary of Method Validation Results for a Hypothetical Assay

| Validation Parameter | Acceptance Criteria | Results Obtained | Conclusion |
|---|---|---|---|
| Accuracy (Mean % Recovery) | 98.0% - 102.0% | 99.5% | Pass |
| Repeatability (%RSD, n=6) | NMT 2.0% | 0.8% | Pass |
| Intermediate Precision (%RSD) | NMT 3.0% | 1.5% | Pass |
| Specificity (Resolution) | NLT 1.5 from closest peak | 2.1 | Pass |
| Linearity (R²) | NLT 0.998 | 0.9995 | Pass |
| Range | 50% - 150% of target | Confirmed | Pass |
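The Conclusion column follows mechanically from comparing each result with its acceptance criterion. As a sketch, that check can be encoded as below using the hypothetical values from the table. The qualitative Range row ("Confirmed") is omitted because it has no numeric result. The helper names are illustrative.

```python
import operator

def within(value, limits):
    """True if value lies in the closed interval limits = (low, high)."""
    low, high = limits
    return low <= value <= high

# (parameter, comparison, acceptance limit, result), mirroring the hypothetical table
TABLE_3 = [
    ("Accuracy (mean % recovery)", within, (98.0, 102.0), 99.5),
    ("Repeatability (%RSD, n=6)", operator.le, 2.0, 0.8),      # NMT -> result <= limit
    ("Intermediate precision (%RSD)", operator.le, 3.0, 1.5),
    ("Specificity (resolution)", operator.ge, 1.5, 2.1),       # NLT -> result >= limit
    ("Linearity (R²)", operator.ge, 0.998, 0.9995),
]

def evaluate(rows):
    """Map each parameter to 'Pass' or 'Fail' by comparing its result with its limit."""
    return {name: ("Pass" if compare(result, limit) else "Fail")
            for name, compare, limit, result in rows}

for parameter, verdict in evaluate(TABLE_3).items():
    print(f"{parameter}: {verdict}")
```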

The Scientist's Toolkit: Research Reagent Solutions

Successful method validation relies on a suite of essential materials and reagents. The following table details key items and their functions in the context of a validation study.

Table 4: Essential Research Reagents and Materials for Method Validation

| Item / Solution | Function in Validation | Example & Key Consideration |
|---|---|---|
| Certified Reference Materials (CRMs) | Serves as the primary standard for establishing accuracy and calibrating instruments. Provides a traceable value. | Purity must be well-characterized and certified by a recognized body. Stored under appropriate conditions to maintain stability. |
| High-Purity Solvents & Reagents | Used for preparation of mobile phases, buffers, and sample solutions. Critical for achieving baseline stability and specificity. | Must be of suitable grade (e.g., HPLC-grade) to minimize interference and background noise. |
| Spiked Placebo/Matrix Samples | Used to assess accuracy, precision, and specificity by simulating the real sample with a known amount of analyte added. | The placebo or blank matrix must be representative of the actual test samples to properly evaluate matrix effects. |
| Stability Samples | Used to demonstrate the stability of the analyte in solution and in the matrix under specific storage conditions. | Stored at various conditions (e.g., ambient, refrigerated) and analyzed over time against a fresh reference standard. |
| System Suitability Test Solutions | A reference preparation used to verify that the chromatographic or instrumental system is performing adequately at the time of analysis. | Typically a mixture of the analyte and key potential interferents to confirm resolution, efficiency, and repeatability. |

The path toward full international harmonization of food method validation protocols is complex but critically important. By understanding the core principles, comparing the nuances between different guidelines, and implementing rigorous experimental protocols, researchers and scientists can contribute to building a more unified and efficient global framework. This common language of reliability, relevance, and quality not only streamlines regulatory processes but also strengthens the scientific foundation upon which public health and food safety are built. Continuous training, staying updated with evolving guidelines, and meticulous documentation are the best practices that will enable professionals to navigate this landscape successfully and foster a culture of compliance and excellence [8].

The harmonization of food method validation protocols represents a critical frontier in global food safety and public health research. In an era of increasingly interconnected food supply chains, the absence of universally accepted validation standards creates significant challenges for regulatory bodies, food manufacturers, and researchers attempting to compare data across international boundaries. This analysis examines the current progress toward international alignment of these protocols, identifies persistent gaps in harmonization efforts, and evaluates emerging frameworks designed to bridge these divides. The imperative for harmonization extends beyond mere administrative convenience—it directly impacts the reliability of food safety assessments, the efficiency of trade, and the robustness of scientific research comparing food products and methodologies across different regions and laboratories. Through a systematic review of existing validation frameworks, interlaboratory studies, and regulatory initiatives, this article provides researchers and drug development professionals with a comprehensive understanding of the contemporary landscape of food method validation harmonization.

Major International Validation Frameworks

International efforts to standardize food method validation have primarily coalesced around several prominent frameworks, each with distinct applications, strengths, and limitations. Understanding these frameworks is essential for researchers selecting appropriate validation protocols for specific applications and for contextualizing experimental data within the broader regulatory environment.

The ISO 16140 series has emerged as a cornerstone for microbiological method validation, providing structured protocols for both validation and verification [1]. This framework establishes a critical distinction between method validation (proving a method is fit-for-purpose) and method verification (demonstrating that a laboratory can properly perform a validated method) [1]. The series comprises multiple parts addressing specific scenarios. Part 2 covers validation of alternative methods against a reference method, and Part 3 sets out the protocol for verification in a single laboratory. Parts 4 and 5 address validation in a single laboratory and factorial interlaboratory validation of non-proprietary methods, respectively [1]. A key innovation within ISO 16140 is the concept of "food categories," which allows methods validated against a representative subset of foods (typically 5 out of 15 defined categories) to be considered validated for a "broad range of foods" [1]. This pragmatic approach facilitates broader applicability while maintaining scientific rigor.
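The scope logic of the food-category concept can be illustrated in a few lines. This is a deliberately simplified sketch: it assumes the only criterion is the number of distinct categories tested (at least 5 of the 15 defined). The standard may attach further conditions, so it should be consulted for the actual rules. The function name and category labels are hypothetical.

```python
from typing import Iterable

# Threshold taken from the text above: at least 5 of the 15 defined food categories
BROAD_RANGE_MIN_CATEGORIES = 5

def claimed_scope(categories_tested: Iterable[str]) -> str:
    """Return the scope a validation could claim, given the food categories it covered."""
    categories = sorted(set(categories_tested))  # duplicates count once
    if len(categories) >= BROAD_RANGE_MIN_CATEGORIES:
        return "broad range of foods"
    return "limited to: " + ", ".join(categories)

# Hypothetical category labels, for illustration only
print(claimed_scope(["dairy", "meat", "vegetables"]))
```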

ICH Guidelines, particularly Q2(R2) and Q14, provide a complementary framework primarily focused on analytical procedures in pharmaceutical and chemical safety contexts [16]. These guidelines emphasize a lifecycle approach to method validation, moving from a "check-the-box" mentality to a more scientific, risk-based model [16]. The introduction of the Analytical Target Profile (ATP) represents a significant advancement, requiring researchers to prospectively define a method's intended purpose and performance criteria before development begins [16]. The ICH framework outlines core validation parameters including accuracy, precision, specificity, linearity, range, limit of detection (LOD), limit of quantitation (LOQ), and robustness [16]. The FDA's adoption of these ICH guidelines creates regulatory alignment across major markets, though applicability to food methods varies depending on the analyte and matrix [16].

Table 1: Comparison of Major International Validation Frameworks

| Framework | Primary Scope | Core Validation Parameters | Strengths | Limitations |
|---|---|---|---|---|
| ISO 16140 Series | Microbiological methods for food and feed | Relative accuracy, specificity, LOD, LOQ, reproducibility | Detailed protocols for specific scenarios; Food category concept for broad applicability | Complexity of multi-part standards; Primary focus on microbiology |
| ICH Q2(R2)/Q14 | Analytical procedures for pharmaceuticals and chemicals | Accuracy, precision, specificity, linearity, range, LOD, LOQ, robustness | Science- and risk-based approach; Lifecycle management; Regulatory harmonization | Less specific to food matrices; Limited guidance on cultural adaptation |
| Codex Alimentarius | General food safety principles and guidelines | Varies by specific standard or guideline | International recognition; Benchmark standards under the WTO SPS Agreement | Often high-level without detailed technical protocols |

The Codex Alimentarius, jointly administered by the FAO and WHO, provides overarching principles and guidelines that inform national food safety systems and international trade [21]. While Codex standards typically operate at a higher level than technical validation protocols, they establish essential reference points for method performance criteria and facilitate mutual recognition between trading partners. Recent Codex updates have strengthened requirements for traceability, allergen management, and food safety culture, indirectly influencing validation requirements [21].

Experimental Protocols for Validation Studies

Protocol Adaptation and Cultural Validation

The translation and cultural adaptation of internationally developed protocols for local contexts represents a critical step in the harmonization process. A recent study from Shiraz, Iran demonstrates a systematic approach to this challenge, adapting and validating a food retail environment assessment protocol through a rigorous multi-stage process [22]. The researchers integrated two internationally recognized tools—the Nutrition Environment Measurement Survey for Stores (NEMS-S) and the food retail module from the International Network for Food and Obesity/Non-communicable Diseases Research, Monitoring, and Action Support (INFORMAS) [22].

The methodological sequence followed Beaton et al.'s framework for translating and culturally adapting self-report measures, comprising: (1) translation from English to Farsi by two independent translators; (2) synthesis of translations with discrepancy resolution; (3) back-translation by native English speakers; and (4) expert committee review for cultural and contextual appropriateness [22]. The expert panel, consisting of seven nutrition specialists, two school health experts, and two environmental health specialists, evaluated the protocol using the Lawshe scale, calculating content validity ratio (CVR: 0.64-1), content validity index (CVI: 0.78-1), and item impact score (2.84-4.83) to quantify validity [22]. Field testing across nine retail establishments in different socio-economic areas demonstrated high inter-rater reliability (93.77% agreement) and strong intraclass correlation coefficients (0.89-1) [22]. This systematic approach to cultural adaptation provides a template for implementing international protocols in diverse regional contexts while maintaining methodological integrity.
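The content-validity indices cited above follow simple formulas. Lawshe's CVR is (n_e - N/2)/(N/2), where n_e is the number of panelists rating an item "essential" and N is the panel size. The item-level CVI is the share of experts rating an item relevant. The sketch below assumes a 4-point relevance scale on which ratings of 3 or 4 count as relevant; the study's exact rating scheme is not given here. The function names are hypothetical.

```python
def content_validity_ratio(n_essential: int, n_panel: int) -> float:
    """Lawshe's content validity ratio: CVR = (n_e - N/2) / (N/2), between -1 and +1."""
    if n_panel <= 0 or not 0 <= n_essential <= n_panel:
        raise ValueError("need 0 <= n_essential <= n_panel and n_panel > 0")
    half = n_panel / 2
    return (n_essential - half) / half

def item_cvi(ratings, relevant_min=3):
    """Item-level content validity index: share of experts whose rating is >= relevant_min.

    Assumes a 4-point relevance scale on which 3 and 4 count as 'relevant'.
    """
    if not ratings:
        raise ValueError("ratings must not be empty")
    return sum(r >= relevant_min for r in ratings) / len(ratings)

panel_size = 7 + 2 + 2  # nutrition, school health and environmental health experts
for n_essential in (9, 10, 11):
    cvr = content_validity_ratio(n_essential, panel_size)
    print(f"{n_essential}/{panel_size} rated essential -> CVR = {cvr:.2f}")
```

With an 11-member panel, nine "essential" ratings give a CVR of about 0.64, which coincides with the lower bound of the range reported above.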

Interlaboratory Validation Studies

Interlaboratory validation represents the gold standard for establishing method reproducibility across different settings and operational conditions. A recent international ring trial conducted by the INFOGEST research network exemplifies best practices in this domain, evaluating an optimized protocol for measuring α-amylase activity [23]. The study involved 13 laboratories across 12 countries and 3 continents, all analyzing identical samples of human saliva and three porcine enzyme preparations using a standardized protocol but their own local equipment [23].

The optimized protocol modified the classic Bernfeld assay by taking measurements at four time points at a physiologically relevant temperature (37°C) instead of a single time point at 20°C [23]. Each laboratory tested all products at three different concentrations and established calibration curves using a provided maltose standard (2% w/v) across a concentration range of 0-3 mg/mL [23]. The statistical analysis expressed both repeatability (intralaboratory precision) and reproducibility (interlaboratory precision) as coefficients of variation (CVs). The revised protocol showed substantially improved reproducibility (CVs 16-21%) compared with the original method (CVs up to 87%) [23]. This study highlights how relatively simple modifications to established protocols, such as changing incubation conditions and increasing the number of sampling points, can dramatically improve interlaboratory consistency while maintaining physiological relevance.
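Reading several time points lets activity be taken from the slope of maltose release rather than from a single end-point reading. A sketch of that calculation follows. It assumes absorbance readings and a linear maltose calibration over the 0-3 mg/mL range described above. The time points, absorbance values, and function names are hypothetical. Converting the rate into enzyme activity units depends on the unit definition and dilution scheme of the INFOGEST protocol, which are not reproduced here.

```python
from statistics import mean

def ols(x, y):
    """Least-squares slope and intercept for y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return slope, my - slope * mx

def maltose_release_rate(cal_conc, cal_abs, times_min, sample_abs):
    """Maltose release rate (mg/mL per min) from a multi-time-point assay (hypothetical helper).

    1. Fit the maltose calibration curve (absorbance vs concentration in mg/mL).
    2. Convert each time-point absorbance to a maltose concentration.
    3. Regress maltose concentration on incubation time; the slope is the release rate.
    """
    cal_slope, cal_intercept = ols(cal_conc, cal_abs)
    maltose = [(a - cal_intercept) / cal_slope for a in sample_abs]
    rate, _ = ols(times_min, maltose)
    return rate

# Hypothetical data: calibration over 0-3 mg/mL maltose, sample read at four incubation times
cal_conc = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
cal_abs = [0.02 + 0.30 * c for c in cal_conc]  # idealised linear detector response
times_min = [3, 6, 9, 12]
sample_abs = [0.02 + 0.30 * m for m in (0.3, 0.6, 0.9, 1.2)]
rate = maltose_release_rate(cal_conc, cal_abs, times_min, sample_abs)
print(f"Maltose release rate: {rate:.3f} mg/mL per min")
```

Fitting a slope across four points also makes non-linearity visible (for example, substrate depletion at late time points), which a single end-point reading would hide.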

Protocol Optimization and False-Positive Reduction

Even validated methods may require ongoing optimization to address practical implementation challenges. Research on Listeria monocytogenes detection demonstrates this iterative improvement process, where a validated labor-efficient culture-based (LECB) protocol derived from ISO 11290:2017 was further optimized to reduce false positives without increasing labor requirements [24]. The original LECB protocol consisted of three steps: initial detection on a selective medium, purification of the isolate, and confirmation [24]. Researchers screened eight false-positive and 13 true-positive L. monocytogenes strains using five selective media and four confirmation tests to identify the most reliable combination [24].

The optimized protocol incorporated RAPID' L. mono medium, which successfully distinguished false from true positives, along with a hemolysis test [24]. When tested in parallel with the original LECB protocol using 66 fresh presumptive isolates, the optimized version demonstrated significantly improved specificity while maintaining detection sensitivity [24]. Genomic analysis revealed that false-positive strains lacked genes linked to the selectivity of chromogenic media, providing a mechanistic explanation for the improved performance [24]. This case study illustrates that method validation is not necessarily a terminal endpoint but rather part of an ongoing optimization cycle, particularly for methods addressing evolving food safety challenges.

Progress in International Alignment

Substantial progress has been achieved in harmonizing food method validation protocols across several domains, though implementation remains uneven across regions and methodological applications. The globalization of food standards has created powerful drivers for alignment, with several notable success stories emerging from recent initiatives.

The ISO 16140 series has established a comprehensive infrastructure for microbiological method validation that is gaining increasing international recognition [1]. Its structured approach to alternative method validation gives technology developers a clear pathway for demonstrating equivalence to reference methods, while the food category concept allows validation data to be extrapolated efficiently across product types [1]. The 2024-2025 amendments to several parts of ISO 16140, addressing identification methods and larger test portion sizes, show that the framework continues to evolve to meet emerging needs [1]. The European Union's incorporation of ISO 16140 validation requirements into Regulation (EC) No 2073/2005 represents a significant regulatory adoption of these international standards [1].
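For qualitative methods, the comparison of an alternative method with the reference method under ISO 16140-2 is typically summarized from paired results as positive agreement (PA), negative agreement (NA), positive deviation (PD: alternative positive, reference negative) and negative deviation (ND: alternative negative, reference positive). The sketch below computes the commonly cited sensitivity and relative-trueness figures from those counts. It assumes deviations have already been resolved by confirmation testing and does not reproduce the standard's acceptability limits, so the standard itself remains the binding source for definitions. The function name and counts are hypothetical.

```python
def method_comparison(pa: int, na: int, pd: int, nd: int) -> dict[str, float]:
    """Summary statistics for a paired qualitative method comparison.

    pa: both methods positive          na: both methods negative
    pd: alternative +, reference -     nd: alternative -, reference +
    """
    n_positive = pa + nd + pd  # samples found positive by at least one method
    n_total = pa + na + pd + nd
    return {
        "sensitivity_alternative_pct": 100 * (pa + pd) / n_positive,
        "sensitivity_reference_pct": 100 * (pa + nd) / n_positive,
        "relative_trueness_pct": 100 * (pa + na) / n_total,
        "nd_minus_pd": float(nd - pd),  # compared with an acceptability limit in the standard
    }


# Hypothetical study: 95 samples from one food category
summary = method_comparison(pa=50, na=40, pd=3, nd=2)
for key, value in summary.items():
    print(f"{key}: {value:.1f}")
```

Running the same calculation per food category is what lets validation data be organized by category and, where the standard allows, extended across product types.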

The ICH guidelines have achieved remarkable harmonization in analytical method validation for pharmaceutical applications, with the FDA formally adopting these internationally developed standards [16]. The coordinated release of ICH Q2(R2) and Q14 in 2023-2024 represents a fundamental modernization of validation philosophy, shifting from prescriptive checklists to a science-based lifecycle approach [16]. Although primarily focused on pharmaceuticals, these principles increasingly influence chemical safety assessment in foods, particularly in the FDA's pre-market review programs for food additives, color additives, and Generally Recognized as Safe (GRAS) substances [19].

Table 2: Quantitative Performance Metrics from Validation Studies

| Study Description | Sample Matrix | Performance Metric | Original Method Performance | Optimized/Harmonized Method Performance |
|---|---|---|---|---|
| Interlaboratory α-amylase assay [23] | Human saliva, porcine enzyme preparations | Interlaboratory reproducibility (CVR, coefficient of variation) | CVR up to 87% (single-point, 20°C) | CVR 16-21% (multi-point, 37°C) |
| Interlaboratory α-amylase assay [23] | Human saliva, porcine enzyme preparations | Intralaboratory repeatability (CVr, coefficient of variation) | Not reported | CVr below 15% (range 8-13%) |
| Cultural adaptation of food retail assessment [22] | Iranian food retail environments | Inter-rater reliability | Not applicable (new adaptation) | 93.77% agreement; ICC 0.89-1.00 |
| Cultural adaptation of food retail assessment [22] | Iranian food retail environments | Content validity (content validity ratio, CVR; content validity index, CVI) | Not applicable (new adaptation) | CVR 0.64-1.00; CVI 0.78-1.00 |
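Note that "CVR" means two different things in Table 2: a reproducibility coefficient of variation in the α-amylase rows and a content validity ratio in the last row. The content-validity indices and the agreement figure can be computed in a few lines. The sketch below uses the commonly applied formulas: Lawshe's content validity ratio, CVR = (n_e - N/2)/(N/2), where n_e of N experts rate an item essential, and the item-level content validity index on a 4-point relevance scale. Whether [22] used exactly these variants is an assumption, the ratings are hypothetical, and the intraclass correlation (ICC) is not reproduced.

```python
def lawshe_cvr(n_essential: int, n_experts: int) -> float:
    """Lawshe's content validity ratio for one item; ranges from -1 to +1."""
    half = n_experts / 2
    return (n_essential - half) / half


def item_cvi(relevance_ratings: list[int]) -> float:
    """Item-level CVI: share of experts rating the item 3 or 4 on a 4-point relevance scale."""
    return sum(1 for r in relevance_ratings if r >= 3) / len(relevance_ratings)


def percent_agreement(rater_a: list, rater_b: list) -> float:
    """Share of observations (in %) on which two raters recorded the same value."""
    if len(rater_a) != len(rater_b):
        raise ValueError("raters must score the same observations")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100 * matches / len(rater_a)


# Hypothetical panel: 9 of 10 experts rate an item "essential"
print(lawshe_cvr(9, 10))                             # 0.8
print(item_cvi([4, 3, 4, 2, 4]))                     # 0.8
print(percent_agreement([1, 0, 1, 1], [1, 0, 0, 1]))  # 75.0
```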

Global surveillance networks like GenomeTrakr demonstrate practical operational alignment, with the FDA actively onboarding laboratories internationally and creating public training resources to standardize genomic surveillance workflows [19]. This distributed network model, which integrates data from U.S. and international laboratories into unified platforms like the CDC's PulseNet 2.0, represents a functional harmonization that transcends geographical and jurisdictional boundaries [19]. Similarly, the INFOGEST network's success in standardizing digestion protocols across 52 countries illustrates how researcher-led initiatives can drive practical harmonization through consensus-building and interlaboratory validation [23].

The Codex Alimentarius continues to make incremental progress in aligning food safety standards, with recent updates strengthening requirements for traceability, allergen management, and food safety culture [21]. While implementation varies across member nations, these standards provide an important reference point for international trade and domestic regulation. The 2023 HACCP revisions incorporating these elements into the main body of the standard (previously separated from good hygiene practices) represent meaningful progress toward more integrated food safety systems [21].

Persistent Gaps and Challenges

Despite measurable progress, significant gaps and challenges persist in the international alignment of food method validation protocols. These limitations represent both obstacles to current research and opportunities for future harmonization initiatives.

A fundamental challenge lies in the regional fragmentation of regulatory requirements. While frameworks like ISO 16140 provide technical protocols, their adoption into binding regulations remains uneven. The FDA's reorganization of its Human Foods Program in 2024 represents an opportunity for greater alignment, but also creates transitional uncertainty as new structures and priorities emerge [19]. Meanwhile, divergent state-level regulations, such as California's bans on Red Dye No. 3 and other substances, create sub-national fragmentation that complicates compliance and method validation [25]. This trend toward "food safety going local" potentially signals a broader fragmentation that could undermine international harmonization efforts [25].

The validation of methods for emerging hazards represents another significant gap. As new chemical and microbiological risks are identified, validation protocols struggle to keep pace. The FDA's ongoing work on exposure assessment for per- and polyfluoroalkyl substances (PFAS) highlights this challenge, as it requires new analytical methods to be developed and validated to understand occurrence and exposure [19]. Similarly, methods for detecting highly pathogenic avian influenza (HPAI) in dairy products are being developed through multi-agency collaboration, but validated, standardized protocols are not yet widely established [19].

Resource and implementation disparities between developed and developing regions create practical barriers to harmonization. The cultural adaptation study in Iran demonstrates that even well-validated international protocols require significant modification and re-validation for local contexts [22]. Similarly, the emphasis on sophisticated technologies like whole-genome sequencing, artificial intelligence, and blockchain for food safety monitoring [19] [21] may create de facto harmonization barriers for laboratories and regulatory agencies with limited technical infrastructure or funding.

The tension between innovation and standardization presents an ongoing challenge. Rapidly evolving technologies like AI and machine learning for hazard prediction [21], New Approach Methods (NAMs) for chemical safety assessment [19], and rapid detection methods often outpace the development of validation frameworks. The FDA's development of tools like the Warp Intelligent Learning Engine (WILEE) for signal detection illustrates this innovation, but standardized validation approaches for such systems remain underdeveloped [19]. Similarly, the optimization of validated Listeria detection protocols to reduce false positives demonstrates that even established methods may require refinement, creating a moving target for harmonization [24].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful participation in international validation studies requires access to standardized reagents and materials. Based on the protocols analyzed in this review, the following toolkit represents essential components for researchers conducting method validation studies:

Table 3: Essential Research Reagents and Materials for Validation Studies

| Item | Specification/Properties | Function in Validation | Example from Literature |
|---|---|---|---|
| Reference microbiological strains | Well-characterized true-positive and false-positive strains | Specificity testing; Controls | True-positive L. monocytogenes (n=13) and false-positive (n=8) strains [24] |
| Selective culture media | Formula-specific agars with defined selectivity | Differential growth; Specificity enhancement | RAPID' L. mono agar for Listeria differentiation [24] |
| Enzyme preparations | Standardized activity units; Defined origin | Interlaboratory comparison; Reference standards | Porcine pancreatic α-amylase preparations; Human saliva pool [23] |
| Chemical standards | High-purity analytes with documented stability | Calibration curves; Quantitative reference | Maltose standard solution (2% w/v) for α-amylase assay [23] |
| Reference materials | Certified matrix-matched materials with assigned values | Accuracy determination; Method comparison | Food category representatives for microbiological validation [1] |
| Substrate solutions | Defined composition; Standardized preparation | Reaction consistency; Reproducibility | Potato starch solution for α-amylase activity determination [23] |

The international alignment of food method validation protocols demonstrates measurable progress alongside persistent challenges. Established frameworks like the ISO 16140 series and ICH guidelines provide robust foundations for harmonization, while interlaboratory studies demonstrate that methodological refinements can dramatically improve reproducibility across diverse settings. Success stories in genomic surveillance and dietary assessment protocols illustrate the feasibility of practical alignment. However, regulatory fragmentation, resource disparities, and the rapid pace of technological innovation continue to challenge complete harmonization. The path forward requires balanced approaches that maintain scientific rigor while accommodating regional needs, embrace innovation while developing appropriate validation frameworks, and strengthen global cooperation networks to build capacity across economic divides. Researchers contributing to this field should prioritize studies that bridge existing gaps, particularly for emerging hazards, culturally adapted protocols, and validation frameworks for novel technologies. Through such targeted efforts, the scientific community can accelerate progress toward the ultimate goal: reliable, comparable food safety assessments that protect public health across global supply chains.

From Theory to Practice: Implementing Internationally Aligned Validation Protocols

In the globalized landscape of pharmaceutical and food safety, the harmonization of analytical method validation protocols is a critical scientific and regulatory endeavor. Validation provides documented evidence that an analytical procedure is fit for its intended purpose, ensuring the reliability of data supporting product quality, safety, and efficacy across international borders [26]. Organizations such as the International Council for Harmonisation (ICH) and AOAC International work to provide a harmonized framework, creating global gold standards that ensure a method validated in one region is recognized and trusted worldwide [2] [16]. This guide deconstructs the validation process into its key parameters, providing researchers and scientists with a detailed, step-by-step protocol aligned with international harmonization principles, as outlined in modern guidelines such as ICH Q2(R2) and ICH Q14 [16] [27].

The shift in modern regulatory science is from a prescriptive, "check-the-box" approach to a scientific, risk-based, and lifecycle-oriented model. This is underscored by the introduction of the Analytical Target Profile (ATP), a prospective summary of a method's intended purpose and desired performance characteristics, which ensures the method is designed to be fit-for-purpose from the very beginning [16]. This guide operates within this contemporary framework, providing the toolkit necessary for building robust, transferable, and harmonized analytical methods.
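To make the ATP idea concrete, the sketch below encodes an ATP as a small data structure of named acceptance criteria and checks a set of validation results against it. The structure, the criterion names and the numeric limits are hypothetical illustrations rather than a format defined by ICH Q14, and real limits depend on the method's intended purpose.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Criterion:
    """One performance characteristic with optional lower/upper acceptance limits."""
    name: str
    lower: Optional[float] = None
    upper: Optional[float] = None

    def met_by(self, value: float) -> bool:
        above = self.lower is None or value >= self.lower
        below = self.upper is None or value <= self.upper
        return above and below


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Prospective summary of what the method must achieve (hypothetical structure)."""
    analyte: str
    intended_purpose: str
    criteria: tuple[Criterion, ...]

    def evaluate(self, results: dict[str, float]) -> dict[str, bool]:
        # A criterion with no reported result is treated as not met.
        return {
            c.name: c.name in results and c.met_by(results[c.name])
            for c in self.criteria
        }


atp = AnalyticalTargetProfile(
    analyte="hypothetical food additive X",
    intended_purpose="quantify X in beverages for compliance testing",
    criteria=(
        Criterion("recovery_pct", lower=95.0, upper=105.0),
        Criterion("repeatability_rsd_pct", upper=5.0),
        Criterion("linearity_r2", lower=0.99),
    ),
)

report = atp.evaluate({"recovery_pct": 98.7, "repeatability_rsd_pct": 6.2})
print(report)
```

Fixing the criteria before any data are collected mirrors the ATP's role as a prospective reference: validation results are judged against it, rather than acceptance criteria being chosen after the fact.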

Core Principles: From Method Development to Validation

The journey to a validated method is a structured process, progressing through distinct stages: development, qualification, and validation [27]. Understanding this progression is key to efficient and compliant analytical practices.

Analytical Method Development: This initial phase focuses on creating a robust and reliable analytical method. It is an iterative process that involves defining objectives, conducting a literature review, planning the approach, and optimizing parameters like sample preparation and operating conditions [27]. The goal is to establish a scientifically sound procedure that can be consistently reproduced.

Analytical Method Qualification: This stage involves the initial evaluation and characterization of the method's performance as an analytical tool. It assesses key factors such as specificity, robustness, precision, and accuracy to ensure the method is capable of delivering reliable results in a development setting [27].

Analytical Method Validation: This is the formal, documented process of demonstrating that the method's performance meets all predefined acceptance criteria for its intended use, particularly for regulatory submissions [27]. It is a rigorous assessment that provides a high degree of assurance that the method will consistently yield reliable results during routine use [26]. The validation parameters, which form the core of this guide, are defined by international guidelines.

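Together with continued performance verification, these stages form a continuous lifecycle rather than a one-way sequence: development leads to qualification and then validation, the validated method enters routine use under ongoing performance verification, and observations from routine use can feed back into further method development.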

Deconstructing Key Validation Parameters: Experimental Protocols and Data Interpretation

This section provides a detailed, step-by-step guide for experimentally determining each key validation parameter. The protocols are designed to be universally applicable, supporting the harmonization of methods across different laboratories and regions.

Specificity