Developing Predictive Bioavailability Equations: A Framework Integrating Machine Learning and Clinical Translation

Abstract

This article provides a comprehensive overview of modern approaches for developing predictive equations to estimate nutrient and drug bioavailability. Aimed at researchers, scientists, and drug development professionals, it synthesizes current frameworks, machine learning methodologies, optimization techniques, and validation strategies. Covering foundational concepts to advanced applications, the content explores the transition from traditional regression to AI-driven models like LSTM networks and Gaussian Process Regression. It addresses critical challenges in model development, including data limitations and parameter optimization, while highlighting the significant potential of these predictive tools to enhance food formulation, drug design, nutritional recommendations, and personalized medicine.

Understanding Bioavailability Prediction: Core Concepts and Current Frameworks

Bioavailability is a critical pharmacokinetic parameter that measures the proportion of a substance that reaches systemic circulation in an active form to exert its biological effect. The definition varies slightly between fields but maintains the same fundamental principle.

In pharmacology, bioavailability is defined as the fraction of an administered drug that reaches the systemic circulation unaltered [1]. It is denoted by the letter F and expressed as a percentage, with intravenous administration providing 100% bioavailability by definition [2]. In nutritional science, bioavailability generally designates the quantity or fraction of an ingested nutrient that is absorbed and available for use or storage by the body [2]. This definition accounts for the additional complexity of nutritional status and physiological state on nutrient utilization.

A crucial distinction exists between bioavailability and absorption. Absorption refers specifically to the process of a substance moving from its site of administration into the bloodstream [3]. Bioavailability encompasses absorption but also includes subsequent processes that affect systemic availability, such as first-pass metabolism, binding to proteins, or excretion [3]. Therefore, while absorption is a prerequisite for bioavailability, it does not guarantee that the substance will reach systemic circulation in an active form.

Quantitative Foundations and Calculations

The gold standard for determining bioavailability involves calculating the Area Under the Curve (AUC) of drug concentration versus time, which represents total drug exposure in systemic circulation [1] [2].

Table 1: Key Bioavailability Equations and Calculations

| Bioavailability Type | Formula | Variables | Application |
|---|---|---|---|
| Absolute Bioavailability (Fabs) | F_abs = 100 × (AUC_po × D_iv) / (AUC_iv × D_po) | AUC_po = AUC after oral dose; D_iv = intravenous dose; AUC_iv = AUC after IV dose; D_po = oral dose | Compares systemic exposure from a non-IV route (e.g., oral) to IV administration [2]. |
| Relative Bioavailability (Frel) | F_rel = 100 × (AUC_A × D_B) / (AUC_B × D_A) | AUC_A = AUC for formulation A; D_B = dose for formulation B; AUC_B = AUC for formulation B; D_A = dose for formulation A | Compares bioavailability between two different formulations (e.g., generic vs. brand-name) [2]. |
| Bioavailable Fraction (basic definition) | F = Mass of drug delivered to plasma / Total mass of drug administered | F = bioavailability fraction | A fundamental definition of the proportion of the administered dose that reaches the systemic circulation [1]. |
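
The dose-normalized ratios in Table 1 can be sketched in a few lines of Python. This is a minimal illustration, not a validated pharmacokinetic tool; the function names and the numeric inputs are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def absolute_bioavailability(auc_po, dose_po, auc_iv, dose_iv):
    """Return F_abs in percent: 100 * (AUC_po * D_iv) / (AUC_iv * D_po)."""
    if min(auc_po, dose_po, auc_iv, dose_iv) <= 0:
        raise ValueError("AUC and dose values must be positive")
    return 100.0 * (auc_po * dose_iv) / (auc_iv * dose_po)


def relative_bioavailability(auc_a, dose_a, auc_b, dose_b):
    """Return F_rel in percent: 100 * (AUC_A * D_B) / (AUC_B * D_A)."""
    if min(auc_a, dose_a, auc_b, dose_b) <= 0:
        raise ValueError("AUC and dose values must be positive")
    return 100.0 * (auc_a * dose_b) / (auc_b * dose_a)


# Hypothetical inputs: AUC in mg*h/L, dose in mg.
f_abs = absolute_bioavailability(auc_po=40.0, dose_po=100.0, auc_iv=20.0, dose_iv=25.0)
f_rel = relative_bioavailability(auc_a=36.0, dose_a=100.0, auc_b=40.0, dose_b=100.0)
print(f_abs, f_rel)  # 50.0 90.0
```

Because both formulas normalize by dose, the oral and IV doses (or the two formulation doses) do not need to be equal.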

For regulatory approval of generic drugs, bioequivalence (BE) must be demonstrated by showing that the 90% confidence intervals for the test-to-reference ratios of geometric mean AUC and maximum concentration (Cmax) both fall within 80% to 125% [2].

Framework for Developing Predictive Bioavailability Equations

Weaver et al. (2025) propose a structured 4-step framework designed to guide researchers in developing predictive equations for nutrient absorption and bioavailability, which is also highly applicable to pharmaceutical research [4].

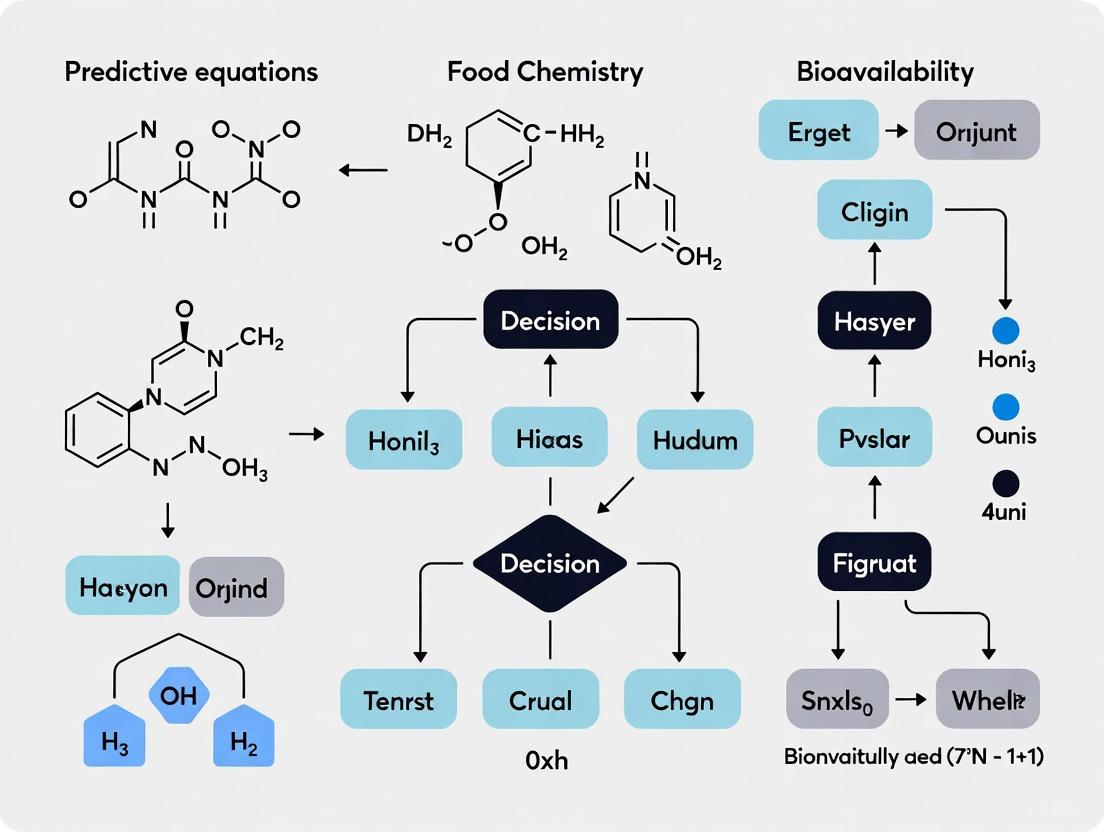

Diagram 1: Framework for Predictive Equation Development

This framework emphasizes a systematic approach to address data limitations, highlight evidence gaps, and enhance the accuracy of bioavailability estimates for both nutrients and drugs [4].

Key Factors Influencing Bioavailability

Multiple intrinsic and extrinsic factors significantly impact the rate and extent of bioavailability, which must be considered in predictive modeling and experimental design.

Table 2: Key Factors Influencing Bioavailability

| Category | Specific Factors | Impact on Bioavailability |

|---|---|---|

| Physiological Barriers | Intestinal epithelium absorption, First-pass metabolism, Gastric emptying rate, GI tract health | Reduces bioavailability for non-IV routes; subject to inter- and intra-individual variation [1] [2]. |

| Drug/Compound Properties | Hydrophobicity, pKa, solubility, particle size, chemical stability | Affects dissolution, permeability, and susceptibility to degradation [2]. |

| Formulation Factors | Dosage form (tablet, capsule, liquid), excipients, modified release (extended, delayed), manufacturing methods | Can enhance or hinder drug release and absorption; critical for generic vs. brand-name bioequivalence [5] [2]. |

| Metabolic Factors | Hepatic cytochrome P450 enzymes, enzyme induction/inhibition, genetic polymorphisms, transport proteins (e.g., P-glycoprotein) | Can inactivate a compound before it reaches systemic circulation; subject to drug-drug and drug-food interactions [1]. |

| Patient-Specific Factors | Age, gender, phenotypic differences, genetic makeup, disease states (hepatic/renal insufficiency), diet/fasting state | Causes significant inter-individual variability in drug response and bioavailability [1] [2]. |

| Concurrent Interactions | Food (e.g., grapefruit juice, high-fat meals), other drugs, herbal supplements (e.g., St. John's wort), alcohol, nicotine | Can inhibit or induce metabolic enzymes or transporters, altering bioavailability and risk of toxicity [1] [2]. |

Experimental Protocols for Assessing Bioavailability

In Vivo Pharmacokinetic Study Protocol

This protocol outlines the standard method for determining absolute bioavailability in humans or animal models.

- Objective: To determine the absolute bioavailability (Fabs) of a test formulation by comparing its systemic exposure to that of an intravenous reference.

- Materials:
  - Test formulation (e.g., oral tablet, capsule)
  - Reference intravenous (IV) formulation
  - Validated analytical method (e.g., LC-MS/MS)
  - Cannulation equipment for serial blood sampling
  - Centrifuge and freezer (-80°C) for plasma storage
- Procedure:
  - Study Design: A randomized, crossover study design is preferred, with a sufficient washout period between administrations.
  - Dosing: Administer the test and reference formulations to subjects at therapeutic doses. The IV dose may be lower but must be dose-normalized in the calculation.
  - Blood Sampling: Collect serial blood samples at pre-dose and at appropriate time points post-dose (e.g., 0.25, 0.5, 1, 2, 4, 8, 12, 24 hours) to fully characterize the concentration-time profile.
  - Sample Processing: Centrifuge blood samples to obtain plasma and store frozen until analysis.
  - Bioanalysis: Analyze plasma samples using a validated, specific, and sensitive analytical method to determine drug concentrations.
  - Data Analysis: Calculate AUC0-t and AUC0-∞ for both the test and reference formulations. Apply the absolute bioavailability formula (first row of Table 1) to calculate Fabs.
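
The data analysis step can be sketched as follows. This is a minimal example that assumes the linear trapezoidal rule for AUC0-t and a log-linear fit of the last three points for the terminal rate constant; other conventions (for example linear-up/log-down) are also common, and the protocol above does not specify one. The function names and the concentration profile are hypothetical.

```python
import math


def auc_trapezoid(times, concs):
    """Linear trapezoidal AUC from the first to the last sampling time."""
    if len(times) != len(concs) or len(times) < 2:
        raise ValueError("need at least two matched time/concentration points")
    return sum(
        (times[i + 1] - times[i]) * (concs[i] + concs[i + 1]) / 2.0
        for i in range(len(times) - 1)
    )


def terminal_rate_constant(times, concs, n_points=3):
    """Estimate lambda_z as the negative log-linear slope of the last n_points."""
    t = times[-n_points:]
    y = [math.log(c) for c in concs[-n_points:]]  # requires concentrations > 0
    t_mean = sum(t) / len(t)
    y_mean = sum(y) / len(y)
    slope = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y)) / sum(
        (ti - t_mean) ** 2 for ti in t
    )
    return -slope


def auc_zero_to_infinity(times, concs, n_points=3):
    """AUC(0-t) plus the extrapolated tail C_last / lambda_z."""
    lambda_z = terminal_rate_constant(times, concs, n_points)
    return auc_trapezoid(times, concs) + concs[-1] / lambda_z


# Hypothetical IV profile following C(t) = 10 * exp(-0.25 * t) (mg/L, hours).
times_h = [0.0, 0.5, 1.0, 2.0, 4.0, 8.0, 12.0, 24.0]
concs_mg_l = [10.0 * math.exp(-0.25 * t) for t in times_h]
lambda_z = terminal_rate_constant(times_h, concs_mg_l)
auc_t = auc_trapezoid(times_h, concs_mg_l)
auc_inf = auc_zero_to_infinity(times_h, concs_mg_l)
```

The resulting AUC0-∞ values for the oral and IV arms are the inputs to the absolute bioavailability formula.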

Combined In Vitro Dissolution/Permeability Protocol

This protocol, adapted from Sironi et al. (2021), combines two in vitro assays to predict in vivo performance and explain bioequivalence failures [5].

- Objective: To simultaneously evaluate the drug release and intestinal permeability of a formulation, providing insights into its absorption potential.

- Materials:
  - USP apparatus for dissolution testing (e.g., Paddle apparatus)
  - Parallel Artificial Membrane Permeability Assay (PAMPA) plates
  - Dissolution medium (e.g., simulated gastric/intestinal fluid)
  - Phospholipid solution for creating artificial membrane
  - UV-Visible spectrophotometer or HPLC system for quantification
- Procedure:
  - Dissolution Test: Place the test tablet in the dissolution vessel containing a suitable volume of medium, maintained at 37°C. Operate the apparatus as per pharmacopeial standards (e.g., 50-75 rpm paddle speed).
  - Sample Withdrawal: At predetermined time intervals, withdraw aliquots from the dissolution vessel.
  - PAMPA Assay: Immediately transfer the aliquots to the donor compartment of the PAMPA plate. The receiver compartment contains a suitable buffer. The plate has an artificial lipid membrane separating the compartments.
  - Incubation: Incubate the PAMPA plate for a set period (e.g., several hours) to allow for passive diffusion.
  - Analysis: Quantify the drug concentration in both the donor and receiver compartments after the incubation period.
- Data Analysis:
  - Dissolution Profile: Plot % drug released vs. time.
  - Effective Permeability (Pe): Calculate using the formula P_e = -ln(1 - C_receiver / C_equilibrium) / (A × (1/V_donor + 1/V_receiver) × t), where C_receiver is the receiver concentration at time t, C_equilibrium = (C_donor × V_donor + C_receiver × V_receiver) / (V_donor + V_receiver) is the concentration expected at equilibrium, A is the membrane area, V_donor and V_receiver are the compartment volumes, and t is the incubation time.
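
A minimal sketch of the P_e calculation above, assuming that the equilibrium concentration is the volume-weighted mean of the end-point donor and receiver concentrations, and that area is given in cm², volumes in cm³, and time in seconds so that P_e comes out in cm/s. The function names and input values are hypothetical.

```python
import math


def equilibrium_concentration(c_donor, c_receiver, v_donor, v_receiver):
    """Concentration both compartments would reach if fully equilibrated."""
    return (c_donor * v_donor + c_receiver * v_receiver) / (v_donor + v_receiver)


def effective_permeability(c_receiver, c_equilibrium, area_cm2,
                           v_donor_cm3, v_receiver_cm3, time_s):
    """P_e in cm/s from the two-compartment PAMPA equation."""
    ratio = c_receiver / c_equilibrium
    if not 0.0 <= ratio < 1.0:
        raise ValueError("receiver concentration must be below equilibrium")
    return -math.log(1.0 - ratio) / (
        area_cm2 * (1.0 / v_donor_cm3 + 1.0 / v_receiver_cm3) * time_s
    )


# Hypothetical end-point readings after a 4 h incubation (arbitrary conc. units).
c_eq = equilibrium_concentration(c_donor=8.0, c_receiver=2.0,
                                 v_donor=0.3, v_receiver=0.3)
p_e = effective_permeability(c_receiver=2.0, c_equilibrium=c_eq, area_cm2=0.3,
                             v_donor_cm3=0.3, v_receiver_cm3=0.3,
                             time_s=4 * 3600)
```

The guard on the concentration ratio matters in practice: a receiver reading at or above the equilibrium value makes the logarithm undefined and usually signals a measurement or mass-balance problem.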

Diagram 2: Combined Dissolution/Permeability Workflow

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Bioavailability Studies

| Reagent / Material | Function and Application | Key Considerations |

|---|---|---|

| Caco-2 Cell Line | A human colon adenocarcinoma cell line that differentiates into enterocyte-like cells. Used in in vitro models to study active and passive intestinal drug transport and metabolism [5]. | Long cultivation time (~21 days), expresses transporters and metabolic enzymes, more biologically relevant but higher variability [5]. |

| PAMPA Plates | Parallel Artificial Membrane Permeability Assay. Uses an artificial phospholipid membrane in a multi-well plate to assess passive transcellular permeability [5]. | High-throughput, low cost, reproducible, ideal for early-stage screening of passive permeability [5]. |

| Simulated Gastrointestinal Fluids | Biorelevant dissolution media (e.g., FaSSGF, FaSSIF) that mimic the pH, surface tension, and composition of human gastric and intestinal fluids for in vitro dissolution testing [5]. | Crucial for predicting in vivo dissolution, especially for poorly soluble drugs whose absorption is dissolution-rate limited [5]. |

| Isotope Labels (e.g., stable ¹³C, radioactive ¹⁴C) | Used in absolute bioavailability studies. A low, isotopically labelled IV dose is co-administered with a therapeutic oral dose, allowing simultaneous measurement of both PK profiles [2]. | Avoids the need for separate IV and oral studies and associated toxicity testing. ¹⁴C requires sensitive AMS (Accelerator Mass Spectrometry) for detection [2]. |

| Cytochrome P450 Isozyme Assays | Recombinant enzymes or human liver microsomes used to identify specific metabolic pathways, potential drug-drug interactions, and inhibitory/inductive effects of a new compound. | Critical for understanding first-pass metabolism and anticipating inter-individual variability due to genetic polymorphisms (e.g., CYP2D6, CYP2C19) [1]. |

| Liposomal Encapsulation Systems | A formulation technology where the active compound is enclosed within a phospholipid bilayer. Used to enhance the solubility, stability, and bioavailability of poorly absorbed drugs and nutrients [3]. | Protects the compound from degradation in the GI tract and can facilitate improved absorption through various mechanisms. |

The Critical Need for Predictive Equations in Nutrition and Pharmacology

Accurate prediction of bioavailability, the fraction of a substance that reaches systemic circulation and is available for biological activity, represents a critical frontier in both nutrition and pharmacology. Current nutrient intake recommendations and drug dosage determinations often rely on total content rather than bioavailable content, creating significant uncertainty in efficacy assessments. Bioavailability prediction equations are essential computational tools that translate ingested amounts into biologically active doses, enabling more precise dietary planning and drug dosing. The development of these equations follows a structured scientific framework designed to identify key influencing factors, integrate high-quality human study data, construct mathematical models, and validate predictions against gold-standard measurements. This approach addresses fundamental limitations in both fields, where direct measurement of bioavailability in human subjects is often costly, ethically challenging, and impractical for routine application.

The consequences of inaccurate bioavailability estimates are substantial across these domains. In nutrition, overestimating bioavailability can lead to persistent nutrient deficiencies despite apparently adequate intake, while underestimation may drive unnecessary supplementation or food fortification. In pharmacology, inaccurate predictions directly impact therapeutic efficacy and safety, potentially resulting in treatment failure or adverse drug reactions. A unified framework for developing predictive equations across these disciplines enables more efficient resource allocation in research and product development while improving outcomes for end users. This document presents specialized protocols and application notes for researchers developing and applying these vital predictive tools.

Foundational Framework for Predictive Equation Development

Standardized Four-Step Development Methodology

A robust, structured framework guides the development of predictive bioavailability equations, ensuring scientific rigor and translational applicability [6] [4] [7]. This methodology provides a standardized approach applicable to both nutrient and drug bioavailability assessment.

Step 1: Identify Key Influencing Factors - Systematically identify intrinsic and extrinsic factors affecting bioavailability. For nutrients, this includes food matrix effects, chemical speciation, and nutrient-nutrient interactions. For drugs, this encompasses physicochemical properties, formulation characteristics, and administration routes. This step requires comprehensive analysis of compound-specific absorption, distribution, metabolism, and excretion (ADME) pathways.

Step 2: Conduct Comprehensive Literature Review - Perform systematic review of high-quality human studies investigating bioavailability. Prioritize research employing validated methods such as stable isotopes for nutrients or pharmacokinetic studies for drugs. Extract quantitative data on absorption parameters, variability measures, and covariate influences to inform equation structure and parameterization.

Step 3: Construct Predictive Equations - Develop mathematical models based on mechanistic understanding and empirical relationships identified in Step 2. Select appropriate statistical approaches (multiple linear regression, nonlinear mixed-effects modeling, machine learning) based on data structure and research question. Define equation parameters with measurable inputs for practical application.

Step 4: Validate and Translate Equations - Assess predictive performance against internal and external datasets. When feasible, conduct validation studies comparing equation predictions against gold-standard measurements (e.g., doubly labeled water for energy, pharmacokinetic studies for drugs). Establish precision, bias, and limits of agreement to define appropriate use contexts [8].

Table 1: Key Influencing Factors for Bioavailability Prediction

| Category | Nutrient-Specific Factors | Drug-Specific Factors |

|---|---|---|

| Compound Properties | Chemical form (e.g., heme vs. non-heme iron), solubility, stability | Lipophilicity, pKa, molecular size, crystal form |

| Host Factors | Age, health status, genetic polymorphisms in transporters, nutrient status | Genetic polymorphisms in metabolizing enzymes, disease states, age |

| Matrix Effects | Food composition, inhibitory/enhancing components (e.g., phytates, vitamin C) | Formulation excipients, dosage form, release characteristics |

| Luminal Factors | Digestive enzymes, pH conditions, gut microbiota | Gut metabolism, transporter interactions, luminal degradation |

Quantitative Assessment of Predictive Performance

Validated predictive equations demonstrate specific statistical performance characteristics that determine their appropriate application contexts. Comparison of measured versus predicted values employs standardized metrics including coefficient of determination (R²), root mean square error (RMSE), mean bias, and limits of agreement [9] [8].
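
As a rough illustration of how these metrics are computed from paired measured and predicted values, consider the sketch below. It assumes R² is defined as 1 - SS_res / SS_tot (some studies report the squared Pearson correlation instead) and that bias is predicted minus measured; the function name and data are hypothetical.

```python
import math


def regression_metrics(measured, predicted):
    """Return R^2, RMSE, and mean bias (predicted minus measured)."""
    if len(measured) != len(predicted) or not measured:
        raise ValueError("need matched, non-empty measured/predicted lists")
    n = len(measured)
    mean_measured = sum(measured) / n
    residuals = [p - m for m, p in zip(measured, predicted)]
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((m - mean_measured) ** 2 for m in measured)
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mean_bias": sum(residuals) / n,
    }


# Hypothetical paired values in arbitrary units.
metrics = regression_metrics(measured=[10.0, 20.0, 30.0, 40.0],
                             predicted=[12.0, 18.0, 33.0, 39.0])
```

A mean bias close to zero with a large RMSE indicates that errors cancel on average but remain large for individuals, which is why the metrics are reported together.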

Recent research demonstrates the advancement possible with targeted equation development. A new equation for predicting resting energy expenditure in patients with obesity achieved an R² of 0.923 with a root mean square error of 81.872 kcal/day, representing significant improvement over widely used models like Mifflin-St Jeor and Harris-Benedict that show errors exceeding 250-315 kcal/day in this population [9]. Similarly, equations for fat-free mass estimation developed specifically for Brazilian populations with overweight/obesity demonstrated concordance correlation coefficients of 0.982 with standard errors of estimate of 2.50 kg, substantially outperforming generalized equations [10].

Table 2: Performance Metrics for Recent Predictive Equations in Nutrition

| Equation Application | Population | Performance Metrics | Comparison to Standard Equations |

|---|---|---|---|

| Resting Energy Expenditure [9] | Hospitalized patients with obesity (n=89) | R² = 0.923, RMSE = 81.872 kcal/day, Mean bias = -0.054 kcal/day | Narrower limits of agreement (-156.8 to 156.7 kcal/day) vs. conventional equations |

| Fat-Free Mass Estimation [10] | Brazilian adults with overweight/obesity (n=269) | CCC = 0.982, SEE = 2.50 kg, LOA = -5.0 to 4.8 kg | Most existing equations invalid for this specific population |

| Energy Requirements [8] | Older adults (n=41) | RMSE% ≥ 10%, individual prediction accuracy varied (15-35% misclassification) | Both NASEM and Porter equations showed significant individual-level inaccuracies |

Application Note: Nutrient Bioavailability Prediction

Protocol for Nutrient Bioavailability Equation Development

Objective: Develop a predictive equation for iron bioavailability that incorporates key dietary factors influencing absorption.

Background: Iron bioavailability varies dramatically (3-20%) depending on dietary composition, with heme iron from animal sources demonstrating higher bioavailability than non-heme iron from plant sources. Accurate prediction requires accounting for enhancing and inhibiting factors present in the meal matrix.

Materials and Methods:

Study Population: Recruit healthy adults (n=40-60) with comprehensive exclusion criteria for conditions affecting iron metabolism. Stratify by iron status (ferritin levels) and genotype for relevant iron regulators.

Test Meals: Prepare standardized meals varying systematically in heme/non-heme iron ratio, ascorbic acid content, phytate content, calcium content, and polyphenol content using natural food sources.

Isotope Administration: Administer stable iron isotopes (⁵⁷Fe, ⁵⁸Fe) with test meals in fasted state. Use different isotopes for different meal components when investigating complex interactions.

Sample Collection: Draw blood samples at baseline, 2h, 4h, 6h, and 24h post-ingestion. Process serum and isolate erythrocytes for isotope ratio analysis.

Analytical Methods: Determine isotope ratios by inductively coupled plasma mass spectrometry (ICP-MS) in erythrocytes collected 14 days after isotope administration, which allows time for the absorbed isotope to be incorporated into circulating erythrocytes.

Data Analysis: Calculate fractional absorption based on isotope incorporation using established models. Employ multiple linear regression with meal composition factors as independent variables and fractional absorption as dependent variable. Validate using leave-one-out cross-validation and external validation in independent cohort.
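
The regression and leave-one-out steps can be sketched without external libraries. This is an illustrative example only: it fits ordinary least squares through the normal equations, and the meal data are hypothetical and constructed to be exactly linear (absorption = 5 + 0.05 × ascorbic acid - 0.01 × phytate) so the expected behavior is easy to check. Real absorption data would be noisy and often log-transformed.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular design matrix")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_ols(rows, y):
    """Return [intercept, b1, b2, ...] for predictors given as rows."""
    design = [[1.0] + list(r) for r in rows]
    k = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * yi for d, yi in zip(design, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def predict(coefs, row):
    return coefs[0] + sum(c * v for c, v in zip(coefs[1:], row))


def loocv_rmse(rows, y):
    """Refit with each observation left out and score it on that observation."""
    sq_errors = []
    for i in range(len(y)):
        coefs = fit_ols(rows[:i] + rows[i + 1:], y[:i] + y[i + 1:])
        sq_errors.append((predict(coefs, rows[i]) - y[i]) ** 2)
    return (sum(sq_errors) / len(sq_errors)) ** 0.5


# Hypothetical meals: [ascorbic acid (mg), phytate (mg)] -> fractional absorption (%).
meals = [[0, 0], [50, 0], [0, 200], [50, 200], [25, 100], [100, 50]]
absorption = [5.0, 7.5, 3.0, 5.5, 5.25, 9.5]
coefficients = fit_ols(meals, absorption)
cv_rmse = loocv_rmse(meals, absorption)
```

With noise-free data the cross-validated error is essentially zero; with real data, a cross-validated RMSE much larger than the in-sample RMSE would suggest overfitting.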

Key Research Reagents:

Table 3: Essential Research Reagents for Nutrient Bioavailability Studies

| Reagent/Category | Specific Examples | Function in Research Protocol |

|---|---|---|

| Stable Isotope Tracers | ⁵⁷Fe, ⁵⁸Fe, ⁴⁴Ca, ⁶⁷Zn, ²⁶Mg | Metabolic tracing without radioactivity, enabling human studies |

| Reference Standards | Certified IRMM/NIST standards | Quality control for mass spectrometry analysis |

| Specialized Meals | Controlled composition meals with varying enhancers/inhibitors | Systematic evaluation of dietary factors on bioavailability |

| Sample Collection | EDTA tubes, trace-element-free collection tubes | Prevention of contamination during biological sampling |

| Analytical Instruments | ICP-MS, HPLC-MS | Precise quantification of tracer incorporation and analyte concentrations |

Application Note: Drug Bioavailability Prediction

Protocol for Gut-Liver Microphysiological System for Bioavailability Estimation

Objective: Utilize human-relevant in vitro models to predict human oral drug bioavailability by recreating combined intestinal permeability and first-pass metabolism.

Background: Traditional approaches to predicting human drug bioavailability rely heavily on animal models, which show poor correlation with human outcomes (R² = 0.34 for 184 drugs) due to interspecies differences in enzyme expression and physiology [11]. Microphysiological systems (MPS) incorporating gut and liver tissues offer a human-relevant alternative for more accurate preclinical estimation.

Materials and Methods:

System Setup: Utilize commercial gut-liver MPS platform (e.g., PhysioMimix) or assemble custom system. Establish human intestinal epithelial cells (primary RepliGut or Caco-2) in gut compartment and primary human hepatocytes or HepaRG cells in liver compartment. Maintain under physiological fluidic perfusion for 7-14 days to promote polarization and functionality.

Functional Validation: Confirm gut barrier integrity via transepithelial electrical resistance (TEER >300 Ω·cm²) and permeability markers. Verify liver metabolic competence through albumin production, urea synthesis, and cytochrome P450 activity (particularly CYP3A4).

Dosing Strategy: For oral route simulation, apply test compound to gut compartment apical side. For intravenous simulation, apply directly to liver compartment. Use physiologically-relevant concentrations based on anticipated human doses.

Sampling Protocol: Collect serial samples from liver compartment effluent at predetermined timepoints (e.g., 0, 0.5, 1, 2, 4, 6, 8, 24 hours). Immediately process samples for storage at -80°C until analysis.

Bioanalytical Methods: Quantify parent drug and major metabolites using validated LC-MS/MS methods. Generate concentration-time curves for both dosing routes.

Data Analysis and Modeling:

- Calculate area under the curve (AUC) for both oral and IV simulations
- Determine key ADME parameters: hepatic intrinsic clearance (CLint,liver), gut permeability (Papp), efflux ratio
- Apply mechanistic mathematical modeling to estimate:
  - Fraction absorbed (Fa)
  - Fraction escaping gut metabolism (Fg)
  - Fraction escaping hepatic metabolism (Fh)
- Calculate predicted oral bioavailability: F = Fa × Fg × Fh
- Compare predictions with clinical human data when available for validation
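
The final multiplication can be sketched as below. The protocol does not specify which mechanistic model yields Fh, so this example assumes the well-stirred liver model, Fh = Q_h / (Q_h + fu × CLint), as one common choice. The hepatic blood flow of 90 L/h is an assumed typical adult value, and all other inputs are hypothetical.

```python
def hepatic_availability(cl_int_l_per_h, fu, q_h_l_per_h=90.0):
    """Fh under the well-stirred model (an assumption, not the source's method)."""
    if cl_int_l_per_h < 0 or not 0.0 <= fu <= 1.0 or q_h_l_per_h <= 0:
        raise ValueError("invalid clearance, unbound fraction, or blood flow")
    return q_h_l_per_h / (q_h_l_per_h + fu * cl_int_l_per_h)


def oral_bioavailability(fa, fg, fh):
    """F = Fa * Fg * Fh, with each term a fraction between 0 and 1."""
    for name, value in (("Fa", fa), ("Fg", fg), ("Fh", fh)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return fa * fg * fh


# Hypothetical compound: scaled CLint = 300 L/h, unbound fraction = 0.1.
fh = hepatic_availability(cl_int_l_per_h=300.0, fu=0.1)   # 90 / (90 + 30) = 0.75
f_oral = oral_bioavailability(fa=0.9, fg=0.8, fh=fh)       # about 0.54
```

Because the three terms multiply, a modest loss at each stage compounds: 90% absorbed, 80% escaping gut metabolism, and 75% escaping the liver leaves only about 54% systemically available.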

Key Research Reagents:

Table 4: Essential Research Reagents for Drug Bioavailability Assessment

| Reagent/Category | Specific Examples | Function in Research Protocol |

|---|---|---|

| Cell Systems | Primary human hepatocytes, RepliGut intestinal cells, Caco-2 cells | Recreate human-relevant absorption and metabolism interfaces |

| Microphysiological Hardware | Gut-liver chips, perfusion controllers, multi-well plates | Provide physiological fluid flow and organ interconnection |

| Bioanalytical Standards | Certified drug standards, stable isotope-labeled internal standards | Enable precise LC-MS/MS quantification |

| Functional Assay Kits | CYP450 activity assays, albumin/urea quantification kits, LDH cytotoxicity assays | Monitor tissue functionality and viability |

| Modeling Software | PBPK platforms (GastroPlus, Simcyp), mathematical modeling tools | Translate experimental data into human bioavailability predictions |

Integrated Data Analysis and Validation Approaches

Statistical Framework for Equation Validation

Robust validation of predictive bioavailability equations requires multiple complementary statistical approaches to assess different aspects of performance [9] [8] [10].

Correlation and Residual Analysis: Evaluate strength and direction of linear relationships between predictor variables and bioavailability outcomes using Pearson's correlation coefficient. Assess residual distributions for normality using Shapiro-Wilk test and graphical methods (Q-Q plots, histograms). Verify homoscedasticity by plotting residuals against predicted values.

Agreement Assessment: Apply Bland-Altman analysis to quantify agreement between measured and predicted values. Calculate mean bias (average difference between methods) and 95% limits of agreement (mean bias ± 1.96 × standard deviation of differences). Identify any relationship between differences and magnitude of measurement.
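
A minimal sketch of the Bland-Altman quantities described above, assuming differences are taken as predicted minus measured and that the sample standard deviation is used. The function name and data are hypothetical.

```python
import statistics


def bland_altman(measured, predicted):
    """Return (mean bias, lower limit, upper limit) of agreement."""
    if len(measured) != len(predicted) or len(measured) < 2:
        raise ValueError("need at least two matched pairs")
    differences = [p - m for m, p in zip(measured, predicted)]
    bias = statistics.mean(differences)
    sd = statistics.stdev(differences)  # sample standard deviation (n - 1)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical paired values in arbitrary units.
bias, loa_lower, loa_upper = bland_altman(measured=[10.0, 20.0, 30.0, 40.0],
                                          predicted=[12.0, 18.0, 33.0, 39.0])
```

Plotting each difference against the mean of the pair, with horizontal lines at the bias and both limits, reveals whether disagreement grows with the magnitude of the measurement.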

Individual Prediction Accuracy: Assess clinical relevance at individual level by calculating percentage of predictions falling within ±10% of measured values. This is particularly important for applications where individual dosing or recommendations are required.
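
Individual-level accuracy is simple to compute. The sketch below counts predictions within a relative tolerance of the measured value; the function name and data are hypothetical.

```python
def percent_within_tolerance(measured, predicted, tolerance=0.10):
    """Percentage of predictions within +/- tolerance of the measured value."""
    if len(measured) != len(predicted) or not measured:
        raise ValueError("need matched, non-empty measured/predicted lists")
    hits = sum(
        1 for m, p in zip(measured, predicted) if abs(p - m) <= tolerance * abs(m)
    )
    return 100.0 * hits / len(measured)


# Hypothetical values: relative errors are 5%, 15%, 3.3%, and 11.25%.
accuracy = percent_within_tolerance(measured=[100.0, 200.0, 300.0, 400.0],
                                    predicted=[105.0, 230.0, 290.0, 445.0])
print(accuracy)  # 50.0
```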

Cross-Validation: Employ k-fold cross-validation or leave-one-out cross-validation to assess model performance on data not used in development. For larger datasets, hold out a random subset (typically 20-30%) as a test set that is never used during model fitting; an independent cohort is still needed for true external validation.
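
A k-fold procedure can be sketched generically as follows. The example assumes a simple one-predictor linear model purely for illustration; `fit_line` and `predict_line` are hypothetical helpers, and the data are constructed to be exactly linear (y = 2x + 1).

```python
import random


def k_fold_indices(n, k, seed=0):
    """Shuffle 0..n-1 reproducibly and deal the indices into k folds."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def cross_validated_rmse(x, y, fit, predict, k=5, seed=0):
    """Fit on k-1 folds, score on the held-out fold, pool squared errors."""
    sq_errors = []
    for test_idx in k_fold_indices(len(y), k, seed):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(y)) if i not in held_out]
        model = fit([x[i] for i in train_idx], [y[i] for i in train_idx])
        sq_errors += [(predict(model, x[i]) - y[i]) ** 2 for i in test_idx]
    return (sum(sq_errors) / len(sq_errors)) ** 0.5


def fit_line(xs, ys):
    """Least-squares intercept and slope for a single predictor."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = sum((a - mx) * (b - my) for a, b in zip(xs, ys)) / sum(
        (a - mx) ** 2 for a in xs
    )
    return my - slope * mx, slope


def predict_line(model, x_value):
    intercept, slope = model
    return intercept + slope * x_value


# Hypothetical noise-free data.
x_values = [float(i) for i in range(10)]
y_values = [2.0 * v + 1.0 for v in x_values]
folds = k_fold_indices(len(y_values), k=5)
cv_rmse_kfold = cross_validated_rmse(x_values, y_values, fit_line, predict_line, k=5)
```

Passing `fit` and `predict` as callables keeps the splitting logic independent of the model, so the same routine can score a regression equation or a machine learning model.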

Implementation Considerations and Limitations

Successful implementation of predictive bioavailability equations requires careful consideration of their inherent limitations and appropriate application boundaries. Even well-validated equations demonstrate variable performance at the individual level, as evidenced by studies showing 15-35% of individual predictions falling outside acceptable error ranges for energy expenditure equations [8]. This highlights the critical need for clinical judgment and periodic verification in applied settings.

Equation performance depends heavily on the representativeness of the development population. Equations developed for specific populations (e.g., Brazilian adults with obesity [10]) typically outperform generalized equations when applied to similar groups but may show reduced accuracy in divergent populations. Regular re-evaluation and, where needed, refinement are necessary when applying equations to populations with different characteristics.

Emerging technologies continue to enhance predictive capabilities. Microphysiological systems that replicate human organ interactions show promise for improving drug bioavailability predictions while reducing reliance on animal models [11]. Similarly, standardized frameworks for nutrient bioavailability [6] [7] enable more systematic development of predictive tools that account for food matrix effects and host factors. Continued refinement of these approaches will further strengthen the scientific foundation for bioavailability prediction across nutrition and pharmacology.

A Standardized 4-Step Framework for Equation Development

The accurate prediction of nutrient and bioactive compound absorption is a critical challenge in nutritional science and pharmaceutical development. Current nutrient intake recommendations, nutritional assessments, and food labeling primarily rely on the estimated total nutrient content in foods and dietary supplements. However, the biological adequacy of any nutrient intake depends not only on the total amount consumed but also on the fraction that is ultimately absorbed and utilized by the body—a property known as bioavailability [6] [7]. This discrepancy between consumption and utilization highlights a significant gap in nutritional assessment methodologies.

Accurate assessments of nutrient bioavailability require robust predictive equations or algorithms that can translate food composition data into meaningful estimates of biological availability. A standardized framework for developing such equations is essential for enhancing the accuracy and precision of nutrient bioavailability estimates, addressing existing data limitations, and highlighting evidence gaps to inform future research and policy on nutrients and bioactive compounds [6]. This protocol details a standardized 4-step framework that guides researchers in developing predictive equations for estimating nutrient absorption and bioavailability.

Theoretical Background

Bioavailability refers to the proportion of an ingested nutrient or compound that is absorbed from the gastrointestinal tract and becomes available for physiological functions or storage in the body. For orally administered substances, this encompasses the processes of liberation, absorption, distribution, metabolism, and elimination. The fundamental principle driving the need for predictive equations is that multiple factors beyond chemical structure influence these processes, including dietary matrix effects, host-related factors, and nutrient-nutrient interactions.

Quantitative Structure-Activity Relationship (QSAR) models represent one computational approach for predicting toxicokinetic properties like oral bioavailability. These models correlate the structural properties of molecules with their biological activity or absorption characteristics, allowing the properties of new compounds to be predicted from their similarity to previously studied molecules [12]. The framework described herein provides a standardized methodology for developing such models specifically for nutrient bioavailability prediction.

Materials and Reagents

Research Reagent Solutions for Bioavailability Research

Table 1: Essential Research Reagents and Computational Tools for Bioavailability Equation Development

| Item Category | Specific Examples | Function and Application |

|---|---|---|

| Data Sources | High-quality human studies, Animal model data, Epidemiologic datasets, Existing nutrient databases | Provides experimental evidence on absorption parameters; forms the foundation for variable identification and model training [6] [13]. |

| Computational Tools | QSAR Modeling Software, Machine Learning algorithms (e.g., R-CatBoost, R-RF), Statistical Analysis Packages, Mordred descriptor calculator | Used for descriptor calculation, model construction, variable selection, and statistical validation of predictive equations [12]. |

| Literature Review Databases | PubMed, Scopus, Web of Science, EMBASE, Specialist nutritional databases | Enable comprehensive literature review to identify influencing factors and existing evidence [6]. |

| Validation Datasets | Independent human clinical trials, Stable isotope studies, In vitro digestion models | Provide external data not used in model development to test predictive performance and generalizability [6] [12]. |

Standardized 4-Step Framework Protocol

This framework provides a systematic approach for developing robust, validated equations to predict nutrient bioavailability, enhancing the translation of research into improved dietary recommendations and product formulations.

Step 1: Identification of Influencing Factors

Objective: To systematically identify and categorize all known factors that influence the bioavailability of the target nutrient or bioactive compound.

Procedure:

- Define Compound Characteristics: Document the physicochemical properties of the target compound, including molecular weight, solubility, stability under different pH conditions, and chemical forms (e.g., different iron salts, vitamin E isomers) [13].

- Identify Dietary Factors: Catalog dietary components that may enhance or inhibit bioavailability. For minerals like iron and zinc, this includes:

- Enhancers: Ascorbic acid, meat or fish protein (the "MFP factor"), certain organic acids.

- Inhibitors: Phytic acid, polyphenols, tannins, calcium, certain dietary fibers [6].

- Document Host-Related Factors: Identify subject characteristics that modify absorption, such as:

- Physiological Status: Nutrient status (e.g., iron stores), life stage (e.g., pregnancy, infancy), health status.

- Genetic Factors: Variations in transporters or metabolic enzymes.

- Compile a Factor Inventory: Create a comprehensive table of all identified factors for reference during model development. This inventory ensures that potential predictor variables are not overlooked.

Troubleshooting Tip: If information on a specific factor is conflicting, note the discrepancy and prioritize factors with consistent evidence from high-quality human studies for initial model building.

Step 2: Comprehensive Literature Review

Objective: To gather, evaluate, and synthesize high-quality human data on the bioavailability of the target compound to inform variable selection and model structure.

Procedure:

- Develop a Review Protocol: Define specific inclusion and exclusion criteria a priori. Prioritize:

- Study Designs: Randomized controlled trials, stable isotope studies, and balance studies in human subjects.

- Data Richness: Studies that report absolute absorption values (e.g., Fractional Absorption - F%) rather than only relative differences.

- Population Relevance: Studies in populations relevant to the intended application of the final equation [6].

- Execute Systematic Search: Conduct a comprehensive search across multiple scientific databases using a predefined search strategy. Document the number of studies identified, included, and excluded.

- Data Extraction: Systematically extract key data from included studies into a standardized template. Essential data points include:

- Participant characteristics and sample size.

- Compound form and dosage.

- Dietary context and meal composition.

- Method used to assess bioavailability.

- Mean absorption values and measures of variance (SD, SEM).

- Quality Assessment: Evaluate each study for potential biases (e.g., selection bias, measurement bias) and overall quality. Tools like the GRADE approach can be adapted for this purpose [14].

Troubleshooting Tip: For data-poor nutrients, the review may need to be expanded to include high-quality animal studies, but these should be clearly flagged and their limitations acknowledged due to interspecies differences [13].

Step 3: Equation Construction

Objective: To develop one or more candidate mathematical equations that predict bioavailability based on the key variables identified in Steps 1 and 2.

Procedure:

- Dataset Curation: Compile a unified dataset from the literature review. Handle missing data appropriately (e.g., imputation or exclusion) and document all decisions.

- Variable Selection: Use statistical methods to select the most influential predictors from the factor inventory. Techniques include:

- VSURF Algorithm: For selecting the most relevant molecular descriptors from a large pool [12].

- Stepwise Regression: To iteratively add/remove variables based on statistical criteria.

- Domain Knowledge: Forcing critically important biological factors into the model even if statistical significance is marginal.

- Model Fitting: Apply appropriate modeling techniques to derive the equation.

- For Continuous Outcomes (e.g., F%): Use multiple linear regression or machine learning regression algorithms (Random Forest, CatBoost) [12].

- For Categorical Outcomes (e.g., Low/Medium/High): Use logistic regression or classification algorithms.

- Equation Specification: Document the final candidate equation(s) in standard mathematical form, including all coefficients and their standard errors. For example:

Predicted Iron Absorption (%) = β₀ + β₁*(Vitamin C intake) + β₂*(Phytic acid intake) + β₃*(Iron status).
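As a minimal sketch of how coefficients for an equation of this form might be estimated, the following fits an ordinary least-squares model using only the Python standard library. The data are synthetic and noise-free, generated from assumed coefficients purely for illustration; the variable names, units, and coefficient values are hypothetical and do not come from any study.

```python
from typing import List, Sequence


def solve_linear_system(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented copy so the caller's matrices are left untouched.
    aug = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("Singular system: predictors may be collinear.")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def fit_ols(predictors: Sequence[Sequence[float]],
            response: Sequence[float]) -> List[float]:
    """Least-squares coefficients [b0, b1, ..., bk] via the normal equations."""
    rows = [[1.0] + list(p) for p in predictors]  # leading 1.0 is the intercept
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, response)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def predict(coefs: Sequence[float], predictor_row: Sequence[float]) -> float:
    """Evaluate b0 + b1*x1 + ... + bk*xk for one observation."""
    return coefs[0] + sum(b * x for b, x in zip(coefs[1:], predictor_row))


# Hypothetical illustration data (NOT from any study). Columns:
# vitamin C (mg), phytic acid (mg), serum ferritin (ug/L).
ASSUMED_COEFS = [10.0, 0.05, -0.01, -0.04]
meals = [(0, 100, 20), (50, 100, 20), (100, 300, 40),
         (25, 500, 60), (75, 0, 80), (10, 250, 15)]
absorption_pct = [predict(ASSUMED_COEFS, m) for m in meals]

coefs = fit_ols(meals, absorption_pct)
print([round(c, 4) for c in coefs])
```

Because the synthetic responses contain no noise, the fit recovers the assumed coefficients. With real data, a statistics package that also reports standard errors would normally be preferred.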

Diagram 1: Workflow for equation construction and internal evaluation.

Step 4: Model Validation

Objective: To assess the predictive performance and generalizability of the developed equation using data not used in its construction.

Procedure:

- Internal Validation: Assess model performance on the training data using resampling techniques like bootstrapping or cross-validation. Calculate key performance metrics (see Table 2) [15].

- External Validation: This is the critical step for establishing model credibility.

- Obtain one or more independent datasets of human studies that were not used in model building.

- Apply the developed equation to this new data.

- Calculate validation metrics by comparing predicted versus observed bioavailability values.

- Performance Benchmarking: Compare the performance of the new equation against existing models or default assumptions (e.g., a fixed absorption percentage).

- Sensitivity Analysis: Explore how model predictions change with variations in key input parameters to identify the most sensitive drivers of bioavailability.

Table 2: Key Validation Metrics for Predictive Bioavailability Equations

| Metric | Formula/Description | Interpretation and Ideal Value |

|---|---|---|

| Q²F₃ (for Regression) | A metric for external validation prediction performance [12]. | Values closer to 1.0 indicate better predictive performance. A value of 0.34 was reported for a robust oral bioavailability QSAR model [12]. |

| Geometric Mean Fold Error (GMFE) | GMFE = 10^[Σ \|log₁₀(Predictedᵢ / Observedᵢ)\| / n] | Measures the typical fold difference between predicted and observed values, regardless of direction. The ideal value is 1.0 (perfect agreement); because absolute log ratios are used, it does not by itself reveal systematic over- or under-prediction. A value of 2.35 was achieved for a VDss model [12]. |

| Root Mean Square Error (RMSE) | RMSE = √[Σ(Predictedᵢ - Observedᵢ)² / n] | Measures the average magnitude of prediction error. Lower values indicate better accuracy. Should be interpreted in the context of the absorption range. |

| Correlation Coefficient (R) | R = cov(P, O) / (σₚ σₒ) | Measures the strength and direction of the linear relationship between predicted (P) and observed (O) values. Closer to 1.0 is better. |
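The validation metrics in Table 2 can be computed with a few short functions. The sketch below uses only the standard library. The Q²F₃ function follows a commonly used definition (one minus the ratio of the external-set mean squared error to the training-set response variance); the source does not spell the formula out, so treat that definition as an assumption.

```python
import math
from typing import Sequence


def rmse(pred: Sequence[float], obs: Sequence[float]) -> float:
    """Root mean square error, in the units of the observations."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(obs))


def gmfe(pred: Sequence[float], obs: Sequence[float]) -> float:
    """Geometric mean fold error; requires strictly positive values."""
    logs = [abs(math.log10(p / o)) for p, o in zip(pred, obs)]
    return 10 ** (sum(logs) / len(logs))


def pearson_r(pred: Sequence[float], obs: Sequence[float]) -> float:
    """Pearson correlation between predicted and observed values."""
    n = len(obs)
    mp, mo = sum(pred) / n, sum(obs) / n
    cov = sum((p - mp) * (o - mo) for p, o in zip(pred, obs))
    sp = math.sqrt(sum((p - mp) ** 2 for p in pred))
    so = math.sqrt(sum((o - mo) ** 2 for o in obs))
    return cov / (sp * so)


def q2_f3(pred_ext: Sequence[float], obs_ext: Sequence[float],
          obs_train: Sequence[float]) -> float:
    """Assumed definition: 1 - MSE(external set) / variance(training responses)."""
    mean_train = sum(obs_train) / len(obs_train)
    mse_ext = sum((o - p) ** 2 for p, o in zip(pred_ext, obs_ext)) / len(obs_ext)
    var_train = sum((o - mean_train) ** 2 for o in obs_train) / len(obs_train)
    return 1.0 - mse_ext / var_train


# Hypothetical absorption values (%), for illustration only.
observed = [12.0, 8.0, 20.0, 5.0]
predicted = [10.0, 9.0, 18.0, 7.0]
training_obs = [6.0, 15.0, 9.0, 22.0, 11.0]

print("RMSE :", round(rmse(predicted, observed), 3))
print("GMFE :", round(gmfe(predicted, observed), 3))
print("R    :", round(pearson_r(predicted, observed), 3))
print("Q2F3 :", round(q2_f3(predicted, observed, training_obs), 3))
```

Reporting RMSE alongside GMFE is useful because the former is expressed in absorption units while the latter is a dimensionless fold error.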

Diagram 2: Model validation workflow illustrating the critical pathway from internal to external validation.

Applications and Implications

Successfully developed and validated equations have transformative applications across multiple fields. In public health and nutrition, they enable more accurate assessment of nutrient adequacy at the population level and refine dietary reference intakes by moving beyond total intake to consider utilizable nutrient levels [6] [7]. For the food and pharmaceutical industries, these algorithms are powerful tools for comparing products, enhancing formulations to maximize nutrient delivery, and reducing ingredient waste by optimizing inclusion levels [16]. Furthermore, they contribute to scientific research by providing a standardized method to estimate bioavailability when direct measurement is impractical, thereby supporting the evaluation of global food system sustainability [16].

The transdisciplinary nature of this framework bridges fields from computational chemistry and machine learning to clinical nutrition and regulatory science, fostering a more integrated and evidence-based approach to understanding nutrient utilization.

Identifying Key Factors Influencing Nutrient and Bioactive Compound Bioavailability

The bioavailability of a nutrient or bioactive compound—defined as the proportion that is absorbed from the diet and becomes available for physiological functions—is a critical determinant of its efficacy. Current nutrient intake recommendations, nutritional assessments, and food labeling primarily rely on the total nutrient content in foods, which does not account for variations in absorption and utilization [4] [6]. Accurately predicting bioavailability remains a significant challenge in both nutrition science and drug development. This application note outlines a structured framework and detailed protocols for identifying key factors influencing bioavailability and developing predictive equations, providing researchers with practical methodologies to advance this field.

Core Framework for Developing Predictive Bioavailability Equations

A recently proposed structured framework for developing predictive bioavailability equations consists of four sequential steps designed to enhance the accuracy and precision of nutrient bioavailability estimates [4] [6] [7].

Table 1: Four-Step Framework for Bioavailability Prediction Equation Development

| Step | Description | Key Activities | Primary Output |

|---|---|---|---|

| 1 | Identify Key Influencing Factors | Systematic analysis of food matrix, host, and nutrient-specific factors | Comprehensive list of critical bioavailability modulators |

| 2 | Conduct Literature Review | Gather data from high-quality human studies on absorption and utilization | Curated database of bioavailability measurements |

| 3 | Construct Predictive Equations | Apply statistical modeling and machine learning algorithms | Preliminary predictive equation or algorithm |

| 4 | Validate and Translate | Conduct validation studies to verify predictive accuracy | Validated, ready-to-use prediction model |

Diagram: Workflow and logical relationships between the four framework steps.

Key Factors Influencing Bioavailability

Bioavailability is influenced by a complex interplay of factors that can be categorized into three primary domains: food matrix effects, host-related factors, and nutrient-specific characteristics.

Table 2: Key Factors Influencing Bioavailability of Nutrients and Bioactive Compounds

| Category | Specific Factors | Impact on Bioavailability |

|---|---|---|

| Food Matrix | Food composition and structure | The physical entrapment of nutrients within plant cell walls can limit their release during digestion [17]. |

| Food Matrix | Presence of inhibitors/enhancers | Dietary components like phytates can inhibit mineral absorption, while lipids can enhance absorption of fat-soluble vitamins [18]. |

| Food Matrix | Food processing and preparation | Techniques like heating, grinding, or fermentation can break down cell walls and anti-nutritional factors, increasing bioavailability [17]. |

| Host Factors | Gastrointestinal physiology | Age-dependent changes in gastric pH, intestinal surface area, and transit time significantly impact absorption [19]. |

| Host Factors | Genetic polymorphisms | Variations in genes encoding metabolizing enzymes (e.g., CYP450) and transport proteins (e.g., P-glycoprotein) affect nutrient and drug disposition [20]. |

| Host Factors | Health status and microbiome | Gut microbiota can metabolize compounds into more or less bioavailable forms; inflammation or disease states can alter absorption [18]. |

| Nutrient/Drug Properties | Chemical structure | Molecular size, lipophilicity, and solubility directly influence membrane permeability and absorption potential [20] [21]. |

| Nutrient/Drug Properties | Interaction with transport systems | Affinity for efflux transporters like P-glycoprotein can significantly reduce systemic availability [20]. |

| Nutrient/Drug Properties | Metabolism by intestinal/hepatic enzymes | First-pass metabolism by enzymes like CYP3A4 is a major determinant of oral bioavailability for many compounds [20]. |

Experimental Protocols for Bioavailability Assessment

Protocol for Systematic Literature Review and Data Collection

Purpose: To gather high-quality human data for informing predictive equation development.

Materials:

- Electronic database access (e.g., PubMed, Scopus, Web of Science)

- Reference management software (e.g., EndNote, Zotero)

- Data extraction forms (electronic or paper-based)

Procedure:

- Define Inclusion/Exclusion Criteria: Establish specific criteria based on population (e.g., healthy adults, specific age groups), intervention (e.g., specific nutrient/compound), study design (e.g., randomized controlled trials, crossover studies), and outcomes (e.g., absorption measured by stable isotopes, plasma concentration).

- Develop Search Strategy: Create comprehensive search queries using relevant keywords and Medical Subject Headings (MeSH terms) related to the nutrient/compound of interest and "bioavailability," "absorption," or "pharmacokinetics."

- Screen Studies: Perform initial title/abstract screening followed by full-text review of potentially relevant studies. Use two independent reviewers to minimize bias.

- Extract Data: Systematically extract data using standardized forms, including study characteristics, participant demographics, intervention details, methodology, and outcome measures.

- Assess Quality: Evaluate study quality using appropriate tools (e.g., Cochrane Risk of Bias tool, Newcastle-Ottawa Scale).

- Synthesize Evidence: Tabulate and summarize findings, noting consistent patterns and evidence gaps.

Protocol for Computational Prediction of Oral Bioavailability

Purpose: To develop in silico models for predicting human oral bioavailability using molecular descriptors.

Materials:

- Chemical structures of compounds (in SMILES or SDF format)

- Molecular descriptor calculation software (e.g., Dragon, Mordred)

- Machine learning environment (e.g., Python with scikit-learn, R)

- Validated dataset of compounds with known bioavailability values

Procedure:

- Dataset Curation:

- Compile a dataset of compounds with experimentally measured oral bioavailability values.

- Divide the dataset into training (≈80%) and test (≈20%) sets, ensuring structural diversity.

- Descriptor Calculation:

- Generate a comprehensive set of molecular descriptors (e.g., constitutional, topological, geometrical, quantum-chemical) for each compound.

- Pre-process descriptors by removing those with zero variance and high correlation.

- Model Building:

- Select appropriate machine learning algorithms (e.g., Random Forest, Support Vector Machine, Multiple Linear Regression).

- Train models using the training set and selected descriptors.

- Optimize model hyperparameters via cross-validation.

- Model Validation:

- Evaluate model performance on the held-out test set. For classification models (e.g., bioavailability above or below a cutoff), use metrics such as Accuracy, Sensitivity, Specificity, and Matthews Correlation Coefficient (MCC).

- Apply Y-randomization or external validation to test model robustness.

- Model Interpretation:

- Use feature importance analysis (e.g., SHAP values) to identify molecular descriptors most critical for bioavailability prediction.
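The descriptor pre-processing and data-splitting steps of this protocol can be sketched in plain Python. The descriptor names and values below are hypothetical, the 0.95 correlation threshold is an assumed setting, and the split is a simple seeded random split; it does not by itself ensure structural diversity, which would need a clustering or scaffold-based approach.

```python
import math
import random
from typing import Dict, List, Tuple


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation; both inputs must have non-zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def filter_descriptors(table: Dict[str, List[float]],
                       max_abs_r: float = 0.95) -> List[str]:
    """Drop zero-variance descriptors, then drop any descriptor that is
    highly correlated with one already kept (first one seen wins)."""
    kept: List[str] = []
    for name, values in table.items():
        if max(values) == min(values):  # zero variance
            continue
        if any(abs(pearson(values, table[k])) > max_abs_r for k in kept):
            continue
        kept.append(name)
    return kept


def split_indices(n_items: int, test_fraction: float = 0.2,
                  seed: int = 0) -> Tuple[List[int], List[int]]:
    """Seeded random split into (train, test) index lists."""
    idx = list(range(n_items))
    random.Random(seed).shuffle(idx)
    n_test = max(1, round(n_items * test_fraction))
    return idx[n_test:], idx[:n_test]


# Hypothetical descriptor table for five compounds (illustrative values).
descriptors = {
    "MW": [180.0, 250.0, 320.0, 410.0, 150.0],
    "logP": [1.2, 3.5, 0.4, 2.8, 2.0],
    "TPSA": [60.0, 40.0, 120.0, 90.0, 30.0],
    "constant_flag": [1.0, 1.0, 1.0, 1.0, 1.0],   # zero variance
    "MW_doubled": [360.0, 500.0, 640.0, 820.0, 300.0],  # redundant with MW
}

kept = filter_descriptors(descriptors)
train_idx, test_idx = split_indices(10, test_fraction=0.2, seed=0)
print(kept)
print(len(train_idx), len(test_idx))
```

The filtered descriptor set and the training indices would then be passed to whichever learning algorithm is chosen in the model-building step.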

Table 3: Example Performance Metrics for a Random Forest Bioavailability Prediction Model (Based on [22])

| Model Type | Cutoff | Test Set Accuracy | Sensitivity | Specificity | AUC-ROC |

|---|---|---|---|---|---|

| Consensus Random Forest | 50% | 82.3% | 0.85 | 0.80 | 0.878 |

| Consensus Random Forest | 20% | 85.0% | 0.87 | 0.83 | 0.830 |
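For reference, the classification metrics named in the validation step can be derived from a confusion matrix as in the sketch below. The observed bioavailability values and predicted classes are hypothetical; the 50% cutoff mirrors one of the cutoffs in Table 3, and the code assumes both classes are present in the observed data.

```python
import math
from typing import Dict, List, Sequence


def binarize(f_percent: Sequence[float], cutoff: float) -> List[int]:
    """Label a compound 1 (high) if F% >= cutoff, otherwise 0 (low)."""
    return [1 if f >= cutoff else 0 for f in f_percent]


def classification_metrics(observed: Sequence[int],
                           predicted: Sequence[int]) -> Dict[str, float]:
    """Accuracy, sensitivity, specificity and MCC for binary labels."""
    pairs = list(zip(observed, predicted))
    tp = sum(1 for o, p in pairs if o == 1 and p == 1)
    tn = sum(1 for o, p in pairs if o == 0 and p == 0)
    fp = sum(1 for o, p in pairs if o == 0 and p == 1)
    fn = sum(1 for o, p in pairs if o == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),   # true positive rate
        "specificity": tn / (tn + fp),   # true negative rate
        "mcc": (tp * tn - fp * fn) / denom if denom else 0.0,
    }


# Hypothetical test-set data, for illustration only.
observed_f = [80.0, 60.0, 10.0, 30.0, 55.0, 5.0]
observed_class = binarize(observed_f, cutoff=50.0)
predicted_class = [1, 0, 0, 1, 1, 0]

metrics = classification_metrics(observed_class, predicted_class)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

MCC is worth reporting alongside accuracy when the high and low classes are unbalanced, since accuracy alone can look favorable for a model that mostly predicts the majority class.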

Diagram: Computational workflow for the oral bioavailability prediction protocol.

Protocol for Pediatric Bioavailability Prediction Using Allometric Scaling

Purpose: To predict absolute bioavailability in pediatric populations when only adult data are available.

Materials:

- Adult pharmacokinetic data (systemic and oral clearance values)

- Population data (body weights for different age groups)

- Statistical software for calculations

Procedure:

- Predict Pediatric Clearance:

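- Apply the Age-Dependent Exponent (ADE) allometric model using the equation:

CLchild = CLadult × (BWchild / 70)^b

where CLchild and CLadult are the pediatric and adult clearances, BWchild is the child's body weight in kg, 70 is the reference adult body weight in kg, and b is the age-dependent exponent.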

- Use age-dependent exponents (b): 1.2 (preterm neonates ≤3 months), 1.1 (term neonates ≤3 months), 1.0 (>3 months to 2 years), 0.9 (>2-5 years), 0.75 (>5 years) [19].

- Predict Absolute Bioavailability:

- Calculate predicted absolute bioavailability (F) as the ratio of predicted systemic clearance (CLiv) to predicted oral clearance (CLoral):

F = CLiv / CLoral

- Validation:

- Compare predicted bioavailability with observed values when available.

- Acceptable prediction error is typically defined as within 0.5-1.5 fold (≤50% error) [19].
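The pediatric protocol can be sketched as follows, assuming the commonly cited ADE form CLchild = CLadult × (BWchild / 70)^b with the exponents listed above and F = CLiv / CLoral. The adult clearance values, child weight, and observed F are hypothetical, and the 70 kg reference weight is an assumption of this sketch.

```python
def ade_exponent(age_months: float, preterm: bool = False) -> float:
    """Age-dependent allometric exponent b, using the age bands listed above."""
    if age_months <= 3:
        return 1.2 if preterm else 1.1
    if age_months <= 24:
        return 1.0
    if age_months <= 60:
        return 0.9
    return 0.75


def predict_pediatric_clearance(adult_cl: float, child_weight_kg: float,
                                age_months: float, preterm: bool = False,
                                adult_weight_kg: float = 70.0) -> float:
    """Scale an adult clearance to a child (assumed 70 kg reference adult)."""
    b = ade_exponent(age_months, preterm)
    return adult_cl * (child_weight_kg / adult_weight_kg) ** b


def predict_bioavailability(cl_iv: float, cl_oral: float) -> float:
    """Absolute bioavailability as the ratio of systemic to oral clearance."""
    return cl_iv / cl_oral


def within_fold(predicted: float, observed: float,
                low: float = 0.5, high: float = 1.5) -> bool:
    """True if predicted/observed lies inside the acceptance window."""
    return low <= predicted / observed <= high


# Hypothetical adult values (L/h) and a 12-month-old child weighing 10 kg.
adult_cl_iv, adult_cl_oral = 20.0, 40.0
cl_iv_child = predict_pediatric_clearance(adult_cl_iv, 10.0, age_months=12)
cl_oral_child = predict_pediatric_clearance(adult_cl_oral, 10.0, age_months=12)
f_child = predict_bioavailability(cl_iv_child, cl_oral_child)

print(round(cl_iv_child, 3), round(cl_oral_child, 3), round(f_child, 3))
print(within_fold(f_child, observed=0.6))
```

Note that when both clearances are scaled with the same body weight and exponent, as in this sketch, the predicted ratio reduces to the adult CLiv / CLoral.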

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for Bioavailability Studies

| Reagent/Material | Function/Application | Example Use Cases |

|---|---|---|

| Stable Isotopes | Safe tracers for studying mineral and vitamin absorption in humans without radioactivity. | Quantifying fractional absorption of iron, zinc, calcium using isotopic enrichment measurements in blood or urine [4]. |

| Caco-2 Cell Line | Human colon adenocarcinoma cell line that differentiates into enterocyte-like cells, forming a polarized monolayer. | In vitro model for predicting intestinal permeability and absorption of nutrients and drugs [21]. |

| Molecular Descriptor Software (e.g., Dragon, Mordred) | Computes theoretical molecular descriptors from chemical structure. | Generating 1,143+ 2D descriptors (constitutional, topological, etc.) for QSAR modeling of oral bioavailability [22]. |

| P-glycoprotein (P-gp) Assays | Assess interaction with key efflux transporter that limits intestinal absorption. | Determining substrate affinity for P-gp to predict potential bioavailability limitations due to active efflux [20]. |

| Cytochrome P450 Assay Kits | Evaluate metabolism by major drug/nutrient metabolizing enzymes (e.g., CYP3A4, CYP2D6). | Estimating first-pass metabolism potential, a critical factor determining oral bioavailability [20]. |

| Simulated Gastrointestinal Fluids | Standardized media mimicking gastric and intestinal conditions for in vitro digestion models. | Studying nutrient release from food matrices and stability during digestion in a controlled, reproducible system [17]. |

The accurate prediction of nutrient and bioactive compound bioavailability requires a multidisciplinary approach integrating food science, physiology, and computational modeling. The framework and protocols outlined in this document provide a structured pathway for developing robust predictive equations. Key success factors include the use of high-quality human data, consideration of critical modifying factors, application of appropriate computational methods, and rigorous validation. As research in this field advances, these methodologies will contribute significantly to the development of evidence-based dietary recommendations and more efficient drug development processes.

Current Limitations in Bioavailability Assessment and Data Gaps

Bioavailability, defined as the fraction of an administered dose that reaches systemic circulation unaltered, serves as a critical determinant of a drug's therapeutic efficacy and commercial viability [1]. Despite significant advancements in life sciences, accurate assessment of bioavailability remains challenging due to a complex interplay of physicochemical, biological, and technological factors [23] [24]. This application note examines the current limitations and data gaps in bioavailability assessment within the context of developing predictive bioavailability equations, providing researchers with structured experimental protocols to address these challenges.

Key Limitations in Bioavailability Assessment

Methodological and Technical Limitations

Table 1: Methodological Limitations in Bioavailability Assessment

| Limitation Category | Specific Challenge | Impact on Bioavailability Assessment |

|---|---|---|

| Traditional Model Systems | High cost and methodological rigidity of in vivo trials and in vitro digestion models [25] | Inability to fully simulate the physiological environment; limited predictive accuracy for human outcomes |

| Computational Gaps | Limited mechanistic interpretability of "black box" AI algorithms [25] | Hinders regulatory approval and scientific validation of predictive models |

| Data Quality Issues | Absence of high-quality standardized datasets representing biological complexity [25] | Leads to model overfitting and bias; reduces predictive reliability |

| Analytical Simplifications | Assumption of constant drug clearance and uniform distribution in AUC calculations [1] | Generates unreliable data when physiological conditions vary |

Biological Complexity and Variability

The journey of an active pharmaceutical ingredient from administration to target site involves navigating complex biological barriers that introduce significant variability in bioavailability assessment.

Biological factors such as genetic polymorphisms of intestinal transporters (e.g., P-glycoprotein), hepatic cytochrome P450 enzyme variations, and disease states that alter gastrointestinal physiology significantly impact bioavailability but are difficult to standardize in assessment models [1]. Additionally, the gut microbiota, food effects, and concurrent medications introduce further variability that is not fully captured in conventional study designs [1] [25].

Technological and Regulatory Gaps

The 2023 FDA Pilot Program for the Review of Innovation and Modernization of Excipients (PRIME) highlights regulatory recognition of bioavailability challenges, particularly for novel excipients [23]. However, a 2020 USP survey revealed that 84% of drug formulators reported limitations imposed by currently approved excipients, with 28% experiencing drug development discontinuation due to these limitations [23]. This underscores a critical technological gap in formulation tools for bioavailability enhancement.

Experimental Protocols for Addressing Data Gaps

Protocol for High-Throughput Formulation Screening

Objective: Systematically identify optimal formulation candidates for bioavailability enhancement of poorly soluble compounds.

Materials:

- Test Compound: API with known solubility limitations

- Polymer Library: Diverse set of pharmaceutical polymers (e.g., HPMC, PVP, PEG)

- Solubilizers: Surfactants and lipid-based excipients

- Equipment: Automated liquid handling system, dissolution apparatus, HPLC-MS system

Procedure:

- Formulation Design: Create a design of experiments (DoE) matrix varying polymer type, polymer:API ratio, and solubilizer concentration.

- Library Preparation: Utilize automated systems to prepare formulation libraries in 96-well plate format. Include control formulations for baseline comparison.

- Dissolution Testing: Conduct miniaturized dissolution studies using USP apparatus II adaptations with physiologically relevant media (FaSSGF, FaSSIF, FeSSIF).

- Analytical Characterization: Sample at predetermined time points (5, 10, 15, 30, 45, 60, 90, 120 min) and analyze drug concentration using HPLC-MS with validated methods.

- Stability Assessment: Subject lead formulations to accelerated stability conditions (40°C/75% RH) for 4 weeks with weekly sampling.

- Data Integration: Calculate key parameters including dissolution efficiency (DE), mean dissolution time (MDT), and supersaturation maintenance.

Data Analysis: Apply multivariate analysis to identify critical formulation factors influencing dissolution performance. Select lead formulations based on a combined evaluation of dissolution rate, extent, and stability.
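Two of the dissolution parameters named in the data integration step can be computed directly from a sampled profile. The sketch below assumes the usual trapezoidal definitions: dissolution efficiency (DE) as the area under the percent-dissolved curve relative to the rectangle for 100% dissolution over the same period, and mean dissolution time (MDT) as the amount-weighted average of interval midpoints. The profile values are hypothetical; the sampling times follow the protocol.

```python
from typing import List, Sequence, Tuple


def _with_origin(times: Sequence[float],
                 pct: Sequence[float]) -> List[Tuple[float, float]]:
    """Pair up the profile and prepend (0, 0) if the first sample is later."""
    points = list(zip(times, pct))
    if points[0][0] != 0:
        points.insert(0, (0.0, 0.0))
    return points


def dissolution_efficiency(times_min: Sequence[float],
                           pct_dissolved: Sequence[float]) -> float:
    """DE (%) = trapezoidal AUC of the profile / (100% x total time) x 100."""
    pts = _with_origin(times_min, pct_dissolved)
    auc = sum((t1 - t0) * (y0 + y1) / 2
              for (t0, y0), (t1, y1) in zip(pts, pts[1:]))
    return 100.0 * auc / (100.0 * pts[-1][0])


def mean_dissolution_time(times_min: Sequence[float],
                          pct_dissolved: Sequence[float]) -> float:
    """MDT = sum(midpoint time x increment dissolved) / sum(increments)."""
    pts = _with_origin(times_min, pct_dissolved)
    weighted = sum(((t0 + t1) / 2) * (y1 - y0)
                   for (t0, y0), (t1, y1) in zip(pts, pts[1:]))
    total = sum(y1 - y0 for (_, y0), (_, y1) in zip(pts, pts[1:]))
    return weighted / total


# Sampling times from the protocol; percent dissolved is hypothetical.
times = [5, 10, 15, 30, 45, 60, 90, 120]
profile = [12.0, 25.0, 38.0, 60.0, 74.0, 83.0, 92.0, 96.0]

print("DE (%):", round(dissolution_efficiency(times, profile), 1))
print("MDT (min):", round(mean_dissolution_time(times, profile), 1))
```

Both values depend on the sampling window, so formulations should be compared over the same time span.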

Protocol for Assessing Transporter-Mediated Absorption

Objective: Characterize the role of specific intestinal transporters in API absorption and identify potential drug-drug interactions.

Materials:

- Cell Models: Caco-2, MDCK, or transfected cell lines overexpressing specific transporters

- Test Compound: API with suspected transporter involvement

- Reference Compounds: Known substrates and inhibitors of relevant transporters

- Buffers: Transport buffers (pH 6.0-7.4) with and without inhibitors

Procedure:

- Cell Culture and Validation: Maintain cell monolayers on permeable supports. Validate monolayer integrity by measuring transepithelial electrical resistance (TEER) and lucifer yellow permeability.

- Bidirectional Transport Studies:

- A-to-B Direction: Apply test compound to apical chamber, sample from basolateral chamber

- B-to-A Direction: Apply test compound to basolateral chamber, sample from apical chamber

- Include control compounds with known transport characteristics

- Inhibition Studies: Repeat transport studies in presence of specific transporter inhibitors (e.g., verapamil for P-gp, Ko143 for BCRP)

- Concentration-Dependent Studies: Assess transport across a range of concentrations (typically 1-100 μM) to determine kinetic parameters

- Sample Analysis: Quantify compound concentrations using LC-MS/MS with stable isotope-labeled internal standards

Data Analysis: Calculate apparent permeability (Papp), efflux ratio, and determine kinetic parameters (Km, Vmax) for saturable processes. Significant reduction in efflux ratio with specific inhibitors indicates transporter involvement.
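A minimal sketch of the permeability calculations follows, assuming the standard relationship Papp = (dQ/dt) / (A × C0), where dQ/dt is the steady-state appearance rate in the receiver chamber, A is the monolayer area, and C0 is the initial donor concentration. The efflux ratio is Papp(B-to-A) divided by Papp(A-to-B). All numeric inputs are hypothetical.

```python
from typing import Sequence


def slope(x: Sequence[float], y: Sequence[float]) -> float:
    """Least-squares slope of y against x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = sum((a - mx) ** 2 for a in x)
    return num / den


def apparent_permeability(times_s: Sequence[float],
                          cumulative_nmol: Sequence[float],
                          area_cm2: float, c0_um: float) -> float:
    """Papp in cm/s. 1 uM equals 1 nmol/cm^3, so the units reduce to cm/s."""
    flux_nmol_per_s = slope(times_s, cumulative_nmol)
    return flux_nmol_per_s / (area_cm2 * c0_um)


def efflux_ratio(papp_b_to_a: float, papp_a_to_b: float) -> float:
    """Ratio of basolateral-to-apical over apical-to-basolateral Papp."""
    return papp_b_to_a / papp_a_to_b


# Hypothetical receiver-chamber amounts (nmol) at 0, 30 and 60 minutes.
times_s = [0.0, 1800.0, 3600.0]
a_to_b_nmol = [0.0, 0.9, 1.8]
b_to_a_nmol = [0.0, 2.7, 5.4]

papp_ab = apparent_permeability(times_s, a_to_b_nmol, area_cm2=1.0, c0_um=10.0)
papp_ba = apparent_permeability(times_s, b_to_a_nmol, area_cm2=1.0, c0_um=10.0)
print(f"Papp A-to-B: {papp_ab:.2e} cm/s")
print(f"Papp B-to-A: {papp_ba:.2e} cm/s")
print(f"Efflux ratio: {efflux_ratio(papp_ba, papp_ab):.2f}")
```

Running the same calculation with and without an inhibitor gives the change in efflux ratio that the data analysis step uses as evidence of transporter involvement.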

Protocol for In Vitro - In Vivo Correlation (IVIVC) Development

Objective: Establish predictive relationships between in vitro dissolution and in vivo bioavailability to support biowaivers and formulation development.

Materials:

- Formulations: Multiple formulations with different release rates (slow, medium, fast)

- In Vitro Data: Dissolution profiles in physiologically relevant media

- In Vivo Data: Plasma concentration-time profiles from human or animal studies

Procedure:

- In Vitro Dissolution: Characterize dissolution profiles of all formulations using appropriate apparatus and media simulating gastrointestinal conditions.

- In Vivo Pharmacokinetics: Obtain plasma concentration-time data for each formulation from clinical or preclinical studies with crossover design.

- Data Preprocessing: Obtain the fraction dissolved in vitro directly from the dissolution profiles, and calculate the fraction absorbed in vivo from the plasma concentration-time data using the Wagner-Nelson or Loo-Riegelman method.

- Model Development:

- Level A: Point-to-point correlation between fraction dissolved and fraction absorbed

- Level B: Correlation based on statistical moments (mean dissolution time vs mean residence time)

- Level C: Single point correlation (e.g., dissolution efficiency vs AUC)

- Model Validation: Evaluate predictive performance using internal validation (cross-validation) and external validation (with additional formulations).

Data Analysis: Develop linear or non-linear regression models correlating in vitro and in vivo parameters. Establish acceptance criteria for prediction errors (≤10% for Cmax and AUC) to demonstrate predictive capability.
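The deconvolution and acceptance-check steps can be illustrated with the Wagner-Nelson method, assuming one-compartment kinetics with a known elimination rate constant ke: fraction absorbed at time t = (C(t) + ke × AUC0-t) / (ke × AUC0-∞). The plasma concentrations, ke, and the observed and predicted AUC values are hypothetical.

```python
from typing import List, Sequence


def cumulative_auc(times: Sequence[float], conc: Sequence[float]) -> List[float]:
    """Cumulative trapezoidal AUC at each sampling time, starting at 0."""
    auc = [0.0]
    for i in range(1, len(times)):
        auc.append(auc[-1] + (times[i] - times[i - 1]) * (conc[i] + conc[i - 1]) / 2)
    return auc


def wagner_nelson(times: Sequence[float], conc: Sequence[float],
                  ke: float) -> List[float]:
    """Fraction absorbed at each time point (one-compartment assumption)."""
    auc = cumulative_auc(times, conc)
    auc_inf = auc[-1] + conc[-1] / ke  # extrapolate the tail beyond the last sample
    return [(c + ke * a) / (ke * auc_inf) for c, a in zip(conc, auc)]


def percent_prediction_error(observed: float, predicted: float) -> float:
    """Absolute prediction error as a percentage of the observed value."""
    return abs(observed - predicted) / observed * 100.0


# Hypothetical plasma profile: time (h), concentration (mg/L), ke (1/h).
times_h = [0.0, 1.0, 2.0, 4.0, 8.0]
conc = [0.0, 4.0, 3.0, 1.2, 0.1]
fraction_absorbed = wagner_nelson(times_h, conc, ke=0.5)
print([round(f, 3) for f in fraction_absorbed])

# Hypothetical observed vs IVIVC-predicted AUC for one formulation.
pe_auc = percent_prediction_error(observed=100.0, predicted=92.0)
print(f"AUC prediction error: {pe_auc:.1f}% (criterion: <= 10%)")
```

For a Level A correlation, the fraction-absorbed values would be paired time point by time point with the in vitro fraction dissolved and a regression fitted between them.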

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Bioavailability Studies

| Reagent/Material | Function in Bioavailability Assessment | Application Examples |

|---|---|---|

| Amorphous Solid Dispersions (ASDs) | Enhance solubility and dissolution rate of poorly soluble compounds [23] | Formulation platform for BCS Class II and IV compounds [23] |

| Novel Excipients | Overcome limitations of traditional excipients in drug development [23] | Amphiphilic polymers for nanoparticle drug delivery [23] |

| PBPK Modeling Software | Mechanistic modeling of drug disposition incorporating physiology and drug properties [26] | Prediction of first-pass metabolism, food effects, and drug-drug interactions [26] |

| Caco-2 Cell Lines | In vitro model of intestinal permeability and transporter effects [24] | Assessment of passive and active transport mechanisms [24] |

| Biorelevant Media | Simulate gastrointestinal fluids for dissolution testing [24] | FaSSGF, FaSSIF, FeSSIF for predicting in vivo performance [24] |

| Stable Isotope Labels | Track drug absorption and metabolism without interference from endogenous compounds [4] | Human studies to quantify nutrient and drug bioavailability [4] |

The assessment of bioavailability remains constrained by methodological limitations, biological complexities, and technological gaps. The protocols and tools outlined in this application note provide a systematic approach to addressing critical data gaps, particularly in the realms of formulation screening, transporter interactions, and IVIVC development. As the field evolves, integrating artificial intelligence with high-quality experimental data presents a promising path toward more predictive bioavailability equations that can accelerate drug development and improve therapeutic outcomes [25].

Methodological Approaches: From Traditional Regression to Advanced Machine Learning

Multivariate Linear Regression for Nutritional Biomarker Prediction

The development of robust predictive equations is paramount for advancing the understanding of nutrient bioavailability, moving beyond the simplistic measurement of total nutrient content in foods to accurately estimating the fraction that is absorbed and utilized by the body [6] [4]. This shift is critical for refining nutrient intake recommendations, nutritional assessments, and food labeling practices. Within this context, multivariate linear regression serves as a foundational statistical methodology for modeling the relationship between multiple nutritional biomarkers and clinically relevant outcomes, such as bioactive compound absorption or disease risk. The core objective is to construct predictive models that can quantify these complex relationships, thereby enabling more precise and personalized dietary interventions and health management strategies [27] [28].

The application of these models extends into various domains of nutritional science. For instance, machine learning-based frameworks have been successfully implemented to predict Metabolic Syndrome (MetS) using serum liver function tests and high-sensitivity C-reactive protein (hs-CRP), demonstrating the power of combining multiple biomarkers for enhanced predictive accuracy [28]. Similarly, latent variable approaches like the multiMarker framework have been developed to model the relationship between food intake and multiple metabolomic biomarkers, providing a tool for objective food intake assessment that accounts for prediction uncertainty [29]. These approaches highlight the evolution from single-biomarker models to more sophisticated multi-biomarker strategies that offer greater specificity and sensitivity.

Core Predictive Equation Development Framework

A structured, multi-step framework is essential for developing valid and reliable predictive equations for nutrient bioavailability. The following table summarizes a standardized four-step process adapted for nutritional biomarker prediction [6] [4]:

Table 1: Framework for Developing Predictive Equations for Nutrient Bioavailability

| Step | Description | Key Activities | Application to Nutritional Biomarkers |
|---|---|---|---|
| 1. Identify Key Factors | Determine which factors influence the bioavailability of the nutrient or bioactive compound. | Systematic review of physiological mechanisms, food matrix effects, and host factors. | Identify relevant nutritional biomarkers (e.g., liver enzymes, inflammatory markers) and confounding variables (e.g., age, sex). |
| 2. Comprehensive Literature Review | Gather data from high-quality human studies to inform equation development. | Critically appraise intervention studies that measure biomarker responses to controlled nutrient intakes. | Collect data on biomarker levels across different intake levels and population subgroups to establish dose-response relationships. |
| 3. Construct Predictive Equations | Build the multivariate regression model using the identified biomarkers and factors. | Apply statistical modeling techniques, including variable selection and parameter estimation. | Develop an equation where a health outcome or nutrient status is predicted by a linear combination of multiple biomarker values. |
| 4. Validate the Equation | Assess the model's performance and generalizability to new populations. | Internal validation (e.g., cross-validation) and external validation in an independent cohort. | Quantify predictive performance using metrics like R², correlation coefficients, and error rates, and refine the model as needed. |

This framework ensures a systematic approach from conceptualization to validation. The construction phase (Step 3) often employs advanced regression techniques. For example, penalized regression methods like LASSO (Least Absolute Shrinkage and Selection Operator) and Elastic Net are particularly valuable for identifying the most relevant biomarkers from a larger set of potential predictors, thereby building a more parsimonious and interpretable model [27]. These methods reduce overfitting by penalizing the magnitude of the regression coefficients; the L1 component of the penalty can shrink the coefficients of uninformative predictors exactly to zero, effectively performing variable selection.
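
One common way to write the penalty described above is the elastic net objective below. This is the standard textbook parameterization, not a formula taken from [27]; the symbols $y_i$ (outcome for individual $i$), $x_{ij}$ (value of biomarker $j$ for individual $i$), $\lambda$ (overall penalty strength), and $\alpha$ (mixing weight between the L1 and L2 terms) are notation introduced here for illustration.

$$
\hat{\beta} = \arg\min_{\beta_0,\,\beta}\; \frac{1}{2n}\sum_{i=1}^{n}\Bigl(y_i - \beta_0 - \sum_{j=1}^{P}\beta_j x_{ij}\Bigr)^2 + \lambda\Bigl(\alpha\,\lVert\beta\rVert_1 + \frac{1-\alpha}{2}\,\lVert\beta\rVert_2^2\Bigr)
$$

Setting $\alpha = 1$ gives LASSO and $\alpha = 0$ gives ridge regression; intermediate values give the Elastic Net. Only the L1 term can set coefficients exactly to zero.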

Validation (Step 4) is a critical final step. Techniques such as Monte Carlo cross-validation can be used to enhance the reliability of the feature selection process and provide a robust estimate of how the model will perform on unseen data [27]. In practice, a well-constructed model using top biomarker predictors can achieve high correlation between predicted and observed outcomes (e.g., correlations above 0.92) and significantly reduce prediction uncertainty [27].
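
Monte Carlo cross-validation of a feature selection step can be sketched as follows. This is a minimal illustration on synthetic data, not the procedure of [27]: the data, the fixed penalty `alpha=0.1`, the 50 splits, and the 25% test fraction are all assumptions chosen for the example.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.model_selection import ShuffleSplit

rng = np.random.default_rng(0)

# Synthetic stand-in data: 120 participants, 10 candidate biomarkers,
# of which only the first three actually drive the outcome.
n, p = 120, 10
X = rng.normal(size=(n, p))
y = 2.0 * X[:, 0] - 1.5 * X[:, 1] + 1.0 * X[:, 2] + rng.normal(scale=0.5, size=n)

# Monte Carlo cross-validation: many independent random train/test splits.
splitter = ShuffleSplit(n_splits=50, test_size=0.25, random_state=0)
selected = np.zeros(p)
correlations = []
for train_idx, test_idx in splitter.split(X):
    model = Lasso(alpha=0.1).fit(X[train_idx], y[train_idx])
    # Count how often each biomarker keeps a non-zero coefficient.
    selected += model.coef_ != 0
    # Correlation between predicted and observed outcomes on held-out data.
    pred = model.predict(X[test_idx])
    correlations.append(np.corrcoef(pred, y[test_idx])[0, 1])

selection_frequency = selected / splitter.get_n_splits()
mean_correlation = float(np.mean(correlations))
```

Biomarkers with a selection frequency close to 1 are chosen consistently across resamples, which is one simple way to judge how stable a selected biomarker panel is.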

Detailed Experimental Protocol for Model Development

Data Collection and Preprocessing

Begin by assembling a dataset from a controlled intervention study or a well-characterized cohort. The dataset should include precise measurements of food intake or nutrient administration and corresponding biomarker measurements from blood, urine, or other relevant biofluids [29]. The unit of measure for biomarkers should be consistent and documented. Key preprocessing steps include:

- Data Cleaning: Address missing values through appropriate imputation methods or exclusion criteria.

- Data Transformation: Apply transformations (e.g., log-transformation) to normalize skewed distributions of biomarker levels.

- Data Structuring: Format data into a matrix where each row represents an observation (e.g., a study participant) and columns represent the administered food quantity and the P biomarker measurements [29].
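
The three preprocessing steps above can be sketched with NumPy as follows. The values are invented for illustration, and median imputation and a natural-log transform are only one reasonable choice among several; the source does not prescribe a specific method.

```python
import numpy as np

# Hypothetical raw data: 5 participants, food intake in grams,
# and P = 3 biomarker measurements with two missing values.
intake = np.array([0.0, 50.0, 100.0, 150.0, 200.0])
biomarkers = np.array([
    [1.2, 0.8, 10.0],
    [2.5, np.nan, 22.0],
    [4.9, 1.9, 41.0],
    [9.8, 2.7, np.nan],
    [20.1, 4.1, 160.0],
])

# Data cleaning: replace each missing value with its column median
# (an assumption; exclusion or model-based imputation are alternatives).
col_median = np.nanmedian(biomarkers, axis=0)
imputed = np.where(np.isnan(biomarkers), col_median, biomarkers)

# Data transformation: natural log to reduce right skew.
# This requires strictly positive biomarker values.
log_biomarkers = np.log(imputed)

# Data structuring: one row per participant; the first column is the
# administered food quantity, followed by the P biomarker columns.
data = np.column_stack([intake, log_biomarkers])
```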

Model Fitting and Variable Selection

Using the preprocessed dataset, fit a multivariate linear regression model. The model can be represented as:

$$
\text{Outcome}_i = \beta_0 + \beta_1\,\text{Biomarker}_{1i} + \beta_2\,\text{Biomarker}_{2i} + \dots + \beta_P\,\text{Biomarker}_{Pi} + \varepsilon_i
$$

where $\text{Outcome}_i$ is the health outcome or nutrient status for individual $i$, $\beta_0$ is the intercept, $\beta_1$ to $\beta_P$ are the regression coefficients for the $P$ biomarkers, and $\varepsilon_i$ is the error term.
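
As a minimal sketch of fitting this model, the example below estimates the intercept and coefficients by ordinary least squares with NumPy. The data are simulated, and the "true" coefficient values are assumptions made only so the estimates can be checked against something known.

```python
import numpy as np

rng = np.random.default_rng(42)

# Simulated data: n individuals, P = 3 biomarkers.
n, P = 200, 3
biomarkers = rng.normal(size=(n, P))
true_beta = np.array([1.0, 0.5, -2.0, 0.0])  # [intercept, beta_1, beta_2, beta_3]
outcome = true_beta[0] + biomarkers @ true_beta[1:] + rng.normal(scale=0.1, size=n)

# Design matrix with a leading column of ones for the intercept.
design = np.column_stack([np.ones(n), biomarkers])

# Ordinary least squares estimate of all coefficients.
beta_hat, *_ = np.linalg.lstsq(design, outcome, rcond=None)

# Goodness of fit on the training data.
fitted = design @ beta_hat
residuals = outcome - fitted
r_squared = 1.0 - residuals.var() / outcome.var()
```

The entries of `beta_hat` follow the same order as the terms in the equation: intercept first, then one coefficient per biomarker.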

For high-dimensional data (many potential biomarkers), implement a penalized regression approach:

- LASSO Regression: Applies an L1 penalty that tends to force some coefficients to exactly zero, thus performing feature selection.

- Elastic Net Regression: Combines L1 and L2 penalties, which is particularly useful when biomarkers are highly correlated [27].

The coefficients are estimated by minimizing a penalized loss (equivalently, maximizing a penalized likelihood), and the penalty parameters are tuned by cross-validation so that the chosen penalty strength is the one that predicts held-out data best.
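
The sketch below shows one way to tune the penalty by cross-validation, using scikit-learn's `LassoCV` and `ElasticNetCV`. The synthetic data (including a deliberately correlated pair of biomarkers), the 5-fold setting, and the candidate `l1_ratio` values are assumptions for illustration, not settings from [27].

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV, LassoCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)

# Synthetic data: 150 participants, 20 candidate biomarkers.
n, p = 150, 20
X = rng.normal(size=(n, p))
# Make biomarker 1 a near-copy of biomarker 0 to mimic correlated markers.
X[:, 1] = X[:, 0] + rng.normal(scale=0.05, size=n)
y = 1.5 * X[:, 0] + 1.5 * X[:, 1] - 2.0 * X[:, 2] + rng.normal(scale=0.5, size=n)

# Standardize first so the penalty treats all biomarkers on the same scale.
lasso = make_pipeline(StandardScaler(), LassoCV(cv=5, random_state=0)).fit(X, y)
enet = make_pipeline(
    StandardScaler(),
    ElasticNetCV(l1_ratio=[0.2, 0.5, 0.8], cv=5, random_state=0),
).fit(X, y)

# Coefficients on the standardized scale, plus the tuned penalty settings.
lasso_coef = lasso.named_steps["lassocv"].coef_
enet_coef = enet.named_steps["elasticnetcv"].coef_
best_lasso_alpha = lasso.named_steps["lassocv"].alpha_
best_l1_ratio = enet.named_steps["elasticnetcv"].l1_ratio_
```

With strongly correlated predictors, LASSO tends to pick one of the pair somewhat arbitrarily, whereas the L2 component of the Elastic Net tends to spread weight across both, which is why the latter is often preferred for correlated biomarkers.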

Model Validation and Intake Prediction

Once the model is fitted and the parameters are estimated, it must be validated.

- Internal Validation: Use k-fold cross-validation or hold-out validation within the original dataset to assess performance metrics like R-squared, mean squared error, and the correlation between predicted and observed values [27] [28].

- External Validation: If possible, test the model on a completely independent dataset to evaluate its generalizability.
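
Internal validation by k-fold cross-validation can be sketched as follows. The data are simulated and the choice of 5 folds is an assumption; the point is that every performance metric is computed from predictions made on data the model did not see during fitting.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import KFold, cross_val_predict

rng = np.random.default_rng(7)

# Simulated data: 100 participants, 4 biomarkers.
X = rng.normal(size=(100, 4))
y = X @ np.array([1.0, -0.5, 0.0, 2.0]) + rng.normal(scale=0.3, size=100)

# Each observation is predicted by a model fitted on the other four folds.
cv = KFold(n_splits=5, shuffle=True, random_state=0)
y_pred = cross_val_predict(LinearRegression(), X, y, cv=cv)

# Out-of-fold performance metrics.
cv_r2 = r2_score(y, y_pred)
cv_mse = mean_squared_error(y, y_pred)
cv_corr = np.corrcoef(y, y_pred)[0, 1]
```

For external validation, the same three metrics would be computed after applying the final fitted model to the independent dataset.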

For predicting food intake or nutrient status from new biomarker data alone, the estimated model coefficients are applied to the new biomarker measurements. Software tools like the multiMarker R package can be used to generate predictions along with their associated uncertainty, often expressed as credible intervals [29].

Workflow Visualization

[Figure: Logical workflow for developing and applying a multivariate linear regression model for nutritional biomarker prediction.]

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, software, and methodological approaches essential for research in this field.

Table 2: Essential Research Reagents and Solutions for Nutritional Biomarker Prediction

| Category | Item / Technique | Specification / Function |
|---|---|---|
| Biomarker Assays | High-sensitivity C-reactive Protein (hs-CRP) | Quantifies systemic inflammation, a key predictor in metabolic syndrome models [28]. |
| Biomarker Assays | Liver Function Tests (ALT, AST, Bilirubin) | Enzymes and metabolites indicating liver health, correlated with metabolic dysregulation [28]. |
| Statistical Software | R Statistical Environment | Primary platform for data analysis, model fitting, and visualization. |
| Statistical Software | multiMarker R Package | Specialized package for modeling food intake from multiple biomarkers using a Bayesian latent variable approach [29]. |
| Statistical Software | ordinalNet, truncnorm | Supporting R packages for ordinal regression and truncated normal distributions, used by multiMarker [29]. |
| Modeling Algorithms | Penalized Regression (LASSO, Elastic Net) | Advanced regression methods for feature selection and managing multicollinearity among biomarkers [27]. |
| Modeling Algorithms | Gradient Boosting (GB) | Machine learning algorithm known for high predictive accuracy in complex biological datasets [28]. |
| Computational Methods | Monte Carlo Cross-Validation | Technique to assess model stability and the reliability of selected biomarkers [27]. |
| Computational Methods | SHAP (SHapley Additive exPlanations) | Framework for interpreting complex machine learning models and identifying influential predictors [28]. |

The integration of multivariate linear regression and related machine learning techniques with carefully selected nutritional biomarkers provides a powerful approach for predicting nutrient bioavailability and associated health outcomes. By adhering to a structured development framework that emphasizes rigorous validation and uncertainty quantification, researchers can create robust tools that advance the field of precision nutrition. These models hold significant promise for improving dietary assessment, informing public health policy, and ultimately guiding personalized nutritional interventions.

QSAR Models for Predicting Oral Bioavailability and Volume of Distribution

In developing predictive bioavailability equations, the in silico estimation of toxicokinetic (TK) properties is a critical pillar. TK profiles provide essential information on the fate of chemicals in the human body, namely absorption, distribution, metabolism, and excretion (ADME) [12]. In the context of drug discovery and chemical risk assessment, Quantitative Structure-Activity Relationship (QSAR) models are indispensable computational tools for predicting key TK parameters, thereby reducing reliance on costly and time-consuming in vivo experiments [30] [12]. This section details the application of newly developed QSAR models for two fundamental TK properties: oral bioavailability (F%) and volume of distribution at steady state (VDss). The focus is placed on their practical application, particularly for mapping the TK space of potential endocrine-disrupting chemicals (EDCs), which can pose significant risks to human health [30].

Data Presentation and Model Performance

The development of robust QSAR models relies on large, curated datasets. The following tables summarize the core data and performance metrics for the oral bioavailability and VDss models.

Table 1: Summary of Datasets for Model Development

| TK Endpoint | Dataset Purpose | Number of Chemicals | Key Data Characteristics |
|---|---|---|---|
| Oral Bioavailability (F%) | Regression Models | 1,712 | Values span 0% to 100%; peaks at 0% and 100% [12] |
| Oral Bioavailability (F%) | Classification Models (>50% threshold) | 1,307 | Binary classification based on a 50% bioavailability threshold [12] |
| Oral Bioavailability (F%) | Multiclass Models | 1,244 | Utilized 30-60% thresholds for intermediate classes [12] |
| Volume of Distribution (VDss) | Regression & Multiclass Models | 1,591 | Range: 0.035 L·kg⁻¹ to 700 L·kg⁻¹; values log-transformed (ln) for modeling [12] |

Table 2: Predictive Performance of Top Models

| TK Endpoint | Model Type | Best Algorithm | Performance Metric & Value |
|---|---|---|---|
| Oral Bioavailability | Regression | R-CatBoost | Q2F3 = 0.34 [30] [12] |
| Volume of Distribution (VDss) | Regression | R-Random Forest (R-RF) | GMFE = 2.35 [30] [12] |
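
For readers unfamiliar with the two metrics in the table, the sketch below implements their commonly used definitions: Q2F3 compares the mean squared prediction error on an external set with the variance of the training set, and GMFE (geometric mean fold error) is the average fold deviation between predicted and observed values. The function names and the toy numbers are assumptions for illustration, and the cited work may differ in implementation details.

```python
import numpy as np

def q2_f3(y_train, y_ext, y_ext_pred):
    """External predictivity: 1 - (mean squared external error / training variance)."""
    y_train, y_ext, y_ext_pred = (np.asarray(a, dtype=float)
                                  for a in (y_train, y_ext, y_ext_pred))
    mse_ext = np.mean((y_ext - y_ext_pred) ** 2)
    var_train = np.mean((y_train - y_train.mean()) ** 2)
    return 1.0 - mse_ext / var_train

def gmfe(observed, predicted):
    """Geometric mean fold error: 10 ** mean(|log10(predicted / observed)|)."""
    observed = np.asarray(observed, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return 10 ** np.mean(np.abs(np.log10(predicted / observed)))

# Toy F% example: training variance is 800, external mean squared error is 250.
q2_example = q2_f3([10, 30, 50, 70, 90], [20, 60], [30, 40])  # 1 - 250/800 = 0.6875

# Toy VDss example (L/kg): one prediction is 2x too high, the other 2x too low.
gmfe_example = gmfe([1.0, 10.0], [2.0, 5.0])  # 2.0
```

A GMFE of 1 means perfect agreement, and a GMFE of 2 means predictions are off by a factor of two on average, in either direction.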

The chemical space of the collected compounds was characterized using Uniform Manifold Approximation and Projection (UMAP), revealing a diverse landscape for both F% and VDss, supporting the use of machine learning to capture complex, non-linear patterns for prediction [12].

Experimental Protocols

Protocol 1: Developing a QSAR Model for Oral Bioavailability

Objective: To construct a regression QSAR model for predicting the oral bioavailability (F%) of new chemical entities.

Materials:

- A curated dataset of 1,712 chemicals with experimentally measured F% values [12].

- Molecular descriptor calculation software (e.g., Mordred) [12].

- Machine learning environment (e.g., Python with Scikit-learn, CatBoost).

Procedure:

- Data Curation and Preparation: Assemble the chemical dataset and corresponding F% values. Ensure chemical structures are standardized.

- Molecular Descriptor Calculation: Compute a comprehensive set of molecular descriptors (e.g., 1,826 descriptors via Mordred) for each chemical in the dataset [12].

- Descriptor Selection: Apply a feature selection algorithm (e.g., VSURF) to identify the most relevant and non-redundant descriptors, reducing the initial set to a manageable number (e.g., 66 descriptors) to improve model interpretability and avoid overfitting [12].

- Dataset Splitting: Divide the dataset into a training set (e.g., 1,213 chemicals) for model building and a hold-out validation set (e.g., 405 chemicals) for final performance evaluation [12].

- Model Training and Validation: Train multiple regression algorithms (e.g., CatBoost, Random Forest, Support Vector Machine) on the training set using the selected descriptors. Optimize model hyperparameters via cross-validation.

- Model Evaluation: Apply the trained model to the validation set. Assess performance using metrics such as Q2F3, which is a measure of external validation predictability [12].
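
The overall shape of this protocol can be sketched in Python as below. Several substitutions are made so the example stays self-contained, and they should be read as assumptions rather than the cited method: random numbers stand in for Mordred descriptors, importance-based selection with scikit-learn's `SelectFromModel` stands in for VSURF, and a random forest stands in for CatBoost. The simulated F% relationship is entirely hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import SelectFromModel
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline

rng = np.random.default_rng(0)

# Steps 1-2 (stand-in): a synthetic descriptor matrix instead of real descriptors.
n_chemicals, n_descriptors = 400, 30
X = rng.normal(size=(n_chemicals, n_descriptors))
# Hypothetical F% driven non-linearly by three descriptors, clipped to 0-100.
raw = (50 + 20 * X[:, 0] - 15 * np.abs(X[:, 1]) + 10 * X[:, 2] * X[:, 0]
       + rng.normal(scale=5, size=n_chemicals))
f_percent = np.clip(raw, 0, 100)

# Step 4: hold out a validation set that is never used during training.
X_train, X_val, y_train, y_val = train_test_split(
    X, f_percent, test_size=0.25, random_state=0)

# Steps 3 and 5: descriptor selection and model training in one pipeline, so
# that selection is re-run inside every cross-validation fold.
pipeline = Pipeline([
    ("select", SelectFromModel(
        RandomForestRegressor(n_estimators=100, random_state=0),
        threshold="median")),
    ("model", RandomForestRegressor(n_estimators=100, random_state=0)),
])
search = GridSearchCV(pipeline, {"model__max_depth": [None, 5]}, cv=3)
search.fit(X_train, y_train)

# Step 6: evaluate on the hold-out set using Q2F3.
y_val_pred = search.predict(X_val)
q2_f3_val = 1.0 - (np.mean((y_val - y_val_pred) ** 2)
                   / np.mean((y_train - y_train.mean()) ** 2))
kept = search.best_estimator_.named_steps["select"].get_support()
```

Placing descriptor selection inside the pipeline is a deliberate design choice: selecting descriptors on the full dataset before cross-validation would leak information from the held-out folds and inflate the performance estimate.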

Protocol 2: Predicting VDss and Mapping TK Space for EDCs

Objective: To apply validated QSAR models for VDss and F% to a list of potential Endocrine-Disrupting Chemicals (EDCs) to identify high-risk compounds.

Materials:

- Pre-trained QSAR models for VDss (R-RF) and oral bioavailability (R-CatBoost).

- A list of potential EDCs with defined chemical structures.

- Software for calculating molecular descriptors compatible with the pre-trained models.

Procedure:

- Chemical Input Preparation: Standardize the molecular structures of the target EDCs.

- Descriptor Generation: Calculate the same set of molecular descriptors used in the original model training for each EDC.

- Property Prediction: Input the calculated descriptors into the pre-trained VDss and oral bioavailability models to obtain predicted values.

- TK Space Mapping: Create a scatter plot or similar visualization with predicted VDss and oral bioavailability as the axes. Plot each EDC on this graph to map the collective "TK Space."

- Risk Identification: Identify EDCs that fall into high-risk quadrants, for instance, those with high predicted oral bioavailability (indicating efficient systemic absorption) and high predicted VDss (suggesting extensive tissue distribution and potential for accumulation) [30] [12]. These compounds should be prioritized for further experimental investigation.
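
The mapping and risk identification steps can be sketched as a simple quadrant assignment. The compound names and predicted values below are invented, and both cutoffs are assumptions: 50% mirrors the classification threshold mentioned earlier, while 1 L/kg for VDss is an arbitrary illustrative value that a real analysis would need to justify.

```python
import numpy as np

# Hypothetical model outputs for five candidate EDCs.
names = ["EDC-A", "EDC-B", "EDC-C", "EDC-D", "EDC-E"]
pred_f_percent = np.array([85.0, 20.0, 65.0, 95.0, 40.0])  # predicted F%
pred_vdss = np.array([12.0, 0.3, 0.8, 0.5, 25.0])          # predicted VDss, L/kg

F_CUTOFF = 50.0    # illustrative cutoff for "high" oral bioavailability
VDSS_CUTOFF = 1.0  # illustrative cutoff for "high" VDss, L/kg

high_f = pred_f_percent > F_CUTOFF
high_vd = pred_vdss > VDSS_CUTOFF

# Assign each compound to one of the four quadrants of the TK space.
quadrant = np.where(
    high_f & high_vd, "high F / high VDss",
    np.where(high_f, "high F / low VDss",
             np.where(high_vd, "low F / high VDss", "low F / low VDss")))

# Compounds that are both well absorbed and widely distributed.
prioritized = [name for name, q in zip(names, quadrant)
               if q == "high F / high VDss"]
```

Because VDss spans several orders of magnitude, a scatter plot of these values would normally use a logarithmic axis for VDss.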

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Resources for QSAR Modeling of TK Properties

| Item / Reagent | Function in Research |
|---|---|
| Curated Chemical Dataset | Provides the experimental (in vivo or in vitro) data on F% and VDss required for training and validating computational models. Serves as the ground truth [12]. |
| Molecular Descriptor Software (e.g., Mordred) | Generates quantitative numerical representations of chemical structures that serve as the input variables (features) for QSAR models [12]. |
| Feature Selection Algorithm (e.g., VSURF) | Identifies the most predictive and non-redundant molecular descriptors from a large initial pool, improving model performance, robustness, and interpretability [12]. |
| Machine Learning Algorithms (e.g., CatBoost, Random Forest) | The core computational engines that learn the complex mathematical relationships between molecular descriptors and the target TK endpoint [30] [12]. |