Analytical Target Profile (ATP) in Food Chemistry: A Practical Guide for Method Development and Validation

Abstract

This article provides a comprehensive guide for researchers and scientists on developing and implementing Analytical Target Profiles (ATP) in food chemistry. It covers the foundational principles of ATP as defined by modern regulatory guidelines like ICH Q14, outlines a step-by-step methodological approach for creating an ATP, discusses troubleshooting and optimization strategies using Analytical Quality by Design (AQbD), and explores validation protocols and comparative framework selection. By synthesizing current best practices, this guide aims to equip professionals with the knowledge to build robust, fit-for-purpose analytical methods that ensure food safety, quality, and regulatory compliance throughout the method lifecycle.

What is an Analytical Target Profile? Foundational Concepts and Regulatory Drivers

An Analytical Target Profile (ATP) is a foundational document that defines the required performance criteria for an analytical procedure. It specifies the quality standards an analytical method must meet to reliably report a result for its intended use [1]. The ATP is established prior to method development and is method-agnostic, focusing on the desired outcome rather than prescribing a specific technique [1]. This approach shifts the paradigm from a compliance-focused, check-box exercise to a structured, lifecycle-based model that enhances method understanding, robustness, and fitness for purpose [1]. Within the context of food chemistry and drug development, the ATP ensures that analytical results are sufficiently reliable to support critical decisions regarding product quality, safety, and efficacy.

The Structure and Components of an ATP

The core of an ATP is a clear statement of the intended purpose of the analytical method and a definition of the required performance standards. A well-constructed ATP includes the following key elements:

- Analyte and Attribute: Clearly defines what is being measured (e.g., an active ingredient, an impurity, or a microbial contaminant).

- Sample Type: Describes the matrix and the specific type of sample (e.g., raw material, finished product, or surface swab).

- Performance Criteria: Quantifies the maximum permissible error (bias) and the acceptable levels of uncertainty (precision) for the reportable result. The ATP defines these criteria based on the impact of the analytical result on patient safety or product quality.

Unlike traditional validation criteria, which assess characteristics such as specificity and accuracy in isolation, the ATP defines a "total error" requirement. This provides a direct measure of the quality and error associated with the results the procedure generates, ensuring that they fall within a predefined range of the true value with a high degree of probability [1].
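To make the total-error idea concrete, the sketch below estimates the probability that a single reportable result falls within ±λ of the true value. It is a minimal illustration that assumes normally distributed results with a known relative bias and relative standard deviation (both in % of the true value). The function names and the example acceptance limit are hypothetical and are not taken from [1].

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function via math.erf."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prob_within_limit(bias_pct: float, sd_pct: float, limit_pct: float) -> float:
    """Probability that one result lies within +/- limit_pct of the true value.

    Assumes results ~ Normal(true value + bias, sd), with everything expressed
    as % of the true value (an illustrative model, not a prescribed one).
    """
    upper = (limit_pct - bias_pct) / sd_pct
    lower = (-limit_pct - bias_pct) / sd_pct
    return normal_cdf(upper) - normal_cdf(lower)


if __name__ == "__main__":
    # Hypothetical ATP: at least 95% of results within +/-10% of the true value.
    p = prob_within_limit(bias_pct=3.0, sd_pct=2.5, limit_pct=10.0)
    print(f"P(|error| <= 10%) = {p:.4f}; meets 95% requirement: {p >= 0.95}")
```

A larger bias can be tolerated only if the standard deviation is smaller, and vice versa. This is the trade-off that a total-error criterion controls and that separate bias and precision limits do not.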

Table 1: Traditional Validation Criteria vs. ATP Performance Criteria

| Aspect | Traditional Validation Approach | ATP-Based Approach |
|---|---|---|
| Focus | Validating a specific, developed method | Defining method-independent performance requirements |
| Bias & Precision | Often evaluated separately, potentially allowing trade-offs | Considers total error (bias + precision) combined |
| Lifecycle View | Treats development, validation, and transfer as separate activities | Integrates validation, verification, and transfer as interrelated stages |
| Outcome | Demonstrates method performance against default criteria | Ensures results are fit for their intended decision-making purpose |

The ATP Within the Analytical Procedure Lifecycle

The Analytical Procedure Lifecycle is a holistic framework that integrates the ATP with all stages of an analytical method's existence, from initial conception through routine use and eventual retirement.

The lifecycle model consists of three interconnected stages [1]:

- Stage 1: Procedure Design and Development: The ATP, created based on the intended use of the method, drives the selection and development of a suitable analytical procedure. This stage involves knowledge gathering, risk assessment, and establishing an analytical control strategy.

- Stage 2: Procedure Performance Qualification: This stage provides objective evidence that the developed method, when executed in its intended environment, consistently meets the performance criteria defined in the ATP.

- Stage 3: Continued Procedure Performance Verification: During routine use, the method's performance is continuously monitored to ensure it remains in a state of control. Data is trended, and the method is managed through a structured change control process, with any major changes potentially triggering a return to Stage 1.
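Trending in Stage 3 is often done with a control chart. The sketch below shows one simple option. It assumes a baseline set of in-control results, for example a QC sample run with each batch, and sets limits at the baseline mean ± 3 sample standard deviations. Classical individuals charts estimate sigma from the average moving range instead. The function names and data are hypothetical.

```python
from statistics import mean, stdev


def control_limits(baseline):
    """Return (LCL, centre line, UCL) as mean -/+ 3 sample standard deviations."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline results")
    centre = mean(baseline)
    sd = stdev(baseline)
    return centre - 3 * sd, centre, centre + 3 * sd


def out_of_control(results, limits):
    """Return indices of results that fall outside the control limits."""
    lcl, _, ucl = limits
    return [i for i, x in enumerate(results) if not lcl <= x <= ucl]


if __name__ == "__main__":
    # Hypothetical % recovery of a QC sample from routine runs.
    baseline = [100.2, 99.6, 100.5, 99.9, 100.1, 99.7, 100.3, 100.0]
    limits = control_limits(baseline)
    print("LCL / CL / UCL:", [round(v, 2) for v in limits])
    print("Out-of-control runs:", out_of_control([100.4, 98.1, 99.8], limits))
```

A point outside the limits (or a sustained drift within them) is the kind of signal that would prompt investigation and, for major changes, a return to Stage 1.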

Application Notes and Experimental Protocols

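Application Note: Implementing a Bioluminescent ATP Assay for High-Throughput Screening

Objective: To establish a reliable, high-throughput cell viability assay based on adenosine triphosphate (ATP) quantification for screening compound libraries in drug development. In this application note, ATP denotes adenosine triphosphate rather than the Analytical Target Profile.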

Background: Cellular ATP concentration is a direct indicator of cell viability and metabolic activity [2]. Bioluminescent ATP assays utilizing the firefly luciferase reaction provide a highly sensitive and rapid means of quantifying ATP, making them ideal for high-throughput applications [2].

Table 2: Key Research Reagent Solutions for a Bioluminescent ATP Assay

| Reagent / Material | Function / Description |
|---|---|
| Ultra-Glo Recombinant Luciferase | A stable, engineered luciferase resistant to detergent inhibition, enabling a stable "glow-type" signal for a flexible workflow [2]. |
| Cell Lysis Reagent | A detergent-based reagent to rapidly lyse cells and release intracellular ATP while inactivating ATPases. |
| D-Luciferin | The luciferase substrate, which is oxidized in an ATP-dependent reaction to produce light [2]. |
| Luminometer or Multiwell Plate Reader | Instrumentation capable of detecting the luminescent signal produced by the reaction, correlating light intensity to ATP concentration. |
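Because luminescence is proportional to cellular ATP, screening data are commonly expressed as percent viability relative to plate controls. The sketch below assumes a hypothetical plate layout with vehicle-treated wells (defined as 100% viability) and cell-free background wells (0%). The function name and RLU values are illustrative and are not part of any vendor software.

```python
from statistics import mean


def percent_viability(sample_rlu, vehicle_rlu, background_rlu):
    """Normalize luminescence (RLU) to % viability.

    0% = mean of cell-free background wells; 100% = mean of vehicle-treated wells.
    """
    bg = mean(background_rlu)
    top = mean(vehicle_rlu)
    span = top - bg
    if span <= 0:
        raise ValueError("vehicle signal must exceed background")
    return [100.0 * (rlu - bg) / span for rlu in sample_rlu]


if __name__ == "__main__":
    vehicle = [52000, 50500, 51500]      # hypothetical RLU, untreated cells
    background = [1000, 1100, 900]       # hypothetical RLU, medium + reagent only
    compounds = [49000, 26000, 3500]     # hypothetical RLU, treated wells
    for rlu, v in zip(compounds, percent_viability(compounds, vehicle, background)):
        print(f"{rlu:>6} RLU -> {v:5.1f}% viability")
```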

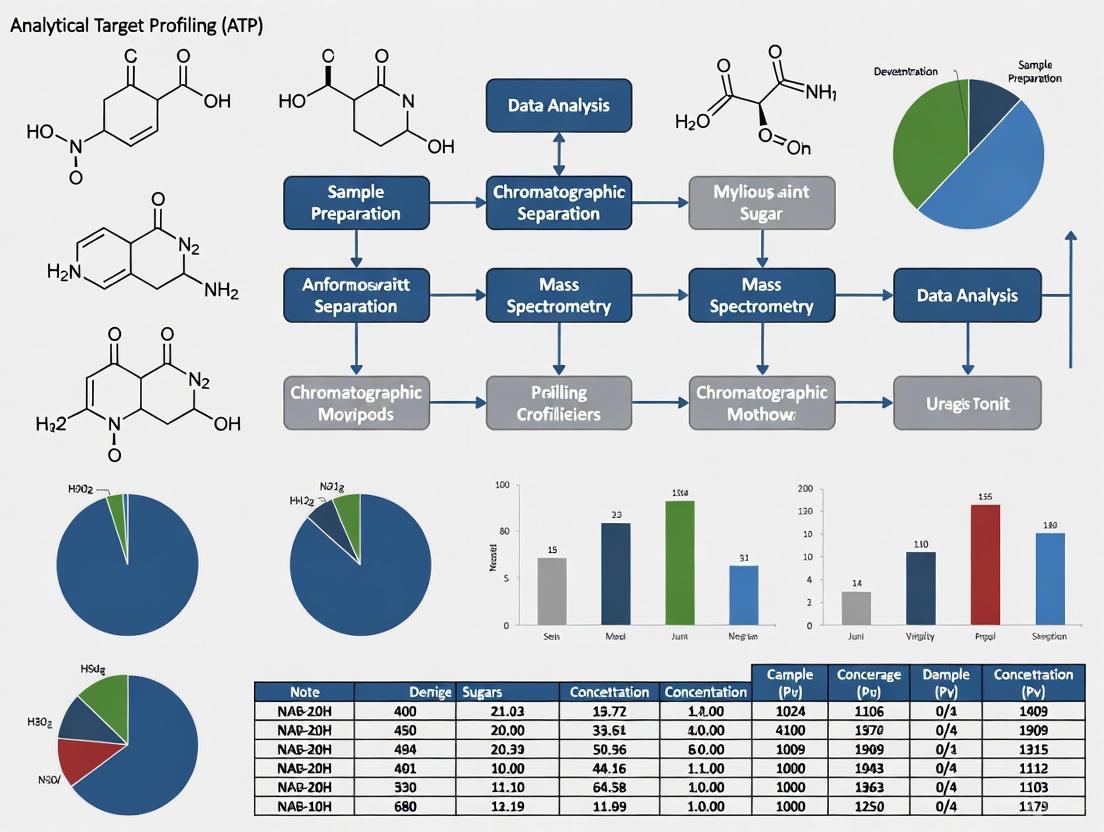

Experimental Workflow: The following diagram outlines the core steps in executing a bioluminescent cell viability assay for high-throughput screening.

Protocol: Quantitative Determination of Cellular ATP Synthetic Activity

This protocol details a method for permeabilizing E. coli cells so that glycolytic ATP synthesis activity can be measured dynamically; it can be adapted for studying microbial metabolism in food and pharmaceutical contexts [3].

Principle: Cells are rendered permeable via osmotic shock and detergent treatment, allowing substrates (like glucose) to enter while preventing ATP loss. Externally added luciferase produces light proportional to the ATP synthesized from the glycolytic pathway within the permeable cells [3].

Materials:

- E. coli cell culture

- Permeabilization Buffer (e.g., containing Tris-HCl, EDTA, and lysozyme)

- Detergent Solution (e.g., Brij 58)

- Reaction Buffer (containing MgSO₄)

- D-Luciferin/Luciferase Mixture

- Glucose solution (substrate)

- Luminometer

Procedure:

- Cell Preparation: Harvest E. coli cells from a mid-log phase culture by centrifugation. Wash and resuspend in Permeabilization Buffer.

- Permeabilization: Incubate the cell suspension on ice for a defined period (e.g., 10 minutes) to allow lysozyme to weaken the cell wall. Add a non-ionic detergent (e.g., Brij 58) to a final concentration of 0.05% and mix gently to create pores in the membrane.

- Reaction Setup: In a luminometer tube or multiwell plate, combine the following:

  - Permeabilized cell suspension

  - Reaction Buffer with MgSO₄

  - D-Luciferin/Luciferase Mixture

- Initiation and Measurement: Start the reaction by injecting a glucose solution. Immediately begin measuring luminescence continuously for several minutes.

- Data Analysis: The luminescence signal will increase linearly as the cells synthesize ATP from glucose. Plot luminescence versus time. The slope of the linear increase is directly proportional to the cellular ATP biosynthetic activity [3].

Quality Control: Include an ATP standard curve in each experiment to confirm the linear relationship between ATP concentration and luminescent signal. A no-glucose control should be included to establish background signal.
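The data analysis and quality-control steps can be combined in a few lines. The sketch fits the ATP standard curve, then takes the slope of luminescence versus time for the glucose and no-glucose reactions. It subtracts the background slope and converts RLU/s into an ATP synthesis rate. It is a minimal sketch that assumes ordinary least-squares fits, a standard curve that is linear over the measured range and applies in the reaction mix, and hypothetical example data. The function names are illustrative.

```python
def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    return sxy / sxx


def atp_synthesis_rate(std_atp_nM, std_rlu, times_s, rlu_glucose, rlu_no_glucose):
    """Background-corrected ATP synthesis rate in nM ATP per second."""
    # Standard-curve slope (RLU per nM ATP) converts luminescence into ATP.
    rlu_per_nM = ols_slope(std_atp_nM, std_rlu)
    # Kinetic slopes (RLU/s) over the linear phase after glucose injection.
    slope_glucose = ols_slope(times_s, rlu_glucose)
    slope_control = ols_slope(times_s, rlu_no_glucose)
    return (slope_glucose - slope_control) / rlu_per_nM


if __name__ == "__main__":
    # Hypothetical data for illustration only.
    std_atp = [0, 10, 20, 40, 80]              # nM ATP standards
    std_rlu = [50, 1050, 2050, 4050, 8050]     # RLU for each standard
    t = [0, 30, 60, 90, 120]                   # s after glucose injection
    glc = [500, 800, 1100, 1400, 1700]         # RLU, glucose reaction
    no_glc = [500, 530, 560, 590, 620]         # RLU, no-glucose control
    rate = atp_synthesis_rate(std_atp, std_rlu, t, glc, no_glc)
    print(f"ATP synthesis rate: {rate:.3f} nM/s")
```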

Data Presentation and Analysis

The quantitative data generated from analytical methods must be structured and presented to facilitate easy comparison against the ATP's performance criteria.

Table 3: Example ATP Performance Criteria Table for an Impurity Method

| Performance Characteristic | ATP Requirement | Method A Results | Method B Results | Conclusion |
|---|---|---|---|---|
| Target Measurement Uncertainty | ≤ 15% (at target concentration) | 12% | 8% | Both Acceptable |
| Accuracy (Bias) | Within ± 10% of true value | +8% | -5% | Both Acceptable |
| Precision (%RSD) | ≤ 5% | 4.5% | 3.1% | Both Acceptable |
| Total Error (absolute bias + 2 × RSD) | < 20% | 17% | 11.2% | Both Acceptable |
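The total-error row of Table 3 is simple arithmetic: 8% + 2 × 4.5% = 17% for Method A and 5% + 2 × 3.1% = 11.2% for Method B. The sketch below encodes the Table 3 requirements and checks each method against them. The class and function names are hypothetical. Total error is computed with the table's absolute-bias-plus-two-RSD convention rather than a formal statistical tolerance interval.

```python
from dataclasses import dataclass

# ATP requirements from Table 3 (all values in %).
ATP_LIMITS = {"tmu_max": 15.0, "bias_max": 10.0, "rsd_max": 5.0, "te_max": 20.0}


@dataclass
class MethodPerformance:
    name: str
    tmu_pct: float   # target measurement uncertainty
    bias_pct: float  # signed bias relative to the true value
    rsd_pct: float   # precision as %RSD

    @property
    def total_error_pct(self) -> float:
        """Total error as |bias| + 2 x RSD, the convention used in Table 3."""
        return abs(self.bias_pct) + 2.0 * self.rsd_pct


def meets_atp(m, limits=ATP_LIMITS):
    """Return a pass/fail flag for each Table 3 criterion."""
    return {
        "TMU": m.tmu_pct <= limits["tmu_max"],
        "Bias": abs(m.bias_pct) <= limits["bias_max"],
        "Precision": m.rsd_pct <= limits["rsd_max"],
        "Total error": m.total_error_pct < limits["te_max"],
    }


if __name__ == "__main__":
    for method in (MethodPerformance("Method A", 12.0, 8.0, 4.5),
                   MethodPerformance("Method B", 8.0, -5.0, 3.1)):
        print(method.name, f"TE = {method.total_error_pct:.1f}%", meets_atp(method))
```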

The relationship between the total error observed from a method's performance and the criteria set in the ATP can be visualized to aid in decision-making.

The Analytical Target Profile is more than a document; it is the strategic cornerstone that guides the entire analytical method lifecycle. By defining performance requirements upfront, the ATP ensures analytical methods are developed and selected based on their ability to produce reliable, meaningful data [1]. This approach provides a direct measure of the error associated with the results and integrates validation, transfer, and ongoing monitoring into a cohesive, science-based framework [1]. Adopting an ATP-centric approach ultimately enhances product and process understanding, reduces variability, and strengthens the overall quality system in both food chemistry and pharmaceutical research, thereby better ensuring patient safety and product efficacy.

The International Council for Harmonisation (ICH) adopted two pivotal guidelines in November 2023, ICH Q2(R2) on "Validation of Analytical Procedures" and ICH Q14 on "Analytical Procedure Development," which together modernize the framework for analytical sciences in regulated industries [4]. The U.S. Food and Drug Administration (FDA) issued both as final guidance in March 2024, making them critical for regulatory submissions and compliance [5]. These documents represent a significant evolution from the previous "check-the-box" approach to a scientific, risk-based lifecycle model that begins with proactive procedure design and continues through ongoing monitoring and improvement [6]. This framework, while developed for pharmaceuticals, provides an invaluable structured approach for food chemistry research seeking to implement robust, defensible analytical methods.

The enhanced approach emphasizes built-in quality rather than retrospective testing, encouraging a deeper understanding of methods through their entire lifecycle [7]. For food chemists, this means developing methods that are not only validated but truly fit-for-purpose, with clearly defined performance criteria established before development begins. The Analytical Target Profile (ATP) serves as this foundation, prospectively defining the quality attributes a method must measure and the required performance characteristics [6]. This systematic approach ensures methods remain reliable despite complex food matrices and evolving compositional profiles.

Core Regulatory Guidelines and Their Interrelationships

Key Guidelines and Their Roles

Table 1: Core ICH and FDA Guidelines for Analytical Procedures

| Guideline | Focus Area | Key Contribution | Status |
|---|---|---|---|
| ICH Q2(R2) | Analytical procedure validation | Provides framework for validation principles, including modern techniques like multivariate methods [4] | Final March 2024 [5] |
| ICH Q14 | Analytical procedure development | Science- and risk-based approaches for development; introduces ATP and enhanced approach [8] | Final March 2024 [4] |
| ICH Q12 | Pharmaceutical product lifecycle | Enables post-approval change management; works with Q14 for analytical procedure changes [9] | Adopted May 2021 [9] |
| FDA cGMP (§211.110) | In-process controls & testing | Requires monitoring for batch uniformity; flexible for advanced manufacturing [10] | Draft guidance January 2025 [10] |

The Lifecycle Approach Integration

ICH Q2(R2) and Q14 function as interconnected components within a comprehensive Analytical Procedure Lifecycle Management (APLM) framework [7]. This model consists of three continuous stages: Procedure Design and Development, where the ATP is defined and the method is developed; Procedure Performance Qualification, where the method is formally validated; and Continued Procedure Performance Verification, involving ongoing monitoring during routine use [7]. This represents a fundamental shift from viewing validation as a one-time event to treating it as part of a continuous quality process.

The relationship between these guidelines and the analytical lifecycle is illustrated below:

Figure 1: Analytical Procedure Lifecycle with ICH Guidelines

The Analytical Target Profile (ATP) Foundation

ATP Concept and Definition

The Analytical Target Profile (ATP) is a prospective summary of the intended purpose of an analytical procedure and its required performance characteristics [6]. It serves as the foundational specification that guides all subsequent development, validation, and monitoring activities [7]. In essence, the ATP defines what the method must be capable of achieving, rather than prescribing how to achieve it. For food chemistry applications, this means focusing on the essential measurements needed to ensure food safety, quality, and authenticity without prematurely limiting the technical approach.

The ATP establishes performance criteria directly linked to the critical quality attributes (CQAs) of the product or material being tested [9]. In food research, these attributes might include nutrient content, contaminant levels, authenticity markers, or sensory characteristics. Defining these requirements upfront makes method development more efficient and targeted, reduces the risk of later-stage failures, and facilitates continuous improvement throughout the method's lifecycle [7].

Developing an ATP for Food Chemistry Research

Table 2: ATP Components for Food Chemistry Applications

| ATP Element | Description | Food Chemistry Example |
|---|---|---|
| Analyte/Attribute | Substance or property to be measured | Vitamin D3 concentration in fortified milk |
| Sample Matrix | Material in which analyte exists | Whole milk with varying fat content |
| Required Performance | Accuracy, precision, specificity | ±5% accuracy of true value; RSD <3% |
| Measurement Range | Interval between upper and lower concentrations | 0.5-5.0 μg/mL to cover expected fortification |
| Acceptance Criteria | Predefined limits for reportable results | 95-105% of labeled claim for compliance |

Creating an effective ATP requires cross-functional collaboration between food chemists, quality professionals, and regulatory affairs specialists. The process begins with clearly defining the business and regulatory needs for the method, followed by identifying the critical quality attributes that must be controlled [9]. For food authentication methods, this might include specificity requirements to detect adulterants at economically motivated levels. The ATP should be sufficiently detailed to guide development but flexible enough to allow for multiple technical approaches.
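An ATP can also be captured in a machine-readable form so that candidate methods are screened against it consistently. The sketch below is one hypothetical way to encode the vitamin D3 example from Table 2, with range values copied from the table as given. The class name, field names, and `evaluate` method are illustrative assumptions. The method labels in the usage example are also hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical encoding of the ATP elements in Table 2."""
    analyte: str
    matrix: str
    range_low: float          # lower end of measurement range (ug/mL, as in Table 2)
    range_high: float         # upper end of measurement range
    max_abs_bias_pct: float   # accuracy requirement, +/- % of true value
    max_rsd_pct: float        # precision requirement, RSD must be below this

    def evaluate(self, validated_low, validated_high, bias_pct, rsd_pct):
        """Return unmet requirements for a candidate method (empty list = fit)."""
        gaps = []
        if validated_low > self.range_low or validated_high < self.range_high:
            gaps.append("validated range does not cover the ATP range")
        if abs(bias_pct) > self.max_abs_bias_pct:
            gaps.append(f"bias {bias_pct:+.1f}% exceeds +/-{self.max_abs_bias_pct}%")
        if rsd_pct >= self.max_rsd_pct:
            gaps.append(f"RSD {rsd_pct:.1f}% is not below {self.max_rsd_pct}%")
        return gaps


if __name__ == "__main__":
    atp = AnalyticalTargetProfile(
        analyte="Vitamin D3",
        matrix="Whole milk with varying fat content",
        range_low=0.5, range_high=5.0,
        max_abs_bias_pct=5.0, max_rsd_pct=3.0,
    )
    # Hypothetical performance of two candidate methods.
    print("Candidate 1:", atp.evaluate(0.4, 6.0, -2.1, 2.4) or "fit for purpose")
    print("Candidate 2:", atp.evaluate(1.0, 5.0, 6.0, 3.5))
```

Because the ATP object says nothing about technique, the same check applies whether the candidate is chromatographic, spectroscopic, or immunochemical.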

ICH Q14: Enhanced Analytical Procedure Development

Minimal vs. Enhanced Approaches

ICH Q14 describes two complementary approaches to analytical procedure development: the traditional minimal approach and the more systematic enhanced approach [4]. The minimal approach represents the conventional practices that have historically been used, with limited formal requirement for understanding the method's capabilities and limitations. In contrast, the enhanced approach employs structured, science-based, and risk-based methodologies to gain more comprehensive procedure understanding [11].

The enhanced approach provides significant advantages for methods requiring post-approval changes, as the deeper understanding facilitates more flexible regulatory pathways [9]. For food chemistry laboratories dealing with evolving supply chains and emerging contaminants, this approach enables method adaptability without compromising data quality. The knowledge gained through enhanced development directly informs the analytical procedure control strategy, defining which parameters are critical and must be carefully controlled versus those that can vary within established ranges [11].

Risk Assessment in Method Development

A cornerstone of the enhanced approach is the application of systematic risk assessment throughout method development [11]. This involves identifying potential sources of variability that could impact method performance, then designing experiments to understand and control these factors. For complex food matrices, this might include assessing the impact of sample composition variation, extraction efficiency differences, or matrix effects on detection.

Risk assessment tools such as Fishbone diagrams, Failure Mode and Effects Analysis (FMEA), and Prior Knowledge Reviews help method developers identify which factors merit focused experimentation [6]. The output of this assessment guides the development strategy, ensuring resources are allocated to understanding and controlling the factors that most significantly impact method performance.
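In an FMEA, each potential failure mode is commonly scored for severity, occurrence, and detectability. The product of the three scores, the risk priority number (RPN), ranks factors for focused experimentation. The sketch below assumes 1-10 scoring scales and an action threshold of 100. Both choices are illustrative, and the factors and scores are hypothetical examples for a fortified-milk vitamin method, not results from [6].

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    factor: str
    severity: int    # 1 (negligible) to 10 (critical effect on the reportable result)
    occurrence: int  # 1 (rare) to 10 (frequent)
    detection: int   # 1 (almost always detected) to 10 (practically undetectable)

    @property
    def rpn(self) -> int:
        """Risk priority number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


def prioritize(modes, threshold=100):
    """Rank failure modes by RPN; flag those at or above the (assumed) threshold."""
    ranked = sorted(modes, key=lambda m: m.rpn, reverse=True)
    return [(m.factor, m.rpn, m.rpn >= threshold) for m in ranked]


if __name__ == "__main__":
    # Hypothetical factors for a vitamin D3 method in fortified milk.
    modes = [
        FailureMode("Fat content variation (extraction efficiency)", 8, 6, 5),
        FailureMode("Photodegradation of standards", 7, 4, 6),
        FailureMode("Saponification time", 6, 3, 4),
        FailureMode("Column lot-to-lot variability", 5, 3, 3),
    ]
    for factor, rpn, act in prioritize(modes):
        print(f"{rpn:>4}  {'EXPERIMENT' if act else 'monitor'}  {factor}")
```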

ICH Q2(R2): Validation of Analytical Procedures

Core Validation Parameters

ICH Q2(R2) provides detailed guidance on validating analytical procedures to demonstrate they are fit for their intended purpose [4]. The validation parameters required depend on the type of method (identification, testing for impurities, assay, etc.), but core concepts apply across analytical techniques.

Table 3: Core Validation Parameters per ICH Q2(R2)

| Parameter | Definition | Experimental Approach | Food Chemistry Application |
|---|---|---|---|
| Accuracy | Closeness to true value [12] | Recovery studies with spiked samples [12] | Vitamin fortification recovery in complex matrices |
| Precision | Degree of scatter in results [12] | Repeatability, intermediate precision [12] | Consistency of pesticide residue measurements |
| Specificity | Ability to measure analyte despite interferences [12] | Challenge with potential interferents | Distinguishing authentic from adulterated honey |
| Linearity | Proportionality of response to concentration [12] | Minimum 5 concentration levels [12] | Calibration for mycotoxin quantification |
| Range | Interval demonstrating suitability [6] | Demonstrated through accuracy, precision, linearity | Covering expected contaminant concentrations |
| Robustness | Resilience to parameter variations [12] | Deliberate changes to critical parameters | Method transfer between laboratory environments |
| LOD/LOQ | Detection/quantitation limits [6] | Signal-to-noise or statistical approaches | Trace contaminant monitoring |
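For the statistical route to LOD/LOQ, ICH Q2 describes an approach based on the standard deviation of the response (σ) and the calibration slope (S): LOD = 3.3σ/S and LOQ = 10σ/S. The sketch below takes σ as the residual standard deviation of the regression, one of the options for estimating it. It also reports R² for the linearity check. The function name and the data in the usage example are hypothetical.

```python
import math


def calibration_limits(conc, response):
    """Slope, intercept, R^2, LOD and LOQ from a linear calibration.

    sigma is the residual standard deviation (n - 2 degrees of freedom);
    LOD = 3.3 * sigma / slope and LOQ = 10 * sigma / slope.
    """
    n = len(conc)
    if n < 3:
        raise ValueError("need at least three calibration levels")
    x_bar, y_bar = sum(conc) / n, sum(response) / n
    sxx = sum((x - x_bar) ** 2 for x in conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(conc, response))
    ss_tot = sum((y - y_bar) ** 2 for y in response)
    sigma = math.sqrt(ss_res / (n - 2))
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": 1.0 - ss_res / ss_tot,
        "lod": 3.3 * sigma / slope,
        "loq": 10.0 * sigma / slope,
    }


if __name__ == "__main__":
    # Hypothetical mycotoxin calibration: ng/mL versus peak area (arbitrary units).
    result = calibration_limits([0.5, 1, 2, 5, 10], [0.52, 1.03, 1.96, 5.08, 9.97])
    for key, value in result.items():
        print(f"{key:>10}: {value:.4f}")
```

Limits estimated this way are usually confirmed by analyzing samples at or near the calculated LOQ.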

Validation Experimental Protocol

Protocol: Validation of HPLC-UV Method for Antioxidant Quantification in Botanical Extracts

1.0 Purpose: To validate an HPLC-UV method for quantification of specific antioxidant compounds in complex botanical extracts per ICH Q2(R2) requirements.

2.0 Scope: Applies to the stability-indicating method for catechin, epicatechin, and procyanidin B2 in grape seed extract.

3.0 Experimental Design

- 3.1 Specificity: Inject individual analyte standards, placebo formulation, and forced degradation samples (acid, base, oxidation, heat, light). Resolution between closest eluting peaks should be ≥2.0.

- 3.2 Linearity and Range: Prepare a minimum of 5 concentrations spanning 50-150% of the target concentration (n=3 each). Calculate the coefficient of determination (R² ≥0.998), y-intercept, and slope of the regression line.

- 3.3 Accuracy: Spike placebo with analytes at 50%, 100%, and 150% of target (n=3 each). Calculate mean recovery (acceptance: 98-102%).

- 3.4 Precision:

  - Repeatability: Six replicate preparations at 100% concentration by the same analyst on the same day (RSD ≤2.0%).

  - Intermediate precision: Two analysts, different days, different instruments (RSD ≤3.0%).

- 3.5 Robustness: Deliberately vary column temperature (±2°C), flow rate (±10%), mobile phase pH (±0.2 units). System suitability must still be met.
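One way to organize the robustness study in 3.5 is a two-level full factorial design, in which every combination of low and high settings is run once (2³ = 8 runs for three factors). The sketch below generates those runs. The nominal conditions (30 °C, 1.0 mL/min, pH 3.0) and all names are hypothetical assumptions; only the variation ranges come from the protocol.

```python
from itertools import product

# Hypothetical nominal conditions; the variation ranges follow section 3.5.
NOMINAL = {"column_temp_C": 30.0, "flow_mL_min": 1.0, "mobile_phase_pH": 3.0}


def low_high(name, value):
    """Return the (low, high) settings for one factor per section 3.5."""
    if name == "column_temp_C":
        return value - 2.0, value + 2.0          # +/- 2 degC
    if name == "flow_mL_min":
        return value * 0.9, value * 1.1          # +/- 10 %
    if name == "mobile_phase_pH":
        return value - 0.2, value + 0.2          # +/- 0.2 pH units
    raise KeyError(name)


def robustness_runs(nominal):
    """Two-level full factorial design: every low/high combination (2^k runs)."""
    names = list(nominal)
    levels = [low_high(n, nominal[n]) for n in names]
    return [dict(zip(names, combo)) for combo in product(*levels)]


if __name__ == "__main__":
    for i, run in enumerate(robustness_runs(NOMINAL), start=1):
        print(i, {k: round(v, 2) for k, v in run.items()})
```

System suitability is then evaluated for each run. With more factors, a fractional factorial design can reduce the number of runs at the cost of confounding some interactions.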

4.0 Acceptance Criteria: All validation parameters must meet pre-defined criteria based on ATP requirements. Any deviation requires investigation and justification.
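The calculations behind sections 3.3 and 3.4 can be scripted so that each result is compared with its pre-defined acceptance criterion. The sketch makes two simplifying assumptions: recoveries are evaluated per spike level, and intermediate precision is taken as the RSD of all pooled results from both analysts. A variance-components (ANOVA) treatment would be more rigorous. Function names and data are hypothetical.

```python
from statistics import mean, stdev


def percent_recovery(measured, spiked):
    """Recovery (%) of each spiked sample: measured / spiked amount x 100."""
    return [100.0 * m / s for m, s in zip(measured, spiked)]


def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


def check_accuracy_precision(recovery_by_level, repeatability, intermediate):
    """Compare results with the acceptance criteria in sections 3.3 and 3.4."""
    checks = {}
    for level, recoveries in recovery_by_level.items():
        checks[f"mean recovery at {level}% (98-102%)"] = 98.0 <= mean(recoveries) <= 102.0
    checks["repeatability RSD <= 2.0%"] = rsd_percent(repeatability) <= 2.0
    checks["intermediate precision RSD <= 3.0%"] = rsd_percent(intermediate) <= 3.0
    return checks


if __name__ == "__main__":
    # Hypothetical catechin results (mg/g) at three spike levels.
    rec = {
        50: percent_recovery([4.95, 5.02, 4.98], [5.0] * 3),
        100: percent_recovery([9.91, 10.05, 9.97], [10.0] * 3),
        150: percent_recovery([14.8, 15.1, 14.9], [15.0] * 3),
    }
    rep = [10.02, 9.95, 10.07, 9.98, 10.01, 9.93]            # analyst 1, day 1
    inter = rep + [10.10, 9.88, 10.05, 9.90, 10.12, 9.96]    # plus analyst 2, day 2
    for name, ok in check_accuracy_precision(rec, rep, inter).items():
        print(f"{name}: {'pass' if ok else 'FAIL'}")
```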

Application in Food Chemistry Research

Implementing the Lifecycle Approach

Implementing the ICH Q14 and Q2(R2) framework in food chemistry research requires adapting pharmaceutical concepts to food-specific challenges. Food matrices are typically more variable than pharmaceutical formulations, and analytes of interest may be less defined. The lifecycle approach provides structure for managing this complexity through systematic development and knowledge management.

The enhanced approach is particularly valuable for food methods requiring high specificity, such as authenticity testing and allergen detection, where method robustness across variable sample types is essential. The control strategy developed through enhanced understanding explicitly defines how to maintain method performance despite matrix variations common in agricultural-sourced materials.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for Food Analytical Chemistry

| Item/Category | Function/Purpose | Application Examples |
|---|---|---|
| Stable Isotope-Labeled Internal Standards | Correct for matrix effects and extraction losses | Quantification of mycotoxins, veterinary drug residues |
| Matrix-Matched Reference Materials | Establish accuracy in complex backgrounds | Calibration for elemental analysis in various food types |
| Multi-Component Proficiency Test Materials | Verify method performance and comparability | Interlaboratory method validation studies |
| Solid Phase Extraction (SPE) Cartridges | Sample clean-up and analyte enrichment | Pesticide residue analysis before GC/MS or LC/MS |
| Immunoaffinity Columns | High specificity extraction | Aflatoxin, ochratoxin determination |
| Certified Reference Materials | Method validation and quality control | Nutrient analysis accuracy verification |

Change Management in Food Methods

As with pharmaceutical methods, food analytical procedures require updates due to technological advances, reagent obsolescence, or new scientific knowledge [9]. ICH Q14 provides a framework for managing these changes through risk-based classification and bridging studies [9]. For food methods, this might include transitioning from HPLC to UPLC platforms, updating detection techniques, or modifying sample preparation to use more sustainable solvents.

The change management process involves assessing the potential impact on method performance relative to the ATP, conducting appropriate bridging studies to demonstrate comparable performance, and implementing the change with appropriate regulatory notifications [9]. For standardized methods used in food compliance, this systematic approach ensures changes don't compromise the method's ability to correctly classify compliant and non-compliant samples.
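A bridging study is often evaluated as an equivalence test. The sketch below applies a paired two one-sided tests (TOST) procedure. It concludes equivalence when the 90% confidence interval of the mean percentage difference between the new and old methods lies entirely within an equivalence margin. The ±2% margin and the data are hypothetical; in practice the margin would be derived from the ATP. The one-sided 95% t quantiles are hard-coded, so this version only covers 3 to 11 paired samples.

```python
import math
from statistics import mean, stdev

# One-sided 95% Student t quantiles, keyed by degrees of freedom (n - 1).
T_095 = {2: 2.920, 3: 2.353, 4: 2.132, 5: 2.015, 6: 1.943,
         7: 1.895, 8: 1.860, 9: 1.833, 10: 1.812}


def tost_paired(old, new, margin_pct):
    """Paired TOST on % differences (new vs old). Returns (lower, upper, equivalent)."""
    if len(old) != len(new):
        raise ValueError("old and new must contain paired results")
    diffs = [100.0 * (n_val - o_val) / o_val for o_val, n_val in zip(old, new)]
    df = len(diffs) - 1
    if df not in T_095:
        raise ValueError("t quantile not tabulated for this sample size")
    half_width = T_095[df] * stdev(diffs) / math.sqrt(len(diffs))
    lower, upper = mean(diffs) - half_width, mean(diffs) + half_width
    return lower, upper, (-margin_pct < lower and upper < margin_pct)


if __name__ == "__main__":
    # Hypothetical results (mg/kg) for six samples run on HPLC (old) and UPLC (new).
    hplc = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0]
    uplc = [10.05, 10.15, 9.85, 10.1, 9.95, 10.02]
    lo, hi, ok = tost_paired(hplc, uplc, margin_pct=2.0)
    print(f"90% CI of mean difference: [{lo:.2f}%, {hi:.2f}%]; equivalent: {ok}")
```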

Regulatory Submissions and Compliance

Submission Requirements

When submitting methods to regulatory bodies, the level of detail required depends on whether a minimal or enhanced approach was used [11]. For enhanced approaches, submissions should include the ATP, summary of development studies, risk assessments, and justification of the control strategy [11]. This comprehensive documentation demonstrates the scientific rigor applied during development and provides regulators confidence in the method's robustness.

For food methods referenced in standards or regulatory methods, the enhanced approach documentation facilitates future updates and method improvements. The established conditions (ECs) for the method—those parameters critical to ensuring the method performs as intended—should be clearly identified, along with their proven acceptable ranges [9]. This clarity streamlines the evaluation of proposed changes throughout the method's lifecycle.

FDA Expectations and cGMP Considerations

While cGMP regulations (21 CFR 211) specifically apply to pharmaceuticals, their principles of method validation, equipment qualification, and documentation practices represent best practices for food analytical laboratories [10] [7]. FDA's draft guidance on complying with 21 CFR 211.110 emphasizes a scientific, risk-based approach to in-process controls and testing, which aligns with the ICH Q14 enhanced approach [10].

For food chemistry research supporting product development or quality assurance, adopting these principles strengthens the scientific foundation of analytical methods. FDA encourages the use of modern analytical technologies and process models, while emphasizing the need to understand their limitations and implement appropriate controls [10]. This is particularly relevant for food laboratories implementing rapid screening methods or process analytical technology (PAT) for real-time quality assessment.

The modernized regulatory landscape for analytical procedures, embodied in ICH Q14 and Q2(R2), represents a significant advancement toward science-based, risk-informed method lifecycle management. For food chemistry researchers, these guidelines provide a robust framework for developing, validating, and maintaining analytical methods that reliably measure critical quality and safety attributes in complex food matrices.

The Analytical Target Profile serves as the cornerstone of this approach, ensuring methods are developed with a clear understanding of their intended purpose and performance requirements. The distinction between minimal and enhanced approaches provides flexibility based on method criticality, while the emphasis on method understanding and control strategies enhances robustness and facilitates managed evolution over time.

As food analytical challenges grow more complex—with needs for lower detection limits, greater specificity, and faster results—the principles outlined in these guidelines provide a path toward more reliable, adaptable analytical procedures. By adopting this lifecycle approach, food chemistry researchers can better ensure the accuracy, reliability, and defensibility of their analytical data, ultimately supporting food safety, quality, and authenticity in an evolving global marketplace.

In the realm of food chemistry research, ensuring consistent product quality is paramount. The Quality by Design (QbD) framework provides a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding and control, based on sound science and quality risk management [13]. This approach is crucial for linking analytical goals, such as monitoring adenosine triphosphate (ATP) levels, to the Critical Quality Attributes (CQAs) of food products. A CQA is a physical, chemical, biological, or microbiological property or characteristic that must be within an appropriate limit, range, or distribution to ensure the desired product quality [13]. In food matrices, these attributes could include texture (firmness), color, microbial stability, and nutritional content.

The foundational step in QbD is the definition of a Quality Target Product Profile (QTPP), which is a prospective summary of the quality characteristics of a product that must be achieved to ensure the desired quality, taking into account safety and efficacy [13]. For a food product, the QTPP would define its essential characteristics as experienced by the consumer. The Analytical Target Profile, the central concept of this guide, serves as the blueprint for the analytical method itself, ensuring it is fit for purpose in monitoring the CQAs defined by the QTPP; in the remainder of this section, however, the abbreviation ATP refers to adenosine triphosphate. In postharvest food science, energy status, quantified by ATP levels and energy charge (EC), has emerged as a critical metabolic indicator. Recent studies on longan fruit and shiitake mushrooms have demonstrated that sufficient ATP levels are vital for maintaining quality and delaying senescence [14] [15]. This document details application notes and protocols for utilizing ATP as a key analytical target to control CQAs in food matrices.
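Energy charge is usually calculated with Atkinson's adenylate energy charge, EC = ([ATP] + 0.5[ADP]) / ([ATP] + [ADP] + [AMP]), which requires molar amounts. Postharvest studies often report nucleotide contents on a mass basis (e.g., μg/g), so the sketch below first converts them to nmol/g. It assumes free-acid molar masses (ATP 507.18, ADP 427.20, AMP 347.22 g/mol). The example contents are hypothetical.

```python
# Free-acid molar masses in g/mol (assumption: contents reported as free acids).
MOLAR_MASS = {"ATP": 507.18, "ADP": 427.20, "AMP": 347.22}


def to_nmol_per_g(ug_per_g, nucleotide):
    """Convert a nucleotide content from ug/g to nmol/g."""
    return ug_per_g * 1000.0 / MOLAR_MASS[nucleotide]


def energy_charge(atp, adp, amp):
    """Adenylate energy charge from molar amounts (any consistent unit)."""
    total = atp + adp + amp
    if total <= 0:
        raise ValueError("total adenylate pool must be positive")
    return (atp + 0.5 * adp) / total


if __name__ == "__main__":
    contents_ug_g = {"ATP": 60.0, "ADP": 25.0, "AMP": 10.0}  # hypothetical pulp data
    nmol = {k: to_nmol_per_g(v, k) for k, v in contents_ug_g.items()}
    print(f"EC = {energy_charge(nmol['ATP'], nmol['ADP'], nmol['AMP']):.3f}")
```

EC ranges from 0 (all AMP) to 1 (all ATP). Using mass units directly would bias the result because the three nucleotides have different molar masses.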

Quantitative Data on ATP's Role in Food Quality

Exogenous application of ATP has been shown to directly influence several CQAs in various food matrices, particularly in postharvest commodities. The tables below summarize the direction of the effects of ATP and of 2,4-dinitrophenol (DNP), an uncoupler of oxidative phosphorylation that depletes cellular ATP, on the quality attributes of longan fruit and shiitake mushrooms.

Table 1: Impact of ATP and DNP on CQAs and Related Metabolites in Longan Fruit Pulp [14]

| Quality Attribute / Metabolite | Measurement | ATP Treatment Effect | DNP Treatment Effect |
|---|---|---|---|
| Pulp Breakdown Index | Unitless | Decreased | Increased |
| Pulp Firmness | Force (N) | Increased | Decreased |
| Cell Membrane Permeability | Relative Electrolyte Leakage (%) | Decreased | Increased |
| Cellulose & Hemicellulose Content | mg/g | Increased | Decreased |
| Reactive Oxygen Species (ROS) | fluorescence intensity/g | Decreased | Increased |
| Malondialdehyde (MDA) | μmol/g | Decreased | Increased |
| Antioxidants (AsA, GSH) | μmol/g | Increased | Decreased |

Table 2: Effect of ATP on Energy Metabolism and Quality in Shiitake Mushrooms During Spore Release [15]

| Parameter | Measurement | ATP Treatment Effect | Functional Implication |
|---|---|---|---|
| Microstructural Integrity | SEM Observation | Retained | Delayed quality deterioration |
| Energy Metabolic Enzymes (SDH, G6PDH) | Enzyme Activity (U/mg) | Enhanced | Improved energy supply |
| Catalase (CAT) Activity | Enzyme Activity (U/mg) | Enhanced | Strengthened antioxidant system |
| Hydrogen Peroxide (H₂O₂) | Content (μmol/g) | Decreased | Reduced oxidative stress |

Experimental Protocols

Protocol 1: ATP Treatment and Quality Assessment in Fresh Fruit

This protocol outlines the methodology for treating fresh longan fruit with ATP and evaluating its impact on key CQAs like pulp breakdown and firmness [14].

- Key CQAs Monitored: Pulp breakdown index, pulp firmness, cell membrane permeability, contents of cellulose and hemicellulose, ROS and MDA production, antioxidant (AsA, GSH) levels.

- Reagents and Materials:

  - Fresh longan fruits at commercial maturity.

  - Adenosine triphosphate (ATP).

  - 2,4-Dinitrophenol (DNP).

  - Distilled water.

- Equipment: Texture analyzer, spectrophotometer, centrifuge.

- Procedure:

  - Fruit Selection and Preparation: Select fruits for uniform maturity, size, color, and absence of disease. Randomly divide into three treatment groups (e.g., 50 fruits per group for statistical power): Control, ATP treatment, and DNP treatment.

  - Solution Preparation: Prepare a 1.5 mmol L⁻¹ ATP solution and a 0.2 mmol L⁻¹ DNP solution using distilled water. Use distilled water for the control group.

  - Treatment Application: Immerse the fruits in their respective solutions (ATP, DNP, or control) for 10 minutes. Air-dry the fruits post-treatment.

  - Storage: Store the treated fruits in temperature- and humidity-controlled chambers (e.g., 25°C, 85-90% relative humidity).

  - Sampling and Measurement: At regular intervals (e.g., day 0, 1, 2, 3...), sample fruits from each group for destructive analysis.

    - Pulp Breakdown Index: Assess visually using a standardized scoring system based on the extent of pulp collapse and juice release.

    - Pulp Firmness: Measure using a texture analyzer on predetermined areas of the pulp.

    - Cell Membrane Permeability: Determine by measuring relative electrolyte leakage from pulp tissue discs.

    - Biochemical Assays: Quantify cellulose, hemicellulose, ROS, MDA, AsA, and GSH using established spectrophotometric methods.
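The sketch below shows how the two derived indices from the sampling step could be calculated. It makes two assumptions that do not come from the cited study:

- Relative electrolyte leakage is the bathing-solution conductivity before boiling divided by the conductivity after boiling (total electrolytes).
- The pulp breakdown index uses a 0-4 visual scale weighted by the number of fruits at each score.

The function names and all readings are hypothetical.

```python
def relative_electrolyte_leakage(ec_initial: float, ec_total: float) -> float:
    """Relative electrolyte leakage (%) = initial conductivity / total conductivity x 100.

    ec_initial: conductivity of the bathing solution after incubating pulp discs.
    ec_total: conductivity after boiling the discs to release all electrolytes.
    """
    if ec_total <= 0:
        raise ValueError("total conductivity must be positive")
    return 100.0 * ec_initial / ec_total


def breakdown_index(score_counts: dict[int, int], max_score: int = 4) -> float:
    """Weighted breakdown index on a 0-1 scale (hypothetical 0..max_score scoring).

    score_counts maps each visual score (0 = intact, max_score = severe collapse)
    to the number of fruits assigned that score.
    """
    total_fruits = sum(score_counts.values())
    if total_fruits == 0:
        raise ValueError("no fruits scored")
    weighted = sum(score * n for score, n in score_counts.items())
    return weighted / (max_score * total_fruits)


# Hypothetical readings for one group on one sampling day
print(round(relative_electrolyte_leakage(42.0, 168.0), 1))  # 25.0
print(breakdown_index({0: 10, 1: 5, 2: 3, 3: 2, 4: 0}))
```

Plotting both values against storage day for the control, ATP, and DNP groups gives the time-course comparison the protocol is designed to produce.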

Protocol 2: ATP Treatment and Proteomic Analysis in Shiitake Mushrooms

This protocol describes the use of 4D-DIA quantitative proteomics to investigate the mechanisms of ATP-induced quality retention in shiitake mushrooms during spore release [15].

- Key CQAs Monitored: Microstructural integrity (via SEM), spore release status, activity of energy metabolism enzymes (SDH, G6PDH), H₂O₂ content.

- Reagents and Materials:

  - Fresh shiitake mushrooms (Lentinula edodes).

  - Adenosine triphosphate (ATP).

  - Enzyme assay kits (e.g., for G6PDH, SDH, CAT, H₂O₂).

  - Reagents for protein extraction, digestion, and desalting (e.g., DTT, IAA, Trypsin).

- Equipment: Scanning Electron Microscope (SEM), LC-MS/MS system with ion mobility capability, centrifuge.

- Procedure:

  - Mushroom Treatment: Divide mushrooms into control and ATP-treated groups. Immerse the ATP group in a 1.5 mmol L⁻¹ ATP solution for 10 minutes; immerse the control group in distilled water.

  - Storage and Sampling: Store mushrooms at room temperature. Collect samples at both the initial and final stages of spore release.

  - Microstructure Evaluation: Observe the spore and hyphal structure of mushroom samples using Scanning Electron Microscopy (SEM).

  - Physicochemical Analysis: Homogenize mushroom tissue and extract enzymes. Measure the activities of SDH, G6PDH, and CAT, as well as H₂O₂ content, using commercial assay kits according to the manufacturers' instructions.

  - 4D-DIA Quantitative Proteomics:

    - Protein Extraction and Digestion: Extract total protein from mushroom tissue, reduce with DTT, alkylate with IAA, and digest with trypsin.

    - LC-MS/MS Analysis: Analyze the resulting peptides using a 4D-DIA (four-dimensional data-independent acquisition) workflow on an LC-MS/MS system coupled with ion mobility spectrometry.

    - Data Analysis: Process the raw data using specialized software (e.g., Spectronaut) to identify and quantify proteins. Perform statistical analysis to identify Differentially Expressed Proteins (DEPs) between control and ATP-treated groups.

    - Bioinformatic Analysis: Conduct Gene Ontology (GO) enrichment and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway analysis on the DEPs to identify activated biological processes and signaling pathways (e.g., mTOR signaling).
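DEP calling is commonly done with a fold-change filter combined with a multiple-testing correction. The sketch below illustrates that step under several assumptions:

- Per-protein p-values are already available from the upstream statistical test.
- The Benjamini-Hochberg procedure is used for the correction.
- The cut-offs are |log2 fold change| ≥ 1 and adjusted p < 0.05. These are illustrative assumptions, not values reported in the cited study.

The protein IDs and intensities are hypothetical.

```python
import math


def benjamini_hochberg(p_values: list[float]) -> list[float]:
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0  # also caps adjusted values at 1
    # Walk from the largest p-value down so adjusted values stay monotone
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def call_deps(proteins, fc_cutoff=1.0, q_cutoff=0.05):
    """proteins: list of (protein_id, mean_control, mean_treated, p_value).

    Returns (protein_id, log2FC, adjusted p) for proteins passing both thresholds.
    """
    qs = benjamini_hochberg([p[3] for p in proteins])
    deps = []
    for (pid, ctrl, trt, _), q in zip(proteins, qs):
        log2fc = math.log2(trt / ctrl)
        if abs(log2fc) >= fc_cutoff and q < q_cutoff:
            deps.append((pid, round(log2fc, 2), q))
    return deps


# Hypothetical mean intensities for control vs ATP-treated groups
example = [
    ("P001", 1.0e6, 4.0e6, 0.001),
    ("P002", 2.0e6, 2.2e6, 0.400),
    ("P003", 3.0e6, 1.0e6, 0.004),
    ("P004", 5.0e5, 1.5e6, 0.200),
]
for dep in call_deps(example):
    print(dep)
```

The resulting DEP list is what would then be passed to GO and KEGG enrichment.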

Signaling Pathways and Mechanisms

The following diagrams, generated using Graphviz DOT language, illustrate the core mechanisms by which ATP modulates product quality.

ATP Mechanism on Fruit Quality

Proteomic Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for ATP-Related Quality Research

| Reagent / Material | Function / Role in Experimentation |
|---|---|
| Adenosine Triphosphate (ATP) | Exogenous energy source applied to treatments to directly enhance cellular energy status and study its effect on maintaining CQAs [14] [15]. |
| 2,4-Dinitrophenol (DNP) | Respiratory uncoupler applied as an energy-depleting contrast treatment; it disrupts mitochondrial ATP synthesis so that energy is dissipated as heat, lowering ATP levels and accelerating quality decline [14]. |
| Enzyme Assay Kits (e.g., for SDH, G6PDH, CAT) | Commercial kits used for precise, reproducible measurement of the activity of key enzymes involved in energy metabolism (SDH, G6PDH) and the antioxidant system (CAT) [15]. |
| Reagents for Proteomics (DTT, IAA, Trypsin) | Key chemicals for protein preparation: DTT (Dithiothreitol) reduces disulfide bonds; IAA (Iodoacetamide) alkylates cysteine residues; Trypsin digests proteins into peptides for LC-MS/MS analysis [15]. |
| Cellulose & Hemicellulose Assay Reagents | Chemicals used in colorimetric or gravimetric methods to quantify the content of these key cell wall polysaccharides, which are direct indicators of textural CQAs like firmness [14]. |
| Antioxidant Assay Reagents (for AsA, GSH) | Reagents used to spectrophotometrically measure the concentration of non-enzymatic antioxidants like Ascorbic Acid (AsA) and Glutathione (GSH), indicating the redox state of the tissue [14]. |

The paradigm for validating analytical methods is undergoing a fundamental shift across scientific disciplines, from food chemistry to pharmaceutical research. This transition moves from a checklist approach of verifying fixed performance parameters to a holistic, science-based framework centered on an Analytical Target Profile (ATP). The ATP defines the required quality of the analytical result based on its intended use, providing a structured framework for development and validation [16].

Traditional method validation often encounters challenges due to discrepancies and contradictory information among numerous international guidelines, leading to potential confusion regarding terminology and experimental requirements for parameters like accuracy, precision, and specificity [17]. The ATP approach, underpinned by Analytical Quality by Design (AQbD) principles, seeks to overcome these inconsistencies by establishing performance-based objectives at the outset, ensuring methods are robust, reliable, and fit-for-purpose throughout their entire lifecycle [16].

Comparative Analysis: Traditional versus ATP-Based Approaches

The core difference between the two paradigms lies in their philosophy and execution. The traditional model is often reactive, validating a finalized method against a fixed set of parameters. In contrast, the ATP model is proactive and strategic, guiding method development from the beginning to meet predefined quality standards.

Table 1: Core Differences Between Traditional and ATP-Based Analytical Approaches

| Feature | Traditional Approach | ATP-Based Approach |
|---|---|---|
| Core Philosophy | Compliance with a fixed checklist of validation parameters [17]. | Fulfillment of a predefined ATP, ensuring fitness for purpose [16]. |
| Development Mindset | Often linear and empirical (e.g., One Factor at a Time) [16]. | Systematic, risk-based, and iterative using AQbD principles [16]. |
| Primary Focus | Verification of performance parameters post-development [12]. | Designing quality into the method from the start [13]. |
| Defining Requirements | Fixed acceptance criteria for universal parameters (e.g., R² ≥ 0.999) [12]. | Flexible, performance-based objectives tailored to the method's intent [16]. |
| Control Strategy | Fixed operational conditions and system suitability tests [12]. | A robust control strategy potentially including a Method Operable Design Region (MODR) [16]. |
| Lifecycle Management | Often rigid, requiring major revalidation for changes [17]. | Flexible within the defined design space, facilitating continual improvement [16]. |

Performance-Based Objectives in Practice: ATP in Food and Pharmaceutical Research

A comparison of hygiene monitoring methods in food chemistry shows how performance-based objectives work in practice. A 2023 study compared the traditional coliform paper test with the rapid adenosine triphosphate (ATP) bioluminescence assay for assessing kitchenware sanitation [18]. The coliform test, a standard method, gives a binary result (positive/negative) only after a lengthy incubation. The ATP bioluminescence method is not yet a standard method, but it gives a rapid, quantitative result in Relative Light Units (RLU) that correlates with microbial contamination levels [18].

This case illustrates a shift toward valuing rapid, on-site results that support immediate decision-making. That performance objective is defined by the need for efficient hygiene supervision. A kappa coefficient of 0.549 indicated moderate agreement between the two methods. The ATP method therefore gives a reliable, though not identical, assessment of cleanliness that serves a different performance objective [18].
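The kappa coefficient measures how far the two methods agree beyond what chance alone would produce. The sketch below computes Cohen's kappa from a 2 × 2 table of positive/negative calls made by both methods on the same surfaces. The counts are hypothetical and are not the data from the cited study.

```python
def cohens_kappa(both_pos: int, a_pos_b_neg: int, a_neg_b_pos: int, both_neg: int) -> float:
    """Cohen's kappa for two methods making binary (positive/negative) calls."""
    n = both_pos + a_pos_b_neg + a_neg_b_pos + both_neg
    p_observed = (both_pos + both_neg) / n
    # Marginal positive rates for method A (e.g., ATP assay) and method B (e.g., coliform test)
    a_pos = (both_pos + a_pos_b_neg) / n
    b_pos = (both_pos + a_neg_b_pos) / n
    # Agreement expected if the two methods were independent
    p_expected = a_pos * b_pos + (1 - a_pos) * (1 - b_pos)
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical counts: 100 surfaces tested by both methods
kappa = cohens_kappa(both_pos=30, a_pos_b_neg=15, a_neg_b_pos=5, both_neg=50)
print(round(kappa, 3))
```

On the widely used Landis and Koch scale, kappa values between 0.41 and 0.60 are read as moderate agreement. The reported 0.549 falls in that band.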

In pharmaceuticals, the ATP is formally defined as a prospective summary of the analytical procedure's requirements. It is established before development and drives the entire method lifecycle [16]. A well-constructed ATP includes:

- The Analyte and its Level: What is being measured and at what concentration (e.g., trace impurity vs. major component).

- The Analytical Technique: The intended technology, selected based on its ability to meet the ATP [19].

- Performance Criteria: Defined based on the method's purpose, including parameters such as accuracy, precision, and specificity [16].

- Business and End-User Needs: Throughput, cost, and ease of use in the quality control (QC) laboratory [16].

Experimental Protocols

Protocol 1: Establishing an Analytical Target Profile (ATP)

This protocol outlines the steps for defining an ATP, the critical first step in a modern, performance-based method development process.

1. Define the Analytical Need: Formulate the primary question the method must answer (e.g., "What is the concentration of allergen X in product Y?"). This determines the method's purpose [16].

2. Define the QTPP and CQAs (for drug products): For pharmaceutical applications, the ATP is informed by the Quality Target Product Profile (QTPP) and the drug product's Critical Quality Attributes (CQAs) [13].

3. Draft the ATP Document: Create a document containing the following elements [16]:

   - Analyte and Matrix: Clearly identify the substance to be measured and the sample material.
   - Intended Purpose: State the method's use (e.g., identity test, quantitative assay for release, impurity testing).
   - Performance Criteria: Define the required performance for each relevant parameter:
     - Specificity: Ability to measure the analyte unequivocally in the presence of potential interferents [12].
     - Accuracy/Trueness: Closeness of agreement between the accepted reference value and the value found [17].
     - Precision: Degree of agreement among individual test results (repeatability, intermediate precision) [12].
     - Range: The interval between the upper and lower levels of analyte for which suitable precision and accuracy are demonstrated [12].
     - Reportable Range and Linearity: The range of concentrations over which the method will be used and the required correlation coefficient [12].
   - Business/Operational Requirements: Specify needs for sample throughput, analysis time, cost-per-test, environmental impact ("green" analytics), and ease of transfer to QC labs [19] [16].
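Keeping the ATP as a structured record makes it easier to reuse its criteria later as validation acceptance limits. The sketch below is one hypothetical way to encode the elements listed in step 3. The field names, the reportable range, and the unit are illustrative assumptions, not a regulatory template. The example criteria reuse values that appear elsewhere in this guide.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class PerformanceCriterion:
    characteristic: str            # e.g. "accuracy", "precision"
    requirement: str               # human-readable acceptance criterion
    limit: Optional[float] = None  # optional numeric limit reused as a validation acceptance value


@dataclass
class AnalyticalTargetProfile:
    analyte: str
    matrix: str
    intended_purpose: str
    reportable_range: tuple[float, float]
    range_unit: str
    criteria: list[PerformanceCriterion] = field(default_factory=list)
    operational_needs: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the ATP so it can be stored as a controlled document."""
        return json.dumps(asdict(self), indent=2, ensure_ascii=False)


# Hypothetical ATP built around the example question in step 1
atp = AnalyticalTargetProfile(
    analyte="allergen X",
    matrix="product Y",
    intended_purpose="quantitative assay for release",
    reportable_range=(1.0, 100.0),
    range_unit="mg/kg",
    criteria=[
        PerformanceCriterion("specificity", "no interference from matrix components"),
        PerformanceCriterion("accuracy", "mean recovery 95-105%"),
        PerformanceCriterion("precision", "repeatability RSD <= 2.0%", limit=2.0),
    ],
    operational_needs=["transferable to QC laboratory"],
)
print(atp.to_json())
```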

Protocol 2: Method Development and Validation Based on ATP

Once the ATP is established, it guides the subsequent development and validation activities.

1. Technique Selection: Based on the ATP, select the most appropriate analytical technique (e.g., HPLC, GC, SFC, CE). For instance, Supercritical Fluid Chromatography (SFC) may be selected for chiral separations, water-sensitive analytes, or to meet sustainability goals [19].

2. Risk Assessment and Knowledge Management: Use risk management tools (e.g., FMEA) to identify method parameters (Critical Method Parameters, CMPs) that can impact the performance criteria outlined in the ATP [16].

3. Systematic Experimentation (DoE): Instead of a one-factor-at-a-time approach, use Design of Experiments (DoE) to efficiently explore the impact of CMPs and their interactions on method performance. This builds robustness into the method [16].

4. Define the Control Strategy: Establish an Analytical Procedure Control Strategy (APCS) to ensure the method consistently meets the ATP. This includes system suitability tests (SSTs) derived from the ATP requirements [16].

5. Method Validation: Perform validation studies to experimentally demonstrate that the method performs as specified in the ATP, following ICH Q2(R2) principles [16]. The acceptance criteria for each validation parameter are directly taken from the ATP.
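Step 3 can be illustrated with a two-level full factorial design, the simplest DoE screening layout. The sketch below makes these assumptions:

- There are three hypothetical CMPs: column temperature, flow rate, and mobile-phase pH.
- Each main effect is estimated as the mean response at the high level minus the mean response at the low level.
- The resolution values are invented for illustration.

Real studies often add centre points, replicates, and interaction terms.

```python
from itertools import product
from statistics import mean


def full_factorial(factors: list[str]) -> list[dict[str, int]]:
    """Two-level full factorial design in coded units (-1 = low, +1 = high)."""
    return [dict(zip(factors, levels)) for levels in product((-1, 1), repeat=len(factors))]


def main_effects(design: list[dict[str, int]], responses: list[float]) -> dict[str, float]:
    """Main effect = mean response at +1 minus mean response at -1, per factor."""
    effects = {}
    for factor in design[0]:
        high = [y for run, y in zip(design, responses) if run[factor] == 1]
        low = [y for run, y in zip(design, responses) if run[factor] == -1]
        effects[factor] = mean(high) - mean(low)
    return effects


cmps = ["temperature", "flow_rate", "pH"]  # hypothetical critical method parameters
design = full_factorial(cmps)              # 2^3 = 8 runs
# Hypothetical critical-pair resolution (Rs) for each run, in design order
resolution = [1.8, 2.6, 1.7, 2.5, 1.9, 2.8, 1.8, 2.7]
for name, effect in main_effects(design, resolution).items():
    print(f"{name}: {effect:+.2f}")
```

In this invented data set, pH dominates resolution. A factor like that would be carried forward into a finer optimization and into the control strategy.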

Diagram 1: ATP-Based Method Lifecycle Workflow. This workflow illustrates the systematic, iterative process of developing and maintaining an analytical method based on predefined performance objectives, in alignment with ICH Q14 guidelines [16].

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of analytical methods, whether traditional or ATP-based, relies on key reagents and technologies.

Table 2: Essential Research Reagent Solutions for Analytical Method Development

| Item | Function/Application | Example Use-Case |
|---|---|---|
| ATP Bioluminescence Assay Kits | Rapid, on-site detection of organic debris via adenosine triphosphate (ATP) from microbial and food residues [18] [20]. | Hygiene monitoring of kitchenware surfaces; verification of cleaning protocols in food manufacturing [18]. |
| Advanced Chromatography Columns | Stationary phases for separation techniques (e.g., UPLC, SFC, HPLC). Selection is critical for achieving specificity [19] [21]. | Chiral separations in API development using SFC [19]; impurity profiling in drug substances using UPLC-MS/MS [21]. |
| LC-MS/MS Compatible Solvents & Reagents | High-purity mobile phase components and additives for mass spectrometry detection to minimize background noise and ion suppression [21]. | Quantitative bioanalysis of drugs and metabolites in biological matrices during preclinical DMPK studies [21]. |
| Chemometric Software Packages | Statistical tools for designing experiments (DoE), analyzing multivariate data, and building predictive models from complex spectral data [22]. | Developing non-destructive spectroscopic methods (NIR, Raman) for food authentication and quality analysis [22]. |
| System Suitability Test (SST) Standards | Reference materials used to verify that the chromatographic system is performing adequately before sample analysis [16]. | Part of the control strategy to ensure daily performance of a validated HPLC method meets ATP criteria [16]. |

The evolution from fixed-parameter checking to performance-based objectives, crystallized in the Analytical Target Profile (ATP), represents a significant advancement in analytical science. This approach is formalized in guidelines like ICH Q14. It provides a structured framework for developing methods that are not only compliant but also robust, reliable, and closely aligned with their intended purpose, whether that purpose is ensuring drug safety or verifying food quality [16].

This paradigm shift emphasizes building quality into the method from the very beginning, guided by a clear understanding of the required analytical performance. By adopting an ATP and AQbD framework, researchers and drug development professionals can enhance regulatory success, improve efficiency, and foster a culture of continuous improvement throughout the analytical method lifecycle.

Building Your ATP: A Step-by-Step Methodology for Food Chemistry Applications

In analytical chemistry, particularly within food chemistry and pharmaceutical development, defining the intended purpose and scope of an analytical procedure is the critical first step that forms the foundation for all subsequent activities. This initial stage determines the direction for method development, validation, and eventual application, ensuring the resulting data is fit-for-purpose. A clearly defined purpose and scope provides the framework for establishing the Analytical Target Profile (ATP), which prospectively summarizes the performance requirements for the procedure [6] [23]. For researchers and scientists, this strategic approach transforms method development from a prescriptive, "check-the-box" exercise into a systematic, science-based process that aligns with modern regulatory guidelines such as ICH Q14 and ICH Q2(R2) [6] [24]. This document outlines the principles and practical protocols for effectively establishing the purpose and scope of an analytical procedure within the context of food chemistry research.

Core Principles: From Purpose to Performance

The Role of the Analytical Target Profile (ATP)

The Analytical Target Profile (ATP) is a central concept introduced in ICH Q14 that formalizes the definition of an analytical procedure's purpose [6] [23]. It is a prospective summary that describes the intended purpose of the analytical procedure and its required performance characteristics [6]. Defining the ATP at the outset ensures that the method is designed to be fit-for-purpose from the very beginning [6]. The ATP directly links the analytical procedure to the Quality Target Product Profile (QTPP) and the Critical Quality Attributes (CQAs) of the product or compound under investigation [24]. For a food chemistry researcher, this could mean linking a method to a food's safety, authenticity, or nutritional value.

Key Questions to Define Purpose and Scope

Before drafting the ATP, researchers must answer fundamental questions about the analytical need. The table below summarizes these critical considerations.

Table 1: Key Questions to Define Analytical Procedure Purpose and Scope

| Category | Guiding Questions for Researchers |
|---|---|
| Analyte & Matrix | What specific analyte(s) must be measured? What is the chemical and physical nature of the sample matrix (e.g., solid, liquid, complexity)? |
| Intended Use | Is the method for qualitative identification or quantitative measurement? Is it for release, stability testing, raw material screening, or in-process control? |
| Performance Needs | What level of accuracy and precision is required for decision-making? What is the required sensitivity (LOD/LOQ)? What is the expected concentration range? |
| Operational Context | Where will the method be used (R&D lab, QC lab, production floor)? What are the sample throughput requirements? What technical expertise and equipment are available? |

Experimental Protocols for Scoping and Pre-Development

A systematic, science-based approach to defining the procedure's scope involves gathering key information through specific experimental and literature-based activities.

Protocol for Sample and Analyte Characterization

Objective: To understand the fundamental physicochemical properties of the analyte and the sample matrix to inform technique selection and method development.

Materials:

- Purified analyte standard

- Representative sample matrix (blank and fortified)

- Relevant solvents and reagents

- Instrumentation: HPLC-UV/VIS, GC-MS, LC-MS, pH meter, balance

Methodology:

- Analyte Properties Profiling:

  - Determine solubility in various solvents (water, methanol, acetonitrile, hexane).

  - Estimate or measure key physicochemical properties: pKa (for ionizable compounds), LogP/LogD (lipophilicity), and chemical stability under various conditions (e.g., pH, heat, light) [19].

  - Identify the analyte's spectral properties (UV-Vis absorbance maxima, fluorescence) using a spectrophotometer.

- Matrix Characterization:

  - Analyze a blank (analyte-free) sample matrix to identify potential interfering components.

  - Perform forced degradation studies on the sample (e.g., exposure to acid, base, oxidation, heat, and light) to understand potential degradation products and the stability-indicating requirements of the method [24].

- Documentation: Record all data in a technical report that captures process and formulation development activities, which will feed into the ATP [24].
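When pKa and LogP are known, LogD at a given pH can be estimated for a simple monoprotic compound. This helps anticipate retention and extraction behaviour at the chosen mobile-phase or extraction pH. The sketch below uses the textbook approximation in which only the neutral species partitions into the organic phase, so it does not apply to zwitterions or multiprotic analytes. The example analyte values are hypothetical.

```python
import math


def log_d(log_p: float, pka: float, ph: float, kind: str) -> float:
    """Estimate LogD for a monoprotic acid or base (neutral-species partitioning only)."""
    if kind == "acid":
        ionised_ratio = 10 ** (ph - pka)   # [A-]/[HA]
    elif kind == "base":
        ionised_ratio = 10 ** (pka - ph)   # [BH+]/[B]
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return log_p - math.log10(1 + ionised_ratio)


# Hypothetical weak acid (pKa 4.2, LogP 2.0) across a typical reversed-phase pH window
for ph in (2.5, 4.2, 7.0):
    print(ph, round(log_d(2.0, 4.2, ph, "acid"), 2))
```

At pH = pKa, half of the analyte is ionized and LogD is LogP minus log10(2), about 0.3 units lower. Two pH units above the pKa, a weak acid becomes far more hydrophilic. This matters both for reversed-phase retention and for choosing extraction solvents.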

Protocol for Defining Performance Criteria via the ATP

Objective: To formally document the quality standards the analytical procedure must meet.

Materials: Data generated in the sample and analyte characterization protocol (Protocol 3.1), prior knowledge, and regulatory or internal quality requirements.

Methodology:

- Define the Attribute and Procedure Type: Clearly state what is being measured (e.g., "concentration of preservative X") and the type of procedure (e.g., identity test, quantitative assay for impurity, limit test for a contaminant).

- Establish Performance Criteria: Based on the intended use from Table 1, define and justify numerical targets for the following characteristics [6] [23]:

  - Accuracy (e.g., mean recovery of 95-105%)

  - Precision (e.g., Repeatability %RSD ≤ 2.0%)

  - Specificity (e.g., baseline separation from all known interferences)

  - Linearity and Range (e.g., a range of 50-150% of target concentration with R² ≥ 0.998)

  - Limit of Detection (LOD) and Quantitation (LOQ) (e.g., LOQ at 0.05% of the target concentration)

- Document the ATP: Create a controlled document that includes all the above information. This document will serve as the target for method development and the benchmark for validation [6] [24].
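The example criteria above translate directly into checks on validation data. The sketch below:

- computes mean recovery, repeatability RSD, and calibration R²;
- estimates LOD and LOQ with the ICH Q2 calibration-curve approach (3.3σ/S and 10σ/S), taking σ as the residual standard deviation of the regression;
- compares the results with the example limits listed above.

All data are hypothetical. The pass/fail thresholds are the illustrative values from this protocol, not universal requirements.

```python
from statistics import mean, stdev


def linear_fit(x, y):
    """OLS slope, intercept, R^2 and residual standard deviation (n - 2 d.f.)."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return slope, intercept, 1 - ss_res / ss_tot, (ss_res / (n - 2)) ** 0.5


def evaluate_against_atp(conc, signal, spiked, found, replicates):
    """Compute validation statistics and compare them with the example ATP limits."""
    slope, _, r_squared, s_res = linear_fit(conc, signal)
    recoveries = [100 * f / s for f, s in zip(found, spiked)]
    results = {
        "mean_recovery_pct": mean(recoveries),
        "repeatability_rsd_pct": 100 * stdev(replicates) / mean(replicates),
        "r_squared": r_squared,
        "lod": 3.3 * s_res / slope,  # ICH Q2 calibration-curve approach
        "loq": 10 * s_res / slope,
    }
    checks = {
        "accuracy": 95.0 <= results["mean_recovery_pct"] <= 105.0,
        "precision": results["repeatability_rsd_pct"] <= 2.0,
        "linearity": results["r_squared"] >= 0.998,
    }
    return results, checks


# Hypothetical data: calibration at 50-150 % of target, spike recoveries, six replicates
results, checks = evaluate_against_atp(
    conc=[50, 75, 100, 125, 150],
    signal=[501, 748, 1003, 1249, 1502],
    spiked=[50, 100, 150],
    found=[49.2, 100.8, 148.5],
    replicates=[100.2, 99.6, 100.9, 99.8, 100.4, 100.1],
)
print(checks)
```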

Visualization of the Analytical Procedure Definition Workflow

The following diagram illustrates the logical workflow and key decision points for defining the purpose and scope of an analytical procedure, culminating in the ATP.

Diagram 1: Workflow for defining analytical procedure purpose and scope

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials essential for the initial scoping and characterization phases of analytical procedure development.

Table 2: Essential Reagents and Materials for Scoping and Characterization

| Item/Category | Function/Application |
|---|---|
| Certified Reference Materials (CRMs) | Provides a known quantity of the target analyte with high purity and traceability; essential for determining method accuracy, linearity, and for preparing calibration standards. |
| High-Purity Solvents (HPLC/MS Grade) | Used for sample preparation, dilution, and as mobile phase components; high purity is critical to minimize background noise and interference, especially for sensitive detection methods. |
| Buffer Salts & Additives | Used to control mobile phase pH and ionic strength; critical for modulating retention and selectivity, particularly for ionizable analytes in chromatographic separations. |
| Stable Isotope-Labeled Internal Standards | Used in mass spectrometry to correct for matrix effects and variability in sample preparation and ionization; improves data accuracy and precision. |
| Derivatization Reagents | Chemicals used to modify the analyte to enhance its detectability (e.g., UV/FL) or volatility for analysis by techniques like GC. |
| Solid-Phase Extraction (SPE) Sorbents | Used for sample clean-up and pre-concentration of analytes; helps remove matrix interferences and improves method sensitivity and robustness. |

In food chemistry research and quality-by-design frameworks, the Analytical Target Profile (ATP) is a foundational concept that prospectively defines the requirements for a measurement on a specific quality attribute. The ATP outlines the necessary performance criteria—such as specificity, accuracy, precision, and the maximum allowable uncertainty for a reportable result—to ensure confidence in quality decisions made from the analytical data [25]. This report provides a structured guide for selecting the most appropriate analytical technique—whether HPLC, GC, MS, or an emerging methodology—based on the specific goals defined in the ATP for a given food analysis application. The global chromatography food testing market, anticipated to grow from USD 24.27 billion in 2025 to USD 41.70 billion by 2034, reflects the critical and expanding role of these techniques in ensuring food safety, quality, and authenticity [26].

Core Analytical Techniques: Principles and ATP Alignment

The selection of an analytical technique must be driven by the physicochemical properties of the target analytes and the performance requirements stipulated in the ATP. The table below summarizes the primary techniques and their optimal applications in food analysis.

Table 1: Core Analytical Techniques and Their Alignment with ATP Requirements in Food Analysis

| Technique | Core Principle | Best-Suited Analytes in Food | Key Performance Metrics (from ATP) | Common Food Applications |
|---|---|---|---|---|
| High-Performance Liquid Chromatography (HPLC/UHPLC) | Separation based on differential partitioning between a liquid mobile phase and a stationary phase [27]. | Non-volatile, thermally labile, and polar compounds [27] [28]. | Specificity for target compounds in complex matrices, precision, accuracy, linearity of calibration [28]. | Analysis of vitamins, pigments, synthetic dyes, mycotoxins, pesticides, and pharmaceutical residues [26] [27] [28]. |
| Gas Chromatography (GC) | Separation of volatilized analytes between a gaseous mobile phase and a liquid stationary phase. | Volatile and semi-volatile, thermally stable compounds [29]. | Specificity, sensitivity (low LOD/LOQ), resolution of complex volatile profiles [30]. | Profiling of flavor compounds, fragrance allergens, pesticide residues, and volatile contaminants [26] [29]. |
| Mass Spectrometry (MS) - Hyphenated | Ionization and separation of ions based on their mass-to-charge ratio (m/z), often coupled with LC or GC for separation. | Virtually all classes of compounds, providing structural identity [31] [32]. | Unparalleled specificity and sensitivity, ability to identify unknown compounds, high confidence in authentication [31] [32]. | Targeted and untargeted analysis of contaminants, metabolomics, food fraud detection, and compound identification [30] [31]. |
| Ambient Ionization MS (AIMS) | Direct ionization and analysis of samples under ambient conditions with minimal preparation [32] [33]. | Broad range; ideal for rapid fingerprinting of surface compounds. | Speed of analysis (<1 min/sample), minimal sample preparation, high-throughput screening capability [32]. | Non-destructive authentication of herbs, spices, and complex foods; geographical origin verification [32] [33]. |

Ultra-High-Performance Liquid Chromatography (UHPLC) represents a significant advancement, utilizing sub-2µm particles and higher pressures to deliver superior resolution, sensitivity, and faster analysis times compared to traditional HPLC, making it ideal for high-throughput laboratory environments [26] [27]. The "green" analytical chemistry trend also encourages selecting techniques like UHPLC that reduce solvent consumption [27].
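The selection logic in Table 1 can be written as a first-pass rule. The sketch below is a deliberately coarse, hypothetical decision helper that works in three steps:

- It routes volatile, thermally stable analytes to GC and everything else to LC.
- It adds tandem MS when the ATP requires trace-level sensitivity, and single-stage MS when identity confirmation is the main need.
- It suggests ambient ionization MS with machine learning when rapid fingerprinting is the goal.

A real selection would also weigh the matrix, cost, and available instrumentation.

```python
from dataclasses import dataclass


@dataclass
class AnalyteNeeds:
    volatile: bool
    thermally_stable: bool
    needs_identity_confirmation: bool = False
    trace_level: bool = False
    rapid_fingerprinting: bool = False


def suggest_technique(needs: AnalyteNeeds) -> str:
    """Coarse first-pass suggestion mirroring Table 1 (hypothetical helper, not a rule set)."""
    if needs.rapid_fingerprinting:
        return "AIMS + machine learning"
    # Volatile, thermally stable analytes suit GC; everything else defaults to (U)HPLC
    separation = "GC" if needs.volatile and needs.thermally_stable else "LC"
    if needs.trace_level:
        return f"{separation}-MS/MS"  # tandem MS for sensitivity and selectivity
    if needs.needs_identity_confirmation:
        return f"{separation}-MS"
    return separation


# A non-volatile trace contaminant and a set of aroma volatiles (hypothetical inputs)
print(suggest_technique(AnalyteNeeds(volatile=False, thermally_stable=False, trace_level=True)))
print(suggest_technique(AnalyteNeeds(volatile=True, thermally_stable=True, needs_identity_confirmation=True)))
```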

Application-Oriented Technique Selection: From Targeted Quantification to Non-Destructive Authentication

Targeted Contaminant and Residue Analysis

For the precise quantification of specific organic contaminants, such as pesticides or veterinary drug residues, HPLC or UHPLC coupled with tandem mass spectrometry (LC-MS/MS) is often the gold standard. Elemental contaminants such as heavy metals are instead typically determined by atomic spectrometry (e.g., ICP-MS). LC-MS/MS provides the high sensitivity and specificity required to meet stringent regulatory limits. It can also analyze non-volatile and thermally labile compounds in complex food matrices like meat, dairy, and processed foods, which makes it indispensable for compliance and safety monitoring [31] [28]. The ATP for such applications would mandate low limits of detection (LOD) and quantification (LOQ), high accuracy, and robust precision.

Flavor, Fragrance, and Volatile Profiling

The analysis of volatile organic compounds responsible for aroma and flavor is the domain of Gas Chromatography-Mass Spectrometry (GC-MS). This technique effectively separates and identifies complex mixtures of esters, aldehydes, alcohols, and acids [30]. When integrated with sensory evaluation data, GC-MS provides a comprehensive chemical basis for flavor perception, crucial for product development and quality control of fruits, beverages, and spices [30].

Food Authentication and Fraud Detection

Combating food fraud requires non-targeted methods that can detect subtle chemical differences indicative of adulteration or misrepresentation of geographical origin. Emerging techniques like Ambient Ionization Mass Spectrometry (AIMS), paired with machine learning, are revolutionizing this field. For example, Vapor Assisted Desorption Chemical Ionization MS (VADCI-MS) has been successfully used to non-destructively authenticate the geographical origin of Zanthoxylum bungeanum (Sichuan pepper) with 96.88% accuracy in under one minute per sample [32]. The ATP for authentication prioritizes speed, non-destructiveness, and the ability to process and classify complex spectral fingerprints using chemometrics.

Table 2: Emerging and Integrated Approaches for Advanced Food Analysis

| Application Area | Recommended Technique(s) | Key Experimental Considerations | Recent Market & Research Trends |
|---|---|---|---|
| High-Throughput Quantification | UHPLC-MS, UPLC (CAGR ~18%) [26] | Column chemistry (e.g., C18), particle size (<2µm), mobile phase gradient, injection volume. | Demand for automated, high-throughput systems in labs [26] [34]. |
| Food Fraud & Origin Screening | AIMS (e.g., VADCI-MS, PS-MS) + Machine Learning [32] [33] | Minimal sample prep, chemometric model training/validation, database building. | Shift from targeted to non-targeted, rapid screening methods [33]. |
| Comprehensive Flavor Science | GC-MS + E-nose/E-tongue + Sensory Panels [30] | Headspace sampling, sensor array calibration, panelist training for QDA. | Integration of chemical data with sensory and neuroimaging (e.g., EEG) [30]. |
| Multi-Contaminant Screening | LC-MS/MS, GC-MS/MS | Sample cleanup (SPE, GPC), dynamic MRM modes, extensive libraries. | Driven by stricter regulations on PFAS, pesticides, and toxins [26] [31]. |

Detailed Experimental Protocols

Protocol 4.1: Quantitative Analysis of Mycotoxins in Cereals using UHPLC-MS/MS

Objective: To accurately quantify specific mycotoxins (e.g., aflatoxins) in a grain sample to ensure compliance with regulatory limits.

ATP Alignment: The method is designed to meet ATP requirements for high sensitivity (LOD in low ppb), specificity (unambiguous identification via MRM), accuracy (≥90% recovery), and precision (RSD ≤10%).

Step-by-Step Procedure:

- Sample Preparation: Homogenize a representative grain sample to a fine powder using a laboratory mill.

- Extraction: Weigh 5.0 ± 0.1 g of the homogenized sample into a 50 mL centrifuge tube. Add 20 mL of acetonitrile/water (80:20, v/v) extraction solvent. Shake vigorously for 60 minutes on an orbital shaker.

- Clean-up: Centrifuge the extract at 4000 rpm for 10 minutes. Pass a 5 mL aliquot of the supernatant through a dedicated mycotoxin solid-phase extraction (SPE) cartridge. Elute the analytes with an appropriate solvent (e.g., methanol).

- Concentration & Reconstitution: Evaporate the eluate to dryness under a gentle stream of nitrogen. Reconstitute the residue in 1 mL of initial mobile phase (e.g., water/methanol 95:5) and filter through a 0.22 µm PVDF syringe filter into an LC vial.

- UHPLC-MS/MS Analysis:

  - Column: C18, 100 mm × 2.1 mm, 1.7 µm.

  - Mobile Phase: A: Water with 0.1% Formic Acid; B: Methanol with 0.1% Formic Acid.

  - Gradient: 5% B to 95% B over 10 minutes.

  - Flow Rate: 0.4 mL/min.

  - Injection Volume: 5 µL.

  - MS Detection: Electrospray Ionization (ESI) in positive mode. Monitor at least two precursor-to-product ion transitions per mycotoxin using Multiple Reaction Monitoring (MRM).

- Quantification: Use a matrix-matched calibration curve of the target mycotoxin standards (e.g., 1-100 ppb) for accurate quantification, correcting for matrix effects.
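The quantification step can be sketched as a least-squares fit of the matrix-matched standards, followed by back-calculation to a mass fraction in the grain. The sketch rests on these assumptions:

- The calibration levels are expressed in ng/mL in the final 1 mL reconstituted extract.
- The 5 mL SPE aliquot represents 5/20 of the 5.0 g test portion, ignoring any volume contraction of the extraction solvent.
- No recovery correction is applied.

The peak areas and function names are hypothetical.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def mycotoxin_ug_per_kg(peak_area, cal_conc_ng_ml, cal_areas,
                        sample_mass_g=5.0, extract_volume_ml=20.0,
                        aliquot_ml=5.0, final_volume_ml=1.0):
    """Back-calculate a grain mass fraction (ug/kg) from one MRM peak area."""
    slope, intercept = fit_line(cal_conc_ng_ml, cal_areas)
    conc_in_vial = (peak_area - intercept) / slope  # ng/mL in the LC vial
    # Grams of grain represented by each mL of reconstituted extract
    grams_per_ml = sample_mass_g * (aliquot_ml / extract_volume_ml) / final_volume_ml
    return conc_in_vial / grams_per_ml  # ng/g, numerically equal to ug/kg


def meets_recovery_target(measured, spiked, minimum_pct=90.0):
    """Check the ATP accuracy target (>= 90 % recovery) for a spiked blank."""
    return 100.0 * measured / spiked >= minimum_pct


# Hypothetical matrix-matched calibration (ng/mL) and peak areas
cal_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
cal_area = [250.0, 1050.0, 2050.0, 10050.0, 20050.0]
result = mycotoxin_ug_per_kg(2550.0, cal_conc, cal_area)
print(round(result, 2))                  # 10.0
print(meets_recovery_target(9.3, 10.0))  # True
```

With these volumes, each millilitre in the vial corresponds to 1.25 g of grain. A vial concentration of 12.5 ng/mL therefore corresponds to 10 µg/kg in the sample.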

Protocol 4.2: Non-Destructive Authentication of Spices using AIMS and Machine Learning

Objective: To rapidly differentiate and authenticate the geographical origin of a spice sample (e.g., Sichuan pepper) without destructive sample preparation.

ATP Alignment: This protocol fulfills ATP needs for rapid analysis (<1 min/sample), non-destructiveness, and high classification accuracy for origin verification.

Step-by-Step Procedure:

- Minimal Sample Handling: Place a single, intact dried spice berry (or a small pinch of powder) directly into the sample chamber or holder of the AIMS instrument (e.g., VADCI-MS, PS-MS). No extraction is required.

- MS Fingerprint Acquisition: Initiate analysis. The ambient ionization source (e.g., using a vaporized solvent) desorbs and ionizes molecules from the sample surface in ambient air. Acquire mass spectral data for approximately 30-60 seconds per sample to obtain a full mass fingerprint (e.g., m/z 50-1200).

- Data Preprocessing & Feature Selection: Subject the raw MS data from a large number of authentic reference samples (e.g., 100+ batches from known origins) to preprocessing: baseline correction, peak alignment, and normalization. Subsequently, use statistical methods (e.g., ANOVA) to select a subset of key m/z features (e.g., 415 peaks) that are most discriminatory between classes [32].

- Machine Learning Model Training: Use the selected features from the reference set to train a supervised classification model, such as a Support Vector Machine (SVM) or Random Forest. Use a portion of the data (e.g., 70-80%) for training, so that the model learns the spectral patterns associated with each geographical origin.

- Model Validation & Prediction: Validate the trained model's performance using a separate, blinded test set (the remaining 20-30% of reference samples) to report accuracy, precision, and recall. Once validated, the model can be used to predict the origin of unknown samples based on their AIMS fingerprint [32] [33].
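The data-analysis steps above can be illustrated with a short sketch on synthetic data. It makes several assumptions that are not part of the protocol: a nearest-centroid classifier is swapped in for SVM or Random Forest to keep the example dependency-free (NumPy only), total-ion-current (TIC) normalization stands in for the full preprocessing chain, and the two "discriminating" m/z features are planted in random data. One design choice is deliberate: the ANOVA feature selection is fitted on the training portion only, so the test set stays blinded and the reported accuracy is not optimistically biased. All function names are hypothetical.

```python
import numpy as np

def tic_normalize(X):
    """Scale each spectrum (row) so its intensities sum to 1 (total ion current)."""
    X = np.asarray(X, dtype=float)
    return X / X.sum(axis=1, keepdims=True)

def anova_f(X, y):
    """One-way ANOVA F statistic of every feature (column) across the classes in y."""
    classes = np.unique(y)
    n, k = X.shape[0], len(classes)
    grand_mean = X.mean(axis=0)
    ss_between = np.zeros(X.shape[1])
    ss_within = np.zeros(X.shape[1])
    for c in classes:
        Xc = X[y == c]
        mean_c = Xc.mean(axis=0)
        ss_between += len(Xc) * (mean_c - grand_mean) ** 2
        ss_within += ((Xc - mean_c) ** 2).sum(axis=0)
    # Guard against division by zero for features with no within-class variance
    return (ss_between / (k - 1)) / np.maximum(ss_within / (n - k), 1e-12)

def stratified_split(y, train_fraction=0.75, seed=0):
    """Random train/test indices that keep each origin's share of samples."""
    rng = np.random.default_rng(seed)
    train, test = [], []
    for c in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == c))
        n_train = int(round(train_fraction * len(idx)))
        train.extend(idx[:n_train])
        test.extend(idx[n_train:])
    return np.array(train), np.array(test)

def nearest_centroid_predict(X_train, y_train, X_test):
    """Assign each test spectrum to the class whose mean spectrum is closest (Euclidean)."""
    classes = np.unique(y_train)
    centroids = np.vstack([X_train[y_train == c].mean(axis=0) for c in classes])
    dist = ((X_test[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[np.argmin(dist, axis=1)]

def classification_report(y_true, y_pred):
    """Overall accuracy plus per-class precision and recall."""
    report = {"accuracy": float(np.mean(y_true == y_pred))}
    for c in np.unique(y_true):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        report[f"precision[{c}]"] = float(tp / (tp + fp)) if tp + fp else 0.0
        report[f"recall[{c}]"] = float(tp / (tp + fn)) if tp + fn else 0.0
    return report

# Synthetic "fingerprints": 3 origins x 40 samples x 50 m/z features
rng = np.random.default_rng(0)
y = np.repeat(np.array(["origin_A", "origin_B", "origin_C"]), 40)
X = rng.normal(100.0, 5.0, size=(len(y), 50))
for i, origin in enumerate(np.unique(y)):
    X[y == origin, 3] += 30.0 * i   # two planted features that carry origin information
    X[y == origin, 17] += 20.0 * i

X = tic_normalize(X)
train_idx, test_idx = stratified_split(y)
# Feature selection is fitted on the training portion only
selected = np.argsort(anova_f(X[train_idx], y[train_idx]))[::-1][:2]
y_pred = nearest_centroid_predict(X[train_idx][:, selected], y[train_idx],
                                  X[test_idx][:, selected])
report = classification_report(y[test_idx], y_pred)
print(sorted(selected.tolist()), report)
```

In a real study the same split, selection, and metric code would wrap the chosen SVM or Random Forest model instead of the nearest-centroid stand-in.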

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Food Analysis Techniques

| Item | Function/Application | Technical Notes |
|---|---|---|
| SPE Cartridges (C18, Ion-Exchange) | Sample clean-up and pre-concentration of analytes from complex food matrices. | Critical for reducing matrix effects in LC-MS/MS; selection depends on analyte polarity/charge [28]. |
| UHPLC Columns (e.g., C18, 1.7-1.8 µm) | High-resolution separation of complex mixtures. | Core of UHPLC performance; sub-2 µm or core-shell particles enable faster, more efficient separations [26] [27]. |
| Isotopically Labeled Internal Standards | Correction for analyte loss during sample preparation and for matrix effects during MS quantification. | Essential for achieving high accuracy and precision in quantitative LC-MS/MS assays [31]. |
| High-Resolution Accurate-Mass (HRAM) Mass Spectrometer (e.g., Orbitrap) | Accurate mass measurement for untargeted screening and identification of unknowns. | Provides the high resolution and mass accuracy needed for confident formula assignment in foodomics [31] [34]. |
| Chemometric Software | Processing and modeling of multivariate data from AIMS, GC-MS, or spectroscopic techniques. | Enables pattern recognition, classification, and feature selection for authentication and fraud detection [30] [32] [33]. |

Selecting the correct analytical technique is a critical step that is intrinsically guided by the Analytical Target Profile. From the robust, quantitative power of UHPLC-MS/MS for compliance testing to the rapid, non-destructive screening capabilities of AIMS with machine learning for authentication, each technology offers unique advantages. The future of food analysis lies in the intelligent integration of these techniques, leveraging automation, green chemistry principles, and advanced data analytics to meet the evolving demands of food safety, quality, and authenticity verification in a growing global market.

In the development of an Analytical Target Profile (ATP) for food chemistry research, establishing well-defined performance characteristics and acceptance criteria is a critical step. This step translates the qualitative goals of the ATP into quantitative, measurable standards that ensure the analytical method is fit for its intended purpose. These characteristics form the foundation for method validation and subsequent quality control, providing clear benchmarks for evaluating method performance and ensuring the reliability, accuracy, and reproducibility of data generated for food analysis, regulatory submissions, and quality assurance.

Key Performance Characteristics

For an analytical method to be considered valid, specific performance parameters must be evaluated and must meet pre-defined acceptance criteria. The International Council for Harmonisation (ICH) guideline Q2(R1), revised in 2023 as Q2(R2), provides a globally recognized framework for these validation parameters [12]. The following table summarizes the core characteristics and their typical acceptance criteria for a quantitative assay, such as the determination of an active ingredient or a major food component.

Table 1: Core Validation Parameters and Acceptance Criteria for a Quantitative Analytical Method

| Performance Characteristic | Definition | Typical Acceptance Criteria & Methodology |
|---|---|---|
| Specificity/Selectivity | The ability to assess the analyte unequivocally in the presence of other components, such as excipients, impurities, or the food matrix [12]. | The analyte peak should be well resolved from all other peaks (e.g., resolution Rs > 1.5), demonstrated by analyzing blank samples and samples spiked with potential interferents. |
| Accuracy | The closeness of agreement between the value found and the value accepted as a true or conventional reference value [12]. | Typically assessed by recovery studies. Recovery of the spiked analyte should be within 98–102% for the active pharmaceutical ingredient (API) in a drug product, with similar expectations for key food components [12]. |
| Precision | The degree of agreement among individual test results when the procedure is applied repeatedly to multiple samplings of a homogeneous sample. | Expressed as relative standard deviation (RSD). Repeatability: RSD ≤ 1.0% (same analyst and equipment, short time interval). Intermediate precision: RSD ≤ 2.0% (different analysts, days, or equipment) [12]. |
| Linearity | The ability of the method to obtain test results that are directly proportional to the concentration of the analyte within a given range [12]. | A minimum of 5 concentration levels is tested. The coefficient of determination (R²) should typically be ≥ 0.999 [12]. |
| Range | The interval between the upper and lower concentrations of analyte for which it has been demonstrated that the method has suitable levels of precision, accuracy, and linearity. | Established from the linearity, accuracy, and precision studies, confirming that the intended working concentrations fall within the validated interval. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [12]. | The method should maintain system suitability (e.g., resolution, tailing factor) when parameters such as flow rate (±0.1 mL/min), mobile phase pH (±0.2 units), or column temperature (±2°C) are varied [12]. |
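The linearity criterion in the table can be checked with an ordinary least-squares fit. The sketch below uses NumPy and hypothetical responses at 80-120 % of a target concentration; `linearity_check` is a hypothetical helper. It reports the coefficient of determination R² and the relative residuals. For a straight-line fit with an intercept, R² equals the square of Pearson's correlation coefficient r, so a limit stated for r is looser than the same number applied to R². Inspecting the residuals for curvature or trends is generally more informative than R² alone.

```python
import numpy as np

def linearity_check(conc, response, min_levels=5, min_r2=0.999):
    """Least-squares line plus the linearity criteria from the validation table."""
    conc = np.asarray(conc, dtype=float)
    response = np.asarray(response, dtype=float)
    slope, intercept = np.polyfit(conc, response, 1)
    fitted = slope * conc + intercept
    ss_res = np.sum((response - fitted) ** 2)
    ss_tot = np.sum((response - response.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot                            # coefficient of determination
    residual_pct = 100.0 * (response - fitted) / fitted   # look for curvature or trends
    passed = bool(len(conc) >= min_levels and r2 >= min_r2)
    return {"slope": slope, "intercept": intercept, "r2": r2,
            "residual_pct": residual_pct, "passed": passed}

# Hypothetical assay standards at 80-120 % of the target concentration
conc = [80, 90, 100, 110, 120]
resp = [801, 899, 1002, 1098, 1201]
result = linearity_check(conc, resp)
print(f"R2 = {result['r2']:.5f}, passed = {result['passed']}")
```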

Experimental Protocols for Verification

The following protocols provide detailed methodologies for establishing the key performance characteristics listed above.

Protocol for Determining Specificity

1. Objective: To demonstrate that the method can accurately quantify the target analyte without interference from the sample matrix, impurities, or degradation products.

2. Materials:

- HPLC system with UV-Vis or DAD detector

- Reference standards of the target analyte and known potential interferents

- Mobile phase and other solvents as per the developed method

3. Procedure:

- Prepare and inject a blank sample (the matrix without the analyte).

- Prepare and inject a standard solution of the pure analyte.

- Prepare and inject a sample solution spiked with the analyte and all known potential interferents (e.g., degradation products induced by stress conditions like heat or acid/base treatment).

- Compare the chromatograms to confirm that the analyte peak is pure and baseline-separated from any other peaks.

4. Data Analysis: Calculate the resolution (Rs) between the analyte peak and the closest eluting interfering peak. Acceptance is typically met if Rs is greater than 1.5.
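The resolution in the data-analysis step is usually calculated from retention times and peak widths. A minimal sketch, assuming Gaussian peaks and widths in the same time units as the retention times; the retention times and widths are hypothetical, as are the function names. The half-height form uses the factor √(2 ln 2) ≈ 1.18 that appears in pharmacopoeial resolution formulas; for Gaussian peaks both forms give the same value.

```python
import math

def resolution_baseline(t1, t2, w1, w2):
    """Rs from retention times and baseline (tangent) peak widths."""
    return 2.0 * abs(t2 - t1) / (w1 + w2)

def resolution_half_height(t1, t2, w1_half, w2_half):
    """Rs from peak widths at half height; sqrt(2 ln 2) ~ 1.18 assumes Gaussian peaks."""
    return math.sqrt(2.0 * math.log(2.0)) * abs(t2 - t1) / (w1_half + w2_half)

# Hypothetical: closest interferent at 5.80 min, analyte at 6.20 min
rs = resolution_baseline(5.80, 6.20, 0.20, 0.22)
print(f"Rs = {rs:.2f}, meets Rs > 1.5: {rs > 1.5}")
```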

Protocol for Determining Accuracy and Precision

1. Objective: To determine the closeness of measurement to the true value (accuracy) and the agreement between a series of measurements (precision).

2. Materials:

- Analyte reference standard

- Homogeneous representative sample matrix

- Appropriate analytical instrumentation (e.g., HPLC, GC)

3. Procedure:

- Prepare six independent samples of the matrix at 100% of the target concentration (n = 6).

- Prepare samples of the matrix spiked with the analyte at three concentration levels (e.g., 80%, 100%, 120% of the target) in triplicate.

- Analyze all samples according to the method.

4. Data Analysis:

- Accuracy: For each spike level, calculate the percentage recovery of the analyte, subtracting any native analyte measured in the unspiked matrix. The mean recovery should be within the pre-defined range (e.g., 98-102%).

- Precision: For the six samples at 100%, calculate the mean, standard deviation, and Relative Standard Deviation (RSD). The RSD should meet the repeatability criterion (e.g., ≤ 1.0%).
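The data-analysis step can be expressed in a few lines of standard-library Python. The spiked amounts and measured values below are hypothetical and expressed in arbitrary but consistent units; the `native` argument of the hypothetical `recovery_pct` helper subtracts analyte already present in the matrix, which is zero for a true blank. The RSD uses the sample standard deviation (n − 1), and the 98-102 % and 1.0 % limits are the example criteria from this protocol.

```python
import statistics

def recovery_pct(found, added, native=0.0):
    """Percent recovery of a spike after subtracting native analyte in the unspiked matrix."""
    return 100.0 * (found - native) / added

def rsd_pct(values):
    """Relative standard deviation (%) using the sample standard deviation (n - 1)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

# Hypothetical data: target = 10.0 units; spikes at 80, 100 and 120 % in triplicate
spikes = {
    8.0: [7.92, 8.05, 7.98],
    10.0: [9.95, 10.08, 10.02],
    12.0: [11.90, 12.05, 12.10],
}
mean_recovery = {
    added: statistics.mean(recovery_pct(f, added) for f in found)
    for added, found in spikes.items()
}

# Six independent samples at 100 % of the target concentration
repeatability = [10.02, 9.97, 10.05, 9.99, 10.03, 9.96]
rsd = rsd_pct(repeatability)

accuracy_ok = all(98.0 <= r <= 102.0 for r in mean_recovery.values())
precision_ok = rsd <= 1.0
for added, r in mean_recovery.items():
    print(f"spike {added:>5.1f}: mean recovery {r:.1f} %")
print(f"repeatability RSD {rsd:.2f} %, accuracy_ok={accuracy_ok}, precision_ok={precision_ok}")
```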

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents and materials are fundamental for developing and validating robust analytical methods in food chemistry.

Table 2: Key Research Reagent Solutions for Analytical Method Development

| Item | Function / Explanation |
|---|---|
| Certified Reference Standards | High-purity chemicals with certified concentration and identity; essential for method calibration, determining accuracy, and establishing linearity. |
| Chromatography Columns (e.g., C18, HILIC) | The stationary phase for separation; column selection (particle size, length, chemistry) is critical for achieving specificity and resolution of analytes from the food matrix [12]. |
| HPLC-Grade Solvents | High-purity solvents for mobile phase preparation; minimize baseline noise and ghost peaks, ensuring detection sensitivity and accuracy. |
| Stable Isotope-Labeled Internal Standards | Used in mass spectrometry to correct for matrix effects and losses during sample preparation; significantly improves the accuracy and precision of quantitative results. |
| Sample Preparation Kits (SPE, QuEChERS) | Solid-phase extraction (SPE) and QuEChERS (Quick, Easy, Cheap, Effective, Rugged, Safe) kits are vital for cleaning up complex food matrices, reducing interferences, and improving detection limits. |

Workflow and Relationship Visualizations

The following diagrams illustrate the logical relationships and workflows involved in establishing performance characteristics.

ATP Validation Parameter Relationships

Method Validation Experimental Workflow

The Analytical Target Profile (ATP) is a foundational concept in Analytical Quality by Design (AQbD) that prospectively summarizes the performance requirements for an analytical procedure. In the context of food chemistry research, the ATP defines the criteria that a measurement method must meet to generate reportable results with acceptable uncertainty for making confident decisions about food safety, quality, and composition [25]. The ATP serves as the cornerstone of the analytical method lifecycle, guiding development, validation, and continuous verification activities while ensuring that methods remain fit-for-purpose throughout their use [25].

For food chemistry researchers, the ATP provides a structured framework for communicating analytical needs across multidisciplinary teams, including food scientists, regulatory affairs professionals, and quality control personnel. By explicitly defining measurement quality requirements rather than specific methodological approaches, the ATP offers flexibility in method selection and optimization while maintaining focus on the fundamental objective: producing reliable data to support food safety decisions and quality assessments [25]. This document provides a practical template for documenting ATPs in food chemistry research and illustrates its application through a real-world example from food safety monitoring.

Core Components of an Analytical Target Profile Template

A comprehensive ATP template for food chemistry applications should contain the following essential elements, which collectively define the analytical requirements for a specific measurement:

- Analyte and Matrix Definition: Precise identification of the target analyte(s) and the specific food matrix in which it will be measured

- Measurable Attribute: Clear description of what is being measured (e.g., concentration, presence/absence, compositional profile)

- Required Level of Uncertainty: Specification of the maximum acceptable uncertainty for the reportable result

- Performance Characteristics: Defined requirements for key method performance parameters including specificity, accuracy, precision, and range

- Decision Rules: Guidelines for interpreting results in the context of regulatory limits or quality specifications

- Acceptance Criteria: Predefined criteria that must be met to demonstrate the method is fit-for-purpose
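One way to keep these components explicit and machine-checkable is to record them as a structured object. The sketch below is hypothetical: the class and field names are illustrative, the values are taken from the aflatoxin B1 example in the template table that follows, and the decision level of 2.0 µg/kg is an invented placeholder, not a regulatory limit quoted from this guide. The decision rule shown (a result is declared non-compliant only when the result minus its expanded uncertainty exceeds the limit) is one common convention for contaminant control, assumed here for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical record of ATP components (field names are illustrative)."""
    analyte: str
    matrix: str
    unit: str
    target_uncertainty: float            # max expanded uncertainty at the decision level
    recovery_range: tuple[float, float]  # acceptable mean recovery, %
    max_rsd_r: float                     # repeatability RSD limit, %
    decision_level: float                # limit the result is compared against

    def method_meets_atp(self, mean_recovery, rsd_r, expanded_uncertainty):
        """Acceptance criteria: every performance requirement must be met."""
        low, high = self.recovery_range
        return (low <= mean_recovery <= high
                and rsd_r <= self.max_rsd_r
                and expanded_uncertainty <= self.target_uncertainty)

    def non_compliant(self, result, expanded_uncertainty):
        """Assumed decision rule: non-compliant only if result - U exceeds the limit."""
        return result - expanded_uncertainty > self.decision_level


atp = AnalyticalTargetProfile(
    analyte="Aflatoxin B1",
    matrix="Corn-based products",
    unit="µg/kg",
    target_uncertainty=0.5,
    recovery_range=(90.0, 110.0),
    max_rsd_r=15.0,
    decision_level=2.0,  # hypothetical placeholder, not a cited regulatory limit
)

print(atp.method_meets_atp(mean_recovery=96.0, rsd_r=8.5, expanded_uncertainty=0.4))
print(atp.non_compliant(result=2.3, expanded_uncertainty=0.4))
print(atp.non_compliant(result=2.6, expanded_uncertainty=0.4))
```

Keeping the ATP as data rather than prose makes it straightforward to re-run the same acceptance check after method transfer or revalidation.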

Table 1: Analytical Target Profile Template for Food Chemistry Applications

| ATP Component | Description | Food Analysis Example |
|---|---|---|
| Analyte | Specific substance or property being measured | Aflatoxin B1 |
| Matrix | Food material in which the analyte is determined | Corn-based products |
| Reportable Result | Format and units of the final value | Concentration in μg/kg |
| Target Measurement Uncertainty | Maximum acceptable uncertainty in the reportable result | ± 0.5 μg/kg at the decision level |
| Specificity | Ability to distinguish analyte from interfering components | No interference from other aflatoxins or matrix components |
| Accuracy/Trueness | Closeness of agreement between measured and true value | Recovery 90-110% |
| Precision | Closeness of agreement between independent measurements | RSDr (repeatability) ≤ 15% |