A Modern Lifecycle Approach to Managing Instrument Qualification and Analytical Method Validation

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for integrating modern instrument qualification with robust analytical method validation. Covering the latest regulatory trends, including the updated USP <1058> lifecycle model and ICH Q2(R2)/Q14 guidelines, it offers foundational principles, practical application strategies, troubleshooting techniques, and advanced validation approaches. The content is designed to help laboratories enhance data integrity, ensure regulatory compliance, and improve operational efficiency through risk-based lifecycle management and emerging technologies like AI.

Building a Solid Foundation: Principles, Regulations, and the Modern Qualification Lifecycle

In pharmaceutical analysis and drug development, ensuring that instruments and systems produce reliable data is paramount. Two key concepts in this realm are Analytical Instrument Qualification (AIQ) and Analytical Instrument and System Qualification (AISQ).

- Analytical Instrument Qualification (AIQ) is the process that guarantees an analytical instrument performs suitably for its intended purpose, contributing to confidence in the validity of generated data [1].

- Analytical Instrument and System Qualification (AISQ) is an updated term and framework that expands upon AIQ, providing a more integrated lifecycle approach to qualification and validation for a broader range of apparatus, instruments, and instrument systems [2].

These processes are critical for compliance with Good Manufacturing Practice (GMP) and other regulatory guidelines, ensuring the integrity of data used in drug development and quality control [1] [2].

The Evolution from the 4Q Model to a Three-Phase Lifecycle

The qualification process is undergoing a significant shift from a traditional model to a more modern, integrated lifecycle approach.

Traditional Model: The 4Qs

The well-established 4Q model subdivides qualification into four sequential stages [1]:

- Design Qualification (DQ): The documented collection of activities that define the functional and operational specifications and the intended use of the instrument.

- Installation Qualification (IQ): The assurance that the instrument is delivered as designed and specified, is properly installed, and that the environment is suitable.

- Operational Qualification (OQ): The verification that the instrument will function according to its operational specification in the selected environment.

- Performance Qualification (PQ): The confirmation that the instrument performs according to user-defined specifications and requirements in its actual operating environment.

In practice, the 4Q model is sometimes seen as too rigid, and the boundary between stages such as OQ and PQ can be difficult to define [1].

Modern Approach: The Integrated Three-Phase Lifecycle

A new integrated lifecycle model is now being introduced, deliberately deviating from the 4Q model [1] [2]. This approach aligns with modern validation guidance and consists of three core phases:

Phase 1: Specification and Selection. This initial stage covers specifying the instrument’s intended use in a User Requirements Specification (URS), selection, risk assessment, and purchase. The URS is a "living document" that may change over the instrument's lifecycle [2].

Phase 2: Installation, Qualification, and Validation. In this phase, the instrument is installed, components are integrated and commissioned, and qualification/validation is performed. This includes writing SOPs, conducting user training, and ultimately releasing the system for operational use [2].

Phase 3: Ongoing Performance Verification (OPV). This final, continuous phase demonstrates that the instrument continues to perform against the URS requirements throughout its operational life. It includes activities like maintenance, calibration, change control, and periodic review [2].

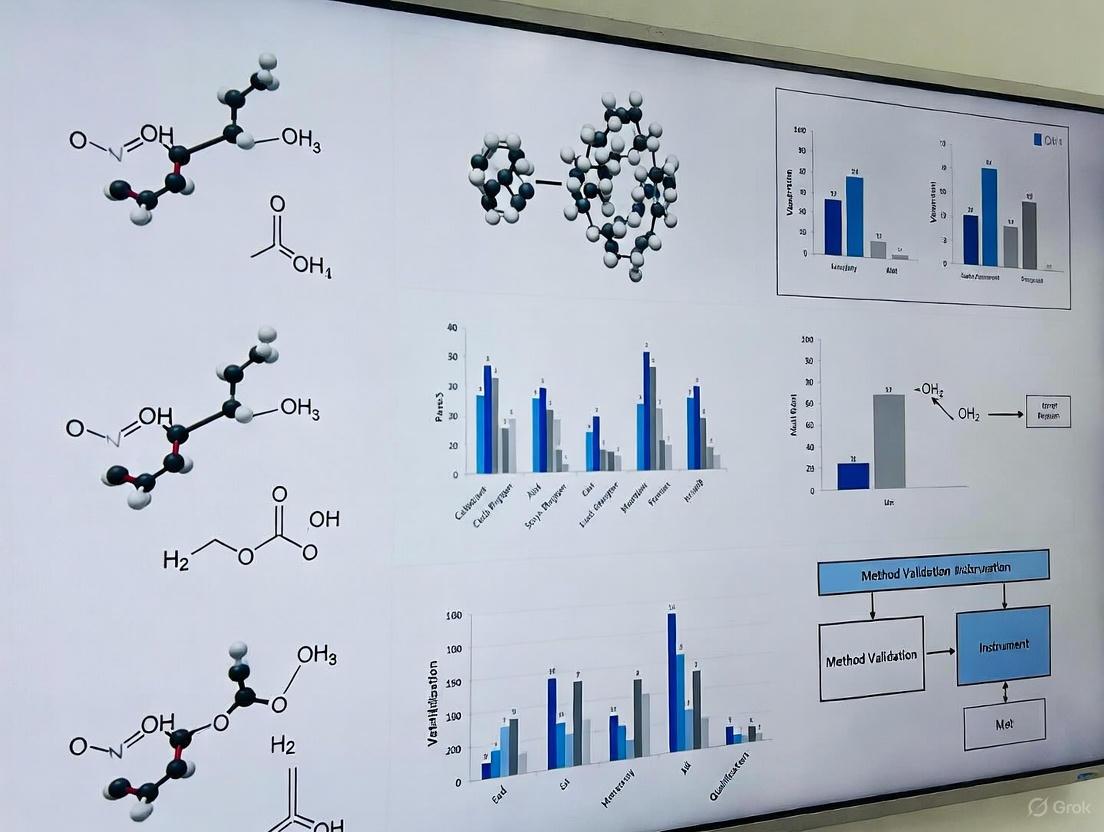

The following diagram illustrates the structure of this three-phase lifecycle:

Model Comparison Table

The table below summarizes the key differences and mappings between the traditional and modern qualification models.

| Aspect | Traditional 4Q Model | Modern Three-Phase Lifecycle |
|---|---|---|
| Core Philosophy | Sequential, stage-gated process [1]. | Integrated, continuous lifecycle approach [1] [2]. |
| Key Stages | DQ, IQ, OQ, PQ [1]. | 1. Specification & Selection; 2. Installation, Qualification & Validation; 3. Ongoing Performance Verification [2]. |
| Primary Focus | Documentary verification at specific stages [1]. | Overall process control and continued fitness for purpose over the entire instrument life [2]. |
| Stage 1 Focus | Design Qualification (DQ) focuses on defining specifications [1]. | Broader scope: User Requirements Spec (URS), selection, risk assessment, and purchase [2]. |
| Stage 2 Focus | IQ and OQ are distinct installation and operational verification stages [1]. | Integrated installation, commissioning, qualification, and validation activities [2]. |
| Stage 3 Focus | Performance Qualification (PQ) confirms initial performance [1]. | Ongoing Performance Verification (OPV) for continuous monitoring, maintenance, and change control [2]. |
| Adaptability | Can be rigid; difficult to differentiate OQ and PQ in practice [1]. | More flexible; allows for risk-based strategies tailored to instrument complexity [2]. |

Troubleshooting Guides & FAQs for Your Experiments

This section addresses common challenges you might encounter during instrument qualification in your research.

Frequently Asked Questions

Q1: What is the most critical element for success in the new three-phase lifecycle model? The key to success is Phase 1: Specification and Selection. If you fail to accurately define what you want the instrument or system to do in a comprehensive User Requirements Specification (URS), the subsequent phases will be built on an unstable foundation. The URS is a "living document" that should be updated as your knowledge of the system grows or your needs change [2].

Q2: How do I apply a risk-based approach to qualification? Instruments and systems are classified into groups (e.g., USP Groups A, B, and C) based on their complexity and risk to data integrity. The extent of qualification and validation activities is then scaled accordingly. A simple apparatus (Group A) requires minimal qualification, while a complex computerized system (Group C) requires extensive validation. This ensures effort is focused where it is most needed [2].
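As a rough illustration of how this scaling might be encoded in a laboratory inventory tool, the sketch below assigns a group from two yes/no attributes and looks up a qualification scope. The `Instrument` fields, the `classify` rule, and the activity lists in `QUALIFICATION_SCOPE` are all assumptions made for this example; a real classification depends on the intended use and should be justified in a documented risk assessment.

```python
from dataclasses import dataclass

# Illustrative scope per group. These activity lists are assumptions for
# this sketch, not wording taken from USP <1058>.
QUALIFICATION_SCOPE = {
    "A": ["observation of correct operation"],
    "B": ["IQ", "OQ", "calibration", "routine performance checks"],
    "C": ["URS", "IQ", "OQ", "PQ", "software validation",
          "ongoing performance verification"],
}


@dataclass
class Instrument:
    name: str
    produces_measurements: bool  # does it report a measured value?
    computerized: bool           # is it controlled by configurable software?


def classify(instrument: Instrument) -> str:
    """Return a hypothetical group assignment (A, B, or C)."""
    if instrument.computerized:
        return "C"
    if instrument.produces_measurements:
        return "B"
    return "A"


if __name__ == "__main__":
    for item in (
        Instrument("magnetic stirrer", False, False),
        Instrument("pH meter", True, False),
        Instrument("HPLC with data system", True, True),
    ):
        group = classify(item)
        print(item.name, group, QUALIFICATION_SCOPE[group])
```

The point of the lookup is that effort grows with the group, so the most complex systems receive the most testing.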

Q3: What does "fitness for intended use" actually mean for my instrument? An instrument is considered "fit for intended use" if it meets several criteria, including [2]:

- It is metrologically capable of operating over the ranges required by your analytical procedures.

- Its calibration is traceable to national or international standards.

- Its contribution to the overall measurement uncertainty of your analytical procedure is small and well-understood.

- Its critical parameters remain in a state of statistical control within established limits during Ongoing Performance Verification.

Q4: The 4Q model is deeply embedded in our SOPs. How crucial is it to switch to the new model? While the 4Q model is still recognized, the industry is moving towards the integrated lifecycle model because it is more flexible and aligned with modern regulatory guidance (like FDA Process Validation and ICH Q14) [2]. Adopting the new model is considered best practice for ensuring data integrity and regulatory compliance over the full instrument lifecycle. The transition can be managed by mapping your existing 4Q activities to the corresponding phases of the new model.

Common Qualification Issues and Resolutions

| Problem | Possible Root Cause | Recommended Resolution & Experiment Protocol |
|---|---|---|
| Failure during Operational Qualification (OQ) | Incorrect installation, unsuitable operating environment, or faulty instrument component. | Protocol: 1. Re-verify Installation Qualification (IQ) prerequisites. 2. Check environmental conditions (temp, humidity). 3. Consult vendor installation logs. 4. Isolate and retest the failed parameter. 5. Engage vendor support if a hardware fault is suspected. |
| Performance Drift During Ongoing Verification | Gradual component wear, inadequate calibration schedule, or unresolved system changes. | Protocol: 1. Trend OPV data to identify drift pattern. 2. Review calibration status and history. 3. Check recent change control records for modifications. 4. Perform root cause analysis (e.g., using a 5-Whys approach). 5. Escalate to preventive maintenance. |
| Inability to Reproduce Method Results | Instrument not qualified for the method's required operating range, or instrument contribution to uncertainty is too high. | Protocol: 1. Cross-reference method requirements against instrument URS and qualification ranges. 2. Ensure instrument's measurement uncertainty has been assessed and is fit for purpose (ideally <1/3 of the procedure's uncertainty) [2]. 3. Re-qualify instrument at the specific parameters used in the method. |
| Data Integrity Gaps Post-Qualification | Qualification did not fully cover the system's computerized components or data flow. | Protocol: 1. Re-assess the system under a risk-based classification (e.g., as a Group C system). 2. Review and update the validation plan to include data integrity controls (e.g., audit trails, electronic records security). 3. Perform additional testing on the specific data flow path. |
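The "trend OPV data" step in the drift protocol can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: control limits are taken as the baseline mean plus or minus three standard deviations, and drift is summarized as a least-squares slope per run. The function names and the three-sigma rule are choices made for this sketch, not requirements from the cited guidance.

```python
import statistics


def control_limits(baseline):
    """Mean +/- 3 standard deviations of baseline (qualification-time) data."""
    mean = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return mean - 3 * sd, mean + 3 * sd


def drift_slope(values):
    """Least-squares slope of the values against their run index."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = statistics.mean(values)
    sxy = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(values))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    return sxy / sxx


def review_opv(baseline, recent):
    """Flag recent results outside the limits and report the drift slope."""
    low, high = control_limits(baseline)
    return {
        "limits": (low, high),
        "out_of_limits": [v for v in recent if not low <= v <= high],
        "slope_per_run": drift_slope(recent),
    }


if __name__ == "__main__":
    baseline = [100.0, 100.2, 99.8, 100.1, 99.9]
    recent = [100.0, 100.1, 100.2, 100.3, 100.4, 100.5]
    print(review_opv(baseline, recent))
```

A steady positive or negative slope while results are still inside the limits is the early warning this protocol is looking for; a result outside the limits would normally trigger the root cause analysis step.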

The Scientist's Toolkit: Key Reagents & Materials for Qualification

The following materials and documents are essential for successfully executing instrument qualification protocols.

| Item / Reagent | Function in Qualification |
|---|---|
| Certified Reference Materials (CRMs) | Provide a traceable standard with known, certified properties for calibrating instruments and verifying accuracy during OQ and PQ. |
| User Requirements Specification (URS) | The foundational "living document" that defines the instrument's intended use, operational specs, and acceptance criteria; guides the entire qualification lifecycle [2]. |
| Standard Operating Procedures (SOPs) | Provide detailed, approved instructions for routine operations, calibration, maintenance, and troubleshooting, ensuring consistency and compliance. |
| System Suitability Test (SST) Solutions | A mixture of known compounds used to verify that the total system (instrument, reagents, and method) is performing adequately before sample analysis. |
| Preventive Maintenance Kits | Vendor-provided or approved parts and consumables (e.g., seals, lamps, lenses) used during scheduled maintenance to keep the instrument in a qualified state. |
| Qualification/Validation Protocol | A pre-approved plan that describes the specific tests, data requirements, and acceptance criteria for each stage of qualification (IQ, OQ, PQ) or the lifecycle. |
| Change Control Record | A formal document used to track, review, and approve any modifications to the qualified instrument or system, ensuring it remains validated after changes [2]. |

The foundation of reliable analytical data in pharmaceutical development rests on a robust understanding of the modern regulatory landscape. This framework integrates instrument qualification, analytical procedure development, and procedure validation into a cohesive lifecycle approach. Key documents include USP General Chapter <1058> on Analytical Instrument Qualification (AIQ), ICH Q2(R2) on validation of analytical procedures, and ICH Q14 on analytical procedure development. These guidelines are interconnected; properly qualified instruments (as per USP <1058>) provide the essential foundation for performing validated analytical methods (as per ICH Q2(R2)) that have been developed under the principles of ICH Q14. The U.S. Food and Drug Administration (FDA) adopts and enforces these standards, expecting a scientific, risk-based approach to ensure that data generated is reliable and that drug products are safe, effective, and of high quality [3] [4].

Key Guidelines and Their Latest Updates

Staying current with recent revisions is critical for regulatory compliance.

USP <1058>: Analytical Instrument and System Qualification

USP <1058> is an informational chapter that provides a framework for establishing the fitness for intended use of analytical apparatus, instruments, and systems [2]. A significant revision was proposed in 2025 as a draft, with a public comment period running until May 31, 2025 [2] [5].

- Title Change: The title is proposed to change from "Analytical Instrument Qualification" to "Analytical Instrument and System Qualification (AISQ)" [2] [5].

- New Lifecycle Model: The update introduces a new, integrated three-stage lifecycle model to align with modern quality standards [2] [5]:

  - Specification and Selection

  - Installation, Performance Qualification, and Validation

  - Ongoing Performance Verification (OPV) [2]

- Alignment with Other Standards: The revised chapter explicitly links to other USP chapters and aligns its philosophy with the FDA's process validation guidance and the Analytical Procedure Lifecycle (APL) concepts in USP <1220> and ICH Q14 [2].

ICH Q2(R2) and ICH Q14: Modernizing Method Development and Validation

ICH Q2(R2), officially finalized in March 2024, and ICH Q14 provide the contemporary framework for analytical procedures [3] [4].

- ICH Q2(R2) - Validation of Analytical Procedures: This guideline details the validation requirements for analytical procedures. Key updates in Q2(R2) include [4]:

  - A broader scope that now explicitly includes techniques like multivariate analysis, bioassays, and spectroscopic methods used as standalone procedures.

  - Replacement of the term "Linearity" with "Response," with new guidance for both linear and non-linear calibration models.

  - Clarification on "Range," distinguishing between the "reportable range" and "working range."

  - New recommendations for assessing accuracy and precision, including a combined assessment approach using statistical intervals.

  - Renaming "Detection Limit and Quantitation Limit" to "Lower Range Limit."

- ICH Q14 - Analytical Procedure Development: This new guideline emphasizes a systematic, science- and risk-based approach to analytical development. It introduces the central concept of the Analytical Target Profile (ATP) as a pre-defined objective that articulates the required quality of the analytical reportable value [4].

- Harmonized Training: In July 2025, the ICH released comprehensive training materials for both Q2(R2) and Q14 to ensure a harmonized global understanding and implementation [6].

Frequently Asked Questions (FAQs) and Troubleshooting

This section addresses common challenges and questions regarding the implementation of these guidelines.

FAQ 1: Our laboratory has always used the 4Qs model (DQ, IQ, OQ, PQ) for instrument qualification. How does the new three-stage lifecycle in the proposed USP <1058> affect us?

- Answer: The proposed three-stage lifecycle (Specification & Selection; Installation, Performance Qualification & Validation; Ongoing Performance Verification) does not abolish the 4Qs but integrates them into a more holistic and aligned process [2] [5]. The 4Qs model is maintained but is now viewed as activities within the broader lifecycle stages. For instance, DQ is a primary activity in Stage 1, while IQ, OQ, and PQ are key activities in Stage 2 [2]. This change emphasizes that qualification is a journey, not a one-time event, and ensures better alignment with process validation and analytical procedure lifecycle concepts.

FAQ 2: According to ICH Q2(R2), when should we use a "combined accuracy and precision" assessment, and how is it performed?

- Answer: ICH Q2(R2) introduces the option of a combined assessment as an alternative to evaluating accuracy and precision independently. This approach is particularly useful for procedures where the total error (bias + imprecision) is the most relevant metric for fitness of purpose [4].

- Troubleshooting Tip: If you encounter difficulties in setting appropriate acceptance criteria for the combined approach, consult USP <1210> Statistical Tools for Procedure Validation. This chapter provides guidance on estimating prediction, tolerance, or confidence intervals, which are compared to pre-defined performance criteria in the combined approach [4].

- Methodology: The combined approach typically involves:

  - Analyzing a sufficient number of samples at multiple concentration levels.

  - Calculating the total error or constructing a statistical interval (e.g., a β-expectation tolerance interval) around the measured results.

  - Verifying that this interval falls within pre-defined acceptance limits based on the ATP [4].
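The interval step above can be sketched for the simplest case: a single concentration level with independent, normally distributed results and one variance component. Under those assumptions the β-expectation tolerance interval has the same form as a prediction interval for one future result, mean ± t·s·√(1 + 1/n). The function names are hypothetical, and the Student t quantile is passed in by the caller (from tables or statistical software) because the Python standard library does not provide it. Designs that separate repeatability from intermediate precision need a more elaborate calculation than this.

```python
import math
import statistics


def beta_expectation_interval(recoveries, t_crit):
    """Interval expected to contain a future result with probability beta.

    t_crit is the two-sided Student t quantile t((1 + beta) / 2, n - 1).
    Assumes one level, independent normal results, one variance component.
    """
    n = len(recoveries)
    mean = statistics.mean(recoveries)
    sd = statistics.stdev(recoveries)
    half_width = t_crit * sd * math.sqrt(1 + 1 / n)
    return mean - half_width, mean + half_width


def passes_combined_criterion(recoveries, t_crit, lower_limit, upper_limit):
    """True if the whole interval lies inside the acceptance limits."""
    low, high = beta_expectation_interval(recoveries, t_crit)
    return lower_limit <= low and high <= upper_limit


if __name__ == "__main__":
    # Ten hypothetical % recovery results; 2.262 is t(0.975, 9 df).
    recoveries = [99.1, 100.4, 99.8, 100.9, 99.5,
                  100.2, 100.6, 99.7, 100.1, 99.9]
    print(beta_expectation_interval(recoveries, 2.262))
    print(passes_combined_criterion(recoveries, 2.262, 98.0, 102.0))
```

With these made-up data the interval is about 98.8% to 101.3%, so the procedure would pass acceptance limits of 98% to 102% but fail limits of 99% to 101%, even though the mean recovery is close to 100%.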

FAQ 3: What is the practical relationship between the Analytical Target Profile (ATP) from ICH Q14 and instrument qualification per USP <1058>?

- Answer: The ATP defines the required quality of the analytical reportable value. This requirement flows down to the performance demands on the analytical instrument. The User Requirements Specification (URS) developed during the "Specification and Selection" stage of USP <1058> must be written to ensure the selected instrument is metrologically capable of meeting the needs of the ATP [2]. Specifically, the instrument's measurement uncertainty should contribute no more than one-third of the target measurement uncertainty specified in the ATP [2].
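A short sketch can make the one-third rule concrete. It assumes that uncertainty contributions are independent and combine as a root sum of squares, which is the usual convention but is not stated in the source; the function names are illustrative. Under that assumption, an instrument sitting exactly at one-third of the target still leaves roughly 94% of the target uncertainty available to all other sources.

```python
import math


def instrument_is_capable(u_instrument, u_target, max_fraction=1 / 3):
    """True if the instrument uses no more than max_fraction of the target."""
    return u_instrument / u_target <= max_fraction


def remaining_budget(u_instrument, u_target):
    """Uncertainty left for all other sources (root-sum-of-squares model)."""
    return math.sqrt(u_target ** 2 - u_instrument ** 2)


if __name__ == "__main__":
    u_target = 1.5  # hypothetical target measurement uncertainty, % relative
    for u_instrument in (0.4, 0.6):
        print(
            u_instrument,
            instrument_is_capable(u_instrument, u_target),
            round(remaining_budget(u_instrument, u_target), 3),
        )
```

Both values use the same units as the ATP target, so the check can be run directly against the figures recorded in the URS.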

FAQ 4: ICH Q2(R2) now includes "Response" instead of "Linearity." How do we validate a procedure with a non-linear response?

- Answer: For non-linear responses (e.g., from ELISA, cell-based assays, or charged aerosol detectors), the validation focus shifts from proving linearity to demonstrating the suitability of the non-linear calibration model [4].

- Methodology:

  - Model Selection: Choose an appropriate non-linear regression model (e.g., quadratic, power function, 4- or 5-parameter logistic).

  - Goodness-of-Fit: Evaluate the model using the coefficient of determination (R²) and, critically, by analyzing residual plots to check for patterns that indicate poor model fit [4].

  - Accuracy and Precision: Assess the accuracy and precision of reportable values across the range using the chosen model. The Q2(R2) guideline notes that estimating limits from signal-to-noise extrapolation, common for linear detectors, is unsuitable for non-linear ones [4].
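One common way to judge a non-linear calibration model is to back-calculate each calibrator from its response and compare the result with the nominal concentration. The sketch below does this for a 4-parameter logistic (4PL) model. It assumes the four parameters have already been fitted elsewhere; the parameter values and calibrator data are invented for illustration, and the function names are not from any guideline.

```python
def four_pl(x, a, b, c, d):
    """4PL response: a = response at zero dose, d = response at infinite
    dose, c = inflection point, b = slope factor."""
    return d + (a - d) / (1 + (x / c) ** b)


def inverse_four_pl(y, a, b, c, d):
    """Back-calculate concentration; y must lie strictly between a and d."""
    return c * ((a - d) / (y - d) - 1) ** (1 / b)


def back_calculated_recovery(calibrators, params):
    """Return (nominal, % recovery) for each (nominal, response) pair."""
    return [
        (conc, 100 * inverse_four_pl(resp, *params) / conc)
        for conc, resp in calibrators
    ]


if __name__ == "__main__":
    params = (0.05, 1.2, 50.0, 2.0)  # hypothetical fitted a, b, c, d
    # Hypothetical measured responses for five calibrators.
    calibrators = [(5.0, 0.17), (20.0, 0.52), (50.0, 1.03),
                   (200.0, 1.68), (500.0, 1.88)]
    for conc, recovery in back_calculated_recovery(calibrators, params):
        print(f"{conc:7.1f}  {recovery:6.1f}%")
```

Recoveries that drift systematically in one direction at either end of the range are the numerical counterpart of a patterned residual plot and suggest the model or the range should be reconsidered.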

Essential Research Reagent Solutions and Materials

The following table details key materials and documents crucial for successfully implementing these regulatory guidelines.

| Item/Category | Function & Purpose in the Regulatory Context |
|---|---|
| User Requirements Specification (URS) | A living document that defines the instrument's intended use, operating parameters, and acceptance criteria. It is the foundation of the "Specification and Selection" stage in USP <1058> and links instrument capability to the ATP [2]. |
| System Suitability Test (SST) | An integral part of chromatographic methods used to verify that the total analytical system (instrument, method, samples) is adequate for the intended analysis on the day of use. It builds on the foundation of AIQ [7]. |
| Reference Standards (Calibrators) | Well-characterized substances used to establish the calibration model (both linear and non-linear) for an analytical procedure. Their traceability and stability are critical for the "Accuracy" and "Response" validation parameters in Q2(R2). |
| Quality Control (QC) Samples | Samples with known values used to monitor the ongoing performance of the analytical procedure during routine use. They are part of the ongoing verification that the system remains in a state of control [7]. |
| Validation Protocol | A pre-approved plan that describes the specific experiments, acceptance criteria, and methodologies that will be used to validate an analytical procedure as per ICH Q2(R2) requirements. |

Experimental Protocols and Workflows

Workflow: Integrated Lifecycle for Instrument and Method Management

The following diagram illustrates the interconnected lifecycle of an analytical instrument and the analytical procedures it runs, as guided by modern standards.

Protocol: Implementing the New Three-Phase AISQ per Proposed USP <1058>

This protocol provides a step-by-step methodology for qualifying an analytical instrument under the proposed updated chapter.

Objective: To establish and maintain documented evidence that an analytical instrument or system is fit for its intended use throughout its operational lifecycle.

Principle: The process is divided into three integrated phases, moving from planning through operational release to continuous monitoring. The extent of activities is risk-based, depending on the instrument's complexity and criticality [2].

Step-by-Step Procedure:

Phase 1: Specification and Selection

- Action: Draft a User Requirements Specification (URS). This document must define the intended use, key operational parameters (e.g., flow rate range, wavelength accuracy, balance precision), and required services/environment.

- Critical Note: The URS must be based on the user's needs, not solely the manufacturer's marketing specifications. It is a living document [2] [7].

- Action: Perform a risk assessment and vendor assessment. Select the instrument that best meets the URS.

Phase 2: Installation, Qualification, and Validation

- Action: Execute Installation Qualification (IQ). Document that the correct instrument was received, installed properly in the selected environment, and that all components are present as specified.

- Action: Execute Operational Qualification (OQ). Verify that the instrument operates according to its operational specifications across its intended ranges. Use calibrated tools traceable to national standards where applicable.

- Action: Execute Performance Qualification (PQ). Demonstrate that the instrument performs consistently according to the URS for its intended application using a known, well-characterized test method (e.g., a system suitability test).

- Action: For computerized systems, include software validation activities, ensuring configuration and any custom calculations are verified.

- Deliverable: A summary report that reviews all qualification data and authorizes the release of the instrument for operational use.

Phase 3: Ongoing Performance Verification (OPV)

- Action: Implement a periodic review and monitoring plan. This includes regular calibration, preventive maintenance, and using system suitability tests (SSTs) before critical use.

- Action: Establish a robust change control procedure. Any modification to the instrument, software, or intended use must be evaluated and may require re-qualification.

- Action: Maintain a log of all service, repairs, and performance data to build a history of the instrument's performance over its lifecycle [2].

Data Presentation: ICH Q2(R2) Validation Parameters

The table below summarizes the key validation characteristics for analytical procedures as defined in ICH Q2(R2), providing a quick-reference overview.

| Validation Characteristic | Definition & Purpose (Per ICH Q2(R2)) | Key Considerations from Update |
|---|---|---|
| Accuracy | The closeness of agreement between a measured value and an accepted reference value. | Recommendation to report mean % recovery with a confidence interval. Combined assessment with precision is now an option [4]. |
| Precision | The closeness of agreement between a series of measurements. Includes repeatability, intermediate precision, and reproducibility. | Should be reported as standard deviation or relative standard deviation, with confidence intervals [4]. |
| Specificity/Selectivity | The ability to assess the analyte unequivocally in the presence of other components. | Selectivity is now acknowledged for procedures where specificity is not attainable. "Technology inherent justification" may be used (e.g., for MS, NMR) [4]. |
| Response (Linearity) | The ability of the procedure to produce results directly proportional to analyte concentration. | Replaces "Linearity." Now covers both linear and non-linear relationships. Assessment should include residual plots in addition to correlation coefficient [4]. |
| Range | The interval between the upper and lower levels of analyte for which suitable levels of precision, accuracy, and linearity have been demonstrated. | Clarified distinction between "reportable range" (in sample) and "working range" (in solution). New specific recommendations for assay and purity tests [4]. |
| Lower Range Limit | The lowest amount of analyte that can be reliably detected (LOD) or quantified (LOQ). | New terminology for "Detection Limit/Quantitation Limit." Can be linked to the reporting threshold for impurities [4]. |
| Robustness | A measure of the procedure's capacity to remain unaffected by small, deliberate variations in procedural parameters. | Highlights the link to ICH Q14, where understanding robustness is a key outcome of procedure development and informs the control strategy [4]. |
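The reporting recommendations for accuracy and precision in the table can be illustrated with a small calculation of mean % recovery, its confidence interval, and the relative standard deviation. This is a minimal sketch, assuming replicate results at a single nominal concentration and normally distributed errors; `summarize_recovery` is a hypothetical helper, and the Student t quantile is supplied by the caller. A confidence interval for the standard deviation itself would need a chi-square quantile and is left out here.

```python
import math
import statistics


def summarize_recovery(measured, nominal, t_crit):
    """Mean % recovery, its confidence interval, and RSD for one level.

    t_crit is the two-sided Student t quantile for n - 1 degrees of freedom.
    """
    recoveries = [100 * m / nominal for m in measured]
    n = len(recoveries)
    mean = statistics.mean(recoveries)
    sd = statistics.stdev(recoveries)
    half_width = t_crit * sd / math.sqrt(n)
    return {
        "mean_recovery_pct": mean,
        "ci": (mean - half_width, mean + half_width),
        "rsd_pct": 100 * sd / mean,
    }


if __name__ == "__main__":
    # Three hypothetical replicates at a nominal 10.0 units;
    # 4.303 is t(0.975, 2 df).
    print(summarize_recovery([9.9, 10.1, 10.0], 10.0, 4.303))
```

With only three replicates the interval is wide, which is one practical reason validation protocols call for more determinations spread over several levels.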

In highly regulated research environments, such as pharmaceutical development and food method validation, ensuring data integrity and reliability is paramount. A systematic approach to managing laboratory instruments throughout their entire operational life is not just a best practice but a regulatory expectation. The Integrated Lifecycle Model—encompassing Specification & Selection, Installation & Qualification, and Ongoing Performance Verification (OPV)—provides a structured framework to guarantee that instruments are consistently fit for their intended use [8] [9].

This model moves beyond a one-time validation event to a continuous state of control. It is firmly grounded in the principles of Quality by Design (QbD), which emphasize building quality into processes and products from the very beginning, a concept central to ICH Q8 guidelines [10] [11]. By adopting this lifecycle model, researchers and scientists can proactively manage instrument performance, reduce costly downtime, and generate defensible data for regulatory submissions.

Lifecycle Phase 1: Specification & Selection

The foundation of a successful instrument lifecycle is laid during the Specification & Selection phase. This initial stage focuses on defining precise requirements and choosing equipment that is technically capable and compliant with your research needs.

Key Activities and Deliverables

The primary goal of this phase is to create a User Requirement Specification (URS). The URS is a detailed document that outlines what the instrument must do from the end-user's perspective. It serves as the foundational document against which the instrument will eventually be qualified [9].

Critical elements of a URS include:

- Performance Requirements: Specific technical capabilities, such as detection limits, accuracy, precision, throughput, and measurement ranges required for your intended applications and methods.

- Operational Needs: Requirements for integration with existing laboratory systems, data output formats, and ease of use.

- Compliance and Regulatory Requirements: Any necessary adherence to standards like FDA 21 CFR Part 211 (cGMP), ALCOA+ data integrity principles, or other relevant guidelines [12] [13].

- Vendor Assessment: Evaluation of the supplier's reputation, service support, and documentation quality.
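Keeping the performance requirements of a URS in a structured form makes it easier to check them against what was actually qualified. The sketch below is one possible representation, not a prescribed format: the `Requirement` fields, the parameter names, and the example ranges are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str
    parameter: str
    required_min: float
    required_max: float
    unit: str
    critical: bool = True


def uncovered_requirements(requirements, qualified_ranges):
    """Return requirements whose range is not fully inside the qualified
    range, or that have no qualified range at all."""
    gaps = []
    for req in requirements:
        qualified = qualified_ranges.get(req.parameter)
        if qualified is None:
            gaps.append(req)
            continue
        low, high = qualified
        if req.required_min < low or req.required_max > high:
            gaps.append(req)
    return gaps


if __name__ == "__main__":
    urs = [
        Requirement("URS-001", "flow_rate", 0.2, 2.0, "mL/min"),
        Requirement("URS-002", "wavelength", 210.0, 400.0, "nm"),
    ]
    qualified = {"flow_rate": (0.1, 5.0), "wavelength": (220.0, 600.0)}
    for gap in uncovered_requirements(urs, qualified):
        print("Not covered:", gap.req_id, gap.parameter)
```

In this made-up case the wavelength requirement starts at 210 nm but the instrument was only qualified from 220 nm, so the gap would need either additional qualification or a revised requirement.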

Linkage to QbD and Risk Management

The Specification & Selection phase aligns with the QbD principle of beginning with predefined objectives. The URS is analogous to a Quality Target Product Profile (QTPP) in drug development, as it defines the target profile for the instrument's performance [10] [11]. A preliminary risk assessment should be conducted to identify what could go wrong if the instrument fails to meet a specific requirement, helping to prioritize critical requirements during selection.

Lifecycle Phase 2: Installation & Qualification

Once an instrument is selected, it must be formally verified that it is installed correctly and operates as intended. This is achieved through a structured sequence of qualification protocols, often referred to as IQ, OQ, PQ [8] [9].

The IQ, OQ, PQ Protocol Sequence

The following workflow illustrates the sequential and dependent nature of the qualification process:

Installation Qualification (IQ) verifies that the instrument has been received, installed, and configured correctly according to the manufacturer's specifications and design intentions [8]. Key activities include:

- Verifying the installation location and environmental conditions (e.g., temperature, humidity, power supply) [8].

- Documenting all computer-controlled instrumentation, firmware versions, and serial numbers [8].

- Ensuring all manuals and certifications are received and that components are undamaged [8].

- Checking that software is installed correctly and is accessible [8].

Operational Qualification (OQ) tests the instrument's operational capabilities across its specified ranges. The goal is to demonstrate that the instrument will function as intended in its operational environment [8]. Testing typically includes:

- Testing hardware features like temperature control, pressure controllers, and fan-speed controllers [8].

- Verifying the instrument's built-in error detection mechanisms [8].

- Ensuring the equipment operates reliably within all manufacturer-specified limits [8].

Performance Qualification (PQ) is the final step, where the instrument is tested under actual conditions using your specific methods and materials to prove it is "fit-for-purpose" [8]. This phase generates documented evidence that the instrument consistently produces results that meet the acceptance criteria defined in the URS.

Quantitative Acceptance Criteria Examples

Qualification protocols must define measurable acceptance criteria. The table below provides illustrative examples for an analytical balance, an HPLC UV detector, and a pH meter.

| Instrument | Test Parameter | Acceptance Criterion | Method of Measurement |
|---|---|---|---|
| Analytical Balance | Accuracy | ±0.05 mg of certified standard weight | Weighing a traceable standard weight |
| Analytical Balance | Repeatability (Precision) | RSD ≤ 0.02% for 10 measurements | 10 repeated weighings of the same standard |
| HPLC UV Detector | Wavelength Accuracy | ±1 nm of known holmium oxide peak | Scanning a holmium oxide filter |
| pH Meter | Accuracy | ±0.01 pH units of standard buffer | Measuring certified pH buffer solutions |
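The two balance criteria in the table translate directly into pass/fail checks. The following sketch uses the illustrative limits from the table as default arguments; the function names and the example readings are invented for this example.

```python
import statistics


def balance_accuracy_ok(reading_mg, certified_mg, tolerance_mg=0.05):
    """True if the reading is within the tolerance of the certified mass."""
    return abs(reading_mg - certified_mg) <= tolerance_mg


def balance_repeatability_ok(readings_mg, max_rsd_pct=0.02):
    """Return (pass/fail, RSD in %) for repeated weighings of one standard."""
    rsd_pct = 100 * statistics.stdev(readings_mg) / statistics.mean(readings_mg)
    return rsd_pct <= max_rsd_pct, rsd_pct


if __name__ == "__main__":
    # Ten hypothetical weighings of a 100 mg certified standard.
    readings = [100.01, 99.99, 100.00, 100.02, 99.98,
                100.00, 100.01, 99.99, 100.00, 100.00]
    print(balance_accuracy_ok(100.03, 100.00))
    print(balance_repeatability_ok(readings))
```

Returning the computed RSD alongside the verdict keeps the raw figure available for the qualification report and for later trending.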

Lifecycle Phase 3: Ongoing Performance Verification (OPV)

Qualification is not a one-time event. Ongoing Performance Verification ensures the instrument continues to operate within its qualified state throughout its productive life, a concept integral to a state of control as described in ICH Q10 [14] [11].

OPV Strategy and Schedules

OPV is a continuous process that combines routine checks and periodic reviews.

Key components of an OPV strategy include:

- Routine Performance Checks: These are quick tests performed at a frequency based on risk and instrument stability, such as system suitability tests before a critical analytical run.

- Preventive Maintenance (PM): Adherence to a scheduled PM program as defined by the manufacturer or internal reliability data.

- Periodic Requalification: A full or partial repetition of PQ testing at a defined interval (e.g., annually) to reconfirm the instrument's fitness for purpose.

- Review of Data and Events: Regular review of performance data, out-of-specification (OOS) results, and deviation reports to identify negative performance trends.
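
One way to look for negative performance trends in routine check data is a simple control chart. The sketch below assumes limits of mean ± 3 sample standard deviations derived from baseline results, plus a run rule (six consecutive results on one side of the center line); both rules and all data are illustrative assumptions, not requirements taken from the cited guidance.

```python
from statistics import mean, stdev


def control_limits(baseline):
    """Return (lower, center, upper) as mean +/- 3 sample SDs of baseline results."""
    center = mean(baseline)
    spread = 3 * stdev(baseline)
    return center - spread, center, center + spread


def flag_results(baseline, new_results, run_length=6):
    """Return indices of out-of-limit points and indices where a one-sided run is detected."""
    lower, center, upper = control_limits(baseline)
    out_of_limits = [i for i, x in enumerate(new_results) if x < lower or x > upper]

    run_alerts = []
    run, side = 0, 0
    for i, x in enumerate(new_results):
        s = (x > center) - (x < center)   # +1 above center, -1 below, 0 on the line
        if s != 0 and s == side:
            run += 1
        else:
            run = 1 if s != 0 else 0
            side = s
        if run >= run_length:
            run_alerts.append(i)
    return out_of_limits, run_alerts


# Hypothetical QC sample results (% of nominal)
baseline = [99.8, 100.1, 100.0, 99.9, 100.2, 100.0, 99.9, 100.1]
recent = [100.1, 100.2, 100.1, 100.3, 100.2, 100.1, 101.5]
print(flag_results(baseline, recent))
```

A run alert without any out-of-limit point is the kind of early signal that a periodic data review is meant to catch before a check actually fails.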

OPV Frequency and Triggers

The frequency of OPV activities should be risk-based. The following table outlines common triggers and corresponding actions.

| Trigger | OPV Activity | Purpose |

|---|---|---|

| Before each use | System Suitability Test | Verify the total system (instrument, method, analyst) is performing adequately for the specific test at the time of analysis. |

| Scheduled (Monthly/Quarterly) | Performance Verification Check | Use a simplified PQ test to ensure the instrument has not drifted from its qualified state. |

| After major maintenance or repair | Re-qualification (OQ/PQ) | Document that the instrument's performance has been restored following a significant change [8]. |

| Annual Review | Full PQ Re-test | Comprehensive re-verification that the instrument continues to meet all original URS/PQ requirements. |
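
The trigger table can be expressed as a small scheduling helper. This is a sketch under stated assumptions: the activity names and the 90-day and 365-day intervals are hypothetical placeholders, and real intervals should come from a documented risk assessment.

```python
from datetime import date, timedelta

# Hypothetical intervals in days (assumptions, not regulatory values)
INTERVALS = {"performance_check": 90, "full_pq_retest": 365}


def next_due(last_done: date, activity: str) -> date:
    """Date on which a scheduled activity next falls due."""
    return last_done + timedelta(days=INTERVALS[activity])


def activities_due(today, last_done, after_major_repair=False):
    """List the OPV activities required before the instrument is used today."""
    due = ["system_suitability_test"]            # trigger: before each use
    if after_major_repair:
        due.append("requalification_oq_pq")      # trigger: major maintenance or repair
    for activity in INTERVALS:                   # triggers: scheduled and annual
        if today >= next_due(last_done[activity], activity):
            due.append(activity)
    return due


last_done = {"performance_check": date(2024, 1, 1), "full_pq_retest": date(2024, 1, 1)}
print(activities_due(date(2024, 4, 1), last_done, after_major_repair=True))
```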

Troubleshooting Guides and FAQs

This section provides direct, actionable guidance for common issues encountered during the instrument lifecycle, framed within a technical support context.

Troubleshooting Common Instrument Issues

Problem: Instrument fails a routine performance check (e.g., precision is out of specification).

| Step | Action | Rationale & Reference |

|---|---|---|

| 1 | Stop all analysis and clearly label the instrument as "OUT OF SERVICE." | Prevents the generation of invalid data and alerts other users. |

| 2 | Repeat the test following the exact procedure. | Confirms the result was not caused by a transient error or user mistake. |

| 3 | Check consumables and reagents. Verify age, integrity, and preparation of standards, buffers, and gases. | A common root cause; degraded reagents directly impact performance [15]. |

| 4 | Review recent maintenance and event logs. Look for recent repairs, power outages, or changes in environmental conditions. | Identifies potential triggers for the performance shift [15]. |

| 5 | Perform diagnostic checks. Run instrument self-diagnostics or use built-in test routines. | Isolates the problem to a specific module or component. |

| 6 | Escalate and Document. If the issue persists, escalate to specialized service. Initiate a Deviation Report and subsequent CAPA to document the investigation and resolution [13]. | Ensures regulatory compliance and creates a record for future trend analysis. |

Problem: Newly installed instrument software is inaccessible or fails to communicate with peripherals.

| Step | Action | Rationale & Reference |

|---|---|---|

| 1 | Verify IQ documentation. Confirm that folder structures, software versions, and system requirements were verified during Installation Qualification [8]. | Ensures the installation was completed as per the manufacturer's specifications and protocol. |

| 2 | Re-check physical connections and power to the peripheral device and the host computer. | Loose cables or unpowered devices are a frequent cause of communication failures [15]. |

| 3 | Check Device Manager (Windows) or System Information (Mac). Look for the device status. A yellow exclamation mark may indicate a driver issue [15]. | Provides direct insight into how the operating system recognizes the hardware. |

| 4 | Reinstall or update drivers from the manufacturer's website, ensuring compatibility with your OS version [15]. | Corrects corrupted or incompatible driver software. |

| 5 | Test the peripheral on another computer. If it works, the issue is isolated to the original computer's configuration [15]. | A critical step for root cause analysis, isolating the fault to the computer or the peripheral. |

Frequently Asked Questions (FAQs)

Q1: What is the difference between Equipment Qualification and Process Validation? A: Equipment Qualification (IQ, OQ, PQ) proves that a piece of equipment works correctly on its own and is fit for its intended use [9]. Process Validation proves that a specific manufacturing or analytical process, which may use several qualified pieces of equipment, consistently produces a result meeting its pre-determined specifications. You qualify equipment; you validate processes [9].

Q2: How can I use commissioning data in my qualification protocols to avoid duplication? A: Data generated during Factory Acceptance Tests (FAT) and Site Acceptance Tests (SAT) can often be used as evidence in qualification protocols, provided it was generated under a state of control and meets the pre-defined acceptance criteria [9]. Your Quality Assurance unit must approve this reuse to confirm that the data is robust and reproducible.

Q3: During an FDA Quality System Inspection Technique (QSIT) audit, what should I expect regarding instrument qualification? A: An FDA auditor will likely examine your CAPA system and may "follow the thread" from an instrument-related failure or deviation directly back to your qualification and OPV records [13]. They will want to see that the instrument was properly qualified, that personnel are trained, and that any changes or performance issues are managed through your change control and CAPA systems [13].

Q4: What documentation is essential for demonstrating a state of control during an audit? A: Be prepared to present:

- Approved IQ, OQ, and PQ protocols and reports [8].

- Records of personnel training on the instrument.

- A complete and up-to-date preventive maintenance schedule and logs.

- All OPV records, including system suitability tests and periodic performance checks.

- The complete history of any deviations, investigations, and CAPAs related to the instrument [13].

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details key materials and reagents used in the qualification and OPV of common laboratory instruments.

| Item | Function in Qualification/OPV |

|---|---|

| Certified Reference Materials (CRMs) | Provides a traceable standard with a certified value and uncertainty. Used in OQ/PQ to verify instrument accuracy for balances, pH meters, and chromatographic systems. |

| System Suitability Test Mixtures | A specific mixture of analytes used to verify the resolution, precision, and sensitivity of chromatographic systems (HPLC, GC) before use. |

| Holmium Oxide Wavelength Filter | A solid-state filter with known sharp absorption peaks. Used for verifying the wavelength accuracy of UV-Vis spectrophotometers during OQ and OPV. |

| Stable Quality Control (QC) Sample | A homogeneous, stable sample representative of the test articles. Run repeatedly over time to monitor the stability and precision of the entire analytical process (instrument, method, analyst) for trend analysis in OPV. |

| NIST-Traceable Thermometer | A calibrated thermometer used to verify the temperature accuracy of incubators, refrigerators, freezers, and other temperature-controlled units during IQ/OQ. |

The United States Pharmacopeia (USP) General Chapter <1058> provides a framework for Analytical Instrument Qualification (AIQ), and its proposed update broadens the chapter to Analytical Instrument and System Qualification (AISQ) [16] [2]. This risk-based model classifies instruments into three groups (A, B, and C) to ensure they are fit for their intended use in pharmaceutical analysis while optimizing resource allocation [5] [17]. The classification dictates the extent and type of qualification activities required, focusing efforts where the risk to data integrity and product quality is highest [16].

Instrument Group Classifications and Requirements

The table below summarizes the core characteristics and qualification focus for each instrument group.

| Group | Instrument Type & Examples | Qualification & Validation Focus |

|---|---|---|

| A | Standard apparatus with no measurement capability or user calibration [2]. Examples: magnetic stirrers, vortex mixers [18]. | Conformance with requirements verified and documented by observing operation, supported by routine maintenance [2]. No extensive AIQ testing [18]. |

| B | Instruments with measurement capability and firmware-controlled operation [2]. Examples: analytical balances, pH meters, spectrophotometers, centrifuges [16]. | Firmware validated through functional testing during Operational Qualification (OQ) [2]. Standardized IQ/OQ/PQ protocols [18]. |

| C | Computerized instrument systems requiring software for operation or data processing [2]. Examples: HPLC, GC-MS systems [16]. | Integrated qualification: hardware (IQ/OQ/PQ) and computerized system validation (CSV) for software [16] [18]. Requires traceability matrix and validation report [18]. |

This risk-based approach ensures that qualification efforts are commensurate with the complexity and impact of the instrument or system, promoting efficiency and regulatory compliance [16] [17].
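
The grouping logic can be sketched as a small decision function. This is a deliberate simplification: the two yes/no attributes, the `classify` function, and the `FOCUS` summaries are illustrative assumptions, and an actual classification depends on the instrument's intended use and a documented risk assessment.

```python
from enum import Enum


class Group(Enum):
    A = "A"
    B = "B"
    C = "C"


# Qualification focus per group, paraphrased from the table above
FOCUS = {
    Group.A: "basic verification, no extensive AIQ testing",
    Group.B: "IQ/OQ/PQ, firmware checked by functional testing in OQ",
    Group.C: "IQ/OQ/PQ for hardware plus CSV for software",
}


def classify(measures_or_is_calibrated: bool, needs_separate_software: bool) -> Group:
    """Assign a group from two simplified yes/no attributes (an assumption)."""
    if needs_separate_software:
        return Group.C
    if measures_or_is_calibrated:
        return Group.B
    return Group.A


for name, measures, software in [
    ("magnetic stirrer", False, False),
    ("spectrophotometer (firmware only)", True, False),
    ("HPLC system", True, True),
]:
    group = classify(measures, software)
    print(f"{name}: Group {group.value} -> {FOCUS[group]}")
```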

Troubleshooting Guides by Instrument Group

Group B: Spectrophotometer Fails Operational Qualification (OQ) Accuracy Test

Problem: During OQ, the spectrophotometer's absorbance readings for a standard solution are outside acceptable limits.

Investigation & Resolution:

- Confirm the Standard: Verify the standard solution was prepared correctly and is within its expiry date.

- Inspect the Cuvette: Check the cuvette for scratches, cracks, or fingerprints. Clean it with appropriate solvent and lint-free cloth.

- Check Source and Detector: Ensure the instrument has warmed up properly. Run a diagnostic test on the lamp (e.g., check hours of use) and detector.

- Perform Wavelength Accuracy Check: Use a holmium oxide filter to verify the instrument's wavelength accuracy is within specification.

Documentation: Document all steps, observations, and results in the OQ report. Any parts replaced (e.g., lamp) require re-qualification.
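
The wavelength accuracy check in the last investigation step can be sketched as follows. The certified peak positions below are placeholders (the real values must be taken from the certificate supplied with the specific holmium oxide filter), and the ±1 nm tolerance and the `wavelength_check` function are illustrative assumptions.

```python
def wavelength_check(measured_nm, certified_nm, tol_nm=1.0):
    """Pair each certified peak with the nearest measured peak and test the deviation.

    Returns a list of (certified, nearest measured, deviation, passed) tuples.
    """
    results = []
    for ref in certified_nm:
        nearest = min(measured_nm, key=lambda m: abs(m - ref))
        deviation = nearest - ref
        results.append((ref, nearest, round(deviation, 2), abs(deviation) <= tol_nm))
    return results


# Placeholder values for illustration; use the filter certificate in practice
certified = [279.3, 360.8, 536.4]
measured = [279.6, 361.1, 537.9]

for ref, found, dev, ok in wavelength_check(measured, certified):
    print(f"certified {ref} nm, found {found} nm, deviation {dev:+} nm, {'PASS' if ok else 'FAIL'}")
```

Reporting the signed deviation for every peak, rather than a single pass/fail flag, makes it easier to see whether an error is a uniform offset or grows across the wavelength range.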

Group C: HPLC System Shows Peak Tailing and Retention Time Drift

Problem: An HPLC system used for drug product assay shows significant peak tailing and inconsistent retention times, compromising data integrity.

Investigation & Resolution:

- Isolate the Issue: Follow a systematic workflow to identify the root cause.

- Data Integrity & Re-qualification:

Frequently Asked Questions (FAQs)

What is the difference between instrument qualification and software validation?

The core principle is: instruments are qualified, and software is validated [18]. Instrument qualification (AIQ) demonstrates a piece of equipment is installed properly (IQ), operates as specified (OQ), and performs consistently for its intended use (PQ) [18] [19]. Software validation (CSV) confirms that software consistently produces results meeting predetermined acceptance criteria, ensuring data are reliable, accurate, and secure [18]. For Group C systems, these activities are integrated [16].

Our lab has a new Group C instrument. What does the integrated life cycle approach entail?

The updated USP <1058> draft promotes a three-stage life cycle approach aligned with modern quality standards [2] [17]:

- Stage 1: Specification and Selection: Define intended use in a User Requirements Specification (URS), select the system, and conduct a risk assessment [2].

- Stage 2: Installation, Qualification, and Validation: Covers installation, hardware qualification (IQ/OQ), and software validation (CSV), culminating in release for operational use [2].

- Stage 3: Ongoing Performance Verification (OPV): Ensures the instrument continues to meet performance standards through regular checks, calibration, maintenance, and change control [2] [5].

How do we manage firmware and software updates for Group B and Group C instruments?

- Firmware Updates: Treat as a change control event [16]. The firmware version used during OQ should be recorded [19]. Evaluate the update's impact. If the vendor states it does not affect analytical functions, documentation may suffice. If it impacts performance, re-qualification (OQ/PQ) is necessary [19].

- Software for Group C Systems: Requires a more rigorous change control process. All updates must be systematically evaluated, tested, and documented, often requiring full re-validation [16].

The Scientist's Toolkit: Essential Reagents for Instrument Qualification

| Reagent / Material | Critical Function in Qualification |

|---|---|

| Certified Reference Standards | Provides a traceable benchmark for verifying instrument accuracy, precision, and linearity during OQ and PQ [2]. |

| Holmium Oxide Filter (Spectroscopy) | Used for wavelength accuracy verification in UV-Vis spectrophotometers, a key OQ test [2]. |

| System Suitability Test Mix (Chromatography) | A standardized mixture to confirm critical parameters (e.g., resolution, peak symmetry) for HPLC/GC systems before analysis. |

| Stable, Pure Analytical Samples | Essential for running performance qualification (PQ) tests that demonstrate the instrument's consistency in a live method environment [19]. |

A User Requirements Specification (URS) is a foundational document that describes the business needs and what users require from a system, equipment, or process to ensure it is fit for its intended use in a regulated environment [20]. It is typically written early in the validation lifecycle, often before a system is created or acquired, by the system owner and end-users with input from Quality Assurance [20]. The URS is not a technical document: it should be understandable to readers with general system knowledge and should focus on what the system must do rather than how it should be built [20] [21].

In the context of instrument qualification for food method validation research, the URS is critical for establishing that analytical instruments and systems are capable of performing their required functions accurately and reliably, thereby ensuring the integrity of analytical data and compliance with regulatory standards.

The URS in the Instrument Qualification Lifecycle

Modern regulatory guidance, including the proposed update to USP <1058> on Analytical Instrument and System Qualification (AISQ), emphasizes a risk-based lifecycle approach rather than treating qualification as a series of isolated events [2] [17] [5]. This lifecycle encompasses the entire journey of an analytical instrument from specification and selection through installation and performance verification to eventual retirement [5].

The following diagram illustrates how the URS integrates into the three-stage instrument qualification lifecycle, aligning with modern regulatory expectations:

The URS serves as a living document throughout this lifecycle [22]. As your knowledge of the instrument or system increases, or as intended use changes, the URS should be updated accordingly through proper change control procedures [2] [22].

Essential Components of a URS for Analytical Instruments

A well-structured URS for analytical instrument qualification should include the following key components:

Table: Key Components of an Effective URS for Analytical Instruments

| Component | Description | Examples for Analytical Instruments |

|---|---|---|

| Introduction & Scope | Defines the intent, scope, and key objectives for the system | Scope: HPLC system for pesticide residue analysis in food samples [20] [21] |

| Intended Use | Description of how the system supports compliance or product quality | GMP testing of final product, raw material identification, stability testing [21] |

| Functional Requirements | Specific functions the system must perform | "System must maintain column oven temperature at ±0.5°C of set point"; "Auto-sampler must inject samples with ≤0.5% RSD" [20] [21] |

| Performance Requirements | Quantitative performance criteria | "Detection limit of 0.01 ppm for target analytes"; "System must process 40 samples unattended" [21] |

| Data Integrity & Security | Requirements for data handling, storage, and protection | "System must maintain audit trails for all data modifications"; "Role-based access control for different user types" [21] |

| Regulatory Compliance | Applicable regulatory standards | "Compliant with 21 CFR Part 11"; "Meets requirements of USP <1058>" [20] [21] |

| Environmental Requirements | Operating environment conditions | "Operates in ambient temperatures of 15-30°C"; "Withstands relative humidity of 20-80%" [21] |

| Lifecycle Requirements | Maintenance, calibration, and training needs | "Annual preventive maintenance required"; "On-site user training for operators" [20] |
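
Requirements like those in the table are easier to verify when each one is stored with an identifier, a numeric limit, and a planned verification phase. The following is a minimal sketch: the `Requirement` class, the ID scheme, the assignment to OQ, and the observed values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str        # unique identifier, used later for traceability
    component: str     # URS component, e.g. "Functional"
    statement: str     # the requirement as written in the URS
    limit: float       # numeric acceptance limit (upper bound)
    unit: str
    verified_in: str   # planned verification phase, e.g. "OQ"

    def is_met(self, observed: float) -> bool:
        """A requirement is met when the observed value does not exceed the limit."""
        return observed <= self.limit


urs = [
    Requirement("URS-F-001", "Functional",
                "Column oven temperature within 0.5 degC of set point", 0.5, "degC", "OQ"),
    Requirement("URS-F-002", "Functional",
                "Auto-sampler injection precision of 0.5% RSD or better", 0.5, "% RSD", "OQ"),
]

# Hypothetical observations: maximum absolute deviation and measured RSD
observed = {"URS-F-001": 0.3, "URS-F-002": 0.62}
for req in urs:
    status = "PASS" if req.is_met(observed[req.req_id]) else "FAIL"
    print(f"{req.req_id} [{req.verified_in}] {status}: {req.statement}")
```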

Troubleshooting Guide: Common URS Issues and Solutions

Frequently Asked Questions

Q: What is the difference between a URS and a Functional Requirements Specification (FRS)? A: The URS defines what users need the system to do from a business perspective, while the FRS specifies how the system will functionally fulfill these requirements. The URS is user-focused, while the FRS is more technical and serves as a blueprint for developers [23].

Q: Are URS documents always required for instrument qualification? A: When a system is being created, User Requirements Specifications are valuable for ensuring the system will do what users need. For existing systems being validated retrospectively, user requirements can be combined with Functional Requirements into a single document [20].

Q: How specific should requirements be in a URS? A: Requirements should be clear, unambiguous, and testable. Avoid vague terms like "user-friendly" or "fast" without specific measures. Instead, use quantifiable metrics like "system must generate reports within 2 minutes of user request" [21].

Q: Can the URS be updated after Factory Acceptance Testing (FAT) or Site Acceptance Testing (SAT)? A: Yes, the URS is a living document. FAT and SAT should not be the primary drivers of change, but these activities may reveal missed requirements that need to be added. Any revisions should be managed through formal change control [22].

Q: How do I define requirements for a multi-purpose instrument? A: Write the URS around a platform with operating ranges that match the equipment's capability. When new products or methods are introduced, review their requirements against the URS. Ideally, new applications fit within the existing requirements; if they do not, equipment changes may be needed [22].
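
The advice above on writing clear, testable requirements can be supported by a simple draft review script. This sketch flags vague wording and requirements that contain no number. Only "user-friendly" and "fast" come from the text above; the other terms in `VAGUE_TERMS`, and the rule that a testable requirement should contain a digit, are assumptions for illustration.

```python
import re

# "user-friendly" and "fast" are named in the FAQ; the rest are assumed examples
VAGUE_TERMS = ("user-friendly", "fast", "easy", "adequate", "as appropriate")


def review_requirement(text):
    """Return a list of review findings for one draft requirement statement."""
    findings = []
    lowered = text.lower()
    for term in VAGUE_TERMS:
        if re.search(r"\b" + re.escape(term) + r"\b", lowered):
            findings.append(f"vague term: {term}")
    if not re.search(r"\d", text):
        findings.append("no quantitative measure")
    return findings


print(review_requirement("The system must be fast and user-friendly"))
print(review_requirement("System must generate reports within 2 minutes of user request"))
```

A check like this only screens wording; whether a quantified requirement is actually meaningful still needs review by the system owner and Quality Assurance.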

Troubleshooting Common URS Problems

The following flowchart outlines a systematic approach to identifying and resolving common URS development issues:

Experimental Protocol: Developing a URS for Analytical Instrument Qualification

Methodology for URS Development

Objective: To establish a standardized protocol for developing a comprehensive User Requirements Specification for analytical instruments used in food method validation research.

Materials and Equipment:

- Document control system

- Requirement tracking software (optional)

- Regulatory guidance documents (USP <1058>, EU GMP Annex 15, FDA guidelines)

- Template for URS documentation

Procedure:

Define Scope and Objectives

- Clearly delineate the boundaries of the system and its intended use

- Identify key objectives and what constitutes successful implementation

- Document applicable regulatory concerns and quality standards [20]

Assemble Multidisciplinary Team

Gather Requirements

- Conduct interviews with stakeholders to identify needs

- Review process parameters and critical quality attributes that the instrument will impact [22]

- Consider both current and anticipated future requirements

Categorize and Prioritize Requirements

Document Requirements with Clear Language

- Use unambiguous, concise statements

- Assign unique identifiers to each requirement for traceability [20]

- Avoid technical jargon where possible; focus on user needs

Incorporate Regulatory and Compliance Requirements

- Include relevant data integrity principles (ALCOA+)

- Specify necessary audit trail capabilities

- Address electronic records and signatures requirements if applicable [21]

Establish Verification Methods

- Define how each requirement will be verified (FAT, SAT, IQ, OQ, PQ)

- Include acceptance criteria for each requirement [21]

Review and Approve

- Conduct formal reviews with all stakeholders

- Obtain approval from system owner, quality unit, and end-users [20]

- Implement document control for future revisions
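
The identifier and verification-method steps in this procedure lend themselves to an automated coverage check. The sketch below assumes each requirement has a unique ID and a planned verification phase, and reports requirements with no executed test in that phase; the `untraced` function and all identifiers are hypothetical.

```python
def untraced(requirements, executed_tests):
    """Return requirement IDs with no executed test in their planned phase.

    requirements:   dict of requirement ID -> planned verification phase
    executed_tests: iterable of (test ID, requirement ID, phase) tuples
    """
    covered = {(req_id, phase) for _test_id, req_id, phase in executed_tests}
    return sorted(req_id for req_id, phase in requirements.items()
                  if (req_id, phase) not in covered)


# Hypothetical identifiers, for illustration only
requirements = {"URS-001": "IQ", "URS-002": "OQ", "URS-003": "PQ"}
executed_tests = [
    ("IQ-T01", "URS-001", "IQ"),
    ("OQ-T07", "URS-002", "OQ"),
    ("OQ-T08", "URS-003", "OQ"),  # executed in the wrong phase, so URS-003 stays open
]
print(untraced(requirements, executed_tests))
```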

Table: Essential Resources for Effective URS Development

| Resource | Function | Application in URS Development |

|---|---|---|

| Regulatory Guidelines (USP <1058>, EU GMP Annex 15, 21 CFR Part 11) | Provide compliance framework and requirements | Ensure URS addresses all regulatory expectations for instrument qualification [2] [21] |

| Risk Assessment Tools (FMEA, FTA) | Identify and prioritize potential failure modes | Apply risk-based approach to focus on critical requirements that impact product quality and patient safety [22] |

| Requirement Traceability Matrix | Track requirements through development and testing | Maintain clear linkage between user needs, functional requirements, and verification tests [20] [21] |

| Design Control Software | Manage requirements and document version control | Facilitate collaboration, maintain revision history, and ensure all stakeholders work from current version [22] |

| Vendor Documentation | Provide technical specifications and capabilities | Inform realistic requirement setting based on available technology and vendor capabilities [2] |

Best Practices for URS Implementation and Maintenance

Treat URS as a Living Document: The URS should be updated as requirements change during any project phase or as additional risk controls are identified [22]. Implement a robust change control process to manage revisions while maintaining document integrity.

Align with Critical Process Parameters: For instruments used in manufacturing, ensure the URS reflects critical process parameters (CPPs) and critical quality attributes (CQAs) identified through quality risk assessment [22].

Maintain Traceability: Establish and maintain traceability from user requirements through functional specifications, design documents, and verification tests. This provides a clear audit trail for regulatory inspections [20] [21].

Focus on Fitness for Intended Use: Ensure the URS clearly defines what makes the instrument "fit for intended use," including metrological capability, traceability to standards, and contribution to measurement uncertainty budgets [2].

Verify Vendor Capabilities: Assess supplier ability to meet URS requirements before selection. The true role of the supplier begins long before purchase, as instruments are designed, built, and tested before laboratory consideration [2].

From Theory to Practice: Executing IQ, OQ, PQ and Integrating Method Validation

Installation Qualification (IQ) is the documented verification that a piece of equipment, system, or instrument has been delivered, installed, and configured according to the manufacturer's specifications, approved design intentions, and relevant regulatory codes [8] [24]. In highly regulated industries like pharmaceuticals, medical devices, and food manufacturing, IQ serves as the critical first step in the equipment qualification lifecycle, which also includes Operational Qualification (OQ) and Performance Qualification (PQ) [8] [25]. Its primary purpose is to establish confidence that the system has the necessary prerequisite conditions to function as expected in its operational environment [24].

The core objectives of IQ are to [26]:

- Verify Correct Installation: Ensure all components are installed correctly according to manufacturer specifications and design requirements.

- Review and Gather Documentation: Assemble all necessary documentation, including manuals, certificates, and installation records.

- Establish a Baseline: Create a documented baseline of the installed system for future validation phases and change control.

For researchers and scientists, a robust IQ process is foundational to data integrity. It ensures that analytical instruments used in food method validation research are properly set up, which is a prerequisite for generating reliable, accurate, and reproducible experimental data.

Prerequisites for IQ Execution

Before executing the IQ protocol, several prerequisites must be in place to ensure a smooth and compliant process.

- Approved Protocol: The IQ protocol itself must be formally approved before execution begins. This approval should come from the System Owner and Quality Assurance personnel [24].

- Trained Personnel: Individuals involved in the installation and qualification process must be thoroughly trained and skilled in their roles, with a clear understanding of the equipment, the IQ procedure, and Good Manufacturing Practice (GMP) requirements [26].

- Site Preparation: The installation site must be prepared and verified to meet all environmental and operational requirements specified by the manufacturer, such as power supply, temperature, humidity, and necessary floor space [27] [28].

The IQ Protocol: A Step-by-Step Guide

The following section provides a detailed, step-by-step methodology for executing an Installation Qualification.

Step 1: Equipment and Documentation Inspection

The first step involves a thorough inspection of the delivered equipment and its accompanying documentation.

- Action: Physically unpack the instrument and cross-check all components, parts, and accessories against the shipping list and purchase order to ensure everything is present and undamaged [8] [28].

- Documentation: Gather and review all documentation provided by the manufacturer. This typically includes user manuals, installation manuals, specifications, and calibration certificates [8] [27].

- Record: Document the model numbers, serial numbers, and firmware versions for all major components [8].

Step 2: Verification of Installation Conditions

This step verifies that the installation environment meets the required specifications for the equipment to operate correctly.

- Action: Check that the installation location provides adequate floor space, clearance, and access for operation and maintenance [8].

- Action: Verify that all environmental conditions, such as ambient temperature, humidity, and cleanliness, are within the manufacturer's specified ranges [28] [8].

- Action: Confirm that the power supply (voltage, amperage, phase) is correct and stable. Also, check other utilities like compressed air, water, or gas if required [8] [27].

- Record: Document the environmental conditions and power supply verification results.
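
The Step 2 verification can be recorded as a comparison of measured values against specified ranges. This is a sketch only: the parameter names and ranges are placeholders (real ranges come from the manufacturer's site requirements), and treating a missing measurement as a failure is an assumption of this example.

```python
def check_conditions(measured, specs):
    """Compare measured site conditions with (low, high) specification ranges.

    A parameter that was not measured is reported as a failure.
    Returns {parameter: (value, low, high, passed)}.
    """
    report = {}
    for name, (low, high) in specs.items():
        value = measured.get(name)
        passed = value is not None and low <= value <= high
        report[name] = (value, low, high, passed)
    return report


# Placeholder ranges; take the real ones from the manufacturer's documentation
specs = {
    "temperature_C": (15.0, 30.0),
    "humidity_pct": (20.0, 80.0),
    "supply_voltage_V": (220.0, 240.0),
}
measured = {"temperature_C": 22.4, "humidity_pct": 85.0}  # voltage not yet recorded

report = check_conditions(measured, specs)
for name, (value, low, high, passed) in report.items():
    print(f"{name}: {value} (spec {low}-{high}) {'PASS' if passed else 'FAIL'}")
```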

Step 3: Mechanical and Physical Installation Verification

Here, you verify that the physical installation of the equipment and its components has been completed correctly.

- Action: Ensure the equipment is mounted or placed securely and is level, if required [28].

- Action: Inspect all mechanical components for damage and verify that all connections (e.g., tubing, cables, fittings) are secure and correct [8] [28].

- Action: For IT systems or instruments with software, verify that the required folder structures are established and that the minimum system requirements (processor, RAM, etc.) are met [8] [24].

Step 4: Electrical and Ancillary System Checks

This step ensures that the instrument is properly connected to power and can communicate with any peripheral systems.

- Action: Confirm that all electrical connections are safe and comply with local regulations [27].

- Action: Verify correct connections and communication with peripheral units, such as printers, computers, or additional sensors [8].

- Record: Document the calibration and validation dates of any tools used to perform the IQ checks [8].

Step 5: Final Review and Report Generation

The final step involves compiling all the data and observations into a formal report.

- Action: Review all collected data and check for any deviations from the protocol's acceptance criteria. Any deviations must be documented and resolved before the IQ is considered complete [24].

- Action: Compile a final IQ Report that summarizes the execution of the protocol, the findings, and concludes whether the installation is qualified [8].

- Documentation: The executed protocol, all raw data, and the final report become part of the permanent equipment qualification file [27].
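
The completion rule in Step 5 (no open deviations) can be sketched as a simple data structure. The `CheckItem` class and the checklist entries are hypothetical, and a real IQ report also requires the documented investigation and approval of each deviation, which this example does not model.

```python
from dataclasses import dataclass


@dataclass
class CheckItem:
    description: str
    passed: bool
    deviation_resolved: bool = False   # only relevant when passed is False


def open_deviations(items):
    """Descriptions of failed checks whose deviations are still unresolved."""
    return [i.description for i in items if not i.passed and not i.deviation_resolved]


def iq_complete(items):
    """IQ can be concluded only when no deviation remains open."""
    return not open_deviations(items)


checklist = [
    CheckItem("Components match shipping list", True),
    CheckItem("Serial numbers recorded", True),
    CheckItem("Humidity within specified range", False),
]
print(iq_complete(checklist), open_deviations(checklist))

checklist[2].deviation_resolved = True   # after investigation and corrective action
print(iq_complete(checklist), open_deviations(checklist))
```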

The workflow below summarizes the key stages of the Installation Qualification process:

Essential Documentation

Proper documentation is the cornerstone of a defensible IQ. The table below outlines the key documents required for a complete IQ package.

Table: Essential Installation Qualification Documentation

| Document Type | Purpose and Description | Key Contents |

|---|---|---|

| IQ Protocol [8] | A comprehensive, pre-approved plan that outlines the scope, methodology, and acceptance criteria for the IQ. | Equipment identification (model, serial number), list of systems to be qualified, installation requirements, environmental needs, and verification checklists. |

| IQ Checklist [8] | A detailed checklist derived from the IQ protocol, used to systematically verify each installation criterion. | Physical installation checks, electrical connections, software installation, environmental conditions, and safety inspections. |

| IQ Report [8] [26] | The final report documenting the execution of the IQ protocol and summarizing the findings. | Summary of all activities, raw data, documented deviations, and a formal statement on whether the installation meets all predefined criteria. |

| Manufacturer's Documentation [8] [27] | Evidence that the equipment is as designed and supplied. | User manuals, installation manuals, specifications, and calibration certificates. |

| Drawings and Diagrams [29] | Visual verification of the installed system. | P&I diagrams (Piping and Instrumentation) and control system documentation, if applicable. |

Troubleshooting Common IQ Challenges

Researchers and validation scientists often encounter specific challenges during the IQ process. Here are solutions to common issues.

Problem: Incomplete or Missing Manufacturer Documentation

- Solution: For old or used equipment where documents are missing, create a retrospective User Requirement Specification (URS) based on the products processed on the equipment. Compile any available technical documentation (e.g., P&I diagrams) and attach it to the qualification documents [29]. A risk assessment should be used to justify the approach.

Problem: Discrepancy Between Expected and Actual Result During IQ Testing

- Solution: Do not ignore the discrepancy. Document it immediately as a deviation in the IQ protocol. The deviation must be investigated, and a root cause identified. Corrective actions must be taken and documented. The IQ cannot be considered complete until all deviations are resolved [24].

Problem: Inadequate Environmental Conditions at Installation Site

- Solution: During the pre-installation review, proactively verify that temperature, humidity, and power supply meet the manufacturer's requirements. If conditions are inadequate, work with facilities management to implement environmental controls before proceeding with installation [8] [27].

Problem: Unclear Acceptance Criteria in Protocol

- Solution: Avoid ambiguous statements like "install as per manufacturer's instructions." Instead, define acceptance criteria that are specific, measurable, and objective. For example, "ensure the centrifuge rotor speed reaches 15,000 rpm ± 100 rpm as per manufacturer’s operational specification" [8].

Best Practices for a Successful IQ

Implementing the following best practices can significantly enhance the efficiency and compliance of your IQ process.

- Integrate Risk Management: Incorporate a risk-based approach from the start. Identify potential risks associated with the equipment installation and prioritize IQ activities based on their impact on product quality and safety [8].

- Use Visual Aids: Incorporate diagrams, flowcharts, and photographs within the protocol. Visual aids enhance the clarity of installation instructions and reduce execution errors [8].

- Plan for Future Changes: Design the IQ protocol with flexibility to accommodate potential future equipment upgrades or modifications. This can involve modular sections that can be easily updated [8].

- Cross-Reference Related Documents: Clearly reference related validation documents, such as the Validation Master Plan (VMP) or Design Qualification (DQ), within the IQ protocol. This creates a cohesive and traceable documentation suite [8].

- Centralize Documentation: Implement a centralized document management system to store and organize all validation records, ensuring traceability and ready access during audits [28].
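One way to make the risk-based prioritization above concrete is a Risk Priority Number (RPN) in the style of a failure mode and effects analysis, where severity, occurrence, and detection are each scored and multiplied. The source does not prescribe this technique; it is swapped in here as an illustration, and the 1 to 10 scale, the activity names, and the scores are all assumptions.

```python
def risk_priority_number(severity: int, occurrence: int, detection: int) -> int:
    """FMEA-style RPN. Assumption: each factor is scored from 1 (low) to 10 (high)."""
    for score in (severity, occurrence, detection):
        if not 1 <= score <= 10:
            raise ValueError("each score must be between 1 and 10")
    return severity * occurrence * detection


def prioritize(activities):
    """Return (name, RPN) pairs sorted so the highest-risk activity comes first."""
    scored = [(name, risk_priority_number(*scores)) for name, scores in activities]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical IQ activities with (severity, occurrence, detection) scores.
iq_activities = [
    ("Verify label placement", (2, 3, 2)),
    ("Verify power supply voltage", (8, 3, 4)),
    ("Verify utility connections", (7, 4, 3)),
]

for name, rpn in prioritize(iq_activities):
    print(f"{rpn:4d}  {name}")
```

The ranking only orders the work; the scores themselves still need to be justified and documented in the risk assessment.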

Frequently Asked Questions (FAQs)

Q1: Can IQ, OQ, and PQ be combined into a single document? A: Yes, for less complex systems, it is acceptable and common practice to combine the IQ, OQ, and PQ activities into a single document, often referred to as IOPQ or IOQ [26]. This streamlines the documentation process for equipment where a full-scale, separate qualification is not justified by risk.

Q2: How often does equipment need requalification? A: Requalification should be performed periodically based on a risk evaluation. It is also mandatory after any major maintenance, repair, or modification that could impact the equipment's performance [8] [26].

Q3: What is the difference between IQ of a physical instrument and software? A: The core principle is the same—verifying correct installation against specifications. For a physical instrument, IQ focuses on location, utilities, and physical components [8]. For software, IQ involves verifying that the correct version is installed, folder structures are established, minimum system requirements are met, and that the software is accessible [8] [24].

Q4: What is the FDA's definition of Installation Qualification? A: The FDA defines IQ as "Establishing confidence that process equipment and ancillary systems are compliant with appropriate codes and approved design intentions, and that manufacturer recommendations are suitably considered." In practice, it is the executed protocol documenting that a system has the necessary prerequisite conditions to function as expected [24].
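The software IQ checks described in Q3 (correct version, folder structure, minimum system requirements) lend themselves to a scripted record. The sketch below is illustrative only: the function name `software_iq_checks`, the version strings, and the use of the Python runtime version as a stand-in for "minimum system requirements" are assumptions, and a real protocol would check whatever the vendor specifies.

```python
import os
import sys
import tempfile


def software_iq_checks(installed_version, expected_version, required_dirs,
                       min_python=(3, 8)):
    """Return a dict mapping each IQ check to True (met) or False (not met).

    Hypothetical helper: the check names and the min_python stand-in for
    system requirements are assumptions made for illustration.
    """
    return {
        # The installed version must match the approved version exactly.
        "correct_version_installed": installed_version == expected_version,
        # Every required folder must already exist.
        "folder_structure_present": all(os.path.isdir(d) for d in required_dirs),
        # Stand-in for minimum system requirements: interpreter version.
        "minimum_runtime_met": sys.version_info[:2] >= min_python,
    }


# Usage with a temporary folder standing in for the installation directory.
with tempfile.TemporaryDirectory() as root:
    data_dir = os.path.join(root, "data")
    os.mkdir(data_dir)
    record = software_iq_checks("2.1.0", "2.1.0", [data_dir])
    for check, met in record.items():
        print(f"{check}: {'PASS' if met else 'FAIL'}")
```

Any check that returns False would be recorded as a deviation and resolved before the IQ is closed.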

Table: Essential Research Reagent Solutions for Qualification and Validation

| Item / Solution | Function in Qualification / Validation |
|---|---|
| Certified Reference Materials | Used for calibration and verification of instrument accuracy during OQ and PQ phases following a successful IQ [28]. |
| Standardized Protocols and Templates | Pre-defined, standardized documents (e.g., IQ checklist) ensure consistency, compliance, and efficiency across multiple qualification projects [25]. |
| Calibrated Measurement Tools | Tools with valid calibration certificates (e.g., multimeters, thermometers) are essential for objectively verifying installation parameters like voltage and temperature [8]. |
| Document Management System | A centralized system (electronic or physical) for storing all qualification documents, ensuring version control, and facilitating audit readiness [28]. |
| Risk Assessment Software | Aids in implementing a risk-based approach to qualification by helping to identify, analyze, and mitigate potential installation and operational risks [8]. |

This guide provides detailed methodologies and troubleshooting advice for researchers and scientists conducting Operational Qualification (OQ) as part of instrument qualification in food method validation and drug development.

Frequently Asked Questions (FAQs)

Q1: What is the primary goal of Operational Qualification (OQ)? A: The primary goal of OQ is to provide documented verification that an instrument's subsystems operate according to the manufacturer's operational specifications and the user's requirements. It identifies process control limits and potential failure modes to ensure the equipment functions reliably within its specified operating ranges [8] [30].

Q2: When should OQ be performed? A: OQ is performed after a successful Installation Qualification (IQ). It should also be repeated after major repairs, instrument relocation, significant modifications, or as required by your site's standard operating procedures (SOPs) or quality requirements [19] [31].

Q3: Who is responsible for executing the OQ? A: OQ can be performed by the equipment vendor, internal qualified personnel, or certified field service engineers. The key requirement is that it must be performed in the user's specific environment to ensure real-world operational conditions are met [19] [31].

Q4: What is the difference between OQ and Performance Qualification (PQ)? A: OQ verifies that the instrument operates correctly according to its design specifications, while PQ demonstrates that the instrument consistently produces the correct results under real-world, routine operating conditions. OQ focuses on equipment function, and PQ focuses on process output [8] [30].

Core OQ Testing Protocol

The OQ phase involves a systematic testing approach to verify all instrument functions and establish operational limits.

OQ Execution Workflow

The following diagram outlines the logical sequence and key decision points for executing an Operational Qualification.

Key Parameters for OQ Testing

Operational Qualification should identify and inspect equipment features that can impact final product quality. The table below summarizes common parameters and functions to test during OQ.

| Parameter/Function | Testing Methodology | Acceptance Criteria |
|---|---|---|
| Temperature Control [8] | Use calibrated probes and data loggers to measure temperature at multiple points across the operating range. | Meets manufacturer's specified range and uniformity (e.g., ±0.5°C). |
| Humidity Measurement & Control [8] [30] | Utilize calibrated hygrometers to challenge the system at setpoints across its operational range. | Readings and control are within specified tolerances of the setpoint. |
| Fan or Motor RPM [30] | Measure rotational speed using a calibrated tachometer at different setpoints. | Speed is stable and matches the setpoint within the manufacturer's tolerance. |
| Servo Motors & Air-Flap Controllers [8] | Program sequences of movements and positions. Verify with precision measuring tools. | Movements are precise, repeatable, and reach all programmed positions accurately. |
| Displays & Operational Signals (LEDs) [30] | Visually verify all indicators and displays function correctly under normal and dim lighting. | All indicators are visible and convey the correct status information. |
| Pressure & Vacuum Controllers [8] | Use calibrated pressure gauges and transducers to test setpoints and stability over time. | System achieves and maintains setpoints within the specified control limits. |
| Timers & Activity Triggers [30] | Use a calibrated timer to verify the accuracy of internal timers and event triggers. | All timed functions and triggers operate within the specified time tolerance. |
| Card Readers & Access Systems [8] | Test with authorized and unauthorized access credentials. | System correctly grants or denies access as expected. |
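As an illustration of the temperature control row, multi-point probe data can be reduced to two figures: the largest deviation of any reading from the setpoint, and the spread between probe locations. This is a sketch under stated assumptions: the ±0.5°C tolerance is taken from the table's example, while the function name, the probe labels, the readings, and the definition of uniformity as the difference between the highest and lowest probe means are hypothetical.

```python
from statistics import mean


def evaluate_temperature(setpoint, readings, tolerance=0.5):
    """Summarize probe readings (in °C) against a setpoint.

    readings: dict mapping a probe location to a list of temperatures.
    Assumption: the run passes only if every single reading lies within
    setpoint +/- tolerance; uniformity is reported but not judged here.
    """
    probe_means = {probe: mean(values) for probe, values in readings.items()}
    all_values = [v for values in readings.values() for v in values]
    max_deviation = max(abs(v - setpoint) for v in all_values)
    # Assumed definition: spread between warmest and coolest probe means.
    uniformity_spread = max(probe_means.values()) - min(probe_means.values())
    return {
        "max_deviation": round(max_deviation, 3),
        "uniformity_spread": round(uniformity_spread, 3),
        "passes": max_deviation <= tolerance,
    }


# Hypothetical mapping run for an incubator set to 37.0 °C.
incubator_run = {
    "top": [37.1, 37.2, 37.1],
    "middle": [37.0, 36.9, 37.0],
    "bottom": [36.8, 36.7, 36.8],
}
print(evaluate_temperature(37.0, incubator_run))
```

The same pattern applies to the humidity, RPM, and pressure rows: an independent calibrated measurement is compared with the setpoint, and the result is judged against a numeric tolerance.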

Troubleshooting Common OQ Issues

Problem: Temperature fluctuations exceed acceptance criteria.

- Potential Causes: Faulty sensor, unstable power supply, improper calibration, or issues with the heating/cooling unit.

- Corrective Action: Verify sensor calibration, check for stable input voltage, inspect heating/cooling elements for damage, and ensure environmental conditions (e.g., ambient temperature, drafts) are within the instrument's requirements [8].

Problem: Consistent out-of-specification readings from a specific sensor.

- Potential Causes: Sensor drift, contamination, incorrect configuration, or electrical interference.

- Corrective Action: Re-calibrate the sensor, clean it according to the manufacturer's SOP, verify configuration settings in the software, and check cable connections and shielding [31].

Problem: Instrument fails to communicate with peripheral devices or software.

- Potential Causes: Incorrect communication drivers, faulty cables, wrong protocol settings (e.g., baud rate, parity), or network configuration issues.

- Corrective Action: Reinstall and verify drivers, replace and reseat communication cables, confirm all communication settings match between devices, and check network connectivity and permissions [8] [19].
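Confirming that communication settings match on both sides is a comparison that is easy to get wrong by eye. A minimal sketch follows, assuming the settings have already been read out into dictionaries; the key names and values are hypothetical and do not correspond to any particular instrument or driver.

```python
def mismatched_settings(instrument, host):
    """Return {setting: (instrument_value, host_value)} for every difference.

    A setting that is missing on one side is reported with None for that side.
    """
    keys = sorted(set(instrument) | set(host))
    return {
        key: (instrument.get(key), host.get(key))
        for key in keys
        if instrument.get(key) != host.get(key)
    }


# Hypothetical serial settings as documented for each device.
instrument_settings = {"baud_rate": 9600, "parity": "N", "data_bits": 8, "stop_bits": 1}
host_settings = {"baud_rate": 19200, "parity": "N", "data_bits": 8, "stop_bits": 1}

for setting, (inst, host) in mismatched_settings(instrument_settings, host_settings).items():
    print(f"{setting}: instrument={inst}, host={host}")
```

An empty result rules out a configuration mismatch and points the investigation toward drivers, cabling, or network permissions.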

Problem: Test results are inconsistent or not repeatable.

- Potential Causes: Unstable environmental conditions, operator error, variation in reagent quality, or instrument wear and tear.

- Corrective Action: Document and control environmental factors (temperature, humidity), retrain the operator on the procedure, use a new batch of reagents or calibrated standards, and inspect critical components for wear [30] [19].
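Repeatability is usually easier to investigate once it is quantified. One common measure is the percent relative standard deviation (%RSD) of replicate results. The sketch below assumes replicate measurements of the same standard; the 2.0% limit and the replicate values are hypothetical and should be replaced by the acceptance criterion in the approved protocol.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Percent relative standard deviation using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("at least two replicates are required")
    average = mean(values)
    if average == 0:
        raise ValueError("%RSD is undefined for a mean of zero")
    return 100 * stdev(values) / abs(average)


def is_repeatable(values, limit_percent=2.0):
    """Assumption: the 2.0% default limit is illustrative, not a standard."""
    return percent_rsd(values) <= limit_percent


# Hypothetical replicate readings of one calibrated standard.
replicates = [100.0, 101.0, 99.0, 100.5, 99.5]
print(f"%RSD = {percent_rsd(replicates):.2f}")
print("repeatable" if is_repeatable(replicates) else "not repeatable")
```

Recomputing %RSD after each corrective action (controlled environment, retrained operator, fresh standards) shows which factor actually drove the variation.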

The Scientist's Toolkit: Essential Research Reagent Solutions

The following materials are critical for the accurate execution of OQ protocols.

| Item | Function in OQ |
|---|---|
| Calibrated Temperature Probes/Data Loggers | Provide traceable measurement to verify the accuracy and uniformity of temperature-controlled systems (e.g., incubators, baths) [31]. |
| Certified Reference Materials (CRMs) | Act as known and stable standards to challenge instrument response, accuracy, and linearity across the intended operational range [31]. |
| Calibrated Hygrometer | Used to verify the accuracy of an instrument's built-in humidity sensors and controls [8]. |
| Precision Tachometer | Measures the rotational speed (RPM) of motors and fans to ensure they operate within specified limits [30]. |
| Calibrated Pressure Gauge/Transducer | Provides an independent, accurate measurement to validate the readings of the instrument's internal pressure or vacuum sensors [8]. |
| Calibrated Multimeter | Verifies electrical signals, power supply stability, and input/output voltages for various instrument components [8]. |

Performance Qualification (PQ) is the final stage in the qualification of analytical instruments and processes, providing documented verification that a system consistently performs according to specifications defined by the user and is appropriate for its intended use in real-world conditions [19] [32]. Unlike earlier qualification phases that focus on installation and operational parameters, PQ demonstrates that the integrated system can reliably produce valid results in its actual working environment, using the same materials, personnel, and procedures employed in daily operations [33] [34].

In regulated laboratories, PQ is not a one-time event but an ongoing requirement to ensure instruments remain in a state of control throughout their operational life. Performance checks are conducted regularly and after major repairs, relocations, or modifications to verify that instrument performance has not drifted outside acceptable limits [19]. For researchers and drug development professionals, establishing and maintaining a robust PQ program is fundamental to generating reliable, defensible data that complies with Good Laboratory Practice (GLP) regulations and other quality standards [19].
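Detecting drift in periodic performance checks can be sketched with Shewhart-style control limits derived from an initial baseline. The source does not name a specific statistical method; this technique is swapped in as an illustration, and the ±3 standard deviation rule, the function names, and all data values are assumptions.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Return (lower, upper) limits as baseline mean +/- k sample standard deviations."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center + k * spread


def out_of_control(results, limits):
    """Return (index, value) for each routine check outside the limits."""
    lower, upper = limits
    return [(i, value) for i, value in enumerate(results) if not lower <= value <= upper]


# Hypothetical baseline from the initial PQ, then later routine checks.
baseline_checks = [10.0, 10.1, 9.9, 10.0, 10.1, 9.9]
routine_checks = [10.05, 9.95, 10.4]

limits = control_limits(baseline_checks)
print(f"limits: {limits[0]:.3f} to {limits[1]:.3f}")
print("flagged:", out_of_control(routine_checks, limits))
```

A flagged check would trigger an investigation and, where warranted, requalification, in line with the ongoing nature of PQ described above.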

The relationship between PQ and other qualification stages follows a logical progression, with each phase building upon the documentation and verification of the previous one. The following diagram illustrates this qualification lifecycle and where PQ fits within the overall process:

The Scientific and Regulatory Foundation of PQ

Distinguishing PQ from Other Qualification Phases

Understanding the distinction between Operational Qualification (OQ) and Performance Qualification (PQ) is crucial for proper implementation. While OQ verifies that an instrument operates according to manufacturer specifications within defined limits, PQ confirms that it consistently meets user requirements under actual working conditions [34]. The OQ demonstrates that the equipment can function correctly, while the PQ demonstrates that it does function correctly when integrated into the specific analytical processes for which it is intended [8] [34].

For example, for an infrared instrument, OQ might verify that the wavenumber accuracy meets manufacturer specifications using a certified reference material, while PQ would demonstrate that the instrument correctly identifies known materials from your specific research samples according to established acceptance criteria [34]. This distinction highlights why PQ must be performed in the user's environment with relevant test materials, as it validates the entire analytical process rather than just the instrument's standalone capabilities.

Regulatory Framework and Compliance Requirements

Performance Qualification is mandated by accrediting agencies such as the College of American Pathologists (CAP) and The Joint Commission, which routinely request and review PQ documentation during inspections [19]. Although the term "qualification" does not appear in 21 CFR 211, FDA investigators typically cite 21 CFR 211.160(b), which requires laboratory instruments to be calibrated at suitable intervals under an established written program, together with 21 CFR 211.68(a), under which equipment "shall be routinely calibrated, inspected, or checked according to a written program designed to assure proper performance" [34].

The United States Pharmacopeia (USP) General Chapter <1058> on Analytical Instrument Qualification (AIQ) provides comprehensive guidance on PQ implementation, emphasizing that both OQ and PQ must be directly linked to requirements documented in the User Requirements Specification (URS) [34]. Without an adequate URS that clearly defines intended use, researchers cannot properly establish relevant PQ tests with appropriate ranges and acceptance criteria [34].
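The link between URS requirements and OQ/PQ tests can be checked with a simple traceability pass that lists any requirement no test covers. This is a sketch, not a format defined by USP <1058>: the requirement IDs, the test records, and the `covers` field are hypothetical.

```python
def untested_requirements(urs, tests):
    """Return the sorted URS requirement IDs that no OQ or PQ test references."""
    covered = {req_id for test in tests for req_id in test["covers"]}
    return sorted(req_id for req_id in urs if req_id not in covered)


# Hypothetical URS entries for an infrared instrument.
urs = {
    "URS-001": "Wavenumber accuracy within the stated tolerance",
    "URS-002": "Correct identification of known reference materials",
    "URS-003": "Audit trail enabled for all data acquisition",
}

# Hypothetical test records; "covers" lists the URS IDs each test verifies.
tests = [
    {"id": "OQ-01", "phase": "OQ", "covers": ["URS-001"]},
    {"id": "PQ-01", "phase": "PQ", "covers": ["URS-002"]},
]

for req_id in untested_requirements(urs, tests):
    print(f"No test covers {req_id}: {urs[req_id]}")
```

Running the check before protocol approval exposes gaps while they are still cheap to fix, and it makes visible the point in the text: without a URS there is nothing to trace the tests back to.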

Implementing PQ: Protocols and Procedures